Keras Tutorial (10): Building the ResNet Family of Residual Convolutional Neural Networks with Keras

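Foreword: Hello everyone, I'm 猪葛,

an algorithm engineer who is very optimistic about the future of AI.

In the upcoming posts of this series I will keep updating this Keras tutorial (the outline is at the end of the post).

The content falls into two parts:

The first part covers the basics of Keras.

The second part uses Keras to build the Faster R-CNN and YOLO object-detection networks.

The code is highly reusable.

If you are interested too, feel free to follow my updates and learn along.

Study tips:

Some of the material may feel confusing at first; just work through it with the "learning goals" in hand.

Take it one step at a time; once you reach the summit and look back down, the whole landscape will make sense.

Learning goals for this post: 1. understand the structure of the ResNet network; 2. learn to build the 18-layer ResNet (ResNet18) convolutional network.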

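Contents

- I. Introduction to the ResNet residual convolutional network
- II. Analysis of the ResNet architecture
  - 2-1. Shortcut connections
  - 2-2. Deeper shortcut connections
  - 2-3. Four kinds of residual modules
  - 2-4. Overall structure
- III. Writing the ResNet code
  - 3-1. Building ResNet18 step by step with the Keras functional API
    - 3-1-1. Residual module a of conv2_x in ResNet18
    - 3-1-2. Residual module b of conv2_x in ResNet18
    - 3-1-3. Wrapping residual modules a and b a second time
    - 3-1-4. Overall network structure
    - 3-1-5. Complete ResNet18 code
    - 3-1-6. Printed network structure
  - 3-2. Code for the 34-layer network
    - 3-2-1. Complete 34-layer code (written explicitly)
    - 3-2-2. Complete 34-layer code (written concisely)
  - 3-3. Code for the 50-layer network
  - 3-4. Code for the 101-layer network
  - 3-5. Code for the 152-layer network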

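I. Introduction to the ResNet residual convolutional network

ResNet comes from the paper "Deep Residual Learning for Image Recognition", published in 2015 by Kaiming He and colleagues at Microsoft Research Asia. The work took first place in both the classification and the detection tasks on the ImageNet dataset at the ILSVRC 2015 challenge. At the time of writing the paper had been cited more than 25,000 times, which shows how influential ResNet is in the AI field.

What we usually call ResNet is a deep residual network built on skip connections. Based on this idea the authors proposed models with 18, 34, 50, 101 and 152 layers (ResNet-18, ResNet-34, ResNet-50, ResNet-101 and ResNet-152), and even successfully trained an ultra-deep network with 1202 layers.

Many readers are probably getting nervous at this point. Don't worry: take a look at the complete ResNet18 code in section 3-1-5 first, and you will find that the network is actually quite simple.

Let's start the analysis.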

II. Analysis of the ResNet architecture

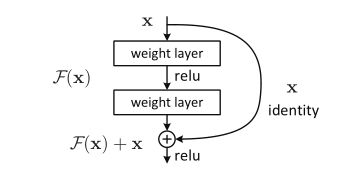

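2-1. Shortcut connections

Shortcut connections are one of the main building techniques of ResNet; they implement identity mapping and skip-layer connections. The figure below shows a residual module of ResNet:

Figure: structure of a shortcut connection

Here, x is the input feature matrix; the output of the main path, $F(x)$, is the residual function; the side branch is the shortcut connection, where "x identity" denotes the identity mapping, that is, the input feature matrix x itself is passed straight across the layers to the output.

Adding the outputs of the main path and the branch directly gives $H(x) = F(x) + x$; applying a ReLU activation to this sum gives the output of the residual module.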
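
As a toy illustration of the formula above, here is a minimal sketch in plain Python (no Keras). The function `toy_residual_branch` is a hypothetical stand-in for the main path $F(x)$; in a real module that path is a stack of convolution layers.

```python
def relu(values):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, v) for v in values]


def toy_residual_branch(values):
    """Hypothetical stand-in for the main path F(x): scales each element by -0.5."""
    return [-0.5 * v for v in values]


def residual_module(values):
    """Compute relu(F(x) + x): main path plus identity shortcut, then ReLU."""
    fx = toy_residual_branch(values)
    hx = [f + v for f, v in zip(fx, values)]  # H(x) = F(x) + x
    return relu(hx)


x = [2.0, -4.0, 0.0]
print(residual_module(x))  # [1.0, 0.0, 0.0]
```

The shortcut contributes x unchanged, so the main path only has to learn the difference between the desired output and the input.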

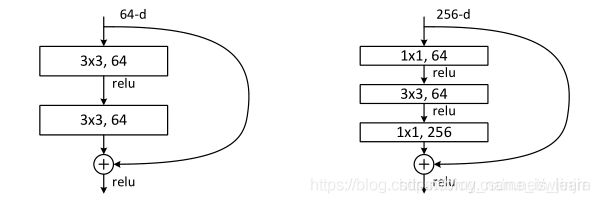

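2-2. Deeper shortcut connections

The ResNet family uses two main kinds of residual module. The first, shown on the left of the figure below, is used by the shallower ResNet18 and ResNet34; it is the shortcut connection from the previous section. The second, shown on the right, is used by the much deeper ResNet50, ResNet101 and ResNet152; it is the deeper shortcut connection covered in this section.

Figure: the two kinds of shortcut connection

The figure shows the difference: the deeper version places a 1×1 convolution before and after the 3×3 convolution, to reduce and then restore the channel dimension. The network becomes deeper, yet the number of parameters is much smaller than it would be with 3×3 convolutions at the full channel width, which helps training.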
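
To put a rough number on the parameter saving, the sketch below counts convolution weights and biases for the two designs. The 256-channel input and the 64-channel inner width are assumptions chosen for illustration, and `conv_params` is a hypothetical helper, not a Keras function.

```python
def conv_params(kernel, in_ch, out_ch):
    """Weights plus biases of a 2-D convolution with a square kernel."""
    return kernel * kernel * in_ch * out_ch + out_ch


# Two 3x3 convolutions kept at the full width of 256 channels
basic = 2 * conv_params(3, 256, 256)

# Bottleneck: 1x1 down to 64 channels, 3x3 at 64 channels, 1x1 back up to 256
bottleneck = (
    conv_params(1, 256, 64)
    + conv_params(3, 64, 64)
    + conv_params(1, 64, 256)
)

print(basic)       # 1180160
print(bottleneck)  # 70016
```

Under these assumptions the bottleneck has three convolutions instead of two but needs roughly 17 times fewer parameters.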

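Because the deeper shortcut connection looks a bit like a bottleneck, it is commonly called the bottleneck structure; we use that name from here on.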

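2-3. Four kinds of residual modules

In the full ResNet architecture of the original paper, shortcut connections are drawn in two ways, with solid lines and with dashed lines, as shown below:

Figure: difference between solid-line and dashed-line shortcut branches

On the left, the branch is drawn as a solid line, meaning the branch is left unprocessed. On the right, the branch is drawn as a dashed line, meaning the branch halves the spatial size. For example, a feature map of shape (56, 56, 64) that passes through a convolution with stride 2, padding 'same', 128 kernels and kernel size (1, 1) becomes (28, 28, 128). Height and width are halved, which is called 2× downsampling.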
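
The shape change in this example follows from how 'same' padding works in Keras: the output size along each spatial axis is the input size divided by the stride, rounded up. A minimal sketch (the helper names are hypothetical):

```python
import math


def same_padding_output(size, stride):
    """Output length along one axis for padding='same': ceil(size / stride)."""
    return math.ceil(size / stride)


def downsample_shape(shape, filters, stride=2):
    """Shape after a strided convolution with padding='same' and `filters` kernels."""
    height, width, _ = shape
    return (
        same_padding_output(height, stride),
        same_padding_output(width, stride),
        filters,
    )


print(downsample_shape((56, 56, 64), 128))  # (28, 28, 128)
```

The kernel size does not appear in the formula, which is why the 1×1 branch convolution and a 3×3 main-path convolution with the same stride produce matching shapes.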

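The above covers solid versus dashed lines for the plain shortcut connection. The bottleneck structure has the same solid/dashed distinction, and the principle is identical.

So in total there are four different shortcut structures, which we will call residual modules from here on.

To recap: the shallower ResNet18 and ResNet34 are built from shortcut-connection residual modules, and the much deeper ResNet50, ResNet101 and ResNet152 are built from bottleneck residual modules. Next, let's look at the overall structure.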

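2-4. Overall structure

The table below gives the architectures of ResNets of different depths (the red numbers in the figure are my own marks, added to make the explanation easier):

Figure: ResNet architectures, with red numbers marked on it for the explanation

Before explaining the structure, let's name the residual modules:

- Residual module a: the shortcut-connection module with a solid-line branch; input and output have the same shape.

- Residual module b: the shortcut-connection module with a dashed-line branch; the input's height and width are twice the output's.

- Residual module c: the bottleneck module with a solid-line branch; input and output have the same shape.

- Residual module d: the bottleneck module with a dashed-line branch; the input's height and width are twice the output's.

As already established, the shallower ResNet18 and ResNet34 are built from shortcut-connection modules and the deeper ResNet50, ResNet101 and ResNet152 from bottleneck modules. So ResNet18 and ResNet34 use residual modules a and b, while ResNet50, ResNet101 and ResNet152 use residual modules c and d.

From the figure we can see:

- The input to the network is a (224, 224, 3) color image.

- The first layer is a convolution followed by pooling. The convolution has 64 kernels of size (7, 7) with stride 2 and padding 'same'; the pooling window is (3, 3) with stride 2 and padding 'same'.

- The middle layers are residual blocks connected in sequence, each made of several residual modules: conv2_x, conv3_x, conv4_x and conv5_x.

- The last layer first applies global average pooling to obtain a feature vector, then a fully connected layer with 1000 nodes, and finally a Softmax function that turns the result into probabilities for 1000-class classification.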
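
The names ResNet18 through ResNet152 can be checked against this layout: one convolution in the first layer, two or three convolutions per residual module in the middle layers, and one fully connected layer at the end. The sketch below is an illustrative tally only; the per-block module counts are the ones used by the code in section III, and the 1×1 convolutions on dashed-line branches are not counted.

```python
# Number of residual modules in conv2_x .. conv5_x for each depth
STAGE_MODULES = {
    18: [2, 2, 2, 2],
    34: [3, 4, 6, 3],
    50: [3, 4, 6, 3],
    101: [3, 4, 23, 3],
    152: [3, 8, 36, 3],
}


def count_weighted_layers(depth):
    """First conv + convs inside all residual modules + final dense layer."""
    # Modules a/b hold two convolutions; bottleneck modules c/d hold three
    convs_per_module = 2 if depth in (18, 34) else 3
    return 1 + convs_per_module * sum(STAGE_MODULES[depth]) + 1


for depth in STAGE_MODULES:
    print(depth, count_weighted_layers(depth))
```

Each computed total equals the depth in the model's name.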

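Next, let's look at how a residual block is assembled from several residual modules.

- The residual block marked with red number 1 consists of two residual modules: the first is module b, the second is module a.

- The residual block marked with red number 2 consists of three residual modules: the first is module b, the other two are module a.

- The residual block marked with red number 3 consists of three residual modules: the first is module d, the other two are module c.

- The residual block marked with red number 4 consists of three residual modules: the first is module d, the other two are module c.

- The residual block marked with red number 5 consists of three residual modules: the first is module d, the other two are module c.

- In the residual block made of 23 residual modules, the first is module d and all the others are module c.

- In the residual block made of 36 residual modules, the first is module d and all the others are module c.

In one sentence: in the shallower ResNet18 and ResNet34, no matter how many residual modules a residual block contains, the first is always module b and the rest are module a; in the deeper ResNet50, ResNet101 and ResNet152, the first is always module d and the rest are module c. The kernel sizes and kernel counts inside each module are listed in detail in the table. In addition, every convolution and pooling operation uses padding 'same', and every convolution is followed by a BN (batch normalization) layer.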
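
The rule "first module b (or d), the rest a (or c)" can be written down as a small planning function. This is only a sketch to make the rule concrete; `module_plan` is a hypothetical name, and the doubling of the kernel count from one residual block to the next follows the 64, 128, 256, 512 progression used later in the code.

```python
def module_plan(num_residuals, bottleneck=False, base_filters=64):
    """List (filters, module_kind) for every residual module, block by block."""
    first, rest = ('d', 'c') if bottleneck else ('b', 'a')
    plan = []
    filters = base_filters
    for count in num_residuals:
        for j in range(count):
            # Only the first module of each block uses the dashed-line variant
            plan.append((filters, first if j == 0 else rest))
        filters *= 2  # the next residual block doubles the kernel count
    return plan


print(module_plan([2, 2, 2, 2]))
# [(64, 'b'), (64, 'a'), (128, 'b'), (128, 'a'),
#  (256, 'b'), (256, 'a'), (512, 'b'), (512, 'a')]
```

Calling `module_plan([3, 4, 6, 3], bottleneck=True)` gives the 16-module plan of the 50-layer network in the same way.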

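III. Writing the ResNet code

3-1. Building ResNet18 step by step with the Keras functional API

In this part we follow the analysis above and build ResNet18 with the Keras functional API.

Since the core of the network is the four residual modules, we build those first.

3-1-1. Residual module a of conv2_x in ResNet18

First, residual module a of conv2_x in ResNet18: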

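    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add

    # Residual module a for conv2_x of ResNet18
    def resiidual_a(input_x):
        # Stride 1 keeps the output shape equal to input_x so the two can be added
        x = Conv2D(64, (3, 3), strides=1, padding='same')(input_x)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        x = Conv2D(64, (3, 3), strides=1, padding='same')(x)
        x = BatchNormalization()(x)
        # Note: this tutorial applies the last ReLU before the addition
        x = Activation('relu')(x)
        # Shortcut: add the unmodified input to the main-path output
        y = Add()([x, input_x])
        return y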

Because much of this code repeats, we rewrite it as:

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual module a for conv2_x of ResNet18
    def resiidual_a(input_x):
        x = Conv_BN_Relu(64, (3, 3), 1, input_x)
        x = Conv_BN_Relu(64, (3, 3), 1, x)
        y = Add()([x, input_x])
        return y

3-1-2. Residual module b of conv2_x in ResNet18

Following the analysis above, residual module b of conv2_x in ResNet18 is:

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual module b for conv2_x of ResNet18
    def resiidual_b(input_x):
        # Main path: the first convolution downsamples with stride 2
        x = Conv_BN_Relu(64, (3, 3), 2, input_x)
        x = Conv_BN_Relu(64, (3, 3), 1, x)
        # Shortcut branch: 1x1 convolution with stride 2 so the shapes match
        input_x = Conv_BN_Relu(64, (1, 1), 2, input_x)
        # Output
        y = Add()([x, input_x])
        return y

3-1-3. Wrapping residual modules a and b a second time

The residual modules a and b in the other residual blocks differ only in the number of kernels. In ResNet18, for example, conv3_x uses 128 kernels, conv4_x uses 256 and conv5_x uses 512.

So we can wrap residual module a one step further and make the kernel count a parameter:

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual module a of ResNet18; input_x must already have `filters` channels
    def resiidual_a(input_x, filters):
        x = Conv_BN_Relu(filters, (3, 3), 1, input_x)
        x = Conv_BN_Relu(filters, (3, 3), 1, x)
        y = Add()([x, input_x])
        return y

Residual module b is wrapped in the same way:

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual module b of ResNet18
    def resiidual_b(input_x, filters):
        # Main path: the first convolution downsamples with stride 2
        x = Conv_BN_Relu(filters, (3, 3), 2, input_x)
        x = Conv_BN_Relu(filters, (3, 3), 1, x)
        # Shortcut branch: 1x1 convolution with stride 2 so the shapes match
        input_x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
        # Output
        y = Add()([x, input_x])
        return y

At this point you will notice that residual modules a and b are still very similar, so we can merge them into a single function.

There are even simpler ways to wrap this, but those are a matter of Python programming, so I won't go into them; the point here is for you to understand the flow.

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual modules a and b of ResNet18, selected by flag
    def resiidual_a_or_b(input_x, filters, flag):
        if flag == 'a':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 1, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Output
            y = Add()([x, input_x])
            return y
        elif flag == 'b':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 2, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Shortcut branch: downsample
            input_x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
            # Output
            y = Add()([x, input_x])
            return y

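3-1-4. Overall network structure

The overall network structure is as follows:

    from tensorflow.keras.layers import Input, MaxPooling2D, GlobalAveragePooling2D, Flatten
    from tensorflow.keras.layers import Dense, Dropout, Softmax
    from tensorflow.keras.models import Model

    # Conv_BN_Relu and resiidual_a_or_b are the helpers defined in section 3-1-3

    # First layer
    # Note: the 7x7 convolution uses stride 1 here; the extra 2x reduction is done
    # by module b in conv2_x, so conv2_x still outputs 56x56 feature maps
    input_layer = Input((224, 224, 3))
    conv1 = Conv_BN_Relu(64, (7, 7), 1, input_layer)
    conv1_Maxpooling = MaxPooling2D((3, 3), strides=2, padding='same')(conv1)

    # conv2_x
    x = resiidual_a_or_b(conv1_Maxpooling, 64, 'b')
    x = resiidual_a_or_b(x, 64, 'a')

    # conv3_x
    x = resiidual_a_or_b(x, 128, 'b')
    x = resiidual_a_or_b(x, 128, 'a')

    # conv4_x
    x = resiidual_a_or_b(x, 256, 'b')
    x = resiidual_a_or_b(x, 256, 'a')

    # conv5_x
    x = resiidual_a_or_b(x, 512, 'b')
    x = resiidual_a_or_b(x, 512, 'a')

    # Last layer
    x = GlobalAveragePooling2D()(x)
    x = Flatten()(x)
    x = Dense(1000)(x)
    x = Dropout(0.5)(x)
    y = Softmax(axis=-1)(x)

    model = Model([input_layer], [y])
    model.summary()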

3-1-5. Complete ResNet18 code

The complete ResNet18 code:

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add
    from tensorflow.keras.layers import Input, MaxPooling2D, GlobalAveragePooling2D, Flatten
    from tensorflow.keras.layers import Dense, Dropout, Softmax
    from tensorflow.keras.models import Model

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual modules a and b of ResNet18, selected by flag
    # (simpler ways to wrap this exist; it is kept explicit to show the flow)
    def resiidual_a_or_b(input_x, filters, flag):
        if flag == 'a':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 1, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Output
            y = Add()([x, input_x])
            return y
        elif flag == 'b':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 2, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Shortcut branch: downsample
            input_x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
            # Output
            y = Add()([x, input_x])
            return y

    # First layer
    input_layer = Input((224, 224, 3))
    conv1 = Conv_BN_Relu(64, (7, 7), 1, input_layer)
    conv1_Maxpooling = MaxPooling2D((3, 3), strides=2, padding='same')(conv1)

    # conv2_x
    x = resiidual_a_or_b(conv1_Maxpooling, 64, 'b')
    x = resiidual_a_or_b(x, 64, 'a')

    # conv3_x
    x = resiidual_a_or_b(x, 128, 'b')
    x = resiidual_a_or_b(x, 128, 'a')

    # conv4_x
    x = resiidual_a_or_b(x, 256, 'b')
    x = resiidual_a_or_b(x, 256, 'a')

    # conv5_x
    x = resiidual_a_or_b(x, 512, 'b')
    x = resiidual_a_or_b(x, 512, 'a')

    # Last layer
    x = GlobalAveragePooling2D()(x)
    x = Flatten()(x)
    x = Dense(1000)(x)
    x = Dropout(0.5)(x)
    y = Softmax(axis=-1)(x)

    model = Model([input_layer], [y])
    model.summary()

3-1-6. Printed network structure

Check the output sizes in the printout yourself; if they match the analysis, the model has been built correctly.

Model: "model"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_1 (InputLayer) [(None, 224, 224, 3) 0

__________________________________________________________________________________________________

conv2d (Conv2D) (None, 224, 224, 64) 9472 input_1[0][0]

__________________________________________________________________________________________________

batch_normalization (BatchNorma (None, 224, 224, 64) 256 conv2d[0][0]

__________________________________________________________________________________________________

activation (Activation) (None, 224, 224, 64) 0 batch_normalization[0][0]

__________________________________________________________________________________________________

max_pooling2d (MaxPooling2D) (None, 112, 112, 64) 0 activation[0][0]

__________________________________________________________________________________________________

conv2d_1 (Conv2D) (None, 56, 56, 64) 36928 max_pooling2d[0][0]

__________________________________________________________________________________________________

batch_normalization_1 (BatchNor (None, 56, 56, 64) 256 conv2d_1[0][0]

__________________________________________________________________________________________________

activation_1 (Activation) (None, 56, 56, 64) 0 batch_normalization_1[0][0]

__________________________________________________________________________________________________

conv2d_2 (Conv2D) (None, 56, 56, 64) 36928 activation_1[0][0]

__________________________________________________________________________________________________

conv2d_3 (Conv2D) (None, 56, 56, 64) 4160 max_pooling2d[0][0]

__________________________________________________________________________________________________

batch_normalization_2 (BatchNor (None, 56, 56, 64) 256 conv2d_2[0][0]

__________________________________________________________________________________________________

batch_normalization_3 (BatchNor (None, 56, 56, 64) 256 conv2d_3[0][0]

__________________________________________________________________________________________________

activation_2 (Activation) (None, 56, 56, 64) 0 batch_normalization_2[0][0]

__________________________________________________________________________________________________

activation_3 (Activation) (None, 56, 56, 64) 0 batch_normalization_3[0][0]

__________________________________________________________________________________________________

add (Add) (None, 56, 56, 64) 0 activation_2[0][0]

activation_3[0][0]

__________________________________________________________________________________________________

conv2d_4 (Conv2D) (None, 56, 56, 64) 36928 add[0][0]

__________________________________________________________________________________________________

batch_normalization_4 (BatchNor (None, 56, 56, 64) 256 conv2d_4[0][0]

__________________________________________________________________________________________________

activation_4 (Activation) (None, 56, 56, 64) 0 batch_normalization_4[0][0]

__________________________________________________________________________________________________

conv2d_5 (Conv2D) (None, 56, 56, 64) 36928 activation_4[0][0]

__________________________________________________________________________________________________

batch_normalization_5 (BatchNor (None, 56, 56, 64) 256 conv2d_5[0][0]

__________________________________________________________________________________________________

activation_5 (Activation) (None, 56, 56, 64) 0 batch_normalization_5[0][0]

__________________________________________________________________________________________________

add_1 (Add) (None, 56, 56, 64) 0 activation_5[0][0]

add[0][0]

__________________________________________________________________________________________________

conv2d_6 (Conv2D) (None, 28, 28, 128) 73856 add_1[0][0]

__________________________________________________________________________________________________

batch_normalization_6 (BatchNor (None, 28, 28, 128) 512 conv2d_6[0][0]

__________________________________________________________________________________________________

activation_6 (Activation) (None, 28, 28, 128) 0 batch_normalization_6[0][0]

__________________________________________________________________________________________________

conv2d_7 (Conv2D) (None, 28, 28, 128) 147584 activation_6[0][0]

__________________________________________________________________________________________________

conv2d_8 (Conv2D) (None, 28, 28, 128) 8320 add_1[0][0]

__________________________________________________________________________________________________

batch_normalization_7 (BatchNor (None, 28, 28, 128) 512 conv2d_7[0][0]

__________________________________________________________________________________________________

batch_normalization_8 (BatchNor (None, 28, 28, 128) 512 conv2d_8[0][0]

__________________________________________________________________________________________________

activation_7 (Activation) (None, 28, 28, 128) 0 batch_normalization_7[0][0]

__________________________________________________________________________________________________

activation_8 (Activation) (None, 28, 28, 128) 0 batch_normalization_8[0][0]

__________________________________________________________________________________________________

add_2 (Add) (None, 28, 28, 128) 0 activation_7[0][0]

activation_8[0][0]

__________________________________________________________________________________________________

conv2d_9 (Conv2D) (None, 28, 28, 128) 147584 add_2[0][0]

__________________________________________________________________________________________________

batch_normalization_9 (BatchNor (None, 28, 28, 128) 512 conv2d_9[0][0]

__________________________________________________________________________________________________

activation_9 (Activation) (None, 28, 28, 128) 0 batch_normalization_9[0][0]

__________________________________________________________________________________________________

conv2d_10 (Conv2D) (None, 28, 28, 128) 147584 activation_9[0][0]

__________________________________________________________________________________________________

batch_normalization_10 (BatchNo (None, 28, 28, 128) 512 conv2d_10[0][0]

__________________________________________________________________________________________________

activation_10 (Activation) (None, 28, 28, 128) 0 batch_normalization_10[0][0]

__________________________________________________________________________________________________

add_3 (Add) (None, 28, 28, 128) 0 activation_10[0][0]

add_2[0][0]

__________________________________________________________________________________________________

conv2d_11 (Conv2D) (None, 14, 14, 256) 295168 add_3[0][0]

__________________________________________________________________________________________________

batch_normalization_11 (BatchNo (None, 14, 14, 256) 1024 conv2d_11[0][0]

__________________________________________________________________________________________________

activation_11 (Activation) (None, 14, 14, 256) 0 batch_normalization_11[0][0]

__________________________________________________________________________________________________

conv2d_12 (Conv2D) (None, 14, 14, 256) 590080 activation_11[0][0]

__________________________________________________________________________________________________

conv2d_13 (Conv2D) (None, 14, 14, 256) 33024 add_3[0][0]

__________________________________________________________________________________________________

batch_normalization_12 (BatchNo (None, 14, 14, 256) 1024 conv2d_12[0][0]

__________________________________________________________________________________________________

batch_normalization_13 (BatchNo (None, 14, 14, 256) 1024 conv2d_13[0][0]

__________________________________________________________________________________________________

activation_12 (Activation) (None, 14, 14, 256) 0 batch_normalization_12[0][0]

__________________________________________________________________________________________________

activation_13 (Activation) (None, 14, 14, 256) 0 batch_normalization_13[0][0]

__________________________________________________________________________________________________

add_4 (Add) (None, 14, 14, 256) 0 activation_12[0][0]

activation_13[0][0]

__________________________________________________________________________________________________

conv2d_14 (Conv2D) (None, 14, 14, 256) 590080 add_4[0][0]

__________________________________________________________________________________________________

batch_normalization_14 (BatchNo (None, 14, 14, 256) 1024 conv2d_14[0][0]

__________________________________________________________________________________________________

activation_14 (Activation) (None, 14, 14, 256) 0 batch_normalization_14[0][0]

__________________________________________________________________________________________________

conv2d_15 (Conv2D) (None, 14, 14, 256) 590080 activation_14[0][0]

__________________________________________________________________________________________________

batch_normalization_15 (BatchNo (None, 14, 14, 256) 1024 conv2d_15[0][0]

__________________________________________________________________________________________________

activation_15 (Activation) (None, 14, 14, 256) 0 batch_normalization_15[0][0]

__________________________________________________________________________________________________

add_5 (Add) (None, 14, 14, 256) 0 activation_15[0][0]

add_4[0][0]

__________________________________________________________________________________________________

conv2d_16 (Conv2D) (None, 7, 7, 512) 1180160 add_5[0][0]

__________________________________________________________________________________________________

batch_normalization_16 (BatchNo (None, 7, 7, 512) 2048 conv2d_16[0][0]

__________________________________________________________________________________________________

activation_16 (Activation) (None, 7, 7, 512) 0 batch_normalization_16[0][0]

__________________________________________________________________________________________________

conv2d_17 (Conv2D) (None, 7, 7, 512) 2359808 activation_16[0][0]

__________________________________________________________________________________________________

conv2d_18 (Conv2D) (None, 7, 7, 512) 131584 add_5[0][0]

__________________________________________________________________________________________________

batch_normalization_17 (BatchNo (None, 7, 7, 512) 2048 conv2d_17[0][0]

__________________________________________________________________________________________________

batch_normalization_18 (BatchNo (None, 7, 7, 512) 2048 conv2d_18[0][0]

__________________________________________________________________________________________________

activation_17 (Activation) (None, 7, 7, 512) 0 batch_normalization_17[0][0]

__________________________________________________________________________________________________

activation_18 (Activation) (None, 7, 7, 512) 0 batch_normalization_18[0][0]

__________________________________________________________________________________________________

add_6 (Add) (None, 7, 7, 512) 0 activation_17[0][0]

activation_18[0][0]

__________________________________________________________________________________________________

conv2d_19 (Conv2D) (None, 7, 7, 512) 2359808 add_6[0][0]

__________________________________________________________________________________________________

batch_normalization_19 (BatchNo (None, 7, 7, 512) 2048 conv2d_19[0][0]

__________________________________________________________________________________________________

activation_19 (Activation) (None, 7, 7, 512) 0 batch_normalization_19[0][0]

__________________________________________________________________________________________________

conv2d_20 (Conv2D) (None, 7, 7, 512) 2359808 activation_19[0][0]

__________________________________________________________________________________________________

batch_normalization_20 (BatchNo (None, 7, 7, 512) 2048 conv2d_20[0][0]

__________________________________________________________________________________________________

activation_20 (Activation) (None, 7, 7, 512) 0 batch_normalization_20[0][0]

__________________________________________________________________________________________________

add_7 (Add) (None, 7, 7, 512) 0 activation_20[0][0]

add_6[0][0]

__________________________________________________________________________________________________

global_average_pooling2d (Globa (None, 512) 0 add_7[0][0]

__________________________________________________________________________________________________

flatten (Flatten) (None, 512) 0 global_average_pooling2d[0][0]

__________________________________________________________________________________________________

dense (Dense) (None, 1000) 513000 flatten[0][0]

__________________________________________________________________________________________________

dropout (Dropout) (None, 1000) 0 dense[0][0]

__________________________________________________________________________________________________

softmax (Softmax) (None, 1000) 0 dropout[0][0]

==================================================================================================

Total params: 11,708,328

Trainable params: 11,698,600

Non-trainable params: 9,728

__________________________________________________________________________________________________

Process finished with exit code 0
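
The parameter totals at the bottom of the summary can also be cross-checked by hand. The sketch below recounts them from the layer layout of the code in section 3-1-5, using the standard counts for a convolution (kernel × kernel × input channels × output channels, plus one bias per output channel) and for batch normalization (two trainable and two non-trainable values per channel). The function names are hypothetical, and the tally assumes exactly the module order shown in the code.

```python
def conv_bn_params(kernel, in_ch, out_ch):
    """Return (trainable, non_trainable) parameters of one Conv2D + BatchNormalization."""
    conv = kernel * kernel * in_ch * out_ch + out_ch
    bn_trainable = 2 * out_ch      # gamma and beta
    bn_non_trainable = 2 * out_ch  # moving mean and moving variance
    return conv + bn_trainable, bn_non_trainable


def resnet18_params(num_classes=1000):
    """Recount the parameters of the ResNet18 built in section 3-1-5."""
    layers = [(7, 3, 64)]  # first layer: 7x7 convolution on the RGB input
    in_ch = 64
    for filters in (64, 128, 256, 512):
        # Module b: two 3x3 convolutions plus the 1x1 shortcut convolution
        layers += [(3, in_ch, filters), (3, filters, filters), (1, in_ch, filters)]
        # Module a: two 3x3 convolutions
        layers += [(3, filters, filters), (3, filters, filters)]
        in_ch = filters
    trainable = non_trainable = 0
    for kernel, cin, cout in layers:
        t, n = conv_bn_params(kernel, cin, cout)
        trainable += t
        non_trainable += n
    trainable += in_ch * num_classes + num_classes  # final dense layer
    return trainable, non_trainable


trainable, non_trainable = resnet18_params()
print(trainable, non_trainable, trainable + non_trainable)
# 11698600 9728 11708328
```

These three numbers agree with the "Trainable params", "Non-trainable params" and "Total params" lines printed above.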

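The other four architectures (34-layer, 50-layer, 101-layer and 152-layer) are built on exactly the same principle, so I won't repeat the explanation. If you want the material to stick, I recommend writing them yourself; as the saying goes, what you learn on paper always feels shallow. I have included the code below for anyone who wants to compare with my approach.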

3-2. Code for the 34-layer network

3-2-1. Complete 34-layer code (written explicitly)

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add
    from tensorflow.keras.layers import Input, MaxPooling2D, GlobalAveragePooling2D, Flatten
    from tensorflow.keras.layers import Dense, Dropout, Softmax
    from tensorflow.keras.models import Model

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual modules a and b (the same ones used for ResNet18), selected by flag
    # (simpler ways to wrap this exist; it is kept explicit to show the flow)
    def resiidual_a_or_b(input_x, filters, flag):
        if flag == 'a':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 1, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Output
            y = Add()([x, input_x])
            return y
        elif flag == 'b':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 2, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Shortcut branch: downsample
            input_x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
            # Output
            y = Add()([x, input_x])
            return y

    # First layer
    input_layer = Input((224, 224, 3))
    conv1 = Conv_BN_Relu(64, (7, 7), 1, input_layer)
    conv1_Maxpooling = MaxPooling2D((3, 3), strides=2, padding='same')(conv1)

    # conv2_x: 3 modules
    x = resiidual_a_or_b(conv1_Maxpooling, 64, 'b')
    x = resiidual_a_or_b(x, 64, 'a')
    x = resiidual_a_or_b(x, 64, 'a')

    # conv3_x: 4 modules
    x = resiidual_a_or_b(x, 128, 'b')
    x = resiidual_a_or_b(x, 128, 'a')
    x = resiidual_a_or_b(x, 128, 'a')
    x = resiidual_a_or_b(x, 128, 'a')

    # conv4_x: 6 modules
    x = resiidual_a_or_b(x, 256, 'b')
    x = resiidual_a_or_b(x, 256, 'a')
    x = resiidual_a_or_b(x, 256, 'a')
    x = resiidual_a_or_b(x, 256, 'a')
    x = resiidual_a_or_b(x, 256, 'a')
    x = resiidual_a_or_b(x, 256, 'a')

    # conv5_x: 3 modules
    x = resiidual_a_or_b(x, 512, 'b')
    x = resiidual_a_or_b(x, 512, 'a')
    x = resiidual_a_or_b(x, 512, 'a')

    # Last layer
    x = GlobalAveragePooling2D()(x)
    x = Flatten()(x)
    x = Dense(1000)(x)
    x = Dropout(0.5)(x)
    y = Softmax(axis=-1)(x)

    model = Model([input_layer], [y])
    model.summary()

3-2-2. Complete 34-layer code (written concisely)

This only changes how the middle layers are written, so that the code looks more compact:

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add
    from tensorflow.keras.layers import Input, MaxPooling2D, GlobalAveragePooling2D, Flatten
    from tensorflow.keras.layers import Dense, Dropout, Softmax
    from tensorflow.keras.models import Model

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Residual modules a and b, selected by flag
    # (simpler ways to wrap this exist; it is kept explicit to show the flow)
    def resiidual_a_or_b(input_x, filters, flag):
        if flag == 'a':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 1, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Output
            y = Add()([x, input_x])
            return y
        elif flag == 'b':
            # Main path
            x = Conv_BN_Relu(filters, (3, 3), 2, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            # Shortcut branch: downsample
            input_x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
            # Output
            y = Add()([x, input_x])
            return y

    # First layer
    input_layer = Input((224, 224, 3))
    conv1 = Conv_BN_Relu(64, (7, 7), 1, input_layer)
    conv1_Maxpooling = MaxPooling2D((3, 3), strides=2, padding='same')(conv1)
    x = conv1_Maxpooling

    # Middle layers: one entry per residual block (conv2_x .. conv5_x)
    filters = 64
    num_residuals = [3, 4, 6, 3]
    for i, num_residual in enumerate(num_residuals):
        for j in range(num_residual):
            if j == 0:
                # The first module of every block is module b
                x = resiidual_a_or_b(x, filters, 'b')
            else:
                x = resiidual_a_or_b(x, filters, 'a')
        # Double the kernel count for the next residual block
        filters = filters * 2

    # Last layer
    x = GlobalAveragePooling2D()(x)
    x = Flatten()(x)
    x = Dense(1000)(x)
    x = Dropout(0.5)(x)
    y = Softmax(axis=-1)(x)

    model = Model([input_layer], [y])
    model.summary()

3-3. Code for the 50-layer network

Replace residual module a with residual module c and residual module b with residual module d; as described in section 2-4, it is a straight substitution. Compare the code in this section with the code in section 3-2. The code is written very plainly, so one read-through is enough to see how the substitution works.

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add
    from tensorflow.keras.layers import Input, MaxPooling2D, GlobalAveragePooling2D, Flatten
    from tensorflow.keras.layers import Dense, Dropout, Softmax
    from tensorflow.keras.models import Model

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Bottleneck residual modules c and d, selected by flag
    # (simpler ways to wrap this exist; it is kept explicit to show the flow)
    def resiidual_c_or_d(input_x, filters, flag):
        if flag == 'c':
            # Main path: 1x1 reduce, 3x3, 1x1 expand to filters * 4 channels
            x = Conv_BN_Relu(filters, (1, 1), 1, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            x = Conv_BN_Relu(filters * 4, (1, 1), 1, x)
            # Output
            y = Add()([x, input_x])
            return y
        elif flag == 'd':
            # Main path: the first 1x1 convolution downsamples with stride 2
            x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            x = Conv_BN_Relu(filters * 4, (1, 1), 1, x)
            # Shortcut branch: downsample and match the filters * 4 channels
            input_x = Conv_BN_Relu(filters * 4, (1, 1), 2, input_x)
            # Output
            y = Add()([x, input_x])
            return y

    # First layer
    input_layer = Input((224, 224, 3))
    conv1 = Conv_BN_Relu(64, (7, 7), 1, input_layer)
    conv1_Maxpooling = MaxPooling2D((3, 3), strides=2, padding='same')(conv1)
    x = conv1_Maxpooling

    # Middle layers: one entry per residual block (conv2_x .. conv5_x)
    filters = 64
    num_residuals = [3, 4, 6, 3]
    for i, num_residual in enumerate(num_residuals):
        for j in range(num_residual):
            if j == 0:
                # The first module of every block is module d
                x = resiidual_c_or_d(x, filters, 'd')
            else:
                x = resiidual_c_or_d(x, filters, 'c')
        # Double the kernel count for the next residual block
        filters = filters * 2

    # Last layer
    x = GlobalAveragePooling2D()(x)
    x = Flatten()(x)
    x = Dense(1000)(x)
    x = Dropout(0.5)(x)
    y = Softmax(axis=-1)(x)

    model = Model([input_layer], [y])
    model.summary()
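
To check a bottleneck model's summary without reading every row, it helps to know what shape each residual block should produce. The sketch below traces the shapes for the code as written in this post (7×7 convolution with stride 1, pooling with stride 2, and a stride-2 first module in every residual block). `trace_stages` is a hypothetical helper; `expansion` is 1 for modules a/b and 4 for modules c/d.

```python
import math


def trace_stages(input_size=224, base_filters=64, num_stages=4, expansion=1):
    """Output shape (height, width, channels) after conv2_x .. conv5_x."""
    size = input_size            # first convolution: stride 1 in this post's code
    size = math.ceil(size / 2)   # 3x3 max pooling, stride 2, padding 'same'
    shapes = []
    filters = base_filters
    for _ in range(num_stages):
        size = math.ceil(size / 2)  # first module of each block has stride 2
        shapes.append((size, size, filters * expansion))
        filters *= 2
    return shapes


print(trace_stages(expansion=1))
# [(56, 56, 64), (28, 28, 128), (14, 14, 256), (7, 7, 512)]
print(trace_stages(expansion=4))
# [(56, 56, 256), (28, 28, 512), (14, 14, 1024), (7, 7, 2048)]
```

The first line matches the ResNet18 printout in section 3-1-6. The second line is what the bottleneck code above should produce, which also explains why module c can add its input directly: the input already has `filters * 4` channels.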

3-4. Code for the 101-layer network

Compared with the 50-layer code, only one variable changes:

num_residuals = [3, 4, 6, 3] becomes num_residuals = [3, 4, 23, 3]

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add
    from tensorflow.keras.layers import Input, MaxPooling2D, GlobalAveragePooling2D, Flatten
    from tensorflow.keras.layers import Dense, Dropout, Softmax
    from tensorflow.keras.models import Model

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Bottleneck residual modules c and d, selected by flag
    # (simpler ways to wrap this exist; it is kept explicit to show the flow)
    def resiidual_c_or_d(input_x, filters, flag):
        if flag == 'c':
            # Main path: 1x1 reduce, 3x3, 1x1 expand to filters * 4 channels
            x = Conv_BN_Relu(filters, (1, 1), 1, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            x = Conv_BN_Relu(filters * 4, (1, 1), 1, x)
            # Output
            y = Add()([x, input_x])
            return y
        elif flag == 'd':
            # Main path: the first 1x1 convolution downsamples with stride 2
            x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            x = Conv_BN_Relu(filters * 4, (1, 1), 1, x)
            # Shortcut branch: downsample and match the filters * 4 channels
            input_x = Conv_BN_Relu(filters * 4, (1, 1), 2, input_x)
            # Output
            y = Add()([x, input_x])
            return y

    # First layer
    input_layer = Input((224, 224, 3))
    conv1 = Conv_BN_Relu(64, (7, 7), 1, input_layer)
    conv1_Maxpooling = MaxPooling2D((3, 3), strides=2, padding='same')(conv1)
    x = conv1_Maxpooling

    # Middle layers: one entry per residual block (conv2_x .. conv5_x)
    filters = 64
    num_residuals = [3, 4, 23, 3]
    for i, num_residual in enumerate(num_residuals):
        for j in range(num_residual):
            if j == 0:
                # The first module of every block is module d
                x = resiidual_c_or_d(x, filters, 'd')
            else:
                x = resiidual_c_or_d(x, filters, 'c')
        # Double the kernel count for the next residual block
        filters = filters * 2

    # Last layer
    x = GlobalAveragePooling2D()(x)
    x = Flatten()(x)
    x = Dense(1000)(x)
    x = Dropout(0.5)(x)
    y = Softmax(axis=-1)(x)

    model = Model([input_layer], [y])
    model.summary()

3-5. Code for the 152-layer network

Compared with the 50-layer code, again only one variable changes:

num_residuals = [3, 4, 6, 3] becomes num_residuals = [3, 8, 36, 3]

    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, Add
    from tensorflow.keras.layers import Input, MaxPooling2D, GlobalAveragePooling2D, Flatten
    from tensorflow.keras.layers import Dense, Dropout, Softmax
    from tensorflow.keras.models import Model

    # Convolution -> batch normalization -> ReLU
    def Conv_BN_Relu(filters, kernel_size, strides, input_layer):
        x = Conv2D(filters, kernel_size, strides=strides, padding='same')(input_layer)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        return x

    # Bottleneck residual modules c and d, selected by flag
    # (simpler ways to wrap this exist; it is kept explicit to show the flow)
    def resiidual_c_or_d(input_x, filters, flag):
        if flag == 'c':
            # Main path: 1x1 reduce, 3x3, 1x1 expand to filters * 4 channels
            x = Conv_BN_Relu(filters, (1, 1), 1, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            x = Conv_BN_Relu(filters * 4, (1, 1), 1, x)
            # Output
            y = Add()([x, input_x])
            return y
        elif flag == 'd':
            # Main path: the first 1x1 convolution downsamples with stride 2
            x = Conv_BN_Relu(filters, (1, 1), 2, input_x)
            x = Conv_BN_Relu(filters, (3, 3), 1, x)
            x = Conv_BN_Relu(filters * 4, (1, 1), 1, x)
            # Shortcut branch: downsample and match the filters * 4 channels
            input_x = Conv_BN_Relu(filters * 4, (1, 1), 2, input_x)
            # Output
            y = Add()([x, input_x])
            return y

    # First layer
    input_layer = Input((224, 224, 3))
    conv1 = Conv_BN_Relu(64, (7, 7), 1, input_layer)
    conv1_Maxpooling = MaxPooling2D((3, 3), strides=2, padding='same')(conv1)
    x = conv1_Maxpooling

    # Middle layers: one entry per residual block (conv2_x .. conv5_x)
    filters = 64
    num_residuals = [3, 8, 36, 3]
    for i, num_residual in enumerate(num_residuals):
        for j in range(num_residual):
            if j == 0:
                # The first module of every block is module d
                x = resiidual_c_or_d(x, filters, 'd')
            else:
                x = resiidual_c_or_d(x, filters, 'c')
        # Double the kernel count for the next residual block
        filters = filters * 2

    # Last layer
    x = GlobalAveragePooling2D()(x)
    x = Flatten()(x)
    x = Dense(1000)(x)
    x = Dropout(0.5)(x)
    y = Softmax(axis=-1)(x)

    model = Model([input_layer], [y])
    model.summary()

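Summary:

- The post first explained the structure of the ResNet network in detail, then used the 18-layer ResNet as an example to walk step by step through how to build it.

- The same approach was then used to build the 34-, 50-, 101- and 152-layer ResNet convolutional networks.

That is everything for this post. I will keep updating the Keras tutorial series; the outline follows below. If you are interested, feel free to follow my updates and learn along (most of the content is hands-on).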