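Setting Up a k8s Environment

Setup Overview

Prepare at least three machines. If your computer is powerful enough, run three virtual machines on it; if CPU or memory is short, rent instances from Alibaba Cloud or Tencent Cloud instead.

k8s can be deployed with a single master or with multiple masters. For learning purposes three machines are enough: one master and two nodes.

Requirements for each machine

- CPU: two cores or more

- Memory: at least 2 GB

- The three machines must be able to reach each other over the network

- Firewall turned off; otherwise many problems show up later and the required ports have to be opened one by one.

- SELinux disabled

- Swap turned off. By default the kubelet will not start while swap is enabled, and these machines are short on memory anyway, so turn the swap partition off.

- Clocks synchronized

The official guide is a useful reference: https://kubernetes.io/zh/docs/setup/production-environment/tools/kubeadm/install-kubeadm/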
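The CPU and memory requirements above can be checked with a small script before going further. This is only a sketch: the function names `meets_minimum` and `report_host` are made up for this example, and the 1700 MB memory floor is an assumption (a nominal 2 GB machine reports slightly less than 2048 MB, and 1700 MB is, to my knowledge, the floor kubeadm's own preflight check uses).

```shell
# Hypothetical helper: succeeds when the given CPU count and memory (in MB)
# meet the minimums. MIN_CPUS and MIN_MEM_MB can be overridden; the 1700 MB
# default is an assumption, see the note above.
meets_minimum() {
  local cpus="$1" mem_mb="$2"
  local min_cpus="${MIN_CPUS:-2}" min_mem_mb="${MIN_MEM_MB:-1700}"
  [ "$cpus" -ge "$min_cpus" ] && [ "$mem_mb" -ge "$min_mem_mb" ]
}

# Reads the real values on a Linux host and prints a one-line verdict.
report_host() {
  local cpus mem_mb
  cpus="$(nproc)"
  mem_mb="$(awk '/^MemTotal:/ {print int($2/1024)}' /proc/meminfo)"
  if meets_minimum "$cpus" "$mem_mb"; then
    echo "OK: ${cpus} CPUs, ${mem_mb} MB"
  else
    echo "TOO SMALL: ${cpus} CPUs, ${mem_mb} MB"
  fi
}
```

Calling `report_host` on each of the three machines gives a quick verdict before any packages are installed.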

Machines

| IP | Hostname |
|---|---|
| 10.0.4.9 | master |
| 10.0.4.11 | node01 |
| 10.0.4.13 | node02 |

Set the hostname

# Run on 10.0.4.9

hostnamectl set-hostname master

# Run on 10.0.4.11

hostnamectl set-hostname node01

# Run on 10.0.4.13

hostnamectl set-hostname node02

Configure hosts

Run on every machine

cat >> /etc/hosts<<EOF

10.0.4.9 master

10.0.4.11 node01

10.0.4.13 node02

EOF
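Running the `cat >> /etc/hosts` command a second time appends the same three lines again. A minimal sketch of an idempotent alternative follows; `add_host_entry` is a hypothetical helper name, and it assumes plain hostnames without regex metacharacters, as in this tutorial.

```shell
# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE only when NAME does not already appear as a
# whitespace-delimited word in it, so the script is safe to run repeatedly.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  if grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

On a real machine this would be called as root once per entry, for example `add_host_entry /etc/hosts 10.0.4.9 master`.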

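Network time synchronization

Keep the clocks on all machines in sync to avoid problems later.

Run on every machine

Check whether ntpdate is installed

which ntpdate

# Install it if it is missing

yum install ntpdate -y

Set every machine to the Shanghai time zone

ln -snf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

bash -c "echo 'Asia/Shanghai' > /etc/timezone"

# Update the time from an Alibaba Cloud NTP server

ntpdate ntp1.aliyun.com

Check the current time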

[root@node01 ~]# date

Tue Nov 1 00:08:10 CST 2022

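Disable SELinux

Run on all nodes so that containers can read the host filesystem

# Disable temporarily (until the next reboot)

setenforce 0

# Disable permanently

sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config

# Alternatively, set it to permissive, which has much the same effect (use one of the two sed commands, not both)

sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

Turn off the firewall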

systemctl stop firewalld

systemctl disable firewalld

systemctl status firewalld

If you leave the firewall on, open the ports that the nodes use to talk to each other

| Component | Ports |
|---|---|
| api-server | 8080, 6443 |
| controller-manager | 10252 |
| scheduler | 10251 |
| kubelet | 10250, 10255 |
| etcd | 2379, 2380 |
| dns | 53 (tcp, udp) |
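For readers who keep firewalld running, the table can be turned into `firewall-cmd` calls. The sketch below only prints the commands so they can be reviewed first. The port lists are copied from the table, and the variable and function names are made up for this example. As far as I know, flannel's VXLAN backend (used later in this guide) also needs UDP 8472; that port is not in the table, so it is left out of the lists and mentioned here only as an assumption.

```shell
# Ports copied from the table above.
K8S_TCP_PORTS="6443 8080 10250 10251 10252 10255 2379 2380 53"
K8S_UDP_PORTS="53"

# Prints (does not run) one firewall-cmd invocation per port, followed by a
# reload so the permanent rules take effect.
print_firewall_cmds() {
  local p
  for p in $K8S_TCP_PORTS; do
    echo "firewall-cmd --permanent --add-port=${p}/tcp"
  done
  for p in $K8S_UDP_PORTS; do
    echo "firewall-cmd --permanent --add-port=${p}/udp"
  done
  echo "firewall-cmd --reload"
}
```

After checking the output, `print_firewall_cmds | sudo sh` would apply it on a node.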

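Turn off swap

Swap has to be off because, by default, the kubelet refuses to start while it is enabled; turning it off also keeps performance predictable.

# Turn swap off for the current boot

swapoff -a

# Turn it off permanently by commenting out the swap entries in /etc/fstab

sed -ri 's/.*swap.*/#&/' /etc/fstab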

[root@node01 ~]# free -m

total used free shared buff/cache available

Mem: 3694 596 435 0 2662 2806

Swap: 1024 4 1020

[root@node01 ~]# swapoff -a

[root@node01 ~]# sed -ri 's/.*swap.*/#&/' /etc/fstab

[root@node01 ~]# free -m

total used free shared buff/cache available

Mem: 3694 596 434 0 2663 2806

Swap: 0 0 0
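The `sed` expression above prefixes every line containing "swap" with `#`, including lines that are already commented out. The sketch below is a slightly stricter variant plus a check that swap is really off. Both function names are hypothetical, and `sed -ri` assumes GNU sed, which is what CentOS ships.

```shell
# comment_out_swap FILE
# Comments out fstab lines that have "swap" as a whitespace-delimited field,
# skipping lines that already start with "#".
comment_out_swap() {
  sed -ri '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

# swap_is_off [MEMINFO]
# Succeeds when SwapTotal in the given file (default /proc/meminfo) is 0 kB.
swap_is_off() {
  awk '/^SwapTotal:/ { exit ($2 == 0 ? 0 : 1) }' "${1:-/proc/meminfo}"
}
```

Trying `comment_out_swap` on a copy of `/etc/fstab` first is a cheap way to confirm it touches only the intended line.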

Configure the k8s package repository

Configure on all nodes

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[k8s]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

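If you have a local yum mirror, point baseurl at file://dir to install offline

Install kubeadm, kubelet and kubectl

Starting with version 1.24, Kubernetes removed dockershim (its built-in Docker support). To keep using Docker as the container runtime, install a pinned earlier version.

We use version 1.23.9

yum install -y kubelet-1.23.9 kubectl-1.23.9 kubeadm-1.23.9

kubeadm: the command that bootstraps the cluster. kubelet: runs on every node in the cluster and starts Pods and containers. kubectl: the command-line tool for talking to the cluster.

Note from the official documentation

kubeadm will not install or manage kubelet or kubectl for you, so you need to make sure they match the version of the control plane that kubeadm installs. Otherwise there is a risk of version skew, which can lead to unexpected errors and problems. One minor version of skew between the control plane and the kubelet is supported, but the kubelet version may never exceed the API server version. For example, a kubelet at 1.7.0 is fully compatible with an API server at 1.8.0, but not the other way around.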
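The skew rule quoted above can be written as a small check. This is a sketch under stated assumptions: `minor_of` and `kubelet_skew_ok` are hypothetical names, both versions are assumed to share the same major version, and the default allowed skew of one minor version is taken from the quoted text (it can be overridden with a third argument).

```shell
# minor_of VERSION: prints the minor component, e.g. "v1.23.9" -> 23.
minor_of() {
  echo "${1#v}" | cut -d. -f2
}

# kubelet_skew_ok APISERVER_VERSION KUBELET_VERSION [MAX_SKEW]
# Succeeds when the kubelet is not newer than the API server and is at most
# MAX_SKEW minor versions behind it (default 1).
kubelet_skew_ok() {
  local api kubelet max
  api="$(minor_of "$1")"
  kubelet="$(minor_of "$2")"
  max="${3:-1}"
  [ "$kubelet" -le "$api" ] && [ $((api - kubelet)) -le "$max" ]
}
```

With the example from the text, `kubelet_skew_ok 1.8.0 1.7.0` succeeds and `kubelet_skew_ok 1.7.0 1.8.0` fails.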

Check that the installed version is correct

[root@master k8s]# yum info kubeadm

Loaded plugins: fastestmirror, langpacks

Repository epel is listed more than once in the configuration

Loading mirror speeds from cached hostfile

Installed Packages

Name : kubeadm

Arch : x86_64

Version : 1.23.9

Release : 0

Size : 43 M

Repo : installed

From repo : k8s

Summary : Command-line utility for administering a Kubernetes cluster.

URL : https://kubernetes.io

License : ASL 2.0

Description : Command-line utility for administering a Kubernetes cluster.

Available Packages

Name : kubeadm

Arch : x86_64

Version : 1.25.3

Release : 0

Size : 9.8 M

Repo : k8s

Summary : Command-line utility for administering a Kubernetes cluster.

URL : https://kubernetes.io

License : ASL 2.0

Description : Command-line utility for administering a Kubernetes cluster.

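You can start kubelet at this point if you like; I started it later

systemctl start kubelet

systemctl enable kubelet

Set the cgroup driver

Docker's default cgroup driver is cgroupfs; change it to match the one k8s uses

# Edit /etc/docker/daemon.json and add the following line

# It sets Docker's cgroup driver to systemd, as officially recommended. Docker and the kubelet must use the same cgroup driver, otherwise the kubelet fails to start

"exec-opts": ["native.cgroupdriver=systemd"],

# Restart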

systemctl daemon-reload

systemctl restart docker
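The single line shown above has to sit inside a valid JSON object. A minimal sketch of a complete file, written through a hypothetical helper `write_daemon_json`, is shown below. It overwrites the target path, so if `/etc/docker/daemon.json` already holds other settings (registry mirrors, for example), merge the key by hand instead.

```shell
# write_daemon_json PATH
# Writes a minimal Docker daemon config that selects the systemd cgroup
# driver. Overwrites PATH.
write_daemon_json() {
  cat > "$1" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}
```

After `write_daemon_json /etc/docker/daemon.json` and the restart commands above, `docker info --format '{{.CgroupDriver}}'` should report `systemd`.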

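Deploy the master node

apiserver-advertise-address: the address of the master node

image-repository: use the Alibaba Cloud image registry, otherwise pulls are very slow

kubernetes-version: the version number

Leave the other settings at their defaults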

kubeadm init \

--apiserver-advertise-address=10.0.4.9 \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version=v1.23.9 \

--pod-network-cidr=10.244.0.0/16 \

--service-cidr=10.96.0.0/16

# Save the printed kubeadm join command so you do not have to hunt for it later

kubeadm join 10.0.4.9:6443 --token x22atb.reldvil72yia0ac4 \

--discovery-token-ca-cert-hash sha256:c32a489c444bf5242543811c1aad5b5925693341699756ea4523a4228da6e5ff

# The log also prints the following commands, which are needed later

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

# If you lose the join command, generate a new token and print it again

kubeadm token create --print-join-command

Second deployment method (optional)

# Export the default configuration

kubeadm config print init-defaults > init-kubeadm.conf

# Edit the exported configuration as needed

# init

kubeadm init --config init-kubeadm.conf
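As an illustration of what the edited file might contain, the sketch below writes a config that mirrors the flags of the first method. It assumes the `kubeadm.k8s.io/v1beta3` config API, which is the version kubeadm 1.23 prints with `init-defaults`. The helper name `write_kubeadm_config` is made up, and the real exported file contains more fields than shown here.

```shell
# write_kubeadm_config PATH
# Writes a kubeadm config equivalent to the command-line flags used in the
# first deployment method. Overwrites PATH.
write_kubeadm_config() {
  cat > "$1" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 10.0.4.9
  bindPort: 6443
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.23.9
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
  serviceSubnet: 10.96.0.0/16
EOF
}
```

Keeping the settings in a file makes a later `kubeadm reset` and re-init repeatable without retyping the flags.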

Check the version

kubectl version

# Error: The connection to the server localhost:8080 was refused - did you specify the right host or port

# The commands printed by kubeadm init were not run earlier, so run them now

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

# Run kubectl version again; the error is gone

# To use kubectl on another machine,

# copy $HOME/.kube/config to that machine

List the nodes

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady control-plane,master 36m v1.23.9

# The status is NotReady, so the node is not usable yet; check the log

tail -f /var/log/messages

# The log shows that a network plugin has to be installed

Nov 1 01:06:05 VM-4-9-centos kubelet: E1101 01:06:05.769861 7352 kubelet.go:2391] "Container runtime network not ready" networkReady="NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized"

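Install a network plugin so that Pods can communicate with each other

I chose kube-flannel here; the Calico CNI plugin is another option

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

This URL often cannot be reached directly, in which case the file has to be obtained some other way. The copy I downloaded is shown below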

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.1.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.20.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.20.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.20.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.20.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

# The yml shows that this value matches the --pod-network-cidr=10.244.0.0/16 passed when the master was initialized

# When using kube-flannel, it is simplest to keep this default value

  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
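Because a mismatch between the two CIDR values is easy to miss, it can be checked before applying the manifest. The sketch below reads the `"Network"` value out of the yml with `sed`; the function names `flannel_network` and `cidr_matches` are hypothetical, and the pattern assumes the value sits on one line, as it does in the manifest above.

```shell
# flannel_network FILE
# Prints the first "Network" value found in FILE (the net-conf.json entry).
flannel_network() {
  sed -n 's/.*"Network":[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# cidr_matches FILE CIDR
# Succeeds when the flannel Network equals the pod CIDR given to kubeadm.
cidr_matches() {
  [ "$(flannel_network "$1")" = "$2" ]
}
```

For this guide the check would be `cidr_matches kube-flannel.yml 10.244.0.0/16`.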

Install the network plugin with kubectl

[root@master k8s]# kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

Image pulls may be slow during installation

See http://t.csdn.cn/bSam5

Wait a while, then check the nodes again; the status is now Ready

[root@master k8s]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane,master 50m v1.23.9

# The log looks normal

[root@master k8s]# tail -f /var/log/messages

Nov 1 01:42:27 VM-4-9-centos ntpd[655]: Listen normally on 9 cni0 10.244.0.1 UDP 123

Nov 1 01:42:27 VM-4-9-centos ntpd[655]: Listen normally on 10 veth91dca84c fe80::e86e:34ff:fe92:50a7 UDP 123

Nov 1 01:42:27 VM-4-9-centos ntpd[655]: Listen normally on 11 cni0 fe80::d097:f0ff:fed3:e444 UDP 123

Nov 1 01:42:27 VM-4-9-centos ntpd[655]: Listen normally on 12 veth77048412 fe80::9cf4:6cff:fee8:5547 UDP 123

Nov 1 01:43:01 VM-4-9-centos systemd: Started Session 4243 of user root.

Nov 1 01:44:01 VM-4-9-centos systemd: Started Session 4244 of user root.

List the pods

[root@master k8s]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-flannel kube-flannel-ds-lpfvv 1/1 Running 0 4m29s

kube-system coredns-6d8c4cb4d-96hvd 1/1 Running 0 53m

kube-system coredns-6d8c4cb4d-wrm4s 1/1 Running 0 53m

kube-system etcd-master 1/1 Running 0 53m

kube-system kube-apiserver-master 1/1 Running 0 53m

kube-system kube-controller-manager-master 1/1 Running 0 53m

kube-system kube-proxy-7kqc5 1/1 Running 0 53m

kube-system kube-scheduler-master 1/1 Running 0 53m

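If the installation fails, kubeadm reset restores the machine to its previous state so you can start over

Join the nodes to the cluster

# Run the join command recorded earlier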

kubeadm join 10.0.4.9:6443 --token x22atb.reldvil72yia0ac4 \

--discovery-token-ca-cert-hash sha256:c32a489c444bf5242543811c1aad5b5925693341699756ea4523a4228da6e5ff

# Error: iptables must be allowed to see bridged traffic

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

Allow iptables to see bridged traffic

Set net.bridge.bridge-nf-call-iptables = 1 so that iptables on the Linux node correctly sees bridged traffic

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

overlay

br_netfilter

EOF

sudo modprobe overlay

sudo modprobe br_netfilter

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

EOF

# Apply the settings without rebooting

sudo sysctl --system

Run the join again

# If the token was forgotten or has expired, generate a new one (--ttl=0 means it never expires)

kubeadm token create --print-join-command --ttl=0

[root@node01 ~]# kubeadm join 10.0.4.9:6443 --token q1g5bp.qtc45zl0umpu1viy --discovery-token-ca-cert-hash sha256:c32a489c444bf5242543811c1aad5b5925693341699756ea4523a4228da6e5ff

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

###############

[root@node01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane,master 125m v1.23.9

node01 NotReady <none> 53s v1.23.9

# The other node joined as well; after a short wait all nodes are Ready

[root@node02 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane,master 129m v1.23.9

node01 Ready <none> 5m47s v1.23.9

node02 Ready <none> 3m44s v1.23.9
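Instead of re-running `kubectl get nodes` by hand, the wait can be scripted. A minimal sketch: `not_ready_nodes` (a hypothetical name) reads the output of `kubectl get nodes --no-headers` on standard input and prints the nodes that are not yet Ready. It treats any STATUS other than exactly `Ready` as not ready, which also covers values such as `Ready,SchedulingDisabled`.

```shell
# not_ready_nodes
# Reads `kubectl get nodes --no-headers` output on stdin and prints the name
# of every node whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}
```

A polling loop on the master could then look like `until [ -z "$(kubectl get nodes --no-headers | not_ready_nodes)" ]; do sleep 5; done`. `kubectl wait --for=condition=Ready nodes --all --timeout=300s` is a built-in alternative.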

If you see a message suggesting something like systemctl enable kubelet.service, copy the command and run it

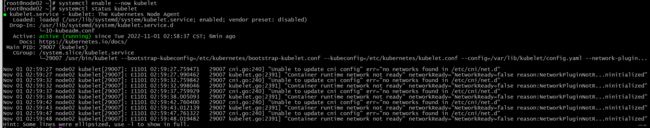

Start kubelet

If startup reports errors, check the log with journalctl -f -u kubelet

# Start it now and enable it at boot

systemctl enable --now kubelet

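Install the dashboard

Download https://raw.githubusercontent.com/kubernetes/dashboard/v2.6.0/aio/deploy/recommended.yaml

I edited the Service in it, adding type: NodePort and exposing port 30000. Without this change the dashboard can only be reached by deploying an ingress. The edited file is applied below as kube-dashboard.yml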

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30000
  selector:
    k8s-app: kubernetes-dashboard

[root@master k8s]# kubectl apply -f kube-dashboard.yml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

[root@master k8s]# kubectl get pods -A | grep dashboard

kubernetes-dashboard dashboard-metrics-scraper-6f669b9c9b-prj4m 1/1 Running 0 63s

kubernetes-dashboard kubernetes-dashboard-67b9478795-zzrds 1/1 Running 0 63s

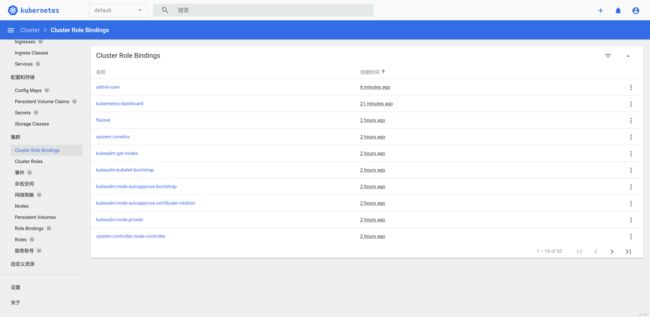

Create an administrator account for the dashboard

kube-dashboard-adminuser.yml

---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard

[root@master k8s]# kubectl apply -f kube-dashboard-adminuser.yml

serviceaccount/admin-user created

clusterrolebinding.rbac.authorization.k8s.io/admin-user created

# 确定 ip

kubectl get pods,svc -n kubernetes-dashboard -o wide

访问https://ip:9200

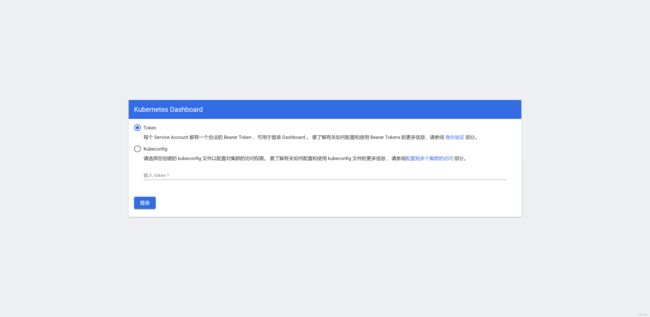

获取登录token

[root@master k8s]# kubectl describe secrets -n kubernetes-dashboard admin-user-token | grep token | awk 'NR==3{print $2}'

eyJhbGciOiJSUzI1NiIsImtpZCI6Imh3NGJpbjlZQjZubDg0OWY2Ri1xMDdLSkV6dC1fM2MyMzVmVW5XZnhlelkifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLXd4bnNxIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiJkOGJkZmNhNi1jZDAxLTQzOTgtODE1Mi0wZGYyNGYxOTQzMzMiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZXJuZXRlcy1kYXNoYm9hcmQ6YWRtaW4tdXNlciJ9.qBmPRz_8fUjYbFAn9jMTjHtZMeBvEQPRBtkwC5ZuYE4CNU3-6z81G3c8uuWxrZvgEei_BYUXrYxlChMksQkMMhn6xjR3o1PhLEHAz7o6Vv0jeYfXY0-aFe2PRzSc3aZjoEHhz7-G5OMSiGU9W1_Ltg7PqetwfXSPo39rIweo4P0AKY689IChq3nZXDX2MjExvuqVsCVgRSilPf1azUsZLC_R-cwHfOloPDgBWmbKDatbL_LqRtmMQ705YQH_G89I257Mf2Ki-KsCB8sm7uqrt1EwU4ovU5UEDk05hwxcEXIay2m5vXyVOESysJMR8g9j2F4B8ulv0ixpE41-eC0tlQ

Login succeeded