Creating Training Datasets with AI

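In this section we use SAM (Segment Anything Model) to turn bounding boxes into a segmentation dataset, which is very useful when building instance segmentation datasets. I will walk through my code step by step, and I hope you find it useful.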

Step 1. Installation and Setup

    import torch
    import torchvision

    print("PyTorch version:", torch.__version__)
    print("Torchvision version:", torchvision.__version__)
    print("CUDA is available:", torch.cuda.is_available())

    import sys

    # Notebook shell commands: install dependencies, then download the
    # sample images and the SAM ViT-H checkpoint.
    !{sys.executable} -m pip install opencv-python matplotlib
    !{sys.executable} -m pip install 'git+https://github.com/facebookresearch/segment-anything.git'
    !mkdir images
    !wget -P images https://raw.githubusercontent.com/facebookresearch/segment-anything/main/notebooks/images/truck.jpg
    !wget -P images https://raw.githubusercontent.com/facebookresearch/segment-anything/main/notebooks/images/groceries.jpg
    !wget https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth

    import numpy as np
    import matplotlib.pyplot as plt
    import cv2


    def show_mask(mask, ax, random_color=False):
        # Overlay a semi-transparent mask on the given axes.
        if random_color:
            color = np.concatenate([np.random.random(3), np.array([0.6])], axis=0)
        else:
            color = np.array([30/255, 144/255, 255/255, 0.6])
        h, w = mask.shape[-2:]
        mask_image = mask.reshape(h, w, 1) * color.reshape(1, 1, -1)
        ax.imshow(mask_image)


    def show_points(coords, labels, ax, marker_size=375):
        # Positive points (label 1) in green, negative points (label 0) in red.
        pos_points = coords[labels==1]
        neg_points = coords[labels==0]
        ax.scatter(pos_points[:, 0], pos_points[:, 1], color='green', marker='*', s=marker_size, edgecolor='white', linewidth=1.25)
        ax.scatter(neg_points[:, 0], neg_points[:, 1], color='red', marker='*', s=marker_size, edgecolor='white', linewidth=1.25)


    def show_box(box, ax):
        # Draw an [x0, y0, x1, y1] box as a green outline.
        x0, y0 = box[0], box[1]
        w, h = box[2] - box[0], box[3] - box[1]
        ax.add_patch(plt.Rectangle((x0, y0), w, h, edgecolor='green', facecolor=(0,0,0,0), lw=2))


    sys.path.append("..")
    from segment_anything import sam_model_registry, SamPredictor

    sam_checkpoint = "sam_vit_h_4b8939.pth"
    model_type = "vit_h"
    device = "cuda"

    # Load the model, move it to the GPU and wrap it in a predictor.
    sam = sam_model_registry[model_type](checkpoint=sam_checkpoint)
    sam.to(device=device)
    predictor = SamPredictor(sam)

    # OpenCV loads images as BGR; SAM expects RGB.
    image = cv2.imread('/notebooks/images/000027.jpg')
    image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

Image segmentation

Step 2. Getting the Masks

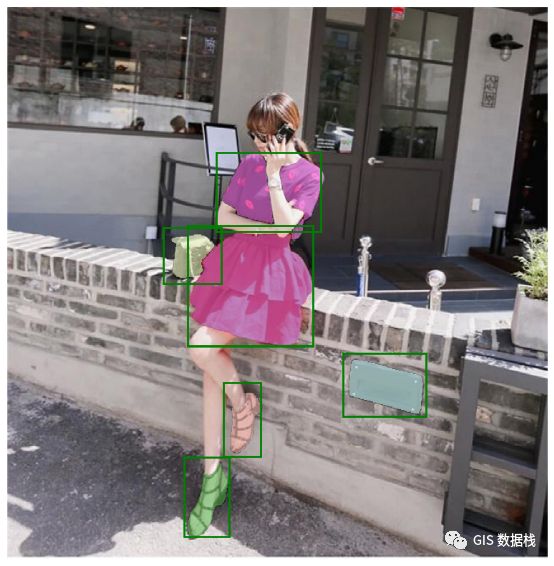

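The bounding boxes for these masks come from converting the YOLO-format labels into the box format SAM expects: absolute pixel coordinates [x0, y0, x1, y1]. Here are the boxes for this image. Anyone who has worked with YOLO will find them familiar.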
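The conversion described above can be sketched roughly as follows. This is a minimal sketch, not the code used to produce the boxes in this post: it assumes the common YOLO label layout `class x_center y_center width height` with the four box values normalized to [0, 1], and the helper names `yolo_to_xyxy` and `load_boxes` are hypothetical.

```python
def yolo_to_xyxy(label_line, img_w, img_h):
    """Convert one YOLO label line to (class_id, [x0, y0, x1, y1]) in pixels.

    Assumes 'class x_center y_center width height', with the box values
    normalized to [0, 1] relative to the image size.
    """
    parts = label_line.split()
    class_id = int(parts[0])
    xc, yc, w, h = (float(v) for v in parts[1:5])
    x0 = round((xc - w / 2) * img_w)
    y0 = round((yc - h / 2) * img_h)
    x1 = round((xc + w / 2) * img_w)
    y1 = round((yc + h / 2) * img_h)
    return class_id, [x0, y0, x1, y1]


def load_boxes(label_text, img_w, img_h):
    """Convert a whole label file (given as text) to a list of pixel boxes."""
    boxes = []
    for line in label_text.splitlines():
        if line.strip():  # skip empty lines
            _, box = yolo_to_xyxy(line, img_w, img_h)
            boxes.append(box)
    return boxes
```

The list returned by `load_boxes` has the same shape as `bounding_boxes` in the next snippet, so it could be passed to `torch.tensor` in the same way.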

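    bounding_boxes = [[209, 532, 262, 626], [256, 444, 300, 531], [213, 258, 362, 401], [200, 96, 376, 623], [247, 172, 371, 265], [397, 409, 496, 484], [184, 261, 253, 327]]

To feed these boxes to the SAM predictor, we convert them to a tensor.

    input_boxes = torch.tensor(bounding_boxes, device=predictor.device)

After that, we rescale the boxes to the model's input frame and predict one mask per box. Note that the predictor needs the image embedding first, so `set_image` has to be called before `predict_torch`.

    # Compute the image embedding once; predict_torch raises an error without it.
    predictor.set_image(image)

    # Map the boxes from original image coordinates to the resized input frame.
    transformed_boxes = predictor.transform.apply_boxes_torch(input_boxes, image.shape[:2])
    masks, _, _ = predictor.predict_torch(
        point_coords=None,
        point_labels=None,
        boxes=transformed_boxes,
        multimask_output=False,
    )

    # Overlay every mask and its box on the image.
    plt.figure(figsize=(10, 10))
    plt.imshow(image)
    for mask in masks:
        show_mask(mask.cpu().numpy(), plt.gca(), random_color=True)
    for box in input_boxes:
        show_box(box.cpu().numpy(), plt.gca())
    plt.axis('off')
    plt.show()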
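For intuition about the `apply_boxes_torch` call: SAM resizes each image so that its longer side matches the encoder's input size, and box prompts have to be rescaled into that resized frame. The pure-Python sketch below mirrors that scaling as I understand it from the `segment_anything` source. The function name `rescale_box` is hypothetical and the default of 1024 pixels is an assumption based on the released checkpoints; real code should keep calling `predictor.transform.apply_boxes_torch`.

```python
def rescale_box(box, original_size, target_length=1024):
    """Scale an [x0, y0, x1, y1] pixel box into the resized image frame.

    original_size is (height, width) of the source image. target_length is
    the assumed length of the longer side after resizing.
    """
    old_h, old_w = original_size
    scale = target_length / max(old_h, old_w)
    # The resized height and width are rounded to the nearest integer.
    new_h = int(old_h * scale + 0.5)
    new_w = int(old_w * scale + 0.5)
    sx, sy = new_w / old_w, new_h / old_h
    x0, y0, x1, y1 = box
    return [x0 * sx, y0 * sy, x1 * sx, y1 * sy]
```

For a 256 x 512 image, for example, both axes are scaled by 2, so a box keeps its proportions while its coordinates double.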

This is the result with the person mask left out; with it included, everything was tinted green.
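One way to leave out a mask such as the person's and store the remaining masks as a training label is sketched below. It is only a sketch under assumptions: the helper name `masks_to_label_map` and its `skip` parameter are hypothetical, and the masks are assumed to be a boolean NumPy array of shape (N, H, W), which could be obtained from the tensor above with `masks[:, 0].cpu().numpy()`.

```python
import numpy as np


def masks_to_label_map(masks, skip=()):
    """Merge boolean masks of shape (N, H, W) into one (H, W) instance-ID map.

    Pixel value 0 is background and mask i is written as ID i + 1.
    Indices listed in `skip` are left out. Larger masks are painted first,
    so smaller objects lying on top of them are not overwritten.
    """
    masks = np.asarray(masks, dtype=bool)
    label_map = np.zeros(masks.shape[1:], dtype=np.uint16)
    areas = masks.sum(axis=(1, 2))
    for i in np.argsort(-areas):  # largest area first
        i = int(i)
        if i in skip:
            continue
        label_map[masks[i]] = i + 1
    return label_map
```

In the notebook this could be called as `masks_to_label_map(masks[:, 0].cpu().numpy(), skip={3})`, where index 3 is the largest box in `bounding_boxes` and is only assumed here to be the person. Painting the largest masks first is a design choice that keeps small objects visible when masks overlap.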