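Introduction to Large Models (4): Fine-tuning LLaMA with peft

Original link: https://www.cnblogs.com/jiangxinyang/p/17330352.html

The llama-7b model is about 27 GB in float32. This article fine-tunes llama-7b on one or two 16 GB V100 GPUs using Hugging Face's peft library.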

1. Model and data preparation

Base model: https://huggingface.co/decapoda-research/llama-7b-hf, which is already stored in float16.

Fine-tuning dataset: https://github.com/LC1332/Chinese-alpaca-lora/blob/main/data/trans_chinese_alpaca_data.json

The fine-tuning code is on GitHub: https://github.com/jiangxinyang227/LLM-tuning/tree/master/llama_tuning

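2. Fine-tuning techniques

1) LoRA fine-tuning. The float16 model only just fits on a 16 GB GPU, which leaves little memory for gradients and optimizer states, so LoRA is used to fine-tune only a small subset of parameters.

2) Mixed-precision training. llama-7b takes about 27 GB in float32, so loading it on a single V100 requires converting it to float16, while the LoRA parameters are kept in float32. Training therefore has to use mixed precision, which also gives some speedup.

3) Gradient accumulation. Once the model parameters, LoRA parameters, gradients, and optimizer states are stored, a single GPU has very little memory left for inputs, outputs, and other intermediate tensors. In testing, the limit on a single V100 was roughly batch size = 1 with sequence length = 200, so gradient accumulation is the only way to train with mini-batches.

4) Multiple GPUs. With more than one card you can fine-tune with data parallelism, model parallelism, and similar methods. Data parallelism simply replicates the model on every GPU, so the per-GPU batch size is still limited to 1. Model parallelism splits the model evenly across the GPUs, which leaves room for a larger per-GPU batch size. Two setups were tested on 2 V100s: DDP (data parallelism) and ZeRO-3 + CPU offload (data parallelism with the model states partitioned across GPUs, plus CPU offload).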
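As a rough sanity check on the memory figures above, the sketch below estimates how much memory the weights need at each precision and how small a LoRA adapter is by comparison. The nominal parameter count of 7e9, the LoRA rank of 8, and the choice of adapting only `q_proj` and `v_proj` in every layer are assumptions made for illustration, not values taken from the author's code. The hidden size of 4096 and the 32 layers are the published llama-7b dimensions.

```python
GIB = 1024 ** 3


def weight_memory_gib(num_params, bytes_per_param):
    """Memory needed just to hold the weights, in GiB."""
    return num_params * bytes_per_param / GIB


def lora_param_count(hidden_size, rank, num_layers, modules_per_layer):
    """Each adapted square weight gets two low-rank factors:
    one of shape (hidden_size x rank) and one of shape (rank x hidden_size)."""
    per_module = 2 * hidden_size * rank
    return per_module * modules_per_layer * num_layers


NUM_PARAMS = 7e9  # assumption: nominal size of llama-7b

fp32_gib = weight_memory_gib(NUM_PARAMS, 4)
fp16_gib = weight_memory_gib(NUM_PARAMS, 2)

# Assumptions: rank 8, q_proj and v_proj adapted in each of the 32 layers.
lora_params = lora_param_count(hidden_size=4096, rank=8,
                               num_layers=32, modules_per_layer=2)
lora_fp32_mib = lora_params * 4 / 1024 ** 2

print(f"fp32 weights: {fp32_gib:.1f} GiB")
print(f"fp16 weights: {fp16_gib:.1f} GiB")
print(f"LoRA params:  {lora_params:,} ({lora_fp32_mib:.0f} MiB in fp32)")
```

Under these assumptions the fp16 weights alone take about 13 GiB of a 16 GB card, while the adapter is only a few million parameters. That gap is why frozen fp16 weights plus a float32 LoRA adapter fit where full fine-tuning does not.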

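3. Code worth a closer look

3.1 data_helper.py

In data_helper.py, pay attention to the tokenize() function. First, padding is applied on the left, unlike the more usual right padding. Second, padded positions in labels are set to -100, because PyTorch excludes positions whose label is -100 from the loss.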

    def tokenize(self, prompt, add_eos_token=True):
        # there's probably a way to do this with the tokenizer settings
        # but again, gotta move fast
        result = self.tokenizer(
            prompt,
            truncation=True,
            max_length=self.sequence_len,
            padding=False,
            return_tensors=None
        )
        input_ids, attention_mask, labels = [], [], []
        # append EOS only if it is missing and there is still room for it
        if (
            result["input_ids"][-1] != self.eos_token_id
            and len(result["input_ids"]) < self.sequence_len
            and add_eos_token
        ):
            result["input_ids"].append(self.eos_token_id)
            result["attention_mask"].append(1)

        pad_len = self.sequence_len - len(result["input_ids"])
        if pad_len <= 0:
            # already at full length: no padding needed
            input_ids = result["input_ids"][:self.sequence_len]
            attention_mask = result["attention_mask"][:self.sequence_len]
            labels = input_ids.copy()
        else:
            # pad on the left; padded label positions use label_pad_token_id (-100)
            input_ids = [self.pad_token_id] * pad_len + result["input_ids"]
            attention_mask = [0] * pad_len + result["attention_mask"]
            labels = [self.label_pad_token_id] * pad_len + result["input_ids"]

        return input_ids, attention_mask, labels
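To make the two points about tokenize() concrete, here is a small pure-Python sketch of left padding and of a loss that skips positions labeled -100. The helper names `left_pad` and `mean_nll`, the pad token id of 0, and the probabilities are hypothetical. The real training code relies on PyTorch's loss, whose `ignore_index` defaults to -100.

```python
import math

LABEL_PAD_ID = -100  # PyTorch's CrossEntropyLoss ignores this label value by default


def left_pad(token_ids, sequence_len, pad_token_id=0):
    """Left-pad token ids to sequence_len (assumes len(token_ids) <= sequence_len)."""
    pad_len = sequence_len - len(token_ids)
    input_ids = [pad_token_id] * pad_len + token_ids
    attention_mask = [0] * pad_len + [1] * len(token_ids)
    labels = [LABEL_PAD_ID] * pad_len + token_ids
    return input_ids, attention_mask, labels


def mean_nll(token_probs, labels, ignore_index=LABEL_PAD_ID):
    """Average negative log-likelihood over positions whose label is not ignore_index."""
    kept = [-math.log(p) for p, y in zip(token_probs, labels) if y != ignore_index]
    return sum(kept) / len(kept)


input_ids, attention_mask, labels = left_pad([11, 12, 13], sequence_len=5)
print(input_ids)       # [0, 0, 11, 12, 13]
print(attention_mask)  # [0, 0, 1, 1, 1]
print(labels)          # [-100, -100, 11, 12, 13]

# Hypothetical probabilities assigned to the correct token at each position.
probs = [0.01, 0.01, 0.5, 0.5, 0.5]
loss = mean_nll(probs, labels)
print(loss)  # only the three real tokens contribute
```

The very low probabilities at the two padded positions have no effect on the result, which is the behavior the -100 labels are meant to produce. Left padding is also the usual choice for decoder-only models at generation time, since the last position of every sequence is then a real token.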

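3.2 metric.py

Only accuracy is implemented as a metric. Note that in a generation task the first n-1 tokens are used to predict the n-th token, so predictions and labels must be shifted in opposite directions before they are compared: drop the last prediction and the first label, i.e.

    pred_y = pred_y[:-1]
    true_y = true_y[1:]

As long as this alignment is respected, you can compute whatever other metrics you need.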

    def accuracy(pred_ys, true_ys, masks):
        total = 0
        corr = 0
        for pred_y, true_y, mask in zip(pred_ys, true_ys, masks):
            # shift so that each prediction lines up with its target,
            # i.e. the first n-1 tokens predict the n-th token
            pred_y = pred_y[:-1]
            true_y = true_y[1:]
            mask = mask[:-1]

            for p, t, m in zip(pred_y, true_y, mask):
                # only count positions that are not padding
                if m == 1:
                    total += 1
                    if p == t:
                        corr += 1

        return corr / total if total > 0 else 0
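A toy run helps show what the shift does. The sketch below re-implements the same logic in compact form under the hypothetical name `shifted_accuracy` and feeds it one made-up, left-padded sequence. The token ids are arbitrary.

```python
def shifted_accuracy(pred_ys, true_ys, masks):
    """Next-token accuracy: the prediction at position i is compared with the label at i+1."""
    total = correct = 0
    for pred_y, true_y, mask in zip(pred_ys, true_ys, masks):
        for p, t, m in zip(pred_y[:-1], true_y[1:], mask[:-1]):
            if m == 1:
                total += 1
                correct += int(p == t)
    return correct / total if total > 0 else 0


# One left-padded sequence: two pad positions, then tokens 3, 4, 5.
true_ys = [[0, 0, 3, 4, 5]]
masks = [[0, 0, 1, 1, 1]]
# Made-up model outputs: the prediction at each position is the guess for the NEXT token.
pred_ys = [[9, 3, 4, 9, 9]]

acc = shifted_accuracy(pred_ys, true_ys, masks)
print(acc)  # 0.5
```

After the shift, two unmasked positions remain: the output at token 3 (which guesses 4, correct) and the output at token 4 (which guesses 9 instead of 5, wrong). The output at the last position has no target and is dropped.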

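4. Training setups

4.1 Single-GPU training

Single-GPU training is straightforward. The only part that needs attention is the snippet below, which combines mixed-precision training with gradient accumulation.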

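    # imports this excerpt relies on (assumed, not shown in the original excerpt)
    from torch.cuda.amp import autocast
    from torch.nn.utils import clip_grad_norm_

    with autocast():
        loss, predictions = self.model(input_ids, attention_mask, labels)

    # gradient accumulation: scale the loss so accumulated gradients average out
    loss /= self.accu_steps
    # loss.backward()

    # scale the loss up, then compute gradients
    scaled_loss = self.scaler.scale(loss)
    scaled_loss.backward()

    if current_step % self.accu_steps == 0:
        # unscale the gradients first, then apply gradient clipping
        self.scaler.unscale_(self.optimizer)
        clip_grad_norm_(self.model.parameters(), 1.0)
        self.scaler.step(self.optimizer)
        self.scheduler.step()
        self.scaler.update()
        self.optimizer.zero_grad()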
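The division by accu_steps is what makes accumulated micro-batches behave like one larger batch. The sketch below checks this on a toy one-parameter model with hand-written gradients. It is not the author's trainer, the data and the function name `grad_mse` are made up, and it leaves out autocast and the gradient scaler.

```python
def grad_mse(w, xs, ys):
    """Gradient of the mean squared error of y ~ w * x with respect to w."""
    n = len(xs)
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n


# Made-up data and starting weight.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.1, 5.9, 8.2]
w = 0.5
accu_steps = 4

# Gradient computed on the full batch of 4 samples at once.
full_grad = grad_mse(w, xs, ys)

# Gradient accumulated over 4 micro-batches of size 1,
# each contribution divided by accu_steps (like `loss /= self.accu_steps`).
accumulated = 0.0
for x, y in zip(xs, ys):
    accumulated += grad_mse(w, [x], [y]) / accu_steps

print(full_grad, accumulated)
```

The two values agree up to floating-point rounding. Without the division, the accumulated gradient would be accu_steps times larger, which in effect multiplies the learning rate.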

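4.2 Multi-GPU + DDP training

DDP is the most commonly used approach and the mainstream choice when the model is not too large, so it is not covered in detail here. One point is worth noting: each GPU processes only a share of the data, so if you want validation results over the full validation set, you need dist.all_gather or dist.gather to collect the results from all GPUs. Full code: https://github.com/jiangxinyang227/LLM-tuning/blob/master/llama_tuning/lora_ddp/trainer.py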

    def eval(self):
        self.model.eval()
        with torch.no_grad():
            eval_losses = []
            eval_word_preds = []
            eval_word_labels = []
            eval_masks = []
            for batch_data in self.valid_data_loader:
                input_ids = batch_data[0].cuda()
                attention_mask = batch_data[1].cuda()
                labels = batch_data[2].cuda()

                with autocast():
                    loss, predictions = self.model(input_ids, attention_mask, labels)

                # collect the outputs from all GPUs
                avg_loss_multi_gpu = reduce_value(loss, average=True)
                gather_preds = [torch.zeros_like(predictions, dtype=predictions.dtype) for _ in range(Config.world_size)]
                gather_labels = [torch.zeros_like(labels, dtype=labels.dtype) for _ in range(Config.world_size)]
                gather_masks = [torch.zeros_like(attention_mask, dtype=attention_mask.dtype) for _ in range(Config.world_size)]
                gather_value(predictions, gather_preds)
                gather_value(labels, gather_labels)
                gather_value(attention_mask, gather_masks)

                eval_losses.append(float(avg_loss_multi_gpu))
                for pred, label, mask in zip(gather_preds, gather_labels, gather_masks):
                    eval_word_preds.extend(pred.tolist())
                    eval_word_labels.extend(label.tolist())
                    eval_masks.extend(mask.tolist())

            # only the main process computes and logs the metric
            if is_main_process():
                acc = accuracy(pred_ys=eval_word_preds, true_ys=eval_word_labels, masks=eval_masks)
                logger.info("\n")
                logger.info("eval: num: {}, loss: {}, acc: {}".format(
                    len(eval_word_preds), mean(eval_losses), acc))
                logger.info("\n")
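To see why the gather step matters, the following pure-Python simulation mimics two ranks that each evaluate only their own shard. It does not use torch.distributed. `shard` and `simulated_all_gather` are hypothetical stand-ins for the sampler and for dist.all_gather, and the per-sample correctness flags are made up.

```python
def shard(items, rank, world_size):
    """Give each rank every world_size-th item (a stand-in for a distributed sampler)."""
    return items[rank::world_size]


def simulated_all_gather(per_rank_values):
    """After an all-gather, every rank holds the values from all ranks (simulation only)."""
    return [list(per_rank_values) for _ in per_rank_values]


# Made-up per-sample results: 1 = prediction correct, 0 = wrong.
correct_flags = [1, 1, 1, 0, 1, 0, 0, 1]
world_size = 2

per_rank = [shard(correct_flags, rank, world_size) for rank in range(world_size)]
local_acc = [sum(flags) / len(flags) for flags in per_rank]

# What rank 0 sees after gathering: one list of results per rank.
gathered_on_rank0 = simulated_all_gather(per_rank)[0]
merged = [flag for rank_flags in gathered_on_rank0 for flag in rank_flags]
global_acc = sum(merged) / len(merged)

print(local_acc)   # [0.75, 0.5]
print(global_acc)  # 0.625
```

Each rank's local accuracy differs from the accuracy over the whole validation set, so logging from the main process without gathering would report a number for only one shard.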

4.3 deepspeed的zero-3 + cpu offload

在这里使用的是hugging face的accelerate库中的deepspeed方法,zero-3会将模型、梯度、优化器参数都分割到不同的GPU,并且使用cpu offload将一些中间变量放到cpu上,经实测使用两张GPU时,每张GPU的使用大概5个G多一点,单张卡的batch size可以设置到8,但是在实际训练过程中速度比DDP还要慢一点,这里的原因还是因为模型并行、CPU offload等带来了大量的通信工作,所以单张gpu能存放一整个模型时还是首推DDP。

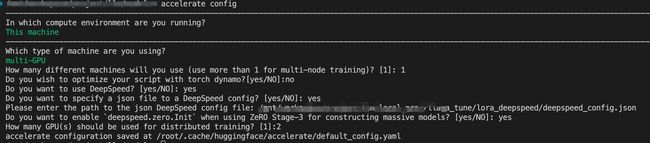

使用accelerate中的deepspeed时,首先要通过accelerate config这个命令互动式配置训练参数,以下是我在配置时选择的参数

在使用deepspeed时可以通过json文件去配置其他参数,accelerate config只配置一些通用参数。zero-3 + cpu offload的json文件如下,配置的时候有几个参数(如allgather_bucket_size 和 reduce_bucket_size)要设小一点,不然显存会爆掉,默认的值会比较大,主要是V100太小了。

    {
        "fp16": {
            "enabled": true,
            "loss_scale": 0,
            "loss_scale_window": 1000,
            "initial_scale_power": 16,
            "hysteresis": 2,
            "min_loss_scale": 1
        },
        "optimizer": {
            "type": "AdamW",
            "params": {
                "lr": 3e-4,
                "weight_decay": 0.0
            }
        },
        "scheduler": {
            "type": "WarmupDecayLR",
            "params": {
                "warmup_min_lr": "auto",
                "warmup_max_lr": "auto",
                "warmup_num_steps": "auto",
                "total_num_steps": "auto"
            }
        },
        "zero_optimization": {
            "stage": 3,
            "offload_optimizer": {
                "device": "cpu",
                "pin_memory": true
            },
            "offload_param": {
                "device": "cpu",
                "pin_memory": true
            },
            "overlap_comm": true,
            "contiguous_gradients": true,
            "allgather_bucket_size": 1e6,
            "reduce_bucket_size": 1e6,
            "stage3_prefetch_bucket_size": 1e6,
            "stage3_param_persistence_threshold": 1e6,
            "sub_group_size": 1e9,
            "stage3_max_live_parameters": 1e9,
            "stage3_max_reuse_distance": 1e9,
            "stage3_gather_16bit_weights_on_model_save": true
        },
        "gradient_accumulation_steps": 1,
        "gradient_clipping": 1.0,
        "steps_per_print": 2000,
        "train_batch_size": "auto",
        "train_micro_batch_size_per_gpu": "auto",
        "wall_clock_breakdown": false
    }

Note: the four parameters set to 1e6 (allgather_bucket_size, reduce_bucket_size, stage3_prefetch_bucket_size, and stage3_param_persistence_threshold) need to be small, otherwise memory is easily exhausted.
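One practical detail when maintaining a file like this: Python's standard json module rejects `#` comments, and whether a given loader tolerates them depends on the parser it uses. A cautious option is to keep the notes in Python and generate the JSON. The sketch below does this for a subset of the ZeRO section only. The constant name `SMALL_BUCKET` is hypothetical.

```python
import json

SMALL_BUCKET = int(1e6)  # kept small for 16 GB V100s, as discussed in the text

# Subset of the ZeRO-3 section; the notes live here as Python comments.
zero_optimization = {
    "stage": 3,
    "offload_optimizer": {"device": "cpu", "pin_memory": True},
    "offload_param": {"device": "cpu", "pin_memory": True},
    "allgather_bucket_size": SMALL_BUCKET,               # small to limit memory use
    "reduce_bucket_size": SMALL_BUCKET,                  # small to limit memory use
    "stage3_prefetch_bucket_size": SMALL_BUCKET,         # small to limit memory use
    "stage3_param_persistence_threshold": SMALL_BUCKET,  # small to limit memory use
}

config_text = json.dumps({"zero_optimization": zero_optimization}, indent=4)

# Round-trip check: the generated text is valid JSON.
reloaded = json.loads(config_text)

# A '#' comment inside JSON text makes the standard parser fail.
commented = '{"reduce_bucket_size": 1e6  # keep small\n}'
try:
    json.loads(commented)
    comment_accepted = True
except json.JSONDecodeError:
    comment_accepted = False

print(config_text)
print("comment accepted by json.loads:", comment_accepted)
```

Writing `config_text` to a file gives a config that any strict JSON parser can read, while the reasons for the small values stay next to the numbers in the Python source.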

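One problem remains unsolved: after the model is saved, GPU 1 drops offline. As a workaround, the model is saved only once, after the whole training run has finished. I have not found a real fix yet, so if you know how to solve this, pointers are welcome.

If you hit the following error at runtime: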

    Traceback (most recent call last):
      File "/mnt/workspace/project/llm/local_proj/chatglm_tune/lora_deepspeed/trainer.py", line 271, in <module>
        main()
      File "/mnt/workspace/project/llm/local_proj/chatglm_tune/lora_deepspeed/trainer.py", line 265, in main
        trainer = Trainer()
      File "/mnt/workspace/project/llm/local_proj/chatglm_tune/lora_deepspeed/trainer.py", line 93, in __init__
        self.model, self.optimizer, self.train_data_loader, self.valid_data_loader, self.scheduler = self.accelerator.prepare(
      File "/home/pai/lib/python3.9/site-packages/accelerate/accelerator.py", line 1118, in prepare
        result = self._prepare_deepspeed(*args)
      File "/home/pai/lib/python3.9/site-packages/accelerate/accelerator.py", line 1415, in _prepare_deepspeed
        engine, optimizer, _, lr_scheduler = deepspeed.initialize(**kwargs)
      File "/home/pai/lib/python3.9/site-packages/deepspeed/__init__.py", line 165, in initialize
        engine = DeepSpeedEngine(args=args,
      File "/home/pai/lib/python3.9/site-packages/deepspeed/runtime/engine.py", line 308, in __init__
        self._configure_optimizer(optimizer, model_parameters)
      File "/home/pai/lib/python3.9/site-packages/deepspeed/runtime/engine.py", line 1173, in _configure_optimizer
        self.optimizer = self._configure_zero_optimizer(basic_optimizer)
      File "/home/pai/lib/python3.9/site-packages/deepspeed/runtime/engine.py", line 1463, in _configure_zero_optimizer
        optimizer = DeepSpeedZeroOptimizer_Stage3(
      File "/home/pai/lib/python3.9/site-packages/deepspeed/runtime/zero/stage3.py", line 298, in __init__
        largest_partitioned_param_numel = max([
      File "/home/pai/lib/python3.9/site-packages/deepspeed/runtime/zero/stage3.py", line 299, in <listcomp>
        max([max(tensor.numel(), tensor.ds_numel) for tensor in fp16_partitioned_group])
    ValueError: max() arg is an empty sequence

I do not know the root cause. As a workaround, open /home/pai/lib/python3.9/site-packages/deepspeed/runtime/zero/stage3.py (check your own error log for the actual path) and change

    largest_partitioned_param_numel = max([
        max([max(tensor.numel(), tensor.ds_numel) for tensor in fp16_partitioned_group])
        for fp16_partitioned_group in self.fp16_partitioned_groups
    ])

to

    largest_partitioned_param_numel = max([
        max([max(tensor.numel(), tensor.ds_numel) for tensor in fp16_partitioned_group])
        for fp16_partitioned_group in self.fp16_partitioned_groups if len(fp16_partitioned_group) > 0
    ])

so that empty parameter groups are skipped. After this change the code runs.
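The failure and the workaround can be reproduced without DeepSpeed. In the sketch below, `partitioned_groups` is a hypothetical stand-in for self.fp16_partitioned_groups, holding plain integers instead of tensors, with one group deliberately left empty.

```python
# Hypothetical stand-in for self.fp16_partitioned_groups: element counts per group.
partitioned_groups = [[4096, 11008], [], [32000]]

# Unpatched form: the inner max() is called on the empty group and raises.
unpatched_failed = False
try:
    max([max(sizes) for sizes in partitioned_groups])
except ValueError as err:
    unpatched_failed = True
    print("unpatched:", err)

# Patched form: empty groups are filtered out before the inner max() runs.
largest = max([max(sizes) for sizes in partitioned_groups if len(sizes) > 0])
print("patched:", largest)  # 32000
```

The filter only covers the case where some groups are empty. If every group were empty, the outer max() would still receive an empty list and raise the same error.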