Android Audio/Video Encoding (2)

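Android ships its own audio/video codec tools, so in many cases third-party libraries such as FFmpeg or OpenCV are unnecessary. Bringing third-party libraries into an Android project is fairly involved (the earlier article, Android Audio/Video Encoding, showed how to integrate the third-party library libpng for image processing), and such libraries also make the program structure more complex.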

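The encoding and decoding described in this article rely on Android's built-in MediaCodec. Once the video has been encoded, you can process it however you like, for example by inserting ads or clipping it.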

MediaCodec

Official documentation: MediaCodec

MediaCodec class can be used to access low-level media codecs, i.e. encoder/decoder components. It is part of the Android low-level multimedia support infrastructure (normally used together with MediaExtractor, MediaSync, MediaMuxer, MediaCrypto, MediaDrm, Image, Surface, and AudioTrack.)

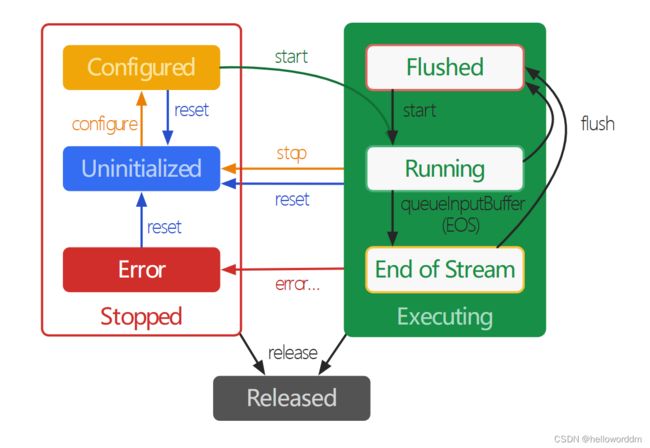

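During its life, a codec conceptually exists in one of three states: Stopped, Executing, or Released. The Stopped state is actually a collection of three states: Uninitialized, Configured, and Error. The Executing state conceptually progresses through three sub-states: Flushed, Running, and End-of-Stream.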
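To make these transitions concrete, here is a small plain-Java model of the states just described. It is a hypothetical sketch for illustration only: `CodecState`, `CodecLifecycle` and the event strings are made-up names, not Android APIs, and the transitions follow a simplified reading of the MediaCodec documentation (`start()` enters Flushed, the first dequeued input buffer moves the codec to Running, queueing an end-of-stream buffer moves it to End-of-Stream, `flush()` returns to Flushed, `stop()` and `reset()` return to Uninitialized, and `release()` is final).

```java
// Illustrative model of the MediaCodec lifecycle; NOT part of the Android API.
enum CodecState {
    UNINITIALIZED, CONFIGURED, ERROR,   // sub-states of Stopped
    FLUSHED, RUNNING, END_OF_STREAM,    // sub-states of Executing
    RELEASED;

    boolean isStopped() {
        return this == UNINITIALIZED || this == CONFIGURED || this == ERROR;
    }

    boolean isExecuting() {
        return this == FLUSHED || this == RUNNING || this == END_OF_STREAM;
    }
}

final class CodecLifecycle {
    private CodecLifecycle() {}

    /** Returns the state after a call or event, or throws if the call is illegal in state s. */
    static CodecState next(CodecState s, String event) {
        if (s == CodecState.RELEASED) {
            throw new IllegalStateException("codec already released");
        }
        switch (event) {
            case "release": return CodecState.RELEASED;       // allowed from any live state
            case "reset":   return CodecState.UNINITIALIZED;  // allowed from any live state
            case "error":   return CodecState.ERROR;
            case "configure":
                if (s == CodecState.UNINITIALIZED) return CodecState.CONFIGURED;
                break;
            case "start":
                if (s == CodecState.CONFIGURED) return CodecState.FLUSHED;
                break;
            case "dequeueInputBuffer":
                if (s == CodecState.FLUSHED || s == CodecState.RUNNING) return CodecState.RUNNING;
                break;
            case "queueEndOfStream":
                if (s == CodecState.RUNNING) return CodecState.END_OF_STREAM;
                break;
            case "flush":
                if (s.isExecuting()) return CodecState.FLUSHED;
                break;
            case "stop":
                if (s.isExecuting()) return CodecState.UNINITIALIZED;
                break;
            default:
                throw new IllegalArgumentException("unknown event: " + event);
        }
        throw new IllegalStateException(event + " is not allowed in state " + s);
    }
}
```

Rejecting `start()` on an Uninitialized codec mirrors the real `MediaCodec.start()`, which throws `IllegalStateException` when the codec is not in the Configured state.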

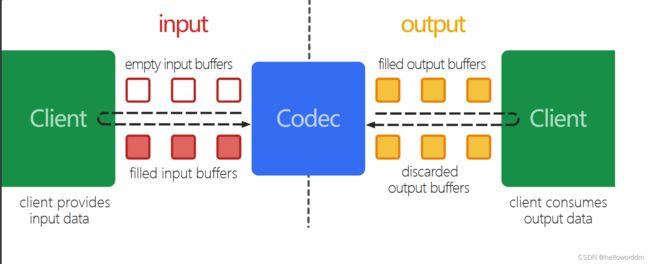

Asynchronous Processing using Buffers

Since Build.VERSION_CODES.LOLLIPOP, the preferred method is to process data asynchronously by setting a callback before calling configure.

```java
MediaCodec codec = MediaCodec.createByCodecName(name);
MediaFormat mOutputFormat; // member variable
codec.setCallback(new MediaCodec.Callback() {
    @Override
    public void onInputBufferAvailable(MediaCodec mc, int inputBufferId) {
        ByteBuffer inputBuffer = codec.getInputBuffer(inputBufferId);
        // fill inputBuffer with valid data
        …
        codec.queueInputBuffer(inputBufferId, …);
    }

    @Override
    public void onOutputBufferAvailable(MediaCodec mc, int outputBufferId, …) {
        ByteBuffer outputBuffer = codec.getOutputBuffer(outputBufferId);
        MediaFormat bufferFormat = codec.getOutputFormat(outputBufferId); // option A
        // bufferFormat is equivalent to mOutputFormat
        // outputBuffer is ready to be processed or rendered.
        …
        codec.releaseOutputBuffer(outputBufferId, …);
    }

    @Override
    public void onOutputFormatChanged(MediaCodec mc, MediaFormat format) {
        // Subsequent data will conform to new format.
        // Can ignore if using getOutputFormat(outputBufferId)
        mOutputFormat = format; // option B
    }

    @Override
    public void onError(…) {
        …
    }

    @Override
    public void onCryptoError(…) {
        …
    }
});
codec.configure(format, …);
mOutputFormat = codec.getOutputFormat(); // option B
codec.start();
// wait for processing to complete
codec.stop();
codec.release();
```

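Since Build.VERSION_CODES.LOLLIPOP, you should retrieve input and output buffers using getInput/OutputBuffer(int) and/or getInput/OutputImage(int) even when using the codec in synchronous mode.

Synchronous mode is still available. Before Android 5.0 (API 21) it was the only mode, and buffers were taken from the arrays returned by getInputBuffers()/getOutputBuffers(); from 5.0 on, even synchronous code should obtain input and output buffers through getInput/OutputBuffer(int) or getInput/OutputImage(int).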

```java
MediaCodec codec = MediaCodec.createByCodecName(name);
codec.configure(format, …);
MediaFormat outputFormat = codec.getOutputFormat(); // option B
codec.start();
for (;;) {
    int inputBufferId = codec.dequeueInputBuffer(timeoutUs);
    if (inputBufferId >= 0) {
        ByteBuffer inputBuffer = codec.getInputBuffer(…);
        // fill inputBuffer with valid data
        …
        codec.queueInputBuffer(inputBufferId, …);
    }
    int outputBufferId = codec.dequeueOutputBuffer(…);
    if (outputBufferId >= 0) {
        ByteBuffer outputBuffer = codec.getOutputBuffer(outputBufferId);
        MediaFormat bufferFormat = codec.getOutputFormat(outputBufferId); // option A
        // bufferFormat is identical to outputFormat
        // outputBuffer is ready to be processed or rendered.
        …
        codec.releaseOutputBuffer(outputBufferId, …);
    } else if (outputBufferId == MediaCodec.INFO_OUTPUT_FORMAT_CHANGED) {
        // Subsequent data will conform to new format.
        // Can ignore if using getOutputFormat(outputBufferId)
        outputFormat = codec.getOutputFormat(); // option B
    }
}
codec.stop();
codec.release();
```
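In the synchronous loop, a negative value returned by `dequeueOutputBuffer()` is a status code rather than an error, so each code needs its own branch. The sketch below mirrors the documented constant values (`INFO_TRY_AGAIN_LATER = -1`, `INFO_OUTPUT_FORMAT_CHANGED = -2`, and the deprecated `INFO_OUTPUT_BUFFERS_CHANGED = -3`) in plain Java so the branching can be tested off-device; `DequeueResult` and `OutputEvent` are hypothetical names, and real code should use the `MediaCodec.INFO_*` constants directly.

```java
// Plain-Java mirror of the MediaCodec.INFO_* return codes of dequeueOutputBuffer().
// The numeric values copy the Android SDK; the class and enum names are hypothetical.
final class DequeueResult {
    static final int INFO_TRY_AGAIN_LATER = -1;
    static final int INFO_OUTPUT_FORMAT_CHANGED = -2;
    static final int INFO_OUTPUT_BUFFERS_CHANGED = -3; // deprecated since API 21

    enum OutputEvent { BUFFER_READY, TRY_AGAIN_LATER, FORMAT_CHANGED, BUFFERS_CHANGED, UNKNOWN }

    private DequeueResult() {}

    /** Maps a dequeueOutputBuffer() return value to the branch the loop should take. */
    static OutputEvent classify(int index) {
        if (index >= 0) {
            return OutputEvent.BUFFER_READY; // a valid output buffer index
        }
        switch (index) {
            case INFO_TRY_AGAIN_LATER:        return OutputEvent.TRY_AGAIN_LATER; // timed out, no output yet
            case INFO_OUTPUT_FORMAT_CHANGED:  return OutputEvent.FORMAT_CHANGED;  // call getOutputFormat()
            case INFO_OUTPUT_BUFFERS_CHANGED: return OutputEvent.BUFFERS_CHANGED; // ignorable on API 21+
            default:                          return OutputEvent.UNKNOWN;
        }
    }
}
```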

Audio/Video Encoding and Decoding

Here, encoding is synchronous and decoding is asynchronous.

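The use case is cutting out the part of a video that lies within a given time range. The source video is first decoded to locate the start frame and the end frame of that range, and the frames from the start frame through the end frame are then re-encoded.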
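Because P- and B-frames depend on other frames, decoding for a cut cannot begin at an arbitrary frame: it has to begin at the last keyframe at or before the requested start time, and only the frames whose timestamps fall inside the range are handed to the encoder. A minimal plain-Java sketch of that selection, assuming a hypothetical `Frame` type that records each sample's presentation time in microseconds and whether it is a keyframe (`TrimPlanner` is also a hypothetical name):

```java
import java.util.ArrayList;
import java.util.List;

final class TrimPlanner {
    /** Hypothetical per-sample record: presentation time (µs) and keyframe flag. */
    static final class Frame {
        final long ptsUs;
        final boolean keyFrame;

        Frame(long ptsUs, boolean keyFrame) {
            this.ptsUs = ptsUs;
            this.keyFrame = keyFrame;
        }
    }

    private TrimPlanner() {}

    /** Index of the keyframe with the latest pts <= startUs (decoding starts here), or -1. */
    static int decodeStartIndex(List<Frame> frames, long startUs) {
        int best = -1;
        for (int i = 0; i < frames.size(); i++) {
            Frame f = frames.get(i);
            if (f.keyFrame && f.ptsUs <= startUs
                    && (best == -1 || f.ptsUs > frames.get(best).ptsUs)) {
                best = i;
            }
        }
        return best;
    }

    /** Indices of decoded frames inside [startUs, endUs]; only these are re-encoded. */
    static List<Integer> framesToEncode(List<Frame> frames, long startUs, long endUs) {
        List<Integer> out = new ArrayList<>();
        for (int i = 0; i < frames.size(); i++) {
            long pts = frames.get(i).ptsUs;
            if (pts >= startUs && pts <= endUs) {
                out.add(i);
            }
        }
        return out;
    }
}
```

On a device, `MediaExtractor.seekTo(startUs, MediaExtractor.SEEK_TO_PREVIOUS_SYNC)` performs the keyframe lookup directly; frames decoded before `startUs` are then simply not passed to the encoder.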

About Video Frames

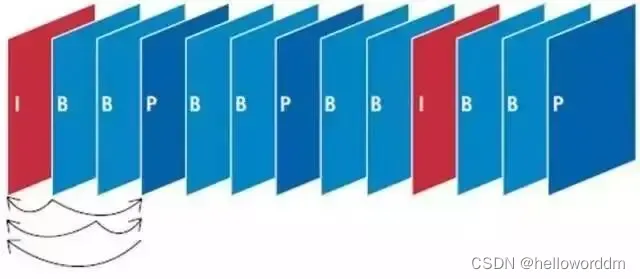

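An I-frame (intra-coded frame) is a self-contained frame that carries all of its own information and can be decoded without referencing any other picture; you can think of it simply as a still image. The first frame of a video sequence is always an I-frame (a keyframe), since decoding has to start from a frame that depends on no other frame.

A P-frame (predictive-coded inter frame) is encoded with reference to an earlier frame: it stores the difference between the current picture and the previous frame, which may be either an I-frame or a P-frame.

A B-frame (bidirectionally predictive-coded frame) records the difference between the current frame and both the preceding and the following frames. To decode a B-frame, the decoder therefore needs not only the previously buffered picture but also the following picture, and the final picture is obtained by combining those two pictures with this frame's data.

Here the decoded frames are used directly, whether they were I-, P- or B-frames; adapt this to your own scenario as needed.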
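If you do need to know which kind of frame a compressed H.264 sample is, the bitstream tells you: the low 5 bits of the NAL header give `nal_unit_type` (5 = IDR slice, 1 = non-IDR slice), and `slice_type` is the second unsigned Exp-Golomb field of the slice header (0/5 = P, 1/6 = B, 2/7 = I, 3/8 = SP, 4/9 = SI). The sketch below assumes one NAL unit passed without its start code and no emulation-prevention (0x03) bytes in the first header bytes; `H264SliceType` is a hypothetical helper, not an Android API.

```java
// Sketch: classify one H.264 slice NAL unit (without start code) as I, P, B, SP or SI.
final class H264SliceType {
    private H264SliceType() {}

    /** Minimal MSB-first bit reader, enough for the leading Exp-Golomb fields. */
    private static final class BitReader {
        private final byte[] data;
        private int bitPos;

        BitReader(byte[] data, int byteOffset) {
            this.data = data;
            this.bitPos = byteOffset * 8;
        }

        int readBit() {
            int bit = (data[bitPos >> 3] >> (7 - (bitPos & 7))) & 1;
            bitPos++;
            return bit;
        }

        /** ue(v): n leading zeros, a 1, then n suffix bits; value = 2^n - 1 + suffix. */
        int readUe() {
            int leadingZeros = 0;
            while (readBit() == 0) {
                leadingZeros++;
            }
            int suffix = 0;
            for (int i = 0; i < leadingZeros; i++) {
                suffix = (suffix << 1) | readBit();
            }
            return (1 << leadingZeros) - 1 + suffix;
        }
    }

    /** Returns "I", "P", "B", "SP" or "SI", or null if the NAL unit is not a coded slice. */
    static String frameType(byte[] nal) {
        int nalUnitType = nal[0] & 0x1F;
        if (nalUnitType != 1 && nalUnitType != 5) {
            return null; // e.g. 7 = SPS, 8 = PPS, 6 = SEI
        }
        BitReader r = new BitReader(nal, 1); // slice header starts after the 1-byte NAL header
        r.readUe();                          // first_mb_in_slice
        int sliceType = r.readUe() % 5;      // 5..9 mean the same types as 0..4
        switch (sliceType) {
            case 0:  return "P";
            case 1:  return "B";
            case 2:  return "I";
            case 3:  return "SP";
            default: return "SI";
        }
    }
}
```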

Synchronous Audio/Video Encoding

```java
import android.media.MediaCodec;
import android.media.MediaCodecInfo;
import android.media.MediaFormat;
import android.media.MediaMuxer;
import android.util.Log;

import java.nio.ByteBuffer;

public class VideoEncoder {
    private static final String TAG = "VideoEncoder";
    private static final String MIME_TYPE = MediaFormat.MIMETYPE_VIDEO_AVC;
    private static final long DEFAULT_TIMEOUT_US = 10000;

    private MediaCodec mEncoder;
    private MediaMuxer mMediaMuxer;
    private int mVideoTrackIndex;
    private volatile boolean mStop = false;
    private AudioEncode mAudioEncode;

    public void init(String outPath, int width, int height) {
        try {
            mStop = false;
            mVideoTrackIndex = -1;
            mMediaMuxer = new MediaMuxer(outPath, MediaMuxer.OutputFormat.MUXER_OUTPUT_MPEG_4);
            mEncoder = MediaCodec.createEncoderByType(MIME_TYPE);
            MediaFormat mediaFormat = MediaFormat.createVideoFormat(MIME_TYPE, width, height);
            // Input frames are NV12 (YUV420SemiPlanar); not every encoder accepts this color format
            mediaFormat.setInteger(MediaFormat.KEY_COLOR_FORMAT,
                    MediaCodecInfo.CodecCapabilities.COLOR_FormatYUV420SemiPlanar);
            mediaFormat.setInteger(MediaFormat.KEY_BIT_RATE, width * height * 6);
            mediaFormat.setInteger(MediaFormat.KEY_FRAME_RATE, 30);
            mediaFormat.setInteger(MediaFormat.KEY_I_FRAME_INTERVAL, 1); // one I-frame per second
            mEncoder.configure(mediaFormat, null, null, MediaCodec.CONFIGURE_FLAG_ENCODE);
            mEncoder.start();
        } catch (Throwable e) {
            e.printStackTrace();
        }
    }

    public void release() {
        mStop = true;
        if (mEncoder != null) {
            mEncoder.stop();
            mEncoder.release();
            mEncoder = null;
        }
        if (mAudioEncode != null) {
            mAudioEncode.release();
            mAudioEncode = null;
        }
        if (mMediaMuxer != null) {
            // MediaMuxer.stop() throws IllegalStateException if the muxer was never started
            if (mVideoTrackIndex != -1) {
                mMediaMuxer.stop();
            }
            mMediaMuxer.release();
            mMediaMuxer = null;
        }
    }

    /** Queues one NV12 frame, then writes whatever encoded output is already available. */
    public void encode(byte[] yuv, long presentationTimeUs) {
        if (mEncoder == null || mMediaMuxer == null) {
            Log.e(TAG, "mEncoder or mMediaMuxer is null");
            return;
        }
        if (yuv == null) {
            Log.e(TAG, "input yuv data is null");
            return;
        }
        int inputBufferIndex = mEncoder.dequeueInputBuffer(DEFAULT_TIMEOUT_US);
        Log.d(TAG, "inputBufferIndex: " + inputBufferIndex);
        if (inputBufferIndex < 0) {
            Log.e(TAG, "no valid buffer available");
            return;
        }
        ByteBuffer inputBuffer = mEncoder.getInputBuffer(inputBufferIndex);
        inputBuffer.put(yuv);
        mEncoder.queueInputBuffer(inputBufferIndex, 0, yuv.length, presentationTimeUs, 0);
        drainEncoder(false);
    }

    /** Called when decoding ends: flush the encoder, mux in the audio, then release everything. */
    public void encodeAudio() {
        signalEndOfStream();
        if (mAudioEncode != null) {
            mAudioEncode.encodeAudio();
        }
        release();
    }

    /** Queues an empty end-of-stream buffer so the encoder emits the frames it still holds. */
    private void signalEndOfStream() {
        if (mEncoder == null) {
            return;
        }
        int inputBufferIndex = mEncoder.dequeueInputBuffer(DEFAULT_TIMEOUT_US);
        if (inputBufferIndex >= 0) {
            mEncoder.queueInputBuffer(inputBufferIndex, 0, 0, 0, MediaCodec.BUFFER_FLAG_END_OF_STREAM);
            drainEncoder(true);
        }
    }

    /** Writes all ready output; with endOfStream, keeps waiting until the EOS buffer arrives. */
    private void drainEncoder(boolean endOfStream) {
        MediaCodec.BufferInfo bufferInfo = new MediaCodec.BufferInfo();
        while (!mStop) {
            int outputBufferIndex = mEncoder.dequeueOutputBuffer(bufferInfo, DEFAULT_TIMEOUT_US);
            if (outputBufferIndex == MediaCodec.INFO_TRY_AGAIN_LATER) {
                if (!endOfStream) {
                    break; // nothing ready yet; the encoder may need more input first
                }
                continue;
            }
            if (outputBufferIndex < 0) {
                continue; // e.g. INFO_OUTPUT_FORMAT_CHANGED; the format is read in writeHeadInfo()
            }
            ByteBuffer outputBuffer = mEncoder.getOutputBuffer(outputBufferIndex);
            if (outputBuffer != null && bufferInfo.size > 0) {
                // write head info: the first output adds the tracks and starts the muxer
                if (mVideoTrackIndex == -1) {
                    mVideoTrackIndex = writeHeadInfo(outputBuffer, bufferInfo);
                }
                // SPS/PPS already went into the track format, so only frame data is written
                if ((bufferInfo.flags & MediaCodec.BUFFER_FLAG_CODEC_CONFIG) == 0) {
                    outputBuffer.limit(bufferInfo.offset + bufferInfo.size);
                    outputBuffer.position(bufferInfo.offset);
                    mMediaMuxer.writeSampleData(mVideoTrackIndex, outputBuffer, bufferInfo);
                }
            }
            mEncoder.releaseOutputBuffer(outputBufferIndex, false);
            if ((bufferInfo.flags & MediaCodec.BUFFER_FLAG_END_OF_STREAM) != 0) {
                break;
            }
        }
    }

    private int writeHeadInfo(ByteBuffer outputBuffer, MediaCodec.BufferInfo bufferInfo) {
        MediaFormat outputFormat = mEncoder.getOutputFormat();
        if ((bufferInfo.flags & MediaCodec.BUFFER_FLAG_CODEC_CONFIG) != 0) {
            // Codec-config buffer layout: 00 00 00 01 SPS 00 00 00 01 PPS
            byte[] csd = new byte[bufferInfo.size];
            outputBuffer.limit(bufferInfo.offset + bufferInfo.size);
            outputBuffer.position(bufferInfo.offset);
            outputBuffer.get(csd);
            // The second start code separates the SPS (csd-0) from the PPS (csd-1)
            for (int i = 4; i + 3 < csd.length; i++) {
                if (csd[i] == 0 && csd[i + 1] == 0 && csd[i + 2] == 0 && csd[i + 3] == 1) {
                    ByteBuffer sps = ByteBuffer.allocate(i);
                    ByteBuffer pps = ByteBuffer.allocate(csd.length - i);
                    sps.put(csd, 0, i).position(0);
                    pps.put(csd, i, csd.length - i).position(0);
                    outputFormat.setByteBuffer("csd-0", sps);
                    outputFormat.setByteBuffer("csd-1", pps);
                    break;
                }
            }
        }
        int videoTrackIndex = mMediaMuxer.addTrack(outputFormat);
        // The audio track must also be added before MediaMuxer.start()
        mAudioEncode = new AudioEncode(mMediaMuxer);
        Log.d(TAG, "videoTrackIndex: " + videoTrackIndex);
        mMediaMuxer.start();
        return videoTrackIndex;
    }
}
```
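`writeHeadInfo()` relies on the encoder's codec-config buffer having the Annex-B shape `00 00 00 01 SPS 00 00 00 01 PPS` and splits it at the second start code into `csd-0` (SPS) and `csd-1` (PPS), both keeping their start codes. Here is the same split on a plain byte array, so it can be checked without a device; `CsdSplitter` is a hypothetical name, and, like the original, only 4-byte start codes are recognized.

```java
import java.util.Arrays;

final class CsdSplitter {
    private CsdSplitter() {}

    /**
     * Splits "00 00 00 01 SPS 00 00 00 01 PPS" into {csd0, csd1}.
     * Returns null if there is no second 4-byte start code.
     */
    static byte[][] split(byte[] csd) {
        // Skip the leading start code (bytes 0..3) and search for the next one
        for (int i = 4; i + 3 < csd.length; i++) {
            if (csd[i] == 0 && csd[i + 1] == 0 && csd[i + 2] == 0 && csd[i + 3] == 1) {
                return new byte[][] {
                    Arrays.copyOfRange(csd, 0, i),          // csd-0: SPS with its start code
                    Arrays.copyOfRange(csd, i, csd.length)  // csd-1: PPS with its start code
                };
            }
        }
        return null;
    }
}
```

Many encoders already place `csd-0` and `csd-1` in the format returned by `getOutputFormat()` once `INFO_OUTPUT_FORMAT_CHANGED` has been reported; in that case, passing that format straight to `MediaMuxer.addTrack()` is the simpler route.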

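Audio encoding (AudioEncode actually copies the source file's compressed audio samples into the muxer as-is, without re-encoding them):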

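```java
import android.annotation.SuppressLint;
import android.media.MediaCodec;
import android.media.MediaFormat;
import android.media.MediaMuxer;

import java.nio.ByteBuffer;

// MyExtractor and Constants.VIDEO_PATH come from the author's project and are not shown here.
public class AudioEncode {
    private final MediaMuxer mediaMuxer;
    private int audioTrack = -1;
    private final MyExtractor audioExtractor;
    private final MediaFormat audioFormat;

    public AudioEncode(MediaMuxer mediaMuxer) {
        this.mediaMuxer = mediaMuxer;
        audioExtractor = new MyExtractor(Constants.VIDEO_PATH);
        audioFormat = audioExtractor.getAudioFormat();
        if (audioFormat != null) {
            // Tracks must be added before MediaMuxer.start()
            audioTrack = mediaMuxer.addTrack(audioFormat);
        }
    }

    // Extractor sample flags are reused as buffer flags (SAMPLE_FLAG_SYNC and BUFFER_FLAG_KEY_FRAME are both 1)
    @SuppressLint("WrongConstant")
    public void encodeAudio() {
        if (audioFormat == null) {
            return; // the source file has no audio track
        }
        MediaCodec.BufferInfo bufferInfo = new MediaCodec.BufferInfo();
        ByteBuffer buffer = ByteBuffer.allocate(1024 * 1024);
        // The video has been written; now mux in the audio, reading audio samples until the end
        int audioSize;
        while ((audioSize = audioExtractor.readBuffer(buffer, false)) > 0) {
            bufferInfo.offset = 0;
            bufferInfo.size = audioSize;
            bufferInfo.presentationTimeUs = audioExtractor.getSampleTime();
            bufferInfo.flags = audioExtractor.getSampleFlags();
            mediaMuxer.writeSampleData(audioTrack, buffer, bufferInfo);
        }
    }

    public void release() {
        audioExtractor.release();
    }
}
```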

Asynchronous Audio/Video Decoding

sleepRender is used to keep frame output in step with the presentation timestamps.
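The rule implemented by `sleepRender()` at the end of the following class is: convert the frame's `presentationTimeUs` to milliseconds, subtract the wall-clock time elapsed since the first frame, and sleep for whatever remains if the frame is early. Here is a plain-Java sketch with the clock passed in as a parameter, so the arithmetic can be checked without sleeping; `FramePacer` is a hypothetical name.

```java
final class FramePacer {
    private FramePacer() {}

    /**
     * Milliseconds to wait before releasing a frame.
     *
     * @param ptsUs   the frame's presentationTimeUs (microseconds, timeline starting at 0)
     * @param startMs clock time (ms) when the first frame was handled
     * @param nowMs   current clock time (ms)
     * @return a positive delay if the frame is early, 0 if it is on time or late
     */
    static long delayMs(long ptsUs, long startMs, long nowMs) {
        long ptsMs = ptsUs / 1000;         // µs -> ms
        long elapsedMs = nowMs - startMs;  // playback time that has actually passed
        return Math.max(0, ptsMs - elapsedMs);
    }
}
```

Feeding it `System.nanoTime() / 1_000_000` instead of `System.currentTimeMillis()` would keep the pacing immune to wall-clock adjustments, since `nanoTime()` is monotonic; that is a suggested variation, not what the original code does.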

```java
import static android.media.MediaCodec.BUFFER_FLAG_END_OF_STREAM;

import android.graphics.Rect;
import android.graphics.SurfaceTexture;
import android.media.Image;
import android.media.MediaCodec;
import android.media.MediaFormat;
import android.os.Bundle;
import android.os.Message;
import android.util.Log;
import android.view.Surface;

import androidx.annotation.NonNull;

import java.nio.ByteBuffer;

/**
 * describe: asynchronous decoding. Each decoded frame is copied out as NV12 and re-encoded by
 * VideoEncoder. BaseAsyncDecode (mediaCodec, mediaFormat, extractor, mHandler, MSG_VIDEO_OUTPUT,
 * VIDEO), Constants and MyExtractor come from the author's project and are not shown here.
 */
public class AsyncVideoDecode extends BaseAsyncDecode {
    private static final String TAG = "AsyncVideoDecode";

    private Surface mSurface;
    private long mTime = -1;
    private int mOutputFormat = Constants.COLOR_FORMAT_NV12;
    private byte[] mYuvBuffer;

    static VideoEncoder mVideoEncoder = null;

    public AsyncVideoDecode(SurfaceTexture surfaceTexture, long progress) {
        super(progress);
        mSurface = new Surface(surfaceTexture);
    }

    @Override
    public void start() {
        super.start();
        // The callback must be set before configure()
        mediaCodec.setCallback(new MediaCodec.Callback() {
            @Override
            public void onInputBufferAvailable(@NonNull MediaCodec codec, int index) {
                ByteBuffer inputBuffer = codec.getInputBuffer(index);
                int size = extractor.readBuffer(inputBuffer, true);
                if (size >= 0) {
                    codec.queueInputBuffer(index, 0, size,
                            extractor.getSampleTime(), extractor.getSampleFlags());
                } else {
                    // No more samples: signal end of stream
                    codec.queueInputBuffer(index, 0, 0, 0, BUFFER_FLAG_END_OF_STREAM);
                }
            }

            @Override
            public void onOutputBufferAvailable(@NonNull MediaCodec codec, int index,
                                                @NonNull MediaCodec.BufferInfo info) {
                if (info.size > 0) {
                    // getOutputImage() returns null when the codec is configured with an output Surface
                    Image image = codec.getOutputImage(index);
                    if (image != null) {
                        Rect rect = image.getCropRect();
                        if (mYuvBuffer == null) {
                            mYuvBuffer = new byte[rect.width() * rect.height() * 3 / 2];
                        }
                        if (mVideoEncoder == null) {
                            mVideoEncoder = new VideoEncoder();
                            mVideoEncoder.init(Constants.OUTPUT_PATH, rect.width(), rect.height());
                        }
                        // Copy the frame into mYuvBuffer in NV12 order, the layout the encoder expects
                        getDataFromImage(image, mOutputFormat, rect.width(), rect.height());
                        mVideoEncoder.encode(mYuvBuffer, info.presentationTimeUs);
                    }
                }
                // flags is a bit mask, so test the EOS bit rather than comparing with ==
                if ((info.flags & BUFFER_FLAG_END_OF_STREAM) != 0 && mVideoEncoder != null) {
                    // Last buffer: flush the encoder, mux the audio and close the file
                    mVideoEncoder.encodeAudio();
                    mVideoEncoder = null;
                }
                // The buffer is released on the handler thread once sleepRender() says it is due
                Message msg = Message.obtain();
                msg.what = MSG_VIDEO_OUTPUT;
                Bundle bundle = new Bundle();
                bundle.putInt("index", index);
                bundle.putLong("time", info.presentationTimeUs);
                msg.setData(bundle);
                mHandler.sendMessage(msg);
            }

            @Override
            public void onError(@NonNull MediaCodec codec, @NonNull MediaCodec.CodecException e) {
                Log.e(TAG, "decoder error", e);
                codec.stop();
            }

            @Override
            public void onOutputFormatChanged(@NonNull MediaCodec codec, @NonNull MediaFormat format) {
                // Not needed here: the crop rectangle is read from each Image
            }
        });
        // Passing mSurface instead of null would render to the screen, but getOutputImage() would then return null
        mediaCodec.configure(mediaFormat, null, null, 0);
        mediaCodec.start();
    }

    /**
     * Copies the Y, U and V planes of a YUV_420_888 Image into mYuvBuffer in the requested
     * layout (I420, NV21 or NV12), honoring each plane's rowStride and pixelStride.
     */
    private void getDataFromImage(Image image, int colorFormat, int width, int height) {
        Rect crop = image.getCropRect();
        Log.d(TAG, "crop width: " + crop.width() + ", height: " + crop.height());
        Image.Plane[] planes = image.getPlanes();
        byte[] rowData = new byte[planes[0].getRowStride()];
        int channelOffset = 0;
        int outputStride = 1;
        for (int i = 0; i < planes.length; i++) {
            switch (i) {
                case 0: // Y
                    channelOffset = 0;
                    outputStride = 1;
                    break;
                case 1: // U (Cb)
                    if (colorFormat == Constants.COLOR_FORMAT_I420) {
                        channelOffset = width * height;
                        outputStride = 1;
                    } else if (colorFormat == Constants.COLOR_FORMAT_NV21) {
                        channelOffset = width * height + 1;
                        outputStride = 2;
                    } else if (colorFormat == Constants.COLOR_FORMAT_NV12) {
                        channelOffset = width * height;
                        outputStride = 2;
                    }
                    break;
                case 2: // V (Cr)
                    if (colorFormat == Constants.COLOR_FORMAT_I420) {
                        channelOffset = (int) (width * height * 1.25);
                        outputStride = 1;
                    } else if (colorFormat == Constants.COLOR_FORMAT_NV21) {
                        channelOffset = width * height;
                        outputStride = 2;
                    } else if (colorFormat == Constants.COLOR_FORMAT_NV12) {
                        channelOffset = width * height + 1;
                        outputStride = 2;
                    }
                    break;
                default:
                    break;
            }
            ByteBuffer buffer = planes[i].getBuffer();
            int rowStride = planes[i].getRowStride();
            int pixelStride = planes[i].getPixelStride();
            int shift = (i == 0) ? 0 : 1; // chroma planes are half width and half height
            int w = width >> shift;
            int h = height >> shift;
            buffer.position(rowStride * (crop.top >> shift) + pixelStride * (crop.left >> shift));
            for (int row = 0; row < h; row++) {
                int length;
                if (pixelStride == 1 && outputStride == 1) {
                    // Contiguous samples: copy the whole row at once
                    length = w;
                    buffer.get(mYuvBuffer, channelOffset, length);
                    channelOffset += length;
                } else {
                    // Interleaved samples: take every pixelStride-th byte
                    length = (w - 1) * pixelStride + 1;
                    buffer.get(rowData, 0, length);
                    for (int col = 0; col < w; col++) {
                        mYuvBuffer[channelOffset] = rowData[col * pixelStride];
                        channelOffset += outputStride;
                    }
                }
                // Skip the row padding, except after the last row, where the buffer may end early
                if (row < h - 1) {
                    buffer.position(buffer.position() + rowStride - length);
                }
            }
        }
    }

    @Override
    protected int decodeType() {
        return VIDEO;
    }

    @Override
    public boolean handleMessage(@NonNull Message msg) {
        switch (msg.what) {
            case MSG_VIDEO_OUTPUT:
                try {
                    if (mTime == -1) {
                        mTime = System.currentTimeMillis();
                    }
                    Bundle bundle = msg.getData();
                    int index = bundle.getInt("index");
                    long ptsTime = bundle.getLong("time");
                    // Wait until this frame is due, then return the buffer to the decoder
                    sleepRender(ptsTime, mTime);
                    mediaCodec.releaseOutputBuffer(index, false);
                } catch (Exception e) {
                    e.printStackTrace();
                }
                break;
            default:
                break;
        }
        return super.handleMessage(msg);
    }

    /**
     * Aligns output with the frames' timestamps.
     */
    private long sleepRender(long ptsTimes, long startMs) {
        // The timeline starts at 0 and info.presentationTimeUs is in microseconds;
        // the system time elapsed since the first frame stands in for the playback clock.
        ptsTimes = ptsTimes / 1000;
        long systemTimes = System.currentTimeMillis() - startMs;
        long timeDifference = ptsTimes - systemTimes;
        // If the current frame is ahead of the system clock, wait for the difference
        if (timeDifference > 0) {
            try {
                // TODO: sleep accuracy depends on the system; letting the video's own pts drive the decoder is preferable
                Thread.sleep(timeDifference);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return timeDifference;
    }
}
```
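`getDataFromImage()` encodes the three YUV 4:2:0 layouts in its `channelOffset`/`outputStride` table: I420 stores the whole U plane and then the whole V plane, NV12 interleaves U and V starting with U (the YUV420SemiPlanar layout the encoder is configured for), and NV21 interleaves them starting with V. Below is a simplified sketch of the same rules, assuming even dimensions and tightly packed source planes (rowStride equal to the plane width, pixelStride 1, no crop); `YuvPacker` and `Layout` are hypothetical names.

```java
final class YuvPacker {
    enum Layout { I420, NV12, NV21 }

    private YuvPacker() {}

    /** Packs separate Y, U (Cb) and V (Cr) planes into one width*height*3/2 buffer. */
    static byte[] pack(byte[] y, byte[] u, byte[] v, int width, int height, Layout layout) {
        int ySize = width * height;
        int cSize = ySize / 4;                  // each chroma plane is (w/2) x (h/2)
        byte[] out = new byte[ySize * 3 / 2];
        System.arraycopy(y, 0, out, 0, ySize);  // the Y plane always comes first
        int uOffset;
        int vOffset;
        int stride;
        switch (layout) {
            case I420: // YYYY... UU... VV...
                uOffset = ySize;
                vOffset = ySize + cSize;        // same as width * height * 1.25
                stride = 1;
                break;
            case NV12: // YYYY... UVUV...
                uOffset = ySize;
                vOffset = ySize + 1;
                stride = 2;
                break;
            default:   // NV21: YYYY... VUVU...
                uOffset = ySize + 1;
                vOffset = ySize;
                stride = 2;
                break;
        }
        for (int i = 0; i < cSize; i++) {
            out[uOffset + i * stride] = u[i];
            out[vOffset + i * stride] = v[i];
        }
        return out;
    }
}
```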