李沐《动手学深度学习》循环神经网络 经典网络模型

系列文章

李沐《动手学深度学习》预备知识 张量操作及数据处理

李沐《动手学深度学习》预备知识 线性代数及微积分

李沐《动手学深度学习》线性神经网络 线性回归

李沐《动手学深度学习》线性神经网络 softmax回归

李沐《动手学深度学习》多层感知机 模型概念和代码实现

李沐《动手学深度学习》多层感知机 深度学习相关概念

李沐《动手学深度学习》深度学习计算

李沐《动手学深度学习》卷积神经网络 相关基础概念

李沐《动手学深度学习》卷积神经网络 经典网络模型

李沐《动手学深度学习》循环神经网络 相关基础概念

目录

- 系列文章

- 一、门控循环单元GRU

-

- (一)门控隐状态

- (二)从零开始实现

- (三)简洁实现

- 二、长短期记忆网络LSTM

-

- (一)门控记忆元

- (二)从零开始实现

- (三)简洁实现

- 三、深度循环神经网络

-

- (一)函数依赖关系

- (二)简洁实现

- (三)训练和预测

- 四、双向循环神经网络

-

- (一)隐马尔可夫模型中的动态规划

- (二)双向模型

- (三)双向循环神经网络的特性

- 五、机器翻译与数据集

-

- (一)下载和预处理数据集

- (二)词元化

- (三)词表

- (四)加载数据集

- (五)训练数据

- 六、编码器-解码器架构

-

- (一)编码器

- (二)解码器

- (三)合并编码器和解码器

- 七、序列到序列学习

-

- (一)编码器

- (二)解码器

- (三)损失函数

- (四)训练

- (五)预测

- (六)预测序列的评估

- 八、束搜索

-

- (一)贪心搜索

- (二)穷举搜索

- (三)束搜索

- (四)小结

教材:李沐《动手学深度学习》

实践中存在的梯度问题:

- 可能会遇到早期观测值比较重要的情况: 需要有某些机制能够在一个记忆元里存储重要的早期信息。 如果没有这样的机制,我们将不得不给这个观测值指定一个非常大的梯度, 因为它会影响所有后续的观测值。

- 可能遇到一些词元没有相关观测值的情况:我们希望有一些机制来跳过隐状态表示中的此类词元。

- 可能遇到序列的各个部分之间存在逻辑中断的情况:在这种情况下,最好有一种方法来重置我们的内部状态表示。

一、门控循环单元GRU

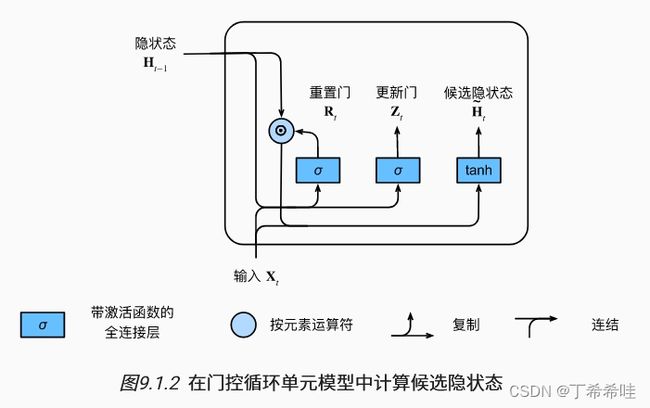

(一)门控隐状态

门控循环单元与普通的循环神经网络的区别:门控循环单元支持隐状态的门控,因此模型有专门的机制来确定应该何时更新隐状态,以及应该何时重置隐状态。

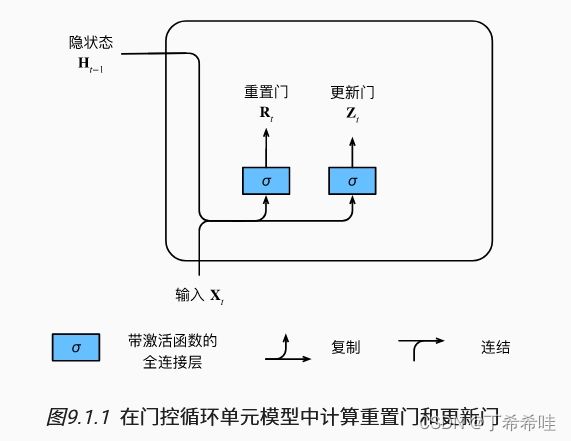

- 重置门和更新门

重置门:允许控制“可能还想记住”的过去状态的数量(捕获序列中的短期依赖关系)

更新门:允许控制新状态中有多少个是旧状态的副本(捕获序列中的长期依赖关系)

门控循环单元中的重置门和更新门的输入是由当前时间步的输入和前一时间步的隐状态给出。 两个门的输出是由使用sigmoid激活函数的两个全连接层给出。

重置门的计算公式: R t = σ ( X t W x r + H t − 1 W h r + b r ) R_t=\sigma(X_tW_{xr}+H_{t-1}W_{hr}+b_r) Rt=σ(XtWxr+Ht−1Whr+br)

更新门的计算公式: Z t = σ ( X t W x z + H t − 1 W h z + b z ) Z_t=\sigma(X_tW_{xz}+H_{t-1}W_{hz}+b_z) Zt=σ(XtWxz+Ht−1Whz+bz)

( X t X_t Xt是输入, H t − 1 H_{t-1} Ht−1是上一个时间步的隐状态, W W W是权重参数, b b b是偏置参数)

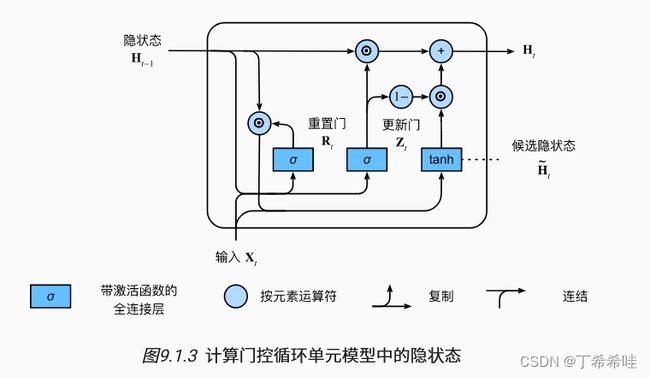

- 候选隐状态(集成重置门)

常规隐状态 H t H_t Ht: H t = ϕ ( X t W x h + H t − 1 W h h + b h ) H_t=\phi(X_tW_{xh}+H_{t-1}W_{hh}+b_h) Ht=ϕ(XtWxh+Ht−1Whh+bh)

时间步t的候选隐状态 H t ~ \tilde{H_t} Ht~:重置门与常规隐状态更新机制集成

H t ~ = t a n h ( X t W x h + ( R t ⨀ H t − 1 ) W h h + b h ) \tilde{H_t}=tanh(X_tW_{xh}+(R_t \bigodot H_{t-1})W_{hh}+b_h) Ht~=tanh(XtWxh+(Rt⨀Ht−1)Whh+bh)

- R t R_t Rt和 H t − 1 H_{t-1} Ht−1的元素相乘可以减少以往状态的影响

- 当重置门 R t R_t Rt接近于1时,会恢复一个普通的循环神经网络

- 当重置门 R t R_t Rt接近于0时,会恢复为以 X t X_t Xt作为输入的多层感知机的结果

- 隐状态(集成重置门和更新门)

门控循环单元的最终更新公式:

H t = Z t ⨀ H t − 1 + ( 1 − Z t ) ⨀ H ~ t H_t=Z_t \bigodot H_{t-1}+(1-Z_t )\bigodot \tilde H_t Ht=Zt⨀Ht−1+(1−Zt)⨀H~t

- 当更新门 Z t Z_t Zt接近于1时,模型倾向于只保留旧状态,来自 X t X_t Xt的信息基本上被忽略,从而跳过了依赖链条中的时间步t

- 当更新门 Z t Z_t Zt接近于0时,新的隐状态 H t H_t Ht就会接近候选隐状态 H ~ t \tilde H_t H~t

(二)从零开始实现

- 读取数据

import torch

from torch import nn

from d2l import torch as d2l

batch_size, num_steps = 32, 35

train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

- 初始化模型参数

def get_params(vocab_size, num_hiddens, device):

num_inputs = num_outputs = vocab_size

def normal(shape):

return torch.randn(size=shape, device=device)*0.01

def three():

return (normal((num_inputs, num_hiddens)),

normal((num_hiddens, num_hiddens)),

torch.zeros(num_hiddens, device=device))

W_xz, W_hz, b_z = three() # 更新门参数

W_xr, W_hr, b_r = three() # 重置门参数

W_xh, W_hh, b_h = three() # 候选隐状态参数

# 输出层参数

W_hq = normal((num_hiddens, num_outputs))

b_q = torch.zeros(num_outputs, device=device)

# 附加梯度

params = [W_xz, W_hz, b_z, W_xr, W_hr, b_r, W_xh, W_hh, b_h, W_hq, b_q]

for param in params:

param.requires_grad_(True)

return params

- 定义隐状态的初始化函数init_gru_state

def init_gru_state(batch_size, num_hiddens, device):

return (torch.zeros((batch_size, num_hiddens), device=device), )

- 定义门控循环单元模型

def gru(inputs, state, params):

W_xz, W_hz, b_z, W_xr, W_hr, b_r, W_xh, W_hh, b_h, W_hq, b_q = params

H, = state

outputs = []

for X in inputs:

Z = torch.sigmoid((X @ W_xz) + (H @ W_hz) + b_z)

R = torch.sigmoid((X @ W_xr) + (H @ W_hr) + b_r)

H_tilda = torch.tanh((X @ W_xh) + ((R * H) @ W_hh) + b_h)

H = Z * H + (1 - Z) * H_tilda

Y = H @ W_hq + b_q

outputs.append(Y)

return torch.cat(outputs, dim=0), (H,)

- 训练

vocab_size, num_hiddens, device = len(vocab), 256, d2l.try_gpu()

num_epochs, lr = 500, 1

model = d2l.RNNModelScratch(len(vocab), num_hiddens, device, get_params,

init_gru_state, gru)

d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

(三)简洁实现

num_inputs = vocab_size

gru_layer = nn.GRU(num_inputs, num_hiddens)

model = d2l.RNNModel(gru_layer, len(vocab))

model = model.to(device)

d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

二、长短期记忆网络LSTM

隐变量模型存在着长期信息保存和短期输入缺失的问题。 解决这一问题的最早方法之一是长短期存储器(long short-term memory,LSTM)。 它有许多与门控循环单元一样的属性。

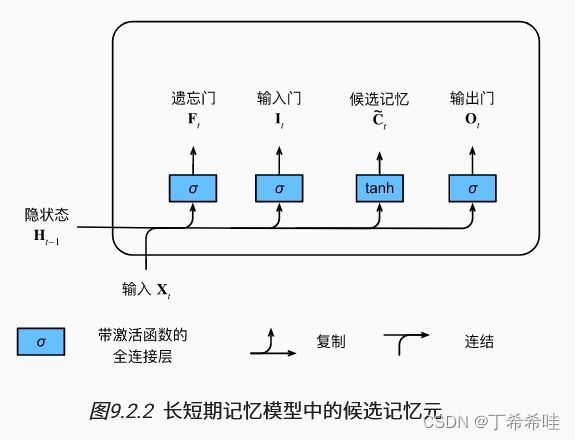

(一)门控记忆元

长短期记忆网络引入了记忆元,需要许多门来对记忆元进行控制。

- 输入门、忘记门和输出门

输出门:用来从单元中输出条目的门

输入门:用来决定何时将数据读入单元

忘记门:用来重置单元的内容

与在门控循环单元中一样,长短期记忆网络的输入是当前时间步的输入和前一个时间步的隐状态。

输入门的计算公式: I t = σ ( X t W x i + H t − 1 W h i + b i ) I_t=\sigma(X_tW_{xi}+H_{t-1}W_{hi}+b_i) It=σ(XtWxi+Ht−1Whi+bi)

忘记门的计算公式: F t = σ ( X t W x f + H t − 1 W h f + b f ) F_t=\sigma(X_tW_{xf}+H_{t-1}W_{hf}+b_f) Ft=σ(XtWxf+Ht−1Whf+bf)

输出门的计算公式: O t = σ ( X t W x o + H t − 1 W h o + b o ) O_t=\sigma(X_tW_{xo}+H_{t-1}W_{ho}+b_o) Ot=σ(XtWxo+Ht−1Who+bo)

( X t X_t Xt是输入, H t − 1 H_{t-1} Ht−1是上一个时间步的隐状态, W W W是权重参数, b b b是偏置参数)

- 候选记忆元:没有指定各种门的操作

候选记忆元在时间步 t t t处的方程:

C ~ t = t a n h ( X t W x c + H t − 1 W h c + b c ) \tilde C_t=tanh(X_tW_{xc}+H_{t-1}W_{hc}+b_c) C~t=tanh(XtWxc+Ht−1Whc+bc)

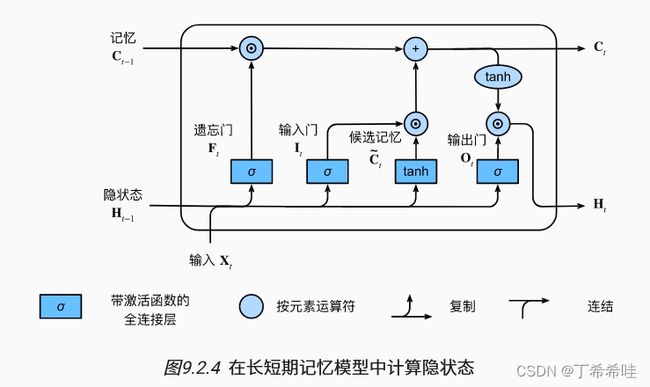

- 记忆元:指定了输入门和遗忘门

长短期记忆网络中的输入门 I t I_t It:控制采用多少来自 C ~ t \tilde C_t C~t的新数据;

长短期记忆网络中的遗忘门 F t F_t Ft:控制保留多少过去的几医院 C t − 1 C_{t-1} Ct−1的内容;

H t = F t ⨀ C t − 1 + I t ⨀ C ~ t H_t=F_t \bigodot C_{t-1}+I_t \bigodot \tilde C_t Ht=Ft⨀Ct−1+It⨀C~t

- 隐状态:输出门发挥作用

H t = O t ⨀ t a n h ( C t ) H_t=O_t\bigodot tanh(C_t) Ht=Ot⨀tanh(Ct)

(二)从零开始实现

- 数据集加载

import torch

from torch import nn

from d2l import torch as d2l

batch_size, num_steps = 32, 35

train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

- 初始化模型参数

def get_lstm_params(vocab_size, num_hiddens, device):

num_inputs = num_outputs = vocab_size

def normal(shape):

return torch.randn(size=shape, device=device)*0.01

def three():

return (normal((num_inputs, num_hiddens)),

normal((num_hiddens, num_hiddens)),

torch.zeros(num_hiddens, device=device))

W_xi, W_hi, b_i = three() # 输入门参数

W_xf, W_hf, b_f = three() # 遗忘门参数

W_xo, W_ho, b_o = three() # 输出门参数

W_xc, W_hc, b_c = three() # 候选记忆元参数

# 输出层参数

W_hq = normal((num_hiddens, num_outputs))

b_q = torch.zeros(num_outputs, device=device)

# 附加梯度

params = [W_xi, W_hi, b_i, W_xf, W_hf, b_f, W_xo, W_ho, b_o, W_xc, W_hc,

b_c, W_hq, b_q]

for param in params:

param.requires_grad_(True)

return params

- 状态初始化

def init_lstm_state(batch_size, num_hiddens, device):

return (torch.zeros((batch_size, num_hiddens), device=device),

torch.zeros((batch_size, num_hiddens), device=device))

- 定义模型

def lstm(inputs, state, params):

[W_xi, W_hi, b_i, W_xf, W_hf, b_f, W_xo, W_ho, b_o, W_xc, W_hc, b_c,

W_hq, b_q] = params

(H, C) = state

outputs = []

for X in inputs:

I = torch.sigmoid((X @ W_xi) + (H @ W_hi) + b_i)

F = torch.sigmoid((X @ W_xf) + (H @ W_hf) + b_f)

O = torch.sigmoid((X @ W_xo) + (H @ W_ho) + b_o)

C_tilda = torch.tanh((X @ W_xc) + (H @ W_hc) + b_c)

C = F * C + I * C_tilda

H = O * torch.tanh(C)

Y = (H @ W_hq) + b_q

outputs.append(Y)

return torch.cat(outputs, dim=0), (H, C)

- 训练

vocab_size, num_hiddens, device = len(vocab), 256, d2l.try_gpu()

num_epochs, lr = 500, 1

model = d2l.RNNModelScratch(len(vocab), num_hiddens, device, get_lstm_params,

init_lstm_state, lstm)

d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

(三)简洁实现

num_inputs = vocab_size

lstm_layer = nn.LSTM(num_inputs, num_hiddens)

model = d2l.RNNModel(lstm_layer, len(vocab))

model = model.to(device)

d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

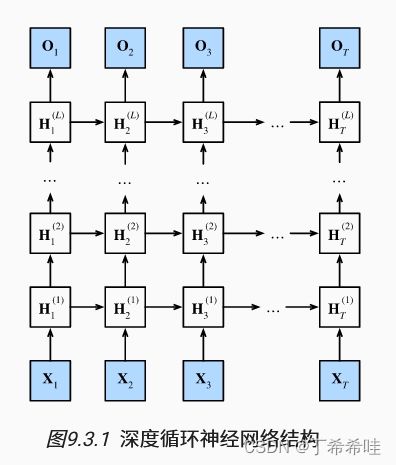

三、深度循环神经网络

具有L个隐藏层的深度循环神经网络, 每个隐状态都连续地传递到当前层的下一个时间步和下一层的当前时间步。

(一)函数依赖关系

第 l l l层隐藏层的隐状态 H t ( l ) H_t^{(l)} Ht(l):

H t ( l ) = ϕ l ( H t ( l − 1 ) W x h ( l ) + H t − 1 ( l ) W h h ( l ) + b h ( l ) ) H_t^{(l)}=\phi_l(H_t^{(l-1)}W_{xh}^{(l)}+H_{t-1}^{(l)}W_{hh}^{(l)}+b_h^{(l)}) Ht(l)=ϕl(Ht(l−1)Wxh(l)+Ht−1(l)Whh(l)+bh(l))

输出层的计算仅仅基于第 l l l个隐藏层最终的隐状态:

O t = H t ( L ) W h q + b q O_t=H_t^{(L)}W_{hq}+b_q Ot=Ht(L)Whq+bq

(二)简洁实现

import torch

from torch import nn

from d2l import torch as d2l

batch_size, num_steps = 32, 35

train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

vocab_size, num_hiddens, num_layers = len(vocab), 256, 2

num_inputs = vocab_size

device = d2l.try_gpu()

lstm_layer = nn.LSTM(num_inputs, num_hiddens, num_layers)

model = d2l.RNNModel(lstm_layer, len(vocab))

model = model.to(device)

(三)训练和预测

num_epochs, lr = 500, 2

d2l.train_ch8(model, train_iter, vocab, lr*1.0, num_epochs, device)

四、双向循环神经网络

(一)隐马尔可夫模型中的动态规划

隐马尔可夫模型:

任何 h t → h t + 1 h_t\rightarrow h_{t+1} ht→ht+1转移都是由一些状态转移概率 P ( h t + 1 ∣ h t ) P(h_{t+1}|h_t) P(ht+1∣ht)给出。

对于有 T T T个观测值的序列, 在观测状态和隐状态上具有以下联合概率分布:

P ( x 1 , . . . , x T , h 1 , . . . , h T ) = ∏ t = 1 T P ( h t ∣ h t − 1 ) P ( x t ∣ h t ) , w h e r e P ( h 1 ∣ h 0 ) = P ( h 1 ) P(x_1,...,x_T,h_1,...,h_T)= \prod_{t=1}^T P(h_t|h_{t-1})P(x_t|h_t),where P(h_1|h_0)=P(h_1) P(x1,...,xT,h1,...,hT)=t=1∏TP(ht∣ht−1)P(xt∣ht),whereP(h1∣h0)=P(h1)

隐马尔可夫模型中的动态规划的前向递归:

π t + 1 ( h t + 1 ) = ∑ h t π t ( h t ) P ( x t ∣ h t ) P ( h t + 1 ∣ h t ) \pi_{t+1}(h_{t+1})=\sum_{h_t}\pi_t(h_t)P(x_t|h_t)P(h_{t+1}|h_t) πt+1(ht+1)=ht∑πt(ht)P(xt∣ht)P(ht+1∣ht)

隐马尔可夫模型中的动态规划的后向递归:

ρ t − 1 ( h t − 1 ) = ∑ h t P ( x t ∣ h t ) P ( h t ∣ h t − 1 ) ρ t ( h t ) \rho_{t-1}(h_{t-1})=\sum_{h_t}P(x_t|h_t)P(h_{t}|h_{t-1})\rho_t(h_t) ρt−1(ht−1)=ht∑P(xt∣ht)P(ht∣ht−1)ρt(ht)

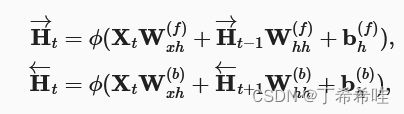

(二)双向模型

双向循环神经网络(bidirectional RNNs) 添加了反向传递信息的隐藏层。具有单个隐藏层的双向循环神经网络的架构:

前向和反向隐状态的更新:

输出层计算得到的输出:

O t = H t W h q + b q O_t=H_tW_{hq}+b_q Ot=HtWhq+bq

(三)双向循环神经网络的特性

双向循环神经网络的一个关键特性:使用来自序列两端的信息来估计输出。

缺点:

- 不适用于对下一个词元进行预测的场景

- 双向循环神经网络的计算速度非常慢

五、机器翻译与数据集

机器翻译:将序列从一种语言自动翻译成另一种语言。

(一)下载和预处理数据集

机器翻译的数据集是由源语言和目标语言的文本序列对组成的:

import os

import torch

from d2l import torch as d2l

#@save

d2l.DATA_HUB['fra-eng'] = (d2l.DATA_URL + 'fra-eng.zip',

'94646ad1522d915e7b0f9296181140edcf86a4f5')

#@save

def read_data_nmt():

"""载入“英语-法语”数据集"""

data_dir = d2l.download_extract('fra-eng')

with open(os.path.join(data_dir, 'fra.txt'), 'r',

encoding='utf-8') as f:

return f.read()

raw_text = read_data_nmt()

print(raw_text[:75])

对原始文本数据进行预处理:

#@save

def preprocess_nmt(text):

"""预处理“英语-法语”数据集"""

def no_space(char, prev_char):

return char in set(',.!?') and prev_char != ' '

# 使用空格替换不间断空格

# 使用小写字母替换大写字母

text = text.replace('\u202f', ' ').replace('\xa0', ' ').lower()

# 在单词和标点符号之间插入空格

out = [' ' + char if i > 0 and no_space(char, text[i - 1]) else char

for i, char in enumerate(text)]

return ''.join(out)

text = preprocess_nmt(raw_text)

print(text[:80])

(二)词元化

机器翻译中一般使用单词级词元化

#@save

def tokenize_nmt(text, num_examples=None):

"""词元化“英语-法语”数据数据集"""

source, target = [], []

for i, line in enumerate(text.split('\n')):

if num_examples and i > num_examples:

break

parts = line.split('\t')

if len(parts) == 2:

source.append(parts[0].split(' '))

target.append(parts[1].split(' '))

return source, target

source, target = tokenize_nmt(text)

source[:6], target[:6]

(三)词表

- 分别为源语言和目标语言构建两个词表

- 将出现次数少于2次的低频率词元 视为相同的未知(“

”)词元 - 额外的特定词元:填充词元(“

”),序列的开始词元(“ ”)和结束词元(“ ”)

src_vocab = d2l.Vocab(source, min_freq=2,

reserved_tokens=['' , '' , '' ])

len(src_vocab)

(四)加载数据集

定义truncate_pad函数用于截断或填充文本序列:

#@save

def truncate_pad(line, num_steps, padding_token):

"""截断或填充文本序列"""

if len(line) > num_steps:

return line[:num_steps] # 截断

return line + [padding_token] * (num_steps - len(line)) # 填充

truncate_pad(src_vocab[source[0]], 10, src_vocab['' ])

将文本序列 转换成小批量数据集用于训练:

#@save

def build_array_nmt(lines, vocab, num_steps):

"""将机器翻译的文本序列转换成小批量"""

lines = [vocab[l] for l in lines]

lines = [l + [vocab['' ]] for l in lines]

array = torch.tensor([truncate_pad(

l, num_steps, vocab['' ]) for l in lines])

valid_len = (array != vocab['' ]).type(torch.int32).sum(1)

return array, valid_len

(五)训练数据

#@save

def load_data_nmt(batch_size, num_steps, num_examples=600):

"""返回翻译数据集的迭代器和词表"""

text = preprocess_nmt(read_data_nmt())

source, target = tokenize_nmt(text, num_examples)

src_vocab = d2l.Vocab(source, min_freq=2,

reserved_tokens=['' , '' , '' ])

tgt_vocab = d2l.Vocab(target, min_freq=2,

reserved_tokens=['' , '' , '' ])

src_array, src_valid_len = build_array_nmt(source, src_vocab, num_steps)

tgt_array, tgt_valid_len = build_array_nmt(target, tgt_vocab, num_steps)

data_arrays = (src_array, src_valid_len, tgt_array, tgt_valid_len)

data_iter = d2l.load_array(data_arrays, batch_size)

return data_iter, src_vocab, tgt_vocab

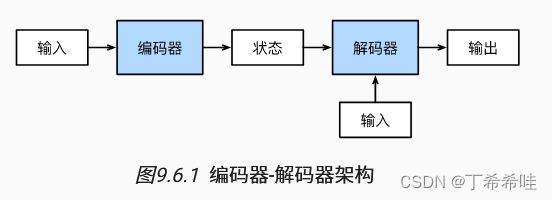

六、编码器-解码器架构

编码器和解码器用于解决机器翻译中的输入序列和输出序列都是长度可变的这一问题。

(一)编码器

在编码器接口中,我们只指定长度可变的序列作为编码器的输入X。 任何继承这个Encoder基类的模型将完成代码实现。

from torch import nn

#@save

class Encoder(nn.Module):

"""编码器-解码器架构的基本编码器接口"""

def __init__(self, **kwargs):

super(Encoder, self).__init__(**kwargs)

def forward(self, X, *args):

raise NotImplementedError

(二)解码器

在解码器接口中新增一个init_state函数, 用于将编码器的输出(enc_outputs)转换为编码后的状态。 为了逐个地生成长度可变的词元序列, 解码器在每个时间步都会将输入 (例如:在前一时间步生成的词元)和编码后的状态映射成当前时间步的输出词元。

#@save

class Decoder(nn.Module):

"""编码器-解码器架构的基本解码器接口"""

def __init__(self, **kwargs):

super(Decoder, self).__init__(**kwargs)

def init_state(self, enc_outputs, *args):

raise NotImplementedError

def forward(self, X, state):

raise NotImplementedError

(三)合并编码器和解码器

“编码器-解码器”架构包含了一个编码器和一个解码器, 并且还拥有可选的额外的参数。 在前向传播中,编码器的输出用于生成编码状态, 这个状态又被解码器作为其输入的一部分。

#@save

class EncoderDecoder(nn.Module):

"""编码器-解码器架构的基类"""

def __init__(self, encoder, decoder, **kwargs):

super(EncoderDecoder, self).__init__(**kwargs)

self.encoder = encoder

self.decoder = decoder

def forward(self, enc_X, dec_X, *args):

enc_outputs = self.encoder(enc_X, *args)

dec_state = self.decoder.init_state(enc_outputs, *args)

return self.decoder(dec_X, dec_state)

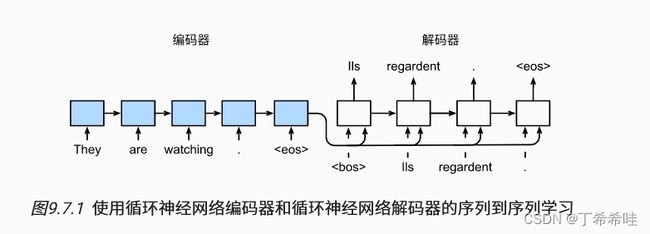

七、序列到序列学习

- 编码器部分:

特定的“”表示序列结束词元。 一旦输出序列生成此词元,模型就会停止预测。 - 解码器部分:

- 特定的“

”表示序列开始词元,它是解码器的输入序列的第一个词元。 - 使用循环神经网络编码器最终的隐状态来初始化解码器的隐状态。

- 特定的“

(一)编码器

编码器将长度可变的输入序列转换成形状固定的上下文变量c,并且将输入序列的信息在该上下文变量中进行编码。

- 在时间步 t t t,循环神经网络使用函数 f f f将词元 x t x_t xt的输入特征向量 x t x_t xt和 h t − 1 h_{t-1} ht−1转换成 h t h_t ht:

h t = f ( x t , h t − 1 ) h_t=f(x_t,h_{t-1}) ht=f(xt,ht−1) - 编码器通过选定的函数 q q q,将所有时间步的隐状态转换为上下文变量:

c = q ( h 1 , . . . , h T ) c=q(h_1,...,h_T) c=q(h1,...,hT)

当选择 q ( h 1 , . . . , h T ) = h T q(h_1,...,h_T)=h_T q(h1,...,hT)=hT时,上下文变量仅仅是输入序列在最后时间步的隐状态 h T h_T hT。

利用一个多层门控循环单元来实现编码器:

#@save

class Seq2SeqEncoder(d2l.Encoder):

"""用于序列到序列学习的循环神经网络编码器"""

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

dropout=0, **kwargs):

super(Seq2SeqEncoder, self).__init__(**kwargs)

# 嵌入层

self.embedding = nn.Embedding(vocab_size, embed_size)

self.rnn = nn.GRU(embed_size, num_hiddens, num_layers,

dropout=dropout)

def forward(self, X, *args):

# 输出'X'的形状:(batch_size,num_steps,embed_size)

X = self.embedding(X)

# 在循环神经网络模型中,第一个轴对应于时间步

X = X.permute(1, 0, 2)

# 如果未提及状态,则默认为0

output, state = self.rnn(X)

# output的形状:(num_steps,batch_size,num_hiddens)

# state的形状:(num_layers,batch_size,num_hiddens)

return output, state

实例化编码器:

encoder = Seq2SeqEncoder(vocab_size=10, embed_size=8, num_hiddens=16,

num_layers=2)

encoder.eval()

X = torch.zeros((4, 7), dtype=torch.long)

output, state = encoder(X)

output.shape

(二)解码器

来自训练数据集的输出序列 y 1 , y 2 , . . . , y T ′ y_1,y_2,...,y_{T'} y1,y2,...,yT′,对于每个时间步 t ′ t' t′(与输入序列或编码器的时间步 t t t不同),解码器输出 y t ′ y_{t'} yt′的概率取决于先前的输出子序列 y 1 , . . . , y t ′ − 1 y_1,...,y_{t'-1} y1,...,yt′−1和上下文变量 c c c,即 P ( y t ′ ∣ y 1 , . . . , y t ′ − 1 , c ) P(y_{t'}|y_1,...,y{t'-1},c) P(yt′∣y1,...,yt′−1,c)。

- 在输出序列上的任意时间步 t ′ t' t′, 循环神经网络将来自上一时间步的输出 y t ′ − 1 y_{t'-1} yt′−1和上下文变量 c c c作为输入, 然后在当前时间步将它们和上一隐状态 s t ′ − 1 s_{t'-1} st′−1转换为隐状态 s t s_t st:

s t ′ = g ( y t ′ − 1 , c , s t ′ − 1 ) s_{t'}=g(y_{t'-1},c,s_{t'-1}) st′=g(yt′−1,c,st′−1) - 在获得解码器的隐状态后,使用输出层和softmax操作计算在时间步 t ′ t' t′时输出 y t ′ y_{t'} yt′的条件概率分布:

P ( y t ′ ∣ y 1 , . . . , y t ′ − 1 , c ) P(y_{t'}|y_1,...,y_{t'-1},c) P(yt′∣y1,...,yt′−1,c)

实现解码器时,使用编码器最后一个时间步的隐状态来初始化解码器的隐状态:

class Seq2SeqDecoder(d2l.Decoder):

"""用于序列到序列学习的循环神经网络解码器"""

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

dropout=0, **kwargs):

super(Seq2SeqDecoder, self).__init__(**kwargs)

self.embedding = nn.Embedding(vocab_size, embed_size)

self.rnn = nn.GRU(embed_size + num_hiddens, num_hiddens, num_layers,

dropout=dropout)

self.dense = nn.Linear(num_hiddens, vocab_size)

def init_state(self, enc_outputs, *args):

return enc_outputs[1]

def forward(self, X, state):

# 输出'X'的形状:(batch_size,num_steps,embed_size)

X = self.embedding(X).permute(1, 0, 2)

# 广播context,使其具有与X相同的num_steps

context = state[-1].repeat(X.shape[0], 1, 1)

X_and_context = torch.cat((X, context), 2)

output, state = self.rnn(X_and_context, state)

output = self.dense(output).permute(1, 0, 2)

# output的形状:(batch_size,num_steps,vocab_size)

# state的形状:(num_layers,batch_size,num_hiddens)

return output, state

实例化解码器:

decoder = Seq2SeqDecoder(vocab_size=10, embed_size=8, num_hiddens=16,

num_layers=2)

decoder.eval()

state = decoder.init_state(encoder(X))

output, state = decoder(X, state)

output.shape, state.shape

(三)损失函数

使用sequence_mask函数通过零值化屏蔽不相关的项:

#@save

def sequence_mask(X, valid_len, value=0):

"""在序列中屏蔽不相关的项"""

maxlen = X.size(1)

mask = torch.arange((maxlen), dtype=torch.float32,

device=X.device)[None, :] < valid_len[:, None]

X[~mask] = value

return X

X = torch.tensor([[1, 2, 3], [4, 5, 6]])

sequence_mask(X, torch.tensor([1, 2]))

通过扩展softmax交叉熵损失函数来遮蔽不相关的预测:

#@save

class MaskedSoftmaxCELoss(nn.CrossEntropyLoss):

"""带遮蔽的softmax交叉熵损失函数"""

# pred的形状:(batch_size,num_steps,vocab_size)

# label的形状:(batch_size,num_steps)

# valid_len的形状:(batch_size,)

def forward(self, pred, label, valid_len):

weights = torch.ones_like(label)

weights = sequence_mask(weights, valid_len)

self.reduction='none'

unweighted_loss = super(MaskedSoftmaxCELoss, self).forward(

pred.permute(0, 2, 1), label)

weighted_loss = (unweighted_loss * weights).mean(dim=1)

return weighted_loss

(四)训练

#@save

def train_seq2seq(net, data_iter, lr, num_epochs, tgt_vocab, device):

"""训练序列到序列模型"""

def xavier_init_weights(m):

if type(m) == nn.Linear:

nn.init.xavier_uniform_(m.weight)

if type(m) == nn.GRU:

for param in m._flat_weights_names:

if "weight" in param:

nn.init.xavier_uniform_(m._parameters[param])

net.apply(xavier_init_weights)

net.to(device)

optimizer = torch.optim.Adam(net.parameters(), lr=lr)

loss = MaskedSoftmaxCELoss()

net.train()

animator = d2l.Animator(xlabel='epoch', ylabel='loss',

xlim=[10, num_epochs])

for epoch in range(num_epochs):

timer = d2l.Timer()

metric = d2l.Accumulator(2) # 训练损失总和,词元数量

for batch in data_iter:

optimizer.zero_grad()

X, X_valid_len, Y, Y_valid_len = [x.to(device) for x in batch]

bos = torch.tensor([tgt_vocab['' ]] * Y.shape[0],

device=device).reshape(-1, 1)

dec_input = torch.cat([bos, Y[:, :-1]], 1) # 强制教学

Y_hat, _ = net(X, dec_input, X_valid_len)

l = loss(Y_hat, Y, Y_valid_len)

l.sum().backward() # 损失函数的标量进行“反向传播”

d2l.grad_clipping(net, 1)

num_tokens = Y_valid_len.sum()

optimizer.step()

with torch.no_grad():

metric.add(l.sum(), num_tokens)

if (epoch + 1) % 10 == 0:

animator.add(epoch + 1, (metric[0] / metric[1],))

print(f'loss {metric[0] / metric[1]:.3f}, {metric[1] / timer.stop():.1f} '

f'tokens/sec on {str(device)}')

创建和训练一个循环神经网络“编码器-解码器”模型用于序列到序列的学习:

embed_size, num_hiddens, num_layers, dropout = 32, 32, 2, 0.1

batch_size, num_steps = 64, 10

lr, num_epochs, device = 0.005, 300, d2l.try_gpu()

train_iter, src_vocab, tgt_vocab = d2l.load_data_nmt(batch_size, num_steps)

encoder = Seq2SeqEncoder(len(src_vocab), embed_size, num_hiddens, num_layers,

dropout)

decoder = Seq2SeqDecoder(len(tgt_vocab), embed_size, num_hiddens, num_layers,

dropout)

net = d2l.EncoderDecoder(encoder, decoder)

train_seq2seq(net, train_iter, lr, num_epochs, tgt_vocab, device)

(五)预测

为了采用一个接着一个词元的方式预测输出序列, 每个解码器当前时间步的输入都将来自于前一时间步的预测词元。 与训练类似,序列开始词元(“

#@save

def predict_seq2seq(net, src_sentence, src_vocab, tgt_vocab, num_steps,

device, save_attention_weights=False):

"""序列到序列模型的预测"""

# 在预测时将net设置为评估模式

net.eval()

src_tokens = src_vocab[src_sentence.lower().split(' ')] + [

src_vocab['' ]]

enc_valid_len = torch.tensor([len(src_tokens)], device=device)

src_tokens = d2l.truncate_pad(src_tokens, num_steps, src_vocab['' ])

# 添加批量轴

enc_X = torch.unsqueeze(

torch.tensor(src_tokens, dtype=torch.long, device=device), dim=0)

enc_outputs = net.encoder(enc_X, enc_valid_len)

dec_state = net.decoder.init_state(enc_outputs, enc_valid_len)

# 添加批量轴

dec_X = torch.unsqueeze(torch.tensor(

[tgt_vocab['' ]], dtype=torch.long, device=device), dim=0)

output_seq, attention_weight_seq = [], []

for _ in range(num_steps):

Y, dec_state = net.decoder(dec_X, dec_state)

# 我们使用具有预测最高可能性的词元,作为解码器在下一时间步的输入

dec_X = Y.argmax(dim=2)

pred = dec_X.squeeze(dim=0).type(torch.int32).item()

# 保存注意力权重(稍后讨论)

if save_attention_weights:

attention_weight_seq.append(net.decoder.attention_weights)

# 一旦序列结束词元被预测,输出序列的生成就完成了

if pred == tgt_vocab['' ]:

break

output_seq.append(pred)

return ' '.join(tgt_vocab.to_tokens(output_seq)), attention_weight_seq

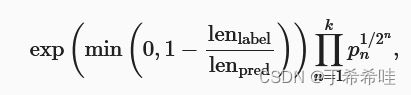

(六)预测序列的评估

def bleu(pred_seq, label_seq, k): #@save

"""计算BLEU"""

pred_tokens, label_tokens = pred_seq.split(' '), label_seq.split(' ')

len_pred, len_label = len(pred_tokens), len(label_tokens)

score = math.exp(min(0, 1 - len_label / len_pred))

for n in range(1, k + 1):

num_matches, label_subs = 0, collections.defaultdict(int)

for i in range(len_label - n + 1):

label_subs[' '.join(label_tokens[i: i + n])] += 1

for i in range(len_pred - n + 1):

if label_subs[' '.join(pred_tokens[i: i + n])] > 0:

num_matches += 1

label_subs[' '.join(pred_tokens[i: i + n])] -= 1

score *= math.pow(num_matches / (len_pred - n + 1), math.pow(0.5, n))

return score

八、束搜索

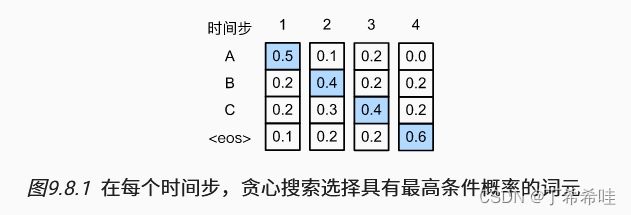

(一)贪心搜索

贪心搜索:对于输出序列的每一时间步 t ′ t' t′,基于贪心搜索从 γ \gamma γ中找到具有最高条件概率的词元。

y t ′ = arg max y ∈ γ P ( y ∣ y 1 , . . . , y t ′ − 1 , c ) y_{t'}=\underset{y\in \gamma}{{\arg\max \,}} P(y|y_1,...,y_{t'-1},c) yt′=y∈γargmaxP(y∣y1,...,yt′−1,c)

(二)穷举搜索

穷举搜索(exhaustive search): 穷举地列举所有可能的输出序列及其条件概率, 然后计算输出条件概率最高的一个。

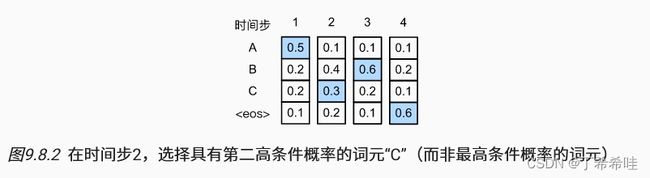

(三)束搜索

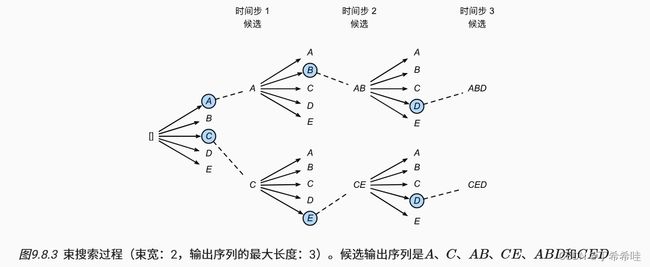

束搜索是贪心搜索的一个改进版本,它有一个超参数:束宽k。在时间步1,选择具有最高条件概率的k个词元;在接下来的时间步,都基于上一时间步的k个候选输出序列,继续从 k ∣ γ ∣ k|\gamma| k∣γ∣个可能的选择中挑出具有最高条件概率的k个候选输出序列:

(四)小结

- 贪心搜索所选取序列的计算量最小,但精度相对较低;

- 穷举搜索所选取序列的精度最高,但计算量最大;

- 束搜索通过灵活选择束宽,在正确率和计算代价之间进行权衡。