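The scoring mechanism is one of the core parts of Lucene. By default, Lucene scores every matching Document and returns the results sorted by score in descending order. Internally, scoring is a collaboration between Query, Weight, Scorer, and Similarity. If you want to adjust the default scoring to suit your own business needs and influence the final score of indexed documents, you first have to understand Lucene's scoring formula: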

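coord(q,d): q is the query and d is the document. It measures how many of the query's terms appear in the document: the more query terms the document matches, the better the match and the higher the score. The default implementation is: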

/** Implemented as <code>overlap / maxOverlap</code>. */

@Override

public float coord(int overlap, int maxOverlap) {

return overlap / (float)maxOverlap;

}
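To make the ratio concrete, here is a minimal sketch that re-implements the same formula in plain Java, with no Lucene dependency. The class name `CoordDemo` and the numbers are made up for illustration only.

```java
public class CoordDemo {
    // Same formula as the default coord above: matched query terms / total query terms.
    static float coord(int overlap, int maxOverlap) {
        return overlap / (float) maxOverlap;
    }

    public static void main(String[] args) {
        // Hypothetical query with 3 terms: one document matches 2 of them, another all 3.
        System.out.println("matches 2 of 3: " + coord(2, 3));
        System.out.println("matches 3 of 3: " + coord(3, 3));
    }
}
```

A document that matches every query term gets a factor of 1, and documents matching fewer terms are scaled down proportionally.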

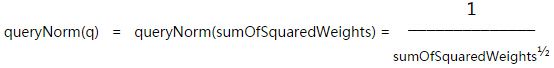

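queryNorm(q): computes a normalization factor for the query. As its single parameter q suggests, it depends only on the query, and its purpose is to make the scores of different queries comparable. Note that it changes the final score values of documents but not their ranking, because the same factor is applied to every document. Its default formula is 1/sqrt(sumOfSquaredWeights).

The queryNorm implementation lives in the DefaultSimilarity class: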

/** Implemented as <code>1/sqrt(sumOfSquaredWeights)</code>. */

@Override

public float queryNorm(float sumOfSquaredWeights) {

return (float)(1.0 / Math.sqrt(sumOfSquaredWeights));

}
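The claim that queryNorm changes scores but not ranking can be checked with a small sketch. It copies the formula above into a standalone class; `QueryNormDemo` and the raw scores are hypothetical values, not output from Lucene.

```java
public class QueryNormDemo {
    // Same formula as the default queryNorm above.
    static float queryNorm(float sumOfSquaredWeights) {
        return (float) (1.0 / Math.sqrt(sumOfSquaredWeights));
    }

    public static void main(String[] args) {
        // Hypothetical un-normalized scores of three documents for one query.
        float[] rawScores = {4.0f, 1.5f, 2.5f};
        float norm = queryNorm(4.0f);
        for (float raw : rawScores) {
            // Every document is multiplied by the same factor, so the order is preserved.
            System.out.println(raw + " -> " + raw * norm);
        }
    }
}
```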

tf(t,d): the frequency of Term t in document d. The more often the term occurs, the better the match and the higher the score. The default implementation is:

/** Implemented as <code>sqrt(freq)</code>. */

@Override

public float tf(float freq) {

return (float)Math.sqrt(freq);

}
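Because the default is a square root, extra occurrences help less and less. A minimal standalone sketch (the class name `TfDemo` is hypothetical):

```java
public class TfDemo {
    // Same formula as the default tf above: square root of the raw frequency.
    static float tf(float freq) {
        return (float) Math.sqrt(freq);
    }

    public static void main(String[] args) {
        // Print the tf factor for a few raw term frequencies.
        for (int freq : new int[] {1, 4, 9, 100}) {
            System.out.println("freq=" + freq + " tf=" + tf(freq));
        }
    }
}
```

A term that occurs 100 times contributes only 10 times as much as a term that occurs once.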

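idf(t): based on docFreq, the number of documents that contain Term t. The smaller docFreq is, the larger idf becomes and the higher the score (if a Term appears in only a few documents, those documents are rare, and rare things are valuable).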
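As a quick illustration, the sketch below uses the DefaultSimilarity formula `log(numDocs/(docFreq+1)) + 1` in a standalone class. `IdfDemo` and the document counts are assumptions chosen for the example.

```java
public class IdfDemo {
    // DefaultSimilarity formula: log(numDocs / (docFreq + 1)) + 1.
    static float idf(long docFreq, long numDocs) {
        return (float) (Math.log(numDocs / (double) (docFreq + 1)) + 1.0);
    }

    public static void main(String[] args) {
        long numDocs = 1000; // hypothetical index size
        for (long docFreq : new long[] {1, 9, 99, 999}) {
            // Rarer terms (small docFreq) get a larger idf.
            System.out.println("docFreq=" + docFreq + " idf=" + idf(docFreq, numDocs));
        }
    }
}
```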

t.getBoost(): the weight assigned to a Term. With the QueryParser syntax, for example, you can write: java^1.2

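norm(t,d): has several parts. One is the Document boost, although in Lucene 5 the Document-level boost has been removed. Another is the Field boost. The last is the number of Tokens the analyzer produced for the field: the more tokens, the weaker the match. Matching one keyword in 1,000,000 characters is not the same as matching one keyword in 10 characters, and Lucene considers the latter more significant and ranks it first.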
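The length part of the norm can be sketched with the formula DefaultSimilarity uses in `lengthNorm`: boost * 1/sqrt(numTerms). The class below is a standalone approximation. `LengthNormDemo` is a made-up name, and it deliberately ignores the lossy one-byte encoding Lucene applies when it stores norms, so real scores can differ.

```java
public class LengthNormDemo {
    // Field boost multiplied by 1/sqrt(number of terms in the field).
    // Assumption: the one-byte norm encoding is not modelled here.
    static float lengthNorm(float fieldBoost, int numTerms) {
        return fieldBoost * (float) (1.0 / Math.sqrt(numTerms));
    }

    public static void main(String[] args) {
        System.out.println("4 terms, boost 1:     " + lengthNorm(1f, 4));
        System.out.println("10000 terms, boost 1: " + lengthNorm(1f, 10000));
        System.out.println("4 terms, boost 100:   " + lengthNorm(100f, 4));
    }
}
```

A short field gets a larger norm than a long one, and a field boost scales the norm directly.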

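The values of these sub-functions are multiplied together to produce the final score: for each query term, tf, idf (squared), the term boost, and norm are multiplied, the per-term products are summed, and the sum is multiplied by coord and queryNorm.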
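Putting the pieces together, Lucene's classic TF-IDF formula is score(q,d) = coord(q,d) · queryNorm(q) · Σ (tf(t in d) · idf(t)² · t.getBoost() · norm(t,d)), summed over the query terms. Here is a rough sketch for the simplest case, a single-term query, where coord is 1. It is plain Java and only approximates what Lucene does: the class name `ClassicScoreDemo` and all inputs are hypothetical, and the one-byte norm encoding is ignored.

```java
public class ClassicScoreDemo {
    static float tf(float freq) {
        return (float) Math.sqrt(freq);
    }

    static float idf(long docFreq, long numDocs) {
        return (float) (Math.log(numDocs / (double) (docFreq + 1)) + 1.0);
    }

    static float queryNorm(float sumOfSquaredWeights) {
        return (float) (1.0 / Math.sqrt(sumOfSquaredWeights));
    }

    static float lengthNorm(float fieldBoost, int numTerms) {
        return fieldBoost * (float) (1.0 / Math.sqrt(numTerms));
    }

    // Score of a single-term query against one document (coord = 1).
    static float score(float freq, long docFreq, long numDocs,
                       float termBoost, float fieldBoost, int numTerms) {
        float idf = idf(docFreq, numDocs);
        float queryWeight = idf * termBoost;
        float qNorm = queryNorm(queryWeight * queryWeight);
        return qNorm * tf(freq) * idf * idf * termBoost * lengthNorm(fieldBoost, numTerms);
    }

    public static void main(String[] args) {
        // Two hypothetical documents that both contain the term once.
        System.out.println("short field:   " + score(1, 2, 2, 1f, 1f, 4));
        System.out.println("long field:    " + score(1, 2, 2, 1f, 1f, 100));
        System.out.println("boosted field: " + score(1, 2, 2, 1f, 100f, 4));
    }
}
```

Note that for a single term the term boost cancels out against queryNorm, which is why a query-time boost only matters when a query has several clauses.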

Demonstrating the effect of a field boost on scoring:

package com.yida.framework.lucene5.score;

import java.io.IOException;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.document.Field;

import org.apache.lucene.document.Field.Store;

import org.apache.lucene.document.TextField;

import org.apache.lucene.index.DirectoryReader;

import org.apache.lucene.index.IndexReader;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.index.IndexWriterConfig;

import org.apache.lucene.index.IndexWriterConfig.OpenMode;

import org.apache.lucene.index.Term;

import org.apache.lucene.search.IndexSearcher;

import org.apache.lucene.search.Query;

import org.apache.lucene.search.ScoreDoc;

import org.apache.lucene.search.TermQuery;

import org.apache.lucene.search.TopDocs;

import org.apache.lucene.store.RAMDirectory;

import org.wltea.analyzer.lucene.IKAnalyzer;

/**

* Sets a boost on a field to influence the final score of an indexed document [the API for setting a Document boost has been dropped]

* @author Lanxiaowei

*

*/

public class FieldBootTest {

public static void main(String[] args) throws IOException {

RAMDirectory directory = new RAMDirectory();

Analyzer analyzer = new IKAnalyzer();

IndexWriterConfig config = new IndexWriterConfig(analyzer);

config.setOpenMode(OpenMode.CREATE_OR_APPEND);

IndexWriter writer = new IndexWriter(directory, config);

Document doc1 = new Document();

Field f1 = new TextField("title", "Java, hello world!",Store.YES);

doc1.add(f1);

writer.addDocument(doc1);

Document doc2 = new Document();

Field f2 = new TextField("title", "Java ,I like it.",Store.YES);

// Boost the title field of the second document

f2.setBoost(100);

doc2.add(f2);

writer.addDocument(doc2);

writer.close();

IndexReader reader = DirectoryReader.open(directory);

IndexSearcher searcher = new IndexSearcher(reader);

Query query = new TermQuery(new Term("title","java"));

TopDocs topDocs = searcher.search(query, Integer.MAX_VALUE);

ScoreDoc[] docs = topDocs.scoreDocs;

if(null == docs || docs.length == 0) {

System.out.println("No results for this query.");

return;

}

for (ScoreDoc scoreDoc : docs) {

int docID = scoreDoc.doc;

float score = scoreDoc.score;

Document document = searcher.doc(docID);

String title = document.get("title");

System.out.println("docId:" + docID);

System.out.println("title:" + title);

System.out.println("score:" + score);

System.out.println("\n");

}

reader.close();

directory.close();

}

}

Testing the effect of field value length on scoring:

package com.yida.framework.lucene5.score;

import java.io.IOException;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.document.Field;

import org.apache.lucene.index.DirectoryReader;

import org.apache.lucene.index.IndexReader;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.index.IndexWriterConfig;

import org.apache.lucene.index.IndexWriterConfig.OpenMode;

import org.apache.lucene.index.Term;

import org.apache.lucene.search.IndexSearcher;

import org.apache.lucene.search.Query;

import org.apache.lucene.search.ScoreDoc;

import org.apache.lucene.search.TermQuery;

import org.apache.lucene.search.TopDocs;

import org.apache.lucene.store.RAMDirectory;

import org.wltea.analyzer.lucene.IKAnalyzer;

/**

* Tests the effect of field value length on scoring

* @author Lanxiaowei

*

*/

public class FileValueLengthBootTest {

public static void main(String[] args) throws IOException {

RAMDirectory directory = new RAMDirectory();

Analyzer analyzer = new IKAnalyzer();

IndexWriterConfig config = new IndexWriterConfig(analyzer);

config.setOpenMode(OpenMode.CREATE_OR_APPEND);

IndexWriter writer = new IndexWriter(directory, config);

Document doc1 = new Document();

//Field f1 = new Field("title", "Java, hello world!", Field.Store.YES, Field.Index.ANALYZED_NO_NORMS);

Field f1 = new Field("title", "Java, hello world!", Field.Store.YES, Field.Index.ANALYZED);

doc1.add(f1);

writer.addDocument(doc1);

Document doc2 = new Document();

// Field.Index.ANALYZED_NO_NORMS disables norms

//Field f2 = new Field("title", "Hello hello hello hello hello Java Java.", Field.Store.YES, Field.Index.ANALYZED_NO_NORMS);

Field f2 = new Field("title", "Hello hello hello hello hello Java Java.", Field.Store.YES, Field.Index.ANALYZED);

doc2.add(f2);

writer.addDocument(doc2);

writer.close();

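// The title of the second document has more terms than the first, so with norms enabled the second document scores lower

// With norms disabled, field length is ignored; the second document matches the term twice, so it scores higher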

IndexReader reader = DirectoryReader.open(directory);

IndexSearcher searcher = new IndexSearcher(reader);

Query query = new TermQuery(new Term("title","java"));

TopDocs topDocs = searcher.search(query, Integer.MAX_VALUE);

ScoreDoc[] docs = topDocs.scoreDocs;

if(null == docs || docs.length == 0) {

System.out.println("No results for this query.");

return;

}

for (ScoreDoc scoreDoc : docs) {

int docID = scoreDoc.doc;

float score = scoreDoc.score;

Document document = searcher.doc(docID);

String title = document.get("title");

System.out.println("docId:" + docID);

System.out.println("title:" + title);

System.out.println("score:" + score);

System.out.println("\n");

}

reader.close();

directory.close();

}

}

The effect of a Term boost on scoring:

package com.yida.framework.lucene5.score;

import java.io.IOException;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.document.Field;

import org.apache.lucene.document.Field.Store;

import org.apache.lucene.document.TextField;

import org.apache.lucene.index.DirectoryReader;

import org.apache.lucene.index.IndexReader;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.index.IndexWriterConfig;

import org.apache.lucene.index.IndexWriterConfig.OpenMode;

import org.apache.lucene.queryparser.classic.ParseException;

import org.apache.lucene.queryparser.classic.QueryParser;

import org.apache.lucene.search.IndexSearcher;

import org.apache.lucene.search.Query;

import org.apache.lucene.search.ScoreDoc;

import org.apache.lucene.search.TopDocs;

import org.apache.lucene.store.RAMDirectory;

import org.wltea.analyzer.lucene.IKAnalyzer;

/**

* Tests the effect of a Term boost on scoring

* @author Lanxiaowei

*

*/

public class QueryBootTest {

public static void main(String[] args) throws IOException, ParseException {

RAMDirectory directory = new RAMDirectory();

Analyzer analyzer = new IKAnalyzer();

IndexWriterConfig config = new IndexWriterConfig(analyzer);

config.setOpenMode(OpenMode.CREATE_OR_APPEND);

IndexWriter writer = new IndexWriter(directory, config);

Document doc1 = new Document();

Field f1 = new TextField("title", "Java, hello hello!",Store.YES);

doc1.add(f1);

writer.addDocument(doc1);

Document doc2 = new Document();

Field f2 = new TextField("title", "Python Python Python hello.",Store.YES);

doc2.add(f2);

writer.addDocument(doc2);

writer.close();

IndexReader reader = DirectoryReader.open(directory);

IndexSearcher searcher = new IndexSearcher(reader);

QueryParser parser = new QueryParser("title",analyzer);

//Query query = parser.parse("java hello");

// Without a boost, Python occurs 3 times, so document 2 scores higher

// Boost the java keyword so that document 1 scores higher than document 2

Query query = parser.parse("java^100 Python");

TopDocs topDocs = searcher.search(query, Integer.MAX_VALUE);

ScoreDoc[] docs = topDocs.scoreDocs;

if(null == docs || docs.length == 0) {

System.out.println("No results for this query.");

return;

}

for (ScoreDoc scoreDoc : docs) {

int docID = scoreDoc.doc;

float score = scoreDoc.score;

Document document = searcher.doc(docID);

String title = document.get("title");

System.out.println("docId:" + docID);

System.out.println("title:" + title);

System.out.println("score:" + score);

System.out.println("\n");

}

reader.close();

directory.close();

}

}

Write a custom Similarity that overrides the sub-functions above for finer-grained control over scoring:

package com.yida.framework.lucene5.score;

import org.apache.lucene.search.similarities.DefaultSimilarity;

public class CustomSimilarity extends DefaultSimilarity {

@Override

public float idf(long docFreq, long numDocs) {

// docFreq is the number of documents that contain the Term; numDocs is the total number of documents

System.out.println("docFreq:" + docFreq);

System.out.println("numDocs:" + numDocs);

return super.idf(docFreq, numDocs);

}

}

Just extend DefaultSimilarity and override the relevant methods. Here is the DefaultSimilarity source:

package org.apache.lucene.search.similarities;

/*

* Licensed to the Apache Software Foundation (ASF) under one or more

* contributor license agreements. See the NOTICE file distributed with

* this work for additional information regarding copyright ownership.

* The ASF licenses this file to You under the Apache License, Version 2.0

* (the "License"); you may not use this file except in compliance with

* the License. You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

import org.apache.lucene.index.FieldInvertState;

import org.apache.lucene.util.BytesRef;

import org.apache.lucene.util.SmallFloat;

/**

* Expert: Default scoring implementation which {@link #encodeNormValue(float)

* encodes} norm values as a single byte before being stored. At search time,

* the norm byte value is read from the index

* {@link org.apache.lucene.store.Directory directory} and

* {@link #decodeNormValue(long) decoded} back to a float <i>norm</i> value.

* This encoding/decoding, while reducing index size, comes with the price of

* precision loss - it is not guaranteed that <i>decode(encode(x)) = x</i>. For

* instance, <i>decode(encode(0.89)) = 0.75</i>.

* <p>

* Compression of norm values to a single byte saves memory at search time,

* because once a field is referenced at search time, its norms - for all

* documents - are maintained in memory.

* <p>

* The rationale supporting such lossy compression of norm values is that given

* the difficulty (and inaccuracy) of users to express their true information

* need by a query, only big differences matter. <br>

* <br>

* Last, note that search time is too late to modify this <i>norm</i> part of

* scoring, e.g. by using a different {@link Similarity} for search.

*/

public class DefaultSimilarity extends TFIDFSimilarity {

/** Cache of decoded bytes. */

private static final float[] NORM_TABLE = new float[256];

static {

for (int i = 0; i < 256; i++) {

NORM_TABLE[i] = SmallFloat.byte315ToFloat((byte)i);

}

}

/** Sole constructor: parameter-free */

public DefaultSimilarity() {}

/** Implemented as <code>overlap / maxOverlap</code>. */

@Override

public float coord(int overlap, int maxOverlap) {

return overlap / (float)maxOverlap;

}

/** Implemented as <code>1/sqrt(sumOfSquaredWeights)</code>. */

@Override

public float queryNorm(float sumOfSquaredWeights) {

return (float)(1.0 / Math.sqrt(sumOfSquaredWeights));

}

/**

* Encodes a normalization factor for storage in an index.

* <p>

* The encoding uses a three-bit mantissa, a five-bit exponent, and the

* zero-exponent point at 15, thus representing values from around 7x10^9 to

* 2x10^-9 with about one significant decimal digit of accuracy. Zero is also

* represented. Negative numbers are rounded up to zero. Values too large to

* represent are rounded down to the largest representable value. Positive

* values too small to represent are rounded up to the smallest positive

* representable value.

*

* @see org.apache.lucene.document.Field#setBoost(float)

* @see org.apache.lucene.util.SmallFloat

*/

@Override

public final long encodeNormValue(float f) {

return SmallFloat.floatToByte315(f);

}

/**

* Decodes the norm value, assuming it is a single byte.

*

* @see #encodeNormValue(float)

*/

@Override

public final float decodeNormValue(long norm) {

return NORM_TABLE[(int) (norm & 0xFF)]; // & 0xFF maps negative bytes to positive above 127

}

/** Implemented as

* <code>state.getBoost()*lengthNorm(numTerms)</code>, where

* <code>numTerms</code> is {@link FieldInvertState#getLength()} if {@link

* #setDiscountOverlaps} is false, else it's {@link

* FieldInvertState#getLength()} - {@link

* FieldInvertState#getNumOverlap()}.

*

* @lucene.experimental */

@Override

public float lengthNorm(FieldInvertState state) {

final int numTerms;

if (discountOverlaps)

numTerms = state.getLength() - state.getNumOverlap();

else

numTerms = state.getLength();

return state.getBoost() * ((float) (1.0 / Math.sqrt(numTerms)));

}

/** Implemented as <code>sqrt(freq)</code>. */

@Override

public float tf(float freq) {

return (float)Math.sqrt(freq);

}

/** Implemented as <code>1 / (distance + 1)</code>. */

@Override

public float sloppyFreq(int distance) {

return 1.0f / (distance + 1);

}

/** The default implementation returns <code>1</code> */

@Override

public float scorePayload(int doc, int start, int end, BytesRef payload) {

return 1;

}

/** Implemented as <code>log(numDocs/(docFreq+1)) + 1</code>. */

@Override

public float idf(long docFreq, long numDocs) {

return (float)(Math.log(numDocs/(double)(docFreq+1)) + 1.0);

}

/**

* True if overlap tokens (tokens with a position of increment of zero) are

* discounted from the document's length.

*/

protected boolean discountOverlaps = true;

/** Determines whether overlap tokens (Tokens with

* 0 position increment) are ignored when computing

* norm. By default this is true, meaning overlap

* tokens do not count when computing norms.

*

* @lucene.experimental

*

* @see #computeNorm

*/

public void setDiscountOverlaps(boolean v) {

discountOverlaps = v;

}

/**

* Returns true if overlap tokens are discounted from the document's length.

* @see #setDiscountOverlaps

*/

public boolean getDiscountOverlaps() {

return discountOverlaps;

}

@Override

public String toString() {

return "DefaultSimilarity";

}

}

Having seen idf, tf, coord, and the other methods in there, you should now know what they do. Just override them with your own calculations.

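If you look closely, you'll notice there is also a scorePayload method, which computes a score from the payload. So what is a payload? Payloads have actually been around since the Lucene 2.x days. Like position information and position increments, a payload attaches extra information to the terms in a Document to enable special features; positions, for example, are what make PhraseQuery and keyword highlighting possible. Payloads allow more flexible indexing techniques. To make payloads easier to picture, take a careful look at this diagram: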

Say you have these two documents:

what is your trouble?

What the fucking up?

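The What in the second document is bold, and you want to give it more weight. You could do it like this:

<b>What</b> the fucking up?

At analysis time, treat <b>What</b> as a single Term, check whether it carries the <b></b> markers, and store that bold flag in the payload. Note that to keep <b>XXXXXX</b> together as one unit you need a custom analyzer, because the < and > characters are otherwise stripped. You don't have to wrap the Term in <b></b> to attach extra information. You could also use term{1.2}, for example java{1.2}, to mean "multiply this Term's weight by 1.2". This is only an example; the key is getting the analyzer to emit term{1.2} as a single unit, since { and } are stripped by default. Tokenization is the real work here.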
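For the term{1.2} idea, the step after tokenization is splitting the token into the bare term and its boost. Here is one possible sketch in plain Java. The class `BoostedTokenDemo`, its method names, and the exact syntax accepted by the regular expression are all assumptions for illustration; in a real setup this logic would sit inside a TokenFilter like the one in the example further down.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class BoostedTokenDemo {
    // Matches tokens such as "java{1.2}": group 1 is the term, group 2 the boost.
    private static final Pattern BOOSTED =
            Pattern.compile("^(.+)\\{(\\d+(?:\\.\\d+)?)\\}$");

    // Returns the term with any {boost} suffix removed.
    static String term(String token) {
        Matcher m = BOOSTED.matcher(token);
        return m.matches() ? m.group(1) : token;
    }

    // Returns the boost from the {boost} suffix, or 1.0 when there is none.
    static float boost(String token) {
        Matcher m = BOOSTED.matcher(token);
        return m.matches() ? Float.parseFloat(m.group(2)) : 1.0f;
    }

    public static void main(String[] args) {
        for (String token : new String[] {"java{1.2}", "hello"}) {
            System.out.println(term(token) + " boost=" + boost(token));
        }
    }
}
```

The extracted boost could then be written into the payload instead of a simple bold flag.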

Here is a simple payload example, straight to the code:

package com.yida.framework.lucene5.score.payload;

import java.io.IOException;

import org.apache.lucene.analysis.TokenFilter;

import org.apache.lucene.analysis.TokenStream;

import org.apache.lucene.analysis.tokenattributes.CharTermAttribute;

import org.apache.lucene.analysis.tokenattributes.PayloadAttribute;

import org.apache.lucene.util.BytesRef;

import com.yida.framework.lucene5.util.Tools;

public class BoldFilter extends TokenFilter {

public static int IS_NOT_BOLD = 0;

public static int IS_BOLD = 1;

private CharTermAttribute termAtt;

private PayloadAttribute payloadAtt;

protected BoldFilter(TokenStream input) {

super(input);

termAtt = addAttribute(CharTermAttribute.class);

payloadAtt = addAttribute(PayloadAttribute.class);

}

@Override

public boolean incrementToken() throws IOException {

if (input.incrementToken()) {

final char[] buffer = termAtt.buffer();

final int length = termAtt.length();

String tokenstring = new String(buffer, 0, length).toLowerCase();

//System.out.println("token:" + tokenstring);

if (tokenstring.startsWith("<b>") && tokenstring.endsWith("</b>")) {

tokenstring = tokenstring.replace("<b>", "");

tokenstring = tokenstring.replace("</b>", "");

termAtt.copyBuffer(tokenstring.toCharArray(), 0, tokenstring.length());

// Set the payload during tokenization

payloadAtt.setPayload(new BytesRef(Tools.int2bytes(IS_BOLD)));

} else {

payloadAtt.setPayload(new BytesRef(Tools.int2bytes(IS_NOT_BOLD)));

}

return true;

} else

return false;

}

}

package com.yida.framework.lucene5.score.payload;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.analysis.TokenStream;

import org.apache.lucene.analysis.Tokenizer;

import org.apache.lucene.analysis.core.LowerCaseFilter;

import org.apache.lucene.analysis.core.StopAnalyzer;

import org.apache.lucene.analysis.core.StopFilter;

import org.apache.lucene.analysis.core.WhitespaceTokenizer;

public class BoldAnalyzer extends Analyzer {

@Override

protected TokenStreamComponents createComponents(String fieldName) {

Tokenizer tokenizer = new WhitespaceTokenizer();

TokenStream tokenStream = new BoldFilter(tokenizer);

tokenStream = new LowerCaseFilter(tokenStream);

tokenStream = new StopFilter(tokenStream,StopAnalyzer.ENGLISH_STOP_WORDS_SET);

return new TokenStreamComponents(tokenizer, tokenStream);

}

}

package com.yida.framework.lucene5.score.payload;

import org.apache.lucene.search.similarities.DefaultSimilarity;

import org.apache.lucene.util.BytesRef;

import com.yida.framework.lucene5.util.Tools;

public class PayloadSimilarity extends DefaultSimilarity {

@Override

public float scorePayload(int doc, int start, int end, BytesRef payload) {

int isbold = Tools.bytes2int(payload.bytes);

if (isbold == BoldFilter.IS_BOLD) {

return 100f;

}

return 1f;

}

}

package com.yida.framework.lucene5.score.payload;

import java.io.IOException;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.document.Field;

import org.apache.lucene.document.Field.Store;

import org.apache.lucene.document.TextField;

import org.apache.lucene.index.DirectoryReader;

import org.apache.lucene.index.IndexReader;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.index.IndexWriterConfig;

import org.apache.lucene.index.IndexWriterConfig.OpenMode;

import org.apache.lucene.index.Term;

import org.apache.lucene.search.IndexSearcher;

import org.apache.lucene.search.Query;

import org.apache.lucene.search.ScoreDoc;

import org.apache.lucene.search.TopDocs;

import org.apache.lucene.search.payloads.MaxPayloadFunction;

import org.apache.lucene.search.payloads.PayloadNearQuery;

import org.apache.lucene.search.spans.SpanQuery;

import org.apache.lucene.search.spans.SpanTermQuery;

import org.apache.lucene.store.RAMDirectory;

/**

* Payload test

* @author Lanxiaowei

*

*/

public class PayloadTest {

public static void main(String[] args) throws IOException {

RAMDirectory directory = new RAMDirectory();

//Analyzer analyzer = new IKAnalyzer();

Analyzer analyzer = new BoldAnalyzer();

IndexWriterConfig config = new IndexWriterConfig(analyzer);

config.setOpenMode(OpenMode.CREATE_OR_APPEND);

IndexWriter writer = new IndexWriter(directory, config);

Document doc1 = new Document();

Field f1 = new TextField("title", "Java <B>hello</B> world",Store.YES);

doc1.add(f1);

writer.addDocument(doc1);

Document doc2 = new Document();

Field f2 = new TextField("title", "Java ,I like it.",Store.YES);

doc2.add(f2);

writer.addDocument(doc2);

writer.close();

IndexReader reader = DirectoryReader.open(directory);

IndexSearcher searcher = new IndexSearcher(reader);

searcher.setSimilarity(new PayloadSimilarity());

SpanQuery queryStart = new SpanTermQuery(new Term("title","java"));

SpanQuery queryEnd = new SpanTermQuery(new Term("title","hello"));

Query query = new PayloadNearQuery(new SpanQuery[] {

queryStart,queryEnd},2,true,new MaxPayloadFunction());

TopDocs topDocs = searcher.search(query, Integer.MAX_VALUE);

ScoreDoc[] docs = topDocs.scoreDocs;

if(null == docs || docs.length == 0) {

System.out.println("No results for this query.");

return;

}

for (ScoreDoc scoreDoc : docs) {

int docID = scoreDoc.doc;

float score = scoreDoc.score;

Document document = searcher.doc(docID);

String title = document.get("title");

System.out.println("docId:" + docID);

System.out.println("title:" + title);

System.out.println("score:" + score);

System.out.println("\n");

}

reader.close();

directory.close();

}

}
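The BoldFilter and PayloadSimilarity classes above rely on a Tools helper (`int2bytes` and `bytes2int`) whose source isn't shown here. The sketch below is one way such a helper might look, assuming a 4-byte big-endian encoding; it is a guess, not the actual Tools class. The decoder takes an explicit offset because a BytesRef exposes `bytes`, `offset`, and `length`, and the payload does not necessarily start at index 0 of the backing array.

```java
import java.nio.ByteBuffer;

public class PayloadBytesDemo {
    // Encodes an int as 4 bytes (big-endian, the ByteBuffer default).
    static byte[] int2bytes(int value) {
        return ByteBuffer.allocate(4).putInt(value).array();
    }

    // Decodes 4 bytes starting at the given offset back into an int.
    static int bytes2int(byte[] bytes, int offset) {
        return ByteBuffer.wrap(bytes, offset, 4).getInt();
    }

    public static void main(String[] args) {
        byte[] encoded = int2bytes(1); // e.g. a hypothetical IS_BOLD flag
        System.out.println("decoded: " + bytes2int(encoded, 0));

        // The same value embedded in a larger array, read from offset 2.
        byte[] shared = {9, 9, 0, 0, 0, 1};
        System.out.println("decoded at offset 2: " + bytes2int(shared, 2));
    }
}
```

With a helper like this, scorePayload would call something like `bytes2int(payload.bytes, payload.offset)` rather than reading from the start of the array.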

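I'll upload all of the example code above as an attachment below. That's all I have to say about Lucene's scoring mechanism; the key is to understand the scoring formula. Of course, if you want to throw out Lucene's default scoring formula entirely and implement your own, you will probably need to implement your own Query, Weight, Scorer, and Similarity.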
