C++ AMP: 哈夫变换求平面参数的GPU实现

终于有时间把这篇博客给补上了,理论知识我会在另外一片数学知识中讲到。

写了一个静态的方法,GPU实现的方便并不难(比起来DirectCompute里的复杂配置,amp的确提供了异常方面的接口)。

具体代码见下,我会做一些讲解。

写了一个静态的方法,GPU实现的方便并不难(比起来DirectCompute里的复杂配置,amp的确提供了异常方面的接口)。

具体代码见下,我会做一些讲解。

static void HoughFitPlanGPU(float* pXData, float* pYData, float* pZData, int count, PlanParameters& planParam)

{

concurrency::array_view<const float, 1> xdata(count, pXData);

concurrency::array_view<const float, 1> ydata(count, pYData);

concurrency::array_view<const float, 1> zdata(count, pZData);

float miminor = 0.08f;

int aN = planParam.aN, bN = planParam.bN, cN = planParam.cN, dN = planParam.dN;

// for the special demand

float* aParam = new float[aN];

CreateParam(aParam, aN, -PI_FIT/4.0f, PI_FIT/4.0f);

float* bParam = new float[bN];

CreateParam(bParam, bN, -PI_FIT/4.0f, PI_FIT/4.0f);

//std::vector<float> aParam(aN, 1.0);

//CreateParam(aParam, aN, -PI_FIT/4.0f, PI_FIT/4.0f);

//std::vector<float> bParam(bN, 0.5);

//CreateParam(bParam, bN, -PI_FIT/4.0f, PI_FIT/4.0f);

float* dParam = new float[dN];

for ( int i = 0; i < dN; i++)

dParam[i] = (float)(i-13);

concurrency::array_view<float,1> vaParam(aN, aParam);

concurrency::array_view<float,1> vbParam(bN, bParam);

concurrency::array_view<float,1> vdParam(dN, dParam);

std::vector<float> voteRes( aN*bN*dN, 0);

concurrency::array_view<float, 3> voteData(aN,bN,dN,voteRes); // 特别注意,不能是int型,否则会把shader设置成为int还是怎么,影响到参数空间的值dParam也会变成int值

voteData.discard_data();

concurrency::parallel_for_each(

voteData.extent,

[=](concurrency::index<3> idx) restrict(amp)

{

float a = vaParam(idx[0]);

float b = vbParam(idx[1]);

float c = 1.0f;

float d = vdParam[idx[2]];

voteData[idx] = 0;

for ( int m = 0; m < count; m++)

{

float value = a * xdata[m] + b * ydata[m] + zdata[m] + d;

value = (value > 0)? value: -value;

if(value < miminor)

//voteData[idx] += d;

voteData[idx]++;

}

}

);

float max = 0;

for ( int i = 0; i < aN; i++)

{

for ( int j = 0; j< bN; j++)

{

for (int k = 0; k < dN; k++)

{

if(voteData(i,j,k) > max)

{

max = voteData(i,j,k);

planParam.a = aParam[i];

planParam.b = bParam[j];

planParam.c = 1.0f;

planParam.d = dParam[k];

}

}

}

}

max = max;

std::cout << max << std::endl;

}

在C++ AMP中主要有array和array_view这两种数据容器。这两者主要的区别在于array类型的数据在创建时会在GPU显存上拥有一个备份,在GPU对该数据进行完运算之后,开发者必须手动将数据拷贝回CPU。与之相比,array_view其实是一个数据结构的封装,只有在它指向的数据被GPU调用时才会被拷贝到GPU上进行相应的计算。从下例中我们看到,声明array_view数据时需要提供两个模板参数:array_view元素的类型和数据结构的纬度。因为pXData,pYData和pZData都是一维数组,因此我们在声明时传入const float和1两个参数。其他所遇到的情况以此类推即可。【说明摘自程序员杂志第四期】

参数空间的设计可以根据自己的需求而定,因为在我的试验里,发现在z轴上的分布为40 - 60cm,所以我考虑将z的参数定为单位值,参数空间为a、b、d(点到平面的距离),a、b为-1到1的等差采样,采样率可以根据精度需求进行手动指定(优化选择当然更好,如果要把这个功能集成到现在的项目里可以会考虑做这一步)。

为了和GPU进行检查和对比,实现了CPU进行平面检测,在鉴定GPU效果良好后,对其进行改进,采用了迭代的方法提高检测进度。

static void HoughFitPlanCPU(float* pXData, float* pYData, float* pZData, int count, PlanParameters& planParam)

{

float miminor = 0.01f;

int aN = planParam.aN, bN = planParam.bN, cN = planParam.cN, dN = planParam.dN;

// for the special demand

float band = 2.0f;

int iter = 6;

float* aParam = new float[aN];

float* bParam = new float[bN];

float* dParam = new float[dN];

for ( int i = 0; i < dN; i++)

dParam[i] = (float)(i-13);

while ( iter-- >0)

{

band = band / 2.0f;

CreateParam(aParam, aN, planParam.a - band, planParam.a + band);

CreateParam(bParam, bN, planParam.b - band, planParam.b + band);

//std::ofstream outfile("data3.txt");

float miniset = 10000.0f, dx, dy, dz;

std::vector<float> voteRes( aN*bN*dN, 0);

//int*** vote = new int**[dN];

for ( int i = 0; i < aN; i++)

{

//vote[i] = new int*[bN];

for ( int j = 0; j < bN; j++)

{

//vote[i][j] = new int[dN];

for (int k = 0; k < dN; k++)

{

long idx = i*bN*dN + j*dN + k;

float a = aParam[i];

float b = bParam[j];

float c = 1.0f;

float d = dParam[k];

float normal = (a*a + b*b + c*c + d*d);

//vote[i][j][k] = 0;

voteRes[idx] = 0;

for (int m = 0; m < count; m++)

{

float x = pXData[m], y = pYData[m], z = pZData[m];

float value = a*x + b*y + c*z + d;

value = (value >0)?value:(-value);

//value = value*value / 10000.0f;// / (x*x + y*y + z*z)/ normal;

if( value < miminor)

//voteRes[idx] += d;

voteRes[idx]++;

//vote[i][j][k]++;

}

//outfile << vote[i][j][k] <<"\t";

}

//outfile << std::endl;

}

}

//outfile.close();

float max = -0.01;

for ( int i = 0; i < aN; i++)

{

for ( int j = 0; j < bN; j++)

{

for (int k = 0; k < dN; k++)

{

long idx = i*bN*dN + j*dN + k;

if ( voteRes[idx] > max) //vote[i][j][k] > max)

{

max = voteRes[idx]; //vote[i][j][k];

planParam.a = aParam[i];

planParam.b = bParam[j];

planParam.d = dParam[k];

}

}

}

}

std::cout << max << std::endl;

}

}测试代码:

int _tmain(int argc, _TCHAR* argv[])

{

// 0.457x + 0.787y + z - 9 = 0;

int count = 80, rand_count = 10;

float* XData = new float[count];

float* YData = new float[count];

float* ZData = new float[count];

for ( int i = 0; i<rand_count; i++)

{

ZData[count-1-i] = (float)rand()/1000.0f;

XData[count-1-i] = (float)rand()/1000.0f;

YData[count-1-i] = (float)rand()/1000.0f;

}

for ( int i = 0; i < count-rand_count; i++)

{

ZData[i] = (float)(i+20);

XData[i] = (float)(i*i)/100.0f;

YData[i] = (9.0f - ZData[i] - 0.457f*XData[i] ) / 0.787f;

}

PlanParameters planParam;

planParam.c = 1.0f;

planParam.cN = 1;

planParam.aN = 500;

planParam.bN = 500;

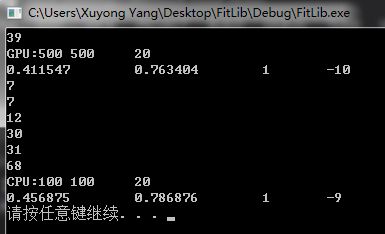

CFitLib::HoughFitPlanGPU(XData, YData, ZData,count, planParam);

std::cout << "GPU:" << planParam.aN << "\t" <<planParam.bN<< "\t" <<planParam.dN << std::endl;

std::cout << planParam.a << "\t" << planParam.b << "\t" << planParam.c << "\t" << planParam.d << std::endl;

planParam.a = 0.0f;

planParam.b = 0.0f;

planParam.aN = 100;

planParam.bN = 100;

CFitLib::HoughFitPlanCPU(XData, YData, ZData, count, planParam);

std::cout << "CPU:" << planParam.aN << "\t" <<planParam.bN<< "\t" <<planParam.dN << std::endl;

std::cout << planParam.a << "\t" << planParam.b << "\t" << planParam.c << "\t" << planParam.d << std::endl;

std::cout << (0.061*178 + 7.41)*0.65 <<std::endl;

system("pause");

return 0;

}

CPU递归迭代能找到更好的逼近结果,可考虑换成GPU进行迭代在降低算法复杂度。

【由于这段时间太多,写得很粗糙,请多多见谅】

http://www.cnblogs.com/yxy8023ustc/archive/2012/12/09/2809404.html