caffe层解析之softmaxwithloss层

理论

caffe中的softmaxWithLoss其实是:

softmaxWithLoss = Multinomial Logistic Loss Layer + Softmax Layer

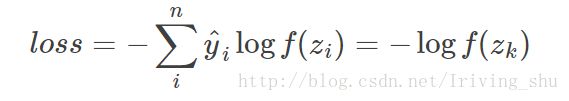

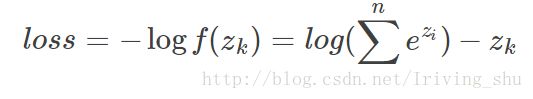

其核心公式为:

其中,其中y^为标签值,k为输入图像标签所对应的的神经元。m为输出的最大值,主要是考虑数值稳定性。

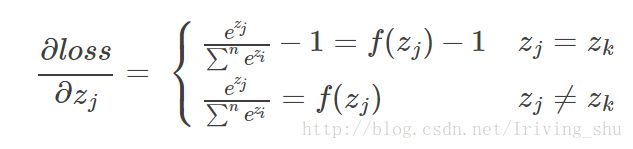

反向传播时:

对输入的zj进行求导得:

Caffe中使用

首先在Caffe中使用如下:

1 layer {

2 name: "loss"

3 type: "SoftmaxWithLoss"

4 bottom: "fc8"

5 bottom: "label"

6 top: "loss"

7 }caffe中softmaxloss 层的参数如下:

// Message that stores parameters shared by loss layers

message LossParameter {

// If specified, ignore instances with the given label.

//忽略那些label

optional int32 ignore_label = 1;

// How to normalize the loss for loss layers that aggregate across batches,

// spatial dimensions, or other dimensions. Currently only implemented in

// SoftmaxWithLoss and SigmoidCrossEntropyLoss layers.

enum NormalizationMode {

// Divide by the number of examples in the batch times spatial dimensions.

// Outputs that receive the ignore label will NOT be ignored in computing

// the normalization factor.

//一次前向计算的loss除以所有的label数

FULL = 0;

// Divide by the total number of output locations that do not take the

// ignore_label. If ignore_label is not set, this behaves like FULL.

//一次前向计算的loss除以所有的可用的label数

VALID = 1;

// Divide by the batch size.

//除以batchsize大小,默认为batchsize大小。

BATCH_SIZE = 2;

// Do not normalize the loss.

NONE = 3;

}

// For historical reasons, the default normalization for

// SigmoidCrossEntropyLoss is BATCH_SIZE and *not* VALID.

optional NormalizationMode normalization = 3 [default = VALID];

// Deprecated. Ignored if normalization is specified. If normalization

// is not specified, then setting this to false will be equivalent to

// normalization = BATCH_SIZE to be consistent with previous behavior.

//如果normalize==false,则normalization=BATCH_SIZE

//如果normalize==true,则normalization=Valid

optional bool normalize = 2;

}

首先来看一下softmaxwithloss的头文件:

#ifndef CAFFE_SOFTMAX_WITH_LOSS_LAYER_HPP_

#define CAFFE_SOFTMAX_WITH_LOSS_LAYER_HPP_

#include {

public:

/**

* @param param provides LossParameter loss_param, with options:

* - ignore_label (optional)

* Specify a label value that should be ignored when computing the loss.

* - normalize (optional, default true)

* If true, the loss is normalized by the number of (nonignored) labels

* present; otherwise the loss is simply summed over spatial locations.

*/

explicit SoftmaxWithLossLayer(const LayerParameter& param)

: LossLayer(param) {}

virtual void LayerSetUp(const vector > softmax_layer_;

/// prob stores the output probability predictions from the SoftmaxLayer.

//存储经过softmax layer输出的概率

Blob prob_;

/// bottom vector holder used in call to the underlying

//softmax层前向函数的bottom

SoftmaxLayer::Forward

vector class_weight_;

Blob counts_;

Blob loss_;

/// How to normalize the output loss.

//归一化loss类型

LossParameter_NormalizationMode normalization_;

bool has_cutting_point_;

Dtype cutting_point_;

std::string normalize_type_;

int softmax_axis_, outer_num_, inner_num_;

};

} // namespace caffe

#endif // CAFFE_SOFTMAX_WITH_LOSS_LAYER_HPP_

具体函数实现

#include ::LayerSetUp(

const vector::LayerSetUp(bottom, top);

normalize_type_ =

this->layer_param_.softmax_param().normalize_type();

//归一化为softmax

if (normalize_type_ == "Softmax") {

LayerParameter softmax_param(this->layer_param_);

softmax_param.set_type("Softmax");

softmax_layer_ = LayerRegistry::CreateLayer(softmax_param);

softmax_bottom_vec_.clear();

softmax_bottom_vec_.push_back(bottom[0]);

softmax_top_vec_.clear();

softmax_top_vec_.push_back(&prob_);

softmax_layer_->SetUp(softmax_bottom_vec_, softmax_top_vec_);

}

else if(normalize_type_ == "L2" || normalize_type_ == "L1") {

LayerParameter normalize_param(this->layer_param_);

normalize_param.set_type("Normalize");

softmax_layer_ = LayerRegistry::CreateLayer(normalize_param);

softmax_bottom_vec_.clear();

softmax_bottom_vec_.push_back(bottom[0]);

softmax_top_vec_.clear();

softmax_top_vec_.push_back(&prob_);

softmax_layer_->SetUp(softmax_bottom_vec_, softmax_top_vec_);

}

else {

NOT_IMPLEMENTED;

}

has_ignore_label_ =

this->layer_param_.loss_param().has_ignore_label();

if (has_ignore_label_) {

ignore_label_ = this->layer_param_.loss_param().ignore_label();

}

has_hard_ratio_ =

this->layer_param_.softmax_param().has_hard_ratio();

if (has_hard_ratio_) {

hard_ratio_ = this->layer_param_.softmax_param().hard_ratio();

CHECK_GE(hard_ratio_, 0);

CHECK_LE(hard_ratio_, 1);

}

has_cutting_point_ =

this->layer_param_.softmax_param().has_cutting_point();

if (has_cutting_point_) {

cutting_point_ = this->layer_param_.softmax_param().cutting_point();

CHECK_GE(cutting_point_, 0);

CHECK_LE(cutting_point_, 1);

}

has_hard_mining_label_ = this->layer_param_.softmax_param().has_hard_mining_label();

if (has_hard_mining_label_) {

hard_mining_label_ = this->layer_param_.softmax_param().hard_mining_label();

}

has_class_weight_ = (this->layer_param_.softmax_param().class_weight_size() != 0);

softmax_axis_ =

bottom[0]->CanonicalAxisIndex(this->layer_param_.softmax_param().axis());

if (has_class_weight_) {

class_weight_.Reshape({ bottom[0]->shape(softmax_axis_) });

CHECK_EQ(this->layer_param_.softmax_param().class_weight().size(), bottom[0]->shape(softmax_axis_));

for (int i = 0; i < bottom[0]->shape(softmax_axis_); i++) {

class_weight_.mutable_cpu_data()[i] = (Dtype)this->layer_param_.softmax_param().class_weight(i);

}

}

else {

if (bottom.size() == 3) {

class_weight_.Reshape({ bottom[0]->shape(softmax_axis_) });

for (int i = 0; i < bottom[0]->shape(softmax_axis_); i++) {

class_weight_.mutable_cpu_data()[i] = (Dtype)1.0;

}

}

}

if (!this->layer_param_.loss_param().has_normalization() &&

this->layer_param_.loss_param().has_normalize()) {

normalization_ = this->layer_param_.loss_param().normalize() ?

LossParameter_NormalizationMode_VALID :

LossParameter_NormalizationMode_BATCH_SIZE;

} else {

normalization_ = this->layer_param_.loss_param().normalization();

}

}

template <typename Dtype>

void SoftmaxWithLossLayer::Reshape(

const vector::Reshape(bottom, top);

softmax_layer_->Reshape(softmax_bottom_vec_, softmax_top_vec_);

softmax_axis_ =

bottom[0]->CanonicalAxisIndex(this->layer_param_.softmax_param().axis());

outer_num_ = bottom[0]->count(0, softmax_axis_);

inner_num_ = bottom[0]->count(softmax_axis_ + 1);

counts_.Reshape({ outer_num_, inner_num_ });

loss_.Reshape({ outer_num_, inner_num_ });

CHECK_EQ(outer_num_ * inner_num_, bottom[1]->count())

<< "Number of labels must match number of predictions; "

<< "e.g., if softmax axis == 1 and prediction shape is (N, C, H, W), "

<< "label count (number of labels) must be N*H*W, "

<< "with integer values in {0, 1, ..., C-1}.";

if (bottom.size() == 3) {

CHECK_EQ(outer_num_ * inner_num_, bottom[2]->count())

<< "Number of loss weights must match number of label.";

}

if (top.size() >= 2) {

// softmax output

top[1]->ReshapeLike(*bottom[0]);

}

if (has_class_weight_) {

CHECK_EQ(class_weight_.count(), bottom[0]->shape(1));

}

}

template <typename Dtype>

Dtype SoftmaxWithLossLayer::get_normalizer(

LossParameter_NormalizationMode normalization_mode, Dtype valid_count) {

Dtype normalizer;

switch (normalization_mode) {

case LossParameter_NormalizationMode_FULL:

normalizer = Dtype(outer_num_ * inner_num_);

break;

case LossParameter_NormalizationMode_VALID:

if (valid_count == -1) {

normalizer = Dtype(outer_num_ * inner_num_);

} else {

normalizer = valid_count;

}

break;

case LossParameter_NormalizationMode_BATCH_SIZE:

normalizer = Dtype(outer_num_);

break;

case LossParameter_NormalizationMode_NONE:

normalizer = Dtype(1);

break;

default:

LOG(FATAL) << "Unknown normalization mode: "

<< LossParameter_NormalizationMode_Name(normalization_mode);

}

// Some users will have no labels for some examples in order to 'turn off' a

// particular loss in a multi-task setup. The max prevents NaNs in that case.

return std::max(Dtype(1.0), normalizer);

}

//前向中主要利用softmax层输出每一个样本的对应的所有类别概率。如输入一只狗,则输出狗的概率,猫的概率,猴的概率。[0.8,0.1,0.1]

template <typename Dtype>

void SoftmaxWithLossLayer::Forward_cpu(

const vector::Backward_cpu(const vector