从入门到深入卷积神经网络学习分享(关于对Pytorch的MNIST手写字母的识别)

笔者采用4种网络层次结构来实现关于MNIST手写字母识别的神经网络,从表层开始步步深入,便于理解。

四种网络分别是:

一:3层全连接网络模型(包含批处理化以及ReLU优化函数)

(layer1): Sequential(

(0): Linear(in_features=784, out_features=300, bias=True)

(1): BatchNorm1d(300, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(layer2): Sequential(

(0): Linear(in_features=300, out_features=100, bias=True)

(1): BatchNorm1d(100, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(layer3): Sequential(

(0): Linear(in_features=100, out_features=10, bias=True)

)

二:2层卷积神经网络和3层全连接输出(无批处理化有ReLU优化函数)

(此2层卷积转载来自其他作者,如侵必删)

self.conv1 = nn.Sequential( #input_size=(1*28*28)

nn.Conv2d(1, 6, 5, 1, 2), #padding=2保证输入输出尺寸相同

nn.ReLU(), #input_size=(6*28*28)

nn.MaxPool2d(kernel_size=2, stride=2),#output_size=(6*14*14)

)

self.conv2 = nn.Sequential(

nn.Conv2d(6, 16, 5),

nn.ReLU(), #input_size=(16*10*10)

nn.MaxPool2d(2, 2) #output_size=(16*5*5)

)

self.fc1 = nn.Sequential(

nn.Linear(16 * 5 * 5, 120),

nn.ReLU()

)

self.fc2 = nn.Sequential(

nn.Linear(120, 84),

nn.ReLU()

)

self.fc3 = nn.Linear(84, 10)

三:4层卷积神经网络和3层全连接输出(无批处理化有ReLU函数优化)

CNN(

(layer1): Sequential(

(0): Conv2d(1, 16, kernel_size=(3, 3), stride=(1, 1))

(1): ReLU(inplace=True)

)

(layer2): Sequential(

(0): Conv2d(16, 32, kernel_size=(3, 3), stride=(1, 1))

(1): ReLU(inplace=True)

(2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)

)

(layer3): Sequential(

(0): Conv2d(32, 64, kernel_size=(3, 3), stride=(1, 1))

(1): ReLU(inplace=True)

)

(layer4): Sequential(

(0): Conv2d(64, 128, kernel_size=(3, 3), stride=(1, 1))

(1): ReLU(inplace=True)

(2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)

)

(fc): Sequential(

(0): Linear(in_features=2048, out_features=1024, bias=True)

(1): ReLU(inplace=True)

(2): Linear(in_features=1024, out_features=128, bias=True)

(3): ReLU(inplace=True)

(4): Linear(in_features=128, out_features=10, bias=True)

)

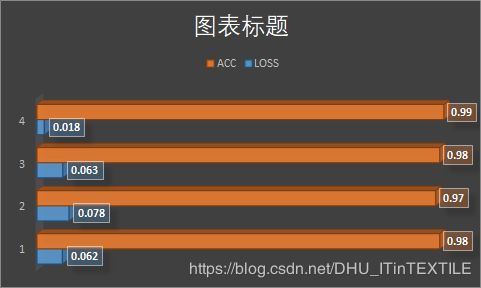

)测试集正确率明显有很大提升,函数收敛速度加快几倍,笔者会在最后附上正确率对比

四:4层卷积神经网络和3层全连接输出(有批处理化有ReLU函数优化)—最优

(layer1): Sequential(

(0): Conv2d(1, 16, kernel_size=(3, 3), stride=(1, 1))

(1): BatchNorm2d(16, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(layer2): Sequential(

(0): Conv2d(16, 32, kernel_size=(3, 3), stride=(1, 1))

(1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)

)

(layer3): Sequential(

(0): Conv2d(32, 64, kernel_size=(3, 3), stride=(1, 1))

(1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(layer4): Sequential(

(0): Conv2d(64, 128, kernel_size=(3, 3), stride=(1, 1))

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)

)

(fc): Sequential(

(0): Linear(in_features=2048, out_features=1024, bias=True)

(1): ReLU(inplace=True)

(2): Linear(in_features=1024, out_features=128, bias=True)

(3): ReLU(inplace=True)

(4): Linear(in_features=128, out_features=10, bias=True)

)上述第4种模型采用卷积层-批处理化-ReLU优化-池化层 这种顺序搭建

第一种3层全连接模型采用20次训练结果,其他模型采用8次训练,因为第一种模型如果采用8次训练,结果并未收敛无参考价值

最后笔者列出第第四种4层卷积包含批处理化模型全部代码,供参考学习

CNN2_Net.py

import torch

from torch import nn, optim

from torch.autograd import Variable

from torch.utils.data import DataLoader

from torchvision import datasets, transforms

class CNN(nn.Module):

def __init__(self):

super(CNN, self).__init__()

self.layer1 = nn.Sequential(

nn.Conv2d(1, 16, kernel_size=3), nn.BatchNorm2d(16), nn.ReLU(True))

self.layer2 = nn.Sequential(

nn.Conv2d(16, 32, kernel_size=3), nn.BatchNorm2d(32), nn.ReLU(True), nn.MaxPool2d(2, 2))

self.layer3 = nn.Sequential(

nn.Conv2d(32, 64, kernel_size=3), nn.BatchNorm2d(64), nn.ReLU(True))

self.layer4 = nn.Sequential(

nn.Conv2d(64, 128, kernel_size=3), nn.BatchNorm2d(128), nn.ReLU(True), nn.MaxPool2d(2, 2))

self.fc = nn.Sequential(

nn.Linear(128 * 4 * 4, 1024), nn.ReLU(inplace=True), nn.Linear(1024, 128), nn.ReLU(inplace=True),

nn.Linear(128, 10))

def forward(self, x):

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

x = x.view(x.size(0), -1)

x = self.fc(x)

return x

main.py

import torch

import torchvision as tv

import torchvision.transforms as transforms

import torch.nn as nn

import torch.optim as optim

import CNN2_Net

# 超参数设置

EPOCH = 8 # 遍历数据集次数

BATCH_SIZE = 64 # 批处理尺寸(batch_size)

LR = 0.001 # 学习率

# 定义数据预处理方式

transform = transforms.ToTensor()

# 定义训练数据集

trainset = tv.datasets.MNIST(

root='./data/',

train=True,

download=True,

transform=transform)

# 定义训练批处理数据

trainloader = torch.utils.data.DataLoader(

trainset,

batch_size=BATCH_SIZE,

shuffle=True,

)

# 定义测试数据集

testset = tv.datasets.MNIST(

root='./data/',

train=False,

download=True,

transform=transform)

# 定义测试批处理数据

testloader = torch.utils.data.DataLoader(

testset,

batch_size=BATCH_SIZE,

shuffle=False,

)

# 定义损失函数loss function 和优化方式(采用SGD)

net = CNN2_Net.CNN()

criterion = nn.CrossEntropyLoss() # 交叉熵损失函数,通常用于多分类问题上

optimizer = optim.SGD(net.parameters(), lr=LR, momentum=0.9)

# 训练

if __name__ == "__main__":

for epoch in range(EPOCH):

sum_loss = 0.0

Total_sum_loss = 0.0

# 数据读取

for i, data in enumerate(trainloader):

inputs, labels = data

# inputs, labels = inputs.to(device), labels.to(device)

# 梯度清零

optimizer.zero_grad()

# forward + backward

outputs = net(inputs)

loss = criterion(outputs, labels)

loss.backward()

optimizer.step()

# 每训练100个batch打印一次平均loss

sum_loss += loss.item()

Total_sum_loss += loss.item()

if i % 100 == 99:

print('[%d, %d] loss: %.03f'

% (epoch + 1, i + 1, sum_loss / 100))

sum_loss = 0.0

print('[%d] Total_loss: %.03f' % (epoch + 1, Total_sum_loss / len(trainloader)))

# 每跑完一次epoch测试一下准确率

with torch.no_grad():

correct = 0

total = 0

i = 0

for data in testloader:

images, labels = data

# images, labels = images.to(device), labels.to(device)

outputs = net(images)

# 取得分最高的那个类

_, predicted = torch.max(outputs.data, 1) # 选择概率最大的那个类别

total += labels.size(0)

i += 1

correct += (predicted == labels).sum()

print(labels.size(0))

print('第%d个epoch的识别准确率为:%d%%' % (epoch + 1, (100 * correct / total)))

大家可以在下方留言