TensorFlow Study Notes, CNN Part 1: A First Look at Convolutional Neural Networks

Preface

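For the MNIST dataset, logistic regression seems to have reached its limit: minor tweaks no longer improve its accuracy. Starting with this chapter, we therefore drop the original model and process the data with a convolutional neural network instead.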

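Any convolutional network has a few essential parts:

(1) Input layer: feeds the data into the network.

(2) Convolutional layer: applies the given kernels (filters) to the input to extract features, producing one convolutional feature map per kernel.

(3) Pooling layer: downsamples the data, reducing the number of features.

(4) Fully connected layer: recombines the features already extracted and outputs the result.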
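To make the convolution step in item (2) concrete, here is a minimal pure-Python sketch of sliding one kernel over a small image with no padding and stride 1. The helper name `conv2d_valid`, the 4x4 image, and the 2x2 kernel are illustrative assumptions and are not part of the TensorFlow code used later.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Hypothetical 4x4 single-channel "image"
image = [
    [1, 2, 3, 0],
    [4, 5, 6, 1],
    [7, 8, 9, 2],
    [1, 0, 1, 3],
]
# Hypothetical 2x2 kernel that responds to differences between neighbouring columns
kernel = [
    [1, -1],
    [1, -1],
]

feature_map = conv2d_valid(image, kernel)
for row in feature_map:
    print(row)
```

Each kernel yields one feature map, so a layer with several kernels produces several such maps stacked along the channel dimension.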

Code example

1. Data preparation

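import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data
import time

# Placeholders for the input images (flattened 28x28 pixels) and their one-hot class labels
x = tf.placeholder('float', [None, 784])
y_ = tf.placeholder('float', [None, 10])

# Reshape each flat 784-vector into a 28x28 single-channel image
x_image = tf.reshape(x, [-1, 28, 28, 1])

2. Convolutional and pooling layers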

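The program first creates three convolutional layers, with a pooling layer after each of the first two. TensorFlow already implements and packages the convolution operation, so users only need to call it.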

# First convolutional layer: 5x5 kernels, 1 input channel, 6 output channels
filter1 = tf.Variable(tf.truncated_normal([5, 5, 1, 6]))
bias1 = tf.Variable(tf.truncated_normal([6]))
conv1 = tf.nn.conv2d(x_image, filter1, strides=[1, 1, 1, 1], padding='SAME')
h_conv1 = tf.nn.sigmoid(conv1 + bias1)
# 2x2 max pooling with stride 2 halves the height and width
maxPool2 = tf.nn.max_pool(h_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Second convolutional layer: 6 input channels, 16 output channels
filter2 = tf.Variable(tf.truncated_normal([5, 5, 6, 16]))
bias2 = tf.Variable(tf.truncated_normal([16]))
conv2 = tf.nn.conv2d(maxPool2, filter2, strides=[1, 1, 1, 1], padding='SAME')
h_conv2 = tf.nn.sigmoid(conv2 + bias2)
maxPool3 = tf.nn.max_pool(h_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Third convolutional layer: 16 input channels, 120 output channels
filter3 = tf.Variable(tf.truncated_normal([5, 5, 16, 120]))
bias3 = tf.Variable(tf.truncated_normal([120]))
conv3 = tf.nn.conv2d(maxPool3, filter3, strides=[1, 1, 1, 1], padding='SAME')
h_conv3 = tf.nn.sigmoid(conv3 + bias3)

The snippet first defines the convolution kernel filter1 with the shape [5, 5, 1, 6]. The first two values, 5 and 5, are the kernel size, meaning that each kernel is a [5, 5] matrix. The third value is the number of input channels, and the fourth is the number of output channels (that is, the number of kernels).
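As a quick check on what those four shape values imply, the sketch below counts the trainable parameters in each convolutional layer from its kernel shape. The helper `conv_param_count` is a hypothetical name introduced for illustration; the shapes are taken from the three `tf.truncated_normal` calls above.

```python
def conv_param_count(kernel_h, kernel_w, in_channels, out_channels):
    """Weights in the kernel tensor plus one bias per output channel."""
    weights = kernel_h * kernel_w * in_channels * out_channels
    biases = out_channels
    return weights + biases


# Kernel shapes as [height, width, in_channels, out_channels]
layers = {
    "filter1": (5, 5, 1, 6),
    "filter2": (5, 5, 6, 16),
    "filter3": (5, 5, 16, 120),
}

counts = {name: conv_param_count(*shape) for name, shape in layers.items()}
for name, n in counts.items():
    print(name, n)
```

Most of the convolutional parameters sit in the third layer, because the count grows with the product of input and output channels.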

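Here, ksize=[1, 2, 2, 1] is the size of the pooling window, i.e. a [2, 2] window, and the third argument, strides=[1, 2, 2, 1], is the step by which the pooling window slides along each dimension.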
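The following pure-Python sketch shows what a 2x2 window with stride 2 does to a single feature map. It is an illustration, not TensorFlow's implementation: the function name `max_pool_same` is hypothetical, and it assumes that any padding falls on the bottom and right edges, which is the case for a 2x2 window with stride 2.

```python
import math


def max_pool_same(feature_map, ksize=2, stride=2):
    """Max pooling whose output size is ceil(input / stride), as with 'SAME' padding."""
    in_h, in_w = len(feature_map), len(feature_map[0])
    out_h, out_w = math.ceil(in_h / stride), math.ceil(in_w / stride)
    pooled = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # A window that runs past the border is clipped to the cells that exist
            window = [
                feature_map[i][j]
                for i in range(r * stride, min(r * stride + ksize, in_h))
                for j in range(c * stride, min(c * stride + ksize, in_w))
            ]
            row.append(max(window))
        pooled.append(row)
    return pooled


even_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 7, 2],
    [3, 2, 1, 8],
]
odd_map = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]

pooled_even = max_pool_same(even_map)
pooled_odd = max_pool_same(odd_map)
print(pooled_even)
print(pooled_odd)
```

With an even-sized input, each output cell is the maximum of one full 2x2 block, so the height and width are halved exactly.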

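After the three convolutional layers and two pooling layers, the input has been transformed into a tensor of shape [None, 7, 7, 120], which is then passed to the fully connected layers for classification.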
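The [None, 7, 7, 120] shape can be traced by hand. The sketch below does so under the assumption that, with padding='SAME', each spatial dimension becomes ceil(input / stride). The helper names are hypothetical; the layer names and channel counts mirror the code above.

```python
import math


def same_output_size(size, stride):
    """Spatial output size under 'SAME' padding: ceil(size / stride)."""
    return math.ceil(size / stride)


def trace_shapes(height=28, width=28, channels=1):
    # (layer name, stride, output channels); pooling keeps the channel count
    layers = [
        ("conv1", 1, 6),
        ("maxPool2", 2, None),
        ("conv2", 1, 16),
        ("maxPool3", 2, None),
        ("conv3", 1, 120),
    ]
    shapes = []
    for name, stride, out_channels in layers:
        height = same_output_size(height, stride)
        width = same_output_size(width, stride)
        if out_channels is not None:
            channels = out_channels
        shapes.append((name, (height, width, channels)))
    return shapes


shapes = trace_shapes()
for name, shape in shapes:
    print(name, shape)

final_h, final_w, final_c = shapes[-1][1]
flat_size = final_h * final_w * final_c
print("flattened size:", flat_size)
```

The flattened size, 7 * 7 * 120, is the first dimension of the weight matrix in the fully connected layer.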

3. Fully connected layer

# Fully connected layer
# Weights
W_fc1 = tf.Variable(tf.truncated_normal([7 * 7 * 120, 80]))
# Biases
b_fc1 = tf.Variable(tf.truncated_normal([80]))
# Flatten the output of the last convolutional layer
h_pool2_flat = tf.reshape(h_conv3, [-1, 7 * 7 * 120])
# Fully connected computation followed by a sigmoid activation
h_fc1 = tf.nn.sigmoid(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Output layer: softmax for multi-class classification
W_fc2 = tf.Variable(tf.truncated_normal([80, 10]))
b_fc2 = tf.Variable(tf.truncated_normal([10]))
y_conv = tf.nn.softmax(tf.matmul(h_fc1, W_fc2) + b_fc2)

4. Compute the loss
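The loss in this step is the cross-entropy between the one-hot labels `y_` and the softmax output `y_conv`. As a rough illustration for a single sample, the pure-Python sketch below computes both; the logits and the label are made-up values, and the function names are hypothetical. The TensorFlow version sums this quantity over every sample in the batch.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    largest = max(logits)
    # Subtracting the maximum avoids overflow and does not change the result
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(one_hot_label, probabilities):
    """-sum(y * log(p)); entries where y is 0 contribute nothing and are skipped."""
    return -sum(
        y * math.log(p)
        for y, p in zip(one_hot_label, probabilities)
        if y > 0
    )


logits = [2.0, 1.0, 0.1]   # hypothetical scores for 3 classes
label = [1, 0, 0]          # the true class is class 0

probs = softmax(logits)
loss = cross_entropy(label, probs)
print(probs)
print(loss)
```

The loss shrinks toward 0 as the probability assigned to the true class approaches 1.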

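# Cross-entropy between the one-hot labels and the softmax output, summed over the batch
cross_entropy = -tf.reduce_sum(y_ * tf.log(y_conv))

5. Initialize the optimizer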

# Plain gradient descent with a learning rate of 0.001
train_step = tf.train.GradientDescentOptimizer(0.001).minimize(cross_entropy)

6. Set the number of iterations and run the graph in a session

sess = tf.InteractiveSession()

# Accuracy: fraction of samples whose predicted class matches the label
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Initialize all variables
sess.run(tf.global_variables_initializer())

# Load the MNIST data
mnist_data_set = input_data.read_data_sets('MNIST_data', one_hot=True)

# Train
start_time = time.time()
for i in range(20000):
    # Fetch a batch of training data
    batch_xs, batch_ys = mnist_data_set.train.next_batch(200)
    # Every 100 batches, evaluate on the current training batch and print the accuracy
    if i % 100 == 0:
        train_accuracy = accuracy.eval(feed_dict={x: batch_xs, y_: batch_ys})
        print("step %d, training accuracy %g" % (i, train_accuracy))
        # Time elapsed since the last report
        end_time = time.time()
        print('time: ', (end_time - start_time))
        start_time = end_time
    # Run one training step
    train_step.run(feed_dict={x: batch_xs, y_: batch_ys})

# Close the session
sess.close()

Results

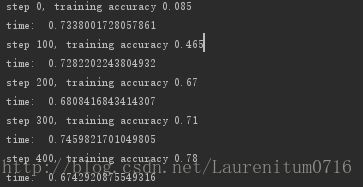

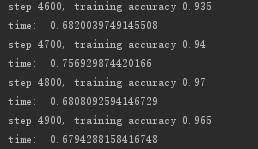

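As the output shows, accuracy rises smoothly: it reaches 0.78 at iteration 400, 0.965 at iteration 4900, and roughly 0.98 by iteration 19900. Note that these figures are measured on the current training batch, not on a held-out test set.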
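The accuracy figures come from comparing the argmax of each prediction with the argmax of its one-hot label and averaging the matches. A minimal pure-Python sketch of that computation follows; the four predictions and labels are made-up values, and the function names are hypothetical.

```python
def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=lambda i: values[i])


def batch_accuracy(predictions, one_hot_labels):
    """Fraction of samples whose predicted class equals the labelled class."""
    correct = [
        argmax(p) == argmax(y)
        for p, y in zip(predictions, one_hot_labels)
    ]
    return sum(correct) / len(correct)


# Hypothetical softmax outputs for 4 samples and 3 classes
predictions = [
    [0.7, 0.2, 0.1],
    [0.1, 0.8, 0.1],
    [0.3, 0.4, 0.3],
    [0.2, 0.2, 0.6],
]
labels = [
    [1, 0, 0],
    [0, 1, 0],
    [1, 0, 0],   # misclassified: the prediction picks class 1
    [0, 0, 1],
]

acc = batch_accuracy(predictions, labels)
print(acc)
```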

Full code

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data
import time

# Placeholders for the input images (flattened 28x28 pixels) and their one-hot class labels
x = tf.placeholder('float', [None, 784])
y_ = tf.placeholder('float', [None, 10])

# Reshape each flat 784-vector into a 28x28 single-channel image
x_image = tf.reshape(x, [-1, 28, 28, 1])

# First convolutional layer: initialize the kernel weights and bias;
# 5x5 kernels, 1 input channel, 6 different kernels
filter1 = tf.Variable(tf.truncated_normal([5, 5, 1, 6]))
bias1 = tf.Variable(tf.truncated_normal([6]))
conv1 = tf.nn.conv2d(x_image, filter1, strides=[1, 1, 1, 1], padding='SAME')
h_conv1 = tf.nn.sigmoid(conv1 + bias1)
maxPool2 = tf.nn.max_pool(h_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Second convolutional layer: 6 input channels, 16 kernels
filter2 = tf.Variable(tf.truncated_normal([5, 5, 6, 16]))
bias2 = tf.Variable(tf.truncated_normal([16]))
conv2 = tf.nn.conv2d(maxPool2, filter2, strides=[1, 1, 1, 1], padding='SAME')
h_conv2 = tf.nn.sigmoid(conv2 + bias2)
maxPool3 = tf.nn.max_pool(h_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Third convolutional layer: 16 input channels, 120 kernels
filter3 = tf.Variable(tf.truncated_normal([5, 5, 16, 120]))
bias3 = tf.Variable(tf.truncated_normal([120]))
conv3 = tf.nn.conv2d(maxPool3, filter3, strides=[1, 1, 1, 1], padding='SAME')
h_conv3 = tf.nn.sigmoid(conv3 + bias3)

# Fully connected layer
# Weights
W_fc1 = tf.Variable(tf.truncated_normal([7 * 7 * 120, 80]))
# Biases
b_fc1 = tf.Variable(tf.truncated_normal([80]))
# Flatten the output of the last convolutional layer
h_pool2_flat = tf.reshape(h_conv3, [-1, 7 * 7 * 120])
# Fully connected computation followed by a sigmoid activation
h_fc1 = tf.nn.sigmoid(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Output layer: softmax for multi-class classification
W_fc2 = tf.Variable(tf.truncated_normal([80, 10]))
b_fc2 = tf.Variable(tf.truncated_normal([10]))
y_conv = tf.nn.softmax(tf.matmul(h_fc1, W_fc2) + b_fc2)

# Loss function: cross-entropy summed over the batch
cross_entropy = -tf.reduce_sum(y_ * tf.log(y_conv))

# Adjust the parameters with gradient descent (GradientDescentOptimizer)
train_step = tf.train.GradientDescentOptimizer(0.001).minimize(cross_entropy)

sess = tf.InteractiveSession()

# Accuracy: fraction of samples whose predicted class matches the label
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Initialize all variables
sess.run(tf.global_variables_initializer())

# Load the MNIST data
mnist_data_set = input_data.read_data_sets('MNIST_data', one_hot=True)

# Train
start_time = time.time()
for i in range(20000):
    # Fetch a batch of training data
    batch_xs, batch_ys = mnist_data_set.train.next_batch(200)
    # Every 100 batches, evaluate on the current training batch and print the accuracy
    if i % 100 == 0:
        train_accuracy = accuracy.eval(feed_dict={x: batch_xs, y_: batch_ys})
        print("step %d, training accuracy %g" % (i, train_accuracy))
        # Time elapsed since the last report
        end_time = time.time()
        print('time: ', (end_time - start_time))
        start_time = end_time
    # Run one training step
    train_step.run(feed_dict={x: batch_xs, y_: batch_ys})

# Close the session
sess.close()