Tensorflow——卷积神经网络(CNN)应用于MNIST数据集手写字体识别+Tensorboard展示

一、卷积神经网络详解

参考:https://blog.csdn.net/gaoyu1253401563/article/details/83714865

二、Tensorflow实现手写数字识别的卷积神经网络

- 编译环境:jupyter notebook

- CNN设计:输入层-卷积层-池化层-卷积层-池化层-全连接层-全连接层-输出层

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

#载入数据

mnist = input_data.read_data_sets('MNIST_data', one_hot= True)

#设置批次的大小

batch_size = 100

#计算共有多少个批次

n_batch = mnist.train.num_examples // batch_size

#初始化权值

def weight_variable(shape):

initial = tf.truncated_normal(shape,stddev=0.1) #生成一个截断的正态分布

return tf.Variable(initial)

#初始化偏置

def bias_variable(shape):

initial = tf.constant(0.1,shape=shape)

return tf.Variable(initial)

#卷积层

def conv2d(x,W):

#tf.nn.conv2d()是tensorflow中实现卷积的函数

#x:需要做卷积的输入图像,四维的[batch,in_height,in_width,in_channels]

#W:filter参数,CNN中的卷积核,形状为[filter_height,filter_width,in_chnnesl,out_chnnels]

#strides:卷积时在图像每一维上的步长,这是一个一维的向量,长度为4

#padding:string类型的量,只能是“SAME”(卷积时会在外围补零)、"VALID",(不会在外围补零)这个值决定了不同的卷积方式

#结果返回一个Tensor,即我们常说的feature map

return tf.nn.conv2d(x,W,strides=[1,1,1,1],padding='SAME')

#池化层:最大池化

def max_pool_2x2(x):

return tf.nn.max_pool(x,ksize=[1,2,2,1],strides=[1,2,2,1],padding="SAME")

#定义两个placeholder

x = tf.placeholder(tf.float32,[None,784])

y = tf.placeholder(tf.float32,[None,10])

#改变x的格式转成为4D的向量[batch,in_height,in_width,in_channels]

x_image = tf.reshape(x,[-1,28,28,1])

#初始化第一个卷积层的权值和偏置

#5*5的采样窗口,32个卷积核从1个平面抽取特征

W_conv1 = weight_variable([5,5,1,32])

#每一个卷积核对应医用偏置值,32是偏置值数量

b_conv1 = bias_variable([32])

#把x_image和权值向量进行卷积,再加上偏置值,然后用于ReLU激活函数

h_conv1 = tf.nn.relu(conv2d(x_image,W_conv1) + b_conv1)

#进行max—pooling操作

h_pool1 = max_pool_2x2(h_conv1)

#初始化第二个卷积层的权值和偏重

#5*5的采样窗口,64个卷积核从32个平面上抽取特征

W_conv2 = weight_variable([5,5,32,64])

#每一个卷积核对应一个偏置

b_conv2 = bias_variable([64])

#把h_pool1和权值向量进行卷积,再加上偏置值,然后用于ReLU激活函数

h_conv2 = tf.nn.relu(conv2d(h_pool1,W_conv2) + b_conv2)

#进行max—pooling层操作

h_pool2 = max_pool_2x2(h_conv2)

#28*28的图片第一次卷积后还是28*28,第一次池化后变成14*14

#第二次卷积后为14*14,第二次池化后为7*7

#通过以上操作得到64张7*7的平面

#初始化第一个全连接层的权值:上面有7*7*64个神经元,全连接层有1024个神经元,对应1024个偏置

W_fc1 = weight_variable([7*7*64,1024])

b_fc1 = bias_variable([1024])

#把池化层2的输出扁平化为1维

h_pool2_flat = tf.reshape(h_pool2,[-1,7*7*64])

#求第一个全连接层的输出

h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat,W_fc1) + b_fc1)

#keep_prob用来表示神经元的输出概率

keep_prob = tf.placeholder(tf.float32)

#加入dropout操作,目的是使得一部分神经元工作,一部分神经元不工作

h_fc1_drop = tf.nn.dropout(h_fc1,keep_prob)

#初始化第二个全连接层

W_fc2 = weight_variable([1024,10])

b_fc2 = bias_variable([10])

#计算输出层的输出,使用softmax函数计算概率得到输出

prediction = tf.nn.softmax(tf.matmul(h_fc1_drop,W_fc2) + b_fc2)

#使用交叉熵代价函数计算loss

cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels = y, logits = prediction))

#使用AdamOptimizer优化器进行优化,使得loss最小

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

#将结果存放在一个布尔列表中,argmax()函数返回一维张量中最大值所在的位置:tf.equal(A,B)函数,若A=B,则返回True,否则返回False

correct_prediction = tf.equal(tf.argmax(prediction,1),tf.argmax(y,1))

#求准确率:tf.cast(x,dtype,name=None),类型转换函数,将x转化为dtype类型

accuracy = tf.reduce_mean(tf.cast(correct_prediction,tf.float32))

#定义会话

with tf.Session() as sess:

#初始化变量

sess.run(tf.global_variables_initializer())

#迭代21个周期

for epoch in range(21):

for batch in range(n_batch):

batch_xs,batch_ys = mnist.train.next_batch(batch_size)

sess.run(train_step,feed_dict = {x:batch_xs, y:batch_ys, keep_prob:0.7}) #每次训练有70%神经元在工作

acc = sess.run(accuracy,feed_dict = {x:mnist.test.images,y:mnist.test.labels,keep_prob:1.0})

print("Iter " + str(epoch) + ", Testing Accuracy = " + str(acc))测试准确度结果:

Extracting MNIST_data/train-images-idx3-ubyte.gz

Extracting MNIST_data/train-labels-idx1-ubyte.gz

Extracting MNIST_data/t10k-images-idx3-ubyte.gz

Extracting MNIST_data/t10k-labels-idx1-ubyte.gz

Iter 0, Testing Accuracy = 0.951

Iter 1, Testing Accuracy = 0.97

Iter 2, Testing Accuracy = 0.9743

Iter 3, Testing Accuracy = 0.981

Iter 4, Testing Accuracy = 0.9838

Iter 5, Testing Accuracy = 0.9861

Iter 6, Testing Accuracy = 0.9866

Iter 7, Testing Accuracy = 0.9873

Iter 8, Testing Accuracy = 0.9859

Iter 9, Testing Accuracy = 0.9868

Iter 10, Testing Accuracy = 0.9883

Iter 11, Testing Accuracy = 0.9888

Iter 12, Testing Accuracy = 0.9886

Iter 13, Testing Accuracy = 0.9898

Iter 14, Testing Accuracy = 0.9896

Iter 15, Testing Accuracy = 0.9901

Iter 16, Testing Accuracy = 0.9909

Iter 17, Testing Accuracy = 0.9897

Iter 18, Testing Accuracy = 0.9908

Iter 19, Testing Accuracy = 0.9913

Iter 20, Testing Accuracy = 0.9906

三、Tensorflow实现手写数字识别的卷积神经网络——tensorboard展示

- 使用tensorboard代码

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

#载入数据

mnist = input_data.read_data_sets('MNIST_data', one_hot= True)

#设置批次的大小

batch_size = 100

#计算共有多少个批次

n_batch = mnist.train.num_examples // batch_size

#生成tensorboard的参数概要

def variable_summaries(var):

with tf.name_scope('summaries'):

mean = tf.reduce_mean(var)

tf.summary.scalar('mean',mean)

with tf.name_scope('stddev'):

stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))

tf.summary.scalar('stddev',stddev) #标准差

tf.summary.scalar('max',tf.reduce_max(var)) #最大值

tf.summary.scalar('min',tf.reduce_min(var)) #最小值

tf.summary.histogram('histogram',var) #直方图

#初始化权值

def weight_variable(shape,name):

#生成一个截断的正态分布

initial = tf.truncated_normal(shape,stddev=0.1)

return tf.Variable(initial,name = name)

#初始化偏置

def bias_variable(shape,name):

initial = tf.constant(0.1,shape=shape)

return tf.Variable(initial,name = name)

#卷积层

def conv2d(x,W):

#tf.nn.conv2d()是tensorflow中实现卷积的函数

#x:需要做卷积的输入图像,四维的[batch,in_height,in_width,in_channels]

#W:filter参数,CNN中的卷积核,形状为[filter_height,filter_width,in_chnnesl,out_chnnels]

#strides:卷积时在图像每一维上的步长,这是一个一维的向量,长度为4

#padding:string类型的量,只能是“SAME”(卷积时会在外围补零)或"VALID",(不会在外围补零)这个值决定了不同的卷积方式

#结果返回一个Tensor,即我们常说的feature map

return tf.nn.conv2d(x,W,strides=[1,1,1,1],padding='SAME')

#池化层:最大池化

def max_pool_2x2(x):

return tf.nn.max_pool(x,ksize=[1,2,2,1],strides=[1,2,2,1],padding="SAME")

#命名空间

with tf.name_scope('input'):

#定义两个placeholder

x = tf.placeholder(tf.float32,[None,784],name = 'x-input')

y = tf.placeholder(tf.float32,[None,10],name = 'y-input')

with tf.name_scope('x_image'):

#改变x的格式转成为4D的向量[batch,in_height,in_width,in_channels]

x_image = tf.reshape(x,[-1,28,28,1], name = 'x_image')

with tf.name_scope('Conv1'):

with tf.name_scope('W_conv1'):

#初始化第一个卷积层的权值和偏置

#5*5的采样窗口,32个卷积核从1个平面抽取特征

W_conv1 = weight_variable([5,5,1,32],name='W_conv1')

with tf.name_scope('b_conv1'):

#每一个卷积核对应医用偏置值,32是偏置值数量

b_conv1 = bias_variable([32],name = 'b_conv1')

#把x_image和权值向量进行卷积,再加上偏置值,然后用于ReLU激活函数

with tf.name_scope('conv2d_1'):

conv2d_1 = conv2d(x_image,W_conv1) + b_conv1

with tf.name_scope('relu'):

h_conv1 = tf.nn.relu(conv2d_1)

with tf.name_scope('h_pool1'):

#进行max—pooling操作

h_pool1 = max_pool_2x2(h_conv1)

with tf.name_scope('Conv2'):

#初始化第二个卷积层的权值和偏重

#5*5的采样窗口,64个卷积核从32个平面上抽取特征

with tf.name_scope('W_conv2'):

W_conv2 = weight_variable([5,5,32,64],name='W_conv2')

with tf.name_scope('b_conv2'):

#每一个卷积核对应一个偏置

b_conv2 = bias_variable([64],name = 'b_conv2')

#把h_pool1和权值向量进行卷积,再加上偏置值,然后用于ReLU激活函数

with tf.name_scope('conv2d_2'):

conv2d_2 = conv2d(h_pool1,W_conv2) + b_conv2

with tf.name_scope('relu'):

h_conv2 = tf.nn.relu(conv2d_2)

with tf.name_scope('h_pool2'):

#进行max—pooling层操作

h_pool2 = max_pool_2x2(h_conv2)

#28*28的图片第一次卷积后还是28*28,第一次池化后变成14*14

#第二次卷积后为14*14,第二次池化后为7*7

#通过以上操作得到64张7*7的平面

#初始化第一个全连接层的权值:上面有7*7*64个神经元,全连接层有1024个神经元,对应1024个偏置

with tf.name_scope('fc1'):

with tf.name_scope('W_fc1'):

W_fc1 = weight_variable([7*7*64,1024],name = 'W_fc1')

with tf.name_scope('b_fc1'):

b_fc1 = bias_variable([1024],name = 'b_fc1')

#把池化层2的输出扁平化为1维

with tf.name_scope('h_pool2_flat'):

h_pool2_flat = tf.reshape(h_pool2,[-1,7*7*64],name = 'h_pool2_flat')

#求第一个全连接层的输出

with tf.name_scope('wx_plus_b1'):

wx_plus_b1 = tf.matmul(h_pool2_flat,W_fc1) +b_fc1

with tf.name_scope('relu'):

h_fc1 = tf.nn.relu(wx_plus_b1)

#keep_prob用来表示神经元的输出概率

with tf.name_scope('keep_prob'):

keep_prob = tf.placeholder(tf.float32,name = 'keep_prob')

#加入dropout操作,目的是使得一部分神经元工作,一部分神经元不工作

with tf.name_scope('h_fc1_drop'):

h_fc1_drop = tf.nn.dropout(h_fc1,keep_prob,name = 'h_fc1_drop')

#初始化第二个全连接层

with tf.name_scope('fc2'):

with tf.name_scope('W_fc2'):

W_fc2 = weight_variable([1024,10], name = 'W_fc2')

with tf.name_scope('b_fc2'):

b_fc2 = bias_variable([10],name = 'b_fc2')

with tf.name_scope('wx_plus_b2'):

wx_plus_b2 = tf.matmul(h_fc1_drop,W_fc2) + b_fc2

with tf.name_scope('softmax'):

#计算输出层的输出,使用softmax函数计算概率得到输出

prediction = tf.nn.softmax(wx_plus_b2)

#使用交叉熵代价函数计算loss

with tf.name_scope('cross_entropy'):

cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels = y, logits = prediction),name = 'cross_entropy')

tf.summary.scalar('cross_entropy',cross_entropy)

#使用AdamOptimizer优化器进行优化,使得loss最小

with tf.name_scope('train'):

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

#将结果存放在一个布尔列表中,argmax()函数返回一维张量中最大值所在的位置:tf.equal(A,B)函数,若A=B,则返回True,否则返回False

with tf.name_scope('accuracy'):

with tf.name_scope('correct_prediction'):

correct_prediction = tf.equal(tf.argmax(prediction,1),tf.argmax(y,1))

#求准确率:tf.cast(x,dtype,name=None),类型转换函数,将x转化为dtype类型

with tf.name_scope('accuracy'):

accuracy = tf.reduce_mean(tf.cast(correct_prediction,tf.float32))

tf.summary.scalar('accuracy',accuracy)

#合并所有的summary

merged = tf.summary.merge_all()

#定义会话

with tf.Session() as sess:

#初始化变量

sess.run(tf.global_variables_initializer())

train_writer = tf.summary.FileWriter('tensorboard/logs/train',sess.graph)

test_writer = tf.summary.FileWriter('tensorboard/logs/test',sess.graph)

for i in range(1001):

#训练模型

batch_xs,batch_ys = mnist.train.next_batch(batch_size)

sess.run(train_step,feed_dict = {x:batch_xs, y:batch_ys, keep_prob:0.5}) #每次训练有50%神经元在工作

#记录训练集计算的参数

summary = sess.run(merged,feed_dict = {x:batch_xs,y:batch_ys,keep_prob:1.0})

train_writer.add_summary(summary,i)

#记录测试机计算的参数

batch_xs,batch_ys = mnist.test.next_batch(batch_size)

summary = sess.run(merged,feed_dict = {x:batch_xs,y:batch_ys,keep_prob:1.0})

test_writer.add_summary(summary,i)

if i%100 == 0:

test_acc = sess.run(accuracy,feed_dict = {x:mnist.test.images,y:mnist.test.labels,keep_prob:1.0})

train_acc = sess.run(accuracy,feed_dict = {x:mnist.train.images[:10000],y:mnist.train.labels[:10000],keep_prob:1.0})

print("Iter " + str(i) + ", Testing Accuracy = " + str(test_acc) + ", Training Accuracy = " + str(train_acc))

- 如何生成tensorboard网址

- 在生成的目录tensorboard/logs下运行终端:

tensorboard --logdir=/home/xx(自己的)/tensorflow学习代码实例/tsorboard/logs- 打开红线网址

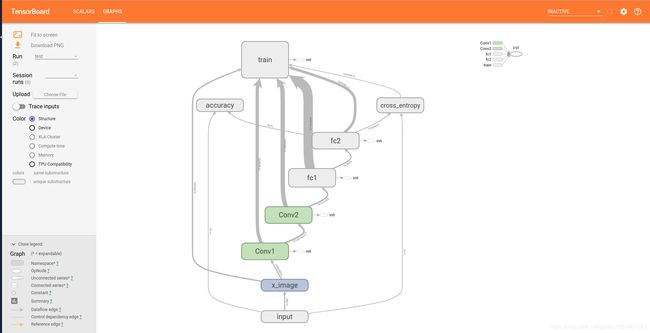

如下就是tensorboard

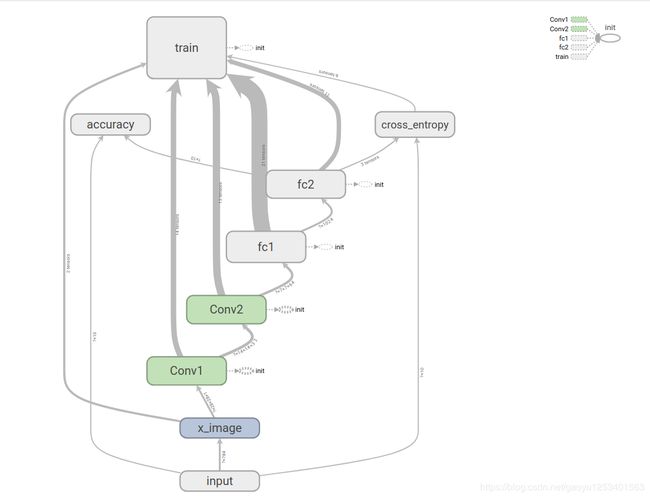

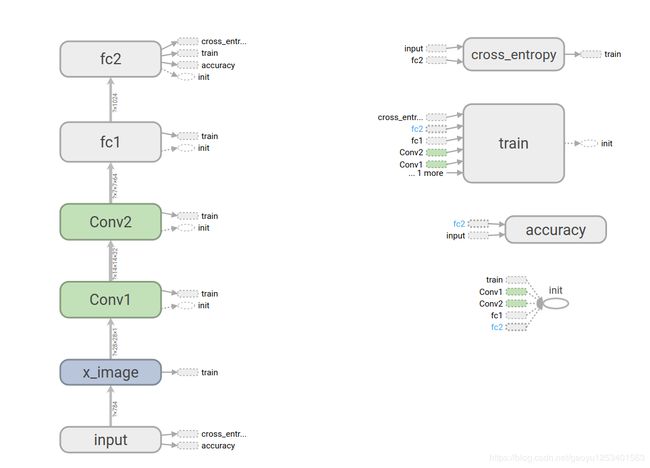

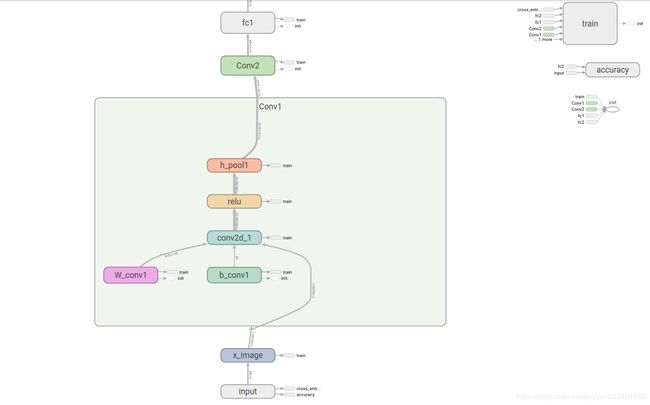

各部件的表示含义:

(1)网络结构的展示:

形式一:

形式二:

拿其中一个展开

(2)参数的展示

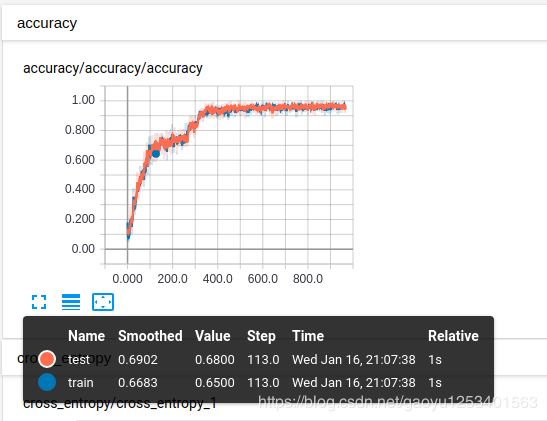

- accuracy

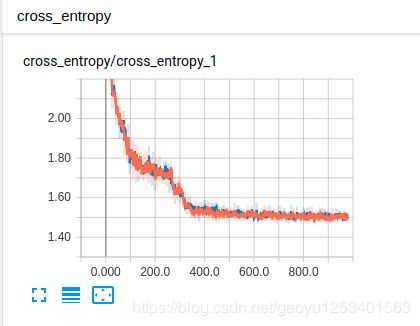

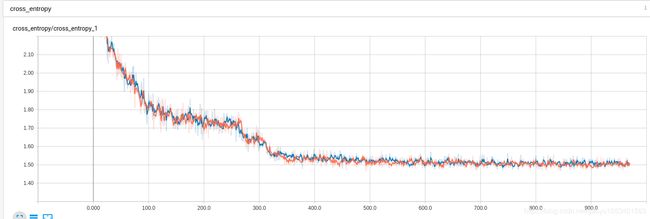

- cross_entropy