Installing Kubernetes (k8s) from Binaries

Installing k8s

Kubernetes is a container orchestration tool.

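Main features of k8s

Automatic bin packing, self-healing, horizontal scaling, service discovery and load balancing, automated rollouts and rollbacks, and secret and configuration management.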

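master -> Nodes

Each node is used to run containers.

When a user wants to run a container, the request goes to the master. The master analyzes the state of every node, picks the node best suited to run the workload, and starts the image there; if the image is not present, it is pulled from the registry and then started.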

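The three core components of the master (management) node, plus etcd:

API Server: receives, parses, and processes requests.

Scheduler: binds Pods to Nodes (resource scheduling).

Controller Manager: the automation control center for resource objects.

Etcd: shared storage; all persistent state is stored in etcd.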

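A Pod is the smallest unit for deploying and running an application or service in a k8s cluster, and it can hold multiple containers.

The Pod is designed so that several containers in one Pod share a network address and file system.

Pod controllers

ReplicationController, ReplicaSet, Deployment, StatefulSet, DaemonSet, Job, CronJob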

Label

A label is a user-defined key=value pair that can be attached to k8s resources.

Once a resource has a label, you can query and filter by that label, much like a WHERE clause in SQL.

An object can carry several labels.

label : key=value

Label Selector

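RC (ReplicationController) is the earliest API object in k8s for keeping Pods highly available. It monitors running Pods to make sure the specified number of Pod replicas is running in the cluster.

The specified number can be one or many. If fewer replicas are running, the RC starts new ones; if more are running, the RC kills the extras.

Even when the specified number is 1, running a Pod through an RC is wiser than running the Pod directly, because the RC still provides high availability and makes sure one Pod is always running.

An RC contains the complete Pod template.

An RC controls its Pod replicas automatically through the Label Selector mechanism.

Changing the replica count in the RC scales the Pods out or in.

Changing the image version in the RC's Pod template performs a rolling upgrade of the Pods.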
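The reconciliation rule described above can be sketched in a few lines of shell. This is only an illustration of the idea, not how the ReplicationController is implemented: `matches_selector` and `reconcile` are hypothetical helper names, pods are modeled as plain text lines, and the selector is assumed to be a single equality-based `key=value` pair.

```shell
# Succeeds when the comma-separated label list $1 contains the key=value pair $2.
matches_selector() {
    case ",$1," in
        *",$2,"*) return 0 ;;
        *) return 1 ;;
    esac
}

# reconcile DESIRED SELECTOR
# Reads pods from stdin, one per line, as "<name> <label1=v1,label2=v2,...>",
# counts the pods matching SELECTOR, and prints the action needed to reach DESIRED.
reconcile() {
    desired=$1 selector=$2 actual=0
    while read -r name labels; do
        if matches_selector "$labels" "$selector"; then
            actual=$((actual + 1))
        fi
    done
    if [ "$actual" -lt "$desired" ]; then
        echo "start $((desired - actual))"
    elif [ "$actual" -gt "$desired" ]; then
        echo "kill $((actual - desired))"
    else
        echo "ok"
    fi
}

# One matching pod, three desired: two more replicas would have to be started.
printf '%s\n' 'web-1 app=web,tier=fe' 'db-1 app=db' | reconcile 3 app=web
```

Pods whose labels do not match the selector are simply ignored, which is why the selector, not the Pod name, decides what an RC manages.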

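Core components of a node

Kubelet: manages Pods and their containers, images, volumes, and so on; it is how the cluster manages the node.

docker

Kube-proxy: provides network proxying and load balancing, implementing communication with Services.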

k8s networks

Node network: node to node

Pod network: pod to pod

Service network: kube-proxy to kube-proxy

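The lab environment here is Red Hat 7.5.

Server1 is the master node: 10.217.135.35

Server2 and server3 are the pod nodes: 10.217.135.36 and 10.217.135.37

The master node needs: docker, etcd, api-server, scheduler, controller-manager, kubelet, flannel, docker-compose, harbor. It acts as the cluster master and hosts the service-environment control modules, the API gateway control modules, and the platform management console.

The pod nodes need: docker, etcd, kubelet, proxy, flannel. They are the service nodes on which containerized services are deployed and run.

First, install docker on all three machines and enable it at boot, then disable swap, the firewall, SELinux, and so on.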

vim /etc/fstab

Comment out the swap partition entry, then turn swap off:

swapoff -a

systemctl enable docker

systemctl start docker

systemctl disable firewalld

systemctl stop firewalld

systemctl stop NetworkManager

systemctl disable NetworkManager

vim /etc/sysconfig/selinux

SELINUX=disabled

Reboot with init 6 for the change to take effect.
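The two file edits above can also be made without opening an editor. The sketch below is one possible way to do it, not part of the original procedure: `comment_out_swap` and `disable_selinux` are hypothetical helper names, and the swap pattern assumes the usual whitespace-separated fstab layout with the filesystem type in the third field.

```shell
# Comment out every active swap entry in an fstab-style file.
# A backup of the original is kept as <file>.bak.
comment_out_swap() {
    sed -i.bak -E \
        '/^[[:space:]]*[^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]]/ s/^/#/' \
        "$1"
}

# Set SELINUX=disabled in an SELinux config file (SELINUXTYPE= is left alone).
# A backup of the original is kept as <file>.bak.
disable_selinux() {
    sed -i.bak -E 's/^SELINUX=.*/SELINUX=disabled/' "$1"
}

# On a real host, as root:
#   comment_out_swap /etc/fstab && swapoff -a
#   disable_selinux /etc/selinux/config
```

On RHEL 7, /etc/sysconfig/selinux is a symlink to /etc/selinux/config, and `sed -i` replaces a symlink with a regular file, which is why the usage comment targets /etc/selinux/config directly.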

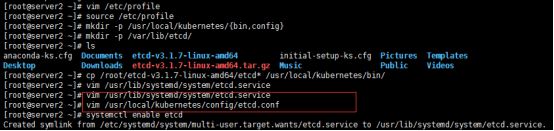

First install etcd, the shared store. It has to be installed on the master and on the pod nodes alike.

tar xf etcd-v3.1.7-linux-amd64.tar.gz

mkdir -p /usr/local/kubernetes/{bin,config}

cp etcd-v3.1.7-linux-amd64/{etcd,etcdctl} /usr/local/kubernetes/bin/

mkdir -p /var/lib/etcd/

Configure etcd.service

vim /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

[Service]

WorkingDirectory=/var/lib/etcd

EnvironmentFile=-/usr/local/kubernetes/config/etcd.conf

ExecStart=/usr/local/kubernetes/bin/etcd \

--name=${ETCD_NAME} \

--data-dir=${ETCD_DATA_DIR} \

--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS} \

--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=${ETCD_INITIAL_CLUSTER_STATE}

Type=notify

[Install]

WantedBy=multi-user.target

Configure etcd.conf

#[member]

# the name must be unique across members

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="http://0.0.0.0:2380"

ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"

#[cluster]

# peer URL this member advertises to the rest of the cluster; set to this machine's IP

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://10.217.135.35:2380"

# all members of the cluster

ETCD_INITIAL_CLUSTER="etcd01=http://10.217.135.35:2380,etcd02=http://10.217.135.36:2380,etcd03=http://10.217.135.37:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

# cluster ID; with several clusters, each one must use a unique value

ETCD_INITIAL_CLUSTER_TOKEN="k8s-etcd-cluster"

# URL for client requests; set to this machine's IP

ETCD_ADVERTISE_CLIENT_URLS="http://10.217.135.35:2379"
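Only the member name and the two advertised URLs differ between the three machines, so the file can be generated instead of edited by hand on each one. The following is a minimal sketch under that assumption; `render_etcd_conf` is a hypothetical helper, and the names etcd02 and etcd03 are taken from the ETCD_INITIAL_CLUSTER value above.

```shell
# Member list shared by every node (same value as ETCD_INITIAL_CLUSTER above).
CLUSTER="etcd01=http://10.217.135.35:2380,etcd02=http://10.217.135.36:2380,etcd03=http://10.217.135.37:2380"

# render_etcd_conf NAME IP
# Prints an etcd.conf for one member to stdout.
render_etcd_conf() {
    name=$1 ip=$2
    cat <<EOF
#[member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="http://0.0.0.0:2380"
ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"
#[cluster]
ETCD_INITIAL_ADVERTISE_PEER_URLS="http://${ip}:2380"
ETCD_INITIAL_CLUSTER="${CLUSTER}"
ETCD_INITIAL_CLUSTER_STATE="new"
ETCD_INITIAL_CLUSTER_TOKEN="k8s-etcd-cluster"
ETCD_ADVERTISE_CLIENT_URLS="http://${ip}:2379"
EOF
}

# Example: print the file for the second member (server2).
render_etcd_conf etcd02 10.217.135.36
```

On each machine the output would be redirected to /usr/local/kubernetes/config/etcd.conf, the path the unit file reads through EnvironmentFile.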

vim /etc/profile and append the following as the last line:

export PATH=$PATH:/usr/local/kubernetes/bin

source /etc/profile

systemctl enable etcd

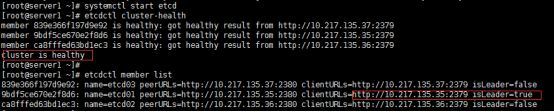

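Note: etcd has to be started on all members at about the same time, otherwise errors may occur.

systemctl start etcd

etcdctl cluster-health # check the cluster status

etcdctl member list # list the members; the cluster elects a leader on its own

Here the cluster elected server1 as leader.

Start the installation

Install components on the master node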

tar xf /root/kubernetes-server-linux-amd64.tar.gz

cp /root/kubernetes/server/bin/{kube-apiserver,kube-scheduler,kube-controller-manager,kubectl,kubelet} /usr/local/kubernetes/bin

Install apiserver

Configure the apiserver file

vim /usr/local/kubernetes/config/kube-apiserver

# log to standard error

KUBE_LOGTOSTDERR="--logtostderr=true"

# log level

KUBE_LOG_LEVEL="--v=4"

# etcd server address

KUBE_ETCD_SERVERS="--etcd-servers=http://10.217.135.35:2379"

# API server listen address

KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"

# API server listen port

KUBE_API_PORT="--insecure-port=8080"

# address advertised to cluster members for the API service (the master)

KUBE_ADVERTISE_ADDR="--advertise-address=10.217.135.35"

# whether containers may request privileged mode (default: false)

KUBE_ALLOW_PRIV="--allow-privileged=false"

# IP range allocated to cluster Services (normally chosen so that it does not overlap the node network)

KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.217.135.0/24"

KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"

apiserver systemd unit file

vim /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/usr/local/kubernetes/config/kube-apiserver

ExecStart=/usr/local/kubernetes/bin/kube-apiserver \

${KUBE_LOGTOSTDERR} \

${KUBE_LOG_LEVEL} \

${KUBE_ETCD_SERVERS} \

${KUBE_API_ADDRESS} \

${KUBE_API_PORT} \

${KUBE_ADVERTISE_ADDR} \

${KUBE_ALLOW_PRIV} \

${KUBE_SERVICE_ADDRESSES}

Restart=on-failure

[Install]

WantedBy=multi-user.target

systemctl enable kube-apiserver # enable apiserver at boot

systemctl start kube-apiserver # start apiserver

Install the scheduler: configure the scheduler file

vim /usr/local/kubernetes/config/kube-scheduler

KUBE_LOGTOSTDERR="--logtostderr=true"

KUBE_LOG_LEVEL="--v=4"

# master IP

KUBE_MASTER="--master=10.217.135.35:8080"

KUBE_LEADER_ELECT="--leader-elect"

scheduler systemd unit file

vim /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/usr/local/kubernetes/config/kube-scheduler

ExecStart=/usr/local/kubernetes/bin/kube-scheduler \

${KUBE_LOGTOSTDERR} \

${KUBE_LOG_LEVEL} \

${KUBE_MASTER} \

${KUBE_LEADER_ELECT}

Restart=on-failure

[Install]

WantedBy=multi-user.target

systemctl enable kube-scheduler # enable scheduler at boot

systemctl start kube-scheduler # start scheduler

Install controller-manager

vim /usr/local/kubernetes/config/kube-controller-manager

KUBE_LOGTOSTDERR="--logtostderr=true"

KUBE_LOG_LEVEL="--v=4"

# master node IP

KUBE_MASTER="--master=10.217.135.35:8080"

KUBE_LEADER_ELECT="--leader-elect"

controller-manager systemd unit file

vim /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/usr/local/kubernetes/config/kube-controller-manager

ExecStart=/usr/local/kubernetes/bin/kube-controller-manager \

${KUBE_LOGTOSTDERR} \

${KUBE_LOG_LEVEL} \

${KUBE_MASTER} \

${KUBE_LEADER_ELECT}

Restart=on-failure

[Install]

WantedBy=multi-user.target

systemctl enable kube-controller-manager # enable controller-manager at boot

systemctl start kube-controller-manager # start controller-manager

Install kubelet

Configure the kubelet kubeconfig file

vim /usr/local/kubernetes/config/kubelet.kubeconfig

apiVersion: v1

kind: Config

clusters:

- cluster:

    # k8s master IP

    server: http://10.217.135.35:8080

  name: local

contexts:

- context:

    cluster: local

  name: local

current-context: local

Configure the kubelet config file

vim /usr/local/kubernetes/config/kubelet

# log to standard error

KUBE_LOGTOSTDERR="--logtostderr=true"

# log level

KUBE_LOG_LEVEL="--v=4"

# kubelet service IP address (this machine)

NODE_ADDRESS="--address=10.217.135.35"

# kubelet service port

NODE_PORT="--port=10250"

# custom node name, set to this machine's IP

NODE_HOSTNAME="--hostname-override=10.217.135.35"

# kubeconfig path; tells kubelet how to reach the API server

KUBELET_KUBECONFIG="--kubeconfig=/usr/local/kubernetes/config/kubelet.kubeconfig"

# whether containers may request privileged mode (default: false)

KUBE_ALLOW_PRIV="--allow-privileged=false"

# DNS settings: the cluster DNS IP

KUBELET_DNS_IP="--cluster-dns=10.217.135.36"

KUBELET_DNS_DOMAIN="--cluster-domain=cluster.local"

# do not refuse to start when swap is enabled

KUBELET_SWAP="--fail-swap-on=false"

Configure the kubelet systemd unit file

vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=cgroupfs"

EnvironmentFile=-/usr/local/kubernetes/config/kubelet

ExecStart=/usr/local/kubernetes/bin/kubelet \

${KUBE_LOGTOSTDERR} \

${KUBE_LOG_LEVEL} \

${NODE_ADDRESS} \

${NODE_PORT} \

${NODE_HOSTNAME} \

${KUBELET_KUBECONFIG} \

${KUBE_ALLOW_PRIV} \

${KUBELET_DNS_IP} \

${KUBELET_DNS_DOMAIN} \

${KUBELET_SWAP}

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

swapoff -a # turn off swap

systemctl enable kubelet # enable kubelet at boot

systemctl start kubelet # start kubelet

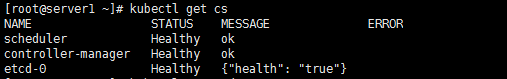

kubectl get cs

The master node is now installed; next, install the node machines.

Install the nodes (on server2 and server3)

tar xf kubernetes-node-linux-amd64.tar.gz

cp /root/kubernetes/node/bin/{kubelet,kube-proxy} /usr/local/kubernetes/bin/

Install kubelet

vim /usr/local/kubernetes/config/kubelet.kubeconfig

apiVersion: v1

kind: Config

clusters:

- cluster:

    server: http://10.217.135.35:8080

  name: local

contexts:

- context:

    cluster: local

  name: local

current-context: local

vim /usr/local/kubernetes/config/kubelet

# log to standard error

KUBE_LOGTOSTDERR="--logtostderr=true"

# log level

KUBE_LOG_LEVEL="--v=4"

# kubelet service IP address (this machine)

NODE_ADDRESS="--address=10.217.135.36"

# kubelet service port

NODE_PORT="--port=10250"

# custom node name (this machine's IP)

NODE_HOSTNAME="--hostname-override=10.217.135.36"

# kubeconfig path; tells kubelet how to reach the API server

KUBELET_KUBECONFIG="--kubeconfig=/usr/local/kubernetes/config/kubelet.kubeconfig"

# whether containers may request privileged mode (default: false)

KUBE_ALLOW_PRIV="--allow-privileged=false"

# DNS settings: the cluster DNS IP

KUBELET_DNS_IP="--cluster-dns=10.217.135.36"

KUBELET_DNS_DOMAIN="--cluster-domain=cluster.local"

# do not refuse to start when swap is enabled

KUBELET_SWAP="--fail-swap-on=false"

kubelet systemd unit file

vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=-/usr/local/kubernetes/config/kubelet

ExecStart=/usr/local/kubernetes/bin/kubelet \

${KUBE_LOGTOSTDERR} \

${KUBE_LOG_LEVEL} \

${NODE_ADDRESS} \

${NODE_PORT} \

${NODE_HOSTNAME} \

${KUBELET_KUBECONFIG} \

${KUBE_ALLOW_PRIV} \

${KUBELET_DNS_IP} \

${KUBELET_DNS_DOMAIN} \

${KUBELET_SWAP}

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

swapoff -a # turn off swap

systemctl enable kubelet # enable kubelet at boot

systemctl start kubelet # start kubelet

Install proxy

Configure the proxy config file

vim /usr/local/kubernetes/config/kube-proxy

# log to standard error

KUBE_LOGTOSTDERR="--logtostderr=true"

# log level

KUBE_LOG_LEVEL="--v=4"

# custom node name (this machine's IP)

NODE_HOSTNAME="--hostname-override=10.217.135.36"

# API server address (master IP)

KUBE_MASTER="--master=http://10.217.135.35:8080"

Configure the proxy systemd unit file

vim /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/usr/local/kubernetes/config/kube-proxy

ExecStart=/usr/local/kubernetes/bin/kube-proxy \

${KUBE_LOGTOSTDERR} \

${KUBE_LOG_LEVEL} \

${NODE_HOSTNAME} \

${KUBE_MASTER}

Restart=on-failure

[Install]

WantedBy=multi-user.target

systemctl enable kube-proxy # enable kube-proxy at boot

systemctl restart kube-proxy # start kube-proxy

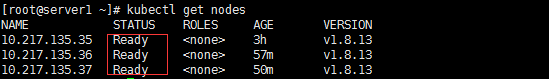

With the nodes installed, check the node status.

On the master node (server1):

kubectl get nodes

Install flannel (on server1, server2, and server3)

tar xf flannel-v0.7.1-linux-amd64.tar.gz

cp /root/{flanneld,mk-docker-opts.sh} /usr/local/kubernetes/bin/

Configure the flanneld file

vim /usr/local/kubernetes/config/flanneld

# Flanneld configuration options

# etcd url location. Point this to the server where etcd runs (this machine's IP)

FLANNEL_ETCD="http://10.217.135.36:2379"

# etcd config key. This is the configuration key that flannel queries

# for address range assignment (the etcd key directory)

FLANNEL_ETCD_KEY="/atomic.io/network"

# Any additional options that you want to pass (here, the network interface name)

FLANNEL_OPTIONS="--iface=ens192"

flannel systemd unit file

vim /usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/usr/local/kubernetes/config/flanneld

EnvironmentFile=-/etc/sysconfig/docker-network

ExecStart=/usr/local/kubernetes/bin/flanneld -etcd-endpoints=${FLANNEL_ETCD} -etcd-prefix=${FLANNEL_ETCD_KEY} $FLANNEL_OPTIONS

ExecStartPost=/usr/local/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/docker

Restart=on-failure

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

Configure the etcd key (note: this only needs to be run on one machine; the others sync automatically)

etcdctl mkdir /atomic.io/network

etcdctl mk /atomic.io/network/config "{ \"Network\": \"172.17.0.0/16\", \"SubnetLen\": 24, \"Backend\": { \"Type\": \"vxlan\" } }"

{ "Network": "172.17.0.0/16", "SubnetLen": 24, "Backend": { "Type": "vxlan" } }
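As a quick sanity check on the values in this network config, the arithmetic relating Network and SubnetLen can be worked out in shell. This is plain prefix arithmetic and gives an upper bound only; flannel's own allocation range settings may hand out fewer subnets. `subnet_count` and `addresses_per_subnet` are hypothetical helper names.

```shell
# subnet_count NETWORK_PREFIX_LEN SUBNET_LEN
# Number of SUBNET_LEN-sized blocks that fit inside the network prefix.
subnet_count() {
    echo $(( 1 << ($2 - $1) ))
}

# addresses_per_subnet SUBNET_LEN
# Number of IPv4 addresses in one block of the given prefix length.
addresses_per_subnet() {
    echo $(( 1 << (32 - $1) ))
}

# 172.17.0.0/16 split into /24 blocks, one block per host:
echo "subnets: $(subnet_count 16 24), addresses each: $(addresses_per_subnet 24)"
```

With a /16 network and a SubnetLen of 24 there is room for far more hosts than the three machines used here.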

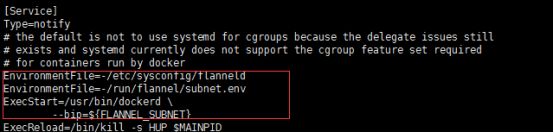

Configure docker

For Docker to use flanneld, some configuration has to be added.

vim /usr/lib/systemd/system/docker.service and add the following to the [Service] section:

EnvironmentFile=-/etc/sysconfig/flanneld

EnvironmentFile=-/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd \

--bip=${FLANNEL_SUBNET}

systemctl enable flanneld.service #设置开机自启动flanneld

systemctl restart flanneld.service #启动flanneld

systemctl daemon-reload #保存docker

systemctl restart docker.service #重启docker生效配置

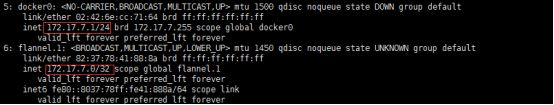

ip addr 查看flanneld与docker生成IP是否在同网段,同网段就是正常.
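The same-subnet check can also be scripted instead of read off the `ip addr` output by eye. The sketch below is an illustration only: `ip_to_int` and `in_cidr` are hypothetical helpers, and the sample addresses in the example are made up, since the actual subnet flannel assigns differs per host.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1          # split the address into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# in_cidr IP CIDR
# Exit status 0 when IP lies inside CIDR (for example 172.17.52.1/24).
in_cidr() {
    net=${2%/*} len=${2#*/}
    mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$1") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Example with made-up addresses:
if in_cidr 172.17.52.7 172.17.52.1/24; then
    echo "same subnet"
fi

# On a real host the inputs could come from flannel and docker0:
#   . /run/flannel/subnet.env
#   docker_ip=$(ip -4 -o addr show docker0 | awk '{print $4}' | cut -d/ -f1)
#   in_cidr "$docker_ip" "$FLANNEL_SUBNET" && echo "docker0 is inside the flannel subnet"
```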

The basic k8s cluster is now up. Next, Harbor is set up to manage images for the cluster.

Harbor installation

Install docker-compose

Download page: https://github.com/docker/compose/releases/

wget https://github.com/docker/compose/releases/download/1.24.1/docker-compose-Linux-x86_64

cp docker-compose-Linux-x86_64 /usr/local/kubernetes/bin/docker-compose

chmod +x /usr/local/kubernetes/bin/docker-compose

docker-compose --version # check the version

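Install harbor

https://github.com/vmware/harbor/blob/master/docs/installation_guide.md (official installation guide)

https://github.com/vmware/harbor/releases (GitHub releases page). Because the GitHub downloads are hosted outside China, a mirror reachable from within China is used here:

wget http://harbor.orientsoft.cn/harbor-v1.5.0/harbor-offline-installer-v1.5.0.tgz

Once the download finishes, extract it under the kubernetes directory: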

tar xf harbor-offline-installer-v1.5.0.tgz -C /usr/local/kubernetes/

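Edit harbor's main config file

vim /usr/local/kubernetes/harbor/harbor.cfg

hostname = 10.217.135.35 # change to this machine's IP

Other settings such as the username and password can be changed as needed. The defaults are used here: user admin, password Harbor12345.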

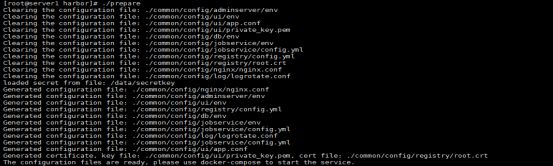

Install

cd /usr/local/kubernetes/harbor/ # the harbor install directory contains ready-to-run scripts

Run the install scripts

./prepare

./install.sh

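After installation there are a number of new docker images; among them you can see the image registry, nginx, and other services.

Open http://10.217.135.35 in a browser to reach the image management UI. Log in as user admin with password Harbor12345.

Add registry settings so that logins work from the Linux hosts.

Add the following on the master (server1):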

vim /etc/sysconfig/docker

OPTIONS='--selinux-enabled --log-driver=journald --signature-verification=false --insecure-registry=10.217.135.35'

Configure daemon.json and add the registry location

vim /etc/docker/daemon.json

{

"insecure-registries": [

"10.217.135.35"

]

}

Add a hosts entry

vim /etc/hosts

10.217.135.35 server1 harbor.dinginfo.com

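systemctl daemon-reload # reload systemd units

systemctl restart docker # restart docker; here the change did not take effect until after the restart

Login test

Login was tested successfully on both the server and the nodes.

docker login 10.217.135.35 # account: admin, password: Harbor12345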

cd /usr/local/kubernetes/harbor/

To change the configuration, first shut down docker-compose:

docker-compose down -v or docker-compose stop

Edit the harbor.cfg or docker-compose.yml config file

Start harbor

./prepare

docker-compose up -d or docker-compose start

After startup, refresh the page again; it loads normally.

Upload an image

Here the ubuntu image is uploaded.

First retag the image:

docker tag ubuntu:latest 10.217.135.35/library/ubuntu:latest

docker push 10.217.135.35/library/ubuntu:latest
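The tag-then-push pair follows a fixed naming pattern, registry/project/image:tag, so it can be wrapped in a small helper when several images have to be uploaded. A minimal sketch; `harbor_ref` and `push_to_harbor` are hypothetical names, and `library` is the project used in the commands above.

```shell
# harbor_ref REGISTRY PROJECT IMAGE
# Prints the full reference an image needs before it can be pushed to Harbor.
harbor_ref() {
    printf '%s/%s/%s\n' "$1" "$2" "$3"
}

# push_to_harbor REGISTRY PROJECT IMAGE
# Retags a local image for the registry and pushes it (requires a prior docker login).
push_to_harbor() {
    target=$(harbor_ref "$1" "$2" "$3")
    docker tag "$3" "$target" && docker push "$target"
}

# Example:
#   push_to_harbor 10.217.135.35 library ubuntu:latest
```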