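What is Spark?

Spark is a general-purpose parallel computing framework developed by the AMP Lab at UC Berkeley.

How does Spark differ from Hadoop?

Spark implements distributed computation in the MapReduce style and keeps the advantages of Hadoop MapReduce. Unlike MapReduce, a job's intermediate output and results can be kept in memory, so they do not have to be written to and read back from HDFS between steps. This makes Spark a better fit for iterative MapReduce-style algorithms such as those used in data mining and machine learning.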

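Where Spark fits

Spark is an in-memory iterative computing framework, suited to applications that operate on the same dataset many times. The more often the data is reused and the larger the volume that has to be read, the bigger the benefit. When the dataset is small but the computation is intensive, the benefit is comparatively small.

Because of how RDDs work, Spark is not a good fit for applications that make asynchronous, fine-grained updates to state, such as the storage layer of a web service or an incremental web crawler and indexer. In short, it does not suit application models built on incremental modification.

Overall, Spark applies to a fairly wide range of workloads and is reasonably general-purpose.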

Run modes

Local mode

Standalone mode

Mesos mode

YARN mode

Let's see how to run Spark in Standalone mode.

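1. Download and install

http://spark.apache.org/downloads.html

You can either download the source and build it yourself, or download a pre-built package (Spark runs on the JVM, so it is effectively cross-platform). This walkthrough downloads the pre-built package directly.

Choose a package type: Pre-built for Hadoop 2.4 and later.

Extract spark-1.3.0-bin-hadoop2.4.tgz and you are done.

PS: a Java runtime must be installed.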

~/project/spark-1.3.0-bin-hadoop2.4 $ java -version

java version "1.8.0_25"

Java(TM) SE Runtime Environment (build 1.8.0_25-b17)

Java HotSpot(TM) 64-Bit Server VM (build 25.25-b02, mixed mode)

2. Directory layout

The sbin directory holds the start and stop scripts:

~/project/spark-1.3.0-bin-hadoop2.4 $ tree sbin/

sbin/

├── slaves.sh

├── spark-config.sh

├── spark-daemon.sh

├── spark-daemons.sh

├── start-all.sh

├── start-history-server.sh

├── start-master.sh

├── start-slave.sh

├── start-slaves.sh

├── start-thriftserver.sh

├── stop-all.sh

├── stop-history-server.sh

├── stop-master.sh

├── stop-slaves.sh

└── stop-thriftserver.sh

The conf directory holds configuration templates:

~/project/spark-1.3.0-bin-hadoop2.4 $ tree conf/

conf/

├── fairscheduler.xml.template

├── log4j.properties.template

├── metrics.properties.template

├── slaves.template

├── spark-defaults.conf.template

└── spark-env.sh.template

3. Start the master

~/project/spark-1.3.0-bin-hadoop2.4 $ ./sbin/start-master.sh

starting org.apache.spark.deploy.master.Master, logging to /Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/sbin/../logs/spark-qpzhang-org.apache.spark.deploy.master.Master-1-qpzhangdeMac-mini.local.out

With no configuration at all, everything runs on defaults, as the log file shows:

~/project/spark-1.3.0-bin-hadoop2.4 $ cat logs/spark-qpzhang-org.apache.spark.deploy.master.Master-1-qpzhangdeMac-mini.local.out

Spark assembly has been built with Hive, including Datanucleus jars on classpath

Spark Command: /Library/Java/JavaVirtualMachines/jdk1.8.0_25.jdk/Contents/Home/bin/java -cp :/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/sbin/../conf:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/lib/spark-assembly-1.3.0-hadoop2.4.0.jar:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/lib/datanucleus-api-jdo-3.2.6.jar:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/lib/datanucleus-core-3.2.10.jar:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/lib/datanucleus-rdbms-3.2.9.jar -Dspark.akka.logLifecycleEvents=true -Xms512m -Xmx512m org.apache.spark.deploy.master.Master --ip qpzhangdeMac-mini.local --port 7077 --webui-port 8080

========================================

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

15/03/20 10:08:09 INFO Master: Registered signal handlers for [TERM, HUP, INT]

15/03/20 10:08:10 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

15/03/20 10:08:10 INFO SecurityManager: Changing view acls to: qpzhang

15/03/20 10:08:10 INFO SecurityManager: Changing modify acls to: qpzhang

15/03/20 10:08:10 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(qpzhang); users with modify permissions: Set(qpzhang)

15/03/20 10:08:10 INFO Slf4jLogger: Slf4jLogger started

15/03/20 10:08:10 INFO Remoting: Starting remoting

15/03/20 10:08:10 INFO Remoting: Remoting started; listening on addresses :[akka.tcp://[email protected]:7077]

15/03/20 10:08:10 INFO Remoting: Remoting now listens on addresses: [akka.tcp://[email protected]:7077]

15/03/20 10:08:10 INFO Utils: Successfully started service 'sparkMaster' on port 7077.

15/03/20 10:08:11 INFO Server: jetty-8.y.z-SNAPSHOT

15/03/20 10:08:11 INFO AbstractConnector: Started [email protected]:6066

15/03/20 10:08:11 INFO Utils: Successfully started service on port 6066.

15/03/20 10:08:11 INFO StandaloneRestServer: Started REST server for submitting applications on port 6066

15/03/20 10:08:11 INFO Master: Starting Spark master at spark://qpzhangdeMac-mini.local:7077

15/03/20 10:08:11 INFO Master: Running Spark version 1.3.0

15/03/20 10:08:11 INFO Server: jetty-8.y.z-SNAPSHOT

15/03/20 10:08:11 INFO AbstractConnector: Started [email protected]:8080

15/03/20 10:08:11 INFO Utils: Successfully started service 'MasterUI' on port 8080.

15/03/20 10:08:11 INFO MasterWebUI: Started MasterWebUI at http://10.60.215.41:8080

15/03/20 10:08:11 INFO Master: I have been elected leader! New state: ALIVE

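A few important facts stand out in the output: the REST submission service listens on port 6066, the Spark master on port 7077, and the web UI on port 8080, and the current node has elected itself leader.

At this point the overall status of the master can be viewed at http://localhost:8080/.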
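The ports read off the master log can also be extracted mechanically. The following is a minimal sketch, not something shipped with Spark: `list_service_ports` is a hypothetical helper name, and the three sample lines are copied from the master log shown earlier. On a real machine the function would be fed the file under logs/ instead of the inline sample.

```shell
#!/bin/sh
# Hypothetical helper (not part of Spark): reads log text on stdin and prints
# "<port> <service name>" for every "Successfully started service" line.
list_service_ports() {
  awk -v q="'" '/Successfully started service/ {
    port = $NF
    sub(/\.$/, "", port)               # drop the trailing period
    n = split($0, parts, q)            # a quoted service name lands in parts[2]
    name = (n >= 3) ? parts[2] : "(unnamed)"
    print port, name
  }'
}

# Sample lines taken from the master log in this walkthrough.
list_service_ports <<'EOF'
15/03/20 10:08:10 INFO Utils: Successfully started service 'sparkMaster' on port 7077.
15/03/20 10:08:11 INFO Utils: Successfully started service on port 6066.
15/03/20 10:08:11 INFO Utils: Successfully started service 'MasterUI' on port 8080.
EOF
```

For the sample this lists 7077, 6066 and 8080 next to their service names. The 6066 line carries no quoted name in the log, so the sketch labels it `(unnamed)`.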

4. Attach a worker to the master

~/project/spark-1.3.0-bin-hadoop2.4 $ ./bin/spark-class org.apache.spark.deploy.worker.Worker spark://qpzhangdeMac-mini.local:7077

Spark assembly has been built with Hive, including Datanucleus jars on classpath

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

15/03/20 10:33:49 INFO Worker: Registered signal handlers for [TERM, HUP, INT]

15/03/20 10:33:49 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

15/03/20 10:33:49 INFO SecurityManager: Changing view acls to: qpzhang

15/03/20 10:33:49 INFO SecurityManager: Changing modify acls to: qpzhang

15/03/20 10:33:49 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(qpzhang); users with modify permissions: Set(qpzhang)

15/03/20 10:33:50 INFO Slf4jLogger: Slf4jLogger started

15/03/20 10:33:50 INFO Remoting: Starting remoting

15/03/20 10:33:50 INFO Remoting: Remoting started; listening on addresses :[akka.tcp://[email protected]:60994]

15/03/20 10:33:50 INFO Remoting: Remoting now listens on addresses: [akka.tcp://[email protected]:60994]

15/03/20 10:33:50 INFO Utils: Successfully started service 'sparkWorker' on port 60994.

15/03/20 10:33:50 INFO Worker: Starting Spark worker 10.60.215.41:60994 with 8 cores, 7.0 GB RAM

15/03/20 10:33:50 INFO Worker: Running Spark version 1.3.0

15/03/20 10:33:50 INFO Worker: Spark home: /Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4

15/03/20 10:33:50 INFO Server: jetty-8.y.z-SNAPSHOT

15/03/20 10:33:50 INFO AbstractConnector: Started [email protected]:8081

15/03/20 10:33:50 INFO Utils: Successfully started service 'WorkerUI' on port 8081.

15/03/20 10:33:50 INFO WorkerWebUI: Started WorkerWebUI at http://10.60.215.41:8081

15/03/20 10:33:50 INFO Worker: Connecting to master akka.tcp://[email protected]:7077/user/Master...

15/03/20 10:33:50 INFO Worker: Successfully registered with master spark://qpzhangdeMac-mini.local:7077

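The log shows that the worker itself listens on port 60994 and also starts a UI of its own on port 8081; the worker's status can be viewed at http://10.60.215.41:8081. (It has to be said that any distributed system you pick these days needs a UI for monitoring its state.)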
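The master URL passed to the worker is the one the master announces in its own log, so it can be read from there rather than typed by hand. The sketch below makes that assumption explicit: `master_url_from_log` is a hypothetical helper name, and the sample lines are copied from the master log in step 3. It only prints the worker command from this step instead of running it, so it also works on a machine without Spark.

```shell
#!/bin/sh
# Hypothetical helper (not part of Spark): reads master log text on stdin and
# prints the spark://host:port URL from the "Starting Spark master at" line.
master_url_from_log() {
  sed -n 's|.*Starting Spark master at \(spark://[^ ]*\).*|\1|p' | head -n 1
}

# Sample lines taken from the master log in this walkthrough.
url=$(master_url_from_log <<'EOF'
15/03/20 10:08:11 INFO Master: Starting Spark master at spark://qpzhangdeMac-mini.local:7077
15/03/20 10:08:11 INFO Master: Running Spark version 1.3.0
EOF
)

# Print the worker command used in this step rather than executing it.
echo "./bin/spark-class org.apache.spark.deploy.worker.Worker $url"
```

On a real installation the heredoc would be replaced by the master's log file under logs/.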

5. Start the Spark shell:

~/project/spark-1.3.0-bin-hadoop2.4 $ ./bin/spark-shell

Spark assembly has been built with Hive, including Datanucleus jars on classpath

log4j:WARN No appenders could be found for logger (org.apache.hadoop.metrics2.lib.MutableMetricsFactory).

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

15/03/20 10:43:39 INFO SecurityManager: Changing view acls to: qpzhang

15/03/20 10:43:39 INFO SecurityManager: Changing modify acls to: qpzhang

15/03/20 10:43:39 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(qpzhang); users with modify permissions: Set(qpzhang)

15/03/20 10:43:39 INFO HttpServer: Starting HTTP Server

15/03/20 10:43:39 INFO Server: jetty-8.y.z-SNAPSHOT

15/03/20 10:43:39 INFO AbstractConnector: Started [email protected]:61644

15/03/20 10:43:39 INFO Utils: Successfully started service 'HTTP class server' on port 61644.

Welcome to

       ____              __
      / __/__  ___ _____/ /__
     _\ \/ _ \/ _ `/ __/  '_/
    /___/ .__/\_,_/_/ /_/\_\   version 1.3.0
       /_/

Using Scala version 2.10.4 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_25)

Type in expressions to have them evaluated.

Type :help for more information.

15/03/20 10:43:43 INFO SparkContext: Running Spark version 1.3.0

15/03/20 10:43:43 INFO SecurityManager: Changing view acls to: qpzhang

15/03/20 10:43:43 INFO SecurityManager: Changing modify acls to: qpzhang

15/03/20 10:43:43 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(qpzhang); users with modify permissions: Set(qpzhang)

15/03/20 10:43:43 INFO Slf4jLogger: Slf4jLogger started

15/03/20 10:43:43 INFO Remoting: Starting remoting

15/03/20 10:43:43 INFO Remoting: Remoting started; listening on addresses :[akka.tcp://[email protected]:61645]

15/03/20 10:43:43 INFO Utils: Successfully started service 'sparkDriver' on port 61645.

15/03/20 10:43:43 INFO SparkEnv: Registering MapOutputTracker

15/03/20 10:43:44 INFO SparkEnv: Registering BlockManagerMaster

15/03/20 10:43:44 INFO DiskBlockManager: Created local directory at /var/folders/2l/195zcc1n0sn2wjfjwf9hl9d80000gn/T/spark-5349b1ce-bd10-4f44-9571-da660c1a02a3/blockmgr-a519687e-0cc3-45e4-839a-f93ac8f1397b

15/03/20 10:43:44 INFO MemoryStore: MemoryStore started with capacity 265.1 MB

15/03/20 10:43:44 INFO HttpFileServer: HTTP File server directory is /var/folders/2l/195zcc1n0sn2wjfjwf9hl9d80000gn/T/spark-29d81b59-ec6a-4595-b2fb-81bf6b1d3b10/httpd-c572e4a5-ff85-44c9-a21f-71fb34b831e1

15/03/20 10:43:44 INFO HttpServer: Starting HTTP Server

15/03/20 10:43:44 INFO Server: jetty-8.y.z-SNAPSHOT

15/03/20 10:43:44 INFO AbstractConnector: Started [email protected]:61646

15/03/20 10:43:44 INFO Utils: Successfully started service 'HTTP file server' on port 61646.

15/03/20 10:43:44 INFO SparkEnv: Registering OutputCommitCoordinator

15/03/20 10:43:44 INFO Server: jetty-8.y.z-SNAPSHOT

15/03/20 10:43:44 INFO AbstractConnector: Started [email protected]:4040

15/03/20 10:43:44 INFO Utils: Successfully started service 'SparkUI' on port 4040.

15/03/20 10:43:44 INFO SparkUI: Started SparkUI at http://10.60.215.41:4040

15/03/20 10:43:44 INFO Executor: Starting executor ID on host localhost

15/03/20 10:43:44 INFO Executor: Using REPL class URI: http://10.60.215.41:61644

15/03/20 10:43:44 INFO AkkaUtils: Connecting to HeartbeatReceiver: akka.tcp://[email protected]:61645/user/HeartbeatReceiver

15/03/20 10:43:44 INFO NettyBlockTransferService: Server created on 61651

15/03/20 10:43:44 INFO BlockManagerMaster: Trying to register BlockManager

15/03/20 10:43:44 INFO BlockManagerMasterActor: Registering block manager localhost:61651 with 265.1 MB RAM, BlockManagerId(, localhost, 61651)

15/03/20 10:43:44 INFO BlockManagerMaster: Registered BlockManager

15/03/20 10:43:44 INFO SparkILoop: Created spark context..

Spark context available as sc.

15/03/20 10:43:45 INFO SparkILoop: Created sql context (with Hive support)..

SQL context available as sqlContext.

scala>

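The output shows yet another batch of ports (the various services have to talk to each other, so there is no way around it), including the UI, the driver, and so on. The warning lines say that no configuration was provided, so defaults are used. Configuration will be covered later; for now, let's get things running with the defaults.

6. Issue commands through the shell

Let's run a few of the commands from the overview on the official site.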

scala> val textFile = sc.textFile("README.md") // load a data file; the path can be local, on HDFS, or elsewhere

15/03/20 10:55:20 INFO MemoryStore: ensureFreeSpace(159118) called with curMem=0, maxMem=278019440

15/03/20 10:55:20 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 155.4 KB, free 265.0 MB)

15/03/20 10:55:20 INFO MemoryStore: ensureFreeSpace(22692) called with curMem=159118, maxMem=278019440

15/03/20 10:55:20 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 22.2 KB, free 265.0 MB)

15/03/20 10:55:20 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on localhost:61651 (size: 22.2 KB, free: 265.1 MB)

15/03/20 10:55:20 INFO BlockManagerMaster: Updated info of block broadcast_0_piece0

15/03/20 10:55:20 INFO SparkContext: Created broadcast 0 from textFile at :21

textFile: org.apache.spark.rdd.RDD[String] = README.md MapPartitionsRDD[1] at textFile at :21

scala> textFile.count() // count the lines in the file

15/03/20 10:56:38 INFO FileInputFormat: Total input paths to process : 1

15/03/20 10:56:38 INFO SparkContext: Starting job: count at :24

15/03/20 10:56:38 INFO DAGScheduler: Got job 0 (count at :24) with 2 output partitions (allowLocal=false)

15/03/20 10:56:38 INFO DAGScheduler: Final stage: Stage 0 (count at :24)

15/03/20 10:56:38 INFO DAGScheduler: Parents of final stage: List()

15/03/20 10:56:38 INFO DAGScheduler: Missing parents: List()

15/03/20 10:56:38 INFO DAGScheduler: Submitting Stage 0 (README.md MapPartitionsRDD[1] at textFile at :21), which has no missing parents

15/03/20 10:56:38 INFO MemoryStore: ensureFreeSpace(2632) called with curMem=181810, maxMem=278019440

15/03/20 10:56:38 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 2.6 KB, free 265.0 MB)

15/03/20 10:56:38 INFO MemoryStore: ensureFreeSpace(1923) called with curMem=184442, maxMem=278019440

15/03/20 10:56:38 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 1923.0 B, free 265.0 MB)

15/03/20 10:56:38 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on localhost:61651 (size: 1923.0 B, free: 265.1 MB)

15/03/20 10:56:38 INFO BlockManagerMaster: Updated info of block broadcast_1_piece0

15/03/20 10:56:38 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:839

15/03/20 10:56:38 INFO DAGScheduler: Submitting 2 missing tasks from Stage 0 (README.md MapPartitionsRDD[1] at textFile at :21)

15/03/20 10:56:38 INFO TaskSchedulerImpl: Adding task set 0.0 with 2 tasks

15/03/20 10:56:38 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0, localhost, PROCESS_LOCAL, 1327 bytes)

15/03/20 10:56:38 INFO TaskSetManager: Starting task 1.0 in stage 0.0 (TID 1, localhost, PROCESS_LOCAL, 1327 bytes)

15/03/20 10:56:38 INFO Executor: Running task 1.0 in stage 0.0 (TID 1)

15/03/20 10:56:38 INFO Executor: Running task 0.0 in stage 0.0 (TID 0)

15/03/20 10:56:38 INFO HadoopRDD: Input split: file:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/README.md:0+1814

15/03/20 10:56:38 INFO HadoopRDD: Input split: file:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/README.md:1814+1815

15/03/20 10:56:38 INFO deprecation: mapred.tip.id is deprecated. Instead, use mapreduce.task.id

15/03/20 10:56:38 INFO deprecation: mapred.task.id is deprecated. Instead, use mapreduce.task.attempt.id

15/03/20 10:56:38 INFO deprecation: mapred.task.is.map is deprecated. Instead, use mapreduce.task.ismap

15/03/20 10:56:38 INFO deprecation: mapred.task.partition is deprecated. Instead, use mapreduce.task.partition

15/03/20 10:56:38 INFO deprecation: mapred.job.id is deprecated. Instead, use mapreduce.job.id

15/03/20 10:56:38 INFO Executor: Finished task 1.0 in stage 0.0 (TID 1). 1830 bytes result sent to driver

15/03/20 10:56:38 INFO Executor: Finished task 0.0 in stage 0.0 (TID 0). 1830 bytes result sent to driver

15/03/20 10:56:38 INFO TaskSetManager: Finished task 0.0 in stage 0.0 (TID 0) in 120 ms on localhost (1/2)

15/03/20 10:56:38 INFO TaskSetManager: Finished task 1.0 in stage 0.0 (TID 1) in 111 ms on localhost (2/2)

15/03/20 10:56:38 INFO TaskSchedulerImpl: Removed TaskSet 0.0, whose tasks have all completed, from pool

15/03/20 10:56:38 INFO DAGScheduler: Stage 0 (count at :24) finished in 0.134 s

15/03/20 10:56:38 INFO DAGScheduler: Job 0 finished: count at :24, took 0.254626 s

res0: Long = 98

scala> textFile.first() // return the first item, i.e. the first line

15/03/20 10:59:31 INFO SparkContext: Starting job: first at :24

15/03/20 10:59:31 INFO DAGScheduler: Got job 1 (first at :24) with 1 output partitions (allowLocal=true)

15/03/20 10:59:31 INFO DAGScheduler: Final stage: Stage 1 (first at :24)

15/03/20 10:59:31 INFO DAGScheduler: Parents of final stage: List()

15/03/20 10:59:31 INFO DAGScheduler: Missing parents: List()

15/03/20 10:59:31 INFO DAGScheduler: Submitting Stage 1 (README.md MapPartitionsRDD[1] at textFile at :21), which has no missing parents

15/03/20 10:59:31 INFO MemoryStore: ensureFreeSpace(2656) called with curMem=186365, maxMem=278019440

15/03/20 10:59:31 INFO MemoryStore: Block broadcast_2 stored as values in memory (estimated size 2.6 KB, free 265.0 MB)

15/03/20 10:59:31 INFO MemoryStore: ensureFreeSpace(1945) called with curMem=189021, maxMem=278019440

15/03/20 10:59:31 INFO MemoryStore: Block broadcast_2_piece0 stored as bytes in memory (estimated size 1945.0 B, free 265.0 MB)

15/03/20 10:59:31 INFO BlockManagerInfo: Added broadcast_2_piece0 in memory on localhost:61651 (size: 1945.0 B, free: 265.1 MB)

15/03/20 10:59:31 INFO BlockManagerMaster: Updated info of block broadcast_2_piece0

15/03/20 10:59:31 INFO SparkContext: Created broadcast 2 from broadcast at DAGScheduler.scala:839

15/03/20 10:59:31 INFO DAGScheduler: Submitting 1 missing tasks from Stage 1 (README.md MapPartitionsRDD[1] at textFile at :21)

15/03/20 10:59:31 INFO TaskSchedulerImpl: Adding task set 1.0 with 1 tasks

15/03/20 10:59:31 INFO TaskSetManager: Starting task 0.0 in stage 1.0 (TID 2, localhost, PROCESS_LOCAL, 1327 bytes)

15/03/20 10:59:31 INFO Executor: Running task 0.0 in stage 1.0 (TID 2)

15/03/20 10:59:31 INFO HadoopRDD: Input split: file:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/README.md:0+1814

15/03/20 10:59:31 INFO Executor: Finished task 0.0 in stage 1.0 (TID 2). 1809 bytes result sent to driver

15/03/20 10:59:31 INFO TaskSetManager: Finished task 0.0 in stage 1.0 (TID 2) in 8 ms on localhost (1/1)

15/03/20 10:59:31 INFO DAGScheduler: Stage 1 (first at :24) finished in 0.009 s

15/03/20 10:59:31 INFO TaskSchedulerImpl: Removed TaskSet 1.0, whose tasks have all completed, from pool

15/03/20 10:59:31 INFO DAGScheduler: Job 1 finished: first at :24, took 0.016292 s

res1: String = # Apache Spark

scala> val linesWithSpark = textFile.filter(line => line.contains("Spark")) // define a filter; this one keeps the lines that contain the keyword Spark

linesWithSpark: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[2] at filter at :23

scala> linesWithSpark.count() // count the lines that pass the filter

15/03/20 11:00:28 INFO SparkContext: Starting job: count at :26

15/03/20 11:00:28 INFO DAGScheduler: Got job 2 (count at :26) with 2 output partitions (allowLocal=false)

15/03/20 11:00:28 INFO DAGScheduler: Final stage: Stage 2 (count at :26)

15/03/20 11:00:28 INFO DAGScheduler: Parents of final stage: List()

15/03/20 11:00:28 INFO DAGScheduler: Missing parents: List()

15/03/20 11:00:28 INFO DAGScheduler: Submitting Stage 2 (MapPartitionsRDD[2] at filter at :23), which has no missing parents

15/03/20 11:00:28 INFO MemoryStore: ensureFreeSpace(2840) called with curMem=190966, maxMem=278019440

15/03/20 11:00:28 INFO MemoryStore: Block broadcast_3 stored as values in memory (estimated size 2.8 KB, free 265.0 MB)

15/03/20 11:00:28 INFO MemoryStore: ensureFreeSpace(2029) called with curMem=193806, maxMem=278019440

15/03/20 11:00:28 INFO MemoryStore: Block broadcast_3_piece0 stored as bytes in memory (estimated size 2029.0 B, free 265.0 MB)

15/03/20 11:00:28 INFO BlockManagerInfo: Added broadcast_3_piece0 in memory on localhost:61651 (size: 2029.0 B, free: 265.1 MB)

15/03/20 11:00:28 INFO BlockManagerMaster: Updated info of block broadcast_3_piece0

15/03/20 11:00:28 INFO SparkContext: Created broadcast 3 from broadcast at DAGScheduler.scala:839

15/03/20 11:00:28 INFO DAGScheduler: Submitting 2 missing tasks from Stage 2 (MapPartitionsRDD[2] at filter at :23)

15/03/20 11:00:28 INFO TaskSchedulerImpl: Adding task set 2.0 with 2 tasks

15/03/20 11:00:28 INFO TaskSetManager: Starting task 0.0 in stage 2.0 (TID 3, localhost, PROCESS_LOCAL, 1327 bytes)

15/03/20 11:00:28 INFO TaskSetManager: Starting task 1.0 in stage 2.0 (TID 4, localhost, PROCESS_LOCAL, 1327 bytes)

15/03/20 11:00:28 INFO Executor: Running task 0.0 in stage 2.0 (TID 3)

15/03/20 11:00:28 INFO Executor: Running task 1.0 in stage 2.0 (TID 4)

15/03/20 11:00:28 INFO HadoopRDD: Input split: file:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/README.md:1814+1815

15/03/20 11:00:28 INFO HadoopRDD: Input split: file:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/README.md:0+1814

15/03/20 11:00:28 INFO Executor: Finished task 1.0 in stage 2.0 (TID 4). 1830 bytes result sent to driver

15/03/20 11:00:28 INFO Executor: Finished task 0.0 in stage 2.0 (TID 3). 1830 bytes result sent to driver

15/03/20 11:00:28 INFO TaskSetManager: Finished task 1.0 in stage 2.0 (TID 4) in 9 ms on localhost (1/2)

15/03/20 11:00:28 INFO TaskSetManager: Finished task 0.0 in stage 2.0 (TID 3) in 11 ms on localhost (2/2)

15/03/20 11:00:28 INFO DAGScheduler: Stage 2 (count at :26) finished in 0.011 s

15/03/20 11:00:28 INFO TaskSchedulerImpl: Removed TaskSet 2.0, whose tasks have all completed, from pool

15/03/20 11:00:28 INFO DAGScheduler: Job 2 finished: count at :26, took 0.019407 s

res2: Long = 19 // 19 lines contain the keyword Spark

scala> linesWithSpark.first() // print the first matching line

15/03/20 11:00:35 INFO SparkContext: Starting job: first at :26

15/03/20 11:00:35 INFO DAGScheduler: Got job 3 (first at :26) with 1 output partitions (allowLocal=true)

15/03/20 11:00:35 INFO DAGScheduler: Final stage: Stage 3 (first at :26)

15/03/20 11:00:35 INFO DAGScheduler: Parents of final stage: List()

15/03/20 11:00:35 INFO DAGScheduler: Missing parents: List()

15/03/20 11:00:35 INFO DAGScheduler: Submitting Stage 3 (MapPartitionsRDD[2] at filter at :23), which has no missing parents

15/03/20 11:00:35 INFO MemoryStore: ensureFreeSpace(2864) called with curMem=195835, maxMem=278019440

15/03/20 11:00:35 INFO MemoryStore: Block broadcast_4 stored as values in memory (estimated size 2.8 KB, free 265.0 MB)

15/03/20 11:00:35 INFO MemoryStore: ensureFreeSpace(2048) called with curMem=198699, maxMem=278019440

15/03/20 11:00:35 INFO MemoryStore: Block broadcast_4_piece0 stored as bytes in memory (estimated size 2.0 KB, free 264.9 MB)

15/03/20 11:00:35 INFO BlockManagerInfo: Added broadcast_4_piece0 in memory on localhost:61651 (size: 2.0 KB, free: 265.1 MB)

15/03/20 11:00:35 INFO BlockManagerMaster: Updated info of block broadcast_4_piece0

15/03/20 11:00:35 INFO SparkContext: Created broadcast 4 from broadcast at DAGScheduler.scala:839

15/03/20 11:00:35 INFO DAGScheduler: Submitting 1 missing tasks from Stage 3 (MapPartitionsRDD[2] at filter at :23)

15/03/20 11:00:35 INFO TaskSchedulerImpl: Adding task set 3.0 with 1 tasks

15/03/20 11:00:35 INFO TaskSetManager: Starting task 0.0 in stage 3.0 (TID 5, localhost, PROCESS_LOCAL, 1327 bytes)

15/03/20 11:00:35 INFO Executor: Running task 0.0 in stage 3.0 (TID 5)

15/03/20 11:00:35 INFO HadoopRDD: Input split: file:/Users/qpzhang/project/spark-1.3.0-bin-hadoop2.4/README.md:0+1814

15/03/20 11:00:35 INFO Executor: Finished task 0.0 in stage 3.0 (TID 5). 1809 bytes result sent to driver

15/03/20 11:00:35 INFO TaskSetManager: Finished task 0.0 in stage 3.0 (TID 5) in 10 ms on localhost (1/1)

15/03/20 11:00:35 INFO DAGScheduler: Stage 3 (first at :26) finished in 0.010 s

15/03/20 11:00:35 INFO TaskSchedulerImpl: Removed TaskSet 3.0, whose tasks have all completed, from pool

15/03/20 11:00:35 INFO DAGScheduler: Job 3 finished: first at :26, took 0.016494 s

res3: String = # Apache Spark
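The four results (line count, first line, count of lines containing Spark, first such line) are simple enough to cross-check with ordinary shell tools. The sketch below is only a sanity check under stated assumptions, not how Spark computes them: the helper names are made up for this example, and the sample file is a tiny stand-in so the script runs anywhere.

```shell
#!/bin/sh
# Plain-shell counterparts of the RDD calls in this step, for cross-checking.
# The helper names are hypothetical; they are not Spark commands.
count_lines()      { wc -l < "$1" | tr -d ' '; }          # compare with textFile.count()
first_line()       { head -n 1 "$1"; }                    # compare with textFile.first()
count_lines_with() { grep -cF -- "$1" "$2"; }             # compare with linesWithSpark.count()
first_line_with()  { grep -F -- "$1" "$2" | head -n 1; }  # compare with linesWithSpark.first()

# Made-up four-line sample so the sketch does not depend on a Spark checkout.
sample=$(mktemp)
printf '%s\n' '# Apache Spark' '' 'Spark is a fast engine.' 'See the docs.' > "$sample"

count_lines "$sample"
first_line "$sample"
count_lines_with Spark "$sample"
first_line_with Spark "$sample"

rm -f "$sample"
```

Pointing the helpers at the real README.md in the Spark directory should give numbers comparable to res0 through res3. One caveat: `wc -l` counts newline characters, so a file whose last line has no trailing newline would come out one lower.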

For more commands, see: https://spark.apache.org/docs/latest/quick-start.html

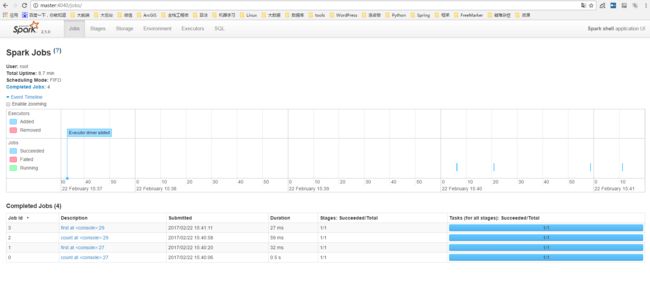

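While the commands run, the job list and their status can be seen in the UI:

That gets things running, so the first step is done. What does the Spark architecture look like? How does it work internally? How do you customize the configuration? How do you scale out to a distributed cluster? How do you write programs against it? Those questions will be explored step by step later.

Please credit the source when reposting: http://www.cnblogs.com/zhangqingping/p/4352977.html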