CDH5.7Hadoop集群搭建(离线版)

用了一周多的时间终于把CDH版Hadoop部署在了测试环境(部分组件未安装成功),本文将就这个部署过程做个总结。

一、Hadoop版本选择。

Hadoop大致可分为Apache Hadoop和第三方发行第三方发行版Hadoop,考虑到Hadoop集群部署的高效,集群的稳定性,以及后期集中的配置管理,业界多使用Cloudera公司的发行版,简称为CDH。

下面是转载的Hadoop社区版本与第三方发行版本的比较:

Apache社区版本

优点:

完全开源免费。

社区活跃

文档、资料详实

缺点:

复杂的版本管理。版本管理比较混乱的,各种版本层出不穷,让很多使用者不知所措。

复杂的集群部署、安装、配置。通常按照集群需要编写大量的配置文件,分发到每一台节点上,容易出错,效率低下。

复杂的集群运维。对集群的监控,运维,需要安装第三方的其他软件,如ganglia,nagois等,运维难度较大。

复杂的生态环境。在Hadoop生态圈中,组件的选择、使用,比如Hive,Mahout,Sqoop,Flume,Spark,Oozie等等,需要大量考虑兼容性的问题,版本是否兼容,组件是否有冲突,编译是否能通过等。经常会浪费大量的时间去编译组件,解决版本冲突问题。

第三方发行版本(如CDH,HDP,MapR等)

优点:

基于Apache协议,100%开源。

版本管理清晰。比如Cloudera,CDH1,CDH2,CDH3,CDH4等,后面加上补丁版本,如CDH4.1.0 patch level 923.142,表示在原生态Apache Hadoop 0.20.2基础上添加了1065个patch。

比Apache Hadoop在兼容性、安全性、稳定性上有增强。第三方发行版通常都经过了大量的测试验证,有众多部署实例,大量的运行到各种生产环境。

版本更新快。通常情况,比如CDH每个季度会有一个update,每一年会有一个release。

基于稳定版本Apache Hadoop,并应用了最新Bug修复或Feature的patch

提供了部署、安装、配置工具,大大提高了集群部署的效率,可以在几个小时内部署好集群。

运维简单。提供了管理、监控、诊断、配置修改的工具,管理配置方便,定位问题快速、准确,使运维工作简单,有效。

缺点:

涉及到厂商锁定的问题。(可以通过技术解决)

转自:http://itindex.net/detail/51484-%E8%87%AA%E5%AD%A6-%E5%A4%A7%E6%95%B0%E6%8D%AE-%E7%94%9F%E4%BA%A7

更多内容请看原作者博客。

二、安装介质准备

安装介质准备和安装部分主要参考:http://blog.csdn.net/shawnhu007/article/details/52579204,对其内容进行少许补充以做到能傻瓜安装的目的。

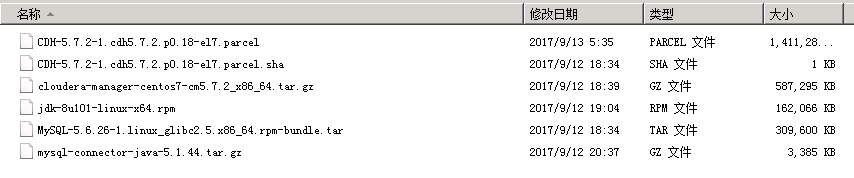

我们采用离线安装的方式,需要下载CDH离线安装包和相关组件:

操作系统采用CentOS Minimal 7 :http://124.205.69.134/files/4128000005F9FCB3/mirrors.zju.edu.cn/centos/7.4.1708/isos/x86_64/CentOS-7-x86_64-Minimal-1708.iso

JDK环境 版本:jdk-8u101-linux-x64.rpm 下载地址:oracle官网mysql

rpm包:http://dev.mysql.com/get/Downloads/MySQL-5.6/MySQL-5.6.26-1.linux_glibc2.5.x86_64.rpm-bundle.tarjdbc连接包mysql-connector-java-5.1.39-bin.jar: http://dev.mysql.com/downloads/connector/j/

CDH安装相关的包

cloudera manager包 :5.7.2 cloudera-manager-centos7-cm5.7.2_x86_64.tar.gz

下载地址:http://archive.cloudera.com/cm5/cm/5/cloudera-manager-centos7-cm5.7.2_x86_64.tar.gzCDH-5.7.2-1.cdh5.7.2.p0.11-el7.parcel

CDH-5.7.2-1.cdh5.7.2.p0.11-el7.parcel.sha1

manifest.json

以上三个下载地址在http://archive.cloudera.com/cdh5/parcels/5.7.2/,注意centos要下载el7的,我就因为一开始不清楚下的el6,结果提示parcels不知道redhat7,搞了好久才还原到初始重新来过

介质下载和安装部分主要参考:http://blog.csdn.net/shawnhu007/article/details/52579204

在线安装请参考文章(对网速有较高要求):http://www.cnblogs.com/ee900222/p/hadoop_3.html

三、操作系统准备

准备好三台环境一样的centos7在本地虚拟机VMWare上,Cloudera发行版比起Apache社区版本安装对硬件的要求更高,内存至少10G,不然后面你会遇到各种问题,或许都找不到答案。

本人前2次安装失败就是因为节点分配内存太少,建议对于cloudera-scm-server就需要至少4G的内存,cloudera-scm-agent的内存至少也需要1.5G以上。

3台虚拟机环境如下:

| IP地址 | 主机名 | 说明 |

| 10.250.160.32 | CDH1 | 主节点master,datanode |

| 10.250.160.33 | CDH2 | datanode |

| 10.250.160.34 | CDH3 | datanode |

四、开始安装前配置和预装软件

可以在VM中先安装1台机器,做完相关配置后再克隆出另外2台机器,以避免在3台机器上的重复配置

因为Centos7的最小安装版,所以首先解决首次开机联网问题

[root@cdh1~]$ vi /etc/sysconfig/network-scripts/ifcfg-enp0s3 将 ONBOOT=no 改为 ONBOOT=yes [root@cdh1~]$ systemct1 restart network [root@cdh1~]$ yum install net-tools //为了使用ifconfig查看网络

安装jdk(每台机器都要) ,首先卸载原有的openJDK

![]()

[root@cdh1~]$ java -version [root@cdh1~]$ rpm -qa | grep jdk java-1.7.0-openjdk-1.7.0.75-2.5.4.2.el7_0.x86_64 java-1.7.0-openjdk-headless-1.7.0.75-2.5.4.2.el7_0.x86_64 [root@cdh1~]# yum -y remove java-1.7.0-openjdk-1.7.0.75-2.5.4.2.el7_0.x86_64 [root@cdh1~]# yum -y remove java-1.7.0-openjdk-headless-1.7.0.75-2.5.4.2.el7_0.x86_64 [root@cdh1~]# java -version bash: /usr/bin/java: No such file or directory [root@cdh1~]# rpm -ivh jdk-8u101-linux-x64.rpm [root@cdh1~]# java -version java version "1.8.0_101"Java(TM) SE Runtime Environment (build 1.8.0_101-b13) Java HotSpot(TM) 64-Bit Server VM (build 25.101-b13, mixed mode)

![]()

修改每台节点服务器的有关配置hostname、selinux关闭,防火墙关闭;hostname修改:分别对三台都进行更改,并且注意每台名称和ip,每台都要配上hosts。下面以cdh1为例

![]()

[root@cdh1~]# vi /etc/sysconfig/network NETWORKING=yes HOSTNAME=cdh1 [root@cdh1~]# vi /etc/hosts127.0.0.1 localhost.cdh1 10.250.160.32 CDH1 主节点master,datanode cdh1 10.250.160.33 CDH1 主节点master,datanode cdh2 10.250.160.34 CDH1 主节点master,datanode cdh3

![]()

selinux关闭(所有节点官方文档要求),机器重启后生效。

[root@cdh1~]# vi /etc/sysconfig/selinux SELINUX=disabled [root@cdh1~]#sestatus -v SELinux status: disabled 表示已经关闭了

关闭防火墙

![]()

[root@cdh1~]# systemctl stop firewalld [root@cdh1~]# systemctl disable firewalld rm '/etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service'rm '/etc/systemd/system/basic.target.wants/firewalld.service'[root@cdh1~]# systemctl status firewalld firewalld.service - firewalld - dynamic firewall daemon Loaded: loaded (/usr/lib/systemd/system/firewalld.service; disabled) Active: inactive (dead)

![]()

NTP服务器配置(用于3个节点间实现时间同步)

![]()

[root@cdh1~]#yum -y install ntp 更改master的节点 [root@cdh1~]## vi /etc/ntp.conf 注释掉所有server *.*.*的指向,新添加一条可连接的ntp服务器(我选的本公司的ntp测试服务器) server 172.30.0.19 iburst 在其他节点上把ntp指向master服务器地址即可(/etc/ntp.conf下) server 10.250.160.32 iburst [root@cdh1~]## systemctl start ntpd //启动ntp服务[root@cdh1~]## systemctl status ntpd //查看ntp服务状态

![]()

SSH无密码登录配置,各个节点都需要设置免登录密码

下面以到10.250.160.32的免密登录设置举例

![]()

[root@cdh1 /]# ssh-keygen -t rsa Generating public/private rsa key pair. Enter file in which to save the key (/root/.ssh/id_rsa):/root/.ssh/id_rsa already exists. Overwrite (y/n)? y Enter passphrase (empty for no passphrase): Enter same passphrase again: Your identification has been saved in /root/.ssh/id_rsa. Your public key has been saved in /root/.ssh/id_rsa.pub. The key fingerprint is: 1d:e9:b4:ed:1d:e5:c6:a7:f3:23:ac:02:2b:8c:fc:ca root@cdh1 The key's randomart image is:+--[ RSA 2048]----+ | | | . | | + .| | + + + | | S + . . =| | . . . +.| | . o o o + | | .o o . . o + | | Eo.. ... . o| +-----------------+[root@cdh1 /]# ssh-copy-id 10.250.160.33/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys [email protected]'s password:Number of key(s) added: 1Now try logging into the machine, with: "ssh '192.168.42.129'"and check to make sure that only the key(s) you wanted were added.

![]()

安装mysql

centos7自带的是mariadb,需要先卸载掉

![]()

[root@cdh1 /]# rpm -qa |-libs-.-/]# rpm -e --nodeps mariadb-libs-.-/]# tar -xvf MySQL-.-.linux_glibc2..x86_64.rpm-bundle.tar [root@cdh1 /]# rpm -ivh MySQL-*.rpm [root@cdh1 /]# cp /usr/share/mysql/my-.cnf /etc//]# vi /etc/my.cnf -storage-engine =-server =-connect = --server =/]# yum install -y perl-Module-/]# /usr/bin/mysql_install_db [root@cdh1 /]# service mysql restart ERROR! MySQL server PID file could not be found!!/]# cat /root/.mysql_secret # The random password the root user at Fri Sep :: /]# mysql -u root -p mysql> SET PASSWORD=PASSWORD(> update user host= user= and host=; Query OK, row affected ( Changed: Warnings: > rows affected (/]# chkconfig mysql on

[root@cdh1 /]# tar -zcvf mysql-connector-java-5.1.44.tar.gz // 解压mysql-connector-java-5.1.44.tar.gz得到mysql-connector-java-5.1.44-bin.jar

[root@cdh1 /]# mkdir /usr/share/java // 在各节点创建java文件夹

[root@cdh1 /]# cp mysql-connector-java-5.1.44-bin.jar /usr/share/java/mysql-connector-java.jar //将mysql-connector-java-5.1.44-bin.jar拷贝到/usr/share/java路径下并重命名为mysql-connector-java.jar

![]()

创建数据库

![]()

create database hive DEFAULT CHARSET utf8 COLLATE utf8_general_ci; Query OK, 1 row affected (0.00 sec) create database amon DEFAULT CHARSET utf8 COLLATE utf8_general_ci; Query OK, 1 row affected (0.00 sec) create database hue DEFAULT CHARSET utf8 COLLATE utf8_general_ci; Query OK, 1 row affected (0.00 sec) create database monitor DEFAULT CHARSET utf8 COLLATE utf8_general_ci; Query OK, 1 row affected (0.00 sec) create database oozie DEFAULT CHARSET utf8 COLLATE utf8_general_ci; Query OK, 1 row affected (0.00 sec) grant all on *.* to root@"%" Identified by "123456";

![]()

五、安装Cloudera-Manager

![]()

//解压cm tar包到指定目录所有服务器都要(或者在主节点解压好,然后通过scp到各个节点同一目录下)[root@cdh1 ~]#mkdir /opt/cloudera-manager

[root@cdh1 ~]# tar -axvf cloudera-manager-centos7-cm5.7.2_x86_64.tar.gz -C /opt/cloudera-manager

//创建cloudera-scm用户(所有节点)[root@cdh1 ~]# useradd --system --home=/opt/cloudera-manager/cm-5.7.2/run/cloudera-scm-server --no-create-home --shell=/bin/false --comment "Cloudera SCM User" cloudera-scm

//在主节点创建cloudera-manager-server的本地元数据保存目录[root@cdh1 ~]# mkdir /var/cloudera-scm-server

[root@cdh1 ~]# chown cloudera-scm:cloudera-scm /var/cloudera-scm-server

[root@cdh1 ~]# chown cloudera-scm:cloudera-scm /opt/cloudera-manager//配置从节点cloudera-manger-agent指向主节点服务器[root@cdh1 ~]# vi /opt/cloudera-manager/cm-5.7.2/etc/cloudera-scm-agent/config.ini

将server_host改为CMS所在的主机名即cdh1//主节点中创建parcel-repo仓库目录[root@cdh1 ~]# mkdir -p /opt/cloudera/parcel-repo

[root@cdh1 ~]# chown cloudera-scm:cloudera-scm /opt/cloudera/parcel-repo

[root@cdh1 ~]# cp CDH-5.7.2-1.cdh5.7.2.p0.18-el7.parcel CDH-5.7.2-1.cdh5.7.2.p0.18-el7.parcel.sha manifest.json /opt/cloudera/parcel-repo

注意:其中CDH-5.7.2-1.cdh5.7.2.p0.18-el5.parcel.sha1 后缀要把1去掉//所有节点创建parcels目录[root@cdh1 ~]# mkdir -p /opt/cloudera/parcels

[root@cdh1 ~]# chown cloudera-scm:cloudera-scm /opt/cloudera/parcels

解释:Clouder-Manager将CDHs从主节点的/opt/cloudera/parcel-repo目录中抽取出来,分发解压激活到各个节点的/opt/cloudera/parcels目录中//初始脚本配置数据库scm_prepare_database.sh(在主节点上)[root@cdh1 ~]# /opt/cloudera-manager/cm-5.7.2/share/cmf/schema/scm_prepare_database.sh mysql -hcdh1 -uroot -p123456 --scm-host cdh1 scmdbn scmdbu scmdbp

说明:这个脚本就是用来创建和配置CMS需要的数据库的脚本。各参数是指:

mysql:数据库用的是mysql,如果安装过程中用的oracle,那么该参数就应该改为oracle。-cdh1:数据库建立在cdh1主机上面,也就是主节点上面。-uroot:root身份运行mysql。-123456:mysql的root密码是***。--scm-host cdh1:CMS的主机,一般是和mysql安装的主机是在同一个主机上,最后三个参数是:数据库名,数据库用户名,数据库密码。

如果报错:

ERROR com.cloudera.enterprise.dbutil.DbProvisioner - Exception when creating/dropping database with user 'root' and jdbc url 'jdbc:mysql://localhost/?useUnicode=true&characterEncoding=UTF-8'java.sql.SQLException: Access denied for user 'root'@'cdh1' (using password: YES)

则参考 http://forum.spring.io/forum/spring-projects/web/57254-java-sql-sqlexception-access-denied-for-user-root-localhost-using-password-yes运行如下命令:

update user set PASSWORD=PASSWORD('123456') where user='root';

GRANT ALL PRIVILEGES ON *.* TO 'root'@'cdh1' IDENTIFIED BY '123456' WITH GRANT OPTION;

FLUSH PRIVILEGES;//启动主节点[root@cdh1 ~]# cp /opt/cloudera-manager/cm-5.7.2/etc/init.d/cloudera-scm-server /etc/init.d/cloudera-scm-server

[root@cdh1 ~]# chkconfig cloudera-scm-server on

[root@cdh1 ~]# vi /etc/init.d/cloudera-scm-server

CMF_DEFAULTS=${CMF_DEFAULTS:-/etc/default}改为=/opt/cloudera-manager/cm-5.7.2/etc/default[root@cdh1 ~]# service cloudera-scm-server start//同时为了保证在每次服务器重启的时候都能启动cloudera-scm-server,应该在开机启动脚本/etc/rc.local中加入命令:service cloudera-scm-server restart//启动cloudera-scm-agent所有节点[root@cdhX ~]# mkdir /opt/cloudera-manager/cm-5.7.2/run/cloudera-scm-agent

[root@cdhX ~]# cp /opt/cloudera-manager/cm-5.7.2/etc/init.d/cloudera-scm-agent /etc/init.d/cloudera-scm-agent

[root@cdhX ~]# chkconfig cloudera-scm-agent on

[root@cdhX ~]# vi /etc/init.d/cloudera-scm-agent

CMF_DEFAULTS=${CMF_DEFAULTS:-/etc/default}改为=/opt/cloudera-manager/cm-5.7.2/etc/default[root@cdhX ~]# service cloudera-scm-agent start//同时为了保证在每次服务器重启的时候都能启动cloudera-scm-agent,应该在开机启动脚本/etc/rc.local中加入命令:service cloudera-scm-agent restart

![]()

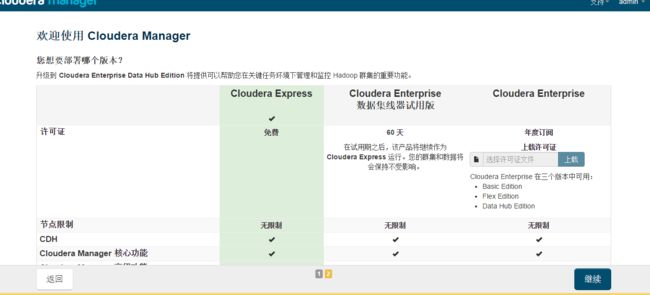

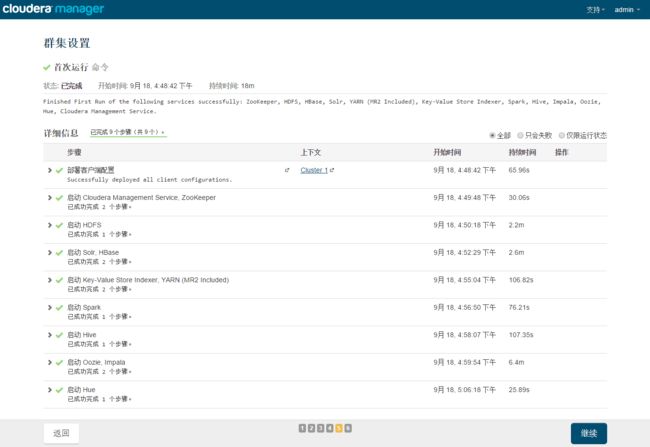

六、在浏览器安装CDH

等待主节点完成启动就在浏览器中进行操作了

进入10.250.160.32:7180 默认使用admin admin登录

以下在浏览器中使用操作安装

配置主机:由于我们在各个节点都安装启动了agent,并且在中各个节点都在配置文件中指向cdh1是server节点,所以这里我们可以在“当前管理的主机”中看到三个主机,全部勾选并继续.

注意:如果cloudera-scm-agent没有设为开机启动,如果以上有重启这里可能会检测不到其他服务器。

然后选择选择cdh

这个地方要注意这个地方有两项没有检查通过,

根据帖子 http://www.cnblogs.com/itboys/p/5955545.html 可以在集群中使用以下命令,然后再点击上面的重新运行会发现这次全部检查通过了,

但是我没有成功,还请高手告诉我原因。

echo 0 > /proc/sys/vm/swappiness echo never > /sys/kernel/mm/transparent_hugepage/defrag

根据需要选择要安装的服务,如果选择所有服务则对系统配置要求较高

数据库设置选择

| 数据库设置 | 数据库类型 | 数据库名称 | 用户名 | 密码 |

| Hive | mysql | hive | root | 123456 |

| Oozie Server | mysql | oozie | root | 123456 |

然后直接下一步下一步开始安装

安装完成后可在浏览器中进入10.250.160.32:7180地址,查看集群情况:

我这里有较多报警,大概是安装过程中部分组件存在错误所致,现在还没有能力排除这些错误,先看基本功能。

七、测试

在集群的一台机器上执行以下模拟Pi的示例程序:

sudo -u hdfs hadoop jar /opt/cloudera/parcels/CDH/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar pi 10 100

通过YARN的Web管理界面也可以看到MapReduce的执行状态:

10.250.160.32:8088/cluster

MapReduce执行过程中终端的输出如下:

![]()

Number of Maps = 10Samples per Map = 100Wrote input for Map #0Wrote input for Map #1Wrote input for Map #2Wrote input for Map #3Wrote input for Map #4Wrote input for Map #5Wrote input for Map #6Wrote input for Map #7Wrote input for Map #8Wrote input for Map #9Starting Job17/09/22 17:17:50 INFO client.RMProxy: Connecting to ResourceManager at cdh1/192.168.42.128:803217/09/22 17:17:52 INFO input.FileInputFormat: Total input paths to process : 1017/09/22 17:17:52 INFO mapreduce.JobSubmitter: number of splits:1017/09/22 17:17:53 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1505892176617_000217/09/22 17:17:53 INFO impl.YarnClientImpl: Submitted application application_1505892176617_000217/09/22 17:17:54 INFO mapreduce.Job: The url to track the job: http://cdh1:8088/proxy/application_1505892176617_0002/17/09/22 17:17:54 INFO mapreduce.Job: Running job: job_1505892176617_000217/09/22 17:18:07 INFO mapreduce.Job: Job job_1505892176617_0002 running in uber mode : false17/09/22 17:18:07 INFO mapreduce.Job: map 0% reduce 0%17/09/22 17:18:22 INFO mapreduce.Job: map 10% reduce 0%17/09/22 17:18:29 INFO mapreduce.Job: map 20% reduce 0%17/09/22 17:18:37 INFO mapreduce.Job: map 30% reduce 0%17/09/22 17:18:43 INFO mapreduce.Job: map 40% reduce 0%17/09/22 17:18:49 INFO mapreduce.Job: map 50% reduce 0%17/09/22 17:18:56 INFO mapreduce.Job: map 60% reduce 0%17/09/22 17:19:02 INFO mapreduce.Job: map 70% reduce 0%17/09/22 17:19:10 INFO mapreduce.Job: map 80% reduce 0%17/09/22 17:19:16 INFO mapreduce.Job: map 90% reduce 0%17/09/22 17:19:24 INFO mapreduce.Job: map 100% reduce 0%17/09/22 17:19:30 INFO mapreduce.Job: map 100% reduce 100%17/09/22 17:19:32 INFO mapreduce.Job: Job job_1505892176617_0002 completed successfully17/09/22 17:19:32 INFO mapreduce.Job: Counters: 49 File System Counters FILE: Number of bytes read=91 FILE: Number of bytes written=1308980 FILE: Number of read operations=0 FILE: Number of large read operations=0 FILE: Number of write operations=0 HDFS: Number of bytes read=2590 HDFS: Number of bytes written=215 HDFS: Number of read operations=43 HDFS: Number of large read operations=0 HDFS: Number of write operations=3 Job Counters Launched map tasks=10 Launched reduce tasks=1 Data-local map tasks=10 Total time spent by all maps in occupied slots (ms)=58972 Total time spent by all reduces in occupied slots (ms)=5766 Total time spent by all map tasks (ms)=58972 Total time spent by all reduce tasks (ms)=5766 Total vcore-seconds taken by all map tasks=58972 Total vcore-seconds taken by all reduce tasks=5766 Total megabyte-seconds taken by all map tasks=60387328 Total megabyte-seconds taken by all reduce tasks=5904384 Map-Reduce Framework Map input records=10 Map output records=20 Map output bytes=180 Map output materialized bytes=340 Input split bytes=1410 Combine input records=0 Combine output records=0 Reduce input groups=2 Reduce shuffle bytes=340 Reduce input records=20 Reduce output records=0 Spilled Records=40 Shuffled Maps =10 Failed Shuffles=0 Merged Map outputs=10 GC time elapsed (ms)=1509 CPU time spent (ms)=10760 Physical memory (bytes) snapshot=4541886464 Virtual memory (bytes) snapshot=30556168192 Total committed heap usage (bytes)=3937402880 Shuffle Errors BAD_ID=0 CONNECTION=0 IO_ERROR=0 WRONG_LENGTH=0 WRONG_MAP=0 WRONG_REDUCE=0 File Input Format Counters Bytes Read=1180 File Output Format Counters Bytes Written=97Job Finished in 102.286 seconds Estimated value of Pi is 3.14800000000000000000

![]()

遇到的问题:

1、在Windows Server2008 r2服务器使用VM安装Centos7时,报错:

此主机不支持64位客户机操作系统,此系统无法运行

这个需要分别在VM的虚拟机编辑中添加VT-X虚拟化功能,并且在Windows Server服务器的虚拟机服务器管理Web界面同步设置。

参考:https://www.cnblogs.com/zhangleisanshi/p/7575579.html