1. Scrape a local web page and parse its images, titles, prices, star ratings, and view counts

After inspecting the page, it turns out that each item is wrapped in its own div.

Python code for scraping the data

    from bs4 import BeautifulSoup

    path = r'G:/1_2_homework_required/index.html'

    # Parse the local HTML file
    with open(path, 'r') as wb_data:
        soup = BeautifulSoup(wb_data, 'lxml')

    # Every item lives inside div.col-sm-4 > div.thumbnail
    imgs = soup.select('div.col-sm-4 > div.thumbnail > img')
    titles = soup.select('div.col-sm-4 > div.thumbnail > div.caption > h4:nth-of-type(2) > a')
    prices = soup.select('div.col-sm-4 > div.thumbnail > div.caption > h4:nth-of-type(1)')
    stars = soup.select('div.col-sm-4 > div.thumbnail > div.ratings > p:nth-of-type(2)')
    views = soup.select('div.col-sm-4 > div.thumbnail > div.ratings > p.pull-right')

    for img, title, price, star, view in zip(imgs, titles, prices, stars, views):
        data = {
            'title': title.get_text(),
            'img': img.get('src'),
            'price': price.get_text(),
            # Count the star-icon spans to get the rating
            'star': len(star.find_all('span', class_='glyphicon glyphicon-star')),
            'view': view.get_text()
        }
        print(data)

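The tricky part of this exercise is counting the stars. Looking at the markup, each star appears once as a span with the class glyphicon glyphicon-star, so counting those spans gives the number of stars.

We use find_all to count the elements that carry the star style: the first argument selects the tag name and the second selects the CSS class. Because find_all() returns a list, we then call len() to count its elements.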
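To make the counting step concrete, here is a minimal sketch on a made-up HTML fragment. The markup is an assumption modeled on the selectors used above, not the actual homework page, and it uses the built-in `html.parser` so that it runs without lxml.

```python
from bs4 import BeautifulSoup

# Hypothetical fragment: three filled stars and two empty ones.
html = '''
<div class="ratings">
  <p class="pull-right">18 reviews</p>
  <p>
    <span class="glyphicon glyphicon-star"></span>
    <span class="glyphicon glyphicon-star"></span>
    <span class="glyphicon glyphicon-star"></span>
    <span class="glyphicon glyphicon-star-empty"></span>
    <span class="glyphicon glyphicon-star-empty"></span>
  </p>
</div>
'''

soup = BeautifulSoup(html, 'html.parser')

# The second <p> inside div.ratings holds the star icons.
star_p = soup.select('div.ratings > p:nth-of-type(2)')[0]

# A class_ string containing a space is matched against the whole class
# attribute, so the "star-empty" spans are not counted.
filled = star_p.find_all('span', class_='glyphicon glyphicon-star')
star_count = len(filled)
print(star_count)
```

Only the filled stars are counted because the empty ones carry a different class string.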

2. Scrape the list pages and detail pages of Xiaozhu (小猪短租)

1. Scraping the list page

    import random
    import time

    import requests
    from bs4 import BeautifulSoup

    def item_link_list(page):
        for i in range(1, page + 1):
            # Wait 1 to 3 seconds between requests
            ti = random.randrange(1, 4)
            time.sleep(ti)
            url = 'http://sh.xiaozhu.com/search-duanzufang-p{}-0/'.format(i)
            print(url)
            wb_data = requests.get(url)
            soup = BeautifulSoup(wb_data.text, 'lxml')
            links = soup.select('ul.pic_list.clearfix > li > a')
            prices = soup.select('div.result_btm_con.lodgeunitname > span > i')
            titles = soup.select('div.result_btm_con.lodgeunitname > div.result_intro > a > span')
            for title, link, price in zip(titles, links, prices):
                da = {
                    'title': title.get_text(),
                    'url': link.get('href'),
                    'price': price.get_text(),
                }
                print(da)
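One caveat when pairing separately selected lists with `zip`: it stops at the shortest input, so if one selector misses an element, records are silently dropped or misaligned. The sketch below uses made-up values rather than scraped data to show the effect and one way to make the gap visible.

```python
from itertools import zip_longest

# Hypothetical values standing in for the text of the selected tags.
titles = ['Room A', 'Room B', 'Room C']
prices = ['298', '350']  # one price is missing

# zip() stops at the shortest list, so 'Room C' disappears without warning.
paired = list(zip(titles, prices))

# zip_longest() keeps every title and marks the gap explicitly.
padded = list(zip_longest(titles, prices, fillvalue=None))

print(paired)
print(padded)
```

Comparing `len(titles)` with `len(prices)` before zipping is a cheap way to notice a selector that no longer matches every item.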

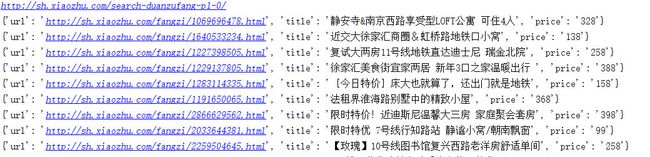

结果为

2. Scraping detail-page data from a URL

    def returnSex(sexclass):
        # Map the icon's class name to the host's gender
        if sexclass == 'member_ico':
            return '男'  # male
        if sexclass == 'member_ico1':
            return '女'  # female

    def item_detail(url):
        wd_data = requests.get(url)
        soup = BeautifulSoup(wd_data.text, 'lxml')
        title = soup.select('div.pho_info > h4 > em')[0].get_text()
        address = soup.select('div.pho_info > p > span.pr5')[0].get_text()
        price = soup.select('div.day_l > span')[0].get_text()
        img = soup.select('#curBigImage')[0].get('src')
        host_img = soup.select('div.member_pic > a > img')[0].get('src')
        # The first class name of the icon div encodes the host's gender
        host_sex = soup.select('div.member_pic > div')[0].get('class')[0]
        host_name = soup.select('#floatRightBox > div.js_box.clearfix > div.w_240 > h6 > a')[0].get_text()
        data = {
            'title': title,
            # strip() already trims both ends; then drop a trailing comma
            'address': address.strip().rstrip(','),
            'price': price,
            'img': img,
            'host_img': host_img,
            'ownersex': returnSex(host_sex),
            'ownername': host_name
        }
        print(data)

The result:

1.jpg

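3. Summary

This assignment went fairly smoothly. The hardest part was determining the host's gender, which has to be inferred from a class name.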
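The class-name check can also be written as a dictionary lookup with an explicit fallback, which avoids returning `None` when the page uses a class the function does not know about. This is only a sketch, not the original code: `SEX_BY_CLASS`, `sex_from_class`, and the `'unknown'` fallback are names introduced here for illustration, and the return values are given in English.

```python
# Class names taken from the returnSex function above.
SEX_BY_CLASS = {
    'member_ico': 'male',
    'member_ico1': 'female',
}

def sex_from_class(sexclass):
    """Return the gender for a known icon class, or 'unknown' otherwise."""
    return SEX_BY_CLASS.get(sexclass, 'unknown')

print(sex_from_class('member_ico1'))
print(sex_from_class('some_other_class'))
```

Adding a new icon class then only requires a new dictionary entry rather than another `if` branch.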

3. Scrape the first 20 pages of Weheartit

Given a page count, collect every image link from page 1 up to that page:

    import time

    import requests
    from bs4 import BeautifulSoup

    down_url = []  # image URLs collected across all pages

    def get_list_imgs(page):
        for index in range(1, page + 1):
            time.sleep(3)
            url = 'http://weheartit.com/recent?scrolling=true&page={0}'.format(index)
            wb_data = requests.get(url)
            soup = BeautifulSoup(wb_data.text, 'lxml')
            imgs = soup.select('img.entry_thumbnail')
            for img in imgs:
                down_url.append(img.get('src'))
            print(url)

Download the images from their URLs:

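    import time
    import urllib.request

    # `path` is the download directory; it must be defined before calling down_img
    def down_img(urls):
        for item in urls:
            time.sleep(1)
            # Use a fixed slice of the URL as the file name
            name = item[-24:-15]
            urlsd = path + name + '.jpg'
            print(urlsd)
            urllib.request.urlretrieve(item, urlsd)
        print('Done')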
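The slice `item[-24:-15]` picks nine characters at a fixed distance from the end of the URL, so it breaks as soon as the file name length changes or a query string is appended. A more defensive sketch is shown below. It assumes the wanted ID is the second-to-last path segment, which is a guess about the URL layout and not something the code above confirms; `image_id_from_url` and the example URL are hypothetical.

```python
from urllib.parse import urlparse

def image_id_from_url(url):
    """Return the second-to-last path segment of url, or None if the path is too short."""
    # urlparse().path excludes the scheme, host, and query string.
    segments = [s for s in urlparse(url).path.split('/') if s]
    return segments[-2] if len(segments) >= 2 else None

# Hypothetical URL with an ID directory followed by a fixed file name.
example = 'http://example.com/images/123456789/superthumb.jpg?t=1'
image_id = image_id_from_url(example)
print(image_id)
```

Returning `None` for an unexpected URL lets the caller skip that image instead of saving it under a meaningless name.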

4. Scrape the list pages and detail pages of 58.com (58同城)

First, collect the item links for a given seller category and number of pages:

    import requests
    from bs4 import BeautifulSoup

    def get_item_list(who_sells, page):
        links = []
        for index in range(1, page + 1):
            url = 'http://bj.58.com/pbdn/{}/pn{}'.format(str(who_sells), index)
            wb_data = requests.get(url)
            soup = BeautifulSoup(wb_data.text, 'lxml')
            for item_url in soup.select('td.t > a.t'):
                # Keep only links that mention bj.58.com and drop the query string
                if 'bj.58.com' in str(item_url):
                    link = item_url.get('href').split('?')[0]
                    links.append(link)
        return links
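The substring test `'bj.58.com' in str(item_url)` also accepts a link whose host is different but whose query string happens to contain that text. A stricter variant, sketched here with the standard library, compares the parsed host name and removes the query string in one step. `clean_item_link` and both example URLs are hypothetical and only illustrate the idea.

```python
from urllib.parse import urlsplit

def clean_item_link(href, host='bj.58.com'):
    """Return href without query string or fragment if it is on host, else None."""
    parts = urlsplit(href)
    if parts.netloc != host:
        return None
    # SplitResult is a namedtuple, so _replace builds a modified copy.
    return parts._replace(query='', fragment='').geturl()

# Hypothetical links: one regular item, one redirect on another host.
item = clean_item_link('http://bj.58.com/pingbandiannao/123x.shtml?psid=1&entinfo=2')
redirect = clean_item_link('http://jump.example.com/jump?target=bj.58.com')
print(item)
print(redirect)
```

The second link contains `bj.58.com` as text but is rejected because its host does not match.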

Then scrape each item's details from its link:

    # Get the item's condition
    def get_quality(qu):
        if qu == '-':
            return '不明'  # unknown
        else:
            return qu

    def get_detail_item(url):
        wb_data = requests.get(url)
        soup = BeautifulSoup(wb_data.text, 'lxml')
        category = soup.select('div.breadCrumb.f12 > span:nth-of-type(3) > a')[0].get_text()
        title = soup.select('div.col_sub.mainTitle > h1')[0].get_text()
        date = soup.select('li.time')[0].get_text()
        price = soup.select('span.price.c_f50')[0].get_text()
        # strip() already trims both ends; then drop a trailing comma
        quality = soup.select('div.su_con > span')[1].get_text().strip().rstrip(',')
        quality = get_quality(quality)
        # The area is optional, so check that the span exists first
        area = list(soup.select('.c_25d')[0].stripped_strings) if soup.find_all('span', 'c_25d') else None
        # Named `data` so the dict does not shadow the `date` string above
        data = {
            'category': category,
            'title': title,
            'date': date,
            'price': price,
            'quality': quality,
            'area': area
        }
        print(data)
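Indexing `[0]` on the result of `select` raises `IndexError` when a listing lacks that element, which is what the `area` line guards against. The same guard can be factored into a small helper so that every field gets it. This is a sketch on a made-up fragment: `first_text` is a hypothetical helper, and the HTML is not taken from 58.com.

```python
from bs4 import BeautifulSoup

def first_text(soup, selector, default=None):
    """Return the stripped text of the first match for selector, or default."""
    matches = soup.select(selector)
    return matches[0].get_text().strip() if matches else default

# Hypothetical fragment with a title but no area span.
html = '<div><h1> iPad mini </h1><span class="price">1200</span></div>'
soup = BeautifulSoup(html, 'html.parser')

title = first_text(soup, 'h1')
area = first_text(soup, 'span.c_25d', default='unknown')
print(title, area)
```

With a helper like this, one missing field yields a default value instead of aborting the whole detail page.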

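After this week of practice, I have learned the basics of web crawlers, and I also scraped a few other pages outside class for practice. I have come to appreciate how elegant Python is, and I hope to make further progress in the second week.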