YOLOv3 transfer learning: training on your own data

I. Preparing a custom dataset, training, and testing

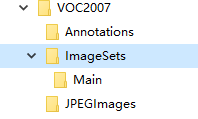

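1. In the darknet directory, create a myData folder with the structure shown below, and put the images annotated with labelImg and their XML files into the corresponding subdirectories: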

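myData

…JPEGImages # stores the images

…Annotations # stores the XML file for each image

…ImageSets/Main # stores the lists of training/validation image names (written as 000001.jpg or 000001), including train.txt. The form used here is 000000, without an extension, because the code below appends the image extension itself.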
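The directory skeleton above can also be created from Python. This is a minimal sketch, not part of the original steps; the helper name `make_dataset_dirs` is hypothetical, and the demo runs in a temporary directory rather than in a real darknet checkout.

```python
import os
import tempfile


def make_dataset_dirs(root):
    """Create the myData skeleton under root and return the created paths."""
    subdirs = ["JPEGImages", "Annotations", os.path.join("ImageSets", "Main")]
    paths = [os.path.join(root, "myData", d) for d in subdirs]
    for p in paths:
        os.makedirs(p, exist_ok=True)  # no error if the folder already exists
    return paths


# Demo in a throwaway directory; pass your darknet directory instead.
demo_root = tempfile.mkdtemp()
created = make_dataset_dirs(demo_root)
print("\n".join(created))
```

The labels folder is left out on purpose, since the conversion script in step 3 creates it.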


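2. Copy your dataset images into the JPEGImages directory and the label files into the Annotations directory. Create test.py under myData, paste in the code below, and run it. It generates four files: train.txt, val.txt, test.txt and trainval.txt.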

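    import os
    import random

    # The variable names follow the original VOC script, but note what the
    # branches below actually do: the sampled "trainval" subset (10%) is the
    # held-out part, and the remaining 90% of the images go to train.txt.
    trainval_percent = 0.1  # fraction of all images that is held out
    train_percent = 0.9     # fraction of the held-out images written to test.txt
    xmlfilepath = 'Annotations'
    txtsavepath = os.path.join('ImageSets', 'Main')
    os.makedirs(txtsavepath, exist_ok=True)

    total_xml = sorted(f for f in os.listdir(xmlfilepath) if f.endswith('.xml'))
    num = len(total_xml)
    indices = range(num)
    tv = int(num * trainval_percent)
    tr = int(tv * train_percent)
    trainval = random.sample(indices, tv)
    train = set(random.sample(trainval, tr))
    trainval = set(trainval)

    with open(os.path.join(txtsavepath, 'trainval.txt'), 'w') as ftrainval, \
            open(os.path.join(txtsavepath, 'test.txt'), 'w') as ftest, \
            open(os.path.join(txtsavepath, 'train.txt'), 'w') as ftrain, \
            open(os.path.join(txtsavepath, 'val.txt'), 'w') as fval:
        for i in indices:
            # Image name without the .xml extension
            name = os.path.splitext(total_xml[i])[0] + '\n'
            if i in trainval:
                ftrainval.write(name)
                if i in train:
                    ftest.write(name)
                else:
                    fval.write(name)
            else:
                ftrain.write(name)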
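To see which share of the data ends up in each file, the split logic of the script above can be sketched as a pure function. The function name `split_names`, its parameters, and the fixed seed are assumptions made for illustration; the script itself does not seed the random generator.

```python
import random


def split_names(names, holdout_percent=0.1, test_percent=0.9, seed=0):
    """Mirror the script's branches: hold out a subset, then split it into test and val."""
    rng = random.Random(seed)  # seeded only to make the demo reproducible
    names = list(names)
    n_holdout = int(len(names) * holdout_percent)
    n_test = int(n_holdout * test_percent)
    holdout = rng.sample(names, n_holdout)
    test = set(rng.sample(holdout, n_test))
    holdout = set(holdout)
    return {
        "train": [n for n in names if n not in holdout],
        "trainval": [n for n in names if n in holdout],
        "test": [n for n in names if n in test],
        "val": [n for n in names if n in holdout and n not in test],
    }


names = ["%06d" % i for i in range(100)]
splits = split_names(names)
print({key: len(value) for key, value in splits.items()})
```

With 100 images and the script's percentages, 90 names go to train.txt, 10 to trainval.txt, and those 10 are divided between test.txt and val.txt.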

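3. Convert the data to the format darknet supports

darknet ships a script that converts the VOC dataset into the format YOLO training needs, in scripts/voc_label.py. A modified version is given here. Create a my_lables.py file in the darknet folder with the following content (adjust the paths to your own setup):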

    import os
    import xml.etree.ElementTree as ET

    # Original: sets=[('2012', 'train'), ('2012', 'val'), ('2007', 'train'), ('2007', 'val'), ('2007', 'test')]
    # In training mode, change to sets=[('myData', 'train'), ('myData', 'test')]
    sets = [('myData', 'train')]  # change to your own myData
    classes = ["person", "foot", "face"]  # change to your own classes


    def convert(size, box):
        """Convert a VOC box (xmin, xmax, ymin, ymax) to normalized YOLO (x, y, w, h)."""
        dw = 1. / size[0]
        dh = 1. / size[1]
        x = (box[0] + box[1]) / 2.0 - 1  # box center
        y = (box[2] + box[3]) / 2.0 - 1
        w = box[1] - box[0]
        h = box[3] - box[2]
        return (x * dw, y * dh, w * dw, h * dh)


    def convert_annotation(year, image_id):
        # Original paths: VOCdevkit/VOC%s/Annotations/%s.xml and VOCdevkit/VOC%s/labels/%s.txt
        tree = ET.parse('myData/Annotations/%s.xml' % image_id)
        root = tree.getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)
        with open('myData/labels/%s.txt' % image_id, 'w') as out_file:
            for obj in root.iter('object'):
                # Treat a missing <difficult> tag as "not difficult"
                difficult = obj.findtext('difficult', default='0')
                cls = obj.find('name').text
                if cls not in classes or int(difficult) == 1:
                    continue
                cls_id = classes.index(cls)
                xmlbox = obj.find('bndbox')
                b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text),
                     float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))
                bb = convert((w, h), b)
                out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


    wd = os.getcwd()
    os.makedirs('myData/labels/', exist_ok=True)  # change to your own myData
    for year, image_set in sets:
        with open('myData/ImageSets/Main/%s.txt' % image_set) as f:
            image_ids = f.read().strip().split()
        # One absolute image path per line; darknet reads this list for training
        with open('myData/%s_%s.txt' % (year, image_set), 'w') as list_file:
            for image_id in image_ids:
                list_file.write('%s/myData/JPEGImages/%s.jpg\n' % (wd, image_id))
                convert_annotation(year, image_id)

4. Run the script

python my_lables.py
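To make the conversion concrete, here is a small worked example of the box arithmetic the script performs, together with its inverse. It is a sketch only: the names `voc_to_yolo` and `yolo_to_voc` and the sample box are made up for illustration. The subtraction of 1 is taken over from the script and is consistent with VOC's 1-based pixel coordinates.

```python
def voc_to_yolo(size, box):
    """size = (width, height); box = (xmin, xmax, ymin, ymax) in pixels."""
    img_w, img_h = size
    xmin, xmax, ymin, ymax = box
    x_center = ((xmin + xmax) / 2.0 - 1) / img_w  # same "- 1" as in the script
    y_center = ((ymin + ymax) / 2.0 - 1) / img_h
    return (x_center, y_center, (xmax - xmin) / img_w, (ymax - ymin) / img_h)


def yolo_to_voc(size, yolo_box):
    """Inverse mapping, handy for checking a label file against the original XML."""
    img_w, img_h = size
    x_center, y_center, w, h = yolo_box
    cx = x_center * img_w + 1
    cy = y_center * img_h + 1
    half_w = w * img_w / 2.0
    half_h = h * img_h / 2.0
    return (cx - half_w, cx + half_w, cy - half_h, cy + half_h)


size = (640, 480)
box = (100.0, 300.0, 50.0, 250.0)  # hypothetical annotation
yolo = voc_to_yolo(size, box)
print(yolo)
print(yolo_to_voc(size, yolo))
```

All four output values are fractions of the image size, which is why the label files stay valid when darknet resizes the images.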

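5. Modify voc.data and yolov3-voc.cfg under darknet/cfg

To be safe, copy these two files and rename the copies my_data.data and yolov3-tiny-car.cfg.

Contents of my_data.data (darknet_pwd stands for the absolute path of your darknet directory; the value 3 matches the three classes used in the scripts and in myData.names):

    # set to your own number of classes
    classes = 3
    # set all of the paths below to your own
    train = <darknet_pwd>/myData/myData_train.txt
    # this file is created later
    names = <darknet_pwd>/myData/myData.names
    # directory where the trained weights are saved
    backup = /backup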
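If you prefer to generate the file rather than edit it by hand, the following sketch writes the three path entries with absolute paths. The helper `write_data_file` is hypothetical, and placing the backup folder inside the darknet directory is an assumption; use whatever location you chose for the weights.

```python
import os
import tempfile


def write_data_file(path, darknet_dir, num_classes):
    """Write a darknet .data file and return the lines that were written."""
    lines = [
        "classes = %d" % num_classes,
        "train = %s" % os.path.join(darknet_dir, "myData", "myData_train.txt"),
        "names = %s" % os.path.join(darknet_dir, "myData", "myData.names"),
        # Assumption: weights are saved in <darknet_dir>/backup
        "backup = %s" % os.path.join(darknet_dir, "backup"),
    ]
    with open(path, "w") as f:
        f.write("\n".join(lines) + "\n")
    return lines


# Demo: write into a temporary folder instead of darknet/cfg.
demo_path = os.path.join(tempfile.mkdtemp(), "my_data.data")
demo_lines = write_data_file(demo_path, os.path.abspath("darknet"), 3)
print("\n".join(demo_lines))
```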

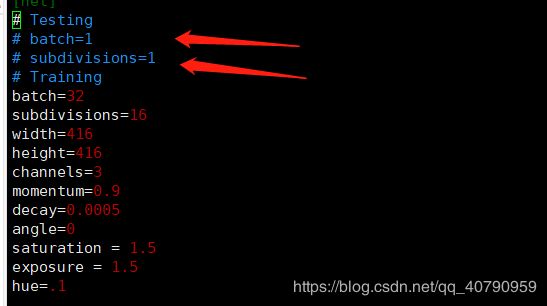

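Contents of yolov3-tiny-car.cfg:

Search for yolo (/yolo in vim). There are 3 matches in total, one per [yolo] layer.

At each match, two values must be changed: filters in the [convolutional] layer immediately before the [yolo] layer, set to 3*(5+len(classes)), and classes in the [yolo] layer itself, set to len(classes).

Taking my project as an example, len(classes) = 3, so:

filters = 24

classes = 3

Optional: random = 1 enables multi-scale training, in which the network input size is changed at random during training. The default is 1; set it to 0 if GPU memory is limited.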
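The filters formula can be checked with a few lines of Python. In this sketch the 3 is the number of anchors each [yolo] layer predicts, and the 5 stands for four box coordinates plus one objectness score; the function name `yolo_filters` is made up for illustration.

```python
def yolo_filters(num_classes, anchors_per_layer=3):
    """Output channels of the convolutional layer feeding a [yolo] layer."""
    return anchors_per_layer * (5 + num_classes)


for num_classes in (1, 3, 20, 80):
    print(num_classes, yolo_filters(num_classes))
```

A mismatch between filters and classes is a common reason for darknet to fail while loading the cfg, so it is worth recomputing the value whenever the class list changes.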

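Switch to training mode

Because we are training, comment out the Testing lines and uncomment the Training lines, where:

batch=64: the parameters are updated once every batch samples.

subdivisions=16: if memory is insufficient, the batch is split into subdivisions sub-batches, each of size batch/subdivisions.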
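As a quick arithmetic check of the two settings, the sketch below computes how many images are processed at once for a few subdivisions values. The function name is hypothetical, and it assumes batch is a multiple of subdivisions.

```python
def mini_batch_size(batch, subdivisions):
    """Number of images held in memory per forward/backward pass."""
    return batch // subdivisions  # integer division


for subdivisions in (8, 16, 32):
    print(subdivisions, mini_batch_size(64, subdivisions))
```

Raising subdivisions lowers memory use per pass while the parameters are still updated once per full batch of 64.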

6. Set the anchor boxes

https://github.com/lars76/kmeans-anchor-boxes

Download kmeans.py from the repository above,

and create a new anchor_box.py file next to it:

    # -*- coding: utf-8 -*-
    import os
    import sys

    import numpy as np

    from kmeans import kmeans, avg_iou

    # Root folder of the dataset
    ROOT_PATH = '/data/DataBase/YOLO_Data/V3_DATA/'
    # Number of clusters, i.e. the number of anchors
    CLUSTERS = 6
    # Input image size of the model; width and height are assumed equal
    SIZE = 640


    def load_dataset(path):
        """Load the normalized box widths and heights from YOLO-format labels."""
        jpegimages = os.path.join(path, 'JPEGImages')
        if not os.path.exists(jpegimages):
            print('no JPEGImages folder, program abort')
            sys.exit(1)
        labels_txt = os.path.join(path, 'labels')
        if not os.path.exists(labels_txt):
            print('no labels folder, program abort')
            sys.exit(1)

        label_file = os.listdir(labels_txt)
        print('label count: {}'.format(len(label_file)))
        dataset = []
        for label in label_file:
            with open(os.path.join(labels_txt, label), 'r') as f:
                for line in f:
                    line_split = line.split()
                    if len(line_split) < 5:
                        continue  # skip blank or malformed lines
                    # The last two fields are the normalized width and height
                    roi_width = float(line_split[-2])
                    roi_height = float(line_split[-1])
                    if roi_width == 0 or roi_height == 0:
                        continue
                    dataset.append([roi_width, roi_height])
        return np.array(dataset)


    data = load_dataset(ROOT_PATH)
    out = kmeans(data, k=CLUSTERS)
    print(out)
    print("Accuracy: {:.2f}%".format(avg_iou(data, out) * 100))
    print("Boxes:\n {}-{}".format(out[:, 0] * SIZE, out[:, 1] * SIZE))

    ratios = np.around(out[:, 0] / out[:, 1], decimals=2).tolist()
    print("Ratios:\n {}".format(sorted(ratios)))

Running it produces output like the following:

[[0.21203704 0.02708333]

[0.34351852 0.09375 ]

[0.35185185 0.06388889]

[0.29513889 0.06597222]

[0.24652778 0.06597222]

[0.24861111 0.05347222]]

Accuracy: 89.58%

Boxes:

[135.7037037 219.85185185 225.18518519 188.88888889 157.77777778

159.11111111]-[17.33333333 60. 40.88888889 42.22222222 42.22222222 34.22222222]

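The Boxes values are the anchor parameters: the first array holds the widths and the second the heights, both in pixels. Pairing each width with its height and truncating to integers, the result above gives the following anchors setting: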

anchors = 135,17, 219,60, 225,40, 188,42, 157,42, 159,34
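The pairing and truncation can be automated. The sketch below reproduces the anchors line from the cluster centers printed above; the function `format_anchors` is hypothetical. The optional `sort_by_area` flag is an addition of mine, not something the original steps do: darknet's stock cfg files list anchors from small to large and the mask entries in each [yolo] layer select anchors by index, so a sorted list may be preferable.

```python
def format_anchors(clusters, size, sort_by_area=False):
    """Scale normalized (w, h) clusters to pixels and join them darknet-style."""
    # int() truncates, which matches how the values are rounded in the text
    boxes = [(int(w * size), int(h * size)) for w, h in clusters]
    if sort_by_area:
        boxes.sort(key=lambda wh: wh[0] * wh[1])
    return ", ".join("%d,%d" % wh for wh in boxes)


# Cluster centers copied from the run shown above
clusters = [
    [0.21203704, 0.02708333],
    [0.34351852, 0.09375],
    [0.35185185, 0.06388889],
    [0.29513889, 0.06597222],
    [0.24652778, 0.06597222],
    [0.24861111, 0.05347222],
]
print("anchors = " + format_anchors(clusters, 640))
print("anchors = " + format_anchors(clusters, 640, sort_by_area=True))
```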

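7. Train

In the myData folder, create a myData.names file (the label file) with one class name per line, in the same order as the classes list in my_lables.py: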

car

foot

people
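Each line of a generated label file starts with a class id that indexes into myData.names, followed by four normalized values. Before training, a quick consistency check along the following lines can catch mismatches. This is a sketch; `parse_label_line` and the sample line are made up for illustration.

```python
def parse_label_line(line, num_classes):
    """Parse one YOLO label line and validate it against the number of classes."""
    parts = line.split()
    if len(parts) != 5:
        raise ValueError("expected 5 fields, got %d" % len(parts))
    cls_id = int(parts[0])
    values = tuple(float(v) for v in parts[1:])
    if not 0 <= cls_id < num_classes:
        raise ValueError("class id %d is outside 0..%d" % (cls_id, num_classes - 1))
    if any(v < 0.0 or v > 1.0 for v in values):
        raise ValueError("box values must be normalized to [0, 1]")
    return cls_id, values


names = ["car", "foot", "people"]  # contents of myData.names
cls_id, box = parse_label_line("1 0.31 0.31 0.3125 0.41\n", len(names))
print(names[cls_id], box)
```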

Generate the pretrained weights (tiny)

wget https://pjreddie.com/media/files/yolov3-tiny.weights  # skip this if you already have the file

./darknet partial ./cfg/yolov3-tiny.cfg ./yolov3-tiny.weights ./yolov3-tiny.conv.15 15

Or download pretrained weights directly

wget https://pjreddie.com/media/files/darknet53.conv.74

Start training (I use the tiny model)

./darknet detector train ./cfg/my_data.data ./cfg/yolov3-tiny-car.cfg ./yolov3-tiny.conv.15

Or train on specific GPUs (GPU 0 is used by default)

./darknet detector train cfg/my_data.data cfg/yolov3-tiny-car.cfg darknet53.conv.74 -gpus 0,1,2,3

Resume training from where it stopped by passing the backup file in place of the pretrained weights

./darknet detector train cfg/my_data.data cfg/yolov3-tiny-car.cfg backup/my_yolov3.backup -gpus 0,1,2,3

Test

./darknet detector test cfg/my_data.data cfg/yolov3-tiny-car.cfg backup/yolov3-tiny-mydata_900.weights 2.jpg
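If you launch training from a script, the command lines above can be assembled as an argument list, which avoids typos in the flag names. This is only a sketch: `train_command` is a hypothetical helper, and it does nothing more than build the list.

```python
def train_command(data_cfg, net_cfg, weights, gpus=None):
    """Build the argument list for ./darknet detector train."""
    cmd = ["./darknet", "detector", "train", data_cfg, net_cfg, weights]
    if gpus:
        cmd += ["-gpus", ",".join(str(g) for g in gpus)]
    return cmd


fresh = train_command("cfg/my_data.data", "cfg/yolov3-tiny-car.cfg", "yolov3-tiny.conv.15")
resume = train_command("cfg/my_data.data", "cfg/yolov3-tiny-car.cfg",
                       "backup/my_yolov3.backup", gpus=[0, 1, 2, 3])
print(" ".join(fresh))
print(" ".join(resume))
```

The resulting list can be passed to subprocess.run(cmd, check=True) from the darknet directory.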

Done