Andrew Ng Assignment 9: Recognizing hand-sign digits with a convolutional neural network (TensorFlow)

Dataset link: https://download.csdn.net/download/fanzonghao/10551018

The helper code for loading the dataset is in cnn_utils.py.

import math
import numpy as np
import h5py
import matplotlib.pyplot as plt
import tensorflow as tf
from tensorflow.python.framework import ops

def load_dataset():
    train_dataset = h5py.File('datasets/train_signs.h5', "r")
    train_set_x_orig = np.array(train_dataset["train_set_x"][:])  # your train set features
    train_set_y_orig = np.array(train_dataset["train_set_y"][:])  # your train set labels

    test_dataset = h5py.File('datasets/test_signs.h5', "r")
    test_set_x_orig = np.array(test_dataset["test_set_x"][:])  # your test set features
    test_set_y_orig = np.array(test_dataset["test_set_y"][:])  # your test set labels

    classes = np.array(test_dataset["list_classes"][:])  # the list of classes

    train_set_y_orig = train_set_y_orig.reshape((1, train_set_y_orig.shape[0]))
    test_set_y_orig = test_set_y_orig.reshape((1, test_set_y_orig.shape[0]))

    return train_set_x_orig, train_set_y_orig, test_set_x_orig, test_set_y_orig, classes

def random_mini_batches(X, Y, mini_batch_size = 64, seed = 0):
    """
    Creates a list of random minibatches from (X, Y)

    Arguments:
    X -- input data, of shape (m, Hi, Wi, Ci)
    Y -- true "label" matrix (one one-hot row per example), of shape (m, n_y)
    mini_batch_size -- size of the mini-batches, integer
    seed -- this is only for the purpose of grading, so that your "random" minibatches are the same as ours.

    Returns:
    mini_batches -- list of synchronous (mini_batch_X, mini_batch_Y)
    """
    m = X.shape[0]  # number of training examples
    mini_batches = []
    np.random.seed(seed)

    # Step 1: Shuffle (X, Y) with the same permutation so images and labels stay aligned
    permutation = list(np.random.permutation(m))
    shuffled_X = X[permutation,:,:,:]
    shuffled_Y = Y[permutation,:]

    # Step 2: Partition (shuffled_X, shuffled_Y). Minus the end case.
    num_complete_minibatches = math.floor(m/mini_batch_size)  # number of mini batches of size mini_batch_size in your partitioning
    for k in range(0, num_complete_minibatches):
        mini_batch_X = shuffled_X[k * mini_batch_size : k * mini_batch_size + mini_batch_size,:,:,:]
        mini_batch_Y = shuffled_Y[k * mini_batch_size : k * mini_batch_size + mini_batch_size,:]
        mini_batch = (mini_batch_X, mini_batch_Y)
        mini_batches.append(mini_batch)

    # Handling the end case (last mini-batch < mini_batch_size)
    if m % mini_batch_size != 0:
        mini_batch_X = shuffled_X[num_complete_minibatches * mini_batch_size : m,:,:,:]
        mini_batch_Y = shuffled_Y[num_complete_minibatches * mini_batch_size : m,:]
        mini_batch = (mini_batch_X, mini_batch_Y)
        mini_batches.append(mini_batch)

    return mini_batches
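
To make the partitioning step concrete, here is a minimal sketch that reproduces only the size arithmetic of random_mini_batches, without any image data. The helper name mini_batch_sizes is hypothetical and is not part of cnn_utils.py; it assumes the same rule as the function above (full batches first, then one smaller batch for the remainder) and uses 1080, the training set size reported for this dataset.

```python
def mini_batch_sizes(m, mini_batch_size=64):
    """Return the sizes of the mini-batches produced for m examples.

    Hypothetical helper for illustration only: it mirrors the slicing
    logic of random_mini_batches but tracks batch sizes instead of data.
    """
    num_complete = m // mini_batch_size      # number of full-size batches
    sizes = [mini_batch_size] * num_complete
    remainder = m % mini_batch_size          # examples left for the end case
    if remainder != 0:
        sizes.append(remainder)
    return sizes


sizes_64 = mini_batch_sizes(1080, 64)
sizes_32 = mini_batch_sizes(1080, 32)
print(len(sizes_64), sizes_64[-1])  # number of batches and size of the last one
print(len(sizes_32), sizes_32[-1])
```

Because the last batch is smaller, the number of batches is the length of the returned list rather than m divided by the batch size, which matters when averaging the cost over batches.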

def convert_to_one_hot(Y, C):
    Y = np.eye(C)[Y.reshape(-1)].T
    return Y
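
The one-liner in convert_to_one_hot is compact, so a small worked example may help. The sketch below repeats the function and applies it to a few made-up labels (the label values are assumptions chosen for illustration, not taken from the dataset).

```python
import numpy as np


def convert_to_one_hot(Y, C):
    # Row i of np.eye(C) is the one-hot vector for class i. Indexing with the
    # flattened labels picks one row per example; .T then puts the examples
    # in columns, so the result has shape (C, m).
    return np.eye(C)[Y.reshape(-1)].T


labels = np.array([[1, 0, 4, 5]])         # made-up labels, shape (1, 4)
one_hot = convert_to_one_hot(labels, 6)   # shape (6, 4)
print(one_hot.shape)
print(one_hot.T)                          # shape (4, 6): one row per example
```

The training script in this post transposes the result again so that each example is a row of shape (6,), which matches the (None, n_y) label placeholder.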

def forward_propagation_for_predict(X, parameters):
    """
    Implements the forward propagation for the model: LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SOFTMAX

    Arguments:
    X -- input dataset placeholder, of shape (input size, number of examples)
    parameters -- python dictionary containing your parameters "W1", "b1", "W2", "b2", "W3", "b3"
                  the shapes are given in initialize_parameters

    Returns:
    Z3 -- the output of the last LINEAR unit
    """
    # Retrieve the parameters from the dictionary "parameters"
    W1 = parameters['W1']
    b1 = parameters['b1']
    W2 = parameters['W2']
    b2 = parameters['b2']
    W3 = parameters['W3']
    b3 = parameters['b3']

    # Numpy Equivalents:
    Z1 = tf.add(tf.matmul(W1, X), b1)   # Z1 = np.dot(W1, X) + b1
    A1 = tf.nn.relu(Z1)                 # A1 = relu(Z1)
    Z2 = tf.add(tf.matmul(W2, A1), b2)  # Z2 = np.dot(W2, A1) + b2
    A2 = tf.nn.relu(Z2)                 # A2 = relu(Z2)
    Z3 = tf.add(tf.matmul(W3, A2), b3)  # Z3 = np.dot(W3, A2) + b3

    return Z3

def predict(X, parameters):
    W1 = tf.convert_to_tensor(parameters["W1"])
    b1 = tf.convert_to_tensor(parameters["b1"])
    W2 = tf.convert_to_tensor(parameters["W2"])
    b2 = tf.convert_to_tensor(parameters["b2"])
    W3 = tf.convert_to_tensor(parameters["W3"])
    b3 = tf.convert_to_tensor(parameters["b3"])

    params = {"W1": W1,
              "b1": b1,
              "W2": W2,
              "b2": b2,
              "W3": W3,
              "b3": b3}

    # A single flattened 64*64*3 image as a column vector
    x = tf.placeholder("float", [12288, 1])

    z3 = forward_propagation_for_predict(x, params)
    p = tf.argmax(z3)

    # Use a context manager so the session is closed after the prediction
    with tf.Session() as sess:
        prediction = sess.run(p, feed_dict = {x: X})

    return prediction

#def predict(X, parameters):

#

# W1 = tf.convert_to_tensor(parameters["W1"])

# b1 = tf.convert_to_tensor(parameters["b1"])

# W2 = tf.convert_to_tensor(parameters["W2"])

# b2 = tf.convert_to_tensor(parameters["b2"])

## W3 = tf.convert_to_tensor(parameters["W3"])

## b3 = tf.convert_to_tensor(parameters["b3"])

#

## params = {"W1": W1,

## "b1": b1,

## "W2": W2,

## "b2": b2,

## "W3": W3,

## "b3": b3}

#

# params = {"W1": W1,

# "b1": b1,

# "W2": W2,

# "b2": b2}

#

# x = tf.placeholder("float", [12288, 1])

#

# z3 = forward_propagation(x, params)

# p = tf.argmax(z3)

#

# with tf.Session() as sess:

# prediction = sess.run(p, feed_dict = {x: X})

#

# return prediction

To inspect the dataset, run the following code:

import cnn_utils

import cv2

train_set_x_orig, train_set_Y, test_set_x_orig, test_set_Y, classes = cnn_utils.load_dataset()

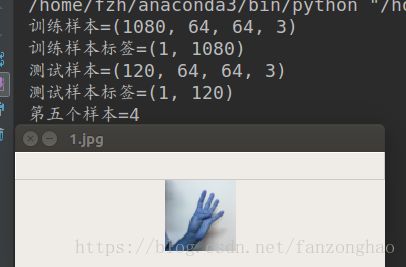

print('训练样本={}'.format(train_set_x_orig.shape))

print('训练样本标签={}'.format(train_set_Y.shape))

print('测试样本={}'.format(test_set_x_orig.shape))

print('测试样本标签={}'.format(test_set_Y.shape))

print('第五个样本={}'.format(train_set_Y[0,5]))

cv2.imshow('1.jpg',train_set_x_orig[5,:,:,:]/255)

cv2.waitKey()

train_set_x_flatten = train_set_x_orig.reshape(train_set_x_orig.shape[0],

train_set_x_orig.shape[1] * train_set_x_orig.shape[2] * 3).T

test_set_x_flatten = test_set_x_orig.reshape(test_set_x_orig.shape[0],

test_set_x_orig.shape[1] * test_set_x_orig.shape[2] * 3).T

train_X = train_set_x_flatten / 255 #(12288,1080)

test_X = test_set_x_flatten / 255打印结果:训练样本数1080个,size(64*64*3),数字4代表手势数字四

Next, build the neural network. The code is as follows:

import cnn_utils
import tensorflow as tf  # written against the TensorFlow 1.x API (tf.placeholder, tf.contrib, tf.Session)
import matplotlib.pyplot as plt
import numpy as np
import h5py

"""
Define the convolution kernels
"""
def initialize_parameter():
    # W1: 4x4 filters, 3 input channels, 8 output channels
    W1 = tf.get_variable('W1',shape=[4,4,3,8],initializer=tf.contrib.layers.xavier_initializer())
    #tf.add_to_collection("losses", tf.contrib.layers.l2_regularizer(0.07)(W1))
    # W2: 2x2 filters, 8 input channels, 16 output channels
    W2 = tf.get_variable('W2', shape=[2, 2, 8, 16], initializer=tf.contrib.layers.xavier_initializer())
    #tf.add_to_collection("losses", tf.contrib.layers.l2_regularizer(0.07)(W2))
    parameters={'W1':W1,
                'W2':W2}
    return parameters

"""
Create the input and output placeholders
"""
def creat_placeholder(n_xH,n_xW,n_C0,n_y):
    # None leaves the batch dimension unspecified
    X=tf.placeholder(tf.float32,shape=(None,n_xH,n_xW,n_C0))
    Y = tf.placeholder(tf.float32, shape=(None, n_y))
    return X,Y

"""

传播过程

"""

def forward_propagation(X,parameters):

W1=parameters['W1']

W2 = parameters['W2']

Z1=tf.nn.conv2d(X,W1,strides=[1,1,1,1],padding='SAME')

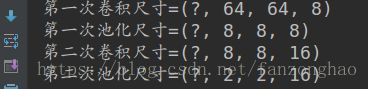

print('第一次卷积尺寸={}'.format(Z1.shape))

A1=tf.nn.relu(Z1)

P1 = tf.nn.max_pool(A1, ksize=[1,8,8,1], strides=[1, 8, 8, 1], padding='VALID')

print('第一次池化尺寸={}'.format(P1.shape))

Z2 = tf.nn.conv2d(P1, W2, strides=[1, 1, 1, 1], padding='SAME')

print('第二次卷积尺寸={}'.format(Z2.shape))

A2 = tf.nn.relu(Z2)

P2 = tf.nn.max_pool(A2, ksize=[1, 4, 4, 1], strides=[1, 4, 4, 1], padding='VALID')

print('第二次池化尺寸={}'.format(P2.shape))

P_flatten=tf.contrib.layers.flatten(P2)

Z3=tf.contrib.layers.fully_connected(P_flatten,6,activation_fn=None)

return Z3

"""

计算损失值

"""

def compute_cost(Z3,Y):

cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits_v2(logits=Z3, labels=Y))

return cost

"""

模型应用过程

"""

def model(learning_rate,num_pochs,minibatch_size):

train_set_x_orig, train_y_orig, test_set_x_orig, test_y_orig, classes=cnn_utils.load_dataset()

train_x = train_set_x_orig / 255

test_x = test_set_x_orig / 255

# 转换成one-hot

train_y=cnn_utils.convert_to_one_hot(train_y_orig,6).T

test_y = cnn_utils.convert_to_one_hot(test_y_orig, 6).T

m,n_xH, n_xW, n_C0=train_set_x_orig.shape

n_y=train_y.shape[1]

X, Y = creat_placeholder(n_xH, n_xW, n_C0, n_y)

parameters = initialize_parameter()

Z3 = forward_propagation(X, parameters)

cost = compute_cost(Z3, Y)

##带正则项误差

# tf.add_to_collection("losses", cost)

# loss = tf.add_n(tf.get_collection('losses'))

optimizer=tf.train.AdamOptimizer(learning_rate=learning_rate).minimize(cost)

init = tf.global_variables_initializer()

costs=[]

with tf.Session() as sess:

sess.run(init)

for epoch in range(num_pochs):

minibatch_cost=0

num_minibatches=int(m/minibatch_size)

minibatchs=cnn_utils.random_mini_batches(train_x,train_y,)

for minibatch in minibatchs:

(mini_batch_X, mini_batch_Y)=minibatch

_,temp_cost = sess.run([optimizer,cost], feed_dict={X:mini_batch_X , Y: mini_batch_Y})

minibatch_cost+=temp_cost/num_minibatches

if epoch%5==0:

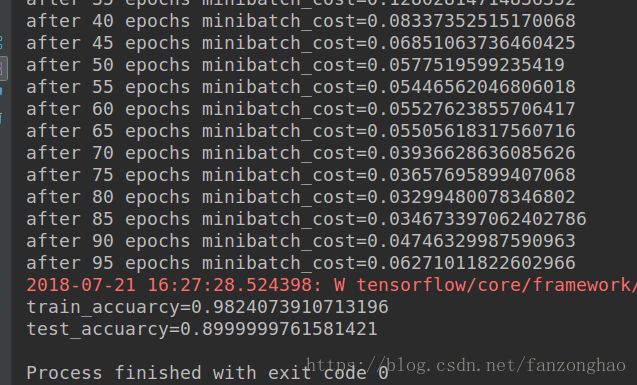

print('after {} epochs minibatch_cost={}'.format(epoch,minibatch_cost))

costs.append(minibatch_cost)

#predict_y=tf.argmax(Z3,1)####1 represent hang zuida

corect_prediction=tf.equal(tf.argmax(Z3,1),tf.argmax(Y,1))

accuarcy=tf.reduce_mean(tf.cast(corect_prediction,'float'))

train_accuarcy=sess.run(accuarcy,feed_dict={X:train_x,Y:train_y})

test_accuarcy = sess.run(accuarcy, feed_dict={X: test_x, Y: test_y})

print('train_accuarcy={}'.format(train_accuarcy))

print('test_accuarcy={}'.format(test_accuarcy))

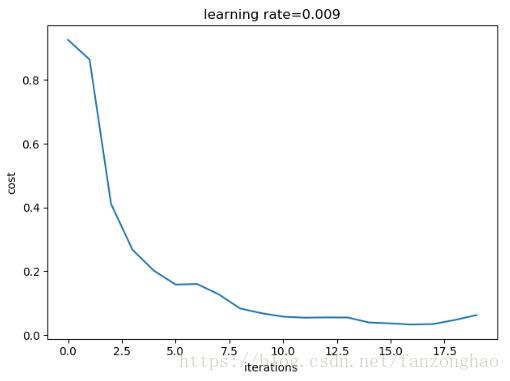

plt.plot(np.squeeze(costs))

plt.ylabel('cost')

plt.xlabel('iterations ')

plt.title('learning rate={}'.format(learning_rate))

plt.show()

def test_model():

model(learning_rate=0.009,num_pochs=100,minibatch_size=32)

def test():

########test forward

# init = tf.global_variables_initializer()

# sess = tf.Session()

# sess.run(init)

with tf.Session() as sess:

X,Y=creat_placeholder(64,64,3,6)

parameters=initialize_parameter()

Z3=forward_propagation(X,parameters)

cost=compute_cost(Z3,Y)

init = tf.global_variables_initializer()

sess.run(init)

Z3,cost=sess.run([Z3,cost],feed_dict={X:np.random.randn(2,64,64,3),Y:np.random.randn(2,6)})

print('Z3={}'.format(Z3))

print('cost={}'.format(cost))

################

if __name__=='__main__':

#test()

test_model()打印结果:

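Here ? stands for the number of samples (the batch dimension). The output of the last pooling layer has shape (2, 2, 16), which is then flattened and fed into the fully connected layer.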
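
The (2, 2, 16) shape can be checked by hand. The sketch below applies TensorFlow's documented output-size rules for 'SAME' and 'VALID' padding to the layer settings used in forward_propagation. The helper name out_size is hypothetical, introduced here only for illustration.

```python
import math


def out_size(n, f, s, padding):
    """Spatial output size for input size n, window size f and stride s."""
    if padding == 'SAME':
        return math.ceil(n / s)             # SAME: depends only on the stride
    if padding == 'VALID':
        return math.ceil((n - f + 1) / s)   # VALID: no padding is added
    raise ValueError('unknown padding: {}'.format(padding))


n = 64                            # input images are 64 x 64 x 3
n = out_size(n, 4, 1, 'SAME')     # conv, 4x4 filters, stride 1
n = out_size(n, 8, 8, 'VALID')    # max pool, 8x8 window, stride 8
n = out_size(n, 2, 1, 'SAME')     # conv, 2x2 filters, stride 1
n = out_size(n, 4, 4, 'VALID')    # max pool, 4x4 window, stride 4

final_shape = (n, n, 16)          # 16 channels from the second set of filters
flattened = n * n * 16            # input width of the fully connected layer
print(final_shape, flattened)
```

Under these rules the spatial size goes 64, 64, 8, 8, 2, so the flattened vector passed to the fully connected layer has 2 * 2 * 16 = 64 entries per sample.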

The training accuracy is 0.98 and the test accuracy is 0.89, which is not bad; there is still room for further optimization.