PyTorch Source Code Study, Part 7: torchvision.models.googlenet

0. Background

torchvision.models.googlenet source code

GoogLeNet paper

I. Source code

import torch
import torch.nn as nn
import torch.nn.functional as F
import warnings
from collections import namedtuple
from torch.hub import load_state_dict_from_url

__all__ = ['GoogLeNet', 'googlenet']

model_urls = {
    # GoogLeNet ported from TensorFlow
    'googlenet': 'https://download.pytorch.org/models/googlenet-1378be20.pth',
}

_GoogLeNetOuputs = namedtuple('GoogLeNetOuputs', ['logits', 'aux_logits2', 'aux_logits1'])

class BasicBlock(nn.Module):
    # Conv2d -> BatchNorm2d -> ReLU

    def __init__(self, in_channels, out_channels, **kwargs):
        super(BasicBlock, self).__init__()
        self.conv = nn.Conv2d(in_channels, out_channels, bias=False, **kwargs)
        self.bn = nn.BatchNorm2d(out_channels, eps=0.001)

    def forward(self, x):
        x = self.conv(x)
        x = self.bn(x)
        return F.relu(x, inplace=True)

class Inception(nn.Module):

    def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
        super(Inception, self).__init__()
        # Branch 1: 1x1 convolution
        self.branch1 = BasicBlock(in_channels, ch1x1, kernel_size=1)
        # Branch 2: 1x1 reduction followed by a 3x3 convolution
        self.branch2 = nn.Sequential(
            BasicBlock(in_channels, ch3x3red, kernel_size=1),
            BasicBlock(ch3x3red, ch3x3, kernel_size=3, padding=1)
        )
        # Branch 3: 1x1 reduction followed by the "5x5" convolution.
        # Note: the kernel here is 3x3, as in the torchvision source,
        # although the parameter names refer to the paper's 5x5 branch.
        self.branch3 = nn.Sequential(
            BasicBlock(in_channels, ch5x5red, kernel_size=1),
            BasicBlock(ch5x5red, ch5x5, kernel_size=3, padding=1)
        )
        # Branch 4: 3x3 max pooling followed by a 1x1 projection
        self.branch4 = nn.Sequential(
            nn.MaxPool2d(kernel_size=3, stride=1, padding=1, ceil_mode=True),
            BasicBlock(in_channels, pool_proj, kernel_size=1)
        )

    def forward(self, x):
        branch1 = self.branch1(x)
        branch2 = self.branch2(x)
        branch3 = self.branch3(x)
        branch4 = self.branch4(x)
        outputs = [branch1, branch2, branch3, branch4]
        # Concatenate along the channel dimension
        return torch.cat(outputs, 1)
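Because the four branches are concatenated along dimension 1, the number of output channels of an Inception block should be ch1x1 + ch3x3 + ch5x5 + pool_proj; the two reduction widths are internal only. The following is a small pure-Python sketch that checks this for the block configurations used in GoogLeNet.__init__. The helper name `inception_out_channels` and the `configs` list are made up for illustration and are not part of torchvision.

```python
def inception_out_channels(ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
    """Channels after torch.cat(..., 1): the sum of the four branch outputs.

    ch3x3red and ch5x5red are internal reduction widths and do not
    contribute to the output channel count.
    """
    return ch1x1 + ch3x3 + ch5x5 + pool_proj


# (name, in_channels, (ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj)),
# copied from the Inception(...) calls in the listing.
configs = [
    ("inception3a", 192, (64, 96, 128, 16, 32, 32)),
    ("inception3b", 256, (128, 128, 192, 32, 96, 64)),
    ("inception4a", 480, (192, 96, 208, 16, 48, 64)),
    ("inception4b", 512, (160, 112, 224, 24, 64, 64)),
    ("inception4c", 512, (128, 128, 256, 24, 64, 64)),
    ("inception4d", 512, (112, 144, 288, 32, 64, 64)),
    ("inception4e", 528, (256, 160, 320, 32, 128, 128)),
    ("inception5a", 832, (256, 160, 320, 32, 128, 128)),
    ("inception5b", 832, (384, 192, 384, 48, 128, 128)),
]

out_channels = {name: inception_out_channels(*widths) for name, _, widths in configs}

# Each block must accept exactly what the previous block produces
# (the max-pooling layers in between do not change the channel count).
chain_ok = all(
    out_channels[prev_name] == in_channels
    for (prev_name, _, _), (_, in_channels, _) in zip(configs, configs[1:])
)

for name, channels in out_channels.items():
    print(name, channels)
print("chain consistent:", chain_ok)
```

The last block produces 1024 channels, which matches the input size of the final nn.Linear(1024, num_classes) layer.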

class InceptionAux(nn.Module):

    def __init__(self, in_channels, num_classes):
        super(InceptionAux, self).__init__()
        self.conv = BasicBlock(in_channels, 128, kernel_size=1)
        self.fc1 = nn.Linear(2048, 1024)
        self.fc2 = nn.Linear(1024, num_classes)

    def forward(self, x):
        # aux1: N x 512 x 14 x 14, aux2: N x 528 x 14 x 14
        x = F.adaptive_avg_pool2d(x, (4, 4))
        # aux1: N x 512 x 4 x 4, aux2: N x 528 x 4 x 4
        x = self.conv(x)
        # N x 128 x 4 x 4
        x = x.view(x.size(0), -1)
        # N x 2048
        x = self.fc1(x)
        # N x 1024
        x = F.relu(x, inplace=True)
        x = F.dropout(x, 0.7, training=self.training)
        # N x 1024
        x = self.fc2(x)
        # N x num_classes
        return x

class GoogLeNet(nn.Module):

def __init__(self, num_classes=1000, aux_logits=True, transform_input=False, init_weights=True):

super(GoogLeNet, self).__init__()

self.aux_logits = aux_logits

self.transform_input = transform_input

self.conv1 = BasicBlock(3, 64, kernel_size=7, stride=2, padding=3)

self.maxpool1 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.conv2 = BasicBlock(64, 64, kernel_size=1)

self.conv3 = BasicBlock(64, 192, kernel_size=3, padding=1)

self.maxpool2 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

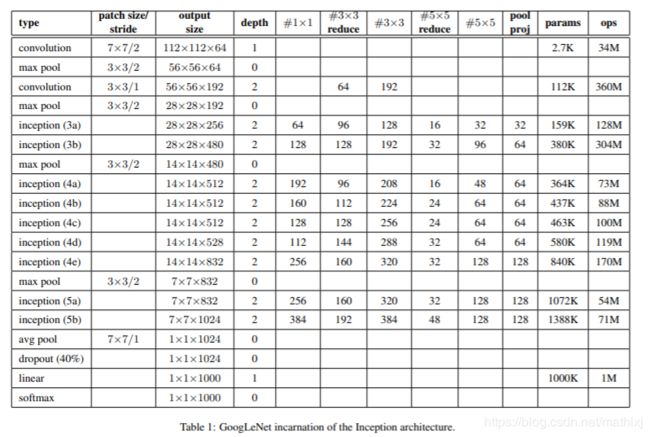

self.inception3a = Inception(192, 64, 96, 128, 16, 32, 32) #64+128+32+32=256

self.inception3b = Inception(256, 128, 128, 192, 32, 96, 64) #128+192+96+64=480

self.maxpool3 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.inception4a = Inception(480, 192, 96, 208, 16, 48, 64) # 192+208+48+64=512

self.inception4b = Inception(512, 160, 112, 224, 24, 64, 64)#160+224+64+64=512

self.inception4c = Inception(512, 128, 128, 256, 24, 64, 64)#128+256_64+64=512

self.inception4d = Inception(512, 112, 144, 288, 32, 64, 64)#112+288+64+64=528

self.inception4e = Inception(528, 256, 160, 320, 32, 128, 128)#256+320+128+128=832

self.maxpool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

self.inception5a = Inception(832, 256, 160, 320, 32, 128, 128)#256+320+128+128=832

self.inception5b = Inception(832, 384, 192, 384, 48, 128, 128)#384+384+128+128=1024

if aux_logits:

self.aux1 = InceptionAux(512, num_classes)

self.aux2 = InceptionAux(528, num_classes)

self.avgpool = nn.AdaptiveAvgPool2d((1, 1))

self.dropout = nn.Dropout(0.2)

self.fc = nn.Linear(1024, num_classes)

if init_weights:

self._initialize_weights()

    def _initialize_weights(self):
        for m in self.modules():
            if isinstance(m, nn.Conv2d) or isinstance(m, nn.Linear):
                import scipy.stats as stats
                # Truncated normal in [-2, 2] standard deviations, scaled to std 0.01
                X = stats.truncnorm(-2, 2, scale=0.01)
                values = torch.as_tensor(X.rvs(m.weight.numel()), dtype=m.weight.dtype)
                values = values.view(m.weight.size())
                with torch.no_grad():
                    m.weight.copy_(values)
            elif isinstance(m, nn.BatchNorm2d):
                nn.init.constant_(m.weight, 1)
                nn.init.constant_(m.bias, 0)

    def forward(self, x):
        if self.transform_input:
            x_ch0 = torch.unsqueeze(x[:, 0], 1) * (0.229 / 0.5) + (0.485 - 0.5) / 0.5
            x_ch1 = torch.unsqueeze(x[:, 1], 1) * (0.224 / 0.5) + (0.456 - 0.5) / 0.5
            x_ch2 = torch.unsqueeze(x[:, 2], 1) * (0.225 / 0.5) + (0.406 - 0.5) / 0.5
            x = torch.cat((x_ch0, x_ch1, x_ch2), 1)

        # N x 3 x 224 x 224
        x = self.conv1(x)
        # N x 64 x 112 x 112
        x = self.maxpool1(x)
        # N x 64 x 56 x 56
        x = self.conv2(x)
        # N x 64 x 56 x 56
        x = self.conv3(x)
        # N x 192 x 56 x 56
        x = self.maxpool2(x)

        # N x 192 x 28 x 28
        x = self.inception3a(x)
        # N x 256 x 28 x 28
        x = self.inception3b(x)
        # N x 480 x 28 x 28
        x = self.maxpool3(x)
        # N x 480 x 14 x 14
        x = self.inception4a(x)
        # N x 512 x 14 x 14
        if self.training and self.aux_logits:
            aux1 = self.aux1(x)

        x = self.inception4b(x)
        # N x 512 x 14 x 14
        x = self.inception4c(x)
        # N x 512 x 14 x 14
        x = self.inception4d(x)
        # N x 528 x 14 x 14
        if self.training and self.aux_logits:
            aux2 = self.aux2(x)

        x = self.inception4e(x)
        # N x 832 x 14 x 14
        x = self.maxpool4(x)
        # N x 832 x 7 x 7
        x = self.inception5a(x)
        # N x 832 x 7 x 7
        x = self.inception5b(x)
        # N x 1024 x 7 x 7

        x = self.avgpool(x)
        # N x 1024 x 1 x 1
        x = x.view(x.size(0), -1)
        # N x 1024
        x = self.dropout(x)
        x = self.fc(x)
        # N x 1000 (num_classes)
        if self.training and self.aux_logits:
            return _GoogLeNetOuputs(x, aux2, aux1)
        return x
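The transform_input branch at the top of forward is easy to misread. Algebraically, x * (std / 0.5) + (mean - 0.5) / 0.5 equals ((x * std + mean) - 0.5) / 0.5, so if x was normalized with the ImageNet mean and std that appear in the code, the result is the raw pixel value rescaled with mean 0.5 and std 0.5. The scalar sketch below checks that identity per channel; the function names and the sample pixel value are illustrative assumptions, not part of torchvision.

```python
# Per-channel constants that appear in GoogLeNet.forward
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)


def imagenet_normalize(raw, channel):
    """Standard ImageNet normalization of a raw pixel value in [0, 1]."""
    return (raw - MEAN[channel]) / STD[channel]


def transform_input(x, channel):
    """The per-channel expression used in forward when transform_input is True."""
    return x * (STD[channel] / 0.5) + (MEAN[channel] - 0.5) / 0.5


raw_pixel = 0.8  # arbitrary sample value
results = [
    transform_input(imagenet_normalize(raw_pixel, c), c) for c in range(3)
]
expected = (raw_pixel - 0.5) / 0.5  # rescaling with mean 0.5 and std 0.5

for c, value in enumerate(results):
    print(f"channel {c}: {value:.6f} (expected {expected:.6f})")
```

This is consistent with googlenet() defaulting transform_input to True when pretrained weights are requested, since the model_urls comment says the weights were ported from TensorFlow.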

def googlenet(pretrained=False, progress=True, **kwargs):
    if pretrained:
        if 'transform_input' not in kwargs:
            kwargs['transform_input'] = True
        if 'aux_logits' not in kwargs:
            kwargs['aux_logits'] = False
        if kwargs['aux_logits']:
            warnings.warn('auxiliary heads in the pretrained googlenet model are NOT pretrained, '
                          'so make sure to train them')
        # Always build with the auxiliary heads so the checkpoint keys match,
        # then drop them afterwards if the caller did not ask for them
        original_aux_logits = kwargs['aux_logits']
        kwargs['aux_logits'] = True
        kwargs['init_weights'] = False
        model = GoogLeNet(**kwargs)
        state_dict = load_state_dict_from_url(model_urls['googlenet'],
                                              progress=progress)
        model.load_state_dict(state_dict)
        if not original_aux_logits:
            model.aux_logits = False
            del model.aux1, model.aux2
        return model

    return GoogLeNet(**kwargs)
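The shape comments in forward (224 -> 112 -> 56 -> 28 -> 14 -> 7) can be checked by hand with the usual output-size formulas. The sketch below assumes the standard convolution formula floor((n + 2p - k) / s) + 1 and, for pooling with ceil_mode=True, the ceiling variant plus PyTorch's rule that the last window must start inside the input or its left padding. The helpers `conv_out` and `pool_out_ceil` are hypothetical names written for this post.

```python
def conv_out(size, kernel_size, stride=1, padding=0):
    """Spatial output size of a convolution (floor division)."""
    return (size + 2 * padding - kernel_size) // stride + 1


def pool_out_ceil(size, kernel_size, stride, padding=0):
    """Spatial output size of max pooling with ceil_mode=True."""
    # Integer ceiling of (size + 2*padding - kernel_size) / stride, then + 1
    out = -(-(size + 2 * padding - kernel_size) // stride) + 1
    # The last pooling window must start inside the input or the left padding
    if (out - 1) * stride >= size + padding:
        out -= 1
    return out


# Only the layers that can change the spatial size are listed. The Inception
# blocks keep it unchanged (1x1 convs, 3x3 convs with padding=1, and a
# stride-1 3x3 pool with padding=1).
layers = [
    ("conv1", conv_out, dict(kernel_size=7, stride=2, padding=3)),
    ("maxpool1", pool_out_ceil, dict(kernel_size=3, stride=2)),
    ("conv2", conv_out, dict(kernel_size=1)),
    ("conv3", conv_out, dict(kernel_size=3, padding=1)),
    ("maxpool2", pool_out_ceil, dict(kernel_size=3, stride=2)),
    ("maxpool3", pool_out_ceil, dict(kernel_size=3, stride=2)),
    ("maxpool4", pool_out_ceil, dict(kernel_size=2, stride=2)),
]

size = 224
trace = {"input": size}
for name, fn, kwargs in layers:
    size = fn(size, **kwargs)
    trace[name] = size

# Flattened feature size seen by InceptionAux.fc1: 128 channels on a 4 x 4 map
aux_features = 128 * 4 * 4

for name, value in trace.items():
    print(f"{name}: {value} x {value}")
print("aux fc1 input features:", aux_features)
```

With a 224 x 224 input, ceil_mode is what turns 112 into 56 at maxpool1; floor rounding would give 55 and the later sizes would no longer match the comments.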

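II. Some interesting usages

1. collections.namedtuple

The model returns the final output and the auxiliary outputs as a namedtuple, so callers can access each one by field name as well as by position.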

from collections import namedtuple

_GoogLeNetOuputs = namedtuple('GoogLeNetOuputs', ['logits', 'aux_logits2', 'aux_logits1'])

class GoogLeNet(nn.Module):
    ...
    def forward(self, x):
        ...
        if self.training and self.aux_logits:
            return _GoogLeNetOuputs(x, aux2, aux1)
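On the caller's side, a namedtuple behaves like an ordinary tuple with field names attached. The sketch below uses plain strings as stand-ins for the tensors, and the type name `Outputs` is made up for the example.

```python
from collections import namedtuple

# Same field layout as in the listing; 'Outputs' is a hypothetical name
Outputs = namedtuple('Outputs', ['logits', 'aux_logits2', 'aux_logits1'])

# Strings stand in for the tensors a real forward pass would return
out = Outputs('main', 'aux2', 'aux1')

by_name = out.logits          # access by field name
by_index = out[0]             # access by position, like a plain tuple
logits, aux2, aux1 = out      # tuple unpacking still works
as_dict = out._asdict()       # field name -> value mapping

print(by_name, by_index)
print(logits, aux2, aux1)
print(dict(as_dict))
```

Note the field order: aux_logits2 comes before aux_logits1, so positional unpacking has to follow that order.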

2. Using warnings to emit custom warnings

import warnings

if pretrained:
    if kwargs['aux_logits']:
        warnings.warn('your warnings')
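To see that such a warning is actually emitted, it can be captured with warnings.catch_warnings(record=True). The following is a minimal sketch; `build_model` is a hypothetical stand-in for googlenet() that only reproduces the warning logic and builds no network.

```python
import warnings


def build_model(pretrained=False, **kwargs):
    """Hypothetical stand-in for googlenet(): only the warning logic is kept."""
    if pretrained:
        if 'aux_logits' not in kwargs:
            kwargs['aux_logits'] = False
        if kwargs['aux_logits']:
            warnings.warn('auxiliary heads are NOT pretrained, '
                          'so make sure to train them')
    return kwargs


with warnings.catch_warnings(record=True) as caught:
    # Make sure every warning is recorded, even if it was shown before
    warnings.simplefilter('always')
    build_model(pretrained=True, aux_logits=True)   # warns
    build_model(pretrained=True)                    # aux_logits defaults to False
    build_model(pretrained=False, aux_logits=True)  # not pretrained, no warning

messages = [str(w.message) for w in caught]
categories = [w.category for w in caught]
print(messages)
```

When no category is passed, warnings.warn uses UserWarning, which is why the captured warning has that category.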