Hive的基础介绍

一、什么是Hive?

1、Hive是一个翻译器,SQL ---> Hive引擎 ---> MR程序

2、Hive是构建在HDFS上的一个数据仓库(Data Warehouse)

Hive HDFS

表 目录

分区 目录

数据 文件

桶 文件

3、Hive支持SQL(SQL99标准的一个自子集)

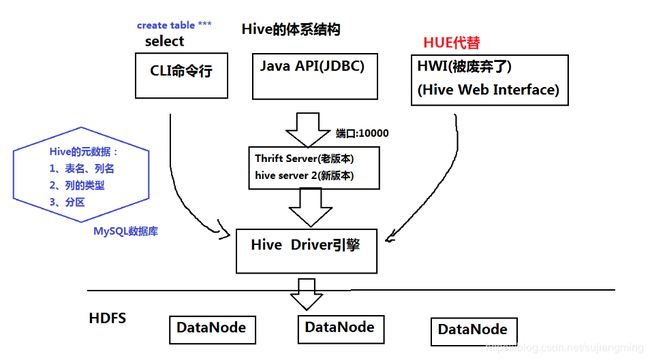

二、Hive的体系结构(画图)

三、安装和配置

解压安装到/training/目录下

tar -zxvf apache-hive-2.3.0-bin.tar.gz -C ~/training/

设置环境变量

HIVE_HOME=/root/training/apache-hive-2.3.0-bin

export HIVE_HOME

PATH=$HIVE_HOME/bin:$PATH

export PATH核心配置文件: conf/hive-site.xml

1、嵌入模式

(*)不需要MySQL的支持,使用Hive的自带的数据库Derby

(*)局限:只支持一个连接

javax.jdo.option.ConnectionURL

jdbc:derby:;databaseName=metastore_db;create=true

javax.jdo.option.ConnectionDriverName

org.apache.derby.jdbc.EmbeddedDriver

hive.metastore.local

true

hive.metastore.warehouse.dir

file:///root/training/apache-hive-2.3.0-bin/warehouse

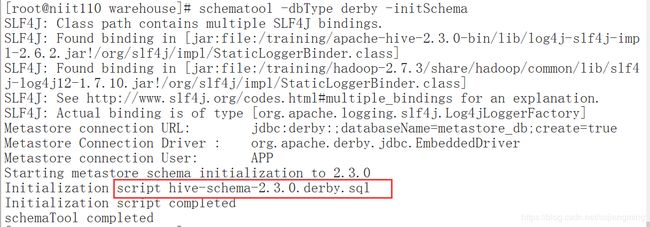

初始化Derby数据库

schematool -dbType derby -initSchema

日志

Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

2、本地模式、远程模式:需要MySQL

(*)MySQL的客户端: mysql front http://www.mysqlfront.de/

-

Hive的安装

(1)在虚拟机上安装MySQL:-

rpm -ivh mysql-community-devel-5.7.19-1.el7.x86_64.rpm (可选)

-

rpm -ivh mysql-community-server-5.7.19-1.el7.x86_64.rpm

-

rpm -ivh mysql-community-client-5.7.19-1.el7.x86_64.rpm

-

rpm -ivh mysql-community-libs-5.7.19-1.el7.x86_64.rpm

-

rpm -ivh mysql-community-common-5.7.19-1.el7.x86_64.rpm

-

yum remove mysql-libs

-

(2) 启动MySQL:service mysqld start,或者:systemctl start mysqld.service

查看root用户的密码:cat /var/log/mysqld.log | grep password

登录后修改密码:alter user 'root'@'localhost' identified by 'Sjm_123456';

| MySQL数据库的配置: 创建一个新的数据库:create database hive; 创建一个新的用户: create user 'hiveowner'@'%' identified by 'Sjm_123456'; 给该用户授权 grant all on hive.* TO 'hiveowner'@'%'; grant all on hive.* TO 'hiveowner'@'localhost' identified by 'Sjm_123456'; |

-

远程模式

-

元数据信息存储在远程的MySQL数据库中

注意一定要使用高版本的MySQL驱动(5.1.43以上的版本)

| 参数文件 |

配置参数 |

参考值 |

| hive-site.xml |

javax.jdo.option.ConnectionURL |

jdbc:mysql://localhost:3306/hive?useSSL=false |

|

|

javax.jdo.option.ConnectionDriverName |

com.mysql.jdbc.Driver |

|

|

javax.jdo.option.ConnectionUserName |

hiveowner |

|

|

javax.jdo.option.ConnectionPassword |

Welcome_1 |

-

初始化MetaStore:schematool -dbType mysql -initSchema

(*)重新创建hive-site.xml

javax.jdo.option.ConnectionURL

jdbc:mysql://localhost:3306/hive?useSSL=false

javax.jdo.option.ConnectionDriverName

com.mysql.jdbc.Driver

javax.jdo.option.ConnectionUserName

hiveowner

javax.jdo.option.ConnectionPassword

Sjm_123456

(*)将mysql的jar包放到lib目录下(上传mysql驱动包)

u注意一定要使用高版本的MySQL驱动(5.1.43以上的版本)

目录在: /training/apache-hive-2.3.0-bin/lib

(*)初始化MySQL

(*)老版本:当第一次启动HIve的时候 自动进行初始化

(*)新版本:

schematool -dbType mysql -initSchema

Starting metastore schema initialization to 2.3.0

Initialization script hive-schema-2.3.0.mysql.sql

Initialization script completed

schemaTool completed

四、Hive的数据模型(最重要的内容)

注意:默认:列的分隔符是tab键(制表符)

测试数据:员工表和部门表

7654,MARTIN,SALESMAN,7698,1981/9/28,1250,1400,30

首先看下hive在HDFS的目录结构

create database hive;

1、内部表:相当于MySQL的表 对应的HDFS的目录 /user/hive/warehouse

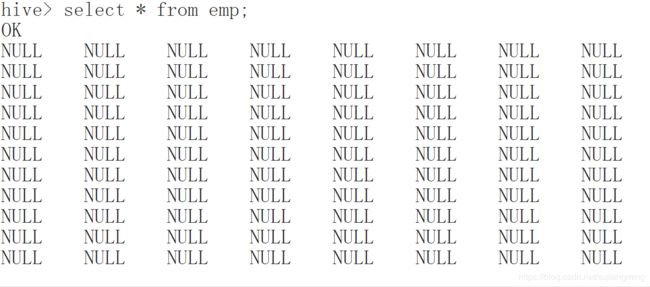

create table emp

(empno int,

ename string,

job string,

mgr int,

hiredate string,

sal int,

comm int,

deptno int);插入数据 insert、load语句

load data inpath '/scott/emp.csv' into table emp; 导入HDFS的数据 (从某个HDFS的目录,把数据导入Hive的表 本质ctrl+x)

load data local inpath '/root/temp/*****' into table emp; 导入本地Linux的数据 (把数据导入Hive的表 本质ctrl+c)

创建表的时候,一定指定分隔符

create table emp1

(empno int,

ename string,

job string,

mgr int,

hiredate string,

sal int,

comm int,

deptno int)

row format delimited fields terminated by ',';

创建部门表 并且导入数据

create table dept

(deptno int,

dname string,

loc string)

row format delimited fields terminated by ',';

2、分区表: 可以提高查询的效率的----> 通过查看SQL的执行计划

根据员工的部门号创建分区

create table emp_part

(empno int,

ename string,

job string,

mgr int,

hiredate string,

sal int,

comm int)

partitioned by (deptno int)

row format delimited fields terminated by ',';

指明导入的数据的分区(通过子查询导入数据) ----> MapReduce程序

insert into table emp_part partition(deptno=10) select empno,ename,job,mgr,hiredate,sal,comm from emp1 where deptno=10;

insert into table emp_part partition(deptno=20) select empno,ename,job,mgr,hiredate,sal,comm from emp1 where deptno=20;

insert into table emp_part partition(deptno=30) select empno,ename,job,mgr,hiredate,sal,comm from emp1 where deptno=30;hive的静默模式:hive -S 好处是控制台不会打印一些日志信息,屏幕干净清爽

如何查看SQL的执行计划呢?需要使用到关键字explain

1)、查看hive普通的表(内部表)的SQL执行计划:

explain select * from emp_1 where deptno=10;

STAGE DEPENDENCIES:

Stage-0 is a root stage

STAGE PLANS:

Stage: Stage-0

Fetch Operator

limit: -1

Processor Tree:

TableScan

alias: emp_1

Statistics: Num rows: 1 Data size: 619 Basic stats: COMPLETE Column stats: NONE

Filter Operator

predicate: (deptno = 10) (type: boolean)

Statistics: Num rows: 1 Data size: 619 Basic stats: COMPLETE Column stats: NONE

Select Operator

expressions: empno (type: int), ename (type: string), job (type: string), mgr (type: int), hiredate (type: string), sal (type: int), comm (type: int), 10 (type: int)

outputColumnNames: _col0, _col1, _col2, _col3, _col4, _col5, _col6, _col7

Statistics: Num rows: 1 Data size: 619 Basic stats: COMPLETE Column stats: NONE

ListSink2)、查看hive中的分区表的SQL执行计划

explain select * from emp_part where deptno=10;

STAGE DEPENDENCIES:

Stage-0 is a root stage

STAGE PLANS:

Stage: Stage-0

Fetch Operator

limit: -1

Processor Tree:

TableScan

alias: emp_part

Statistics: Num rows: 3 Data size: 121 Basic stats: COMPLETE Column stats: NONE

Select Operator

expressions: empno (type: int), ename (type: string), job (type: string), mgr (type: int), hiredate (type: string), sal (type: int), comm (type: int), 10 (type: int)

outputColumnNames: _col0, _col1, _col2, _col3, _col4, _col5, _col6, _col7

Statistics: Num rows: 3 Data size: 121 Basic stats: COMPLETE Column stats: NONE

ListSink如何理解或者阅读执行计划呢?

记住一个原则:从下往上,从右往左

3、外部表:本质是给HDFS上目录或者文件新建一个“快捷方式"

create external table t1

(sid int,sname string,age)

row format delimited fields terminated by ','

location '/students';注意:外部表,删除表时,数据不删。

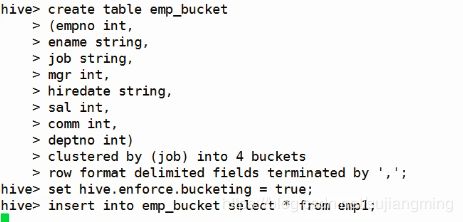

4、桶表:本质上是采用hash算法对数据进行存放,以文件的形式存在。与分区的区别在于分区是一个个目录

(*)hash分区

(*)桶表

create table emp_bucket

(empno int,

ename string,

job string,

mgr int,

hiredate string,

sal int,

comm int,

deptno int)

clustered by (job) into 4 buckets

row format delimited fields terminated by ',';注意:在插入数据到hive桶表之前必须先要设置环境变量,否则就算你插入数据了,但是hive也不会对数据进行分桶存储

登录hive,执行:hive -S

再执行如下命令:

set hive.enforce.bucketing = true;

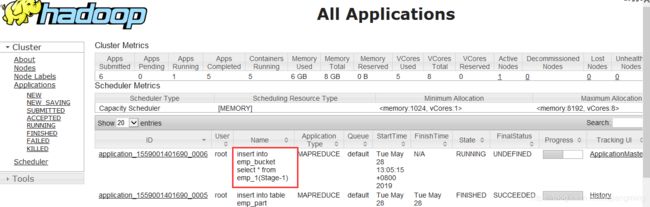

如图所示:

通过子查询的方式插入数据:

insert into emp_bucket select * from emp_1;这句语句会被转换成MR程序执行:

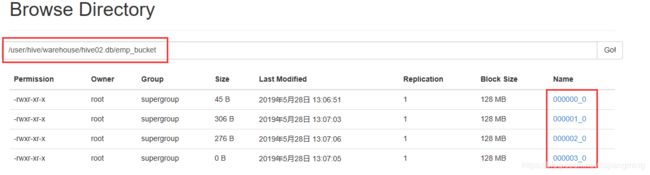

当执行完毕后,我们来看在HDFS中的hive的桶表的目录结构:

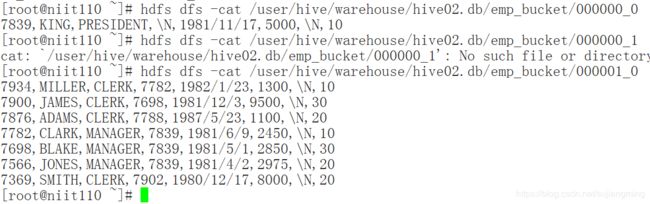

数据被分别存储在四个不同的桶上,你可以随便查看某个文件的内容:

hdfs dfs -cat /user/hive/warehouse/hive02.db/emp_bucket/000000_0

5、视图:view 虚表

(1) 视图不存数据 视图依赖的表叫基表

(2) 操作视图 跟操作表 一样

(3) 视图可以提高查询的效率吗?

不可以、视图是简化复杂的查询

(4) 举例 查询员工信息:部门名称 员工姓名

create view myview

as

select dept.dname,emp1.ename

from emp1,dept

where emp1.deptno=dept.deptno;

一些操作:

hive中表

-------------------

1.managed table

托管表。

删除表时,数据也删除了。

2.external table

外部表。

删除表时,数据不删。

hive命令

---------------

//创建表,external 外部表

CREATE external TABLE IF NOT EXISTS t2(id int,name string,age int)COMMENT 'xx' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' STORED AS TEXTFILE ;//查看表数据

desc t2 ;desc formatted t2 ;//加载数据到hive表

load data local inpath '/home/centos/customers.txt' into table t2 ; //local上传文件load data inpath '/user/centos/customers.txt' [overwrite] into table t2 ; //移动文件//复制表

mysql>create table tt as select * from users ; //携带数据和表结构

mysql>create table tt like users ; //不带数据,只有表结构

hive>create table tt as select * from users ;

hive>create table tt like users ;

//count()查询要转成mr

$hive>select count(*) from t2 ;

$hive>select id,name from t2 ;

$hive>select * from t2 order by id desc ; //MR

//启用/禁用表

ALTER TABLE t2 ENABLE NO_DROP; //不允许删除

ALTER TABLE t2 DISABLE NO_DROP; //允许删除//分区表,优化手段之一,从目录的层面控制搜索数据的范围。

//创建分区表.

CREATE TABLE t3(id int,name string,age int) PARTITIONED BY (Year INT, Month INT) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' ;//显式表的分区信息

SHOW PARTITIONS t3;//添加分区,创建目录

alter table t3 add partition (year=2014, month=12);//删除分区

ALTER TABLE employee_partitioned DROP IF EXISTS PARTITION (year=2014, month=11);//分区结构

hive>/user/hive/warehouse/mydb2.db/t3/year=2014/month=11

hive>/user/hive/warehouse/mydb2.db/t3/year=2014/month=12

//加载数据到分区表

load data local inpath '/home/centos/customers.txt' into table t3 partition(year=2014,month=11);//创建桶表

CREATE TABLE t4(id int,name string,age int) CLUSTERED BY (id) INTO 3 BUCKETS ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' ;//加载数据不会进行分桶操作

load data local inpath '/home/centos/customers.txt' into table t4 ;//查询t3表数据插入到t4中。

insert into t4 select id,name,age from t3 ;//桶表的数量如何设置?

//评估数据量,保证每个桶的数据量block的2倍大小。

//连接查询

CREATE TABLE customers(id int,name string,age int) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' ;

CREATE TABLE orders(id int,orderno string,price float,cid int) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' //加载数据到表

//内连接查询

select a.*,b.* from customers a , orders b where a.id = b.cid ;//左外

select a.*,b.* from customers a left outer join orders b on a.id = b.cid ;

select a.*,b.* from customers a right outer join orders b on a.id = b.cid ;

select a.*,b.* from customers a full outer join orders b on a.id = b.cid ;//explode,炸裂,表生成函数。

//使用hive实现单词统计

//1.建表

CREATE TABLE doc(line string) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' ;五、Hive的查询

就是SQL:select ---> MapReduce

六、Hive的Java API

本质就是JDBC程序

七、Hive的自定义函数(UDF:user defined function)

本质就是一个Java程序