Setting Up a Flink High-Availability Cluster

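Contents

- 1. High-availability cluster setup

- 1.1 Upload the installation package

- 1.2 Extract

- 1.3 Rename

- 1.4 Configure environment variables

- 1.5 Edit the configuration files

- 1.5.1 masters

- 1.5.2 slaves

- 1.5.3 flink-conf.yaml

- 1.6 Copy configuration files

- 1.7 Send files to the other nodes

- 2. WordCount program

- 2.1 Java version

- 2.2 Scala version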

Node requirements:

- JDK 1.8

- Hadoop 2.7.6

- Scala 2.11.8

- ZooKeeper 3.4.10

Node allocation

| Host | JobManager | TaskManager | ZooKeeper |
|---|---|---|---|
| hadoop01 | √ | √ | √ |
| hadoop02 | √ | √ | √ |
| hadoop03 | | √ | √ |

1. High-availability cluster setup

1.1 Upload the installation package

rz -E C:/flink-1.7.2-bin-hadoop27-scala_2.11.tgz

1.2 Extract

tar -zxvf flink-1.7.2-bin-hadoop27-scala_2.11.tgz -C ~/apps/

1.3 Rename

mv ~/apps/flink-1.7.2 ~/apps/flink

1.4 Configure environment variables

vim ~/.bash_profile

export FLINK_HOME=/home/hadoop/apps/flink

export PATH=$PATH:$FLINK_HOME/bin

Reload the profile

source ~/.bash_profile
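To confirm that the profile change took effect, a small check like the one below can help. It is only a sketch: the function name `check_flink_env` is made up for illustration and is not part of Flink.

```shell
# Hypothetical helper (not part of Flink): succeeds only if FLINK_HOME is set
# and its bin directory appears as an entry in PATH.
check_flink_env() {
  if [ -z "${FLINK_HOME:-}" ]; then
    echo "FLINK_HOME is not set"
    return 1
  fi
  case ":$PATH:" in
    *":$FLINK_HOME/bin:"*)
      echo "OK: $FLINK_HOME/bin is on PATH"
      ;;
    *)
      echo "$FLINK_HOME/bin is missing from PATH"
      return 1
      ;;
  esac
}
```

Run `check_flink_env` in the same shell after `source ~/.bash_profile`; a non-zero exit status means one of the two export lines did not take effect.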

1.5 Edit the configuration files

1.5.1 masters

vi $FLINK_HOME/conf/masters

hadoop01:8081

hadoop02:8081

1.5.2 slaves

vi $FLINK_HOME/conf/slaves

hadoop01

hadoop02

hadoop03
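Instead of editing both files by hand, they can also be generated. The sketch below assumes the host names used in this guide; `write_ha_hosts` is a hypothetical helper name. Each entry in masters is a JobManager host followed by the port its web UI binds to, and each entry in slaves is a TaskManager host.

```shell
# Hypothetical helper: writes the masters and slaves files into the given
# Flink conf directory, using the host names from this guide.
write_ha_hosts() {
  conf_dir=$1
  # JobManager candidates, one "host:webui-port" per line
  printf '%s\n' hadoop01:8081 hadoop02:8081 > "$conf_dir/masters"
  # TaskManager hosts, one per line
  printf '%s\n' hadoop01 hadoop02 hadoop03 > "$conf_dir/slaves"
}
```

A typical call would be `write_ha_hosts "$FLINK_HOME/conf"`. Note that it overwrites any existing masters and slaves files in that directory.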

1.5.3 flink-conf.yaml

vi $FLINK_HOME/conf/flink-conf.yaml

################################################################################

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

################################################################################

#==============================================================================

# Common

#==============================================================================

# The external address of the host on which the JobManager runs and can be

# reached by the TaskManagers and any clients which want to connect. This setting

# is only used in Standalone mode and may be overwritten on the JobManager side

# by specifying the --host parameter of the bin/jobmanager.sh executable.

# In high availability mode, if you use the bin/start-cluster.sh script and setup

# the conf/masters file, this will be taken care of automatically. Yarn/Mesos

# automatically configure the host name based on the hostname of the node where the

# JobManager runs.

# Specify the master node. This can also be localhost, in which case whichever node starts the cluster becomes the JobManager.

jobmanager.rpc.address: hadoop01

# The RPC port where the JobManager is reachable.

jobmanager.rpc.port: 6123

# The heap size for the JobManager JVM

jobmanager.heap.size: 1024m

# The heap size for the TaskManager JVM

taskmanager.heap.size: 1024m

# The number of task slots that each TaskManager offers. Each slot runs one parallel pipeline.

taskmanager.numberOfTaskSlots: 2

# The parallelism used for programs that did not specify any other parallelism.

parallelism.default: 1

# The default file system scheme and authority.

#

# By default file paths without scheme are interpreted relative to the local

# root file system 'file:///'. Use this to override the default and interpret

# relative paths relative to a different file system,

# for example 'hdfs://mynamenode:12345'

#

# fs.default-scheme

#==============================================================================

# High Availability

#==============================================================================

# The high-availability mode. Possible options are 'NONE' or 'zookeeper'.

# Use ZooKeeper for HA coordination

high-availability: zookeeper

# The path where metadata for master recovery is persisted. While ZooKeeper stores

# the small ground truth for checkpoint and leader election, this location stores

# the larger objects, like persisted dataflow graphs.

#

# Must be a durable file system that is accessible from all nodes

# (like HDFS, S3, Ceph, nfs, ...)

#

high-availability.storageDir: hdfs://bd1906/flink172/hastorage/

# The list of ZooKeeper quorum peers that coordinate the high-availability

# setup. This must be a list of the form:

# "host1:clientPort,host2:clientPort,..." (default clientPort: 2181)

#

high-availability.zookeeper.quorum: hadoop01:2181,hadoop02:2181,hadoop03:2181

# ACL options are based on https://zookeeper.apache.org/doc/r3.1.2/zookeeperProgrammers.html#sc_BuiltinACLSchemes

# It can be either "creator" (ZOO_CREATE_ALL_ACL) or "open" (ZOO_OPEN_ACL_UNSAFE)

# The default value is "open" and it can be changed to "creator" if ZK security is enabled

#

high-availability.zookeeper.client.acl: open

#==============================================================================

# Fault tolerance and checkpointing

#==============================================================================

# The backend that will be used to store operator state checkpoints if

# checkpointing is enabled.

#

# Supported backends are 'jobmanager', 'filesystem', 'rocksdb', or the

# <class-name-of-factory>.

#

# Specify the checkpoint state backend type and the directory where checkpoint data is stored

state.backend: filesystem

state.backend.fs.checkpointdir: hdfs://bd1906/flink-checkpoints

# Directory for checkpoints filesystem, when using any of the default bundled

# state backends.

#

# state.checkpoints.dir: hdfs://namenode-host:port/flink-checkpoints

# Default target directory for savepoints, optional.

#

# state.savepoints.dir: hdfs://namenode-host:port/flink-checkpoints

# Flag to enable/disable incremental checkpoints for backends that

# support incremental checkpoints (like the RocksDB state backend).

#

# state.backend.incremental: false

#==============================================================================

# Web Frontend

#==============================================================================

# The address under which the web-based runtime monitor listens.

#

#web.address: 0.0.0.0

# The port under which the web-based runtime monitor listens.

# A value of -1 deactivates the web server.

rest.port: 8081

# Flag to specify whether job submission is enabled from the web-based

# runtime monitor. Uncomment to disable.

#web.submit.enable: false

#==============================================================================

# Advanced

#==============================================================================

# Override the directories for temporary files. If not specified, the

# system-specific Java temporary directory (java.io.tmpdir property) is taken.

#

# For framework setups on Yarn or Mesos, Flink will automatically pick up the

# containers' temp directories without any need for configuration.

#

# Add a delimited list for multiple directories, using the system directory

# delimiter (colon ':' on unix) or a comma, e.g.:

# /data1/tmp:/data2/tmp:/data3/tmp

#

# Note: Each directory entry is read from and written to by a different I/O

# thread. You can include the same directory multiple times in order to create

# multiple I/O threads against that directory. This is for example relevant for

# high-throughput RAIDs.

#

# io.tmp.dirs: /tmp

# Specify whether TaskManager's managed memory should be allocated when starting

# up (true) or when memory is requested.

#

# We recommend to set this value to 'true' only in setups for pure batch

# processing (DataSet API). Streaming setups currently do not use the TaskManager's

# managed memory: The 'rocksdb' state backend uses RocksDB's own memory management,

# while the 'memory' and 'filesystem' backends explicitly keep data as objects

# to save on serialization cost.

#

# taskmanager.memory.preallocate: false

# The classloading resolve order. Possible values are 'child-first' (Flink's default)

# and 'parent-first' (Java's default).

#

# Child first classloading allows users to use different dependency/library

# versions in their application than those in the classpath. Switching back

# to 'parent-first' may help with debugging dependency issues.

#

# classloader.resolve-order: child-first

# The amount of memory going to the network stack. These numbers usually need

# no tuning. Adjusting them may be necessary in case of an "Insufficient number

# of network buffers" error. The default min is 64MB, the default max is 1GB.

#

# taskmanager.network.memory.fraction: 0.1

# taskmanager.network.memory.min: 64mb

# taskmanager.network.memory.max: 1gb

#==============================================================================

# Flink Cluster Security Configuration

#==============================================================================

# Kerberos authentication for various components - Hadoop, ZooKeeper, and connectors -

# may be enabled in four steps:

# 1. configure the local krb5.conf file

# 2. provide Kerberos credentials (either a keytab or a ticket cache w/ kinit)

# 3. make the credentials available to various JAAS login contexts

# 4. configure the connector to use JAAS/SASL

# The below configure how Kerberos credentials are provided. A keytab will be used instead of

# a ticket cache if the keytab path and principal are set.

# security.kerberos.login.use-ticket-cache: true

# security.kerberos.login.keytab: /path/to/kerberos/keytab

# security.kerberos.login.principal: flink-user

# The configuration below defines which JAAS login contexts

# security.kerberos.login.contexts: Client,KafkaClient

#==============================================================================

# ZK Security Configuration

#==============================================================================

# Below configurations are applicable if ZK ensemble is configured for security

# Override below configuration to provide custom ZK service name if configured

# zookeeper.sasl.service-name: zookeeper

# The configuration below must match one of the values set in "security.kerberos.login.contexts"

# zookeeper.sasl.login-context-name: Client

#==============================================================================

# HistoryServer

#==============================================================================

# The HistoryServer is started and stopped via bin/historyserver.sh (start|stop)

# Directory to upload completed jobs to. Add this directory to the list of

# monitored directories of the HistoryServer as well (see below).

#jobmanager.archive.fs.dir: hdfs:///completed-jobs/

# The address under which the web-based HistoryServer listens.

#historyserver.web.address: 0.0.0.0

# The port under which the web-based HistoryServer listens.

#historyserver.web.port: 8082

# Comma separated list of directories to monitor for completed jobs.

#historyserver.archive.fs.dir: hdfs:///completed-jobs/

# Interval in milliseconds for refreshing the monitored directories.

#historyserver.archive.fs.refresh-interval: 10000
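Because most of the file consists of comments, it is easy to lose track of which settings are actually active. A minimal sketch for listing only the uncommented lines follows; `effective_conf` is a hypothetical helper name.

```shell
# Hypothetical helper: prints only the active settings of a configuration
# file by dropping comment lines and blank lines.
effective_conf() {
  grep -Ev '^[[:space:]]*(#|$)' "$1"
}
```

Calling `effective_conf "$FLINK_HOME/conf/flink-conf.yaml"` on the file above should list only the key/value pairs that were set, such as `high-availability: zookeeper` and `high-availability.zookeeper.quorum`, which makes the HA-related changes easy to review.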

1.6 Copy configuration files

Copy zoo.cfg, hdfs-site.xml, and core-site.xml into the Flink configuration directory

cp $ZOOKEEPER_HOME/conf/zoo.cfg $FLINK_HOME/conf/

cp $HADOOP_HOME/etc/hadoop/hdfs-site.xml $FLINK_HOME/conf/

cp $HADOOP_HOME/etc/hadoop/core-site.xml $FLINK_HOME/conf/

1.7 Send files to the other nodes

scp -r flink hadoop02:$PWD

scp -r flink hadoop03:$PWD

scp ~/.bash_profile hadoop02:/home/hadoop/

scp ~/.bash_profile hadoop03:/home/hadoop/

Reload the profile on all three machines

source ~/.bash_profile
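With more than two remote nodes, the copy commands are easier to manage in a loop. The sketch below only prints the commands so they can be reviewed first. It assumes that Flink lives in `~/apps/flink` and that the home directory path is the same on every node; `print_sync_commands` is a hypothetical helper name.

```shell
# Hypothetical helper: prints the copy commands for every remote host given
# as an argument instead of running them.
print_sync_commands() {
  for host in "$@"; do
    # Copy the Flink installation into the apps directory on the remote host
    echo "scp -r $HOME/apps/flink $host:$HOME/apps/"
    # Copy the profile that exports FLINK_HOME and PATH
    echo "scp $HOME/.bash_profile $host:$HOME/"
  done
}
```

Review the output of `print_sync_commands hadoop02 hadoop03`, then pipe it to `sh` to run the copies.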

If you changed jobmanager.rpc.address earlier, set jobmanager.rpc.address in flink-conf.yaml on hadoop02 to hadoop02. Changing it on hadoop03 is optional. Only with this change will the high-availability behavior be visible.
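The per-node change can be scripted rather than made in an editor. This is a minimal sketch, assuming the host name contains no `/` or `&` characters (which would confuse sed); `set_rpc_address` is a hypothetical helper name.

```shell
# Hypothetical helper: rewrites the jobmanager.rpc.address line of a
# flink-conf.yaml in place. Arguments: <conf-file> <host>.
set_rpc_address() {
  conf_file=$1
  host=$2
  tmp_file=$(mktemp) || return 1
  # Replace the whole line that starts with the key; other lines pass through.
  if sed "s/^jobmanager\.rpc\.address:.*/jobmanager.rpc.address: $host/" "$conf_file" > "$tmp_file"; then
    # Write back through the original file so its permissions are kept
    cat "$tmp_file" > "$conf_file"
  fi
  rm -f "$tmp_file"
}
```

On hadoop02 this would be run as `set_rpc_address "$FLINK_HOME/conf/flink-conf.yaml" hadoop02`. Commented-out lines are left untouched because the pattern is anchored at the start of the line.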

Start ZooKeeper, HDFS, and Flink in that order (zkServer.sh start has to be run on every ZooKeeper node)

zkServer.sh start

start-dfs.sh

start-cluster.sh

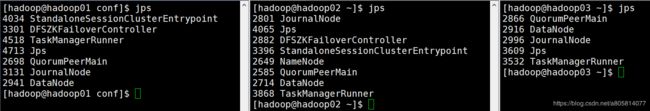

Check the processes

jps
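Reading the jps output by eye gets tedious across three nodes. The sketch below reports which expected daemons are missing. `missing_daemons` is a hypothetical helper name. The class names in the usage note are what jps is assumed to show for ZooKeeper and a Flink 1.7 standalone cluster, so compare them with your own output before relying on them.

```shell
# Hypothetical helper: reads `jps` output on stdin and reports which of the
# expected daemon class names (given as arguments) are not running.
missing_daemons() {
  # The second column of jps output is the main class name
  running=$(awk '{print $2}')
  status=0
  for name in "$@"; do
    if ! printf '%s\n' "$running" | grep -qxF "$name"; then
      echo "missing: $name"
      status=1
    fi
  done
  return $status
}
```

On hadoop01, for example: `jps | missing_daemons QuorumPeerMain StandaloneSessionClusterEntrypoint TaskManagerRunner`. No output and exit status 0 mean every listed daemon was found.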

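Open the Web UI at http://hadoop01:8081/

You can run one of the official examples as a test. The author's input file is README.txt in the flink folder; note that the command below passes no --input argument, in which case the example runs on its built-in default data set.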

flink run -m hadoop02:8081 \

$FLINK_HOME/examples/batch/WordCount.jar

The cluster is now set up successfully.

Command to stop the cluster

stop-cluster.sh

2. WordCount program

Maven dependencies

<properties>

<flink.version>1.7.2</flink.version>

<hadoop.version>2.7.6</hadoop.version>

<scala.version>2.11.8</scala.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-scala_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

</dependencies>

2.1 Java version

WordCountJava.java

package wc;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.common.typeinfo.Types;

import org.apache.flink.api.java.ExecutionEnvironment;

import org.apache.flink.api.java.operators.AggregateOperator;

import org.apache.flink.api.java.operators.DataSource;

import org.apache.flink.api.java.operators.FlatMapOperator;

import org.apache.flink.api.java.operators.MapOperator;

import org.apache.flink.api.java.tuple.Tuple2;

/**

* @Author Daniel

* @Description Flink WordCount program, Java version

**/

public class WordCountJava {

public static void main(String[] args) {

// Entry point of the batch program

ExecutionEnvironment batchEnv = ExecutionEnvironment.getExecutionEnvironment();

// Data source

DataSource<String> dataSource = batchEnv.fromElements("hadoop hadoop", "spark spark spark", "flink flink flink");

// flatMap operator: split each line into individual words

FlatMapOperator<String, String> wordDataSet = dataSource.flatMap((FlatMapFunction<String, String>) (value, out) -> {

String[] words = value.split(" ");

for (String word : words) {

out.collect(word);

}

}).returns(Types.STRING);

// map operator: pair each word with a count of 1

MapOperator<String, Tuple2<String, Integer>> wordAndOneDataSet = wordDataSet.map((MapFunction<String, Tuple2<String, Integer>>) value -> new Tuple2<>(value, 1))

.returns(Types.TUPLE(Types.STRING, Types.INT));

// Group by word and sum the counts

AggregateOperator<Tuple2<String, Integer>> lastResult = wordAndOneDataSet.groupBy(0)

.sum(1);

try {

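// Sink: print the result

lastResult.print();

// batchEnv.execute("WordCountJava"); // not needed for this batch job because print() triggers execution; streaming jobs must call execute()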

} catch (Exception e) {

e.printStackTrace();

}

}

}

2.2 Scala version

WordCountScala.scala

package wc

import org.apache.flink.streaming.api.scala.{DataStream, StreamExecutionEnvironment, _}

/**

* @Author Daniel

* @Description Flink WordCount program, Scala version

**/

object WordCountScala {

def main(args: Array[String]): Unit = {

// Get the Flink streaming entry point

val streamEnv = StreamExecutionEnvironment.getExecutionEnvironment

// Read streaming data from a network socket

val dS = streamEnv.socketTextStream("hadoop01", 9999)

// Main business logic

val resultDS = dS.flatMap(line => line.toString.split(" "))

.map(word => Word(word, 1))

.keyBy("word")

.sum("count")

// Output

resultDS.print()

// Run the streaming job; it keeps processing until it is stopped

streamEnv.execute("StreamWordCountScala")

}

}

// A case class gives the records a clear structure

case class Word(word: String, count: Int)

Before starting the job, open the socket on hadoop01 with netcat, then type a few words:

nc -lk hadoop01 9999

> hadoop hadoop spark spark spark flink flink flink flink
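The streaming job emits an updated running count each time a word arrives, so the last value printed for each word should equal that word's total. As a cross-check, the totals for the line typed into netcat can be computed with standard Unix tools. This is a sketch independent of Flink, and `count_words` is a hypothetical helper name.

```shell
# Hypothetical helper: counts how often each word occurs in the text read
# from stdin and prints "word count" pairs sorted by word.
count_words() {
  tr -s ' ' '\n' | sort | uniq -c | awk '{print $2, $1}'
}
```

For the sample line above, `echo "hadoop hadoop spark spark spark flink flink flink flink" | count_words` prints `flink 4`, `hadoop 2`, and `spark 3`, one pair per line.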