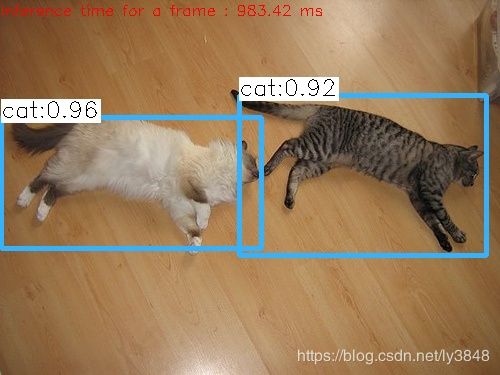

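Calling a darknet-trained model from C++ for object detection on Windows 10

1. Purpose

The goal is to package image object detection into a dynamic-link library (DLL) so that other programs can call it directly.

2. Preparation

Software:

1. Visual Studio 2015

2. OpenCV 3.4.7 (use as recent a version as possible; 3.4.0 does not seem to work)

Files:

1. yolov3.weights, the weights file produced by darknet training

2. yolov3.cfg, the cfg file used for testing

3. coco.names, the class names file

4. cat.jpg, a JPG image to test with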
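
A names file such as coco.names is conventionally a plain-text list with one class label per line, where the line position serves as the class ID. As a minimal sketch under that assumption, the helper below reads such a file into a vector using only the standard library. The name `loadClassNames` is hypothetical and is not taken from the original project.

```cpp
#include <fstream>
#include <string>
#include <vector>

// Hypothetical helper: read one class label per line from a names file.
// Returns an empty vector if the file cannot be opened.
std::vector<std::string> loadClassNames(const std::string& path)
{
    std::vector<std::string> names;
    std::ifstream in(path);
    std::string line;
    while (std::getline(in, line))
    {
        // Drop a trailing '\r' left over from Windows line endings.
        if (!line.empty() && line.back() == '\r')
            line.pop_back();
        // Blank lines are skipped; this assumes they only occur at the end of the file.
        if (!line.empty())
            names.push_back(line);
    }
    return names;
}
```

Calling `loadClassNames("coco.names")` and checking that the result is not empty is a cheap way to confirm the file is where the program expects it.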

3. Creating the projects

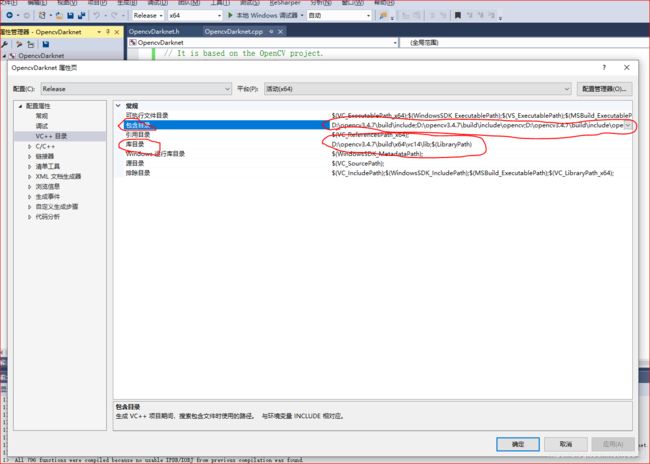

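3.1 Configure OpenCV 3.4.7 for the Release x64 configuration in VS2015 (many tutorials are available online)

3.2 Create a new dynamic-link library (DLL) project named opencvdarknet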

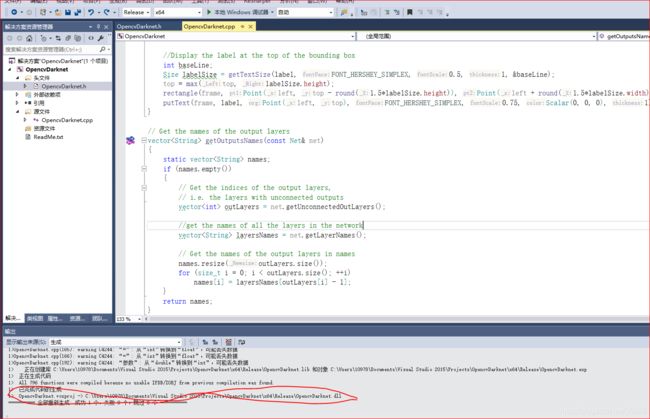

3.2.1 Create the file OpencvDarknet.cpp with the following code:

    // It is based on the OpenCV project.
    #include "OpencvDarknet.h"
    #include

            drawPred(classIds[idx], confidences[idx], box.x, box.y, box.x + box.width, box.y + box.height, frame);
            mess.index = to_string(idx);
            mess.x = box.x + box.width / 2;
            mess.y = box.y + box.height / 2;
            // Landscape flag; comparing directly avoids dividing by a zero height
            mess.rotate = box.width >= box.height;
            vctRes.push_back(mess);
        }
        return vctRes;
    }
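
The fragment above records, for each kept box, its index, its center point, and a flag for whether it is wider than it is tall. The declaration of `mess` is not shown, so the following is only a sketch with assumed field types. `Box`, `DetectionInfo`, and `summarize` are hypothetical names, `Box` stands in for an OpenCV rectangle, and the index here is simply the position in the input list.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for an image rectangle: top-left corner plus size, in pixels.
struct Box { int x, y, width, height; };

// Hypothetical result record; the field types are assumptions.
struct DetectionInfo
{
    std::string index; // position of the detection in the input list
    int x;             // x coordinate of the box center
    int y;             // y coordinate of the box center
    bool rotate;       // true when the box is at least as wide as it is tall
};

// Convert each box into a record holding its center and orientation flag.
std::vector<DetectionInfo> summarize(const std::vector<Box>& boxes)
{
    std::vector<DetectionInfo> results;
    results.reserve(boxes.size());
    for (std::size_t i = 0; i < boxes.size(); ++i)
    {
        const Box& b = boxes[i];
        DetectionInfo info;
        info.index = std::to_string(i);
        info.x = b.x + b.width / 2;
        info.y = b.y + b.height / 2;
        info.rotate = b.width >= b.height;
        results.push_back(info);
    }
    return results;
}
```

Comparing width and height directly gives the same answer as the integer division `width / height >= 1` for positive sizes, and it avoids a division by zero when a box has zero height.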

    // Draw the predicted bounding box
    void drawPred(int classId, float conf, int left, int top, int right, int bottom, Mat& frame)
    {
        // Draw a rectangle displaying the bounding box
        rectangle(frame, Point(left, top), Point(right, bottom), Scalar(255, 178, 50), 3);

        // Get the label for the class name and its confidence
        string label = format("%.2f", conf);
        if (!classes.empty())
        {
            CV_Assert(classId < (int)classes.size());
            label = classes[classId] + ":" + label;
        }

        // Display the label at the top of the bounding box
        int baseLine;
        Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);
        top = max(top, labelSize.height);
        rectangle(frame, Point(left, top - round(1.5*labelSize.height)), Point(left + round(1.5*labelSize.width), top + baseLine), Scalar(255, 255, 255), FILLED);
        putText(frame, label, Point(left, top), FONT_HERSHEY_SIMPLEX, 0.75, Scalar(0, 0, 0), 1);
    }
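
The label logic in `drawPred` can be tried out without OpenCV. The sketch below reproduces only the text formatting, using the standard library. `makeLabel` is a hypothetical helper; unlike the listing, it also rejects negative class IDs and throws an exception instead of asserting.

```cpp
#include <cstdio>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical helper mirroring the label logic in drawPred:
// "<class name>:<confidence>" with two decimals, or just the
// confidence when no class names are loaded.
std::string makeLabel(const std::vector<std::string>& classes, int classId, float conf)
{
    char buf[32];
    std::snprintf(buf, sizeof(buf), "%.2f", conf);
    std::string label = buf;
    if (!classes.empty())
    {
        // Reject negative IDs as well as IDs past the end of the list.
        if (classId < 0 || classId >= static_cast<int>(classes.size()))
            throw std::out_of_range("classId is outside the class list");
        label = classes[classId] + ":" + label;
    }
    return label;
}
```

With the class list `{"person", "cat"}`, the call `makeLabel(classes, 1, 0.5f)` yields `cat:0.50`.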

    // Get the names of the output layers
    vector<String> getOutputsNames(const Net& net)
    {
        static vector<String> names;
        if (names.empty())
        {
            // Get the indices of the output layers,
            // i.e. the layers with unconnected outputs
            vector<int> outLayers = net.getUnconnectedOutLayers();

            // Get the names of all the layers in the network
            vector<String> layersNames = net.getLayerNames();

            // Get the names of the output layers in names
            names.resize(outLayers.size());
            for (size_t i = 0; i < outLayers.size(); ++i)
                names[i] = layersNames[outLayers[i] - 1];
        }
        return names;
    }
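
The `- 1` in `getOutputsNames` implies that the output layer IDs are one greater than the corresponding positions in the list of layer names. The sketch below isolates that index mapping with plain standard-library types. `outputNames` is a hypothetical helper, and the layer names in the usage note are made up for illustration.

```cpp
#include <string>
#include <vector>

// Hypothetical helper: map 1-based layer IDs to layer names.
// IDs outside [1, layerNames.size()] are skipped.
std::vector<std::string> outputNames(const std::vector<std::string>& layerNames,
                                     const std::vector<int>& outLayerIds)
{
    std::vector<std::string> names;
    names.reserve(outLayerIds.size());
    for (int id : outLayerIds)
    {
        if (id >= 1 && id <= static_cast<int>(layerNames.size()))
            names.push_back(layerNames[id - 1]); // convert 1-based ID to 0-based index
    }
    return names;
}
```

For a name list `{"conv_0", "yolo_82", "conv_83", "yolo_94"}` and IDs `{2, 4}`, the helper returns `yolo_82` and `yolo_94`. The function in the listing caches its result in a `static` vector, so the names are computed once and reused even if a different network is passed in later; this sketch recomputes them on every call.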

3.2.2 Create the file OpencvDarknet.h with the following code:

#pragma once

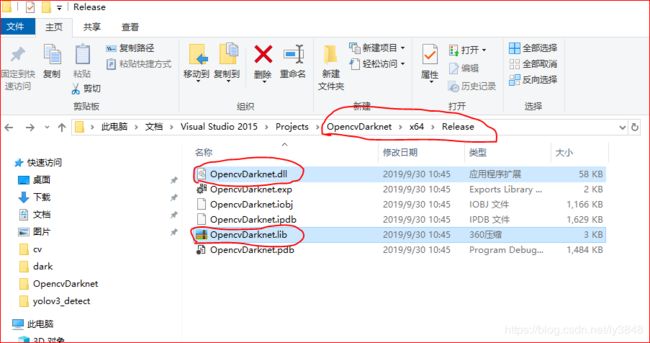

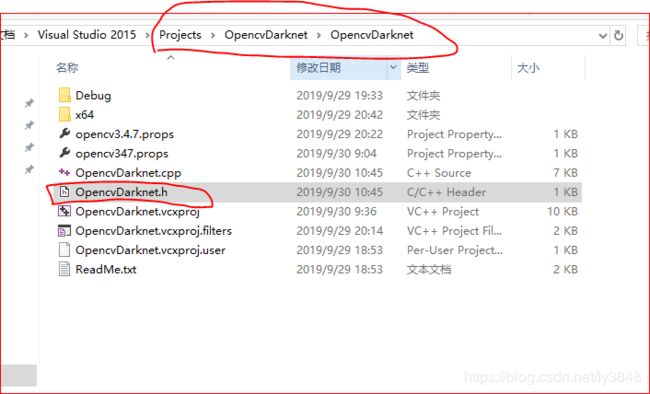

#include

3.2.3 Click Build -> Rebuild Solution to generate the DLL file
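
For other programs to call into a DLL on Windows, its functions have to be marked for export when the DLL is built and for import when it is consumed. The header contents are not reproduced in this article, so the following is only a sketch of one common pattern and not the author's header. `OPENCVDARKNET_EXPORTS`, `OPENCVDARKNET_API`, and `detectorApiVersion` are all hypothetical names.

```cpp
// In a real header, keep `#pragma once` as the first line.

// Normally defined in the DLL project's preprocessor settings only;
// defined here so that the sketch builds as a single file.
#define OPENCVDARKNET_EXPORTS

// Hypothetical export macro: export when building the DLL, import otherwise.
#if defined(_WIN32)
  #if defined(OPENCVDARKNET_EXPORTS)
    #define OPENCVDARKNET_API __declspec(dllexport)
  #else
    #define OPENCVDARKNET_API __declspec(dllimport)
  #endif
#else
  #define OPENCVDARKNET_API // no decoration needed on other platforms
#endif

// Hypothetical exported function, declared with C linkage so that its
// name in the DLL is not mangled.
extern "C" OPENCVDARKNET_API int detectorApiVersion();

// Definition; in a real project this lives in the DLL's .cpp file.
extern "C" int detectorApiVersion()
{
    return 1;
}
```

A client that includes the header without defining `OPENCVDARKNET_EXPORTS` sees the import form of the macro and links against the generated .lib file.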

3.3 Create a new project named opencvdarknet_test (console application)

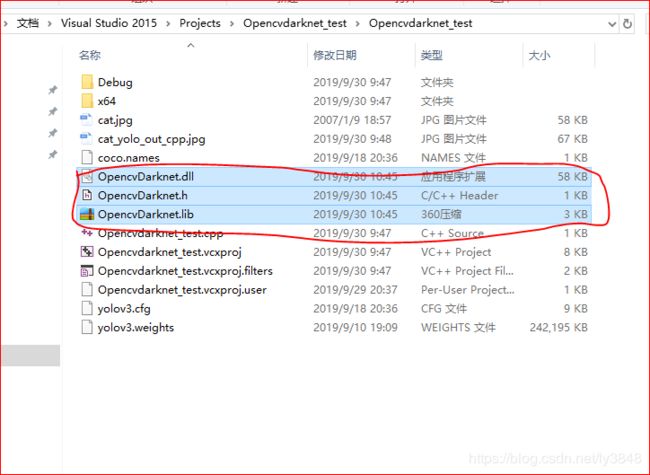

3.3.1 Copy OpencvDarknet.dll, OpencvDarknet.lib, and OpencvDarknet.h produced by the opencvdarknet project into the Opencvdarknet_test folder, as follows:

The OpencvDarknet.dll and OpencvDarknet.lib files

The OpencvDarknet.h file

The folder after copying

3.3.2 Add OpencvDarknet.h to Header Files and OpencvDarknet.lib to Resource Files, then create a new source file OpencvDarknet_test.cpp with the following contents:

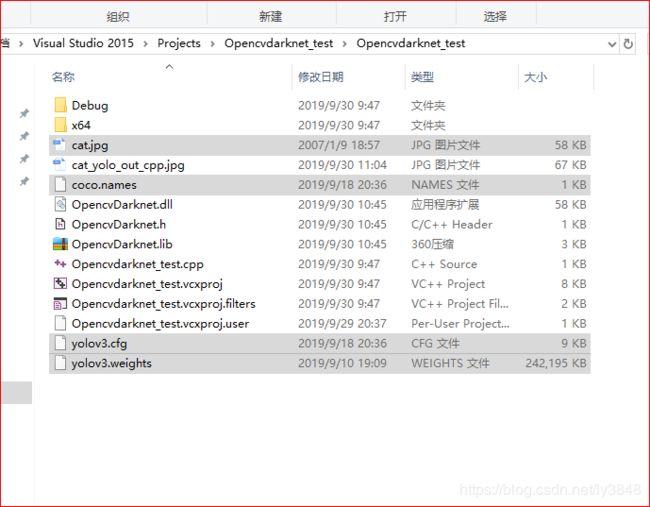

#include

3.3.3 Copy the files listed at the beginning of this article into the Opencvdarknet_test folder, as follows:

cat.jpg (the image to run detection on), coco.names, yolov3.cfg, and yolov3.weights
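
Because the test program depends on all four files being present in its folder, it can help to check for them before loading the model. The sketch below uses only the standard library; `missingFiles` is a hypothetical helper and is not part of the original test program.

```cpp
#include <fstream>
#include <string>
#include <vector>

// Hypothetical helper: return the subset of paths that cannot be opened for reading.
std::vector<std::string> missingFiles(const std::vector<std::string>& paths)
{
    std::vector<std::string> missing;
    for (const std::string& p : paths)
    {
        std::ifstream in(p, std::ios::binary);
        if (!in.is_open())
            missing.push_back(p);
    }
    return missing;
}
```

Calling `missingFiles({"cat.jpg", "coco.names", "yolov3.cfg", "yolov3.weights"})` at startup and printing any returned names points to a misplaced file more directly than a failure later in model loading.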

References:

https://www.aiuai.cn/aifarm822.html

https://blog.csdn.net/m0_37170593/article/details/76445972