Integrating Spark Streaming with Flume

I. Receiving data pushed by Flume (push mode: the Flume agent actively sends data to Spark Streaming)

1. First, configure pom.xml with the following content:

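    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>spark-scala-java-demo</groupId>
        <artifactId>spark-scala-java-demo</artifactId>
        <version>1.0-SNAPSHOT</version>

        <dependencies>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-core_2.11</artifactId>
                <version>2.1.0</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-sql_2.11</artifactId>
                <version>2.1.0</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-hive_2.11</artifactId>
                <version>2.1.0</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-streaming_2.11</artifactId>
                <version>2.1.0</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-client</artifactId>
                <version>2.6.5</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-streaming-kafka-0-8_2.11</artifactId>
                <version>2.1.0</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-streaming-flume_2.11</artifactId>
                <version>2.1.0</version>
            </dependency>
            <dependency>
                <groupId>mysql</groupId>
                <artifactId>mysql-connector-java</artifactId>
                <version>8.0.18</version>
            </dependency>
            <dependency>
                <groupId>org.scala-lang</groupId>
                <artifactId>scala-library</artifactId>
                <version>2.11.12</version>
            </dependency>
        </dependencies>

        <build>
            <sourceDirectory>src/main/java</sourceDirectory>
            <testSourceDirectory>src/test/java</testSourceDirectory>
            <plugins>
                <plugin>
                    <groupId>org.scala-tools</groupId>
                    <artifactId>maven-scala-plugin</artifactId>
                    <version>2.15.2</version>
                    <executions>
                        <execution>
                            <goals>
                                <goal>compile</goal>
                                <goal>testCompile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-assembly-plugin</artifactId>
                    <version>3.1.1</version>
                    <configuration>
                        <descriptorRefs>
                            <descriptorRef>jar-with-dependencies</descriptorRef>
                        </descriptorRefs>
                    </configuration>
                    <executions>
                        <execution>
                            <id>make-assembly</id>
                            <phase>package</phase>
                            <goals>
                                <goal>single</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-surefire-plugin</artifactId>
                    <version>2.10</version>
                    <configuration>
                        <skip>true</skip>
                    </configuration>
                </plugin>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-compiler-plugin</artifactId>
                    <configuration>
                        <source>1.8</source>
                        <target>1.8</target>
                    </configuration>
                </plugin>
            </plugins>
        </build>
    </project>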

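The most important dependencies here are the spark-streaming-flume_2.11 and spark-streaming_2.11 artifacts.

2. Receive the data pushed by Flume (the Flume agent actively sends data to Spark Streaming), as shown in the following code: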

    package com.best.spark.streaming.java;

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.sql.SparkSession;
    import org.apache.spark.streaming.Durations;
    import org.apache.spark.streaming.api.java.JavaDStream;
    import org.apache.spark.streaming.api.java.JavaPairDStream;
    import org.apache.spark.streaming.api.java.JavaReceiverInputDStream;
    import org.apache.spark.streaming.api.java.JavaStreamingContext;
    import org.apache.spark.streaming.flume.FlumeUtils;
    import org.apache.spark.streaming.flume.SparkFlumeEvent;
    import scala.Tuple2;

    import java.nio.charset.StandardCharsets;
    import java.util.Arrays;

    public class FlumePushWordCount {

        public static void main(String[] args) {
            // In local mode a streaming job needs at least local[2]: one thread runs the
            // receiver that waits for incoming data, and another thread processes the data.
            SparkConf conf = new SparkConf().setAppName("FlumePushWordCount").setMaster("local[2]");

            SparkSession sparkSession = SparkSession.builder().config(conf).getOrCreate();
            JavaSparkContext sc = JavaSparkContext.fromSparkContext(sparkSession.sparkContext());

            // JavaStreamingContext takes two arguments: a SparkConf (or, as here, an existing
            // JavaSparkContext) and the batch interval, which controls how often the received
            // data is collected into one batch for processing.
            // JavaStreamingContext jssc = new JavaStreamingContext(conf, Durations.seconds(5));
            JavaStreamingContext jssc = new JavaStreamingContext(sc, Durations.seconds(5));

            // Spark Streaming listens on this IP and port as the server; Flume is the client.
            JavaReceiverInputDStream<SparkFlumeEvent> lines =
                    FlumeUtils.createStream(jssc, "192.168.3.26", 8888);

            // In Spark Core this would be a JavaRDD; in Streaming it is a JavaDStream.
            JavaDStream<String> words = lines.flatMap(x -> {
                // The event body sent by Flume arrives as bytes; decode it into a string.
                String line = new String(x.event().getBody().array(), StandardCharsets.UTF_8);
                return Arrays.asList(line.split(" ")).iterator();
            });

            // In Spark Core this would be a JavaPairRDD; in Streaming it is a JavaPairDStream.
            JavaPairDStream<String, Integer> pair = words.mapToPair(x -> new Tuple2<>(x, 1));

            JavaPairDStream<String, Integer> wordcount = pair.reduceByKey((a, b) -> a + b);

            // A Spark Streaming job must contain at least one output operation (action),
            // otherwise it cannot be started.
            wordcount.print();

            /*wordcount.foreachRDD(x -> {
                x.foreach(y -> {
                    System.out.println(y);
                });
            });*/

            try {
                // Nothing runs until start() is called.
                jssc.start();
                // awaitTermination() blocks here while the stream is processed; it declares
                // InterruptedException, so it must be wrapped in try/catch.
                jssc.awaitTermination();
            } catch (InterruptedException e) {
                e.printStackTrace();
            } finally {
                // A stopped context cannot be started again. stop() also stops the underlying
                // SparkContext; call jssc.stop(false) to keep the SparkContext alive.
                // Note: only one StreamingContext can be active in a JVM at a time; another
                // one can be started only after the current one has been stopped.
                jssc.stop();
                sparkSession.stop();
            }
        }
    }
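The listing above turns each event body into text with `getBody().array()`. On a `java.nio.ByteBuffer`, `array()` returns the entire backing array, which equals the body only when the buffer starts at offset 0 and spans the whole array; the listing does not establish whether that always holds. The following is a minimal sketch of a position-aware decode using only the JDK. `EventBodyDecoder` is a hypothetical helper name, not part of Spark or Flume, and UTF-8 is an assumption about the log encoding.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

/** Hypothetical helper (not a Spark or Flume class): decodes a ByteBuffer body as UTF-8. */
final class EventBodyDecoder {

    private EventBodyDecoder() {}

    /** Decodes only the readable bytes (position..limit) and leaves the caller's buffer untouched. */
    static String decode(ByteBuffer body) {
        // duplicate() shares the bytes but has its own position and limit.
        return StandardCharsets.UTF_8.decode(body.duplicate()).toString();
    }

    public static void main(String[] args) {
        ByteBuffer whole = ByteBuffer.wrap("hello you".getBytes(StandardCharsets.UTF_8));
        System.out.println(decode(whole)); // hello you

        // A buffer that exposes only 8 bytes in the middle of a larger backing array.
        ByteBuffer part = ByteBuffer.wrap("xxhello mexx".getBytes(StandardCharsets.UTF_8), 2, 8);
        System.out.println(decode(part)); // hello me
        System.out.println(new String(part.array(), StandardCharsets.UTF_8)); // xxhello mexx
    }
}
```

Inside the `flatMap` lambda, such a helper would be called as `EventBodyDecoder.decode(x.event().getBody())`.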

3. The Flume configuration file is:

    # a1 is the agent name
    a1.sources = s1
    a1.sinks = k1
    a1.channels = c1

    # Configure the source
    a1.sources.s1.type = spooldir
    a1.sources.s1.spoolDir = /usr/local/flume_logs
    a1.sources.s1.channels = c1
    a1.sources.s1.fileHeader = false
    a1.sources.s1.interceptors = i1
    a1.sources.s1.interceptors.i1.type = timestamp

    # Configure the channel
    a1.channels.c1.type = file
    a1.channels.c1.checkpointDir = /usr/local/flume_logs_tmp_cp
    a1.channels.c1.dataDirs = /usr/local/flume_logs_tmp

    # Configure the sink
    a1.sinks.k1.type = avro
    a1.sinks.k1.channel = c1
    a1.sinks.k1.hostname = 192.168.3.26
    a1.sinks.k1.port = 8888

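Note: the /usr/local/flume_logs directory must be created in advance. The sink type is avro, and its hostname and port must be the IP address and port that Spark Streaming binds to.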
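Because the Avro sink connects out to the address that Spark Streaming binds, nothing can be delivered until the receiver is actually listening there. The following is a minimal sketch of a reachability check that could be run before starting the agent. `ReceiverPortCheck` and `isListening` are hypothetical names, and the check only proves that something accepts TCP connections on that port, not that it is the Spark receiver.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.net.Socket;

/** Hypothetical helper: checks whether a TCP endpoint accepts connections. */
final class ReceiverPortCheck {

    private ReceiverPortCheck() {}

    /** Returns true if a TCP connection to host:port succeeds within timeoutMillis. */
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or host unreachable.
            return false;
        }
    }

    public static void main(String[] args) throws IOException {
        // Stand-in for the Spark receiver: a local server socket on a free port.
        try (ServerSocket server = new ServerSocket(0)) {
            System.out.println(isListening("127.0.0.1", server.getLocalPort(), 1000));
        }
    }
}
```

For the configuration above, the call would be `ReceiverPortCheck.isListening("192.168.3.26", 8888, 1000)`.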

4. Start Spark Streaming first, then start Flume with the following command:

    flume-ng agent -n a1 -c conf -f flume-spark-streaming.properties -Dflume.root.logger=DEBUG,console

5. Then create a file in the /usr/local/flume_logs directory with the following content:

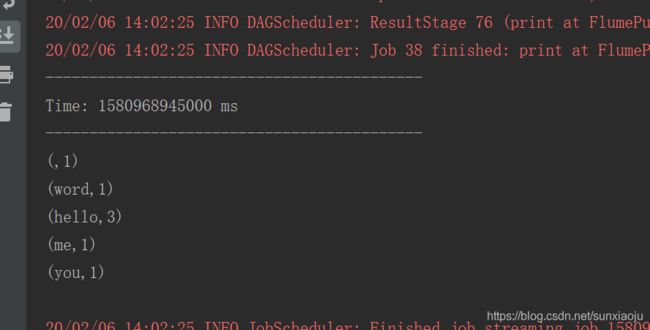

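6. Spark Streaming then receives the data, as shown in the figure below:

7. When should Spark Streaming be integrated with Kafka for real-time computation, and when with Flume? It depends on how fast your real-time data stream is produced:

(1) If the stream is produced at a very high rate, for example 100,000 records per second, Kafka is the right choice: it is a distributed message-buffering middleware that can sustain very high concurrency.

(2) If the production rate is irregular, for example sometimes 100,000 records in one second and sometimes only 100,000 in an hour, you can record the data as nginx logs, move the log files into the directory that Flume monitors at regular intervals, and then process them with Spark Streaming.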
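Moving log files into the monitored directory works best when each file is already complete on arrival, since Flume's spooling directory source expects files to stay unchanged once they are placed there. The following is a minimal sketch of that hand-off: write to a staging directory, then move the finished file in one step. `SpoolDirWriter`, the `.tmp` suffix, and the file names are hypothetical, and the sketch assumes both directories are on the same file system so that an atomic move is possible.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;
import java.util.Arrays;
import java.util.List;

/** Hypothetical helper: hands a finished file to a directory watched by a spooldir source. */
final class SpoolDirWriter {

    private SpoolDirWriter() {}

    /**
     * Writes the lines to stagingDir first, then moves the finished file into spoolDir
     * in a single step, so a half-written file never appears in spoolDir.
     */
    static Path deliver(Path stagingDir, Path spoolDir, String fileName, List<String> lines)
            throws IOException {
        Path staged = stagingDir.resolve(fileName + ".tmp");
        Files.write(staged, lines, StandardCharsets.UTF_8);
        return Files.move(staged, spoolDir.resolve(fileName), StandardCopyOption.ATOMIC_MOVE);
    }

    public static void main(String[] args) throws IOException {
        // Temporary directories stand in for the staging area and /usr/local/flume_logs.
        Path root = Files.createTempDirectory("flume-demo");
        Path staging = Files.createDirectory(root.resolve("staging"));
        Path spool = Files.createDirectory(root.resolve("flume_logs"));
        Path delivered = deliver(staging, spool, "access-0001.log",
                Arrays.asList("hello word", "hello you", "hello me"));
        System.out.println(delivered);
    }
}
```

Each delivered file should also get a name that is not reused later, for example by including a timestamp or sequence number.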

II. Pulling data from the Flume sink (poll mode: Spark Streaming periodically fetches data from Flume; Spark Streaming is the client and Flume is the server)

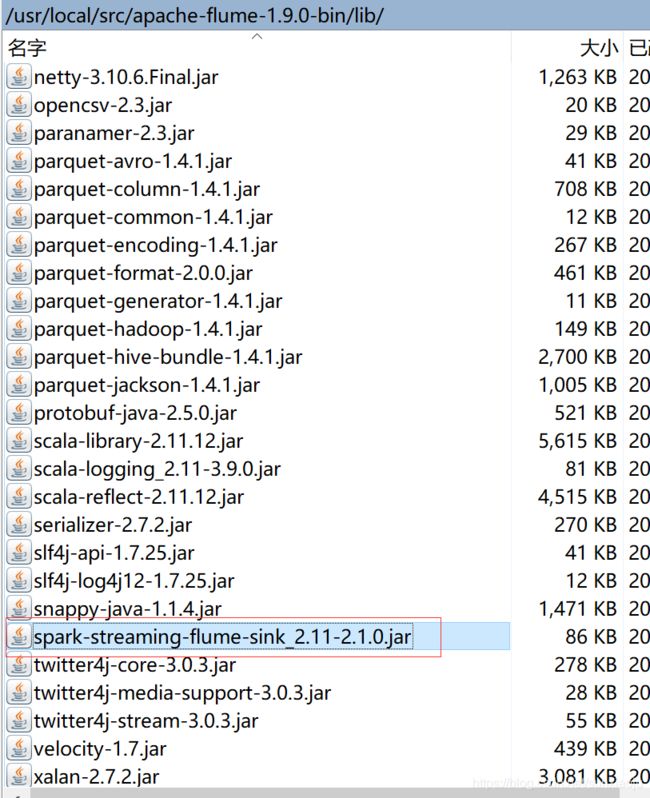

1. First copy spark-streaming-flume-sink_2.11-2.1.0.jar and scala-library-2.11.12.jar into the lib directory of the Flume installation; a jar that is already present does not need to be copied again, as shown in the figure below:

2. The Flume configuration file is as follows:

    # a1 is the agent name
    a1.sources = s1
    a1.sinks = k1
    a1.channels = c1

    # Configure the source
    a1.sources.s1.type = spooldir
    a1.sources.s1.spoolDir = /usr/local/flume_logs
    a1.sources.s1.channels = c1
    a1.sources.s1.fileHeader = false
    a1.sources.s1.interceptors = i1
    a1.sources.s1.interceptors.i1.type = timestamp

    # Configure the channel
    a1.channels.c1.type = file
    a1.channels.c1.checkpointDir = /usr/local/flume_logs_tmp_cp
    a1.channels.c1.dataDirs = /usr/local/flume_logs_tmp

    # Configure the sink (the Avro sink used in push mode is commented out)
    #a1.sinks.k1.type = avro
    #a1.sinks.k1.channel = c1
    #a1.sinks.k1.hostname = 192.168.3.26
    #a1.sinks.k1.port = 8888
    a1.sinks.k1.type = org.apache.spark.streaming.flume.sink.SparkSink
    a1.sinks.k1.hostname = slave2
    a1.sinks.k1.port = 8888
    a1.sinks.k1.channel = c1
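In poll mode the sink's hostname and port are the address Spark Streaming later connects to, so the two have to agree. The following is a minimal sketch that reads the address out of a Flume-style properties file so it can be compared with, or passed to, the streaming job. `FlumeSinkAddress` is a hypothetical helper; it relies only on `java.util.Properties`, which happens to parse this key/value syntax, and it is not a Flume API.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;

/** Hypothetical helper: extracts a sink's host:port from Flume-style properties. */
final class FlumeSinkAddress {

    private FlumeSinkAddress() {}

    /** Returns "hostname:port" for the given agent and sink names. */
    static String of(Properties conf, String agent, String sink) {
        String prefix = agent + ".sinks." + sink + ".";
        String host = conf.getProperty(prefix + "hostname");
        String port = conf.getProperty(prefix + "port");
        if (host == null || port == null) {
            throw new IllegalArgumentException("hostname or port missing for " + prefix);
        }
        // parseInt rejects a non-numeric port early.
        return host.trim() + ":" + Integer.parseInt(port.trim());
    }

    public static void main(String[] args) throws IOException {
        String text = String.join("\n",
                "#a1.sinks.k1.hostname = 192.168.3.26",
                "a1.sinks.k1.type = org.apache.spark.streaming.flume.sink.SparkSink",
                "a1.sinks.k1.hostname = slave2",
                "a1.sinks.k1.port = 8888",
                "a1.sinks.k1.channel = c1");
        Properties conf = new Properties();
        conf.load(new StringReader(text)); // lines starting with '#' are ignored as comments
        System.out.println(of(conf, "a1", "k1")); // slave2:8888
    }
}
```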

3. The code is as follows:

    package com.best.spark.streaming.java;

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.sql.SparkSession;
    import org.apache.spark.streaming.Durations;
    import org.apache.spark.streaming.api.java.JavaDStream;
    import org.apache.spark.streaming.api.java.JavaPairDStream;
    import org.apache.spark.streaming.api.java.JavaReceiverInputDStream;
    import org.apache.spark.streaming.api.java.JavaStreamingContext;
    import org.apache.spark.streaming.flume.FlumeUtils;
    import org.apache.spark.streaming.flume.SparkFlumeEvent;
    import scala.Tuple2;

    import java.nio.charset.StandardCharsets;
    import java.util.Arrays;

    public class FlumePollWordCount {

        public static void main(String[] args) {
            // In local mode a streaming job needs at least local[2]: one thread runs the
            // receiver that waits for incoming data, and another thread processes the data.
            SparkConf conf = new SparkConf().setAppName("FlumePollWordCount").setMaster("local[2]");

            SparkSession sparkSession = SparkSession.builder().config(conf).getOrCreate();
            JavaSparkContext sc = JavaSparkContext.fromSparkContext(sparkSession.sparkContext());

            // JavaStreamingContext takes two arguments: a SparkConf (or, as here, an existing
            // JavaSparkContext) and the batch interval, which controls how often the received
            // data is collected into one batch for processing.
            // JavaStreamingContext jssc = new JavaStreamingContext(conf, Durations.seconds(5));
            JavaStreamingContext jssc = new JavaStreamingContext(sc, Durations.seconds(5));

            // Here Spark Streaming is the client: it connects to the host and port on which
            // Flume's SparkSink listens as the server.
            JavaReceiverInputDStream<SparkFlumeEvent> lines =
                    FlumeUtils.createPollingStream(jssc, "slave2", 8888);

            // In Spark Core this would be a JavaRDD; in Streaming it is a JavaDStream.
            JavaDStream<String> words = lines.flatMap(x -> {
                // The event body sent by Flume arrives as bytes; decode it into a string.
                String line = new String(x.event().getBody().array(), StandardCharsets.UTF_8);
                return Arrays.asList(line.split(" ")).iterator();
            });

            // In Spark Core this would be a JavaPairRDD; in Streaming it is a JavaPairDStream.
            JavaPairDStream<String, Integer> pair = words.mapToPair(x -> new Tuple2<>(x, 1));

            JavaPairDStream<String, Integer> wordcount = pair.reduceByKey((a, b) -> a + b);

            // A Spark Streaming job must contain at least one output operation (action),
            // otherwise it cannot be started.
            wordcount.print();

            /*wordcount.foreachRDD(x -> {
                x.foreach(y -> {
                    System.out.println(y);
                });
            });*/

            try {
                // Nothing runs until start() is called.
                jssc.start();
                // awaitTermination() blocks here while the stream is processed; it declares
                // InterruptedException, so it must be wrapped in try/catch.
                jssc.awaitTermination();
            } catch (InterruptedException e) {
                e.printStackTrace();
            } finally {
                // A stopped context cannot be started again. stop() also stops the underlying
                // SparkContext; call jssc.stop(false) to keep the SparkContext alive.
                // Note: only one StreamingContext can be active in a JVM at a time; another
                // one can be started only after the current one has been stopped.
                jssc.stop();
                sparkSession.stop();
            }
        }
    }
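The `Durations.seconds(5)` argument in the listing sets the batch interval: data received during each 5-second window is processed together as one batch. The following is a deliberately simplified model of that grouping, written in plain Java. `BatchBuckets` and the sample arrival times are hypothetical; in Spark the assignment is done by the receiver and the batch scheduler, so this only illustrates the arithmetic.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical illustration of how a fixed batch interval partitions arrivals. */
final class BatchBuckets {

    private BatchBuckets() {}

    /** Index of the batch that an arrival at arrivalMillis falls into. */
    static long batchIndex(long arrivalMillis, long startMillis, long intervalMillis) {
        return Math.floorDiv(arrivalMillis - startMillis, intervalMillis);
    }

    /** Groups lines by batch index; arrivals[i] is the arrival time of lines[i]. */
    static Map<Long, List<String>> group(long startMillis, long intervalMillis,
                                         long[] arrivals, String[] lines) {
        Map<Long, List<String>> batches = new TreeMap<>();
        for (int i = 0; i < arrivals.length; i++) {
            long index = batchIndex(arrivals[i], startMillis, intervalMillis);
            batches.computeIfAbsent(index, k -> new ArrayList<>()).add(lines[i]);
        }
        return batches;
    }

    public static void main(String[] args) {
        long[] arrivals = {0L, 4_999L, 5_000L, 12_000L}; // made-up arrival times in ms
        String[] lines = {"hello word", "hello you", "hello me", "hello again"};
        System.out.println(group(0L, 5_000L, arrivals, lines));
        // {0=[hello word, hello you], 1=[hello me], 2=[hello again]}
    }
}
```

One consequence is that word counts are computed per batch: the same word arriving in two different windows is reported twice, once in each batch's output.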

4. Start Flume first, using the following command:

    flume-ng agent -n a1 -c conf -f flume-spark-streaming_poll.properties -Dflume.root.logger=DEBUG,console

5. Then start Spark Streaming and create a file in the /usr/local/flume_logs directory with the following content:

hello word

hello you

hello me

6. The result is then printed by Spark Streaming, as shown in the figure below:
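For reference, the counts that the job computes for the three sample lines can be reproduced without Spark. The following is a minimal plain-Java sketch of the same split, pair, and sum steps. `BatchWordCount` is a hypothetical class, and it assumes all three lines land in the same batch; Spark's own `print()` output is formatted as tuples and may list the words in a different order.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical stand-alone equivalent of the word count for a single batch. */
final class BatchWordCount {

    private BatchWordCount() {}

    static Map<String, Integer> count(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            for (String word : line.split(" ")) {     // corresponds to flatMap
                counts.merge(word, 1, Integer::sum);   // corresponds to mapToPair + reduceByKey
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        System.out.println(count(Arrays.asList("hello word", "hello you", "hello me")));
        // {hello=3, word=1, you=1, me=1}
    }
}
```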