- 10月|愿你的青春不负梦想-读书笔记-01

Tracy的小书斋

本书的作者是俞敏洪,大家都很熟悉他了吧。俞敏洪老师是我行业的领头羊吧,也是我事业上的偶像。本日摘录他书中第一章中的金句:『一个人如果什么目标都没有,就会浑浑噩噩,感觉生命中缺少能量。能给我们能量的,是对未来的期待。第一件事,我始终为了进步而努力。与其追寻全世界的骏马,不如种植丰美的草原,到时骏马自然会来。第二件事,我始终有阶段性的目标。什么东西能给我能量?答案是对未来的期待。』读到这里的时候,我便

- 《投行人生》读书笔记

小蘑菇的树洞

《投行人生》----作者詹姆斯-A-朗德摩根斯坦利副主席40年的职业洞见-很短小精悍的篇幅,比较适合初入职场的新人。第一部分成功的职业生涯需要规划1.情商归为适应能力分享与协作同理心适应能力,更多的是自我意识,你有能力识别自己的情并分辨这些情绪如何影响你的思想和行为。2.对于初入职场的人的建议,细节,截止日期和数据很重要截止日期,一种有效的方法是请老板为你所有的任务进行优先级排序。和老板喝咖啡的好

- 【一起学Rust | 设计模式】习惯语法——使用借用类型作为参数、格式化拼接字符串、构造函数

广龙宇

一起学Rust#Rust设计模式rust设计模式开发语言

提示:文章写完后,目录可以自动生成,如何生成可参考右边的帮助文档文章目录前言一、使用借用类型作为参数二、格式化拼接字符串三、使用构造函数总结前言Rust不是传统的面向对象编程语言,它的所有特性,使其独一无二。因此,学习特定于Rust的设计模式是必要的。本系列文章为作者学习《Rust设计模式》的学习笔记以及自己的见解。因此,本系列文章的结构也与此书的结构相同(后续可能会调成结构),基本上分为三个部分

- git常用命令笔记

咩酱-小羊

git笔记

###用习惯了idea总是不记得git的一些常见命令,需要用到的时候总是担心旁边站了人~~~记个笔记@_@,告诉自己看笔记不丢人初始化初始化一个新的Git仓库gitinit配置配置用户信息gitconfig--globaluser.name"YourName"gitconfig--globaluser.email"

[email protected]"基本操作克隆远程仓库gitclone查看

- 509. 斐波那契数(每日一题)

lzyprime

lzyprime博客(github)创建时间:2021.01.04qq及邮箱:2383518170leetcode笔记题目描述斐波那契数,通常用F(n)表示,形成的序列称为斐波那契数列。该数列由0和1开始,后面的每一项数字都是前面两项数字的和。也就是:F(0)=0,F(1)=1F(n)=F(n-1)+F(n-2),其中n>1给你n,请计算F(n)。示例1:输入:2输出:1解释:F(2)=F(1)+

- 拥有断舍离的心态,过精简生活--《断舍离》读书笔记

爱吃丸子的小樱桃

不知不觉间房间里的东西越来越多,虽然摆放整齐,但也时常会觉得空间逼仄,令人心生烦闷。抱着断舍离的态度,我开始阅读《断舍离》这本书,希望从书中能找到一些有效的方法,帮助我实现空间、物品上的断舍离。《断舍离》是日本作家山下英子通过自己的经历、思考和实践总结而成的,整体内涵也从刚开始的私人生活哲学的“断舍离”升华成了“人生实践哲学”,接着又成为每个人都能实行的“改变人生的断舍离”,从“哲学”逐渐升华成“

- 四章-32-点要素的聚合

彩云飘过

本文基于腾讯课堂老胡的课《跟我学Openlayers--基础实例详解》做的学习笔记,使用的openlayers5.3.xapi。源码见1032.html,对应的官网示例https://openlayers.org/en/latest/examples/cluster.htmlhttps://openlayers.org/en/latest/examples/earthquake-clusters.

- 高端密码学院笔记285

柚子_b4b4

高端幸福密码学院(高级班)幸福使者:李华第(598)期《幸福》之回归内在深层生命原动力基础篇——揭秘“激励”成长的喜悦心理案例分析主讲:刘莉一,知识扩充:成功=艰苦劳动+正确方法+少说空话。贪图省力的船夫,目标永远下游。智者的梦再美,也不如愚人实干的脚印。幸福早课堂2020.10.16星期五一笔记:1,重视和珍惜的前提是知道它的价值非常重要,当你珍惜了,你就真正定下来,真正的学到身上。2,大家需要

- Day17笔记-高阶函数

~在杰难逃~

Python笔记python开发语言pycharm数据分析

高阶函数【重点掌握】函数的本质:函数是一个变量,函数名是一个变量名,一个函数可以作为另一个函数的参数或返回值使用如果A函数作为B函数的参数,B函数调用完成之后,会得到一个结果,则B函数被称为高阶函数常用的高阶函数:map(),reduce(),filter(),sorted()1.map()map(func,iterable),返回值是一个iterator【容器,迭代器】func:函数iterab

- Day1笔记-Python简介&标识符和关键字&输入输出

~在杰难逃~

Pythonpython开发语言大数据数据分析数据挖掘

大家好,从今天开始呢,杰哥开展一个新的专栏,当然,数据分析部分也会不定时更新的,这个新的专栏主要是讲解一些Python的基础语法和知识,帮助0基础的小伙伴入门和学习Python,感兴趣的小伙伴可以开始认真学习啦!一、Python简介【了解】1.计算机工作原理编程语言就是用来定义计算机程序的形式语言。我们通过编程语言来编写程序代码,再通过语言处理程序执行向计算机发送指令,让计算机完成对应的工作,编程

- node.js学习

小猿L

node.jsnode.js学习vim

node.js学习实操及笔记温故node.js,node.js学习实操过程及笔记~node.js学习视频node.js官网node.js中文网实操笔记githubcsdn笔记为什么学node.js可以让别人访问我们编写的网页为后续的框架学习打下基础,三大框架vuereactangular离不开node.jsnode.js是什么官网:node.js是一个开源的、跨平台的运行JavaScript的运行

- 数据仓库——维度表一致性

墨染丶eye

背诵数据仓库

数据仓库基础笔记思维导图已经整理完毕,完整连接为:数据仓库基础知识笔记思维导图维度一致性问题从逻辑层面来看,当一系列星型模型共享一组公共维度时,所涉及的维度称为一致性维度。当维度表存在不一致时,短期的成功难以弥补长期的错误。维度时确保不同过程中信息集成起来实现横向钻取货活动的关键。造成横向钻取失败的原因维度结构的差别,因为维度的差别,分析工作涉及的领域从简单到复杂,但是都是通过复杂的报表来弥补设计

- 【Git】常见命令(仅笔记)

好想有猫猫

GitLinux学习笔记git笔记elasticsearchlinuxc++

文章目录创建/初始化本地仓库添加本地仓库配置项提交文件查看仓库状态回退仓库查看日志分支删除文件暂存工作区代码远程仓库使用`.gitigore`文件让git不追踪一些文件标签创建/初始化本地仓库gitinit添加本地仓库配置项gitconfig-l#以列表形式显示配置项gitconfiguser.name"ljh"#配置user.namegitconfiguser.email"

[email protected]

- nosql数据库技术与应用知识点

皆过客,揽星河

NoSQLnosql数据库大数据数据分析数据结构非关系型数据库

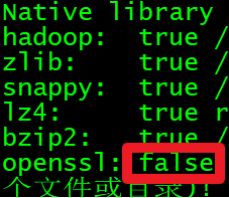

Nosql知识回顾大数据处理流程数据采集(flume、爬虫、传感器)数据存储(本门课程NoSQL所处的阶段)Hdfs、MongoDB、HBase等数据清洗(入仓)Hive等数据处理、分析(Spark、Flink等)数据可视化数据挖掘、机器学习应用(Python、SparkMLlib等)大数据时代存储的挑战(三高)高并发(同一时间很多人访问)高扩展(要求随时根据需求扩展存储)高效率(要求读写速度快)

- ES聚合分析原理与代码实例讲解

光剑书架上的书

大厂Offer收割机面试题简历程序员读书硅基计算碳基计算认知计算生物计算深度学习神经网络大数据AIGCAGILLMJavaPython架构设计Agent程序员实现财富自由

ES聚合分析原理与代码实例讲解1.背景介绍1.1问题的由来在大规模数据分析场景中,特别是在使用Elasticsearch(ES)进行数据存储和检索时,聚合分析成为了一个至关重要的功能。聚合分析允许用户对数据集进行细分和分组,以便深入探索数据的结构和模式。这在诸如实时监控、日志分析、业务洞察等领域具有广泛的应用。1.2研究现状目前,ES聚合分析已经成为现代大数据平台的核心组件之一。它支持多种类型的聚

- 为什么你总是对下属不满意?

ZhaoWu1050

【ZhaoWu的听课笔记】大多数公司,都存在两种问题。我创业四年,更是体会深切。这两种问题就是:老板经常不满意下属的表现;下属总是不知道老板想要什么;虽然这两种问题普遍存在,其实解决方法并不复杂。这节课,我们再聊聊第一个问题:为什么老板经常不满意下属表现?其实,这背后也是一条管理常识。管理学家德鲁克先生早就说过:管理者的任务,不是去改变人。*来自《卓有成效的管理者》只是大多数老板和我一样,都是一边

- 母亲节如何做小红书营销

美橙传媒

小红书的一举一动引起了外界的高度关注。通过爆款笔记和流行话题,我们可以看到“干货”类型的内容在小红书中偏向实用的生活经验共享和生活指南非常受欢迎。根据运营社的分析,这种现象是由小红书用户心智和内容社区背后机制共同决定的。首先,小红书将使用“强搜索”逻辑为用户提供特定的“搜索场景”。在“我必须这样生活”中,大量使用了满足小红书站用户喜好和需求的内容。内容社区自制的高质量内容也吸引了寻找营销新途径的品

- 读书笔记|《遇见孩子,遇见更好的自己》5

抹茶社长

为人父母意味着放弃自己的过去,不要对以往没有实现的心愿耿耿于怀,只有这样,孩子们才能做回自己。985909803.jpg孩子在与父母保持亲密的同时更需要独立,唯有这样,孩子才会成为孩子,父母才会成其为父母。有耐心的人生往往更幸福,给孩子留点余地。认识到养儿育女是对耐心的考验。为失败做好心理准备,教会孩子控制情绪。了解自己的底线,说到底线,有一点很重要,父母之所以发脾气,真正的原因往往在于他们自己,

- 基于Python给出的PDF文档转Markdown文档的方法

程序媛了了

pythonpdf开发语言

注:网上有很多将Markdown文档转为PDF文档的方法,但是却很少有将PDF文档转为Markdown文档的方法。就算有,比如某些网站声称可以将PDF文档转为Markdown文档,尝试过,不太符合自己的要求,而且无法保证文档没有泄露风险。于是本人为了解决这个问题,借助GPT(能使用GPT镜像或者有条件直接使用GPT的,反正能调用GPT接口就行)生成Python代码来完成这个功能。笔记、代码难免存在

- 语文主题教学学习笔记之87

东哥杂谈

“语文主题教学”学习笔记之八十七(0125)今天继续学习小学语文主题教学的实践样态。板块三:教学中体现“书艺”味道。作为四大名著之一的《水浒传》,堪称我国文学宝库之经典。对从《水浒传》中摘选的单元,教师就要了解其原生态,即评书体特点。这也要求教师要了解一些常用的评书行话术语,然后在教学时适时地加入一些,让学生体味其文本中原有的特色。学生也要尽可能地通过朗读的方式,而不单是分析讲解的方式进行学习。细

- Armv8.3 体系结构扩展--原文版

代码改变世界ctw

ARM-TEE-Androidarmv8嵌入式arm架构安全架构芯片TrustzoneSecureboot

快速链接:.ARMv8/ARMv9架构入门到精通-[目录]付费专栏-付费课程【购买须知】:个人博客笔记导读目录(全部)TheArmv8.3architectureextensionTheArmv8.3architectureextensionisanextensiontoArmv8.2.Itaddsmandatoryandoptionalarchitecturalfeatures.Somefeat

- springboot+vue项目实战一-创建SpringBoot简单项目

苹果酱0567

面试题汇总与解析springboot后端java中间件开发语言

这段时间抽空给女朋友搭建一个个人博客,想着记录一下建站的过程,就当做笔记吧。虽然复制zjblog只要一个小时就可以搞定一个网站,或者用cms系统,三四个小时就可以做出一个前后台都有的网站,而且想做成啥样也都行。但是就是要从新做,自己做的意义不一样,更何况,俺就是专门干这个的,嘿嘿嘿要做一个网站,而且从零开始,首先呢就是技术选型了,经过一番思量决定选择-SpringBoot做后端,前端使用Vue做一

- 阅读《认知觉醒》读书笔记

就看看书

本周阅读了周岭的《认知觉醒开启自我改变的原动力》,启发较多,故做读书笔记一则,留待学习。全书共八章,讲述了大脑、潜意识、元认知、专注力、学习力、行动力、情绪力及成本最低的成长之道。具体描述了大脑、焦虑、耐心、模糊、感性、元认知、自控力、专注力、情绪专注、学习专注、匹配、深度、关联、体系、打卡、反馈、休息、清晰、傻瓜、行动、心智宽带、单一视角、游戏心态、早起、冥想、阅读、写作、运动等相关知识点。大脑

- 阅读笔记:阅读方法中的逻辑和转念

施吉涛

聊聊一些阅读的方法论吧,别人家的读书方法刚开始想写,然后就不知道写什么了,因为作者写的非常的“精致”我有一种乡巴佬进城的感觉,看到精美的摆盘,精致的食材不知道该如何下口也就是《阅读的方法》,我们姑且来试一下强劲的大脑篇,第一节:逻辑通俗的来讲,也就是表达的排列和顺序,再进一步就是因果关系和关联实际上书已经看了大概一遍,但直到打算写一下笔记的时候,才发现作者讲的推理更多的是阅读的对象中呈现出的逻辑也

- WebMagic:强大的Java爬虫框架解析与实战

Aaron_945

Javajava爬虫开发语言

文章目录引言官网链接WebMagic原理概述基础使用1.添加依赖2.编写PageProcessor高级使用1.自定义Pipeline2.分布式抓取优点结论引言在大数据时代,网络爬虫作为数据收集的重要工具,扮演着不可或缺的角色。Java作为一门广泛使用的编程语言,在爬虫开发领域也有其独特的优势。WebMagic是一个开源的Java爬虫框架,它提供了简单灵活的API,支持多线程、分布式抓取,以及丰富的

- 《转介绍方法论》学习笔记

小可乐的妈妈

一、高效转介绍的流程:价值观---执行----方案一)转介绍发生的背景:1、对象:谁向谁转介绍?全员营销,人人参与。①员工的激励政策、客户的转介绍诱因制作客户画像:a信任;支付能力;意愿度;便利度(根据家长具备四个特征的个数分为四类)B性格分类C职业分类D年龄性别②执行:套路,策略,方法,流程2、诱因:为什么要转介绍?认同信任;多方共赢;传递美好;零风险承诺打动人心,超越期待。选择做教育,就是选择

- JAVA学习笔记之23种设计模式学习

victorfreedom

Java技术设计模式androidjava常用设计模式

博主最近买了《设计模式》这本书来学习,无奈这本书是以C++语言为基础进行说明,整个学习流程下来效率不是很高,虽然有的设计模式通俗易懂,但感觉还是没有充分的掌握了所有的设计模式。于是博主百度了一番,发现有大神写过了这方面的问题,于是博主迅速拿来学习。一、设计模式的分类总体来说设计模式分为三大类:创建型模式,共五种:工厂方法模式、抽象工厂模式、单例模式、建造者模式、原型模式。结构型模式,共七种:适配器

- 解决Obsidian写笔记中的<img>标签无法显示图片的问题

全能全知者

笔记

Obsidian中写md笔记如果使用标签会显示不出图案,后来才知道因为Obsidian的问题导致只能用绝对路径定位。所以我本人写了一个py插件,将md笔记里的img标签批量替换成Obsidian能够读取的形式。安装FixObsImgDpy:pipinstallFixObsImgDpy安装完成后在需要修复的md文件的父目录下运行命令:FixObsImgDpy就会自动修复父目录以下的全部md文件 仓库

- 免费的GPT可在线直接使用(一键收藏)

kkai人工智能

gpt

1、LuminAI(https://kk.zlrxjh.top)LuminAI标志着一款融合了星辰大数据模型与文脉深度模型的先进知识增强型语言处理系统,旨在自然语言处理(NLP)的技术开发领域发光发热。此系统展现了卓越的语义把握与内容生成能力,轻松驾驭多样化的自然语言处理任务。VisionAI在NLP界的应用领域广泛,能够胜任从机器翻译、文本概要撰写、情绪分析到问答等众多任务。通过对大量文本数据的

- 2021年周总结 03

Ruby之家

这周的生活过得也是比较快,因为暂时住的离公司有点距离,所以通勤时间相对较长一点,而在地铁上的一个半小时如何充分利用起来,则是我最近一直在思考的问题,2021年想让自己的生活都运行在计划中。(有时候自己想干一件事情就总是给自己找很多借口,想着以后怎么怎么样?然而哪有那么多的以后,能够方便当下的工作生活就立马执行就OK,这仅仅只是我此时想到背的很重的老人机笔记本电脑,也算是陪伴我快8年的—当时买的时候

- jQuery 跨域访问的三种方式 No 'Access-Control-Allow-Origin' header is present on the reque

qiaolevip

每天进步一点点学习永无止境跨域众观千象

XMLHttpRequest cannot load http://v.xxx.com. No 'Access-Control-Allow-Origin' header is present on the requested resource. Origin 'http://localhost:63342' is therefore not allowed access. test.html:1

- mysql 分区查询优化

annan211

java分区优化mysql

分区查询优化

引入分区可以给查询带来一定的优势,但同时也会引入一些bug.

分区最大的优点就是优化器可以根据分区函数来过滤掉一些分区,通过分区过滤可以让查询扫描更少的数据。

所以,对于访问分区表来说,很重要的一点是要在where 条件中带入分区,让优化器过滤掉无需访问的分区。

可以通过查看explain执行计划,是否携带 partitions

- MYSQL存储过程中使用游标

chicony

Mysql存储过程

DELIMITER $$

DROP PROCEDURE IF EXISTS getUserInfo $$

CREATE PROCEDURE getUserInfo(in date_day datetime)-- -- 实例-- 存储过程名为:getUserInfo-- 参数为:date_day日期格式:2008-03-08-- BEGINdecla

- mysql 和 sqlite 区别

Array_06

sqlite

转载:

http://www.cnblogs.com/ygm900/p/3460663.html

mysql 和 sqlite 区别

SQLITE是单机数据库。功能简约,小型化,追求最大磁盘效率

MYSQL是完善的服务器数据库。功能全面,综合化,追求最大并发效率

MYSQL、Sybase、Oracle等这些都是试用于服务器数据量大功能多需要安装,例如网站访问量比较大的。而sq

- pinyin4j使用

oloz

pinyin4j

首先需要pinyin4j的jar包支持;jar包已上传至附件内

方法一:把汉字转换为拼音;例如:编程转换后则为biancheng

/**

* 将汉字转换为全拼

* @param src 你的需要转换的汉字

* @param isUPPERCASE 是否转换为大写的拼音; true:转换为大写;fal

- 微博发送私信

随意而生

微博

在前面文章中说了如和获取登陆时候所需要的cookie,现在只要拿到最后登陆所需要的cookie,然后抓包分析一下微博私信发送界面

http://weibo.com/message/history?uid=****&name=****

可以发现其发送提交的Post请求和其中的数据,

让后用程序模拟发送POST请求中的数据,带着cookie发送到私信的接入口,就可以实现发私信的功能了。

- jsp

香水浓

jsp

JSP初始化

容器载入JSP文件后,它会在为请求提供任何服务前调用jspInit()方法。如果您需要执行自定义的JSP初始化任务,复写jspInit()方法就行了

JSP执行

这一阶段描述了JSP生命周期中一切与请求相关的交互行为,直到被销毁。

当JSP网页完成初始化后

- 在 Windows 上安装 SVN Subversion 服务端

AdyZhang

SVN

在 Windows 上安装 SVN Subversion 服务端2009-09-16高宏伟哈尔滨市道里区通达街291号

最佳阅读效果请访问原地址:http://blog.donews.com/dukejoe/archive/2009/09/16/1560917.aspx

现在的Subversion已经足够稳定,而且已经进入了它的黄金时段。我们看到大量的项目都在使

- android开发中如何使用 alertDialog从listView中删除数据?

aijuans

android

我现在使用listView展示了很多的配置信息,我现在想在点击其中一条的时候填出 alertDialog,点击确认后就删除该条数据,( ArrayAdapter ,ArrayList,listView 全部删除),我知道在 下面的onItemLongClick 方法中 参数 arg2 是选中的序号,但是我不知道如何继续处理下去 1 2 3

- jdk-6u26-linux-x64.bin 安装

baalwolf

linux

1.上传安装文件(jdk-6u26-linux-x64.bin)

2.修改权限

[root@localhost ~]# ls -l /usr/local/jdk-6u26-linux-x64.bin

3.执行安装文件

[root@localhost ~]# cd /usr/local

[root@localhost local]# ./jdk-6u26-linux-x64.bin&nbs

- MongoDB经典面试题集锦

BigBird2012

mongodb

1.什么是NoSQL数据库?NoSQL和RDBMS有什么区别?在哪些情况下使用和不使用NoSQL数据库?

NoSQL是非关系型数据库,NoSQL = Not Only SQL。

关系型数据库采用的结构化的数据,NoSQL采用的是键值对的方式存储数据。

在处理非结构化/半结构化的大数据时;在水平方向上进行扩展时;随时应对动态增加的数据项时可以优先考虑使用NoSQL数据库。

在考虑数据库的成熟

- JavaScript异步编程Promise模式的6个特性

bijian1013

JavaScriptPromise

Promise是一个非常有价值的构造器,能够帮助你避免使用镶套匿名方法,而使用更具有可读性的方式组装异步代码。这里我们将介绍6个最简单的特性。

在我们开始正式介绍之前,我们想看看Javascript Promise的样子:

var p = new Promise(function(r

- [Zookeeper学习笔记之八]Zookeeper源代码分析之Zookeeper.ZKWatchManager

bit1129

zookeeper

ClientWatchManager接口

//接口的唯一方法materialize用于确定那些Watcher需要被通知

//确定Watcher需要三方面的因素1.事件状态 2.事件类型 3.znode的path

public interface ClientWatchManager {

/**

* Return a set of watchers that should

- 【Scala十五】Scala核心九:隐式转换之二

bit1129

scala

隐式转换存在的必要性,

在Java Swing中,按钮点击事件的处理,转换为Scala的的写法如下:

val button = new JButton

button.addActionListener(

new ActionListener {

def actionPerformed(event: ActionEvent) {

- Android JSON数据的解析与封装小Demo

ronin47

转自:http://www.open-open.com/lib/view/open1420529336406.html

package com.example.jsondemo;

import org.json.JSONArray;

import org.json.JSONException;

import org.json.JSONObject;

impor

- [设计]字体创意设计方法谈

brotherlamp

UIui自学ui视频ui教程ui资料

从古至今,文字在我们的生活中是必不可少的事物,我们不能想象没有文字的世界将会是怎样。在平面设计中,UI设计师在文字上所花的心思和功夫最多,因为文字能直观地表达UI设计师所的意念。在文字上的创造设计,直接反映出平面作品的主题。

如设计一幅戴尔笔记本电脑的广告海报,假设海报上没有出现“戴尔”两个文字,即使放上所有戴尔笔记本电脑的图片都不能让人们得知这些电脑是什么品牌。只要写上“戴尔笔

- 单调队列-用一个长度为k的窗在整数数列上移动,求窗里面所包含的数的最大值

bylijinnan

java算法面试题

import java.util.LinkedList;

/*

单调队列 滑动窗口

单调队列是这样的一个队列:队列里面的元素是有序的,是递增或者递减

题目:给定一个长度为N的整数数列a(i),i=0,1,...,N-1和窗长度k.

要求:f(i) = max{a(i-k+1),a(i-k+2),..., a(i)},i = 0,1,...,N-1

问题的另一种描述就

- struts2处理一个form多个submit

chiangfai

struts2

web应用中,为完成不同工作,一个jsp的form标签可能有多个submit。如下代码:

<s:form action="submit" method="post" namespace="/my">

<s:textfield name="msg" label="叙述:">

- shell查找上个月,陷阱及野路子

chenchao051

shell

date -d "-1 month" +%F

以上这段代码,假如在2012/10/31执行,结果并不会出现你预计的9月份,而是会出现八月份,原因是10月份有31天,9月份30天,所以-1 month在10月份看来要减去31天,所以直接到了8月31日这天,这不靠谱。

野路子解决:假设当天日期大于15号

- mysql导出数据中文乱码问题

daizj

mysql中文乱码导数据

解决mysql导入导出数据乱码问题方法:

1、进入mysql,通过如下命令查看数据库编码方式:

mysql> show variables like 'character_set_%';

+--------------------------+----------------------------------------+

| Variable_name&nbs

- SAE部署Smarty出现:Uncaught exception 'SmartyException' with message 'unable to write

dcj3sjt126com

PHPsmartysae

对于SAE出现的问题:Uncaught exception 'SmartyException' with message 'unable to write file...。

官方给出了详细的FAQ:http://sae.sina.com.cn/?m=faqs&catId=11#show_213

解决方案为:

01

$path

- 《教父》系列台词

dcj3sjt126com

Your love is also your weak point.

你的所爱同时也是你的弱点。

If anything in this life is certain, if history has taught us anything, it is

that you can kill anyone.

不顾家的人永远不可能成为一个真正的男人。 &

- mongodb安装与使用

dyy_gusi

mongo

一.MongoDB安装和启动,widndows和linux基本相同

1.下载数据库,

linux:mongodb-linux-x86_64-ubuntu1404-3.0.3.tgz

2.解压文件,并且放置到合适的位置

tar -vxf mongodb-linux-x86_64-ubun

- Git排除目录

geeksun

git

在Git的版本控制中,可能有些文件是不需要加入控制的,那我们在提交代码时就需要忽略这些文件,下面讲讲应该怎么给Git配置一些忽略规则。

有三种方法可以忽略掉这些文件,这三种方法都能达到目的,只不过适用情景不一样。

1. 针对单一工程排除文件

这种方式会让这个工程的所有修改者在克隆代码的同时,也能克隆到过滤规则,而不用自己再写一份,这就能保证所有修改者应用的都是同一

- Ubuntu 创建开机自启动脚本的方法

hongtoushizi

ubuntu

转载自: http://rongjih.blog.163.com/blog/static/33574461201111504843245/

Ubuntu 创建开机自启动脚本的步骤如下:

1) 将你的启动脚本复制到 /etc/init.d目录下 以下假设你的脚本文件名为 test。

2) 设置脚本文件的权限 $ sudo chmod 755

- 第八章 流量复制/AB测试/协程

jinnianshilongnian

nginxluacoroutine

流量复制

在实际开发中经常涉及到项目的升级,而该升级不能简单的上线就完事了,需要验证该升级是否兼容老的上线,因此可能需要并行运行两个项目一段时间进行数据比对和校验,待没问题后再进行上线。这其实就需要进行流量复制,把流量复制到其他服务器上,一种方式是使用如tcpcopy引流;另外我们还可以使用nginx的HttpLuaModule模块中的ngx.location.capture_multi进行并发

- 电商系统商品表设计

lkl

DROP TABLE IF EXISTS `category`; -- 类目表

/*!40101 SET @saved_cs_client = @@character_set_client */;

/*!40101 SET character_set_client = utf8 */;

CREATE TABLE `category` (

`id` int(11) NOT NUL

- 修改phpMyAdmin导入SQL文件的大小限制

pda158

sqlmysql

用phpMyAdmin导入mysql数据库时,我的10M的

数据库不能导入,提示mysql数据库最大只能导入2M。

phpMyAdmin数据库导入出错: You probably tried to upload too large file. Please refer to documentation for ways to workaround this limit.

- Tomcat性能调优方案

Sobfist

apachejvmtomcat应用服务器

一、操作系统调优

对于操作系统优化来说,是尽可能的增大可使用的内存容量、提高CPU的频率,保证文件系统的读写速率等。经过压力测试验证,在并发连接很多的情况下,CPU的处理能力越强,系统运行速度越快。。

【适用场景】 任何项目。

二、Java虚拟机调优

应该选择SUN的JVM,在满足项目需要的前提下,尽量选用版本较高的JVM,一般来说高版本产品在速度和效率上比低版本会有改进。

J

- SQLServer学习笔记

vipbooks

数据结构xml

1、create database school 创建数据库school

2、drop database school 删除数据库school

3、use school 连接到school数据库,使其成为当前数据库

4、create table class(classID int primary key identity not null)

创建一个名为class的表,其有一