NLP: BERT Source Code Analysis (Part I)

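Note: before reading the source you should be familiar with the relevant NLP background, such as the attention mechanism and the Transformer architecture, as well as the basics of Python and TensorFlow.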

This post covers the core of BERT's model implementation, the BertModel class, whose code lives in the modeling.py module.

1. Configuration class (BertConfig)

    # Imports used by the modeling.py excerpts in this post.
    import copy
    import json
    import math

    import six
    import tensorflow as tf


    class BertConfig(object):
      """Configuration class for the BERT model."""

      def __init__(self,
                   vocab_size,
                   hidden_size=768,
                   num_hidden_layers=12,
                   num_attention_heads=12,
                   intermediate_size=3072,
                   hidden_act="gelu",
                   hidden_dropout_prob=0.1,
                   attention_probs_dropout_prob=0.1,
                   max_position_embeddings=512,
                   type_vocab_size=16,
                   initializer_range=0.02):
        self.vocab_size = vocab_size
        self.hidden_size = hidden_size
        self.num_hidden_layers = num_hidden_layers
        self.num_attention_heads = num_attention_heads
        self.hidden_act = hidden_act
        self.intermediate_size = intermediate_size
        self.hidden_dropout_prob = hidden_dropout_prob
        self.attention_probs_dropout_prob = attention_probs_dropout_prob
        self.max_position_embeddings = max_position_embeddings
        self.type_vocab_size = type_vocab_size
        self.initializer_range = initializer_range

      @classmethod
      def from_dict(cls, json_object):
        """Constructs a `BertConfig` from a Python dictionary of parameters."""
        config = BertConfig(vocab_size=None)
        for (key, value) in six.iteritems(json_object):
          config.__dict__[key] = value
        return config

      @classmethod
      def from_json_file(cls, json_file):
        """Constructs a `BertConfig` from a json file of parameters."""
        with tf.gfile.GFile(json_file, "r") as reader:
          text = reader.read()
        return cls.from_dict(json.loads(text))

      def to_dict(self):
        """Serializes this instance to a Python dictionary."""
        output = copy.deepcopy(self.__dict__)
        return output

      def to_json_string(self):
        """Serializes this instance to a JSON string."""
        return json.dumps(self.to_dict(), indent=2, sort_keys=True) + "\n"
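
The dictionary and JSON helpers above can be tried out without TensorFlow. The following is only a minimal sketch: `MiniConfig` is a hypothetical stand-in (it is not part of modeling.py) that copies the logic of from_dict, to_dict and to_json_string, keeps just three of the fields, and leaves out from_json_file because that method depends on tf.gfile.

```python
import copy
import json


class MiniConfig(object):
  """Hypothetical stand-in mirroring BertConfig's dict/JSON helpers."""

  def __init__(self, vocab_size, hidden_size=768, num_attention_heads=12):
    self.vocab_size = vocab_size
    self.hidden_size = hidden_size
    self.num_attention_heads = num_attention_heads

  @classmethod
  def from_dict(cls, json_object):
    # Start from the defaults, then overwrite with whatever the dict provides.
    config = cls(vocab_size=None)
    for key, value in json_object.items():
      config.__dict__[key] = value
    return config

  def to_dict(self):
    return copy.deepcopy(self.__dict__)

  def to_json_string(self):
    return json.dumps(self.to_dict(), indent=2, sort_keys=True) + "\n"


config = MiniConfig.from_dict({"vocab_size": 30522, "hidden_size": 256})
# Round trip: object -> JSON string -> dict -> object.
restored = MiniConfig.from_dict(json.loads(config.to_json_string()))
print(restored.to_dict())
```

Keys missing from the dictionary keep their default values. Because from_dict writes straight into `__dict__`, keys that the constructor does not know about are stored as attributes too, without any validation.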

2. Word embedding lookup (embedding_lookup)

    def embedding_lookup(input_ids,  # word ids: [batch_size, seq_length]
                         vocab_size,
                         embedding_size=128,
                         initializer_range=0.02,
                         word_embedding_name="word_embeddings",
                         use_one_hot_embeddings=False):
      # The function assumes input of shape [batch_size, seq_length, num_inputs].
      # A 2D input [batch_size, seq_length] is expanded to
      # [batch_size, seq_length, 1].
      if input_ids.shape.ndims == 2:
        input_ids = tf.expand_dims(input_ids, axis=[-1])

      embedding_table = tf.get_variable(
          name=word_embedding_name,
          shape=[vocab_size, embedding_size],
          initializer=create_initializer(initializer_range))

      # [batch_size * seq_length * num_inputs]
      flat_input_ids = tf.reshape(input_ids, [-1])
      if use_one_hot_embeddings:
        # One-hot rows multiplied by the embedding table.
        one_hot_input_ids = tf.one_hot(flat_input_ids, depth=vocab_size)
        output = tf.matmul(one_hot_input_ids, embedding_table)
      else:
        # Look up rows of the table by index.
        output = tf.gather(embedding_table, flat_input_ids)

      input_shape = get_shape_list(input_ids)

      # output: [batch_size * seq_length * num_inputs, embedding_size]
      # reshaped to: [batch_size, seq_length, num_inputs * embedding_size]
      output = tf.reshape(output,
                          input_shape[0:-1] + [input_shape[-1] * embedding_size])
      return (output, embedding_table)

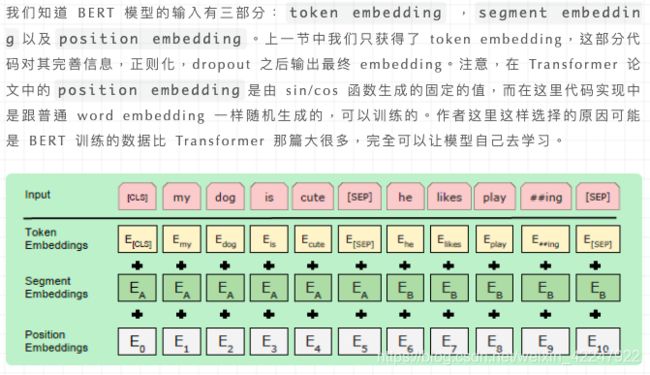
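
To see that the two branches of embedding_lookup return the same values, here is a small sketch in NumPy rather than TensorFlow. The table contents and the ids are made up for illustration; fancy indexing stands in for tf.gather and `np.eye(...)[ids]` stands in for tf.one_hot.

```python
import numpy as np

vocab_size, embedding_size = 5, 3
# Toy embedding table: row i is the vector for token id i.
embedding_table = np.arange(vocab_size * embedding_size, dtype=np.float32)
embedding_table = embedding_table.reshape(vocab_size, embedding_size)

input_ids = np.array([[1, 4, 0], [2, 2, 3]])  # [batch_size=2, seq_length=3]
flat_ids = input_ids.reshape(-1)              # [batch_size * seq_length]

# Path 1: index lookup (the role tf.gather plays).
gathered = embedding_table[flat_ids]

# Path 2: one-hot rows times the table (the tf.one_hot + tf.matmul path).
one_hot = np.eye(vocab_size, dtype=np.float32)[flat_ids]
matmul = one_hot @ embedding_table

# Restore the batch and sequence dimensions.
output = gathered.reshape(input_ids.shape + (embedding_size,))
print(output.shape)  # (2, 3, 3)
```

Both paths select the same rows; they differ only in how the selection is computed.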

3. Embedding post-processing (embedding_postprocessor)

    def embedding_postprocessor(input_tensor,  # [batch_size, seq_length, embedding_size]
                                use_token_type=False,
                                token_type_ids=None,
                                token_type_vocab_size=16,  # usually 2 in practice
                                token_type_embedding_name="token_type_embeddings",
                                use_position_embeddings=True,
                                position_embedding_name="position_embeddings",
                                initializer_range=0.02,
                                # Maximum number of positions; must be >= max_seq_len.
                                max_position_embeddings=512,
                                dropout_prob=0.1):
      # [batch_size, seq_length, embedding_size]
      input_shape = get_shape_list(input_tensor, expected_rank=3)
      batch_size = input_shape[0]
      seq_length = input_shape[1]
      width = input_shape[2]

      output = input_tensor

      # Segment (token type) embeddings.
      if use_token_type:
        if token_type_ids is None:
          raise ValueError("`token_type_ids` must be specified if"
                           "`use_token_type` is True.")
        token_type_table = tf.get_variable(
            name=token_type_embedding_name,
            shape=[token_type_vocab_size, width],
            initializer=create_initializer(initializer_range))
        # The token type table is small, so the one-hot lookup is always used
        # here because it is faster for a small vocabulary.
        flat_token_type_ids = tf.reshape(token_type_ids, [-1])
        one_hot_ids = tf.one_hot(flat_token_type_ids, depth=token_type_vocab_size)
        token_type_embeddings = tf.matmul(one_hot_ids, token_type_table)
        token_type_embeddings = tf.reshape(token_type_embeddings,
                                           [batch_size, seq_length, width])
        output += token_type_embeddings

      # Position embeddings.
      if use_position_embeddings:
        # Make sure seq_length <= max_position_embeddings.
        assert_op = tf.assert_less_equal(seq_length, max_position_embeddings)
        with tf.control_dependencies([assert_op]):
          full_position_embeddings = tf.get_variable(
              name=position_embedding_name,
              shape=[max_position_embeddings, width],
              initializer=create_initializer(initializer_range))
          # The position embeddings are learned parameters of shape
          # [max_position_embeddings, width]. Real input sequences are usually
          # shorter than max_position_embeddings, so to speed up training
          # tf.slice keeps only the first seq_length rows.
          position_embeddings = tf.slice(full_position_embeddings, [0, 0],
                                         [seq_length, -1])
          num_dims = len(output.shape.as_list())

          # After the word embedding the tensor is [batch_size, seq_length, width].
          # Position embeddings do not depend on the input content, so their
          # shape is always [seq_length, width]. They are reshaped to
          # [1, seq_length, width] and then added to the word embeddings by
          # broadcasting over the batch dimension.
          position_broadcast_shape = []
          for _ in range(num_dims - 2):
            position_broadcast_shape.append(1)
          position_broadcast_shape.extend([seq_length, width])
          position_embeddings = tf.reshape(position_embeddings,
                                           position_broadcast_shape)
          output += position_embeddings

      output = layer_norm_and_dropout(output, dropout_prob)
      return output

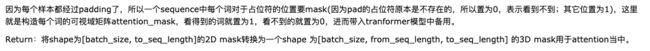
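
The slice-then-broadcast step for the position embeddings can be illustrated with NumPy. This is a sketch under made-up sizes: the "learned" table is filled with the position index so the result is easy to read, and the word embeddings are all zeros.

```python
import numpy as np

batch_size, seq_length, width = 2, 4, 3
max_position_embeddings = 8

word_embeddings = np.zeros((batch_size, seq_length, width), dtype=np.float32)
# Stand-in for the learned table: row p is filled with the value p.
full_position_embeddings = np.repeat(
    np.arange(max_position_embeddings, dtype=np.float32)[:, None], width, axis=1)

# Keep only the first seq_length rows (the tf.slice step).
position_embeddings = full_position_embeddings[:seq_length]   # [4, 3]
# Add a leading axis of size 1 so the batch dimension broadcasts.
position_embeddings = position_embeddings.reshape(1, seq_length, width)

output = word_embeddings + position_embeddings                # [2, 4, 3]
print(output[1, :, 0])
```

Every example in the batch receives the same position vectors, which is exactly what "independent of the input content" means here.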

4. Building the attention_mask

    def create_attention_mask_from_input_mask(from_tensor, to_mask):
      # Here to_mask is input_mask and from_tensor is input_ids; both have
      # length max_seq_length.
      from_shape = get_shape_list(from_tensor, expected_rank=[2, 3])
      batch_size = from_shape[0]
      from_seq_length = from_shape[1]

      to_shape = get_shape_list(to_mask, expected_rank=2)
      to_seq_length = to_shape[1]

      to_mask = tf.cast(
          tf.reshape(to_mask, [batch_size, 1, to_seq_length]), tf.float32)

      broadcast_ones = tf.ones(
          shape=[batch_size, from_seq_length, 1], dtype=tf.float32)

      # [batch_size, from_seq_length, 1] * [batch_size, 1, to_seq_length]
      # broadcasts to [batch_size, from_seq_length, to_seq_length].
      mask = broadcast_ones * to_mask
      return mask

Example:

    # Run with eager execution (the default in TensorFlow 2.x) so that the
    # tensors print their values.
    import tensorflow as tf

    batch_size = 2
    to_seq_length = 3
    from_seq_length = 3

    to_mask = [[1, 0, 0], [1, 1, 0]]
    to_mask = tf.cast(
        tf.reshape(to_mask, [batch_size, 1, to_seq_length]), tf.float32)
    print(to_mask)

    broadcast_ones = tf.ones(
        shape=[batch_size, from_seq_length, 1], dtype=tf.float32)
    print(broadcast_ones)

    mask = broadcast_ones * to_mask
    mask  # last expression of a notebook cell, shown as the tensor's repr

Output:

    tf.Tensor(
    [[[1. 0. 0.]]

     [[1. 1. 0.]]], shape=(2, 1, 3), dtype=float32)
    tf.Tensor(
    [[[1.]
      [1.]
      [1.]]

     [[1.]
      [1.]
      [1.]]], shape=(2, 3, 1), dtype=float32)
    <tf.Tensor: id=63, shape=(2, 3, 3), dtype=float32, numpy=
    array([[[1., 0., 0.],
            [1., 0., 0.],
            [1., 0., 0.]],

           [[1., 1., 0.],
            [1., 1., 0.],
            [1., 1., 0.]]], dtype=float32)>

5. Attention layer (attention_layer)

    def attention_layer(from_tensor,  # [batch_size, from_seq_length, from_width]
                        to_tensor,  # [batch_size, to_seq_length, to_width]
                        attention_mask=None,  # [batch_size, from_seq_length, to_seq_length]
                        num_attention_heads=1,  # number of attention heads
                        size_per_head=512,  # size of each head
                        query_act=None,  # activation for the query transform
                        key_act=None,  # activation for the key transform
                        value_act=None,  # activation for the value transform
                        attention_probs_dropout_prob=0.0,  # dropout on attention probs
                        initializer_range=0.02,  # range of the weight initializer
                        # Whether to return a 2D tensor.
                        # If True, the output shape is
                        #   [batch_size * from_seq_length, num_attention_heads * size_per_head].
                        # If False, the output shape is
                        #   [batch_size, from_seq_length, num_attention_heads * size_per_head].
                        do_return_2d_tensor=False,
                        # For 3D inputs the batch size is the first dimension, but
                        # a 3D tensor may have been flattened to 2D, in which case
                        # batch_size must be passed in explicitly.
                        batch_size=None,
                        from_seq_length=None,  # same as above
                        to_seq_length=None):  # same as above

      def transpose_for_scores(input_tensor, batch_size, num_attention_heads,
                               seq_length, width):
        output_tensor = tf.reshape(
            input_tensor, [batch_size, seq_length, num_attention_heads, width])

        # [batch_size, num_attention_heads, seq_length, width]
        output_tensor = tf.transpose(output_tensor, [0, 2, 1, 3])
        return output_tensor

      from_shape = get_shape_list(from_tensor, expected_rank=[2, 3])
      to_shape = get_shape_list(to_tensor, expected_rank=[2, 3])

      if len(from_shape) != len(to_shape):
        raise ValueError(
            "The rank of `from_tensor` must match the rank of `to_tensor`.")

      if len(from_shape) == 3:
        batch_size = from_shape[0]
        from_seq_length = from_shape[1]
        to_seq_length = to_shape[1]
      elif len(from_shape) == 2:
        if (batch_size is None or from_seq_length is None or to_seq_length is None):
          raise ValueError(
              "When passing in rank 2 tensors to attention_layer, the values "
              "for `batch_size`, `from_seq_length`, and `to_seq_length` "
              "must all be specified.")

      # Shorthand used in the shape comments below:
      #   B = batch size (number of sequences)
      #   F = `from_tensor` sequence length
      #   T = `to_tensor` sequence length
      #   N = `num_attention_heads`
      #   H = `size_per_head`

      # Flatten from_tensor and to_tensor into 2D matrices.
      from_tensor_2d = reshape_to_matrix(from_tensor)  # [B*F, from_width]
      to_tensor_2d = reshape_to_matrix(to_tensor)  # [B*T, to_width]

      # Feed from_tensor through a dense layer to get query_layer.
      # `query_layer` = [B*F, N*H]
      query_layer = tf.layers.dense(
          from_tensor_2d,
          num_attention_heads * size_per_head,
          activation=query_act,
          name="query",
          kernel_initializer=create_initializer(initializer_range))

      # Feed to_tensor through a dense layer to get key_layer.
      # `key_layer` = [B*T, N*H]
      key_layer = tf.layers.dense(
          to_tensor_2d,
          num_attention_heads * size_per_head,
          activation=key_act,
          name="key",
          kernel_initializer=create_initializer(initializer_range))

      # Feed to_tensor through a dense layer to get value_layer.
      # `value_layer` = [B*T, N*H]
      value_layer = tf.layers.dense(
          to_tensor_2d,
          num_attention_heads * size_per_head,
          activation=value_act,
          name="value",
          kernel_initializer=create_initializer(initializer_range))

      # Split query_layer into heads: [B*F, N*H] ==> [B, F, N, H] ==> [B, N, F, H]
      query_layer = transpose_for_scores(query_layer, batch_size,
                                         num_attention_heads, from_seq_length,
                                         size_per_head)

      # Split key_layer into heads: [B*T, N*H] ==> [B, T, N, H] ==> [B, N, T, H]
      key_layer = transpose_for_scores(key_layer, batch_size, num_attention_heads,
                                       to_seq_length, size_per_head)

      # Take the dot product of query and key, then scale by 1/sqrt(H);
      # see the original Transformer paper for the formula.
      # `attention_scores` = [B, N, F, T]
      attention_scores = tf.matmul(query_layer, key_layer, transpose_b=True)
      attention_scores = tf.multiply(attention_scores,
                                     1.0 / math.sqrt(float(size_per_head)))

      if attention_mask is not None:
        # `attention_mask` = [B, 1, F, T]
        attention_mask = tf.expand_dims(attention_mask, axis=[1])

        # Where attention_mask is 1: (1 - 1) * -10000, so adder is 0.
        # Where attention_mask is 0: (1 - 0) * -10000, so adder is -10000.
        adder = (1.0 - tf.cast(attention_mask, tf.float32)) * -10000.0

        # The raw attention scores are normally not large, so adding -10000
        # at masked positions is effectively the same as setting them to
        # negative infinity.
        attention_scores += adder

      # Negative infinity becomes 0 after softmax, so positions whose mask is 0
      # receive no attention weight.
      # `attention_probs` = [B, N, F, T]
      attention_probs = tf.nn.softmax(attention_scores)

      # Dropout on attention_probs drops entire tokens to attend to. This may
      # look unusual, but it is what the original Transformer paper does.
      attention_probs = dropout(attention_probs, attention_probs_dropout_prob)

      # `value_layer` = [B, T, N, H]
      value_layer = tf.reshape(
          value_layer,
          [batch_size, to_seq_length, num_attention_heads, size_per_head])

      # `value_layer` = [B, N, T, H]
      value_layer = tf.transpose(value_layer, [0, 2, 1, 3])

      # `context_layer` = [B, N, F, H]
      context_layer = tf.matmul(attention_probs, value_layer)

      # `context_layer` = [B, F, N, H]
      context_layer = tf.transpose(context_layer, [0, 2, 1, 3])

      if do_return_2d_tensor:
        # `context_layer` = [B*F, N*H]
        context_layer = tf.reshape(
            context_layer,
            [batch_size * from_seq_length, num_attention_heads * size_per_head])
      else:
        # `context_layer` = [B, F, N*H]
        context_layer = tf.reshape(
            context_layer,
            [batch_size, from_seq_length, num_attention_heads * size_per_head])

      return context_layer

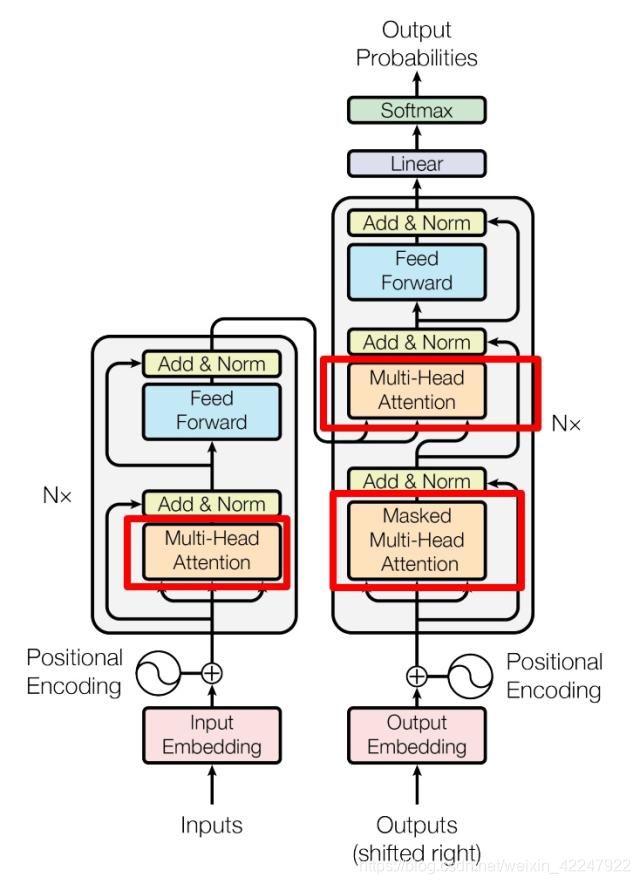
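
The score, mask and softmax steps of attention_layer can be reproduced in a few lines of NumPy. This is a sketch, not the TensorFlow implementation: the query, key and value tensors are random stand-ins for the outputs of the three dense layers, dropout is omitted, and the `softmax` helper is written here for illustration.

```python
import numpy as np


def softmax(x):
  # Numerically stable softmax over the last axis.
  x = x - x.max(axis=-1, keepdims=True)
  e = np.exp(x)
  return e / e.sum(axis=-1, keepdims=True)


B, N, F, T, H = 1, 2, 3, 3, 4
rng = np.random.RandomState(0)
query = rng.randn(B, N, F, H)
key = rng.randn(B, N, T, H)
value = rng.randn(B, N, T, H)

# The last `to` position is padding for every `from` position.
attention_mask = np.array([[[1, 1, 0]] * F], dtype=np.float32)  # [B, F, T]

scores = query @ key.transpose(0, 1, 3, 2) / np.sqrt(H)         # [B, N, F, T]
adder = (1.0 - attention_mask[:, None, :, :]) * -10000.0        # [B, 1, F, T]
probs = softmax(scores + adder)                                 # [B, N, F, T]

context = probs @ value                                         # [B, N, F, H]
context = context.transpose(0, 2, 1, 3).reshape(B, F, N * H)    # [B, F, N*H]
print(probs[0, 0].round(3))
print(context.shape)  # (1, 3, 8)
```

In the printed probabilities the last column is 0 in every row, and the remaining two columns of each row sum to 1: the padded position contributes nothing to the context vectors.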

6. Transformer (transformer_model)

    def transformer_model(input_tensor,  # [batch_size, seq_length, hidden_size]
                          attention_mask=None,  # [batch_size, seq_length, seq_length]
                          hidden_size=768,
                          num_hidden_layers=12,
                          num_attention_heads=12,
                          intermediate_size=3072,
                          intermediate_act_fn=gelu,  # activation of the feed-forward layer
                          hidden_dropout_prob=0.1,
                          attention_probs_dropout_prob=0.1,
                          initializer_range=0.02,
                          do_return_all_layers=False):
      # The output width must be hidden_size, and it is split across
      # num_attention_heads heads of size_per_head units each, so
      # hidden_size = num_attention_heads * size_per_head.
      if hidden_size % num_attention_heads != 0:
        raise ValueError(
            "The hidden size (%d) is not a multiple of the number of attention "
            "heads (%d)" % (hidden_size, num_attention_heads))

      attention_head_size = int(hidden_size / num_attention_heads)
      input_shape = get_shape_list(input_tensor, expected_rank=3)
      batch_size = input_shape[0]
      seq_length = input_shape[1]
      input_width = input_shape[2]

      # The encoder uses residual connections, so the shapes must match.
      if input_width != hidden_size:
        raise ValueError("The width of the input tensor (%d) != hidden size (%d)" %
                         (input_width, hidden_size))

      # Reshapes are cheap on CPU/GPU but can be costly on TPU, so to avoid
      # reshaping back and forth between 2D and 3D, every 3D tensor is kept as
      # a 2D matrix.
      prev_output = reshape_to_matrix(input_tensor)

      all_layer_outputs = []
      for layer_idx in range(num_hidden_layers):
        with tf.variable_scope("layer_%d" % layer_idx):
          layer_input = prev_output

          with tf.variable_scope("attention"):
            # Multi-head attention. The split into heads happens inside
            # attention_layer; this list holds one attention output per
            # attended sequence, which here is only the self-attention output.
            attention_heads = []
            with tf.variable_scope("self"):
              # self-attention
              attention_head = attention_layer(
                  from_tensor=layer_input,
                  to_tensor=layer_input,
                  attention_mask=attention_mask,
                  num_attention_heads=num_attention_heads,
                  size_per_head=attention_head_size,
                  attention_probs_dropout_prob=attention_probs_dropout_prob,
                  initializer_range=initializer_range,
                  do_return_2d_tensor=True,
                  batch_size=batch_size,
                  from_seq_length=seq_length,
                  to_seq_length=seq_length)
              attention_heads.append(attention_head)

            attention_output = None
            if len(attention_heads) == 1:
              attention_output = attention_heads[0]
            else:
              # If there were several attention outputs, concatenate them
              # before the projection.
              attention_output = tf.concat(attention_heads, axis=-1)

            # Project the attention output linearly so that its shape matches
            # the input, then apply dropout + residual + layer norm.
            with tf.variable_scope("output"):
              attention_output = tf.layers.dense(
                  attention_output,
                  hidden_size,
                  kernel_initializer=create_initializer(initializer_range))
              attention_output = dropout(attention_output, hidden_dropout_prob)
              attention_output = layer_norm(attention_output + layer_input)

          # feed-forward
          with tf.variable_scope("intermediate"):
            intermediate_output = tf.layers.dense(
                attention_output,
                intermediate_size,
                activation=intermediate_act_fn,
                kernel_initializer=create_initializer(initializer_range))

          # Project the feed-forward output back to `hidden_size`,
          # then apply dropout + residual + layer norm.
          with tf.variable_scope("output"):
            layer_output = tf.layers.dense(
                intermediate_output,
                hidden_size,
                kernel_initializer=create_initializer(initializer_range))
            layer_output = dropout(layer_output, hidden_dropout_prob)
            layer_output = layer_norm(layer_output + attention_output)
            prev_output = layer_output
            all_layer_outputs.append(layer_output)

      if do_return_all_layers:
        final_outputs = []
        for layer_output in all_layer_outputs:
          final_output = reshape_from_matrix(layer_output, input_shape)
          final_outputs.append(final_output)
        return final_outputs
      else:
        final_output = reshape_from_matrix(prev_output, input_shape)
        return final_output
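
Each encoder layer applies the same pattern twice: a projection back to hidden_size, then dropout, a residual connection and layer normalization. The NumPy sketch below shows that pattern on a flattened 2D input. Several pieces are simplifying assumptions: the `dense` and `layer_norm` helpers are written here for illustration (random weights, no bias, no learned scale or shift, and an `eps` value picked for the example), dropout is left out, and a plain dense layer stands in for the self-attention output.

```python
import numpy as np


def gelu(x):
  # Tanh approximation of the GELU activation.
  return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x ** 3)))


def layer_norm(x, eps=1e-12):
  # Normalize over the last (hidden) axis; learned scale/shift omitted.
  mean = x.mean(axis=-1, keepdims=True)
  var = x.var(axis=-1, keepdims=True)
  return (x - mean) / np.sqrt(var + eps)


def dense(x, out_dim, rng, activation=None):
  # Random weights stand in for trained parameters.
  w = rng.randn(x.shape[-1], out_dim) * 0.02
  y = x @ w
  return activation(y) if activation is not None else y


hidden_size, intermediate_size = 8, 32
rng = np.random.RandomState(0)
layer_input = rng.randn(6, hidden_size)  # [batch_size * seq_length, hidden_size]

# Attention sub-layer: projection + residual + layer norm.
attention_output = layer_norm(dense(layer_input, hidden_size, rng) + layer_input)

# Feed-forward sub-layer: expand, activate, project back, residual, layer norm.
intermediate = dense(attention_output, intermediate_size, rng, activation=gelu)
layer_output = layer_norm(dense(intermediate, hidden_size, rng) + attention_output)
print(layer_output.shape)  # (6, 8)
```

The residual additions only work because every sub-layer returns a tensor of width hidden_size, which is why transformer_model rejects inputs whose width differs from hidden_size.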

7. Entry point (BertModel.__init__)


    def __init__(self,
                 config,  # a BertConfig instance
                 is_training,
                 input_ids,  # [batch_size, seq_length]
                 input_mask=None,  # [batch_size, seq_length]
                 token_type_ids=None,  # [batch_size, seq_length]
                 use_one_hot_embeddings=False,  # one-hot lookup if True, else tf.gather()
                 scope=None):
      config = copy.deepcopy(config)
      if not is_training:
        # Disable dropout outside of training.
        config.hidden_dropout_prob = 0.0
        config.attention_probs_dropout_prob = 0.0

      input_shape = get_shape_list(input_ids, expected_rank=2)
      batch_size = input_shape[0]
      seq_length = input_shape[1]

      # No mask given: treat every position as valid (all ones).
      if input_mask is None:
        input_mask = tf.ones(shape=[batch_size, seq_length], dtype=tf.int32)

      if token_type_ids is None:
        token_type_ids = tf.zeros(shape=[batch_size, seq_length], dtype=tf.int32)

      with tf.variable_scope(scope, default_name="bert"):
        with tf.variable_scope("embeddings"):
          # word embedding
          (self.embedding_output, self.embedding_table) = embedding_lookup(
              input_ids=input_ids,
              vocab_size=config.vocab_size,
              embedding_size=config.hidden_size,
              initializer_range=config.initializer_range,
              word_embedding_name="word_embeddings",
              use_one_hot_embeddings=use_one_hot_embeddings)

          # Add the position and segment embeddings,
          # then apply layer norm + dropout.
          self.embedding_output = embedding_postprocessor(
              input_tensor=self.embedding_output,
              use_token_type=True,
              token_type_ids=token_type_ids,
              token_type_vocab_size=config.type_vocab_size,
              token_type_embedding_name="token_type_embeddings",
              use_position_embeddings=True,
              position_embedding_name="position_embeddings",
              initializer_range=config.initializer_range,
              max_position_embeddings=config.max_position_embeddings,
              dropout_prob=config.hidden_dropout_prob)

        with tf.variable_scope("encoder"):
          # input_ids are the padded word ids, e.g. [25, 120, 34, 0, 0].
          # input_mask marks the valid tokens, e.g. [1, 1, 1, 0, 0].
          attention_mask = create_attention_mask_from_input_mask(
              input_ids, input_mask)

          # Stack of transformer layers. Because do_return_all_layers=True,
          # `all_encoder_layers` is a list with one tensor of shape
          # [batch_size, seq_length, hidden_size] per layer.
          self.all_encoder_layers = transformer_model(
              input_tensor=self.embedding_output,
              attention_mask=attention_mask,
              hidden_size=config.hidden_size,
              num_hidden_layers=config.num_hidden_layers,
              num_attention_heads=config.num_attention_heads,
              intermediate_size=config.intermediate_size,
              intermediate_act_fn=get_activation(config.hidden_act),
              hidden_dropout_prob=config.hidden_dropout_prob,
              attention_probs_dropout_prob=config.attention_probs_dropout_prob,
              initializer_range=config.initializer_range,
              do_return_all_layers=True)

        # `self.sequence_output` is the output of the last layer, with shape
        # [batch_size, seq_length, hidden_size].
        self.sequence_output = self.all_encoder_layers[-1]

        # The "pooler" converts the encoder output
        # [batch_size, seq_length, hidden_size] into [batch_size, hidden_size].
        with tf.variable_scope("pooler"):
          # Take the last layer's tensor at the first position, [CLS], which
          # matters for classification tasks.
          # sequence_output[:, 0:1, :] has shape [batch_size, 1, hidden_size],
          # so squeeze is used to drop the second dimension.
          first_token_tensor = tf.squeeze(self.sequence_output[:, 0:1, :], axis=1)

          # Then apply one dense layer; the output is still
          # [batch_size, hidden_size].
          self.pooled_output = tf.layers.dense(
              first_token_tensor,
              config.hidden_size,
              activation=tf.tanh,
              kernel_initializer=create_initializer(config.initializer_range))
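
The pooler step is small enough to mirror directly in NumPy. In this sketch the encoder output and the dense layer's kernel are random stand-ins, so only the shapes and the slice/squeeze behaviour are meaningful.

```python
import numpy as np

batch_size, seq_length, hidden_size = 2, 5, 4
rng = np.random.RandomState(0)
sequence_output = rng.randn(batch_size, seq_length, hidden_size)

# The slice keeps the sequence axis ([2, 1, 4]); squeeze removes it ([2, 4]).
first_token_tensor = np.squeeze(sequence_output[:, 0:1, :], axis=1)

# Dense layer with tanh; random weights stand in for the trained kernel.
kernel = rng.randn(hidden_size, hidden_size) * 0.02
bias = np.zeros(hidden_size)
pooled_output = np.tanh(first_token_tensor @ kernel + bias)
print(pooled_output.shape)  # (2, 4)
```

Indexing with `sequence_output[:, 0, :]` would give the same [batch_size, hidden_size] result in one step; the slice followed by squeeze simply makes the dropped dimension explicit.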

Summary

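    import tensorflow as tf

    import modeling

    # Assume the input has already been tokenized into word ids; shape = [2, 3].
    input_ids = tf.constant([[31, 51, 99], [15, 5, 0]])
    input_mask = tf.constant([[1, 1, 1], [1, 1, 0]])

    # Segment (token type) ids. In the first example the first two tokens belong
    # to sentence 1 and the last token to sentence 2. In the second example the
    # first token belongs to sentence 1 and the second token to sentence 2; the
    # third element, 0, is padding.
    # The original code uses the values below. The id 2 looks unnecessary to me,
    # though I may be missing something. (It is a valid index here because
    # type_vocab_size defaults to 16.)
    token_type_ids = tf.constant([[0, 0, 1], [0, 2, 0]])

    # Create a BertConfig instance. hidden_size must be a multiple of
    # num_attention_heads (see the check in transformer_model), so 8 heads are
    # used here; the original snippet's 6 heads would raise a ValueError.
    config = modeling.BertConfig(vocab_size=32000, hidden_size=512,
        num_hidden_layers=8, num_attention_heads=8, intermediate_size=1024)

    # Create a BertModel instance.
    model = modeling.BertModel(config=config, is_training=True,
        input_ids=input_ids, input_mask=input_mask, token_type_ids=token_type_ids)

    label_embeddings = tf.get_variable(...)

    # The representation of the first token, [CLS], from the last layer; it can
    # be treated as an embedding of the whole sentence.
    pooled_output = model.get_pooled_output()
    logits = tf.matmul(pooled_output, label_embeddings)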

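References

Transformer model explained

A reading of BERT, the NLP powerhouse

A collection of BERT papers, articles, and code resources

The modeling.py module

The referenced issue

Understanding the attention mechanism: principles and models

The original paper

The original code

BERT source code walkthrough (II): the Transformer implementation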