The Happy House

Why are we using Keras? Keras was developed to enable deep learning engineers to build and experiment with different models very quickly. Just as TensorFlow is a higher-level framework than Python, Keras is an even higher-level framework and provides additional abstractions. Being able to go from idea to result with the least possible delay is key to finding good models. However, Keras is more restrictive than the lower-level frameworks, so there are some very complex models that you can implement in TensorFlow but not (without more difficulty) in Keras. That being said, Keras will work fine for many common models.

import numpy as np

from keras import layers

from keras.layers import Input, Dense, Activation, ZeroPadding2D, BatchNormalization, Flatten, Conv2D

from keras.layers import AveragePooling2D, MaxPooling2D, Dropout, GlobalMaxPooling2D, GlobalAveragePooling2D

from keras.models import Model

from keras.preprocessing import image

from keras.utils import layer_utils

from keras.utils.data_utils import get_file

from keras.applications.imagenet_utils import preprocess_input

import pydot

from IPython.display import SVG

from keras.utils.vis_utils import model_to_dot

from keras.utils import plot_model

from kt_utils import *

import keras.backend as K

K.set_image_data_format('channels_last')

import matplotlib.pyplot as plt

from matplotlib.pyplot import imshow

%matplotlib inline

Many functions are now imported, so you can call them directly, e.g. X = Input(...) or X = ZeroPadding2D(...).

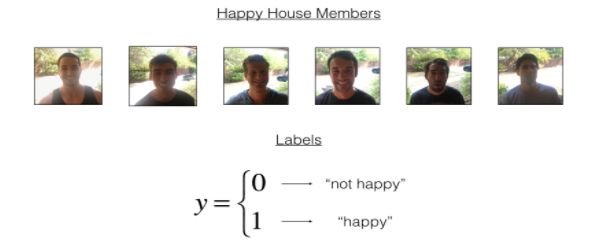

As a deep learning expert, to make sure the "Happy" rule is strictly applied, you are going to build an algorithm that uses pictures from the front door camera to check whether the person is happy. The door should open only if the person is happy.

Normalize the dataset and learn about its shapes.

X_train_orig, Y_train_orig, X_test_orig, Y_test_orig, classes = load_dataset()

# Normalize image vectors

X_train = X_train_orig/255.

X_test = X_test_orig/255.

# Reshape

Y_train = Y_train_orig.T

Y_test = Y_test_orig.T

print ("number of training examples = " + str(X_train.shape[0]))

print ("number of test examples = " + str(X_test.shape[0]))

print ("X_train shape: " + str(X_train.shape))

print ("Y_train shape: " + str(Y_train.shape))

print ("X_test shape: " + str(X_test.shape))

print ("Y_test shape: " + str(Y_test.shape))

Details of the "Happy" dataset:

- Images are of shape (64,64,3)

- Training: 600 pictures

- Test: 150 pictures
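The normalization and reshaping steps can be sketched on synthetic data. This is only a minimal sketch, not the course's load_dataset(): the random arrays and the small example count are stand-ins, and the (1, m) label layout is an assumption inferred from the transpose applied to the labels.

```python
import numpy as np

# Synthetic stand-in for load_dataset(): 6 RGB images of 64x64 pixels with
# integer intensities in [0, 255], and labels stored as a (1, m) row vector.
# Both the data and the (1, m) label layout are assumptions for illustration.
rng = np.random.default_rng(0)
X_orig = rng.integers(0, 256, size=(6, 64, 64, 3), dtype=np.uint8)
Y_orig = rng.integers(0, 2, size=(1, 6))

# Scale pixel intensities from [0, 255] to [0, 1].
X = X_orig / 255.

# Transpose the labels so there is one row per example: (1, m) -> (m, 1).
Y = Y_orig.T

print("X shape:", X.shape)
print("Y shape:", Y.shape)
print("pixel range:", X.min(), X.max())
```

Keeping the examples on the first axis of both arrays is what lets X and Y be passed together to a training call.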

2 - Building a model in Keras

def HappyModel(input_shape):

    # Define the input placeholder as a tensor with shape input_shape. Think of this as your input image!

    X_input = Input(input_shape)

    # Zero-Padding: pads the border of X_input with zeroes

    X = ZeroPadding2D((3, 3))(X_input)

    # CONV -> BN -> RELU Block applied to X

    X = Conv2D(32, (7, 7), strides = (1, 1), name = 'conv0')(X)

    X = BatchNormalization(axis = 3, name = 'bn0')(X)

    X = Activation('relu')(X)

    # MAXPOOL

    X = MaxPooling2D((2, 2), name='max_pool')(X)

    # FLATTEN X (means convert it to a vector) + FULLYCONNECTED

    X = Flatten()(X)

    X = Dense(1, activation='sigmoid', name='fc')(X)

    # Create model. This creates your Keras model instance; you'll use this instance to train/test the model.

    model = Model(inputs = X_input, outputs = X, name='HappyModel')

    return model
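To see how the layers above transform a (64, 64, 3) image, the shapes and parameter counts can be traced by hand. The following is a minimal sketch in plain Python, not Keras code: the helper conv_output_size is hypothetical, and the sketch assumes the Keras defaults of 'valid' padding for Conv2D and a pooling stride equal to the pool size.

```python
def conv_output_size(n, kernel, stride=1, pad=0):
    """Spatial size after a convolution or pooling window (no dilation)."""
    return (n + 2 * pad - kernel) // stride + 1

h = w = 64      # input height and width
channels = 3    # RGB input

# ZeroPadding2D((3, 3)): adds 3 pixels on every side.
h, w = h + 2 * 3, w + 2 * 3

# Conv2D(32, (7, 7), strides=(1, 1)), assuming the default 'valid' padding.
conv_params = 7 * 7 * channels * 32 + 32   # kernel weights + biases
h, w, channels = conv_output_size(h, 7), conv_output_size(w, 7), 32

# BatchNormalization(axis=3): gamma, beta, moving mean and moving variance
# for each channel (only gamma and beta are trainable).
bn_params = 4 * channels

# MaxPooling2D((2, 2)), assuming the stride defaults to the pool size.
h, w = conv_output_size(h, 2, stride=2), conv_output_size(w, 2, stride=2)

# Flatten followed by Dense(1): one weight per flattened feature plus a bias.
flat = h * w * channels
dense_params = flat * 1 + 1

total_params = conv_params + bn_params + dense_params
print("shape before Flatten:", (h, w, channels))
print("flattened features:", flat)
print("total parameters:", total_params)
```

The padding of 3 exactly compensates for the 7x7 kernel, so the convolution preserves the 64x64 spatial size and only the pooling layer halves it.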

You have now built a function to describe your model. To train and test this model, there are four steps in Keras:

- Create the model by calling the function above

- Compile the model by calling model.compile(optimizer = "...", loss = "...", metrics = ["accuracy"])

- Train the model on train data by calling model.fit(x = ..., y = ..., epochs = ..., batch_size = ...)

- Test the model on test data by calling model.evaluate(x = ..., y = ...)

If you want to know more about model.compile(), model.fit(), model.evaluate() and their arguments, refer to the official Keras documentation.

Create the model

happyModel = HappyModel(X_train.shape[1:])

Implement step 2, i.e. compile the model to configure the learning process. Choose the 3 arguments of compile() wisely. Hint: the Happy Challenge is a binary classification problem.

Compile the model

happyModel.compile(optimizer = "Adam", loss = "binary_crossentropy", metrics = ["accuracy"])
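To make the choice of loss and metric concrete, here is a minimal NumPy sketch of what binary cross-entropy and accuracy measure for a sigmoid output. It is an illustration rather than Keras's own implementation; the function names, the eps clipping value, the 0.5 threshold and the example numbers are all assumptions.

```python
import numpy as np

def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy; predictions are clipped to avoid log(0)."""
    y_pred = np.clip(y_pred, eps, 1 - eps)
    losses = -(y_true * np.log(y_pred) + (1 - y_true) * np.log(1 - y_pred))
    return float(np.mean(losses))

def binary_accuracy(y_true, y_pred, threshold=0.5):
    """Fraction of predictions that fall on the correct side of the threshold."""
    return float(np.mean((y_pred > threshold) == (y_true == 1)))

# Made-up labels and sigmoid outputs for four examples.
y_true = np.array([1., 0., 1., 1.])
y_pred = np.array([0.9, 0.2, 0.6, 0.4])

loss = binary_crossentropy(y_true, y_pred)
acc = binary_accuracy(y_true, y_pred)
print("loss:", loss)
print("accuracy:", acc)
```

The loss penalizes confident wrong predictions heavily, while accuracy only counts which side of the threshold each prediction lands on, which is why the two can move differently from epoch to epoch.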

Train

Train the model. Choose the number of epochs and the batch size.

happyModel.fit(x = X_train, y = Y_train, epochs = 10, batch_size = 32)

Epoch 1/10

600/600 [==============================] - 14s - loss: 2.5816 - acc: 0.6033

Epoch 2/10

600/600 [==============================] - 14s - loss: 0.3833 - acc: 0.8467

Epoch 3/10

600/600 [==============================] - 14s - loss: 0.1957 - acc: 0.9283

Epoch 4/10

600/600 [==============================] - 15s - loss: 0.1397 - acc: 0.9517

Epoch 5/10

600/600 [==============================] - 15s - loss: 0.1134 - acc: 0.9633

Epoch 6/10

600/600 [==============================] - 13s - loss: 0.1129 - acc: 0.9667

Epoch 7/10

600/600 [==============================] - 13s - loss: 0.0971 - acc: 0.9733

Epoch 8/10

600/600 [==============================] - 13s - loss: 0.0840 - acc: 0.9717

Epoch 9/10

600/600 [==============================] - 12s - loss: 0.0740 - acc: 0.9817

Epoch 10/10

600/600 [==============================] - 12s - loss: 0.1057 - acc: 0.9650

Note that if you run fit() again, the model will continue to train with the parameters it has already learnt instead of reinitializing them.
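The relationship between epochs, batch size and the number of gradient updates can be sketched in plain Python. This is a simplified illustration using the values from the fit() call above (600 examples, batch size 32, 10 epochs); batch_slices is a hypothetical helper, and the sketch ignores any shuffling the training loop may perform.

```python
def batch_slices(num_examples, batch_size):
    """Yield (start, stop) index pairs that cover the data in order."""
    for start in range(0, num_examples, batch_size):
        yield start, min(start + batch_size, num_examples)

num_examples, batch_size, epochs = 600, 32, 10
slices = list(batch_slices(num_examples, batch_size))

# One gradient update per mini-batch; the last batch may be smaller.
steps_per_epoch = len(slices)
last_batch_size = slices[-1][1] - slices[-1][0]
total_updates = steps_per_epoch * epochs

print("updates per epoch:", steps_per_epoch)
print("size of last batch:", last_batch_size)
print("total updates:", total_updates)
```

A smaller batch size gives more updates per epoch at the cost of noisier gradients, which is one reason the two settings are usually tuned together.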

Test/evaluate the model.

preds = happyModel.evaluate(X_test, Y_test)

print()

print ("Loss = " + str(preds[0]))

print ("Test Accuracy = " + str(preds[1]))

If your HappyModel() function worked, you should have observed much better than random-guessing (50%) accuracy on the train and test sets.

To give you a point of comparison, our model gets around 95% test accuracy in 40 epochs (and 99% train accuracy) with a mini batch size of 16 and "adam" optimizer. But our model gets decent accuracy after just 2-5 epochs, so if you're comparing different models you can also train a variety of models on just a few epochs and see how they compare.

If you have not yet achieved a very good accuracy (let's say more than 80%), here are some things you can play around with to try to achieve it:

- Try using blocks of CONV->BATCHNORM->RELU such as:

X = Conv2D(32, (7, 7), strides = (1, 1), name = 'conv0')(X)

X = BatchNormalization(axis = 3, name = 'bn0')(X)

X = Activation('relu')(X)

until your height and width dimensions are quite low and your number of channels quite large (≈32 for example). You are encoding useful information in a volume with a lot of channels.

- You can then flatten the volume and use a fully-connected layer.

- You can use MAXPOOL after such blocks. It will help you lower the dimension in height and width.

- Change your optimizer. We find Adam works well.

- If the model is struggling to run and you get memory issues, lower your batch_size (12 is usually a good compromise)

- Run on more epochs, until you see the train accuracy plateauing.

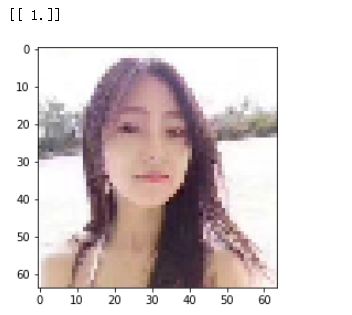

Test with your own image

img_path = 'images/hhh.png'

img = image.load_img(img_path, target_size=(64, 64))

imshow(img)

x = image.img_to_array(img)

x = np.expand_dims(x, axis=0)

x = preprocess_input(x)

print(happyModel.predict(x))
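One thing to watch for: the training images were scaled by dividing by 255, whereas preprocess_input applies ImageNet-style preprocessing, so the network may see inputs on a different scale at prediction time. Below is a minimal NumPy sketch of preprocessing that mirrors the training normalization instead. The helper names prepare_image_array and is_happy, the 0.5 threshold, and the reading of an output near 1 as "happy" are assumptions for illustration, and a random array stands in for a real photo.

```python
import numpy as np

def prepare_image_array(x):
    """Scale an (H, W, 3) array from [0, 255] to [0, 1] and add a batch axis."""
    x = np.asarray(x, dtype=np.float32) / 255.
    return np.expand_dims(x, axis=0)

def is_happy(probability, threshold=0.5):
    """Turn a sigmoid output into a yes/no decision (threshold is an assumption)."""
    return bool(probability > threshold)

# Stand-in for image.img_to_array(img): a random 64x64 RGB image.
fake_image = np.random.default_rng(0).integers(0, 256, size=(64, 64, 3))
batch = prepare_image_array(fake_image)

print("batch shape:", batch.shape)
print("value range:", batch.min(), batch.max())
```

With a trained model, the resulting batch could be passed to predict() in place of the preprocess_input output, and the returned probability handed to is_happy.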

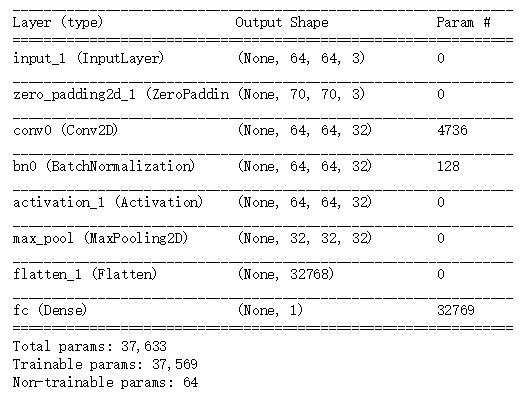

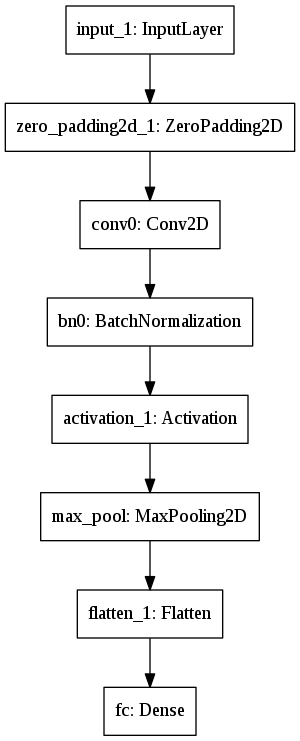

Other useful functions in Keras

Two other basic features of Keras that you'll find useful are:

- model.summary(): prints the details of your layers in a table with the sizes of its inputs/outputs

- plot_model(): plots your graph in a nice layout and saves it to the file given by to_file (here a ".png"), which is handy if you'd like to share it on social media ;). SVG() displays the graph inline in the notebook. You can find the saved file through "File" then "Open..." in the upper bar of the notebook.

happyModel.summary()

plot_model(happyModel, to_file='HappyModel.png')

SVG(model_to_dot(happyModel).create(prog='dot', format='svg'))