Raki's NLP Competition Topline Walkthrough: NBME - Score Clinical Patient Notes

Description

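When you visit a doctor, how they interpret your symptoms can determine whether your diagnosis is accurate. By the time they are licensed, physicians have had a lot of practice writing patient notes that document the history of the patient's complaint, physical exam findings, possible diagnoses, and follow-up care. Learning and assessing the skill of writing patient notes requires feedback from other doctors, a time-intensive process that could be improved with the addition of machine learning.

Until recently, the Step 2 Clinical Skills examination was one component of the United States Medical Licensing Examination® (USMLE®). The exam required test-takers to interact with Standardized Patients (people trained to portray specific clinical cases) and write a patient note. Trained physician raters later scored patient notes with rubrics that outlined each case's important concepts (referred to as features). The more such features found in a patient note, the higher the score (among other factors that contribute to the final score for the exam).

However, having physicians score patient note exams requires significant time, along with human and financial resources. Approaches using natural language processing have been created to address this problem, but patient notes can still be challenging to score computationally because features may be expressed in many ways. For example, the feature "loss of interest in activities" can be expressed as "no longer plays tennis". Other challenges include the need to map concepts by combining multiple text segments, or cases of ambiguous negation such as "no cold intolerance, hair loss, palpitations, or tremor", which corresponds to the key essential "lack of other thyroid symptoms".

In this competition, you will identify specific clinical concepts in patient notes. Specifically, you will develop an automated method to map clinical concepts from an exam rubric (e.g., "diminished appetite") to the various ways in which these concepts are expressed in clinical patient notes written by medical students (e.g., "eating less", "clothes fit looser"). Good solutions will be both accurate and reliable.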

Evaluation

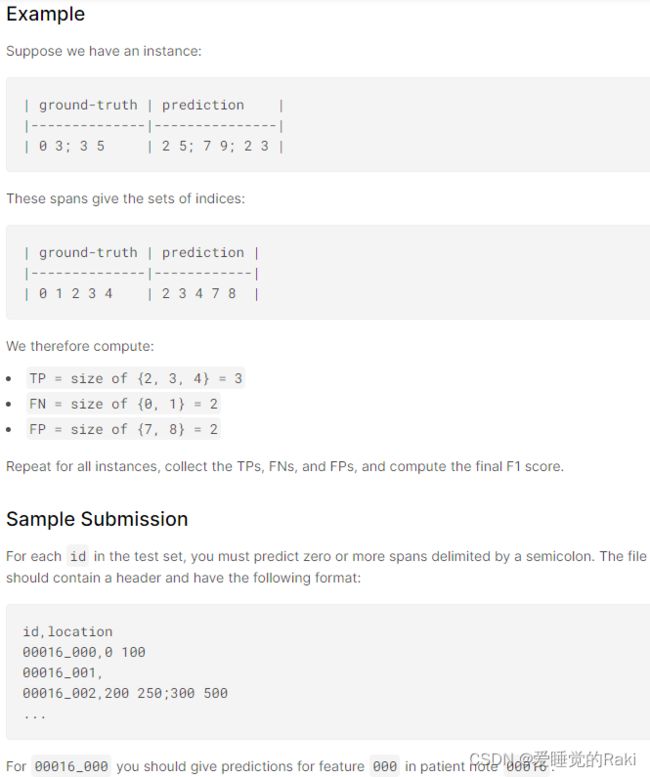

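The competition is evaluated by a micro-averaged F1 score.

For each instance, we predict a set of character spans. A character span is a pair of indexes representing a range of characters within a text. A span i j represents the characters with index i through j, inclusive of i and exclusive of j. In Python notation, a span i j is equivalent to the slice i:j.

For each instance there is a collection of ground-truth spans and a collection of predicted spans. Spans are delimited with a semicolon, for example: 0 3; 5 9.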
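To make the metric concrete, here is a minimal sketch of a character-level micro-averaged F1. It assumes that every character index covered by a span counts as one true positive, false positive, or false negative, and that these counts are summed over all instances before the F1 is computed. The function names (`parse_spans`, `spans_to_chars`, `micro_f1`) are illustrative and not part of the competition code.

```python
def parse_spans(s):
    """Parse '0 3; 5 9' into [(0, 3), (5, 9)]; an empty string gives []."""
    spans = []
    for part in s.split(";"):
        part = part.strip()
        if part:
            start, end = part.split()
            spans.append((int(start), int(end)))
    return spans


def spans_to_chars(spans):
    """Expand half-open spans into the set of character indices they cover."""
    chars = set()
    for start, end in spans:
        chars.update(range(start, end))
    return chars


def micro_f1(truths, preds):
    """Micro-averaged F1: TP/FP/FN are summed over all instances first."""
    tp = fp = fn = 0
    for truth, pred in zip(truths, preds):
        t = spans_to_chars(parse_spans(truth))
        p = spans_to_chars(parse_spans(pred))
        tp += len(t & p)
        fp += len(p - t)
        fn += len(t - p)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


truths = ["0 3; 5 9", ""]   # second instance has no annotated span
preds = ["0 2; 5 9", "1 2"]  # one missed character, one spurious character
score = micro_f1(truths, preds)
print(round(score, 4))
```

Because the counts are pooled before dividing, long annotations weigh more than short ones, and a spurious prediction on an instance with no annotation still costs precision.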

Data Description

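The text data presented here comes from the USMLE® Step 2 Clinical Skills examination, a medical licensure exam. The exam measures a trainee's ability to recognize pertinent clinical facts during encounters with standardized patients.

During this exam, each test taker sees a Standardized Patient, a person trained to portray a clinical case. After interacting with the patient, the test taker documents the relevant facts of the encounter in a patient note. Each patient note is scored by a trained physician who looks for certain key concepts or features relevant to the case, as described in a rubric. The goal of this competition is to develop an automated way of identifying the relevant features within each patient note, with a special focus on the patient history portion of the notes, where the information from the interview with the standardized patient is documented.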

Important Terms

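- Clinical Case: The scenario (e.g., symptoms, complaints, concerns) the Standardized Patient presents to the test taker (medical student, resident, or physician). Ten clinical cases are represented in this dataset.

- Patient Note: Text detailing important information related by the patient during the encounter (physical exam and interview).

- Feature: A clinically relevant concept. A rubric describes the key concepts relevant to each case.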

Training Data

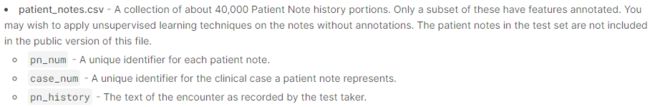

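patient_notes.csv - A collection of about 40,000 patient note history portions. Only a subset of these have features annotated. You may wish to apply unsupervised learning techniques to the notes without annotations. The patient notes in the test set are not included in the public version of this file.

features.csv - The rubric of features (or key concepts) for each clinical case.

- feature_num - A unique identifier for each feature.

- case_num - A unique identifier for each case.

- feature_text - A description of the feature.

train.csv - Feature annotations for 1000 of the patient notes, 100 for each of the ten cases.

- id - A unique identifier for each patient note / feature pair.

- pn_num - The patient note annotated in this row.

- feature_num - The feature annotated in this row.

- case_num - The case to which this patient note belongs.

- annotation - The text(s) within a patient note indicating a feature. A feature may be indicated multiple times within a single note.

- location - Character spans indicating the location of each annotation within the note. Multiple spans may be needed to represent one annotation, in which case the spans are delimited by a semicolon (;).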
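As a small illustration of the location column, the sketch below parses one cell into integer spans and slices the note to recover the annotated text. It assumes each cell is stored as the string form of a Python list (for example "['11 21', '26 34']"), which is why `ast.literal_eval` is used. The helper name `parse_location` and the sample note are made up for this example.

```python
import ast


def parse_location(location):
    """Turn a location cell such as "['3 8', '12 16;20 24']" into (start, end) tuples."""
    spans = []
    for entry in ast.literal_eval(location):
        # One annotation may be split into several spans separated by ';'
        for piece in entry.split(";"):
            start, end = piece.split()
            spans.append((int(start), int(end)))
    return spans


note = "Pt reports chest pain and no fever"  # made-up note text
location = "['11 21', '26 34']"              # assumed cell format
spans = parse_location(location)
snippets = [note[start:end] for start, end in spans]
print(spans, snippets)
```

Since spans are half-open, slicing the note with `note[start:end]` returns exactly the annotated characters.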

Example Test Data

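To help you author submission code, we include a few example instances selected from the training set. When your submitted notebook is scored, this example data will be replaced by the actual test data. The patient notes in the test set will be added to the patient_notes.csv file. These patient notes come from the same clinical cases as the patient notes in the training set. There are approximately 2000 patient notes in the test set.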

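Understanding the Task and the Dataset

The first thing to understand is that this is a fine-grained NER task. There are 10 broad disease categories (the clinical cases), and for each span we decide which feature of its case it expresses.

patient_notes contains the free-text description of each patient's condition.

Our job is to extract spans from these descriptions and identify which feature each one expresses. Note that the spans are character-level, not token-level.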

RoBERTa

Paper: "RoBERTa: A Robustly Optimized BERT Pretraining Approach"

Authors/affiliations: Facebook + University of Washington

Paper link: https://arxiv.org/pdf/1907.11692

Date: July 2019

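RoBERTa improves on BERT's training procedure in four main respects: a different masking strategy, dropping the NSP task, tuned training hyperparameters, and a larger training corpus. The changes are as follows:

From static masking to dynamic masking

In the original BERT, the 15% of tokens chosen for masking are selected at random once, during preprocessing, and then stay the same for the following N epochs. This is called static masking.

The static baseline in the RoBERTa paper duplicates the pretraining data 10 times and masks each copy with its own random 15% of tokens, so the same sentence gets 10 different masks and each copy is trained on for N/10 epochs. Dynamic masking, which RoBERTa adopts, goes one step further and generates a new mask every time a sequence is fed to the model, so the masked tokens of a sequence keep changing over the N epochs.

The motivation: dynamic masking indirectly increases the amount of training data, which can help model performance.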
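The idea that the mask is re-drawn rather than fixed can be sketched in a few lines. This is a simplified illustration, not RoBERTa's actual data pipeline: it always substitutes the mask token (BERT's 80/10/10 replacement rule is omitted), and `MASK_ID`, `dynamic_mask`, and the dummy token ids are assumptions made for the example.

```python
import random

MASK_ID = 4  # hypothetical id of the mask token


def dynamic_mask(token_ids, mask_prob=0.15, rng=random):
    """Return a freshly masked copy of token_ids plus the masked positions."""
    n_to_mask = max(1, round(len(token_ids) * mask_prob))
    positions = sorted(rng.sample(range(len(token_ids)), n_to_mask))
    masked = list(token_ids)  # copy, so the original sequence stays intact
    for pos in positions:
        masked[pos] = MASK_ID
    return masked, positions


sequence = list(range(100, 120))  # 20 dummy token ids
rng = random.Random(0)
for epoch in range(3):
    # A new mask is sampled each time the sequence is served to the model
    masked, positions = dynamic_mask(sequence, rng=rng)
    print(epoch, positions)
```

With static masking, `dynamic_mask` would be called once during preprocessing and its output reused in every epoch.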

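Removing the NSP task

To capture relationships between sentences, BERT is pretrained with the NSP task: given a pair of segments A and B, predict whether B actually follows A. The combined length of the two segments is at most 512 tokens.

RoBERTa drops NSP and instead fills each input with consecutive full sentences up to the maximum length of 512 tokens (inputs may cross document boundaries). This input format is called FULL-SENTENCES, whereas the original BERT takes one pair of segments per input.

The motivation: experiments show that removing the NSP loss matches or slightly improves downstream performance compared with the original BERT. One possible explanation is that when inputs are built from single sentences the model cannot learn long-range dependencies, whereas RoBERTa's inputs of multiple consecutive sentences let it capture longer dependencies, which helps downstream tasks with long sequences.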
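The FULL-SENTENCES input format amounts to greedy packing of consecutive sentences. The sketch below is an assumption-laden illustration: sentences are already tokenized lists of ids, special tokens and document separators are left out, and `pack_full_sentences` is a hypothetical helper name.

```python
def pack_full_sentences(sentences, max_tokens=512):
    """Greedily pack consecutive tokenized sentences into inputs of at most max_tokens."""
    inputs, current = [], []
    for sentence in sentences:
        sentence = sentence[:max_tokens]  # an over-long sentence is truncated in this sketch
        if current and len(current) + len(sentence) > max_tokens:
            inputs.append(current)  # the next sentence would overflow: start a new input
            current = []
        current = current + sentence
    if current:
        inputs.append(current)
    return inputs


sentences = [[1] * 200, [2] * 200, [3] * 200, [4] * 50]  # dummy token ids
packed = pack_full_sentences(sentences)
print([len(p) for p in packed])
```

Each packed input holds as many whole sentences as fit, so most inputs are close to the maximum length instead of containing a single short pair.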

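Larger mini-batches

BERT-base is trained with a batch size of 256 for 1M steps. RoBERTa uses a batch size of 8K.

The motivation: the authors borrow from training practice in machine translation, where larger batch sizes combined with larger learning rates improve both optimization speed and final performance, and they show experimentally that BERT also benefits from larger batches.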

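More training data, longer training

RoBERTa (160GB) uses 10 times more data than BERT (16GB), and performance jumps again. Of course, this has to be paired with longer training.

The motivation: more training data clearly increases diversity (vocabulary, syntactic structures, grammatical structures, and so on), which in turn improves model performance.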

Roberta Strikes Back !

Initialization

import

import os  # standard-library module for operating-system interfaces
import re  # regular expressions
import ast  # Abstract Syntax Trees
import json  # reading and writing JSON
import glob  # finds files and directories matching a pattern and returns them as a list
import numpy as np
import pandas as pd
from tqdm.notebook import tqdm

os.environ["TOKENIZERS_PARALLELISM"] = "false"

Paths

How should batch size and num_workers be set in PyTorch to avoid running out of GPU memory and to speed up experiments?

How do the batch and num_workers parameters of the PyTorch DataLoader work?

DATA_PATH = "../input/nbme-score-clinical-patient-notes/"
OUT_PATH = "../input/nbme-roberta-large/"
WEIGHTS_FOLDER = "../input/nbme-roberta-large/"

NUM_WORKERS = 2

Data

Preparation

Clean the text with regular expressions

def process_feature_text(text):  # normalize a few notations with regular expressions
    text = re.sub('I-year', '1-year', text)
    text = re.sub('-OR-', " or ", text)
    text = re.sub('-', ' ', text)
    return text


def clean_spaces(txt):  # replace the various whitespace characters with plain spaces
    txt = re.sub('\n', ' ', txt)
    txt = re.sub('\t', ' ', txt)
    txt = re.sub('\r', ' ', txt)
    # txt = re.sub(r'\s+', ' ', txt)
    return txt


def load_and_prepare_test(root=""):
    patient_notes = pd.read_csv(root + "patient_notes.csv")
    features = pd.read_csv(root + "features.csv")
    df = pd.read_csv(root + "test.csv")

    df = df.merge(features, how="left", on=["case_num", "feature_num"])  # left join
    df = df.merge(patient_notes, how="left", on=['case_num', 'pn_num'])

    df['pn_history'] = df['pn_history'].apply(lambda x: x.strip())  # strip leading/trailing whitespace
    df['feature_text'] = df['feature_text'].apply(process_feature_text)

    df['feature_text'] = df['feature_text'].apply(clean_spaces)
    df['clean_text'] = df['pn_history'].apply(clean_spaces)

    df['target'] = ""
    return df

Processing

import itertools


def token_pred_to_char_pred(token_pred, offsets):  # expand token-level predictions to character level
    char_pred = np.zeros((np.max(offsets), token_pred.shape[1]))
    for i in range(len(token_pred)):
        s, e = int(offsets[i][0]), int(offsets[i][1])  # start, end
        char_pred[s:e] = token_pred[i]

        if token_pred.shape[1] == 3:  # following characters cannot be tagged as start
            s += 1
            char_pred[s: e, 1], char_pred[s: e, 2] = (
                np.max(char_pred[s: e, 1:], 1),
                np.min(char_pred[s: e, 1:], 1),
            )

    return char_pred


def labels_to_sub(labels):  # per-character labels to submission strings
    all_spans = []
    for label in labels:
        indices = np.where(label > 0)[0]
        # index minus running count is constant within a run of consecutive indices
        indices_grouped = [
            list(g) for _, g in itertools.groupby(
                indices, key=lambda n, c=itertools.count(): n - next(c)
            )
        ]

        spans = [f"{min(r)} {max(r) + 1}" for r in indices_grouped]
        all_spans.append(";".join(spans))
    return all_spans


def char_target_to_span(char_target):  # per-character predictions to [start, end] spans
    spans = []
    start, end = 0, 0
    for i in range(len(char_target)):
        if char_target[i] == 1 and char_target[i - 1] == 0:
            if end:
                spans.append([start, end])
            start = i
            end = i + 1
        elif char_target[i] == 1:
            end = i + 1
        else:
            if end:
                spans.append([start, end])
            start, end = 0, 0
    if end:  # close a span that runs up to the last character
        spans.append([start, end])
    return spans
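The grouping trick used in labels_to_sub is easy to miss, so here is a standalone sketch of it in pure Python. Within a run of consecutive indices, the index minus its rank in the list is constant, so `itertools.groupby` on that difference splits the indices into runs. The function name `runs_to_location` is illustrative and is not part of the notebook.

```python
import itertools


def runs_to_location(char_flags):
    """Group consecutive positive positions into 'start end' spans joined by ';'."""
    indices = [i for i, flag in enumerate(char_flags) if flag > 0]
    counter = itertools.count()
    # index - running count stays constant inside a run of consecutive indices
    groups = [
        list(group)
        for _, group in itertools.groupby(indices, key=lambda n: n - next(counter))
    ]
    # spans are half-open, hence the + 1 on the last index of each run
    return ";".join(f"{g[0]} {g[-1] + 1}" for g in groups)


flags = [0, 1, 1, 1, 0, 0, 1, 1, 0]
location = runs_to_location(flags)
print(location)  # 1 4;6 8
```

An all-zero input yields an empty string, which corresponds to predicting no span for that row.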

Tokenization

Tokenizer

import numpy as np
from transformers import AutoTokenizer


def get_tokenizer(name, precompute=False, df=None, folder=None):
    if folder is None:  # no saved tokenizer folder: load one by model name
        tokenizer = AutoTokenizer.from_pretrained(name)
    else:
        tokenizer = AutoTokenizer.from_pretrained(folder)

    tokenizer.name = name
    tokenizer.special_tokens = {
        "sep": tokenizer.sep_token_id,
        "cls": tokenizer.cls_token_id,
        "pad": tokenizer.pad_token_id,
    }

    if precompute:
        tokenizer.precomputed = precompute_tokens(df, tokenizer)
    else:
        tokenizer.precomputed = None

    return tokenizer


def precompute_tokens(df, tokenizer):
    feature_texts = df["feature_text"].unique()

    ids = {}
    offsets = {}

    for feature_text in feature_texts:
        encoding = tokenizer(
            feature_text,
            return_token_type_ids=True,
            return_offsets_mapping=True,
            return_attention_mask=False,
            add_special_tokens=False,
        )
        ids[feature_text] = encoding["input_ids"]
        offsets[feature_text] = encoding["offset_mapping"]

    texts = df["clean_text"].unique()

    for text in texts:
        encoding = tokenizer(
            text,
            return_token_type_ids=True,
            return_offsets_mapping=True,
            return_attention_mask=False,
            add_special_tokens=False,
        )
        ids[text] = encoding["input_ids"]
        offsets[text] = encoding["offset_mapping"]

    return {"ids": ids, "offsets": offsets}


def encodings_from_precomputed(feature_text, text, precomputed, tokenizer, max_len=300):
    tokens = tokenizer.special_tokens

    # Input ids
    if "roberta" in tokenizer.name:
        qa_sep = [tokens["sep"], tokens["sep"]]
    else:
        qa_sep = [tokens["sep"]]

    input_ids = [tokens["cls"]] + precomputed["ids"][feature_text] + qa_sep
    n_question_tokens = len(input_ids)

    input_ids += precomputed["ids"][text]
    input_ids = input_ids[: max_len - 1] + [tokens["sep"]]

    # Token type ids
    if "roberta" not in tokenizer.name:
        token_type_ids = np.ones(len(input_ids))
        token_type_ids[:n_question_tokens] = 0
        token_type_ids = token_type_ids.tolist()
    else:
        token_type_ids = [0] * len(input_ids)

    # Offsets
    offsets = [(0, 0)] * n_question_tokens + precomputed["offsets"][text]
    offsets = offsets[: max_len - 1] + [(0, 0)]

    # Padding
    padding_length = max_len - len(input_ids)
    if padding_length > 0:
        input_ids = input_ids + ([tokens["pad"]] * padding_length)
        token_type_ids = token_type_ids + ([0] * padding_length)
        offsets = offsets + ([(0, 0)] * padding_length)

    encoding = {
        "input_ids": input_ids,
        "token_type_ids": token_type_ids,
        "offset_mapping": offsets,
    }

    return encoding
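To see what encodings_from_precomputed produces, the sketch below rebuilds the same input layout with made-up token ids and no tokenizer: [CLS] feature [SEP][SEP] note [SEP], followed by padding. The special-token ids, the helper name `build_pair`, and the sample ids and offsets are assumptions for illustration, and token_type_ids are left out.

```python
CLS, SEP, PAD = 0, 2, 1  # illustrative special-token ids


def build_pair(question_ids, text_ids, text_offsets, max_len):
    """Assemble [CLS] question [SEP][SEP] text [SEP] and pad to max_len."""
    input_ids = [CLS] + question_ids + [SEP, SEP]
    n_question = len(input_ids)
    # Truncate the note so that the final [SEP] still fits
    input_ids = (input_ids + text_ids)[: max_len - 1] + [SEP]
    # Question and special tokens get (0, 0) so they never map to note characters
    offsets = ([(0, 0)] * n_question + text_offsets)[: max_len - 1] + [(0, 0)]
    pad = max_len - len(input_ids)
    input_ids += [PAD] * pad
    offsets += [(0, 0)] * pad
    return input_ids, offsets


ids, offsets = build_pair(
    question_ids=[11, 12],
    text_ids=[21, 22, 23],
    text_offsets=[(0, 2), (3, 7), (8, 12)],
    max_len=10,
)
print(ids)
print(offsets)
```

Only note tokens keep real offsets, so when token predictions are later projected back to characters, feature-text tokens and padding contribute nothing.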

Dataset

A map-style Dataset subclass typically defines three methods: __init__, __getitem__, and __len__.

import torch
import numpy as np
from torch.utils.data import Dataset


class PatientNoteDataset(Dataset):
    def __init__(self, df, tokenizer, max_len):
        self.df = df
        self.max_len = max_len
        self.tokenizer = tokenizer

        self.texts = df['clean_text'].values
        self.feature_text = df['feature_text'].values
        self.char_targets = df['target'].values.tolist()

    def __getitem__(self, idx):
        text = self.texts[idx]
        feature_text = self.feature_text[idx]
        char_target = self.char_targets[idx]

        # Tokenize
        if self.tokenizer.precomputed is None:
            encoding = self.tokenizer(
                feature_text,
                text,
                return_token_type_ids=True,
                return_offsets_mapping=True,
                return_attention_mask=False,
                truncation="only_second",
                max_length=self.max_len,
                padding='max_length',
            )
            raise NotImplementedError("fix issues with question offsets")
        else:
            encoding = encodings_from_precomputed(
                feature_text,
                text,
                self.tokenizer.precomputed,
                self.tokenizer,
                max_len=self.max_len
            )

        return {
            "ids": torch.tensor(encoding["input_ids"], dtype=torch.long),
            "token_type_ids": torch.tensor(encoding["token_type_ids"], dtype=torch.long),
            "target": torch.tensor([0], dtype=torch.float),
            "offsets": np.array(encoding["offset_mapping"]),
            "text": text,
        }

    def __len__(self):
        return len(self.texts)

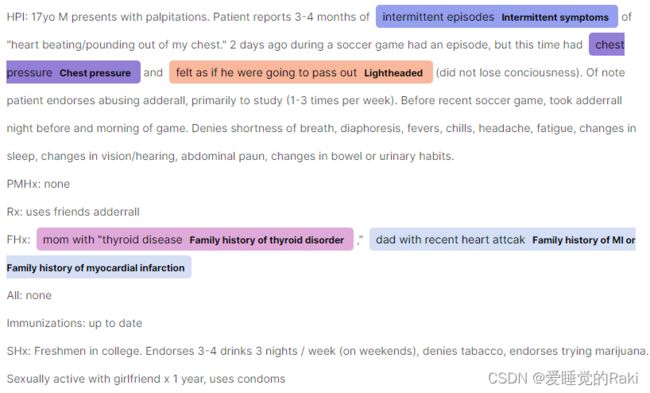

Plot predictions

Color the predicted spans

import spacy
import numpy as np


def plot_annotation(df, pn_num):
    options = {"colors": {}}

    df_text = df[df["pn_num"] == pn_num].reset_index(drop=True)

    text = df_text["pn_history"][0]
    ents = []

    for spans, feature_text, feature_num in df_text[["span", "feature_text", "feature_num"]].values:
        for s in spans:
            ents.append({"start": int(s[0]), "end": int(s[1]), "label": feature_text})

        # one random light color per feature
        options["colors"][feature_text] = f"rgb{tuple(np.random.randint(100, 255, size=3))}"

    doc = {"text": text, "ents": sorted(ents, key=lambda i: i["start"])}

    spacy.displacy.render(doc, style="ent", options=options, manual=True, jupyter=True)

Model

import torch
import transformers
import torch.nn as nn
from transformers import AutoConfig, AutoModel


class NERTransformer(nn.Module):
    def __init__(
        self,
        model,
        num_classes=1,
        config_file=None,
        pretrained=True,
    ):
        super().__init__()
        self.name = model
        self.pad_idx = 1 if "roberta" in self.name else 0

        transformers.logging.set_verbosity_error()

        if config_file is None:
            config = AutoConfig.from_pretrained(model, output_hidden_states=True)
        else:
            config = torch.load(config_file)

        if pretrained:
            self.transformer = AutoModel.from_pretrained(model, config=config)
        else:
            self.transformer = AutoModel.from_config(config)

        self.nb_features = config.hidden_size

        # self.cnn = nn.Identity()
        self.logits = nn.Linear(self.nb_features, num_classes)

    def forward(self, tokens, token_type_ids):
        """
        Usual torch forward function

        Arguments:
            tokens {torch tensor} -- Sentence tokens
            token_type_ids {torch tensor} -- Sentence tokens ids
        """
        hidden_states = self.transformer(
            tokens,
            attention_mask=(tokens != self.pad_idx).long(),
            token_type_ids=token_type_ids,
        )[-1]

        features = hidden_states[-1]

        logits = self.logits(features)

        return logits

Inference

Loads weights

import torch


def load_model_weights(model, filename, verbose=1, cp_folder="", strict=True):
    """
    Loads the weights of a PyTorch model. The exception handles cpu/gpu incompatibilities.

    Args:
        model (torch model): Model to load the weights to.
        filename (str): Name of the checkpoint.
        verbose (int, optional): Whether to display infos. Defaults to 1.
        cp_folder (str, optional): Folder to load from. Defaults to "".
        strict (bool, optional): Whether to require an exact match of keys. Defaults to True.

    Returns:
        torch model: Model with loaded weights.
    """
    if verbose:
        print(f"\n -> Loading weights from {os.path.join(cp_folder,filename)}\n")

    try:
        model.load_state_dict(
            torch.load(os.path.join(cp_folder, filename), map_location="cpu"),
            strict=strict,
        )
    except RuntimeError:
        # Fallback kept from the original notebook. NERTransformer defines neither
        # `encoder` nor `nb_ft`, so this branch would raise AttributeError if reached.
        model.encoder.fc = torch.nn.Linear(model.nb_ft, 1)
        model.load_state_dict(
            torch.load(os.path.join(cp_folder, filename), map_location="cpu"),
            strict=strict,
        )

    return model

Predict

import torch
from torch.utils.data import DataLoader
from tqdm.notebook import tqdm


def predict(model, dataset, data_config, activation="softmax"):
    """
    Usual predict torch function
    """
    model.eval()

    loader = DataLoader(
        dataset,
        batch_size=data_config['val_bs'],
        shuffle=False,
        num_workers=NUM_WORKERS,
        pin_memory=True,
    )

    preds = []
    with torch.no_grad():
        for data in tqdm(loader):
            ids, token_type_ids = data["ids"], data["token_type_ids"]

            y_pred = model(ids.cuda(), token_type_ids.cuda())

            if activation == "sigmoid":
                y_pred = y_pred.sigmoid()
            elif activation == "softmax":
                y_pred = y_pred.softmax(-1)

            preds += [
                token_pred_to_char_pred(y, offsets) for y, offsets
                in zip(y_pred.detach().cpu().numpy(), data["offsets"].numpy())
            ]

    return preds

Inference

def inference_test(df, exp_folder, config, cfg_folder=None):
    preds = []

    if cfg_folder is not None:
        model_config_file = cfg_folder + config.name.split('/')[-1] + "/config.pth"
        tokenizer_folder = cfg_folder + config.name.split('/')[-1] + "/tokenizers/"
    else:
        model_config_file, tokenizer_folder = None, None

    tokenizer = get_tokenizer(
        config.name, precompute=config.precompute_tokens, df=df, folder=tokenizer_folder
    )

    dataset = PatientNoteDataset(
        df,
        tokenizer,
        max_len=config.max_len,
    )

    model = NERTransformer(
        config.name,
        num_classes=config.num_classes,
        config_file=model_config_file,
        pretrained=False
    ).cuda()
    model.zero_grad()

    weights = sorted(glob.glob(exp_folder + "*.pt"))
    for weight in weights:
        model = load_model_weights(model, weight)

        pred = predict(
            model,
            dataset,
            data_config=config.data_config,
            activation=config.loss_config["activation"]
        )
        preds.append(pred)

    return preds

Main

Config

class Config:
    # Architecture
    name = "roberta-large"
    num_classes = 1

    # Texts
    max_len = 310
    precompute_tokens = True

    # Training
    loss_config = {
        "activation": "sigmoid",
    }

    data_config = {
        "val_bs": 16 if "large" in name else 32,
        "pad_token": 1 if "roberta" in name else 0,
    }

    verbose = 1

Data

Inference
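The cells under the Data and Inference headings are not reproduced in this write-up, yet the later cells read a preds column from df_test. As a hedged sketch only, and not the notebook's actual code, per-fold character probabilities such as those returned by inference_test could be averaged and thresholded as follows. The function name `average_and_threshold`, the 0.5 threshold, and the sample probabilities are all assumptions.

```python
def average_and_threshold(fold_preds, threshold=0.5):
    """Average per-character probabilities across folds, then binarize."""
    n_folds = len(fold_preds)
    # Folds may differ in length; keep only the common prefix in this sketch
    length = min(len(p) for p in fold_preds)
    mean = [sum(p[i] for p in fold_preds) / n_folds for i in range(length)]
    return [1 if m > threshold else 0 for m in mean]


fold_a = [0.9, 0.8, 0.2, 0.1]  # made-up probabilities from one checkpoint
fold_b = [0.7, 0.4, 0.4, 0.3]  # made-up probabilities from another checkpoint
binary = average_and_threshold([fold_a, fold_b])
print(binary)
```

The resulting 0/1 vector has the shape that char_target_to_span and post_process_spaces expect as input.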

Plot predictions

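try:
    df_test['span'] = df_test['preds'].apply(char_target_to_span)
    plot_annotation(df_test, df_test['pn_num'][0])
except Exception:  # plotting is optional, so failures are ignored
    pass

Unlike with DeBERTa, spaces are not included in the offsets, so we need to add them back manually; otherwise performance suffers.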

Post-processing

def post_process_spaces(target, text):
    target = np.copy(target)

    # Align the prediction length with the text length
    if len(text) > len(target):
        padding = np.zeros(len(text) - len(target))
        target = np.concatenate([target, padding])
    else:
        target = target[:len(text)]

    if text[0] == " ":
        target[0] = 0
    if text[-1] == " ":
        target[-1] = 0

    for i in range(1, len(text) - 1):
        if text[i] == " ":
            if target[i] and not target[i - 1]:  # space before
                target[i] = 0

            if target[i] and not target[i + 1]:  # space after
                target[i] = 0

            if target[i - 1] and target[i + 1]:  # space between two tagged characters
                target[i] = 1

    return target


df_test['preds_pp'] = df_test.apply(lambda x: post_process_spaces(x['preds'], x['clean_text']), 1)

try:
    df_test['span'] = df_test['preds_pp'].apply(char_target_to_span)
    plot_annotation(df_test, df_test['pn_num'][0])
except Exception:  # plotting is optional, so failures are ignored
    pass
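The effect of the three space rules is easiest to see on a tiny example. The sketch below re-implements them on plain Python lists (no NumPy) purely for illustration; `fix_spaces`, the sample text, and the flags are made up.

```python
def fix_spaces(flags, text):
    """Apply the same three space rules to a list of 0/1 character flags."""
    # Pad or truncate the flags to the length of the text
    flags = list(flags[: len(text)]) + [0] * max(0, len(text) - len(flags))
    if text[0] == " ":
        flags[0] = 0
    if text[-1] == " ":
        flags[-1] = 0
    for i in range(1, len(text) - 1):
        if text[i] == " ":
            if flags[i] and not flags[i - 1]:  # leading space of a span
                flags[i] = 0
            if flags[i] and not flags[i + 1]:  # trailing space of a span
                flags[i] = 0
            if flags[i - 1] and flags[i + 1]:  # gap between two tagged words
                flags[i] = 1
    return flags


text = "no chest pain"
# "chest" and "pain" are tagged, the space between them is not,
# and the space before "chest" was wrongly tagged.
flags = [0, 0, 1, 1, 1, 1, 1, 1, 0, 1, 1, 1, 1]
fixed = fix_spaces(flags, text)
print(fixed)
```

After the fix, the leading space is dropped and the inner space is filled in, so the two words form the single span 3 13 instead of two fragments.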

Submission

df_test['location'] = labels_to_sub(df_test['preds_pp'].values)

sub = pd.read_csv(DATA_PATH + 'sample_submission.csv')
sub = sub[['id']].merge(df_test[['id', "location"]], how="left", on="id")

sub.to_csv('submission.csv', index=False)
sub.head()