Learning AI with Li Mu: Multilayer Perceptron

Study notes based on Li Mu's course.

Implementing a multilayer perceptron from scratch

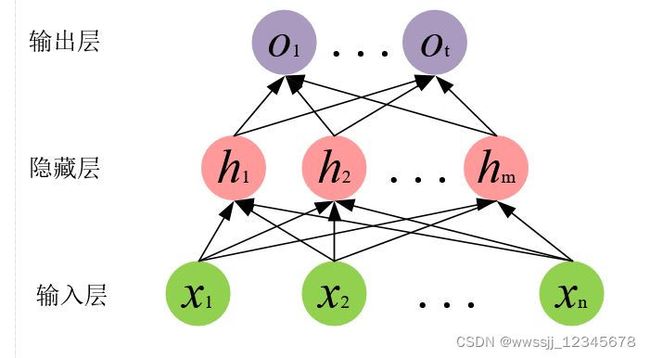

这里使用Fashion-MNIST图像分类数据集,用一包含一个隐藏层的多层感知机进行简单的分类训练。多层感知机的结构如下图所示。

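- Input layer: the images in the dataset. Each image is 28×28 pixels (grayscale, a single channel), so the input can be viewed as the pixels of the image flattened into a one-dimensional tensor, i.e. n = 28 × 28 = 784.

- Hidden layer: its size is set to m = 256.

- Output layer: the dataset has 10 classes, so the output layer has t = 10 units.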
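The layer sizes above fix the shape of every parameter in the network. As a quick sanity check, here is a minimal sketch in plain Python (no PyTorch needed; the helper `numel` and the dictionary names are chosen for illustration only) that works out how many trainable values the model has:

```python
# Layer sizes taken from the description above.
n, m, t = 28 * 28, 256, 10  # inputs, hidden units, outputs

# Each fully connected layer has a weight matrix plus a bias vector.
shapes = {
    "w1": (n, m),
    "b1": (m,),
    "w2": (m, t),
    "b2": (t,),
}

def numel(shape):
    """Number of scalar entries in a tensor of the given shape."""
    count = 1
    for dim in shape:
        count *= dim
    return count

counts = {name: numel(shape) for name, shape in shapes.items()}
total = sum(counts.values())

print(n)       # 784
print(counts)  # {'w1': 200704, 'b1': 256, 'w2': 2560, 'b2': 10}
print(total)   # 203530
```

Almost all of the parameters sit in the first weight matrix, which is a direct consequence of flattening each image into 784 inputs.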

The code is as follows:

import torch
from torch import nn
from d2l import torch as d2l

batch_size = 256
# Load the Fashion-MNIST training and test iterators from the d2l library
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

num_inputs, num_outputs, num_hiddens = 784, 10, 256

# Initialize the parameters of the linear layers; the shapes must match the layer sizes
w1 = nn.Parameter(torch.randn(num_inputs, num_hiddens, requires_grad=True) * 0.01)
b1 = nn.Parameter(torch.zeros(num_hiddens, requires_grad=True))
w2 = nn.Parameter(torch.randn(num_hiddens, num_outputs, requires_grad=True) * 0.01)
b2 = nn.Parameter(torch.zeros(num_outputs, requires_grad=True))
params = [w1, b1, w2, b2]

def relu(x):
    # Element-wise max(x, 0)
    a = torch.zeros_like(x)
    return torch.max(x, a)

def net(x):
    x = x.reshape((-1, num_inputs))  # flatten each image into a vector
    H = relu(x @ w1 + b1)  # first linear layer followed by ReLU
    return H @ w2 + b2  # second linear layer

loss = nn.CrossEntropyLoss(reduction='none')  # cross-entropy loss, one value per sample

num_epochs, lr = 10, 0.1
updater = torch.optim.SGD(params, lr=lr)  # optimize with SGD

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater)  # train
d2l.predict_ch3(net, test_iter)  # predict
d2l.plt.show()
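To make the forward pass and the loss concrete, the following is a minimal sketch for a single sample, written in plain Python with lists instead of tensors. The toy sizes and weight values are hypothetical and chosen only so the arithmetic is easy to follow. It mirrors the two linear layers with ReLU in between, and computes softmax cross-entropy from the raw outputs, which is what `nn.CrossEntropyLoss` does internally. With `reduction='none'` the loss is returned per sample rather than averaged over the batch.

```python
import math

def relu(values):
    """Element-wise max(x, 0)."""
    return [max(v, 0.0) for v in values]

def linear(x, w, b):
    """Compute x @ w + b for a vector x and a matrix w stored as a list of rows."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) + b[j]
            for j in range(len(b))]

def cross_entropy(logits, label):
    """Softmax cross-entropy for one sample: -log softmax(logits)[label]."""
    peak = max(logits)  # subtract the max for numerical stability
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[label]

# Toy sizes (hypothetical): 3 inputs, 2 hidden units, 2 classes.
x = [1.0, -2.0, 0.5]
w1 = [[0.5, -1.0], [0.25, 0.5], [1.0, 2.0]]
b1 = [0.0, 0.0]
w2 = [[1.0, -1.0], [0.5, 0.5]]
b2 = [0.0, 0.0]

hidden = relu(linear(x, w1, b1))   # ReLU zeroes the negative pre-activation
logits = linear(hidden, w2, b2)    # raw scores, no softmax applied here
loss = cross_entropy(logits, label=0)

print(hidden)          # [0.5, 0.0]
print(logits)          # [0.5, -0.5]
print(round(loss, 4))  # 0.3133
```

The second hidden unit has a pre-activation of -1.0, so ReLU clips it to zero and it contributes nothing to the output.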

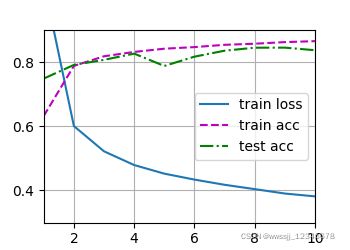

得到的运行的结果如下:

The same model can also be implemented more concisely with the modules that PyTorch already provides:

import torch
from torch import nn
from d2l import torch as d2l

def init_weights(m):
    # Only the linear layers carry weights that need initializing
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

def main():
    batch_size, lr, num_epochs = 256, 0.1, 20
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

    # Flatten -> Linear(784, 256) -> ReLU -> Linear(256, 10)
    net = nn.Sequential(nn.Flatten(), nn.Linear(784, 256), nn.ReLU(), nn.Linear(256, 10))
    net.apply(init_weights)

    loss = nn.CrossEntropyLoss(reduction='none')
    trainer = torch.optim.SGD(net.parameters(), lr=lr)

    # Train on the training set and evaluate on the test set
    d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)
    d2l.plt.show()

if __name__ == "__main__":
    main()
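A function passed to `net.apply` is called on every submodule, so the initializer has to pick out the layers that actually carry weights. `type(m) == C` is an exact-type comparison: it matches only direct instances of `C`, never instances of its subclasses. That is why the check has to name the concrete layer class. The sketch below uses hypothetical stand-in classes (not PyTorch's own) to show the difference:

```python
class Module:
    """Hypothetical stand-in for a base layer class."""

class Linear(Module):
    """Hypothetical stand-in for a concrete layer that has a weight."""
    def __init__(self):
        self.weight = [0.0]

class ReLU(Module):
    """Hypothetical stand-in for a parameter-free layer."""

layers = [Linear(), ReLU(), Linear()]

# Exact comparison against the base class matches nothing.
exact_base = [type(layer) == Module for layer in layers]
# Exact comparison against the concrete class selects only those layers.
exact_linear = [type(layer) == Linear for layer in layers]
# isinstance also accepts subclasses, so every layer matches the base class.
is_module = [isinstance(layer, Module) for layer in layers]

print(exact_base)    # [False, False, False]
print(exact_linear)  # [True, False, True]
print(is_module)     # [True, True, True]
```

An exact check against the base class would silently skip every layer, and an `isinstance` check against it would also select layers without a `weight` attribute.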