8. Multi-class classification

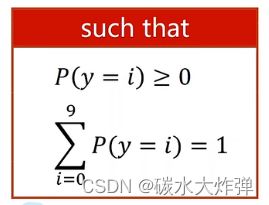

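(1) In handwritten digit recognition, every sample must be assigned to one of 10 classes. The 10 class probabilities must sum to 1, and each must be greater than or equal to 0. The softmax function is introduced to meet both conditions: it outputs a distribution in which every value is non-negative and all values sum to 1.

(2) For multi-class problems, the earlier layers keep an ordinary activation function such as sigmoid, and only the final layer uses softmax. (The code in (6) uses ReLU for the hidden layers.)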

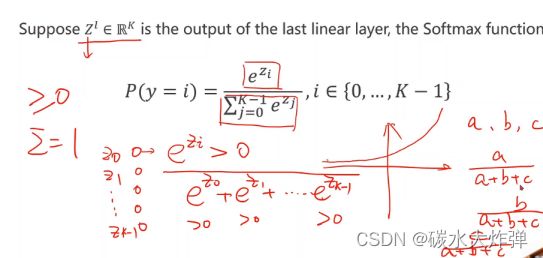

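The softmax function: exponentiating each raw output makes every value greater than 0, and dividing by the sum of all the exponentials makes the k class outputs sum to 1, that is, P(y = i) = exp(z_i) / Σ_j exp(z_j).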

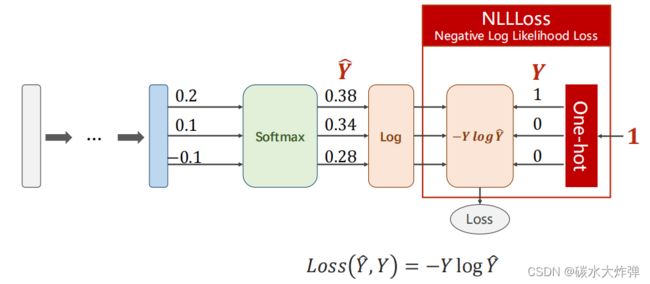
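The definition above can be sketched in a few lines of plain Python. This is a minimal illustration rather than PyTorch's implementation; the three input scores are made-up values, and subtracting the maximum is a standard stability trick that does not change the result.

```python
import math


def softmax(scores):
    """Return softmax probabilities for a list of raw scores."""
    # Subtracting the max leaves the result unchanged but avoids overflow in exp.
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw outputs of a final linear layer for 3 classes.
probs = softmax([0.2, 0.1, -0.1])
print(probs)       # every value is greater than 0
print(sum(probs))  # the values sum to 1 (up to rounding)
```

The largest raw score always receives the largest probability, so softmax preserves the ranking of the classes.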

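(3) Multi-class problems can use the NLLLoss loss function. The output of the softmax layer is first passed through a log, and the result then goes into the loss -y log ŷ. NLLLoss itself does not compute the log; it expects log-probabilities as its input.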

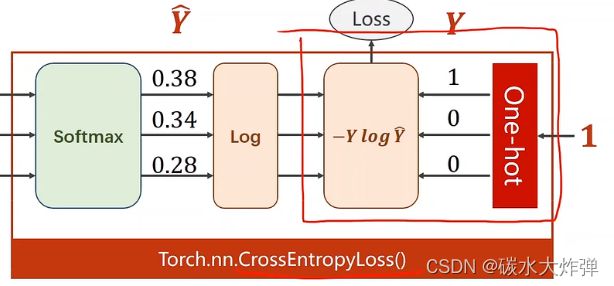
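To make the loss -y log ŷ concrete, here is a small plain-Python sketch for a single sample. The probabilities and the target class are invented for illustration, and the helper name `nll` is hypothetical. Because y is one-hot, only the term of the true class survives.

```python
import math


def nll(probs, target):
    """Negative log-likelihood of one sample given class probabilities."""
    one_hot = [1.0 if i == target else 0.0 for i in range(len(probs))]
    # -sum_i y_i * log(y_hat_i); every term except the target class is zero.
    return -sum(y * math.log(p) for y, p in zip(one_hot, probs))


# Hypothetical softmax output for 3 classes; the true class is 0.
probs = [0.38, 0.34, 0.28]
loss = nll(probs, target=0)
print(loss)
```

The loss equals -log(0.38), so it shrinks toward 0 as the probability assigned to the true class approaches 1.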

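(4) Difference between the cross-entropy loss and NLLLoss: torch.nn.CrossEntropyLoss takes the raw, unnormalized outputs of the network and applies LogSoftmax followed by NLLLoss internally, whereas torch.nn.NLLLoss expects log-probabilities as input.

(5) The last layer of the network applies no activation, because the loss function torch.nn.CrossEntropyLoss already includes the softmax.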

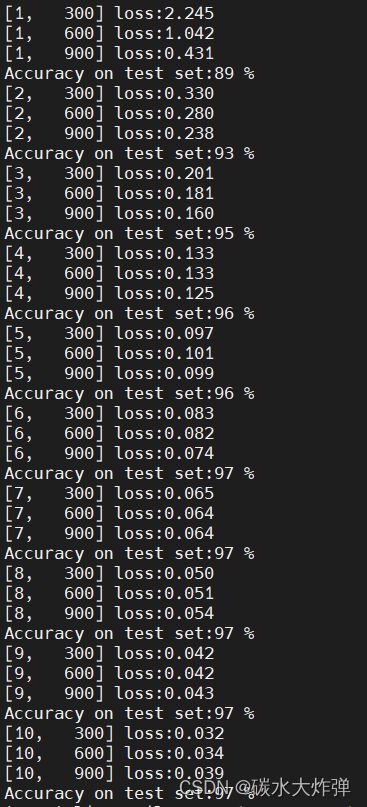
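The relationship between the two losses can be checked numerically. The sketch below assumes PyTorch is installed; the scores and targets are made-up values for 3 samples and 3 classes.

```python
import torch
import torch.nn.functional as F

# Hypothetical raw scores (logits) for 3 samples and 3 classes.
logits = torch.tensor([[0.1, 0.2, 0.9],
                       [1.1, 0.1, 0.2],
                       [0.2, 2.1, 0.1]])
target = torch.tensor([2, 0, 1])  # class indices, not one-hot vectors

# CrossEntropyLoss works directly on the raw scores.
ce = torch.nn.CrossEntropyLoss()(logits, target)

# NLLLoss needs log-probabilities, so log_softmax is applied first.
nll = torch.nn.NLLLoss()(F.log_softmax(logits, dim=1), target)

print(ce.item(), nll.item())  # the two values agree
```

This is why a network trained with CrossEntropyLoss should return raw scores: adding a softmax layer in front of it would apply the normalization twice.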

(6) Code for handwritten digit recognition with softmax:

```python
# 1. Prepare dataset
import torch
from torchvision import transforms
from torchvision import datasets
from torch.utils.data import DataLoader
import torch.nn.functional as F
import torch.optim as optim

batch_size = 64

# Images are read with PIL. Neural networks train better on small input values,
# ideally with zero mean and unit variance.
# ToTensor rescales pixel values from [0, 255] to [0, 1].
# R, G and B are one channel each; C denotes the number of channels.
# Image arrays are usually H x W x C; PyTorch expects C x H x W.
transform = transforms.Compose([
    # Convert the PIL Image to a C x H x W tensor (1 x 28 x 28 for MNIST).
    transforms.ToTensor(),
    # Standardize to zero mean and unit standard deviation; 0.1307 and 0.3081
    # are the mean and std computed over the MNIST pixel values.
    transforms.Normalize((0.1307,), (0.3081,))
])

train_dataset = datasets.MNIST(root='./mnist/',
                               train=True,
                               download=True,
                               transform=transform)
train_loader = DataLoader(dataset=train_dataset,
                          shuffle=True,
                          batch_size=batch_size)

test_dataset = datasets.MNIST(root='./mnist/',
                              train=False,
                              download=True,
                              transform=transform)
test_loader = DataLoader(dataset=test_dataset,
                         shuffle=False,
                         batch_size=batch_size)
```
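As a quick check of what the normalization step does to the pixel range, the arithmetic can be reproduced without torchvision. This sketch assumes Normalize computes (x - mean) / std per channel; the helper name `normalize` is hypothetical.

```python
mean, std = 0.1307, 0.3081  # the values passed to Normalize above


def normalize(x):
    """Apply the Normalize arithmetic to one pixel value in [0, 1]."""
    return (x - mean) / std


darkest = normalize(0.0)    # a black pixel after ToTensor
brightest = normalize(1.0)  # a white pixel after ToTensor
print(round(darkest, 4), round(brightest, 4))
```

The inputs therefore range from about -0.42 to about 2.82. The data as a whole has roughly zero mean and unit standard deviation, but individual values are not confined to [-1, 1].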

```python
# 2. Design model using a class
# The hidden layers use ReLU activations.
class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.l1 = torch.nn.Linear(784, 512)
        self.l2 = torch.nn.Linear(512, 256)
        self.l3 = torch.nn.Linear(256, 128)
        self.l4 = torch.nn.Linear(128, 64)
        self.l5 = torch.nn.Linear(64, 10)

    def forward(self, x):
        # view changes the shape of the tensor. -1 lets PyTorch infer N,
        # the number of samples, which gives an N x 784 matrix.
        x = x.view(-1, 784)
        x = F.relu(self.l1(x))
        x = F.relu(self.l2(x))
        x = F.relu(self.l3(x))
        x = F.relu(self.l4(x))
        # The last layer applies no activation; CrossEntropyLoss handles softmax.
        return self.l5(x)


model = Net()
```
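The reshape in `forward` can be tried on its own. The sketch below uses dummy all-zero batches shaped like MNIST input (N x 1 x 28 x 28); the batch of 32 stands in for a smaller final batch and is chosen only for illustration.

```python
import torch

# Dummy batches with the MNIST layout (N, C, H, W); the contents do not matter.
full_batch = torch.zeros(64, 1, 28, 28)
last_batch = torch.zeros(32, 1, 28, 28)

# -1 asks PyTorch to infer the first dimension from the total element count.
flat_full = full_batch.view(-1, 784)
flat_last = last_batch.view(-1, 784)

print(flat_full.shape)  # torch.Size([64, 784])
print(flat_last.shape)  # torch.Size([32, 784])
```

Using -1 instead of a fixed batch size keeps the model working when the last batch of an epoch is smaller than `batch_size`.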

```python
# 3. Construct loss and optimizer
criterion = torch.nn.CrossEntropyLoss()
# Gradient descent with momentum; momentum can improve the training process.
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)
```
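To show what the momentum term does, the sketch below compares `torch.optim.SGD` with a hand-written update on a single parameter. It assumes the default settings (no dampening, no Nesterov), under which the update is v = momentum * v + grad followed by w = w - lr * v. The toy loss w² is made up for the example.

```python
import torch

lr, momentum = 0.01, 0.5

# Optimizer-driven update on a one-element parameter.
w = torch.tensor([1.0], requires_grad=True)
opt = torch.optim.SGD([w], lr=lr, momentum=momentum)

# Hand-written update that tracks the same quantity with plain floats.
w_manual, velocity = 1.0, 0.0

for _ in range(3):
    opt.zero_grad()
    loss = (w ** 2).sum()  # toy loss with gradient 2 * w
    loss.backward()
    opt.step()

    grad = 2 * w_manual
    velocity = momentum * velocity + grad  # accumulate past gradients
    w_manual -= lr * velocity

print(w.item(), w_manual)  # both follow the same trajectory
```

Each step moves along a running sum of past gradients, so consecutive gradients that point the same way produce larger steps than plain gradient descent would.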

```python
# 4. Training and test
def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        optimizer.zero_grad()

        # Forward pass, backward pass, parameter update.
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        # Report the average loss every 300 batches.
        if batch_idx % 300 == 299:
            print('[%d, %5d] loss:%.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0


def test():
    correct = 0
    total = 0
    # No gradients are needed for evaluation.
    with torch.no_grad():
        for data in test_loader:
            images, labels = data
            outputs = model(images)
            # torch.max over dim=1 returns (values, indices); the index of the
            # largest score is the predicted class.
            _, predicted = torch.max(outputs, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set:%d %%' % (100 * correct / total))


if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
```

Output: