Splitting the hidden state and output of a bidirectional RNN in PyTorch

1 Shapes of the RNN hidden state and output

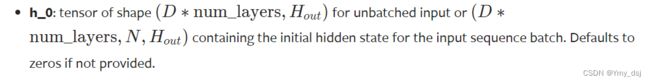

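According to the official PyTorch documentation, for batched input the hidden state of an RNN (both h_0 and h_n) has shape (num_directions*num_layers, batch_size, hidden_size), where num_directions is 2 for a bidirectional RNN and 1 otherwise. This layout is not affected by batch_first, which only applies to the input and output tensors.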
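A minimal sketch that checks the first dimension of h_n for a few configurations. The sizes are arbitrary illustration values, and batch_first=True is set on purpose to show that it does not change the hidden-state layout.

```python
import torch
import torch.nn as nn

input_size, hidden_size, batch_size, seq_len = 3, 5, 4, 7

shapes = {}
for num_layers in (1, 3):
    for bidirectional in (False, True):
        # batch_first=True only affects input/output, not h_0/h_n
        rnn = nn.RNN(input_size, hidden_size, num_layers,
                     bidirectional=bidirectional, batch_first=True)
        x = torch.randn(batch_size, seq_len, input_size)
        _, h_n = rnn(x)
        shapes[(num_layers, bidirectional)] = tuple(h_n.shape)
        # first dim = num_directions * num_layers; batch stays in dim 1
        print(num_layers, bidirectional, tuple(h_n.shape))
```

A bidirectional 3-layer RNN therefore yields 6 rows in the first dimension, which is the case used in the example later in this post.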

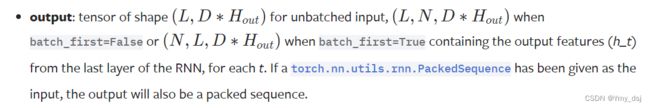

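The output has shape (seq_len, batch_size, D*hidden_size), where D = num_directions. With batch_first=True the first two dimensions are swapped, giving (batch_size, seq_len, D*hidden_size).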
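A short sketch comparing the output shape under the default layout and under batch_first=True; the sizes are illustrative assumptions.

```python
import torch
import torch.nn as nn

input_size, hidden_size, num_layers = 3, 5, 2
seq_len, batch_size = 7, 4

# Default layout: input is (seq_len, batch_size, input_size)
rnn = nn.RNN(input_size, hidden_size, num_layers, bidirectional=True)
out, _ = rnn(torch.randn(seq_len, batch_size, input_size))

# batch_first=True: input is (batch_size, seq_len, input_size)
rnn_bf = nn.RNN(input_size, hidden_size, num_layers,
                bidirectional=True, batch_first=True)
out_bf, _ = rnn_bf(torch.randn(batch_size, seq_len, input_size))

print(tuple(out.shape))     # (seq_len, batch_size, 2*hidden_size) -> (7, 4, 10)
print(tuple(out_bf.shape))  # (batch_size, seq_len, 2*hidden_size) -> (4, 7, 10)
```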


2 Splitting the hidden state and output of a bidirectional RNN

When the RNN is bidirectional, its results contain the outputs of both the forward direction and the backward direction.
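To make "contains both directions" concrete, the sketch below rebuilds a single-layer bidirectional nn.RNN from two unidirectional ones by copying its parameters (nn.RNN names them weight_ih_l0, weight_hh_l0, bias_ih_l0, bias_hh_l0, plus the same names with a _reverse suffix for the backward direction), and runs the second copy on the time-reversed input. It assumes the default batch_first=False and a zero initial state; the tolerance 1e-5 is an arbitrary choice.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
input_size, hidden_size, seq_len, batch = 3, 5, 4, 2

bi = nn.RNN(input_size, hidden_size, bidirectional=True)
fwd = nn.RNN(input_size, hidden_size)
bwd = nn.RNN(input_size, hidden_size)

with torch.no_grad():
    # Copy forward-direction weights into fwd, backward-direction weights into bwd
    for name in ["weight_ih_l0", "weight_hh_l0", "bias_ih_l0", "bias_hh_l0"]:
        getattr(fwd, name).copy_(getattr(bi, name))
        getattr(bwd, name).copy_(getattr(bi, name + "_reverse"))

    x = torch.randn(seq_len, batch, input_size)
    out_bi, _ = bi(x)
    out_f, _ = fwd(x)
    # The backward direction is a normal RNN run over the reversed sequence
    out_b, _ = bwd(torch.flip(x, dims=[0]))

# First half of the last dim = forward; second half = backward (re-reversed in time)
forward_match = torch.allclose(out_bi[..., :hidden_size], out_f, atol=1e-5)
backward_match = torch.allclose(out_bi[..., hidden_size:],
                                torch.flip(out_b, dims=[0]), atol=1e-5)
print(forward_match, backward_match)
```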

2.1 Splitting the output

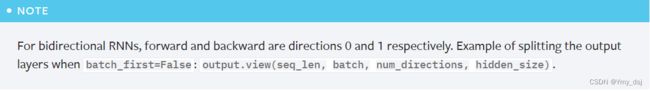

For the output, the official documentation gives the following way to separate the forward and backward results:

output.shape = (seq_len, batch_size, num_directions*hidden_size)

output.view(seq_len, batch_size, num_directions, hidden_size)

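Along the num_directions dimension, index 0 is the forward direction and index 1 is the backward direction. This assumes batch_first=False; with batch_first=True the first two dimensions are swapped, i.e. output.view(batch_size, seq_len, num_directions, hidden_size).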
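A minimal sketch of this split, assuming the default batch_first=False and illustrative sizes. It also checks that the view is equivalent to cutting the last dimension into two halves.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
seq_len, batch_size, input_size, hidden_size = 4, 2, 3, 5
rnn = nn.RNN(input_size, hidden_size, num_layers=2, bidirectional=True)
output, h_n = rnn(torch.randn(seq_len, batch_size, input_size))

# (seq_len, batch_size, 2*hidden_size) -> (seq_len, batch_size, num_directions, hidden_size)
output_split = output.view(seq_len, batch_size, 2, hidden_size)
forward_out = output_split[:, :, 0]   # direction 0: forward
backward_out = output_split[:, :, 1]  # direction 1: backward

# Equivalent to slicing the last dimension into two halves
same_as_slicing = (torch.equal(forward_out, output[..., :hidden_size])
                   and torch.equal(backward_out, output[..., hidden_size:]))
print(forward_out.shape, backward_out.shape, same_as_slicing)
```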

2.2 Splitting the hidden state

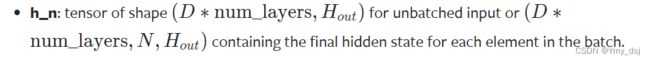

The hidden state, both the initial value h_0 and the final value h_n, also holds the states of both directions, but it is split differently from the output:

h_0.shape = h_n.shape = (num_directions*num_layers, batch_size, hidden_size)

h_n.view(num_layers, num_directions, batch_size, hidden_size)  (same for h_0)
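A sketch that applies this view to a 3-layer bidirectional RNN with a sequence longer than 1, assuming batch_first=False and illustrative sizes. The forward direction finishes at the last time step and the backward direction finishes at the first one, so the last layer's two states should match output at those two positions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
num_layers, seq_len, batch_size, input_size, hidden_size = 3, 4, 2, 3, 5
rnn = nn.RNN(input_size, hidden_size, num_layers, bidirectional=True)
output, h_n = rnn(torch.randn(seq_len, batch_size, input_size))

# (num_layers*num_directions, batch, hidden) -> (num_layers, num_directions, batch, hidden)
h_split = h_n.view(num_layers, 2, batch_size, hidden_size)
last_fwd = h_split[-1, 0]  # last layer, forward direction
last_bwd = h_split[-1, 1]  # last layer, backward direction

# Forward state ends at t = seq_len-1; backward state ends at t = 0
fwd_ok = torch.allclose(last_fwd, output[-1, :, :hidden_size])
bwd_ok = torch.allclose(last_bwd, output[0, :, hidden_size:])
print(fwd_ok, bwd_ok)
```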

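This can be verified with a simple example: a 3-layer bidirectional RNN fed a sequence of length 1. With only one time step, the RNN output equals the final hidden state of the last layer in both directions, so the two can be compared directly.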

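```python
import torch
import torch.nn as nn

if __name__ == "__main__":
    # input_size: 3, hidden_size: 5, num_layers: 3
    BiRNN_Net = nn.RNN(3, 5, 3, bidirectional=True, batch_first=True)
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    # batch_size: 1, seq_len: 1, input_size: 3 (batch_first=True)
    inputs = torch.zeros(1, 1, 3, device=device)
    # state: (num_directions*num_layers, batch_size, hidden_size); batch_first does not apply here
    state = torch.randn(6, 1, 5, device=device)
    BiRNN_Net.to(device)
    output, hidden = BiRNN_Net(inputs, state)
    # output: (batch_size, seq_len, num_directions*hidden_size) -> split off the direction axis
    output_re = output.reshape((1, 1, 2, 5))
    # hidden -> (num_layers, num_directions, batch_size, hidden_size)
    hidden_re = hidden.reshape((3, 2, 1, 5))
    print(output)
    print(output_re)
    print(hidden)
    print(hidden_re)
```

As the printed results below show, the hidden state is ordered with the num_layers dimension outermost: its 6 rows are (layer 0, forward), (layer 0, backward), (layer 1, forward), ..., (layer 2, backward). The last two rows belong to the last layer and match the forward and backward halves of output.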

```
tensor([[[ 0.3939, -0.9160, 0.5054, 0.2949, -0.5225, 0.0533, 0.4197,
          -0.7200, -0.1262, -0.7975]]], device='cuda:0',
       grad_fn=<...>)
tensor([[[[ 0.3939, -0.9160, 0.5054, 0.2949, -0.5225],
          [ 0.0533, 0.4197, -0.7200, -0.1262, -0.7975]]]], device='cuda:0',
       grad_fn=<...>)
tensor([[[-0.2606, 0.5410, -0.2663, 0.6418, -0.2902]],
        [[ 0.1367, 0.7222, -0.3051, -0.6410, -0.3062]],
        [[ 0.2433, 0.3287, -0.4809, -0.1782, -0.5582]],
        [[ 0.4824, -0.8529, 0.7604, 0.8508, -0.1902]],
        [[ 0.3939, -0.9160, 0.5054, 0.2949, -0.5225]],
        [[ 0.0533, 0.4197, -0.7200, -0.1262, -0.7975]]], device='cuda:0',
       grad_fn=<...>)
tensor([[[[-0.2606, 0.5410, -0.2663, 0.6418, -0.2902]],
         [[ 0.1367, 0.7222, -0.3051, -0.6410, -0.3062]]],
        [[[ 0.2433, 0.3287, -0.4809, -0.1782, -0.5582]],
         [[ 0.4824, -0.8529, 0.7604, 0.8508, -0.1902]]],
        [[[ 0.3939, -0.9160, 0.5054, 0.2949, -0.5225]],
         [[ 0.0533, 0.4197, -0.7200, -0.1262, -0.7975]]]], device='cuda:0',
       grad_fn=<...>)
```
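The layer-major ordering can also be checked programmatically. A sketch mirroring the sizes of the example above, but with random input and the default zero h_0 (both choices are assumptions made for illustration): each row of h_n is indexed as layer * num_directions + direction, while a direction-major view(num_directions, num_layers, ...) has a compatible shape yet silently pairs the wrong rows.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
num_layers, num_directions, batch_size, hidden_size = 3, 2, 1, 5
rnn = nn.RNN(3, hidden_size, num_layers, bidirectional=True, batch_first=True)
_, h_n = rnn(torch.randn(batch_size, 1, 3))

h_split = h_n.view(num_layers, num_directions, batch_size, hidden_size)
# Layer-major order: row index in h_n is layer * num_directions + direction
layer_major = all(
    torch.equal(h_n[layer * num_directions + direction], h_split[layer, direction])
    for layer in range(num_layers)
    for direction in range(num_directions)
)

# Direction-major split: shape-compatible but wrong grouping.
# wrong[1, 0] is row 3 of h_n (layer 1, backward), not layer 0's backward state.
wrong = h_n.view(num_directions, num_layers, batch_size, hidden_size)
mixed_up = not torch.equal(wrong[1, 0], h_split[0, 1])
print(layer_major, mixed_up)
```

Because both views have 6 rows in total, the wrong one raises no error; only the index order tells them apart.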
