DataWhale Computer Vision Practice (Object Detection) Task01


The figures and part of the text and code in this article are adapted from: 动手学CV-Pytorch (Dive into CV with PyTorch).

Object Detection:

I. Basic Concepts:

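Object detection, also called object extraction, is a form of image segmentation based on the geometric and statistical features of objects. It combines object segmentation and recognition into a single task, and its accuracy and real-time performance are key capabilities of the whole system. Automatic object extraction and recognition is especially important in complex scenes where multiple objects have to be processed in real time. (Baidu Baike)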

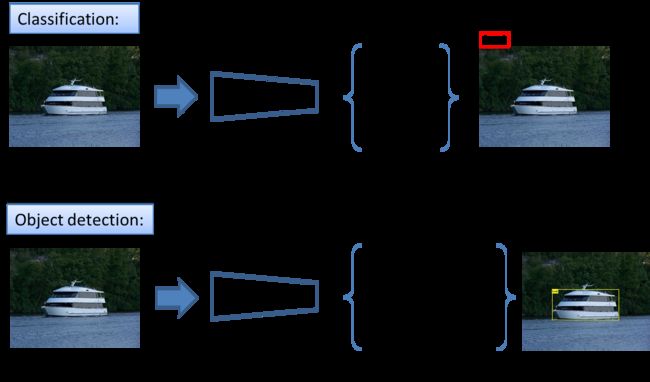

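- Difference between image classification and object detection:
  - Image classification: only needs to decide whether the input image contains an object of interest.
  - Object detection: besides recognizing the class of each object in the image, it must also precisely locate each object and mark it with a bounding rectangle.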

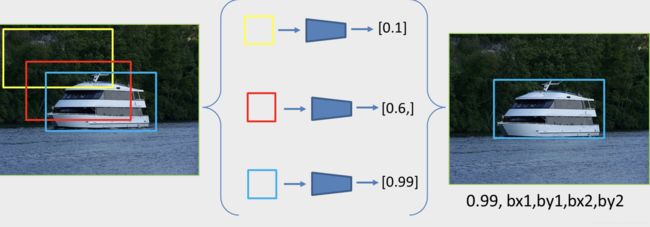

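- The general approach to object detection:

Define a large number of candidate boxes and, for each one, compute a score with a classification network (the confidence that the box contains a particular object). The box with the highest score is the most accurate detection, and its position is taken as the location of the object.

**First generate many candidate boxes, then classify and refine them.** (This is the underlying idea of classic models such as R-CNN, YOLO, and SSD.)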
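The idea above can be illustrated with a toy sketch in pure Python. It assumes square sliding windows as the candidate boxes, and `toy_score` is a hypothetical stand-in for a classification network's confidence; real detectors such as SSD use learned scores and many more box shapes and aspect ratios.

```python
def generate_candidates(img_w, img_h, sizes=(32, 64, 128), stride=16):
    """Slide square windows of several sizes over the image.

    Returns a list of candidate boxes in (x_min, y_min, x_max, y_max) format.
    """
    boxes = []
    for s in sizes:
        for y in range(0, img_h - s + 1, stride):
            for x in range(0, img_w - s + 1, stride):
                boxes.append((x, y, x + s, y + s))
    return boxes


def pick_best(boxes, score_fn):
    """Score every candidate and return the highest-scoring box with its score."""
    scores = [score_fn(b) for b in boxes]
    best = max(range(len(boxes)), key=lambda i: scores[i])
    return boxes[best], scores[best]


# Hypothetical scorer: a real detector would run a classifier on each box.
# Here the score is highest for the box that exactly covers (48, 48, 112, 112).
def toy_score(box):
    target = (48, 48, 112, 112)
    return -sum((a - b) ** 2 for a, b in zip(box, target))


candidates = generate_candidates(160, 160)
best_box, best_score = pick_best(candidates, toy_score)
print(len(candidates), best_box, best_score)  # 139 (48, 48, 112, 112) 0
```

Even this tiny 160×160 image yields 139 candidates, which is why real detectors score all boxes in one batched network pass instead of one by one.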

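- How boxes are defined:
  - Image classification: the label is just the class.
  - Object detection: the label consists of the class and the object's location, i.e. its bounding box (bbox).
  - There are two common bbox formats: (x1, y1, x2, y2), the top-left and bottom-right corners, and (x_c, y_c, w, h), the center, width, and height.
  - The two formats can be converted into each other with the following code: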

```python
import torch

# Convert between the two bounding-box formats

def xy_to_cxcy(xy):
    """
    Convert bounding boxes from boundary coordinates (x_min, y_min, x_max, y_max) to center-size coordinates (c_x, c_y, w, h).

    :param xy: bounding boxes in boundary coordinates, a tensor of size (n_boxes, 4)
    :return: bounding boxes in center-size coordinates, a tensor of size (n_boxes, 4)
    """
    return torch.cat([(xy[:, 2:] + xy[:, :2]) / 2,  # c_x, c_y
                      xy[:, 2:] - xy[:, :2]], 1)  # w, h


def cxcy_to_xy(cxcy):
    """
    Convert bounding boxes from center-size coordinates (c_x, c_y, w, h) to boundary coordinates (x_min, y_min, x_max, y_max).

    :param cxcy: bounding boxes in center-size coordinates, a tensor of size (n_boxes, 4)
    :return: bounding boxes in boundary coordinates, a tensor of size (n_boxes, 4)
    """
    return torch.cat([cxcy[:, :2] - (cxcy[:, 2:] / 2),  # x_min, y_min
                      cxcy[:, :2] + (cxcy[:, 2:] / 2)], 1)  # x_max, y_max
```
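Written out as formulas, the two functions compute the following, in the notation of their docstrings:

$$
c_x = \frac{x_{min} + x_{max}}{2},\quad c_y = \frac{y_{min} + y_{max}}{2},\quad w = x_{max} - x_{min},\quad h = y_{max} - y_{min}
$$

$$
x_{min} = c_x - \frac{w}{2},\quad y_{min} = c_y - \frac{h}{2},\quad x_{max} = c_x + \frac{w}{2},\quad y_{max} = c_y + \frac{h}{2}
$$

As a worked example, the dog box (48, 240, 195, 371) from the VOC annotation 000001.xml becomes $c_x = (48 + 195)/2 = 121.5$, $c_y = (240 + 371)/2 = 305.5$, $w = 147$, $h = 131$; converting back gives $121.5 - 73.5 = 48$, $305.5 - 65.5 = 240$, $121.5 + 73.5 = 195$, $305.5 + 65.5 = 371$.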

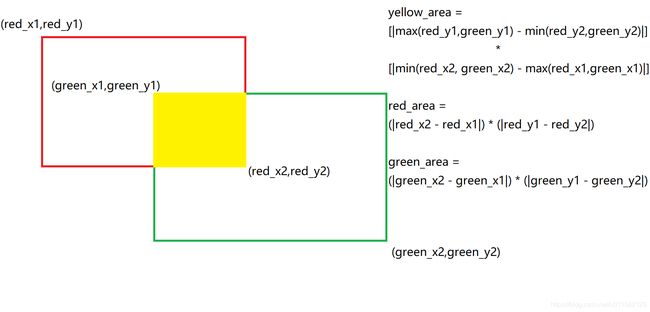

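- Intersection over Union (IoU):

IoU stands for Intersection over Union.

  - It is used throughout model training, testing, and evaluation to measure how much two boxes overlap.
  - It is the ratio of the area of the intersection of two boxes to the area of their union: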

$$\mathrm{IoU} = \frac{\text{intersection}}{\text{union}}$$

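Computation steps:

1. Get the coordinates of both boxes: the red box has top-left (red_x1, red_y1) and bottom-right (red_x2, red_y2); the green box has top-left (green_x1, green_y1) and bottom-right (green_x2, green_y2).

2. Take the maximum of the two top-left corners, (max(red_x1, green_x1), max(red_y1, green_y1)), and the minimum of the two bottom-right corners, (min(red_x2, green_x2), min(red_y2, green_y2)). These are the corners of the intersection (the yellow box).

3. Use the corners from step 2 to compute the area of the yellow box, yellow_area (0 if the boxes do not overlap).

4. Compute the areas of the red and green boxes, red_area and green_area.

5. IoU = yellow_area / (red_area + green_area - yellow_area)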
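The five steps map directly onto a scalar function. This is a minimal pure-Python sketch for a single pair of boxes in (x1, y1, x2, y2) format (the name `iou` is illustrative); the batched `find_jaccard_overlap` that follows performs the same computation for every pair of boxes at once using tensor broadcasting.

```python
def iou(red, green):
    """IoU of two boxes given as (x1, y1, x2, y2), following steps 1-5."""
    # Step 1: unpack the corner coordinates of both boxes
    red_x1, red_y1, red_x2, red_y2 = red
    green_x1, green_y1, green_x2, green_y2 = green
    # Step 2: corners of the intersection (the yellow box)
    inter_x1, inter_y1 = max(red_x1, green_x1), max(red_y1, green_y1)
    inter_x2, inter_y2 = min(red_x2, green_x2), min(red_y2, green_y2)
    # Step 3: yellow area; width/height are clamped at 0 when the boxes do not overlap
    yellow_area = max(0, inter_x2 - inter_x1) * max(0, inter_y2 - inter_y1)
    # Step 4: areas of the red and green boxes
    red_area = (red_x2 - red_x1) * (red_y2 - red_y1)
    green_area = (green_x2 - green_x1) * (green_y2 - green_y1)
    # Step 5: intersection over union
    return yellow_area / (red_area + green_area - yellow_area)


print(iou((0, 0, 2, 2), (1, 1, 3, 3)))  # 1 / (4 + 4 - 1) = 1/7, about 0.142857
print(iou((0, 0, 1, 1), (2, 2, 3, 3)))  # no overlap -> 0.0
```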

- The IoU computation is implemented as follows:

```python
import torch

# Compute IoU

def find_intersection(set_1, set_2):
    """
    Find the intersection of every box combination between two sets of boxes that are in boundary coordinates.

    :param set_1: set 1, a tensor of dimensions (n1, 4) [x_1, y_1, x_2, y_2]
    :param set_2: set 2, a tensor of dimensions (n2, 4) [x_1, y_1, x_2, y_2]
    :return: area of the intersection of each of the boxes in set 1 with respect to each of the boxes in set 2, a tensor of dimensions (n1, n2)
    """
    # PyTorch auto-broadcasts singleton dimensions
    # Top-left corner of each intersection: (max of the two x_1, max of the two y_1)
    lower_bounds = torch.max(set_1[:, :2].unsqueeze(1), set_2[:, :2].unsqueeze(0))  # (n1, n2, 2)
    # Bottom-right corner of each intersection: (min of the two x_2, min of the two y_2)
    upper_bounds = torch.min(set_1[:, 2:].unsqueeze(1), set_2[:, 2:].unsqueeze(0))  # (n1, n2, 2)
    # torch.clamp(min=0) replaces negative values with 0: if two boxes do not overlap,
    # upper - lower is negative in at least one dimension, so the intersection width or height becomes 0
    intersection_dims = torch.clamp(upper_bounds - lower_bounds, min=0)  # (n1, n2, 2)
    # Intersection area: the actual area if the boxes overlap, otherwise 0
    return intersection_dims[:, :, 0] * intersection_dims[:, :, 1]  # (n1, n2)


def find_jaccard_overlap(set_1, set_2):
    """
    Find the Jaccard Overlap (IoU) of every box combination between two sets of boxes that are in boundary coordinates.

    :param set_1: set 1, a tensor of dimensions (n1, 4)
    :param set_2: set 2, a tensor of dimensions (n2, 4)
    :return: Jaccard Overlap (IoU) of each of the boxes in set 1 with respect to each of the boxes in set 2, a tensor of dimensions (n1, n2)
    """
    # Find intersections
    intersection = find_intersection(set_1, set_2)  # (n1, n2)

    # Find areas of each box in both sets
    areas_set_1 = (set_1[:, 2] - set_1[:, 0]) * (set_1[:, 3] - set_1[:, 1])  # (n1)
    areas_set_2 = (set_2[:, 2] - set_2[:, 0]) * (set_2[:, 3] - set_2[:, 1])  # (n2)

    # Find the union
    # PyTorch auto-broadcasts singleton dimensions
    union = areas_set_1.unsqueeze(1) + areas_set_2.unsqueeze(0) - intersection  # (n1, n2)

    # Return IoU
    return intersection / union  # (n1, n2)
```

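- Summary:

This part introduced one approach to object detection:

first generate many candidate boxes, then classify and refine them, to determine how many objects the image contains and what their classes are.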

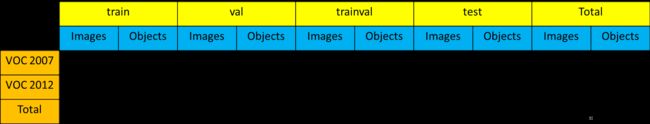

II. Object Detection Dataset: VOC

1. Introduction to the VOC dataset

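VOC is one of the most commonly used standard datasets in object detection. In this exercise, its two most popular versions, VOC2007 and VOC2012, are used as the training and test data.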

- Dataset classes: the VOC dataset is divided into 4 super-categories containing 20 classes in total:
  - Vehicles
  - Household
  - Animals
  - Person

![VOC dataset classes](https://raw.githubusercontent.com/datawhalechina/dive-into-cv-pytorch/master/markdown_imgs/chapter03/3-5.png)

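- Dataset download link:

VOC dataset (extraction code: 7aek)

- Dataset structure:
  - JPEGImages: all images used for training, validation, and testing.
  - ImageSets:
    - Layout: file names for the train, val, test, and trainval sets.
    - Segmentation: file names for the train, val, test, and trainval sets used for segmentation.
    - Main: file names of the images for each class.
  - Annotations: the annotation information for each image, stored as an xml file. The file for one image looks like this: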

```xml
<annotation>
    <folder>VOC2007</folder>
    <filename>000001.jpg</filename>
    <source>
        <database>The VOC2007 Database</database>
        <annotation>PASCAL VOC2007</annotation>
        <image>flickr</image>
        <flickrid>341012865</flickrid>
    </source>
    <owner>
        <flickrid>Fried Camels</flickrid>
        <name>Jinky the Fruit Bat</name>
    </owner>
    <size>
        <width>353</width>
        <height>500</height>
        <depth>3</depth>
    </size>
    <segmented>0</segmented>
    <object>
        <name>dog</name>
        <pose>Left</pose>
        <truncated>1</truncated>
        <difficult>0</difficult>
        <bndbox>
            <xmin>48</xmin>
            <ymin>240</ymin>
            <xmax>195</xmax>
            <ymax>371</ymax>
        </bndbox>
    </object>
    <object>
        <name>person</name>
        <pose>Left</pose>
        <truncated>1</truncated>
        <difficult>0</difficult>
        <bndbox>
            <xmin>8</xmin>
            <ymin>12</ymin>
            <xmax>352</xmax>
            <ymax>498</ymax>
        </bndbox>
    </object>
</annotation>
```
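To make the folder layout concrete, here is a small sketch that pairs each image with its annotation file. It assumes the standard VOCdevkit layout, where `ImageSets/Main/<split>.txt` lists one image ID per line, images are `JPEGImages/<id>.jpg`, and annotations are `Annotations/<id>.xml`; the function name `voc_sample_paths` is hypothetical.

```python
import os


def voc_sample_paths(voc_root, split="trainval"):
    """List (image_path, annotation_path) pairs for one split of a VOC-style folder.

    Assumes ImageSets/Main/<split>.txt holds one image ID per line.
    """
    list_file = os.path.join(voc_root, "ImageSets", "Main", split + ".txt")
    with open(list_file) as f:
        ids = [line.strip() for line in f if line.strip()]
    return [
        (os.path.join(voc_root, "JPEGImages", image_id + ".jpg"),
         os.path.join(voc_root, "Annotations", image_id + ".xml"))
        for image_id in ids
    ]
```

For example, `voc_sample_paths('../../../dataset/VOCdevkit/VOC2007', 'trainval')` would return pairs such as (`.../JPEGImages/000001.jpg`, `.../Annotations/000001.xml`), assuming the data is placed at that path.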

2. Building the `dataloader` for the VOC dataset

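- Data preparation: a preprocessing script parses the xml files in advance and converts them into json files, so that the label information can be retrieved more conveniently during training.

Whether to do this is up to you; compared with xml, json is easier to parse, and its fields are easier to read.

In this exercise, run the create_data_lists.py script, which calls the create_data_lists function from utils.py:

```python
"""
create_data_lists
"""
from utils import create_data_lists

if __name__ == '__main__':
    # voc07_path and voc12_path are the datasets used for training and testing;
    # output_folder is where the files needed to build the dataloader are written.
    # Adjust the paths to your own setup; putting the datasets under a dataset directory keeps them consistent with the tutorial
    create_data_lists(voc07_path='../../../dataset/VOCdevkit/VOC2007',
                      voc12_path='../../../dataset/VOCdevkit/VOC2012',
                      output_folder='../../../dataset/VOCdevkit')
```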
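The article does not show the body of `create_data_lists`. What `PascalVOCDataset` reads later is two parallel json lists per split, `<split>_images.json` and `<split>_objects.json`. The sketch below is a hypothetical minimal writer for that format, not the utils.py implementation; it assumes the per-image dicts have the `{'boxes', 'labels', 'difficulties'}` shape that `parse_annotation` returns.

```python
import json
import os


def write_data_lists(image_paths, objects, output_folder, split):
    """Hypothetical minimal writer for the two json lists PascalVOCDataset reads.

    image_paths: list of image file paths
    objects: list of {'boxes': ..., 'labels': ..., 'difficulties': ...} dicts, one per image
    split: 'TRAIN' or 'TEST'; writes <split>_images.json and <split>_objects.json
    """
    # The i-th image path must correspond to the i-th objects dict
    assert len(image_paths) == len(objects)
    os.makedirs(output_folder, exist_ok=True)
    with open(os.path.join(output_folder, split + '_images.json'), 'w') as j:
        json.dump(image_paths, j)
    with open(os.path.join(output_folder, split + '_objects.json'), 'w') as j:
        json.dump(objects, j)
```

Keeping images and objects in two index-aligned lists is what lets `__getitem__` fetch both with the same index `i`.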

- Extract the downloaded data into the `dataset` directory on the path above and run the script; it generates the corresponding json files, which are used later in training.
- The xml files are parsed mainly by the `parse_annotation` function:

```python
"""
Parsing the xml files
"""
import json
import os
import torch
import random
import xml.etree.ElementTree as ET  # tool used to parse the xml files
import torchvision.transforms.functional as FT

# Device setup: use the GPU if one is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Label map
# voc_labels holds the names of the 20 object classes in the VOC dataset
voc_labels = ('aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus', 'car', 'cat', 'chair', 'cow', 'diningtable',
              'dog', 'horse', 'motorbike', 'person', 'pottedplant', 'sheep', 'sofa', 'train', 'tvmonitor')
# label_map maps each class name to its class index, e.g. {'aeroplane': 1, 'bicycle': 2, ...}
label_map = {k: v + 1 for v, k in enumerate(voc_labels)}
# Regions containing none of the 20 classes are 'background', with class index 0
label_map['background'] = 0
# Reverse the mapping: {class index: class name}
rev_label_map = {v: k for k, v in label_map.items()}  # Inverse mapping


# Parse an xml file and return the bounding boxes and classes of all objects in the image,
# plus whether each object is marked as difficult
def parse_annotation(annotation_path):
    # Parse the xml
    tree = ET.parse(annotation_path)
    root = tree.getroot()

    boxes = list()  # bounding boxes
    labels = list()  # label of each bbox
    difficulties = list()  # difficult flag of each bbox

    # Iterate over all <object> elements; each one is one annotated object
    for obj in root.iter('object'):
        # Extract the difficult flag, label, and bbox of each object
        difficult = int(obj.find('difficult').text == '1')

        label = obj.find('name').text.lower().strip()
        if label not in label_map:
            continue

        bbox = obj.find('bndbox')
        # VOC pixel coordinates start at 1; subtract 1 to make them 0-based
        xmin = int(bbox.find('xmin').text) - 1
        ymin = int(bbox.find('ymin').text) - 1
        xmax = int(bbox.find('xmax').text) - 1
        ymax = int(bbox.find('ymax').text) - 1

        # Store
        boxes.append([xmin, ymin, xmax, ymax])
        labels.append(label_map[label])
        difficulties.append(difficult)

    # Return a dict with the annotation information of this image
    return {'boxes': boxes, 'labels': labels, 'difficulties': difficulties}
```
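To check the parsing logic against the sample annotation without any files on disk, here is a self-contained sketch. `parse_annotation_string` is a hypothetical variant of `parse_annotation` that reads from a string with `ET.fromstring`, and `SAMPLE_XML` is an abbreviated copy of 000001.xml that keeps only the fields the parser uses.

```python
import xml.etree.ElementTree as ET

# Same label map as above: class name -> index 1..20, 'background' -> 0
voc_labels = ('aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus', 'car', 'cat', 'chair', 'cow', 'diningtable',
              'dog', 'horse', 'motorbike', 'person', 'pottedplant', 'sheep', 'sofa', 'train', 'tvmonitor')
label_map = {k: v + 1 for v, k in enumerate(voc_labels)}
label_map['background'] = 0

# Abbreviated copy of the 000001.xml annotation
SAMPLE_XML = """<annotation>
  <object><name>dog</name><difficult>0</difficult>
    <bndbox><xmin>48</xmin><ymin>240</ymin><xmax>195</xmax><ymax>371</ymax></bndbox></object>
  <object><name>person</name><difficult>0</difficult>
    <bndbox><xmin>8</xmin><ymin>12</ymin><xmax>352</xmax><ymax>498</ymax></bndbox></object>
</annotation>"""


def parse_annotation_string(xml_text):
    """Hypothetical variant of parse_annotation that reads XML from a string."""
    root = ET.fromstring(xml_text)
    boxes, labels, difficulties = [], [], []
    for obj in root.iter('object'):
        label = obj.find('name').text.lower().strip()
        if label not in label_map:
            continue
        bbox = obj.find('bndbox')
        # 1-based VOC coordinates -> 0-based, as in parse_annotation
        boxes.append([int(bbox.find(tag).text) - 1 for tag in ('xmin', 'ymin', 'xmax', 'ymax')])
        labels.append(label_map[label])
        difficulties.append(int(obj.find('difficult').text == '1'))
    return {'boxes': boxes, 'labels': labels, 'difficulties': difficulties}


print(parse_annotation_string(SAMPLE_XML))
# {'boxes': [[47, 239, 194, 370], [7, 11, 351, 497]], 'labels': [12, 15], 'difficulties': [0, 0]}
```

The output shows both effects of the parser: every coordinate shifts down by 1, and the names map to their indices ('dog' is 12, 'person' is 15).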

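- Part of the json file produced by running the script is shown below. Only the most important object information is extracted, which makes it convenient to use in later training and testing: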

```json
[{
    "boxes": [
        [262, 210, 323, 338],
        [164, 263, 252, 371],
        [4, 243, 66, 373],
        [240, 193, 294, 298],
        [276, 185, 311, 219]
    ],
    "labels": [9, 9, 9, 9, 9],
    "difficulties": [0, 0, 1, 0, 1]
}, {
    "boxes": [
        [140, 49, 499, 329]
    ],
    "labels": [7],
    "difficulties": [0]
}, {
    "boxes": [
        [68, 171, 269, 329],
        [149, 140, 228, 283],
        [284, 200, 326, 330],
        [257, 197, 296, 328]
    ],
    "labels": [13, 15, 15, 15],
    "difficulties": [0, 0, 0, 0]
}]
```
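Each json record maps directly onto the tensors that `PascalVOCDataset.__getitem__` builds. As a sketch, this loads the first record above (five chairs, label 9) and drops the difficult objects with a boolean mask, which is what `keep_difficult=False` does.

```python
import json
import torch

# First record of the json output above
record = json.loads("""{
    "boxes": [[262, 210, 323, 338], [164, 263, 252, 371], [4, 243, 66, 373],
              [240, 193, 294, 298], [276, 185, 311, 219]],
    "labels": [9, 9, 9, 9, 9],
    "difficulties": [0, 0, 1, 0, 1]
}""")

boxes = torch.FloatTensor(record['boxes'])  # (5, 4)
labels = torch.LongTensor(record['labels'])  # (5,)
difficulties = torch.ByteTensor(record['difficulties'])  # (5,)

# Boolean mask selecting the objects that are not difficult
keep = difficulties == 0
boxes, labels, difficulties = boxes[keep], labels[keep], difficulties[keep]

print(boxes.shape, labels.tolist())  # torch.Size([3, 4]) [9, 9, 9]
```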

- Building the dataloader:
  - Defining the dataloader:

```python
# Instantiate train_dataset and train_loader
# (data_folder, keep_difficult, batch_size and workers are assumed to be defined earlier in the training script)

# Instantiate the PascalVOCDataset class
train_dataset = PascalVOCDataset(data_folder,
                                 split='train',
                                 keep_difficult=keep_difficult)
# Pass train_dataset to DataLoader to get train_loader
train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True,
                                           collate_fn=train_dataset.collate_fn, num_workers=workers,
                                           pin_memory=True)  # note that we're passing the collate function here
```

- Here, `PascalVOCDataset` is defined as follows. It subclasses `torch.utils.data.Dataset` and implements four methods: `__init__`, `__getitem__`, `__len__`, and `collate_fn`:

```python
"""
Implementation of PascalVOCDataset
"""
import torch
from torch.utils.data import Dataset
import json
import os
from PIL import Image
from utils import transform


class PascalVOCDataset(Dataset):
    """
    A PyTorch Dataset class to be used in a PyTorch DataLoader to create batches.
    """

    # Initialize the attributes and read the images and objects annotation lists
    def __init__(self, data_folder, split, keep_difficult=False):
        """
        :param data_folder: folder where data files are stored
        :param split: split, one of 'TRAIN' or 'TEST'
        :param keep_difficult: keep or discard objects that are considered difficult to detect?
        """
        self.split = split.upper()  # upper-case the split so it matches {'TRAIN', 'TEST'}

        assert self.split in {'TRAIN', 'TEST'}

        self.data_folder = data_folder
        self.keep_difficult = keep_difficult

        # Read data files
        with open(os.path.join(data_folder, self.split + '_images.json'), 'r') as j:
            self.images = json.load(j)
        with open(os.path.join(data_folder, self.split + '_objects.json'), 'r') as j:
            self.objects = json.load(j)

        assert len(self.images) == len(self.objects)

    # Read the i-th image and its objects, apply transform (data augmentation), and return
    # the image tensor, the bounding boxes, their class indices, and their difficult flags (1 or 0)
    def __getitem__(self, i):
        # Read image
        # transform() uses torchvision.transforms.functional, which works on PIL images,
        # so the image is read with PIL's Image.open() rather than with OpenCV.
        # Image.open() keeps the file's own mode (e.g. RGB, L, P or RGBA),
        # so .convert('RGB') guarantees a 3-channel image
        image = Image.open(self.images[i], mode='r')
        image = image.convert('RGB')

        # Read objects in this image (bounding boxes, labels, difficulties)
        objects = self.objects[i]
        boxes = torch.FloatTensor(objects['boxes'])  # (n_objects, 4)
        labels = torch.LongTensor(objects['labels'])  # (n_objects)
        difficulties = torch.ByteTensor(objects['difficulties'])  # (n_objects)

        # Discard difficult objects, if desired
        # If self.keep_difficult is False, drop the objects whose difficult flag is 1.
        # A boolean mask is used because indexing with a uint8 tensor (1 - difficulties)
        # is deprecated in recent PyTorch versions
        if not self.keep_difficult:
            keep = difficulties == 0
            boxes = boxes[keep]
            labels = labels[keep]
            difficulties = difficulties[keep]

        # Apply transformations
        image, boxes, labels, difficulties = transform(image, boxes, labels, difficulties, split=self.split)

        return image, boxes, labels, difficulties

    # Total number of images, used to compute the number of batches
    def __len__(self):
        return len(self.images)

    # Data is fed to the network one batch at a time, but __getitem__ reads only one image and its objects.
    # collate_fn combines the individual samples into a batch: the images are stacked with
    # torch.stack() into a 4-D tensor, while the objects of each image are kept in lists
    def collate_fn(self, batch):
        """
        Since each image may have a different number of objects, we need a collate function (to be passed to the DataLoader).

        This describes how to combine these tensors of different sizes. We use lists.

        Note: this need not be defined in this Class, can be standalone.

        :param batch: an iterable of N sets from __getitem__()
        :return: a tensor of images, lists of varying-size tensors of bounding boxes, labels, and difficulties
        """
        images = list()
        boxes = list()
        labels = list()
        difficulties = list()

        for b in batch:
            images.append(b[0])
            boxes.append(b[1])
            labels.append(b[2])
            difficulties.append(b[3])

        # (3, 224, 224) -> (N, 3, 224, 224)
        images = torch.stack(images, dim=0)

        return images, boxes, labels, difficulties  # tensor (N, 3, 224, 224), 3 lists of N tensors each
```
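To see why a custom `collate_fn` is needed, the sketch below feeds a hypothetical toy dataset through a real `DataLoader`. Sample `i` has `i + 1` boxes, so the default collate function, which tries to `torch.stack` every field, would fail on the boxes; the standalone `collate_fn` here follows the same idea as the method above. Images are 32×32 instead of 224×224 only to keep the example cheap, and the box coordinates are random placeholders.

```python
import torch
from torch.utils.data import DataLoader, Dataset


class ToyDetectionDataset(Dataset):
    """Hypothetical stand-in for PascalVOCDataset: random images, i + 1 boxes for sample i."""

    def __len__(self):
        return 4

    def __getitem__(self, i):
        image = torch.rand(3, 32, 32)  # already-transformed image tensor
        boxes = torch.rand(i + 1, 4)  # placeholder coordinates, a different count per image
        labels = torch.randint(1, 21, (i + 1,))  # class indices 1..20
        difficulties = torch.zeros(i + 1, dtype=torch.uint8)
        return image, boxes, labels, difficulties


def collate_fn(batch):
    """Stack the images into one tensor; keep the per-image targets as lists."""
    images, boxes, labels, difficulties = zip(*batch)
    return torch.stack(images, dim=0), list(boxes), list(labels), list(difficulties)


loader = DataLoader(ToyDetectionDataset(), batch_size=2, shuffle=False, collate_fn=collate_fn)
images, boxes, labels, difficulties = next(iter(loader))
print(images.shape, [b.shape[0] for b in boxes])  # torch.Size([2, 3, 32, 32]) [1, 2]
```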

- Data augmentation:

Data augmentation can improve the network's accuracy and generalization.

The `transform` data augmentation code is as follows:

```python
"""
transform is a very important part of model training. It includes not only
data augmentation operations that improve model performance, but also required
operations such as type conversion (PIL to Tensor) and normalization (Normalize).
"""
import json
import os
import torch
import random
import xml.etree.ElementTree as ET
import torchvision.transforms.functional as FT

"""
transform has two modes, TRAIN and TEST. In this experiment:

The transforms applied only in TRAIN mode are:
1. Adjust brightness, contrast, saturation, and hue in random order, each with a 50% chance: photometric_distort
2. Expand the image (zoom out, so the objects become relatively smaller): expand
3. Randomly crop the image: random_crop
4. Flip the image with a 50% chance: flip

*Note: transform 1 is a pixel-level augmentation. The positions of the objects relative to
the image do not change, so the bbox coordinates stay the same.
Transforms 2, 3 and 4 are geometric transforms: the positions of the objects relative to the
image change, so the bbox coordinates must be updated accordingly.

The transforms applied in both TRAIN and TEST modes are:
1. Resize the image to (224, 224): resize
2. PIL to Tensor
3. Normalization: FT.normalize()

Note 1: resize is also a geometric transform. When applying a data augmentation strategy,
you need to know which operations are geometric and which are pixel-level.
Note 2: PIL to Tensor and normalize must always be applied.
"""


# photometric_distort, expand, random_crop, flip and resize are helper functions
# assumed to be defined in the same utils.py; they are not shown in this article
def transform(image, boxes, labels, difficulties, split):
    """
    Apply the transformations above.

    :param image: image, a PIL Image
    :param boxes: bounding boxes in boundary coordinates, a tensor of dimensions (n_objects, 4)
    :param labels: labels of objects, a tensor of dimensions (n_objects)
    :param difficulties: difficulties of detection of these objects, a tensor of dimensions (n_objects)
    :param split: one of 'TRAIN' or 'TEST', since different sets of transformations are applied
    :return: transformed image, transformed bounding box coordinates, transformed labels, transformed difficulties
    """
    # Training and testing usually use different transform strategies, so split selects which one to apply
    assert split in {'TRAIN', 'TEST'}

    # Mean and standard deviation of ImageNet data that our base VGG from torchvision was trained on
    # see: https://pytorch.org/docs/stable/torchvision/models.html
    # Normalizing images before they enter the network avoids unstable training caused by large
    # differences in pixel values; these are the per-channel ImageNet statistics, not values
    # computed from the VOC images
    mean = [0.485, 0.456, 0.406]
    std = [0.229, 0.224, 0.225]

    new_image = image
    new_boxes = boxes
    new_labels = labels
    new_difficulties = difficulties

    # Skip the following operations for evaluation/testing
    if split == 'TRAIN':
        # A series of photometric distortions in random order, each with 50% chance of occurrence, as in Caffe repo
        new_image = photometric_distort(new_image)

        # Convert PIL image to Torch tensor
        new_image = FT.to_tensor(new_image)

        # Expand image (zoom out) with a 50% chance - helpful for training detection of small objects
        # Fill surrounding space with the mean of ImageNet data that our base VGG was trained on
        if random.random() < 0.5:
            new_image, new_boxes = expand(new_image, new_boxes, filler=mean)

        # Randomly crop image (zoom in)
        new_image, new_boxes, new_labels, new_difficulties = random_crop(new_image, new_boxes, new_labels,
                                                                         new_difficulties)

        # Convert Torch tensor to PIL image
        new_image = FT.to_pil_image(new_image)

        # Flip image with a 50% chance
        if random.random() < 0.5:
            new_image, new_boxes = flip(new_image, new_boxes)

    # Resize image to (224, 224) - this also converts absolute boundary coordinates to their fractional form
    new_image, new_boxes = resize(new_image, new_boxes, dims=(224, 224))

    # Convert PIL image to Torch tensor
    new_image = FT.to_tensor(new_image)

    # Normalize by mean and standard deviation of ImageNet data that our base VGG was trained on
    new_image = FT.normalize(new_image, mean=mean, std=std)

    return new_image, new_boxes, new_labels, new_difficulties
```
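The `flip` and `resize` helpers called in `transform` are not shown in the article. Below is a minimal sketch, under our own assumptions, of the bounding-box arithmetic such geometric transforms need. The function names are hypothetical, only the boxes are transformed (not the image), and coordinates are 0-based pixels as produced by `parse_annotation`.

```python
import torch


def flip_boxes(boxes, image_width):
    """Horizontally flip (x_min, y_min, x_max, y_max) pixel boxes.

    A pixel in column x moves to column image_width - 1 - x, so the old x_max
    becomes the new x_min and vice versa; y coordinates are unchanged.
    """
    new_boxes = boxes.clone()
    new_boxes[:, 0] = image_width - 1 - boxes[:, 2]
    new_boxes[:, 2] = image_width - 1 - boxes[:, 0]
    return new_boxes


def boxes_to_fractional(boxes, image_width, image_height):
    """Divide absolute coordinates by the original image size, giving values in [0, 1].

    Fractional boxes stay valid after the image is resized to any size, e.g. 224 x 224.
    """
    scale = torch.tensor([image_width, image_height, image_width, image_height], dtype=boxes.dtype)
    return boxes / scale


dog = torch.tensor([[47.0, 239.0, 194.0, 370.0]])  # 0-based dog box from 000001.xml (353 x 500 image)
print(flip_boxes(dog, 353))  # tensor([[158., 239., 305., 370.]])
print(boxes_to_fractional(dog, 353, 500))
```

Flipping twice returns the original box, which is a quick sanity check for any flip implementation.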

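- Building the DataLoader:

```python
"""
DataLoader
"""
# Parameter notes:
# shuffle=True is usually set during training to shuffle the data order, which makes the model more robust
# num_workers is the number of worker subprocesses used to load data; choose it based on your machine
# (on Windows, the default value 0 is recommended to avoid errors)
# pin_memory=True loads batches into page-locked (pinned) host memory, which speeds up copying
# tensors to the GPU when there is enough RAM
train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True,
                                           collate_fn=train_dataset.collate_fn, num_workers=workers,
                                           pin_memory=True)  # note that we're passing the collate function here
```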