Configuring Hive 3.1.2 (hive CLI and HiveServer2) on an HA Hadoop 3.2.1 cluster

1. Download and extract

apache-hive-3.1.2-bin.tar.gz

[xiaokang@hadoop01 ~]$ tar -zxvf apache-hive-3.1.2-bin.tar.gz -C /opt/software/

[xiaokang@hadoop01 ~]$ mv /opt/software/apache-hive-3.1.2-bin /opt/software/hive-3.1.2

2. Configure environment variables

[xiaokang@hadoop01 ~]$ sudo vim /etc/profile.d/env.sh

# Append the following to the existing contents

export HIVE_HOME=/opt/software/hive-3.1.2

export PATH=${HIVE_HOME}/bin:$PATH

[xiaokang@hadoop01 ~]$ . /etc/profile.d/env.sh
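Editing the profile script by hand is easy to repeat by accident. Below is a minimal sketch of an idempotent alternative, assuming the same install path as above. The function name `add_hive_env` is hypothetical, and the demo writes to a temporary file rather than the real /etc/profile.d/env.sh.

```shell
# Hypothetical helper: append the Hive variables to a profile script
# only if HIVE_HOME is not already defined there.
add_hive_env() {
    profile="$1"
    hive_home="$2"
    if grep -q '^export HIVE_HOME=' "$profile" 2>/dev/null; then
        return 0    # already configured, nothing to do
    fi
    {
        printf 'export HIVE_HOME=%s\n' "$hive_home"
        # single quotes keep the variables unexpanded inside the file
        printf '%s\n' 'export PATH=${HIVE_HOME}/bin:$PATH'
    } >> "$profile"
}

# Demo against a scratch file; for real use, run it as root on /etc/profile.d/env.sh.
demo_profile="$(mktemp)"
add_hive_env "$demo_profile" /opt/software/hive-3.1.2
add_hive_env "$demo_profile" /opt/software/hive-3.1.2   # second call is a no-op
cat "$demo_profile"
```

Because the check keys on the `export HIVE_HOME=` line, re-running the helper on hadoop03 later leaves an already configured file untouched.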

3. Edit the Hive 3.1.2 configuration files

[xiaokang@hadoop01 ~]$ cd $HIVE_HOME/conf

[xiaokang@hadoop01 ~]$ cp hive-env.sh.template hive-env.sh

[xiaokang@hadoop01 ~]$ cp hive-log4j2.properties.template hive-log4j2.properties

[xiaokang@hadoop01 ~]$ cp hive-default.xml.template hive-default.xml

hive-env.sh

# Line 48

HADOOP_HOME=/opt/software/hadoop-3.2.1

# Line 51

export HIVE_CONF_DIR=/opt/software/hive-3.1.2/conf

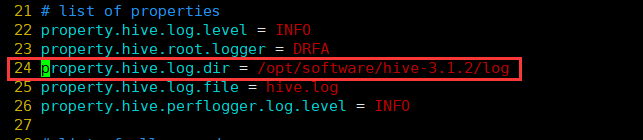

hive-log4j2.properties

# Line 24

property.hive.log.dir = /opt/software/hive-3.1.2/log
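Line numbers in the templates can shift between Hive releases, so matching on the property key is sturdier than jumping to a fixed line. Below is a minimal sketch, assuming the key is already present in the file. `set_prop` is a hypothetical helper, and the demo works on a scratch file with sample contents rather than the real hive-log4j2.properties.

```shell
# Hypothetical helper: set "key = value" in a .properties file by key.
# Assumes the value contains no '|' or '&' (sed delimiter / backreference).
set_prop() {
    file="$1"; key="$2"; value="$3"
    # escape dots in the key so they match literally
    key_re="$(printf '%s' "$key" | sed 's/\./\\./g')"
    sed "s|^${key_re}[[:space:]]*=.*|${key} = ${value}|" "$file" > "$file.tmp" &&
        mv "$file.tmp" "$file"
}

# Sample contents for illustration only
demo_props="$(mktemp)"
printf '%s\n' 'status = INFO' \
    'property.hive.log.dir = ${sys:java.io.tmpdir}/${sys:user.name}' \
    'property.hive.log.file = hive.log' > "$demo_props"

set_prop "$demo_props" property.hive.log.dir /opt/software/hive-3.1.2/log
cat "$demo_props"
```

The same helper could be pointed at $HIVE_HOME/conf/hive-log4j2.properties once the template has been copied.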

hive-site.xml (create this file in $HIVE_HOME/conf with the following contents)

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<!-- Print column names in query results -->

<name>hive.cli.print.header</name>

<value>true</value>

</property>

<property>

<!-- Show the current database in the CLI prompt -->

<name>hive.cli.print.current.db</name>

<value>true</value>

</property>

<property>

<!-- Default warehouse location; this is a path on HDFS -->

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<!-- MySQL 8.x -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://hadoop01:3306/hive_metastore?createDatabaseIfNotExist=true&amp;useSSL=false&amp;serverTimezone=GMT</value>

</property>

<!-- MySQL 8.x -->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.cj.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>xiaokang</value>

</property>

<!-- Port and bind host for the HiveServer2 service -->

<property>

<name>hive.server2.thrift.port</name>

<value>11240</value>

</property>

<property>

<name>hive.server2.thrift.bind.host</name>

<value>hadoop01</value>

</property>

</configuration>
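Inside hive-site.xml, a literal & in a value has to be written as &amp;amp; or the file will not parse. This applies to the multi-parameter JDBC URL in javax.jdo.option.ConnectionURL. Below is a small sketch of the escaping step; `xml_escape` is a hypothetical helper, not part of Hive.

```shell
# Hypothetical helper: escape the characters that are special in XML text.
# '&' is handled first so the entities added afterwards are not re-escaped.
xml_escape() {
    printf '%s' "$1" | sed -e 's/&/\&amp;/g' -e 's/</\&lt;/g' -e 's/>/\&gt;/g'
}

jdbc_url='jdbc:mysql://hadoop01:3306/hive_metastore?createDatabaseIfNotExist=true&useSSL=false&serverTimezone=GMT'
escaped_url="$(xml_escape "$jdbc_url")"

# Print the <value> line in the form hive-site.xml expects
printf '<value>%s</value>\n' "$escaped_url"
```

The printed line can be pasted into the ConnectionURL property as is.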

4. Copy the jar files

Check the MySQL version

[xiaokang@hadoop01 ~]$ mysql -V

mysql Ver 8.0.21 for Linux on x86_64 (MySQL Community Server - GPL)

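Download the JDBC driver jar that matches the MySQL version and copy it into /opt/software/hive-3.1.2/lib/

Rather than fetching mysql-connector-java-8.0.21.jar from the official site, I pulled it in as a Maven dependency in IDEA: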

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>8.0.21</version>

</dependency>

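Once the download finishes, mysql-connector-java-8.0.21.jar can be found in the local Maven repository (by default under ~/.m2/repository/mysql/mysql-connector-java/8.0.21/). Transfer it to hadoop01, then: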

[xiaokang@hadoop01 ~]$ cp mysql-connector-java-8.0.21.jar /opt/software/hive-3.1.2/lib/

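Delete the older guava-19.0.jar from /opt/software/hive-3.1.2/lib/ and copy in the newer guava-27.0-jre.jar from /opt/software/hadoop-3.2.1/share/hadoop/common/lib/. With mismatched Guava versions, Hive typically fails at startup with a NoSuchMethodError from com.google.common.base.Preconditions.

[xiaokang@hadoop01 ~]$ rm -rf /opt/software/hive-3.1.2/lib/guava-19.0.jar

[xiaokang@hadoop01 ~]$ cp /opt/software/hadoop-3.2.1/share/hadoop/common/lib/guava-27.0-jre.jar /opt/software/hive-3.1.2/lib/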
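The guava swap can be scripted so that it does not depend on the exact jar versions. Below is a sketch under the assumption that each lib directory holds a single guava-*.jar. `sync_guava` is a hypothetical name, and the demo runs against throwaway directories with empty placeholder jars.

```shell
# Hypothetical helper: replace Hive's guava jar with the one Hadoop ships.
sync_guava() {
    hadoop_lib="$1"; hive_lib="$2"
    hadoop_jar="$(ls "$hadoop_lib"/guava-*.jar 2>/dev/null | head -n 1)"
    if [ -z "$hadoop_jar" ]; then
        echo "no guava jar found in $hadoop_lib" >&2
        return 1
    fi
    rm -f "$hive_lib"/guava-*.jar      # drop the copy bundled with Hive
    cp "$hadoop_jar" "$hive_lib"/      # bring in Hadoop's version
}

# Demo with placeholder files instead of the real installation
demo_root="$(mktemp -d)"
mkdir -p "$demo_root/hadoop/lib" "$demo_root/hive/lib"
: > "$demo_root/hadoop/lib/guava-27.0-jre.jar"
: > "$demo_root/hive/lib/guava-19.0.jar"
sync_guava "$demo_root/hadoop/lib" "$demo_root/hive/lib"
ls "$demo_root/hive/lib"
```

On the real node the two arguments would be /opt/software/hadoop-3.2.1/share/hadoop/common/lib and /opt/software/hive-3.1.2/lib.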

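5. Edit the Hadoop 3.2.1 configuration files

Add the following to core-site.xml on each of the three virtual machines. These proxy-user settings allow the xiaokang account, which runs HiveServer2, to act on behalf of the users that connect through it.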

[xiaokang@hadoop01 ~]$ vim /opt/software/hadoop-3.2.1/etc/hadoop/core-site.xml

<property>

<name>hadoop.proxyuser.xiaokang.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.xiaokang.groups</name>

<value>*</value>

</property>
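If core-site.xml is identical across the cluster, editing it once on hadoop01 and copying it out avoids three manual edits. Below is a sketch that assumes the same install path on every node and passwordless SSH for xiaokang, neither of which is stated in this post. `push_core_site` is a hypothetical helper that only prints the commands unless DRY_RUN=0 is set.

```shell
# Hypothetical helper: print (or run) the scp commands that copy core-site.xml
# from this node to the given hosts. Set DRY_RUN=0 to actually execute them.
push_core_site() {
    conf=/opt/software/hadoop-3.2.1/etc/hadoop/core-site.xml
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = 1 ]; then
            echo "scp $conf xiaokang@$host:$conf"
        else
            scp "$conf" "xiaokang@$host:$conf"
        fi
    done
}

# Dry run: show what would be copied to the other two nodes
push_plan="$(push_core_site hadoop02 hadoop03)"
echo "$push_plan"
```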

6. Initialize the metastore database

[xiaokang@hadoop01 ~]$ schematool -dbType mysql -initSchema

7. Distribute the files

Copy hive-3.1.2 from hadoop01 to hadoop03

[xiaokang@hadoop01 ~]$ scp -r /opt/software/hive-3.1.2/ xiaokang@hadoop03:/opt/software/

Once the copy finishes, configure the environment variables on hadoop03

[xiaokang@hadoop03 ~]$ sudo vim /etc/profile.d/env.sh

# Append the following to the existing contents

export HIVE_HOME=/opt/software/hive-3.1.2

export PATH=${HIVE_HOME}/bin:$PATH

[xiaokang@hadoop03 ~]$ . /etc/profile.d/env.sh

8. Start the cluster

[xiaokang@hadoop01 ~]$ zkServer.sh start

[xiaokang@hadoop02 ~]$ zkServer.sh start

[xiaokang@hadoop03 ~]$ zkServer.sh start

[xiaokang@hadoop01 ~]$ start-dfs.sh

[xiaokang@hadoop03 ~]$ start-yarn.sh

[xiaokang@hadoop01 ~]$ mapred --daemon start historyserver
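Before starting Hive it helps to confirm that the expected daemons are actually up. Below is a sketch that filters `jps` output for missing process names. `missing_daemons` is a hypothetical helper and the sample output is made up for illustration. Which daemons belong on which node depends on the HA layout, which this post does not list.

```shell
# Hypothetical helper: read `jps` output on stdin and print every expected
# daemon name (given as arguments) that does not appear in it.
missing_daemons() {
    running="$(awk '{print $2}')"      # second column of jps is the class name
    for name in "$@"; do
        printf '%s\n' "$running" | grep -qx "$name" || echo "$name"
    done
}

# Sample jps output for illustration only; real PIDs and daemons will differ.
sample_jps='2101 QuorumPeerMain
2450 NameNode
2583 DataNode
2790 JournalNode
3012 DFSZKFailoverController
3377 Jps'

missing="$(printf '%s\n' "$sample_jps" | missing_daemons NameNode DataNode JobHistoryServer)"
echo "missing: $missing"
```

On a live node the input would come from `jps` itself, for example `jps | missing_daemons NameNode DataNode`.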

9. Start the interactive hive CLI

[xiaokang@hadoop01 ~]$ hive

hive (default)> show databases;

OK

database_name

default

Time taken: 0.571 seconds, Fetched: 1 row(s)

hive (default)> quit;

10. Start HiveServer2

[xiaokang@hadoop01 ~]$ hiveserver2

[xiaokang@hadoop03 ~]$ beeline

beeline> !connect jdbc:hive2://hadoop01:11240

Enter username for jdbc:hive2://hadoop01:11240: xiaokang

Enter password for jdbc:hive2://hadoop01:11240: ********

0: jdbc:hive2://hadoop01:11240> show databases;

Running show databases; on hadoop03 makes the hiveserver2 process on hadoop01 print OK.
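Beeline can also take the connection details on the command line through its -u (JDBC URL), -n (user name) and -e (query) options, which is convenient for scripts. Below is a sketch that only builds and prints such a command from the host and port used in this post; `hs2_url` is a hypothetical helper.

```shell
# Hypothetical helper: build the HiveServer2 JDBC URL from host and port.
hs2_url() {
    printf 'jdbc:hive2://%s:%s' "$1" "$2"
}

url="$(hs2_url hadoop01 11240)"

# Print the non-interactive equivalent of the beeline session;
# run the printed command on a node where beeline is on the PATH.
echo "beeline -u $url -n xiaokang -e 'show databases;'"
```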
