Multi-Interest Network with Dynamic Routing forRecommendation at Tmall 论文阅读笔记

1. ABSTRACT

1.1 Industrial recommender systems

(1)工业推荐系统通常由匹配阶段和排名阶段组成;

(2)匹配阶段:检索与用户兴趣相关的候选项;

(3)排名阶段:根据用户兴趣对候选项进行排序。

Industrial recommender systems usually consist of the matching stage and the ranking stage, in order to handle the billion-scale of users and items. The matching stage retrieves candidate items relevant to user interests, while the ranking stage sorts candidate items by user interests.

1.2 deep learning-based models(shortage)

大多数现有的基于深度学习的模型将一个用户表示为一个单一的向量,这不足以捕获用户兴趣的不同性质。

Most of the existing deep learning-based models represent one user as a single vector which is insufficient to capture the varying nature of user’s interests.

1.3 The paper was presented

(1)提出了动态路由多兴趣网络(MIND),以处理用户在匹配阶段的不同兴趣 ;

(2)开发了一种名为标签感知注意的技术来帮助学习具有多个向量的用户表示。

We propose the Multi-Interest Network with Dynamic routing (MIND) for dealing with user’s diverse interests in the matching stage.we develop a technique named label-aware attention to help learn a user representation with multiple vectors.

1.4 deployed

MIND已经部署在移动天猫应用程序主页上的主要在线流量。

Currently, MIND has been deployed for handling major online traffic at the homepage on Mobile Tmall App.

2. INTRODUCTION

2.1 Tmall's personalized recommendation system(RS)

匹配阶段和排名阶段中建模用户兴趣和找到捕获用户兴趣的用户表示是至关重要的,以支持满足用户兴趣的项目的有效检索。

2.2 Major contributions to the paper

(1)设计了一个多兴趣提取层

(2)建立了一个用于个性化推荐任务的深度神经网络。

(3)构建了一个系统来实现数据收集、模型培训和在线服务的the whole pipeline 。

To capture diverse interests of users from user behaviors, we design the multi-interest extractor layer, which utilizes dynamic routing to adaptively aggregate user’s historicalbehaviors into user representation vectors.By using user representation vectors produced by the multi-interest extractor layer and a newly proposed label-aware attention layer, we build a deep neural network for personalized recommendation tasks. Compared with existing methods, MIND shows superior performance on several public datasets and one industrial dataset from Tmall.To deploy MIND for serving billion-scale users at Tmall, we construct a system to implement the whole pipeline for data collecting, model training and online serving. The deployed system significantly improves the click-through rate (CTR) of the homepage on Mobile Tmall App.

3. RELATED WORK

3.1 Deep Learning for Recommendation

(1)Neural Collaborative Filtering (NCF);

(2)DeepFM;

(3)Deep Matrix Factorization Models (DMF);

(4)Personalized top-n sequential recommendation via convolutional sequence embedding;

3.2 User Representation

(1)employs RNN-GRU to learn user embeddings from the temporal ordered review documents;

(2)Modelling Context with User Embeddings for Sarcasm Detection in Social Media。

3.3 Capsule Network

采用动态路由来学习胶囊之间的连接的权重,并利用期望最大化算法进行了改进,克服了一些缺陷,获得了更好的精度。

4. Paper core method

4.1 Problem Formalization

(1) ![]() 函数:

函数:

![]()

其中:![]() ,d表示Userd的embedding向量的维度,K代表预测的用户兴趣个数

,d表示Userd的embedding向量的维度,K代表预测的用户兴趣个数

(2)![]() 函数

函数

![]()

其中![]() ,表示每个item的embeding向量表征

,表示每个item的embeding向量表征

(3)![]() 函数

函数

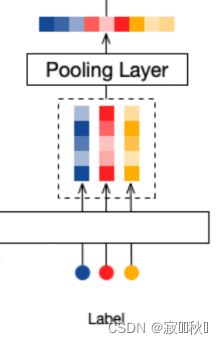

4.2 Embedding & Pooling Layer

输入由三部分组成,过Embedding层之后获得了三个Embedding向量,所以通过pooling层将其变成单一的Embedding向量

4.3 Multi-Interest Extractor Layer

参考了胶囊网络中的动态路由的方法

4.3.1 B2I Dynamic Routing算法流程

4.3.2 流程主要公式

(1)用户兴趣向量:

![]()

(2)系数![]() 使用高斯分布进行初始化

使用高斯分布进行初始化

(3)循环中的公式:

![]()

![]()

注意:![]() 是一个可学习参数, S相当于是对User的行为胶囊和兴趣胶囊进行信息交互融合的一个权重。

是一个可学习参数, S相当于是对User的行为胶囊和兴趣胶囊进行信息交互融合的一个权重。

![]()

4.4 Label-aware Attention Layer

就类似于我们给项目加入权重

![]()

其中p值取的情况如下表:

| p | 对应情况 |

| 0 | 每个用户兴趣向量有相同的权重 |

| >0 | p越大,与目标商品向量点积更大的用户兴趣向量会有更大的权重 |

| 只使用与目标商品向量点积最大的用户兴趣向量,忽略其他用户向量 |

注:论文得出当p趋于无穷大的时候模型的训练效果是最好的。

4.5 Training & Serving

4.5.1 Training

其中![]() 表示用户向量,

表示用户向量,![]() 表示标签项目嵌入

表示标签项目嵌入

![]()

D是包含用户项的训练数据的集合

4.5.2 Serving

在服务时,用户的行为序列和用户配置文件被输入到fuser函数中,为每个用户生成多个表示向量。然后,利用这些表示向量通过近似最近邻方法检索前N个项。

4.6 Connections with Existing Methods

YouTube DNN. Both MIND and YouTube DNN

5. EXPERIMENTS

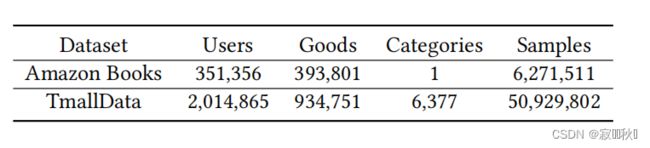

5.1 Datasets

5.2 the main metric

denotes the test set consisting of pairs of users and target items ( u , i )

denotes the indicator function

6. The MIND model definition

基于paddle的MIND模型定义(飞桨AI Studio - 人工智能学习实训社区 (baidu.com))。

class CapsuleNetwork(nn.Layer):

def __init__(self, hidden_size, seq_len, bilinear_type=2, interest_num=4, routing_times=3, hard_readout=True,

relu_layer=False):

super(CapsuleNetwork, self).__init__()

self.hidden_size = hidden_size # h

self.seq_len = seq_len # s

self.bilinear_type = bilinear_type

self.interest_num = interest_num

self.routing_times = routing_times

self.hard_readout = hard_readout

self.relu_layer = relu_layer

self.stop_grad = True

self.relu = nn.Sequential(

nn.Linear(self.hidden_size, self.hidden_size, bias_attr=False),

nn.ReLU()

)

if self.bilinear_type == 0: # MIND

self.linear = nn.Linear(self.hidden_size, self.hidden_size, bias_attr=False)

elif self.bilinear_type == 1:

self.linear = nn.Linear(self.hidden_size, self.hidden_size * self.interest_num, bias_attr=False)

else: # ComiRec_DR

self.w = self.create_parameter(

shape=[1, self.seq_len, self.interest_num * self.hidden_size, self.hidden_size])

def forward(self, item_eb, mask):

if self.bilinear_type == 0: # MIND

item_eb_hat = self.linear(item_eb) # [b, s, h]

item_eb_hat = paddle.repeat_interleave(item_eb_hat, self.interest_num, 2) # [b, s, h*in]

elif self.bilinear_type == 1:

item_eb_hat = self.linear(item_eb)

else: # ComiRec_DR

u = paddle.unsqueeze(item_eb, 2) # shape=(batch_size, maxlen, 1, embedding_dim)

item_eb_hat = paddle.sum(self.w[:, :self.seq_len, :, :] * u,

3) # shape=(batch_size, maxlen, hidden_size*interest_num)

item_eb_hat = paddle.reshape(item_eb_hat, (-1, self.seq_len, self.interest_num, self.hidden_size))

item_eb_hat = paddle.transpose(item_eb_hat, perm=[0,2,1,3])

# item_eb_hat = paddle.reshape(item_eb_hat, (-1, self.interest_num, self.seq_len, self.hidden_size))

# [b, in, s, h]

if self.stop_grad: # 截断反向传播,item_emb_hat不计入梯度计算中

item_eb_hat_iter = item_eb_hat.detach()

else:

item_eb_hat_iter = item_eb_hat

# b的shape=(b, in, s)

if self.bilinear_type > 0: # b初始化为0(一般的胶囊网络算法)

capsule_weight = paddle.zeros((item_eb_hat.shape[0], self.interest_num, self.seq_len))

else: # MIND使用高斯分布随机初始化b

capsule_weight = paddle.randn((item_eb_hat.shape[0], self.interest_num, self.seq_len))

for i in range(self.routing_times): # 动态路由传播3次

atten_mask = paddle.repeat_interleave(paddle.unsqueeze(mask, 1), self.interest_num, 1) # [b, in, s]

paddings = paddle.zeros_like(atten_mask)

# 计算c,进行mask,最后shape=[b, in, 1, s]

capsule_softmax_weight = F.softmax(capsule_weight, axis=-1)

capsule_softmax_weight = paddle.where(atten_mask==0, paddings, capsule_softmax_weight) # mask

capsule_softmax_weight = paddle.unsqueeze(capsule_softmax_weight, 2)

if i < 2:

# s=c*u_hat , (batch_size, interest_num, 1, seq_len) * (batch_size, interest_num, seq_len, hidden_size)

interest_capsule = paddle.matmul(capsule_softmax_weight,

item_eb_hat_iter) # shape=(batch_size, interest_num, 1, hidden_size)

cap_norm = paddle.sum(paddle.square(interest_capsule), -1, keepdim=True) # shape=(batch_size, interest_num, 1, 1)

scalar_factor = cap_norm / (1 + cap_norm) / paddle.sqrt(cap_norm + 1e-9) # shape同上

interest_capsule = scalar_factor * interest_capsule # squash(s)->v,shape=(batch_size, interest_num, 1, hidden_size)

# 更新b

delta_weight = paddle.matmul(item_eb_hat_iter, # shape=(batch_size, interest_num, seq_len, hidden_size)

paddle.transpose(interest_capsule, perm=[0,1,3,2])

# shape=(batch_size, interest_num, hidden_size, 1)

) # u_hat*v, shape=(batch_size, interest_num, seq_len, 1)

delta_weight = paddle.reshape(delta_weight, (

-1, self.interest_num, self.seq_len)) # shape=(batch_size, interest_num, seq_len)

capsule_weight = capsule_weight + delta_weight # 更新b

else:

interest_capsule = paddle.matmul(capsule_softmax_weight, item_eb_hat)

cap_norm = paddle.sum(paddle.square(interest_capsule), -1, keepdim=True)

scalar_factor = cap_norm / (1 + cap_norm) / paddle.sqrt(cap_norm + 1e-9)

interest_capsule = scalar_factor * interest_capsule

interest_capsule = paddle.reshape(interest_capsule, (-1, self.interest_num, self.hidden_size))

if self.relu_layer: # MIND模型使用book数据库时,使用relu_layer

interest_capsule = self.relu(interest_capsule)

return interest_capsuleclass MIND(nn.Layer):

def __init__(self, config):

super(MIND, self).__init__()

self.config = config

self.embedding_dim = self.config['embedding_dim']

self.max_length = self.config['max_length']

self.n_items = self.config['n_items']

self.item_emb = nn.Embedding(self.n_items, self.embedding_dim, padding_idx=0)

self.capsule = CapsuleNetwork(self.embedding_dim, self.max_length, bilinear_type=0,

interest_num=self.config['K'])

self.loss_fun = nn.CrossEntropyLoss()

self.reset_parameters()

def calculate_loss(self,user_emb,pos_item):

all_items = self.item_emb.weight

scores = paddle.matmul(user_emb, all_items.transpose([1, 0]))

return self.loss_fun(scores,pos_item)

def output_items(self):

return self.item_emb.weight

def reset_parameters(self, initializer=None):

for weight in self.parameters():

paddle.nn.initializer.KaimingNormal(weight)

def forward(self, item_seq, mask, item, train=True):

if train:

seq_emb = self.item_emb(item_seq) # Batch,Seq,Emb

item_e = self.item_emb(item).squeeze(1)

multi_interest_emb = self.capsule(seq_emb, mask) # Batch,K,Emb

cos_res = paddle.bmm(multi_interest_emb, item_e.squeeze(1).unsqueeze(-1))

k_index = paddle.argmax(cos_res, axis=1)

best_interest_emb = paddle.rand((multi_interest_emb.shape[0], multi_interest_emb.shape[2]))

for k in range(multi_interest_emb.shape[0]):

best_interest_emb[k, :] = multi_interest_emb[k, k_index[k], :]

loss = self.calculate_loss(best_interest_emb,item)

output_dict = {

'user_emb': multi_interest_emb,

'loss': loss,

}

else:

seq_emb = self.item_emb(item_seq) # Batch,Seq,Emb

multi_interest_emb = self.capsule(seq_emb, mask) # Batch,K,Emb

output_dict = {

'user_emb': multi_interest_emb,

}

return output_dict参考:Multi-Interest Network with Dynamic Routing forRecommendation at Tmall

飞桨AI Studio - 人工智能学习与实训社区 (baidu.com)