深度学习基于Resnet18的图像多分类--训练自己的数据集(超详细 含源码)

1.ResNet18原理

2.文件存储 一个样本存放的文件夹为dataset 下两个文件夹 train和test文件(训练和预测)

3.训练和测试的文件要相同。下面都分别放了 crane (鹤)、elephant(大象)、leopard(豹子)

4.编写预测的Python文件:code.py 跟dataset是同级路径。

5.code.py 训练模型源代码

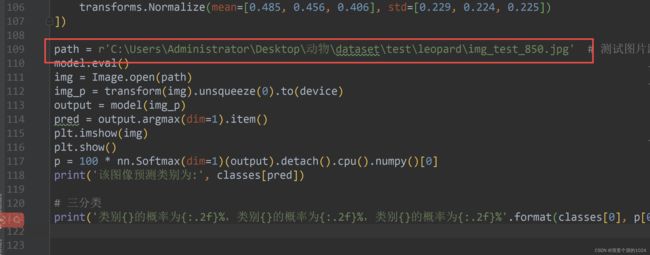

6. 测试代码使用豹子的图像进行分类,复制自己的绝对路径。

7.预测结果达到了99.95%

1.ResNet18原理

ResNet18是一个经典的深度卷积神经网络模型,由微软亚洲研究院提出,用于参加2015年的ImageNet图像分类比赛。ResNet18的名称来源于网络中包含的18个卷积层。

ResNet18的基本结构如下:

输入层:接收大小为224x224的RGB图像。

卷积层:共4个卷积层,每个卷积层使用3x3的卷积核和ReLU激活函数,提取图像的局部特征。

残差块:共8个残差块,每个残差块由两个卷积层和一条跳跃连接构成,用于解决深度卷积神经网络中梯度消失和梯度爆炸问题。

全局平均池化层:对特征图进行全局平均池化,将特征图转化为一维

向量。

全连接层:包含一个大小为1000的全连接层,用于分类输出。

输出层:使用softmax激活函数,生成1000个类别的概率分布。

2.文件存储 一个样本存放的文件夹为dataset 下两个文件夹 train和test文件(训练和预测)

3.训练和测试的文件要相同。下面都分别放了 crane (鹤)、elephant(大象)、leopard(豹子)

4.编写预测的Python文件:code.py 跟dataset是同级路径。

5.code.py 训练模型源代码

import os

import torch

import torchvision

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torchvision import models, datasets, transforms

import torch.utils.data as tud

import numpy as np

from torch.utils.data import Dataset, DataLoader, SubsetRandomSampler

from PIL import Image

import matplotlib.pyplot as plt

import warnings

warnings.filterwarnings("ignore")

device = torch.device("cuda:0" if torch.cuda.is_available() else 'cpu')

n_classes = 3 # 几种分类的

preteain = False # 是否下载使用训练参数 有网true 没网false

epoches = 4 # 训练的轮次

traindataset = datasets.ImageFolder(root='./dataset/train/', transform=transforms.Compose([

transforms.RandomResizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

]))

testdataset = datasets.ImageFolder(root='./dataset/test/', transform=transforms.Compose([

transforms.RandomResizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

]))

classes = testdataset.classes

print(classes)

model = models.resnet18(pretrained=preteain)

if preteain == True:

for param in model.parameters():

param.requires_grad = False

model.fc = nn.Linear(in_features=512, out_features=n_classes, bias=True)

model = model.to(device)

def train_model(model, train_loader, loss_fn, optimizer, epoch):

model.train()

total_loss = 0.

total_corrects = 0.

total = 0.

for idx, (inputs, labels) in enumerate(train_loader):

inputs = inputs.to(device)

labels = labels.to(device)

outputs = model(inputs)

loss = loss_fn(outputs, labels)

optimizer.zero_grad()

loss.backward()

optimizer.step()

preds = outputs.argmax(dim=1)

total_corrects += torch.sum(preds.eq(labels))

total_loss += loss.item() * inputs.size(0)

total += labels.size(0)

total_loss = total_loss / total

acc = 100 * total_corrects / total

print("轮次:%4d|训练集损失:%.5f|训练集准确率:%6.2f%%" % (epoch + 1, total_loss, acc))

return total_loss, acc

def test_model(model, test_loader, loss_fn, optimizer, epoch):

model.train()

total_loss = 0.

total_corrects = 0.

total = 0.

with torch.no_grad():

for idx, (inputs, labels) in enumerate(test_loader):

inputs = inputs.to(device)

labels = labels.to(device)

outputs = model(inputs)

loss = loss_fn(outputs, labels)

preds = outputs.argmax(dim=1)

total += labels.size(0)

total_loss += loss.item() * inputs.size(0)

total_corrects += torch.sum(preds.eq(labels))

loss = total_loss / total

accuracy = 100 * total_corrects / total

print("轮次:%4d|训练集损失:%.5f|训练集准确率:%6.2f%%" % (epoch + 1, loss, accuracy))

return loss, accuracy

loss_fn = nn.CrossEntropyLoss().to(device)

optimizer = optim.Adam(model.parameters(), lr=0.0001)

train_loader = DataLoader(traindataset, batch_size=32, shuffle=True)

test_loader = DataLoader(testdataset, batch_size=32, shuffle=True)

for epoch in range(0, epoches):

loss1, acc1 = train_model(model, train_loader, loss_fn, optimizer, epoch)

loss2, acc2 = test_model(model, test_loader, loss_fn, optimizer, epoch)

classes = testdataset.classes

transform = transforms.Compose([

transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

])

path = r'C:\Users\Administrator\Desktop\动物\dataset\test\leopard\img_test_850.jpg' # 测试图片路径

model.eval()

img = Image.open(path)

img_p = transform(img).unsqueeze(0).to(device)

output = model(img_p)

pred = output.argmax(dim=1).item()

plt.imshow(img)

plt.show()

p = 100 * nn.Softmax(dim=1)(output).detach().cpu().numpy()[0]

print('该图像预测类别为:', classes[pred])

# 三分类

print('类别{}的概率为{:.2f}%,类别{}的概率为{:.2f}%,类别{}的概率为{:.2f}%'.format(classes[0], p[0], classes[1], p[1], classes[2], p[2]))

6. 测试代码使用豹子的图像进行分类,复制自己的绝对路径。

7.预测结果达到了99.95%

如果自己的分类不止三种那么需要修改。我这里训练的是三种图像。根据自己实际情况填写。

还需修改,如果是四分类的话 多加一个 “类别{}的概率为{:.2f}%” 和 classes[3], p[3] ,因为索引是从0开始的 所以四分类的下标就为3。

# 三分类

print('类别{}的概率为{:.2f}%,类别{}的概率为{:.2f}%,类别{}的概率为{:.2f}%'.format(classes[0], p[0], classes[1], p[1], classes[2], p[2]))制作不易 希望大家多多支持!

有问题可以联系我。添加备注 CSDN图像分类谢谢。QQ