Exploring the Android Audio System: From SoundPool to AudioHardware

I. The Bug

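The Android camera app plays a shutter sound when it takes a picture. On a recent MID project (based on the RK3188, Android 4.2), the test team reported that the shutter sound behaved abnormally:

(1) After entering the app, the first shot produces no shutter sound.

(2) During continuous shooting, the shutter sound plays.

(3) After stopping continuous shooting and waiting a few seconds, the next shot again produces no shutter sound.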

II. Simplifying the Problem

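The CameraApp code shows that the shutter sound is played through the SoundPool class. So I wrote a test app that plays a sound with SoundPool. Its UI has a single Button, and clicking it plays the sound. This tells us whether we are facing a pure audio-playback problem or a compound one. The code is simple: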


```java
protected void onCreate(Bundle savedInstanceState) {
    super.onCreate(savedInstanceState);
    setContentView(R.layout.activity_test_sound_pool);
    mSoundPool = new SoundPool(10, AudioManager.STREAM_SYSTEM, 5);
    // R.raw.camera_click is an Ogg audio resource
    mSoundId = mSoundPool.load(this, R.raw.camera_click, 1);
    vBtnShut = (Button) findViewById(R.id.btn_click);
    vBtnShut.setOnClickListener(new OnClickListener() {
        @Override
        public void onClick(View v) {
            // play(soundID, leftVolume, rightVolume, priority, loop, rate)
            mSoundPool.play(mSoundId, 1, 1, 0, 0, 1);
        }
    });
}
```

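The bug behaves exactly the same in the test app. Reduced to its essentials, the symptom is:

idle-->play failed-->idle-->play failed-->play success-->play success-->idle-->play failed-->...

In short, after a few seconds of idle, the first playback produces no sound.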

III. Preliminary Analysis

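With the symptom understood and the environment simplified, we can start the analysis.

The bug is highly regular: only the first playback after a few seconds of silence fails. That ties it closely to software logic and rules out a hardware fault.

Whatever the software stack, audio playback generally follows the same three-part flow:

- The user specifies the sound data to play, which may be a file or a buffer.
- The audio system decodes it, in software or in hardware.
- The decoded digital audio goes to the amplifier path, passes through D/A conversion, and reaches the speaker.

The first part is the application's job, the second is the Android system's responsibility, and the third is the work of the kernel drivers. The application has already been ruled out. The remaining questions are whether the problem lies in the decoder or in the driver, and what happens during idle that keeps the next playback silent.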

IV. Code Study

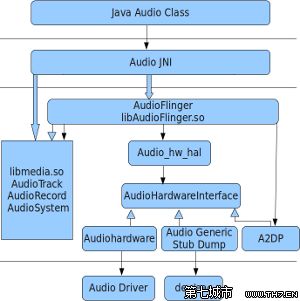

1. Android Audio框架

首先网络上找找资料,要搞清楚Android音频的框架层次结构,才容易定位问题。用图说明——

有了大致的概念,开始以SoundPool为入口,摸清播放流程。其中在每个层次中要了解两点:数据如何传递,播放的动作如何执行。 也就是沿着SoundPool.load()和Sound.play()顺藤摸瓜。

2. SoundPool and AudioFlinger

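SoundPool.java is essentially an empty shell that calls straight into the native interface, so there is little to read in its code. It is still worth reading the class description at the top of SoundPool.java, a full page of English comments. Luckily, the relevant passage turned up quickly: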


```java
/**
 * The SoundPool class manages and plays audio resources for applications.
 *
 * <p>A SoundPool is a collection of samples that can be loaded into memory
 * from a resource inside the APK or from a file in the file system. The
 * SoundPool library uses the MediaPlayer service to decode the audio
 * into a raw 16-bit PCM mono or stereo stream. This allows applications
 * to ship with compressed streams without having to suffer the CPU load
 * and latency of decompressing during playback.</p>
 * ... ...
 * ... ...
 */
```

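The key point is that a SoundPool is a collection of samples. It loads resources from the APK, or files from the file system, into memory. It then uses the MediaPlayer service to decode the audio into a raw 16-bit PCM mono or stereo stream. So decoding already happens at this stage. Still, let's read the implementation to be sure.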

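Skipping the JNI plumbing, go straight to SoundPool.cpp. The test APK above plays a sound by calling just two SoundPool interfaces, load and play. A sample is loaded once and can be played many times, so the two are most likely separate because load does the decoding. Start with load. It is overloaded to accept different kinds of audio sources, and one variant is enough: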


```cpp
int SoundPool::load(int fd, int64_t offset, int64_t length, int priority)
{
    ALOGV("load: fd=%d, offset=%lld, length=%lld, priority=%d",
            fd, offset, length, priority);
    Mutex::Autolock lock(&mLock);
    sp<Sample> sample = new Sample(++mNextSampleID, fd, offset, length);
    mSamples.add(sample->sampleID(), sample);  // register the sample object
    doLoad(sample);                            // where loading happens
    return sample->sampleID();
}
```

From the data-handling point of view, the real loading happens in doLoad:


```cpp
void SoundPool::doLoad(sp<Sample>& sample)
{
    ALOGV("doLoad: loading sample sampleID=%d", sample->sampleID());
    sample->startLoad();                            // only changes the state
    mDecodeThread->loadSample(sample->sampleID());  // where the actual loading happens
}
```

mDecodeThread looks promising: this is very likely where the Ogg data is decoded into PCM. So let's step into loadSample:


```cpp
void SoundPoolThread::loadSample(int sampleID) {
    write(SoundPoolMsg(SoundPoolMsg::LOAD_SAMPLE, sampleID));
}
```

It only posts a message. Here is where the LOAD_SAMPLE message is handled:


```cpp
int SoundPoolThread::run() {
    ALOGV("run");
    for (;;) {
        SoundPoolMsg msg = read();
        ALOGV("Got message m=%d, mData=%d", msg.mMessageType, msg.mData);
        switch (msg.mMessageType) {
        case SoundPoolMsg::KILL:
            ALOGV("goodbye");
            return NO_ERROR;
        case SoundPoolMsg::LOAD_SAMPLE:  // LOAD_SAMPLE is handled here
            doLoadSample(msg.mData);
            break;
        default:
            ALOGW("run: Unrecognized message %d\n",
                    msg.mMessageType);
            break;
        }
    }
}
```


```cpp
void SoundPoolThread::doLoadSample(int sampleID) {
    sp <Sample> sample = mSoundPool->findSample(sampleID);
    status_t status = -1;
    if (sample != 0) {
        status = sample->doLoad();
    }
    mSoundPool->notify(SoundPoolEvent(SoundPoolEvent::SAMPLE_LOADED, sampleID, status));
}
```
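The message-passing pattern above can be shown in a small, self-contained C++ sketch. Like SoundPoolThread, the worker blocks on a queue and dispatches LOAD_SAMPLE until it reads KILL. DecodeThread, Msg and stop() are hypothetical names. Here doLoadSample() only records the ID, where the real code would decode the sample. The sketch models the pattern, not AOSP's implementation.

```cpp
#include <condition_variable>
#include <deque>
#include <mutex>
#include <set>
#include <thread>

// Hypothetical model of SoundPoolThread's message loop (not AOSP code).
struct Msg {
    enum Type { KILL, LOAD_SAMPLE };
    Type type;
    int data;
};

class DecodeThread {
public:
    DecodeThread() : worker_([this] { run(); }) {}
    ~DecodeThread() { stop(); }

    // Like loadSample(): only posts a message; the work happens on the worker.
    void loadSample(int sampleID) { write(Msg{Msg::LOAD_SAMPLE, sampleID}); }

    // Posts KILL and waits for the worker; earlier messages are handled first.
    void stop() {
        if (worker_.joinable()) {
            write(Msg{Msg::KILL, 0});
            worker_.join();
        }
    }

    std::set<int> loaded() {
        std::lock_guard<std::mutex> lock(mu_);
        return loaded_;
    }

private:
    void write(const Msg& m) {
        {
            std::lock_guard<std::mutex> lock(mu_);
            queue_.push_back(m);
        }
        cv_.notify_one();
    }

    Msg read() {  // blocks until a message is available
        std::unique_lock<std::mutex> lock(mu_);
        cv_.wait(lock, [this] { return !queue_.empty(); });
        Msg m = queue_.front();
        queue_.pop_front();
        return m;
    }

    void run() {
        for (;;) {
            Msg msg = read();
            switch (msg.type) {
            case Msg::KILL:
                return;  // "goodbye"
            case Msg::LOAD_SAMPLE:
                doLoadSample(msg.data);
                break;
            }
        }
    }

    void doLoadSample(int sampleID) {
        // Placeholder for Sample::doLoad(); the real code decodes to PCM here.
        std::lock_guard<std::mutex> lock(mu_);
        loaded_.insert(sampleID);
    }

    std::mutex mu_;
    std::condition_variable cv_;
    std::deque<Msg> queue_;
    std::set<int> loaded_;
    std::thread worker_;  // declared last: starts only after the members above exist
};
```

The queue is FIFO, so the KILL posted by stop() is processed only after every LOAD_SAMPLE queued before it. The same property lets load() return the sample ID immediately while decoding finishes later on the worker.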

So the work finally ends up in sample->doLoad(). Stepping inside brings a pleasant surprise:


```cpp
status_t Sample::doLoad()
{
    uint32_t sampleRate;
    int numChannels;
    audio_format_t format;
    sp<IMemory> p;
    ALOGV("Start decode");
    if (mUrl) {
        p = MediaPlayer::decode(mUrl, &sampleRate, &numChannels, &format);
    } else {
        p = MediaPlayer::decode(mFd, mOffset, mLength, &sampleRate, &numChannels, &format);
        ALOGV("close(%d)", mFd);
        ::close(mFd);
        mFd = -1;
    }
    if (p == 0) {
        ALOGE("Unable to load sample: %s", mUrl);
        return -1;
    }
    ALOGV("pointer = %p, size = %u, sampleRate = %u, numChannels = %d",
            p->pointer(), p->size(), sampleRate, numChannels);

    if (sampleRate > kMaxSampleRate) {
       ALOGE("Sample rate (%u) out of range", sampleRate);
       return -1;
    }

    if ((numChannels < 1) || (numChannels > 2)) {
        ALOGE("Sample channel count (%d) out of range", numChannels);
        return -1;
    }

    //_dumpBuffer(p->pointer(), p->size());
    uint8_t* q = static_cast<uint8_t*>(p->pointer()) + p->size() - 10;
    //_dumpBuffer(q, 10, 10, false);

    mData = p;
    mSize = p->size();
    mSampleRate = sampleRate;
    mNumChannels = numChannels;
    mFormat = format;
    mState = READY;
    return 0;
}
```
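The checks at the end of doLoad() come down to a few range tests on the decoder's output. The sketch below restates them in standalone C++ and adds a duration helper for 16-bit PCM. kMaxSampleRate = 48000 is an assumption here, so check SoundPool.cpp in your own tree. DecodedPcm is a hypothetical stand-in for the values MediaPlayer::decode returns.

```cpp
#include <cstddef>
#include <cstdint>

// Assumed limit; verify against kMaxSampleRate in your SoundPool.cpp.
constexpr uint32_t kMaxSampleRate = 48000;

// Hypothetical bundle of what the decoder hands back.
struct DecodedPcm {
    std::size_t sizeBytes;  // size of the 16-bit PCM buffer
    uint32_t sampleRate;
    int numChannels;
};

// Returns 0 on success, -1 for the same out-of-range cases doLoad() rejects.
int validateSample(const DecodedPcm& p) {
    if (p.sampleRate > kMaxSampleRate) return -1;
    if (p.numChannels < 1 || p.numChannels > 2) return -1;
    return 0;
}

// Duration in milliseconds: 16-bit PCM uses 2 bytes per sample per channel.
uint64_t durationMs(const DecodedPcm& p) {
    if (p.sampleRate == 0 || p.numChannels <= 0) return 0;  // avoid dividing by zero
    const uint64_t frameBytes = 2u * static_cast<uint64_t>(p.numChannels);
    const uint64_t frames = p.sizeBytes / frameBytes;
    return frames * 1000 / p.sampleRate;
}
```

For example, an 88200-byte mono buffer at 44100 Hz holds 44100 frames, i.e. one second of audio.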

Once we know what SoundPool's play does, we can find the HAL code. Here are just the key lines of play:


```cpp
int SoundPool::play(int sampleID, float leftVolume, float rightVolume,
        int priority, int loop, float rate)
{
    //...
    channel = allocateChannel_l(priority);
    //...
    channel->play(sample, channelID, leftVolume, rightVolume, priority, loop, rate);
    //...
}
```

It calls SoundChannel's play. To get the overall picture first, let's follow the code all the way down without digging into the details.


```cpp
void SoundChannel::play(const sp<Sample>& sample, int nextChannelID, float leftVolume,
        float rightVolume, int priority, int loop, float rate)
{
    AudioTrack* newTrack;
    //....
    newTrack = new AudioTrack(streamType, sampleRate, sample->format(),
            channels, frameCount, AUDIO_OUTPUT_FLAG_FAST, callback, userData, bufferFrames);
    //...
    mState = PLAYING;
    mAudioTrack->start();
    //...
}
```

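SoundChannel::play creates an AudioTrack object. The AudioTrack constructor calls set, and set calls createTrack_l. createTrack_l then creates an IAudioTrack through IAudioFlinger. What AudioTrack and AudioFlinger are, and how they exchange audio data, is a long story. Others have already analyzed it in detail, so I won't repeat it here. A few good write-ups: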

- AudioTrack analysis: http://www.cnblogs.com/innost/archive/2011/01/09/1931457.html

- AudioFlinger analysis: http://www.cnblogs.com/innost/archive/2011/01/15/1936425.html

- How AudioTrack exchanges data with AudioFlinger: http://blog.chinaunix.net/uid-26533928-id-3052398.html

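These articles explain how the framework's audio subsystem is organized:

- Each audio stream corresponds to one AudioTrack instance.
- Each AudioTrack registers with AudioFlinger when it is created.
- AudioFlinger mixes all AudioTracks together and sends the result to AudioHardware for playback.

In other words, AudioFlinger is one of the core services of the audio system and links the layers above it to the layers below.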
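As a rough illustration of what "mixing" means here, the sketch below sums the 16-bit samples of several tracks and clamps the result to the int16 range. It is a deliberately simplified model, and mixTracks() is a hypothetical helper. AudioFlinger's actual mixer (AudioMixer) also handles per-track volume, resampling and channel conversion, none of which is shown.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

// Simplified model of mixing (not AudioFlinger's AudioMixer): sum the 16-bit
// samples of every track frame by frame and clamp to the int16_t range.
std::vector<int16_t> mixTracks(const std::vector<std::vector<int16_t>>& tracks,
                               std::size_t frames) {
    const int32_t lo = std::numeric_limits<int16_t>::min();
    const int32_t hi = std::numeric_limits<int16_t>::max();
    std::vector<int16_t> out(frames, 0);
    for (std::size_t i = 0; i < frames; ++i) {
        int32_t acc = 0;  // wider accumulator so summing a few tracks cannot overflow
        for (const auto& track : tracks) {
            if (i < track.size()) acc += track[i];  // a shorter track adds silence
        }
        out[i] = static_cast<int16_t>(std::max(lo, std::min(hi, acc)));
    }
    return out;
}
```

Clamping rather than wrapping matters: two loud tracks saturate at 32767 instead of wrapping around to a large negative value, which would be heard as a harsh click.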

Following SoundPool's thread, we have now landed the big fish, AudioFlinger. Next, let's focus on how AudioFlinger calls down into AudioHardware.

3. AudioFlinger and AudioHardware

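Some background is needed here. You have to understand Android's hardware abstraction interface mechanism before you can see how AudioFlinger reaches AudioHardware. Reference:

http://blog.csdn.net/myarrow/article/details/7175204

Knowing nothing about the audio system, I am embarrassed to say I searched the code backwards. I looked for HAL_MODULE_INFO_SYM under hardware/xxx/audio, then went back into the framework to find where its id, "AUDIO_HARDWARE_MODULE_ID", is used. The process was clumsy and not worth describing. Learning from others pays off: I found a good article and borrow one passage of its analysis to connect AudioFlinger and AudioHardware. Original article: http://blog.csdn.net/xuesen_lin/article/details/8805108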

When AudioPolicyService is constructed, it creates an AudioPolicyDevice (mpAudioPolicyDev) and uses it to open an AudioPolicy (mpAudioPolicy). By default, this policy is implemented by legacy_audio_policy::policy (of type audio_policy). legacy_audio_policy also contains an AudioPolicyInterface member, which is initialized to an AudioPolicyManagerDefault. The parent class of AudioPolicyManagerDefault is AudioPolicyManagerBase, and its constructor calls mpClientInterface->loadHwModule():


```cpp
AudioPolicyManagerBase::AudioPolicyManagerBase(AudioPolicyClientInterface *clientInterface) // …
{
    //......
    for (size_t i = 0; i < mHwModules.size(); i++) {
        mHwModules[i]->mHandle = mpClientInterface->loadHwModule(mHwModules[i]->mName);
        if (mHwModules[i]->mHandle == 0) {
            continue;
        }
        //......
    }
    //......
}
```

mpClientInterface is clearly initialized in the AudioPolicyManagerBase constructor. Tracing further back, it originates in the AudioPolicyService constructor, in this statement:


```cpp
rc = mpAudioPolicyDev->create_audio_policy(mpAudioPolicyDev, &aps_ops, this, &mpAudioPolicy);
```

In this scenario, create_audio_policy resolves to create_legacy_ap. It wraps the aps_ops passed in into an AudioPolicyCompatClient object, and that object is what mpClientInterface points to.

In other words, mpClientInterface->loadHwModule actually calls aps_ops->loadHwModule, namely:


```cpp
static audio_module_handle_t aps_load_hw_module(void *service, const char *name)
{
    sp<IAudioFlinger> af = AudioSystem::get_audio_flinger();
    // …
    return af->loadHwModule(name);
}
```

AudioFlinger finally appears. The same holds for mpClientInterface->openOutput:


```cpp
static audio_io_handle_t aps_open_output(/* … */)
{
    sp<IAudioFlinger> af = AudioSystem::get_audio_flinger();
    // …
    return af->openOutput((audio_module_handle_t)0, pDevices, pSamplingRate, pFormat, pChannelMask,
                          pLatencyMs, flags);
}
```
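The indirection above is a C table of function pointers wrapped by a C++ client object, and it can be reduced to a few lines. In this sketch, FakeFlinger, getFlinger(), PolicyServiceOps and CompatClient are hypothetical stand-ins for AudioFlinger, AudioSystem::get_audio_flinger(), aps_ops and AudioPolicyCompatClient. Only the shape of the indirection is meant to match.

```cpp
#include <string>

// Hypothetical reduction of the aps_ops indirection; not AOSP types.
typedef int module_handle_t;

// Stand-in for AudioFlinger: hands out a new handle per loaded module.
struct FakeFlinger {
    int nextHandle = 1;
    std::string lastLoaded;
    module_handle_t loadHwModule(const char* name) {
        lastLoaded = name;
        return nextHandle++;
    }
};

// Stand-in for AudioSystem::get_audio_flinger(): one process-wide instance.
FakeFlinger& getFlinger() {
    static FakeFlinger af;
    return af;
}

// Stand-in for the aps_ops table: plain C function pointers.
struct PolicyServiceOps {
    module_handle_t (*load_hw_module)(void* service, const char* name);
};

// Counterpart of aps_load_hw_module(): ignores `service`, fetches the
// flinger and forwards the call.
static module_handle_t fake_load_hw_module(void* /*service*/, const char* name) {
    return getFlinger().loadHwModule(name);
}

// Counterpart of AudioPolicyCompatClient: policy code calls a C++ method,
// which dispatches through the ops table.
class CompatClient {
public:
    CompatClient(const PolicyServiceOps* ops, void* service)
        : ops_(ops), service_(service) {}
    module_handle_t loadHwModule(const char* name) {
        return ops_->load_hw_module(service_, name);
    }

private:
    const PolicyServiceOps* ops_;
    void* service_;
};
```

The policy code only ever sees CompatClient. Swapping in a different ops table changes which service receives the call without touching the policy manager.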

AudioHardware now lies straight ahead: the path from the APK down to the HAL is finally open.

4. AudioHardware

AudioHardware has two inner classes, AudioStreamOutALSA and AudioStreamInALSA. We are dealing with a playback problem, so AudioStreamOutALSA is all we need. Its code is clear, and the function that writes PCM data turned up quickly:


```cpp
AudioHardware::AudioStreamOutALSA::AudioStreamOutALSA() :
    mHardware(0), mPcm(0), mMixer(0), mRouteCtl(0),
    mStandby(true), mDevices(0), mChannels(AUDIO_HW_OUT_CHANNELS),
    mSampleRate(AUDIO_HW_OUT_SAMPLERATE), mBufferSize(AUDIO_HW_OUT_PERIOD_BYTES),
    mDriverOp(DRV_NONE), mStandbyCnt(0)
{
#ifdef DEBUG_ALSA_OUT
    if (alsa_out_fp == NULL)
        alsa_out_fp = fopen("/data/data/out.pcm", "a+");
    if (alsa_out_fp)
        ALOGI("------------>openfile success");
#endif
}

ssize_t AudioHardware::AudioStreamOutALSA::write(const void* buffer, size_t bytes)
{
    //...
#ifdef DEBUG_ALSA_OUT
    if (alsa_out_fp)
        fwrite(buffer, 1, bytes, alsa_out_fp);
#endif
    //...
    if (mStandby) {
        open_l();  // reopen the audio device
        mStandby = false;
    }
    //...
    ret = pcm_write(mPcm, (void*) p, bytes);
    //...
}
```

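This code also offers an easy way to check whether the PCM data is correct. With the DEBUG_ALSA_OUT switch enabled, the PCM stream is saved to "/data/data/out.pcm". Time to verify the data: I enabled the compile switch, got out.pcm, pulled it to the PC, and opened it in Cool Edit. It played back normally, so the problem is not in the decoder. What causes it, then? Let's study the playback flow, starting from write.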
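Listening in Cool Edit is one way to check the dump; a quick offline check is another. The sketch below reports the peak amplitude, so a completely silent dump can be told apart from one that carries audio. It assumes out.pcm holds interleaved signed 16-bit little-endian samples, which is an assumption about this HAL's output format. peakAmplitude() and looksSilent() are hypothetical helpers, and the default threshold of 16 is arbitrary.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <vector>

// Peak absolute amplitude of a raw dump, assuming signed 16-bit
// little-endian samples (adjust if the HAL writes another format).
int peakAmplitude(const std::vector<uint8_t>& raw) {
    int peak = 0;
    for (std::size_t i = 0; i + 1 < raw.size(); i += 2) {
        int16_t s = static_cast<int16_t>(static_cast<uint16_t>(raw[i] | (raw[i + 1] << 8)));
        int a = std::abs(static_cast<int>(s));
        if (a > peak) peak = a;
    }
    return peak;
}

// Treat the dump as silent when no sample exceeds a small threshold.
bool looksSilent(const std::vector<uint8_t>& raw, int threshold = 16) {
    return peakAmplitude(raw) <= threshold;
}
```

A non-silent dump only shows that the data reached the HAL. As the rest of this investigation shows, it says nothing about whether the driver and amplifier turned that data into sound.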

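First, the AudioStreamOutALSA constructor initializes mStandby to true. This variable evidently records whether the audio device is in standby. Whenever mStandby is true, write first calls open_l() to reopen the audio device and then calls pcm_write.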
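The mStandby logic can be modeled in isolation. In this sketch, FakePcmDevice is a hypothetical device that only counts opens, closes and written bytes. StreamOut loosely mirrors the write()/standby() pair of AudioStreamOutALSA. It starts in standby, reopens the device on the first write after standby, and closes the device on standby().

```cpp
#include <cstddef>

// Hypothetical device: records what the stream does to it.
struct FakePcmDevice {
    int opens = 0;
    int closes = 0;
    std::size_t bytesWritten = 0;
    void open() { ++opens; }
    void close() { ++closes; }
    void write(std::size_t bytes) { bytesWritten += bytes; }
};

// Sketch of the mStandby pattern (not the HAL's actual class).
class StreamOut {
public:
    explicit StreamOut(FakePcmDevice& dev) : dev_(dev) {}

    long write(const void* /*buffer*/, std::size_t bytes) {
        if (mStandby) {  // device was closed: reopen before writing
            dev_.open();
            mStandby = false;
        }
        dev_.write(bytes);
        return static_cast<long>(bytes);
    }

    void standby() {
        if (!mStandby) {  // close only a device that is actually open
            dev_.close();
            mStandby = true;
        }
    }

    bool inStandby() const { return mStandby; }

private:
    FakePcmDevice& dev_;
    bool mStandby = true;  // like the real constructor: start in standby
};
```

The point of the pattern is that every write following a standby() pays for a fresh open. That reopen is exactly the moment the silent playbacks cluster around.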

Now look at open_l():


```cpp
status_t AudioHardware::AudioStreamOutALSA::open_l()
{
    //...
    mPcm = mHardware->openPcmOut_l();
    if (mPcm == NULL) {
        return NO_INIT;
    }
    //...
}

struct pcm *AudioHardware::openPcmOut_l()
{
    //...
    mPcm = pcm_open(flags);
    //...
    if (!pcm_ready(mPcm)) {
        pcm_close(mPcm);
        //...
    }
    //...
    return mPcm;
}
```

open_l calls AudioHardware::openPcmOut_l, which in turn calls pcm_open. Let's look at pcm_open:


```c
struct pcm *pcm_open(unsigned flags)
{
    //... ...
    if (flags & PCM_IN) {
        dname = "/dev/snd/pcmC0D0c";
        channalFlags = -1;
        startCheckCount = 0;
    } else {
#ifdef SUPPORT_USB
        dname = "/dev/snd/pcmC1D0p";
#else
        dname = "/dev/snd/pcmC0D0p";
#endif
    }

    pcm->fd = open(dname, O_RDWR);
    if (pcm->fd < 0) {
        oops(pcm, errno, "cannot open device '%s'", dname);
        return pcm;
    }
    if (ioctl(pcm->fd, SNDRV_PCM_IOCTL_INFO, &info)) {
        oops(pcm, errno, "cannot get info - %s", dname);
        goto fail;
    }
    if (ioctl(pcm->fd, SNDRV_PCM_IOCTL_HW_PARAMS, &params)) {
        oops(pcm, errno, "cannot set hw params");
        goto fail;
    }
    if (ioctl(pcm->fd, SNDRV_PCM_IOCTL_SW_PARAMS, &sparams)) {
        oops(pcm, errno, "cannot set sw params");
        goto fail;
    }
    //... ...
    return pcm;  /* success; without this return, the success path would fall into fail: */

fail:
    close(pcm->fd);
    pcm->fd = -1;
    return pcm;
}
```
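The hard-coded names follow the standard ALSA device-node convention: /dev/snd/pcmC<card>D<device>, followed by p for playback or c for capture. The excerpt above uses card 0, device 0, or card 1 when SUPPORT_USB is defined. A small helper, hypothetical and not part of the HAL, makes the mapping explicit:

```cpp
#include <string>

// Builds an ALSA PCM device-node path: pcmC<card>D<device> + 'p' or 'c'.
std::string pcmNodePath(int card, int device, bool capture) {
    return "/dev/snd/pcmC" + std::to_string(card) + "D" + std::to_string(device) +
           (capture ? "c" : "p");
}
```

For example, pcmNodePath(1, 0, false) yields the USB playback node selected under SUPPORT_USB.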

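As expected, this is where the device node is opened and configured. I first added log output to AudioStreamOutALSA's write to see how the first playback differs from later ones. The test showed that every silent playback happened right after mStandby had become true. At that moment the audio device was reopened, yet the PCM data was correct. So let's see what sets mStandby to true.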


```cpp
status_t AudioHardware::AudioStreamOutALSA::standby()
{
    doStandby_l();
    return NO_ERROR;  // standby() returns status_t, so a return value is required
}

void AudioHardware::AudioStreamOutALSA::doStandby_l()
{
    if (!mStandby)
        mStandby = true;
    close_l();
}

void AudioHardware::AudioStreamOutALSA::close_l()
{
    if (mPcm) {
        mHardware->closePcmOut_l();
        mPcm = NULL;
    }
}
```

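We can now be sure that mStandby is set to true when standby is called. Would things work if the audio device were not reopened every time? As an experiment, I commented out the entire body of standby. With that change, only one sound failed to play after boot: the very first one. The problem of sound dropping out after every idle period was gone.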
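The experiment's outcome can be reproduced with a toy model. For illustration only, the model makes two assumptions. First, the first buffer written after the device is opened is lost (for example, because an amplifier is not yet powered). Second, the output goes into standby after a fixed idle timeout, measured here from the start of the previous play. simulatePlays() is hypothetical and returns, for each play request, whether it would be audible.

```cpp
#include <cstdint>
#include <vector>

// Toy model of the symptom (hypothetical, not driver or framework code).
// Assumption: the first buffer written after the output device is (re)opened
// is lost. With standby enabled, the device is closed after an idle gap
// longer than standbyTimeoutMs. Returns true for each audible play.
std::vector<bool> simulatePlays(const std::vector<int64_t>& playTimesMs,
                                int64_t standbyTimeoutMs, bool standbyEnabled) {
    std::vector<bool> audible;
    bool deviceOpen = false;  // the output starts in standby (closed)
    int64_t lastPlayMs = 0;
    for (int64_t t : playTimesMs) {
        if (standbyEnabled && deviceOpen && t - lastPlayMs > standbyTimeoutMs) {
            deviceOpen = false;  // idle too long: output went into standby
        }
        if (!deviceOpen) {
            deviceOpen = true;         // write() reopens the device...
            audible.push_back(false);  // ...and the first buffer is lost
        } else {
            audible.push_back(true);
        }
        lastPlayMs = t;
    }
    return audible;
}
```

Take play requests at 0, 5000, 5500, 6000 and 12000 ms with a 3000 ms timeout. With standby enabled, the result is silent, silent, audible, audible, silent, which is the pattern in the bug report. With standby disabled, only the very first play is silent, matching the experiment.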

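At this point the problem is effectively located: it is a kernel problem, not a framework one. Still, I wanted to understand why standby gets called every so often, and by whom. After some reverse tracing, I found the real caller of standby: AudioFlinger's playback thread. Tracing it again comes down to HAL knowledge, so I won't repeat the steps here.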


```cpp
void AudioFlinger::PlaybackThread::threadLoop_standby()
{
    ALOGV("Audio hardware entering standby, mixer %p, suspend count %d", this, mSuspended);
    mOutput->stream->common.standby(&mOutput->stream->common);
}

bool AudioFlinger::PlaybackThread::threadLoop()
{
    // ... ...
    while (!exitPending())
    {
        if (CC_UNLIKELY((!mActiveTracks.size() && systemTime() > standbyTime) || isSuspended())) {
            if (!mStandby) {
                threadLoop_standby();
                mStandby = true;
            }
            //... ...
        }
        //... ...
        standbyTime = systemTime() + standbyDelay;
        //... ...
    }
    // ... ...
}
```

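So standby is controlled by AudioFlinger. The thread enters standby once no AudioTrack is active and the current time has passed standbyTime, or when the thread is suspended. Since standbyTime = systemTime() + standbyDelay, the audio system goes into standby and closes the audio device once standbyDelay has elapsed with nothing playing. The last step is to find the value of standbyDelay.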
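The decision can be expressed as a single loop iteration driven by an explicit clock. This is a simplified model under stated assumptions. StandbyState and loopIteration() are hypothetical, and the deadline is assumed to move forward only while tracks are active. Everything the excerpt elides, such as mixing, sleeping and suspend handling, is left out.

```cpp
#include <cstdint>

typedef int64_t nsecs_t;

// Simplified, hypothetical model of the standby check in
// PlaybackThread::threadLoop(); time is passed in explicitly.
struct StandbyState {
    explicit StandbyState(nsecs_t delay) : standbyDelay(delay) {}
    nsecs_t standbyDelay;     // e.g. 3 s in nanoseconds
    nsecs_t standbyTime = 0;  // deadline after which an idle output may sleep
    bool standby = false;     // mirrors the thread's mStandby
    int standbyCalls = 0;     // how often threadLoop_standby() would run
};

void loopIteration(StandbyState& s, nsecs_t now, int activeTracks, bool suspended = false) {
    if ((activeTracks == 0 && now > s.standbyTime) || suspended) {
        if (!s.standby) {
            ++s.standbyCalls;  // threadLoop_standby(): the HAL closes the device
            s.standby = true;
        }
        return;
    }
    if (activeTracks > 0) {
        s.standby = false;                     // output is in use again
        s.standbyTime = now + s.standbyDelay;  // push the deadline forward
    }
}
```

The mStandby flag guarantees that the HAL's standby() is called only once per idle period, however many iterations the loop spends idle.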

The AudioFlinger::PlaybackThread constructor initializes standbyDelay with standbyDelay(AudioFlinger::mStandbyTimeInNsecs).

The member mStandbyTimeInNsecs is initialized the first time the AudioFlinger object is referenced, in onFirstRef():


```cpp
void AudioFlinger::onFirstRef()
{
    //... ...
    /* TODO: move all this work into an Init() function */
    char val_str[PROPERTY_VALUE_MAX] = { 0 };
    if (property_get("ro.audio.flinger_standbytime_ms", val_str, NULL) >= 0) {
        uint32_t int_val;
        if (1 == sscanf(val_str, "%u", &int_val)) {
            mStandbyTimeInNsecs = milliseconds(int_val);
            ALOGI("Using %u mSec as standby time.", int_val);
        } else {
            mStandbyTimeInNsecs = kDefaultStandbyTimeInNsecs;
            ALOGI("Using default %u mSec as standby time.",
                    (uint32_t)(mStandbyTimeInNsecs / 1000000));
        }
    }
    mMode = AUDIO_MODE_NORMAL;
}
```

If the property ro.audio.flinger_standbytime_ms is set, its value is used as the standby idle time (quite possibly added as OEM code). Otherwise, kDefaultStandbyTimeInNsecs is used. kDefaultStandbyTimeInNsecs is a constant of 3 s:


```cpp
static const nsecs_t kDefaultStandbyTimeInNsecs = seconds(3);
```
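The selection logic can be restated off-device. Here the property value is passed in as a plain string, with an empty string standing for "not set", since property_get() is only available on the device. standbyTimeFromProperty() is a hypothetical helper. The 3 s default and the "%u" parsing come from the code above.

```cpp
#include <cstdint>
#include <cstdio>
#include <string>

typedef int64_t nsecs_t;

// Same value as seconds(3) above.
constexpr nsecs_t kDefaultStandbyTimeInNsecs = 3LL * 1000 * 1000 * 1000;

// Hypothetical restatement of the onFirstRef() logic: parse milliseconds,
// fall back to the default when the value is missing or not a number.
nsecs_t standbyTimeFromProperty(const std::string& value) {
    unsigned int ms;
    if (std::sscanf(value.c_str(), "%u", &ms) == 1) {
        return static_cast<nsecs_t>(ms) * 1000 * 1000;  // milliseconds(ms)
    }
    return kDefaultStandbyTimeInNsecs;
}
```

Under this logic, a larger property value would only delay standby and therefore mask the symptom. The lost sound after the device reopens would still be there.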

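By studying the Android system code, we did not fix the problem ourselves, but we did pin down which layer it lives in and established that it is a driver bug. That completes the framework engineer's part. The issue was handed to the driver engineers, who found that it was caused by the PA (power amplifier) not being turned on.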

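Experience brings technique. Next time a similar problem comes up, we can capture the PCM directly in AudioHardware and check whether the decoded PCM stream is correct. That quickly shows where the problem lies: the MediaPlayer codec, the audio system, or the driver.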
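One practical addition to that workflow: a raw dump such as out.pcm carries no format information, so an audio tool has to be told the sample rate and channel count by hand. The sketch below builds a standard 44-byte RIFF/WAVE header that can be prepended to the dump. The sample rate and channel count must match what the HAL actually writes, for example AUDIO_HW_OUT_SAMPLERATE and AUDIO_HW_OUT_CHANNELS, whose values are not shown in this article. wavHeader() is a hypothetical helper.

```cpp
#include <cstdint>
#include <vector>

// Appends `bytes` bytes of x in little-endian order.
static void putLE(std::vector<uint8_t>& v, uint32_t x, int bytes) {
    for (int i = 0; i < bytes; ++i) v.push_back(static_cast<uint8_t>(x >> (8 * i)));
}

// Canonical 44-byte WAV header for 16-bit PCM data of `dataBytes` bytes.
std::vector<uint8_t> wavHeader(uint32_t dataBytes, uint32_t sampleRate, uint16_t channels) {
    const uint16_t bitsPerSample = 16;
    const uint32_t byteRate = sampleRate * channels * bitsPerSample / 8;
    const uint16_t blockAlign = static_cast<uint16_t>(channels * bitsPerSample / 8);
    std::vector<uint8_t> h;
    h.insert(h.end(), {'R', 'I', 'F', 'F'});
    putLE(h, 36 + dataBytes, 4);  // RIFF chunk size
    h.insert(h.end(), {'W', 'A', 'V', 'E', 'f', 'm', 't', ' '});
    putLE(h, 16, 4);              // fmt chunk size for PCM
    putLE(h, 1, 2);               // audio format 1 = linear PCM
    putLE(h, channels, 2);
    putLE(h, sampleRate, 4);
    putLE(h, byteRate, 4);
    putLE(h, blockAlign, 2);
    putLE(h, bitsPerSample, 2);
    h.insert(h.end(), {'d', 'a', 't', 'a'});
    putLE(h, dataBytes, 4);       // size of the PCM payload that follows
    return h;
}
```

Writing wavHeader(sizeOfDump, rate, channels) followed by the bytes of out.pcm gives a file that most audio editors open directly.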

Reposted from: http://blog.csdn.net/special_lin/article/details/12849637