DBInputFormat用法

输入的数据库数据:

代码:

package hadoop_2_6_0;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

import java.sql.PreparedStatement;

import java.sql.ResultSet;

import java.sql.SQLException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.io.Writable;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.db.DBConfiguration;

import org.apache.hadoop.mapreduce.lib.db.DBInputFormat;

import org.apache.hadoop.mapreduce.lib.db.DBWritable;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class DBInputFormatTest {

public static class MyMapper extends

Mapper<LongWritable, MyDBWritable, LongWritable, Text> {

final Text v2 = new Text();

protected void map(LongWritable key, MyDBWritable value,Mapper<LongWritable, MyDBWritable, LongWritable, Text>.Context context)throws InterruptedException, IOException {

v2.set(value.toString());

context.write(key, v2);

}

}

public static class MyDBWritable implements Writable,DBWritable{

int id;

String name;

public void write(PreparedStatement statement) throws SQLException {

statement.setInt(1, id);

statement.setString(2, name);

}

public void readFields(ResultSet resultSet) throws SQLException {

this.id=resultSet.getInt(1);

this.name=resultSet.getString(2);

}

public void write(DataOutput out) throws IOException {

out.write(id);

out.writeUTF(name);

}

public void readFields(DataInput in) throws IOException {

this.id=in.readInt();

this.name=in.readUTF();

}

public String toString(){

return "MyDBWritable[id="+id+",\t"+"name="+name+"]";

}

}

public static void main(String[] args) throws Exception {

final Configuration conf = new Configuration();

DBConfiguration.configureDB(conf, "com.mysql.jdbc.Driver", "jdbc:mysql://192.168.1.1:3306/oled", "root", "mysqladmin");

final Job job = Job.getInstance(conf, DBInputFormatTest.class.getSimpleName());

// 1.1

//FileInputFormat.setInputPaths(job,"hdfs://192.168.1.10:9000/input/hehe");

job.setInputFormatClass(DBInputFormat.class);

// 1.2

job.setMapperClass(MyMapper.class);

// 1.3

//job.setPartitionerClass(HashPartitioner.class);

//job.setNumReduceTasks(1);

// 1.4

// 1.5

// 2.2

job.setOutputKeyClass(LongWritable.class);

job.setOutputValueClass(Text.class);

//

DBInputFormat.setInput(job, MyDBWritable.class, "select id,name from DB", "select count(1) from DB");

// 2.3

FileOutputFormat.setOutputPath(job, new Path("hdfs://192.168.1.100:9000/DBout1"));

//job.setOutputFormatClass(TextOutputFormat.class);

job.setJarByClass(DBInputFormatTest.class);

job.waitForCompletion(true);

}

}

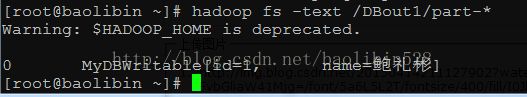

输出:

[root@baolibin ~]# hadoop fs -text /DBout1/part-* Warning: $HADOOP_HOME is deprecated. 0 MyDBWritable[id=1, name=鲍礼彬]

Console输出:

15/04/14 21:10:32 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable 15/04/14 21:10:32 WARN mapred.JobClient: Use GenericOptionsParser for parsing the arguments. Applications should implement Tool for the same. 15/04/14 21:10:32 WARN mapred.JobClient: No job jar file set. User classes may not be found. See JobConf(Class) or JobConf#setJar(String). 15/04/14 21:10:34 INFO mapred.JobClient: Running job: job_local486772134_0001 15/04/14 21:10:34 INFO mapred.LocalJobRunner: Waiting for map tasks 15/04/14 21:10:34 INFO mapred.LocalJobRunner: Starting task: attempt_local486772134_0001_m_000000_0 15/04/14 21:10:34 INFO mapred.Task: Using ResourceCalculatorPlugin : null 15/04/14 21:10:34 INFO mapred.MapTask: Processing split: org.apache.hadoop.mapreduce.lib.db.DBInputFormat$DBInputSplit@4abf053f 15/04/14 21:10:34 INFO mapred.MapTask: io.sort.mb = 100 15/04/14 21:10:34 INFO mapred.MapTask: data buffer = 79691776/99614720 15/04/14 21:10:34 INFO mapred.MapTask: record buffer = 262144/327680 15/04/14 21:10:34 INFO mapred.MapTask: Starting flush of map output 15/04/14 21:10:34 INFO mapred.MapTask: Finished spill 0 15/04/14 21:10:34 INFO mapred.Task: Task:attempt_local486772134_0001_m_000000_0 is done. And is in the process of commiting 15/04/14 21:10:34 INFO mapred.LocalJobRunner: 15/04/14 21:10:34 INFO mapred.Task: Task 'attempt_local486772134_0001_m_000000_0' done. 15/04/14 21:10:34 INFO mapred.LocalJobRunner: Finishing task: attempt_local486772134_0001_m_000000_0 15/04/14 21:10:34 INFO mapred.LocalJobRunner: Map task executor complete. 15/04/14 21:10:34 INFO mapred.Task: Using ResourceCalculatorPlugin : null 15/04/14 21:10:34 INFO mapred.LocalJobRunner: 15/04/14 21:10:34 INFO mapred.Merger: Merging 1 sorted segments 15/04/14 21:10:35 INFO mapred.Merger: Down to the last merge-pass, with 1 segments left of total size: 47 bytes 15/04/14 21:10:35 INFO mapred.LocalJobRunner: 15/04/14 21:10:35 INFO mapred.JobClient: map 100% reduce 0% 15/04/14 21:10:35 INFO mapred.Task: Task:attempt_local486772134_0001_r_000000_0 is done. And is in the process of commiting 15/04/14 21:10:35 INFO mapred.LocalJobRunner: 15/04/14 21:10:35 INFO mapred.Task: Task attempt_local486772134_0001_r_000000_0 is allowed to commit now 15/04/14 21:10:35 INFO output.FileOutputCommitter: Saved output of task 'attempt_local486772134_0001_r_000000_0' to hdfs://192.168.1.100:9000/DBout1 15/04/14 21:10:35 INFO mapred.LocalJobRunner: reduce > reduce 15/04/14 21:10:35 INFO mapred.Task: Task 'attempt_local486772134_0001_r_000000_0' done. 15/04/14 21:10:36 INFO mapred.JobClient: map 100% reduce 100% 15/04/14 21:10:36 INFO mapred.JobClient: Job complete: job_local486772134_0001 15/04/14 21:10:36 INFO mapred.JobClient: Counters: 18 15/04/14 21:10:36 INFO mapred.JobClient: File Output Format Counters 15/04/14 21:10:36 INFO mapred.JobClient: Bytes Written=37 15/04/14 21:10:36 INFO mapred.JobClient: File Input Format Counters 15/04/14 21:10:36 INFO mapred.JobClient: Bytes Read=0 15/04/14 21:10:36 INFO mapred.JobClient: FileSystemCounters 15/04/14 21:10:36 INFO mapred.JobClient: FILE_BYTES_READ=295 15/04/14 21:10:36 INFO mapred.JobClient: FILE_BYTES_WRITTEN=139062 15/04/14 21:10:36 INFO mapred.JobClient: HDFS_BYTES_WRITTEN=37 15/04/14 21:10:36 INFO mapred.JobClient: Map-Reduce Framework 15/04/14 21:10:36 INFO mapred.JobClient: Map output materialized bytes=51 15/04/14 21:10:36 INFO mapred.JobClient: Map input records=1 15/04/14 21:10:36 INFO mapred.JobClient: Reduce shuffle bytes=0 15/04/14 21:10:36 INFO mapred.JobClient: Spilled Records=2 15/04/14 21:10:36 INFO mapred.JobClient: Map output bytes=43 15/04/14 21:10:36 INFO mapred.JobClient: Total committed heap usage (bytes)=323878912 15/04/14 21:10:36 INFO mapred.JobClient: Combine input records=0 15/04/14 21:10:36 INFO mapred.JobClient: SPLIT_RAW_BYTES=78 15/04/14 21:10:36 INFO mapred.JobClient: Reduce input records=1 15/04/14 21:10:36 INFO mapred.JobClient: Reduce input groups=1 15/04/14 21:10:36 INFO mapred.JobClient: Combine output records=0 15/04/14 21:10:36 INFO mapred.JobClient: Reduce output records=1 15/04/14 21:10:36 INFO mapred.JobClient: Map output records=1

解析:

指明驱动、要访问的数据库、用户名、密码:

DBConfiguration.configureDB(conf, "com.mysql.jdbc.Driver", "jdbc:mysql://192.168.1.1:3306/oled", "root", "mysqladmin");

设置操作语句:

DBInputFormat.setInput(job, MyDBWritable.class, "select id,name from DB", "select count(1) from DB");

根据数据库字段,自定义数据类型,该类实现接口Writable、DBWritable。

public static class MyDBWritable implements Writable,DBWritable