TensorFlow tf.keras.layers.LSTM

Parameters

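| Parameter | Description |
|---|---|
| units | Dimensionality of the output space |
| input_shape | `(timestep, input_dim)`; `timestep` may be set to `None`, in which case it is determined by the input; `input_dim` depends on the data |
| activation | Activation function; default `tanh` |
| recurrent_activation | Activation function for the recurrent step; default `sigmoid` |
| use_bias | Whether the layer uses a bias vector; default `True` |
| kernel_initializer | Initializer for the input weight matrix; default `glorot_uniform` |
| recurrent_initializer | Initializer for the recurrent weight matrix; default `orthogonal` |
| bias_initializer | Initializer for the bias vector; default `zeros` |
| unit_forget_bias | If `True`, add 1 to the forget-gate bias at initialization; default `True` |
| kernel_regularizer | Regularizer applied to the input weight matrix; default `None` |
| recurrent_regularizer | Regularizer applied to the recurrent weight matrix; default `None` |
| bias_regularizer | Regularizer applied to the bias vector; default `None` |
| activity_regularizer | Regularizer applied to the layer output; default `None` |
| kernel_constraint | Constraint applied to the input weight matrix; default `None` |
| recurrent_constraint | Constraint applied to the recurrent weight matrix; default `None` |
| bias_constraint | Constraint applied to the bias vector; default `None` |
| dropout | Fraction of units to drop for the input transformation; default `0.0` |
| recurrent_dropout | Fraction of units to drop for the recurrent-state transformation; default `0.0` |
| implementation | Implementation mode, 1 or 2; default `2` |
| return_sequences | Whether to return the full output sequence or only the last output; default `False` |
| return_state | Whether to return the last state in addition to the output; default `False` |
| go_backwards | If `True`, process the input sequence backwards; default `False` |
| stateful | If `True`, the last state of each sample in a batch is used as the initial state of the sample at the same index in the next batch; default `False` |
| unroll | If `True`, the network is unrolled; otherwise a symbolic loop is used; default `False` |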

Example

keras.layers.LSTM(units=200, input_shape=(None, 1), return_sequences=True)

init

    __init__(
        units,
        activation='tanh',
        recurrent_activation='sigmoid',
        use_bias=True,
        kernel_initializer='glorot_uniform',
        recurrent_initializer='orthogonal',
        bias_initializer='zeros',
        unit_forget_bias=True,
        kernel_regularizer=None,
        recurrent_regularizer=None,
        bias_regularizer=None,
        activity_regularizer=None,
        kernel_constraint=None,
        recurrent_constraint=None,
        bias_constraint=None,
        dropout=0.0,
        recurrent_dropout=0.0,
        implementation=2,
        return_sequences=False,
        return_state=False,
        go_backwards=False,
        stateful=False,
        time_major=False,
        unroll=False,
        **kwargs
    )

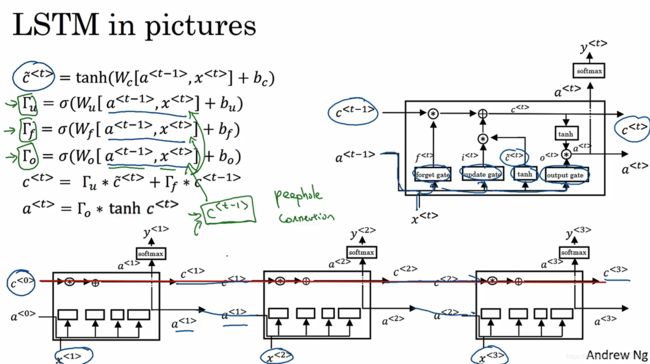

How it works

$\hat{c}^{<t>}$ is the candidate memory state; its weights and bias hold $(\text{units} \times \text{features} + \text{units} \times \text{units} + \text{bias})$ parameters.

$\Gamma_u$ is the update gate, with $(\text{units} \times \text{features} + \text{units} \times \text{units} + \text{bias})$ parameters.

$\Gamma_f$ is the forget gate, with $(\text{units} \times \text{features} + \text{units} \times \text{units} + \text{bias})$ parameters.

$\Gamma_o$ is the output gate, with $(\text{units} \times \text{features} + \text{units} \times \text{units} + \text{bias})$ parameters.
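For reference, the four blocks above can be written out as one LSTM step. This is a sketch in a common course-style notation, not taken from the original text: the symbols $W_*$, $b_*$, $a^{<t>}$ (hidden state), $x^{<t>}$ (input) and $\odot$ (element-wise product) are assumptions introduced here for illustration.

$$
\begin{aligned}
\hat{c}^{<t>} &= \tanh\left(W_c\,[a^{<t-1>}, x^{<t>}] + b_c\right) \\
\Gamma_u &= \sigma\left(W_u\,[a^{<t-1>}, x^{<t>}] + b_u\right) \\
\Gamma_f &= \sigma\left(W_f\,[a^{<t-1>}, x^{<t>}] + b_f\right) \\
\Gamma_o &= \sigma\left(W_o\,[a^{<t-1>}, x^{<t>}] + b_o\right) \\
c^{<t>} &= \Gamma_u \odot \hat{c}^{<t>} + \Gamma_f \odot c^{<t-1>} \\
a^{<t>} &= \Gamma_o \odot \tanh\left(c^{<t>}\right)
\end{aligned}
$$

Under these assumptions the concatenation $[a^{<t-1>}, x^{<t>}]$ has length $\text{units} + \text{features}$, so each $W_*$ has $\text{units} \times (\text{units} + \text{features})$ entries and each $b_*$ has $\text{units}$ entries.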

So the total number of LSTM parameters is $(\text{units} \times \text{features} + \text{units} \times \text{units} + \text{units}) \times 4$, since each bias vector has one entry per unit.
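The count can be checked with a few lines of plain Python. This is a minimal sketch of the formula only; the helper name `lstm_param_count` and its `use_bias` flag are hypothetical names introduced here, not part of the Keras API. The values `units=200` and `features=1` are taken from the example layer earlier in this post.

```python
def lstm_param_count(units: int, features: int, use_bias: bool = True) -> int:
    """Count the trainable parameters of one LSTM layer (hypothetical helper)."""
    input_weights = units * features    # weights applied to the input x
    recurrent_weights = units * units   # weights applied to the previous hidden state
    bias = units if use_bias else 0     # one bias entry per unit
    per_block = input_weights + recurrent_weights + bias
    # Four blocks: candidate memory, update gate, forget gate, output gate.
    return 4 * per_block


total = lstm_param_count(units=200, features=1)
print(total)  # 161600
```

For a layer built with the same `units` and input feature size, the number reported by Keras (for example in `model.summary()`) should match this value, assuming the default `use_bias=True`.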

References:

Official documentation

https://blog.csdn.net/jiangpeng59/article/details/77646186

https://www.zhihu.com/question/41949741?sort=created

https://stackoverflow.com/questions/38080035/how-to-calculate-the-number-of-parameters-of-an-lstm-network/56614978#56614978

https://stackoverflow.com/questions/46584171/why-does-the-first-lstm-in-a-keras-model-have-more-params-than-the-subsequent-on