Introduction

Normalization layers come in four main variants: BatchNorm (2015), LayerNorm (2016), InstanceNorm (2016), and GroupNorm (2018).

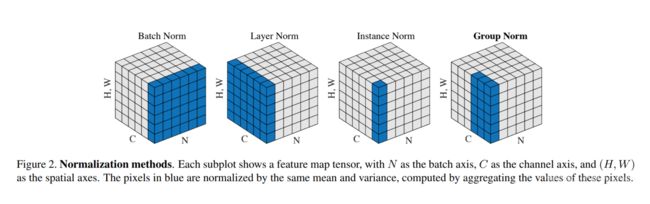

Let the shape of the input image be [N, C, H, W]. The main differences among these methods are:

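BatchNorm: normalizes along the batch direction, computing the mean and variance over N, H, W for each channel. Its main drawback is sensitivity to batch size: the statistics are computed on a single batch, so when the batch is too small they are not representative of the overall data distribution.

LayerNorm: normalizes along the channel direction, computing the mean and variance over C, H, W for each sample. It is particularly effective for RNNs.

InstanceNorm: normalizes within a single sample and a single channel, computing the mean and variance over H, W. It is used in style transfer: the generated result depends mainly on the individual image instance, so normalizing over the whole batch is unsuitable there. Normalizing over H, W can speed up convergence while keeping the image instances independent of one another.

GroupNorm: splits the channels into G groups and normalizes within each group, computing the mean and variance over (C//G), H, W. The statistics do not depend on the batch size, so the method is not constrained by it.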
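The four reduction patterns described here can be checked numerically. The following is a minimal sketch, assuming a reasonably recent PyTorch; the helper `normalize` and the sizes `N, C, H, W, G` are illustrative names chosen for this example, not part of any API. It standardizes the same tensor over each set of axes and compares the result with the matching function in `torch.nn.functional`, with no affine parameters involved.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
N, C, H, W, G = 4, 6, 5, 5, 3          # illustrative sizes; G = number of groups
x = torch.randn(N, C, H, W)

def normalize(t, dims, eps=1e-5):
    """Hypothetical helper: standardize t with the mean/variance taken over dims."""
    mean = t.mean(dim=dims, keepdim=True)
    var = t.var(dim=dims, unbiased=False, keepdim=True)   # biased variance
    return (t - mean) / torch.sqrt(var + eps)

batch_norm = normalize(x, (0, 2, 3))       # one statistic per channel
layer_norm = normalize(x, (1, 2, 3))       # one statistic per sample
instance_norm = normalize(x, (2, 3))       # one statistic per (sample, channel)
# GroupNorm: split C into G groups, then reduce over (C // G, H, W)
group_norm = normalize(x.reshape(N, G, C // G, H, W), (2, 3, 4)).reshape(N, C, H, W)

checks = {
    "BatchNorm": torch.allclose(
        batch_norm, F.batch_norm(x, None, None, training=True), atol=1e-5),
    "LayerNorm": torch.allclose(
        layer_norm, F.layer_norm(x, (C, H, W)), atol=1e-5),
    "InstanceNorm": torch.allclose(
        instance_norm, F.instance_norm(x), atol=1e-5),
    "GroupNorm": torch.allclose(
        group_norm, F.group_norm(x, G), atol=1e-5),
}
for name, ok in checks.items():
    print(name, ok)
```

Only the `dims` argument changes between the four variants, which is the whole difference between the methods at the level of the statistics.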

1. BatchNorm in detail

torch.nn.BatchNorm1d(num_features, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

torch.nn.BatchNorm2d(num_features, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

torch.nn.BatchNorm3d(num_features, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

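Parameters:

num_features: the number of features (channels) C, for an expected input of size 'N x C [x L]'.

eps: a value added to the denominator for numerical stability (so that it can never reach or approach 0). Default: 1e-5.

momentum: the momentum used to update the running mean and running variance. Default: 0.1.

affine: boolean; when True, the layer has learnable affine parameters.

track_running_stats: boolean; when True, the layer tracks the running mean and variance during training.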

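Formula:

$$y = \frac{x - \mathrm{E}[x]}{\sqrt{\mathrm{Var}[x] + \epsilon}} \cdot \gamma + \beta$$

Here E[x] and Var[x] are computed per channel over the batch and all spatial dimensions, ε is eps, and γ and β are the learnable affine parameters (present when affine=True).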
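To make the computation concrete, here is a small sketch that reproduces the training-mode forward pass of `nn.BatchNorm2d` by hand: subtract the per-channel batch mean, divide by the square root of the biased batch variance plus `eps`, then scale by `weight` and shift by `bias`. The tensor sizes and the hand-picked `weight`/`bias` values are arbitrary choices for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(8, 3, 4, 4)
bn = nn.BatchNorm2d(3)                     # modules start in training mode

with torch.no_grad():
    # Arbitrary non-trivial gamma/beta so the affine step is visible.
    bn.weight.copy_(torch.tensor([1.0, 2.0, 0.5]))
    bn.bias.copy_(torch.tensor([0.0, -1.0, 3.0]))

    # Per-channel statistics over N, H, W (biased variance in the forward pass).
    mean = x.mean(dim=(0, 2, 3), keepdim=True)
    var = x.var(dim=(0, 2, 3), unbiased=False, keepdim=True)

    gamma = bn.weight.view(1, -1, 1, 1)
    beta = bn.bias.view(1, -1, 1, 1)
    manual = (x - mean) / torch.sqrt(var + bn.eps) * gamma + beta

    out = bn(x)

matches = torch.allclose(out, manual, atol=1e-5)
print(matches)
```

With `affine=False` the module has no `weight` or `bias`, and the last step of the manual computation simply drops out.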

# Example code

import torch

import torch.nn as nn

"""

BatchNorm1d (temporal)

Input: (N, C) or (N, C, L)

Output: (N, C) or (N, C, L) (same shape as input)

"""

# input = torch.randn(2, 10, 100)

input = torch.randn(2, 10)

# with learnable parameters

m1 = nn.BatchNorm1d(10)

# without learnable parameters

m2 = nn.BatchNorm1d(10, affine=False)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

"""

BatchNorm2d (spatial)

Input: (N, C, H, W)

Output: (N, C, H, W) (same shape as input)

"""

input = torch.randn(2, 10, 35, 45)

# with learnable parameters

m1 = nn.BatchNorm2d(10)

# without learnable parameters

m2 = nn.BatchNorm2d(10, affine=False)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

"""

BatchNorm3d (spatio-temporal)

Input: (N, C, D, H, W)

Output: (N, C, D, H, W) (same shape as input)

"""

input = torch.randn(2, 10, 20, 35, 45)

# with learnable parameters

m1 = nn.BatchNorm3d(10)

# without learnable parameters

m2 = nn.BatchNorm3d(10, affine=False)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

# Output

torch.Size([2, 10])

torch.Size([2, 10])

torch.Size([2, 10, 35, 45])

torch.Size([2, 10, 35, 45])

torch.Size([2, 10, 20, 35, 45])

torch.Size([2, 10, 20, 35, 45])
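The shape checks above do not show what `momentum` and `track_running_stats` actually do. The sketch below, with arbitrary tensor sizes, runs one training step and compares the stored running statistics with the exponential-moving-average update `running = (1 - momentum) * running + momentum * batch_stat`, then confirms that in eval mode the layer normalizes with those stored statistics instead of the batch statistics. It relies on PyTorch's documented behaviour that the running variance is updated with the unbiased batch variance.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(16, 4, 8, 8) * 3.0 + 2.0     # data with mean ~2 and std ~3
bn = nn.BatchNorm2d(4, momentum=0.1)         # running_mean starts at 0, running_var at 1

with torch.no_grad():
    bn.train()
    bn(x)                                    # one training step updates the buffers

    batch_mean = x.mean(dim=(0, 2, 3))
    batch_var = x.var(dim=(0, 2, 3), unbiased=True)   # unbiased for the running estimate
    expected_mean = (1 - 0.1) * torch.zeros(4) + 0.1 * batch_mean
    expected_var = (1 - 0.1) * torch.ones(4) + 0.1 * batch_var

    mean_ok = torch.allclose(bn.running_mean, expected_mean, atol=1e-4)
    var_ok = torch.allclose(bn.running_var, expected_var, atol=1e-4)

    # In eval mode the stored statistics are used, so a batch of one is fine.
    bn.eval()
    single = x[:1]
    rm = bn.running_mean.view(1, -1, 1, 1)
    rv = bn.running_var.view(1, -1, 1, 1)
    manual_eval = (single - rm) / torch.sqrt(rv + bn.eps)   # weight=1, bias=0 at init
    eval_ok = torch.allclose(bn(single), manual_eval, atol=1e-5)

print(mean_ok, var_ok, eval_ok)
```

After a single step the running statistics are still far from the true data statistics, which is why evaluation results early in training can look worse than training-mode results.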

2. GroupNorm in detail

torch.nn.GroupNorm(num_groups, num_channels, eps=1e-05, affine=True)

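Parameters:

num_groups: the number of groups to split the channels into.

num_channels: the number of channels C, for an expected input of size 'N x C x *'. It must be divisible by num_groups.

eps: a value added to the denominator for numerical stability (so that it can never reach or approach 0). Default: 1e-5.

affine: boolean; when True, the layer has learnable affine parameters.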

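Formula:

$$y = \frac{x - \mathrm{E}[x]}{\sqrt{\mathrm{Var}[x] + \epsilon}} \cdot \gamma + \beta$$

Here E[x] and Var[x] are computed separately for each sample and each group, over the (C//G) channels of the group and all spatial dimensions; γ and β are per-channel learnable parameters (present when affine=True).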
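As an illustration of the grouping step, the following sketch reproduces `nn.GroupNorm` by hand: reshape the channels into groups, standardize within each group, restore the original shape, and apply the per-channel affine parameters. The sizes `N, C, H, W, G` are arbitrary values for the example.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
N, C, H, W, G = 2, 6, 10, 10, 3            # illustrative sizes; C must be divisible by G
x = torch.randn(N, C, H, W)
gn = nn.GroupNorm(G, C)

with torch.no_grad():
    # Flatten each group's (C // G) * H * W values into one axis.
    grouped = x.reshape(N, G, -1)
    mean = grouped.mean(dim=2, keepdim=True)
    var = grouped.var(dim=2, unbiased=False, keepdim=True)
    normed = ((grouped - mean) / torch.sqrt(var + gn.eps)).reshape(N, C, H, W)

    # The affine parameters are per channel, not per group.
    manual = normed * gn.weight.view(1, C, 1, 1) + gn.bias.view(1, C, 1, 1)
    matches = torch.allclose(gn(x), manual, atol=1e-5)

print(matches)
print(gn.weight.shape)                     # torch.Size([6])
```

No batch axis appears in the reduction, so the output for one sample is unaffected by whatever else is in the batch.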

# Example code

"""

GroupNorm

Input: (N, C, *) where C=num_channels

Output: (N, C, *) (same shape as input)

"""

input = torch.randn(2, 6, 10, 10)

# separate 6 channels into 3 groups

m1 = nn.GroupNorm(3, 6)

# Separate 6 channels into 6 groups (equivalent with InstanceNorm)

m2 = nn.GroupNorm(6, 6)

# Put all 6 channels into a single group (equivalent with LayerNorm)

m3 = nn.GroupNorm(1, 6)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

output3 = m3(input)

print(output3.shape)

# Output

torch.Size([2, 6, 10, 10])

torch.Size([2, 6, 10, 10])

torch.Size([2, 6, 10, 10])
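The comments in the example state that one group per channel behaves like InstanceNorm and a single group behaves like LayerNorm. A quick sketch to check this on freshly constructed modules is shown below. The equivalence is only claimed here at initialization, where every affine weight is 1 and every bias is 0: after training the layers can differ, because GroupNorm learns one scale and shift per channel while LayerNorm learns one per element.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(2, 6, 10, 10)

with torch.no_grad():
    # 6 channels in 6 groups: statistics per (sample, channel), like InstanceNorm.
    gn_per_channel = nn.GroupNorm(6, 6)(x)
    instance = nn.InstanceNorm2d(6)(x)

    # 6 channels in 1 group: statistics per sample over C, H, W, like LayerNorm.
    gn_single_group = nn.GroupNorm(1, 6)(x)
    layer = nn.LayerNorm([6, 10, 10])(x)

same_as_instance = torch.allclose(gn_per_channel, instance, atol=1e-5)
same_as_layer = torch.allclose(gn_single_group, layer, atol=1e-5)
print(same_as_instance, same_as_layer)
```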

3. InstanceNorm in detail

torch.nn.InstanceNorm1d(num_features, eps=1e-05, momentum=0.1, affine=False, track_running_stats=False)

torch.nn.InstanceNorm2d(num_features, eps=1e-05, momentum=0.1, affine=False, track_running_stats=False)

torch.nn.InstanceNorm3d(num_features, eps=1e-05, momentum=0.1, affine=False, track_running_stats=False)

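Parameters:

num_features: the number of features (channels) C, for an expected input of size 'N x C [x L]'.

eps: a value added to the denominator for numerical stability (so that it can never reach or approach 0). Default: 1e-5.

momentum: the momentum used to update the running mean and running variance. Default: 0.1.

affine: boolean; when True, the layer has learnable affine parameters. Unlike BatchNorm, the default is False.

track_running_stats: boolean; when True, the layer tracks the running mean and variance during training. Unlike BatchNorm, the default is False.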

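Formula:

$$y = \frac{x - \mathrm{E}[x]}{\sqrt{\mathrm{Var}[x] + \epsilon}} \cdot \gamma + \beta$$

Here E[x] and Var[x] are computed separately for each sample and each channel, over the spatial dimensions only; γ and β are per-channel learnable parameters (present when affine=True).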
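Because the statistics are taken per sample and per channel, the output for one image should not depend on the other images in the batch. The sketch below, with arbitrary tensor sizes, checks this by normalizing the first sample once inside a batch and once alone, and contrasts it with BatchNorm in training mode, where the two results differ.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(4, 3, 8, 8)
inorm = nn.InstanceNorm2d(3)     # defaults: affine=False, track_running_stats=False
bnorm = nn.BatchNorm2d(3)        # training mode, so batch statistics are used

with torch.no_grad():
    # Manual InstanceNorm: statistics over H, W for each (sample, channel).
    mean = x.mean(dim=(2, 3), keepdim=True)
    var = x.var(dim=(2, 3), unbiased=False, keepdim=True)
    manual = (x - mean) / torch.sqrt(var + inorm.eps)
    manual_ok = torch.allclose(inorm(x), manual, atol=1e-5)

    # First sample normalized within the batch vs. on its own.
    in_independent = torch.allclose(inorm(x)[:1], inorm(x[:1]), atol=1e-6)
    bn_independent = torch.allclose(bnorm(x)[:1], bnorm(x[:1]), atol=1e-3)

print(manual_ok, in_independent, bn_independent)
```

This per-instance independence is the property the style-transfer argument in the introduction relies on.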

# Example code

"""

InstanceNorm1d

Input: (N, C, L)

Output: (N, C, L) (same shape as input)

"""

input = torch.randn(20, 100, 40)

# without learnable parameters

m1 = nn.InstanceNorm1d(100)

# with learnable parameters

m2 = nn.InstanceNorm1d(100, affine=True)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

"""

InstanceNorm2d

Input: (N, C, H, W)

Output: (N, C, H, W) (same shape as input)

"""

input = torch.randn(20, 100, 35, 45)

# without learnable parameters

m1 = nn.InstanceNorm2d(100)

# with learnable parameters

m2 = nn.InstanceNorm2d(100, affine=True)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

"""

InstanceNorm3d

Input: (N, C, D, H, W)

Output: (N, C, D, H, W) (same shape as input)

"""

input = torch.randn(20, 100, 35, 45, 10)

# without learnable parameters

m1 = nn.InstanceNorm3d(100)

# with learnable parameters

m2 = nn.InstanceNorm3d(100, affine=True)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

# Output

torch.Size([20, 100, 40])

torch.Size([20, 100, 40])

torch.Size([20, 100, 35, 45])

torch.Size([20, 100, 35, 45])

torch.Size([20, 100, 35, 45, 10])

torch.Size([20, 100, 35, 45, 10])

4. LayerNorm in detail

torch.nn.LayerNorm(normalized_shape, eps=1e-05, elementwise_affine=True)

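Parameters:

normalized_shape: the shape of the trailing input dimensions to normalize over, for an expected input of size

[∗ × normalized_shape[0] × normalized_shape[1] × … × normalized_shape[−1]]

If a single integer is given, normalization is applied over the last dimension, which must have that size.

eps: a value added to the denominator for numerical stability (so that it can never reach or approach 0). Default: 1e-5.

elementwise_affine: boolean; when True, the layer has learnable affine parameters, one per normalized element.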

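Formula:

$$y = \frac{x - \mathrm{E}[x]}{\sqrt{\mathrm{Var}[x] + \epsilon}} \cdot \gamma + \beta$$

Here E[x] and Var[x] are computed over the trailing dimensions given by normalized_shape; γ and β are learnable parameters of shape normalized_shape (present when elementwise_affine=True).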
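The role of `normalized_shape` is easiest to see by redoing the computation by hand. The following sketch, using an arbitrary input size, normalizes over the last two dimensions and compares the result with `nn.LayerNorm([10, 10])`; it also prints the shape of the affine weight, which matches `normalized_shape` rather than the channel count.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(20, 5, 10, 10)
ln = nn.LayerNorm([10, 10])                # normalize over the last two dimensions

with torch.no_grad():
    dims = (-2, -1)                        # the axes covered by normalized_shape
    mean = x.mean(dim=dims, keepdim=True)
    var = x.var(dim=dims, unbiased=False, keepdim=True)
    # weight and bias have shape [10, 10] and broadcast over the leading axes.
    manual = (x - mean) / torch.sqrt(var + ln.eps) * ln.weight + ln.bias
    matches = torch.allclose(ln(x), manual, atol=1e-5)

print(matches)
print(ln.weight.shape)                     # torch.Size([10, 10])
```

Passing `input.size()[1:]` as `normalized_shape` instead would extend the reduction to C, H, W, which is the per-sample variant described in the introduction.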

# Example code

"""

LayerNorm

Input: (N, *)

Output: (N, *) (same shape as input)

"""

input = torch.randn(20, 5, 10, 10)

# with learnable parameters

m1 = nn.LayerNorm(input.size()[1:])

# without learnable parameters

m2 = nn.LayerNorm(input.size()[1:], elementwise_affine=False)

# normalize over last two dimensions

m3 = nn.LayerNorm([10, 10])

# normalize over last dimension of size 10

m4 = nn.LayerNorm(10)

output1 = m1(input)

print(output1.shape)

output2 = m2(input)

print(output2.shape)

output3 = m3(input)

print(output3.shape)

output4 = m4(input)

print(output4.shape)

# Output

torch.Size([20, 5, 10, 10])

torch.Size([20, 5, 10, 10])

torch.Size([20, 5, 10, 10])

torch.Size([20, 5, 10, 10])

Paper links

- BatchNorm: https://arxiv.org/pdf/1502.03167.pdf

- LayerNorm: https://arxiv.org/pdf/1607.06450v1.pdf

- InstanceNorm: https://arxiv.org/pdf/1607.08022.pdf

- GroupNorm: https://arxiv.org/pdf/1803.08494.pdf

- SwitchableNorm: https://arxiv.org/pdf/1806.10779.pdf