Neural Network Study Notes 26: A Detailed Walkthrough of Reproducing EfficientNet in Keras

- Preface

- What is EfficientNet

- Characteristics of EfficientNet

- Structure of the EfficientNet network

- EfficientNet network implementation code

- Image prediction

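Preface

In 2019 Google released EfficientNet. Compared with earlier networks, it sharply cuts the number of parameters while improving prediction accuracy. That is seriously impressive, so naturally I had to follow along and reproduce it!

What is EfficientNet

EfficientNet lives up to its name: it is a very efficient network. What does "efficient" mean here? Look at how convolutional neural networks have developed.

From the early VGG16 to the more recent Xception, people gradually found that improving a network's performance is not just a matter of stacking more layers. The points that matter more are:

1. The network must be trainable and must converge.

2. The parameter count should be fairly small, which makes training easier and the network faster.

3. The architecture should be innovative, so that the network learns more important features.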

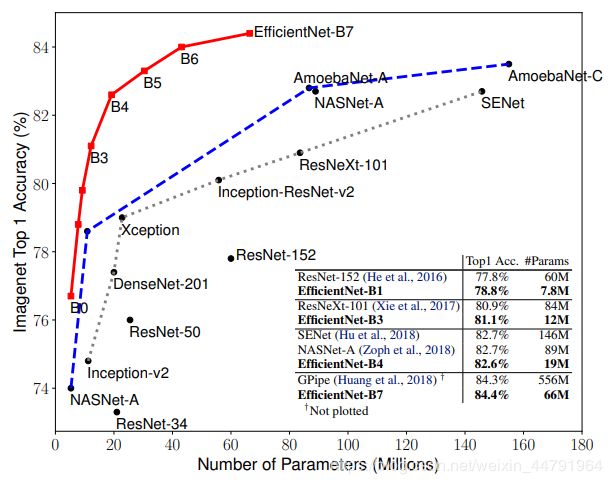

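EfficientNet does well on these points: it uses fewer parameters (which affects training and speed) to reach higher recognition accuracy (it learns the more important features).

Characteristics of EfficientNet

EfficientNet has a distinctive design that draws on other successful networks. Classic networks typically improve performance in one of the following ways: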

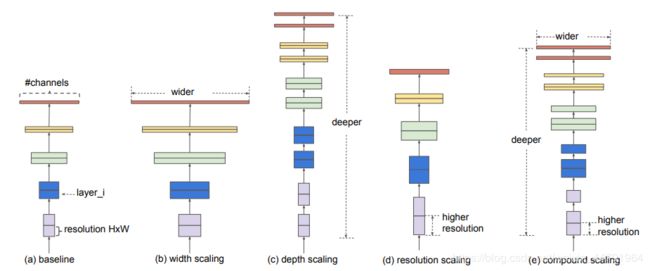

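1. Use residual connections to increase the depth of the network, so that a deeper network extracts the features.

2. Change the number of channels extracted by each layer, so that each layer produces more features. This increases the width.

3. Increase the resolution of the input image, which gives the network more to learn and express and helps accuracy.

EfficientNet combines these three ideas by scaling a baseline model along all of them together, adjusting depth, width and input resolution at the same time to arrive at a strong network design. (MobileNet scales its model with a width multiplier α: different values of α give models of different accuracy, and α = 1 is the baseline model. ResNet also has a baseline model, and its variants are obtained by changing the depth of the network.)

The results of EfficientNet are as follows:

EfficientNet uses one fixed set of scaling coefficients to scale network depth, width and resolution uniformly.

Suppose we want to use 2^N times the computational resources. We can simply scale the network depth by α^N, the width by β^N and the image size by γ^N, where α, β and γ are constant coefficients determined by a small grid search on the original small model.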
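To make the scaling rule concrete, here is a minimal sketch. The values α = 1.2, β = 1.1 and γ = 1.15 are the coefficients reported in the EfficientNet paper for the B0 baseline, found under the constraint α·β²·γ² ≈ 2; the function name `compound_scale` is made up for this illustration and does not appear in the code of this post.

```python
# Coefficients reported in the EfficientNet paper for the B0 baseline
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15   # depth, width, resolution


def compound_scale(phi):
    """Return (depth, width, resolution) multipliers for compound coefficient phi."""
    return ALPHA ** phi, BETA ** phi, GAMMA ** phi


# FLOPs grow roughly with depth * width^2 * resolution^2,
# so each step of phi costs about this factor in compute
flops_factor = ALPHA * BETA ** 2 * GAMMA ** 2

for phi in range(4):
    depth, width, resolution = compound_scale(phi)
    print(f"phi={phi}: depth x{depth:.2f}, width x{width:.2f}, "
          f"resolution x{resolution:.2f}")
```

Note that the width and depth coefficients passed by EfficientNetB1 to EfficientNetB7 later in this post do not match these powers exactly (B1, for example, uses width 1.0 and depth 1.1), so treat the sketch as an illustration of the rule rather than a way to regenerate those values.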

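Structure of the EfficientNet network

EfficientNet consists of Stem + 16 Blocks + Conv2D + GlobalAveragePooling2D + Dense. Its core is the 16 Blocks; the rest differs little from an ordinary convolutional neural network.

What is shown here is the structure of EfficientNet-B0, which is the EfficientNet baseline:

The parameters of each Block are as follows: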

    DEFAULT_BLOCKS_ARGS = [
        {'kernel_size': 3, 'repeats': 1, 'filters_in': 32, 'filters_out': 16,
         'expand_ratio': 1, 'id_skip': True, 'strides': 1, 'se_ratio': 0.25},
        {'kernel_size': 3, 'repeats': 2, 'filters_in': 16, 'filters_out': 24,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 5, 'repeats': 2, 'filters_in': 24, 'filters_out': 40,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 3, 'repeats': 3, 'filters_in': 40, 'filters_out': 80,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 5, 'repeats': 3, 'filters_in': 80, 'filters_out': 112,
         'expand_ratio': 6, 'id_skip': True, 'strides': 1, 'se_ratio': 0.25},
        {'kernel_size': 5, 'repeats': 4, 'filters_in': 112, 'filters_out': 192,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 3, 'repeats': 1, 'filters_in': 192, 'filters_out': 320,
         'expand_ratio': 6, 'id_skip': True, 'strides': 1, 'se_ratio': 0.25}
    ]
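As a quick sanity check on this table, the sketch below counts the Blocks and works out how much the network downsamples its input. The tuple layout `STAGES` is only a compact copy of the values above, introduced for this illustration; the stride-2 stem convolution and the 224 input size of B0 are taken from the network code later in this post.

```python
# (kernel_size, repeats, filters_in, filters_out, strides) for each stage,
# copied from DEFAULT_BLOCKS_ARGS
STAGES = [
    (3, 1, 32, 16, 1),
    (3, 2, 16, 24, 2),
    (5, 2, 24, 40, 2),
    (3, 3, 40, 80, 2),
    (5, 3, 80, 112, 1),
    (5, 4, 112, 192, 2),
    (3, 1, 192, 320, 1),
]

# Total number of Blocks in the B0 baseline
total_blocks = sum(repeats for _, repeats, _, _, _ in STAGES)

# The stem convolution has stride 2; inside a stage only the first
# Block uses the stage stride, the repeated Blocks use stride 1
downsample = 2
for _, _, _, _, strides in STAGES:
    downsample *= strides

# Spatial size of the last feature map for a 224x224 input
final_size = 224 // downsample
print(total_blocks, downsample, final_size)
```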

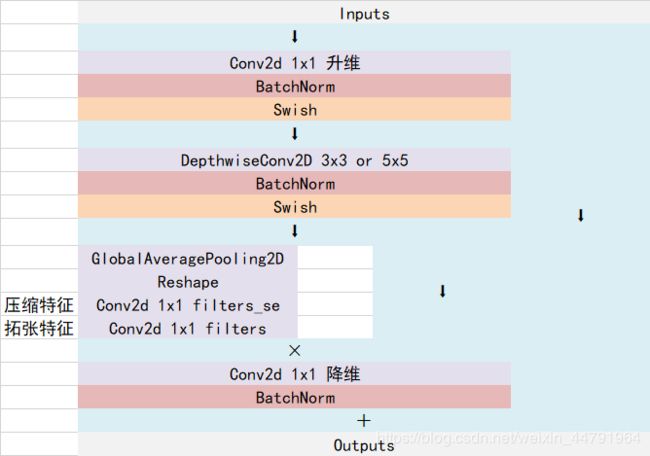

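EfficientNet-B0 is built from 1 Stem followed by 16 Blocks, and the 16 Blocks are grouped into stages of 1, 2, 2, 3, 3, 4 and 1 Blocks. The general structure of a Block is as follows. Its overall design combines the inverted residual structure with residual connections: a 1x1 convolution expands the channels before the 3x3 or 5x5 convolution, a channel attention mechanism follows the 3x3 or 5x5 convolution, and finally a 1x1 convolution reduces the channels before a large residual connection is added.

The Block is implemented as follows: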

    def block(inputs, activation_fn=tf.nn.swish, drop_rate=0., name='',
              filters_in=32, filters_out=16, kernel_size=3, strides=1,
              expand_ratio=1, se_ratio=0., id_skip=True):
        bn_axis = 3
        # Number of channels after expansion
        filters = filters_in * expand_ratio

        # Inverted residual structure
        # part1: 1x1 convolution to expand the channels
        if expand_ratio != 1:
            x = layers.Conv2D(filters, 1,
                              padding='same',
                              use_bias=False,
                              kernel_initializer=CONV_KERNEL_INITIALIZER,
                              name=name + 'expand_conv')(inputs)
            x = layers.BatchNormalization(axis=bn_axis, name=name + 'expand_bn')(x)
            x = layers.Activation(activation_fn, name=name + 'expand_activation')(x)
        else:
            x = inputs

        # Explicit zero padding for stride-2 depthwise convolutions
        if strides == 2:
            x = layers.ZeroPadding2D(padding=correct_pad(x, kernel_size),
                                     name=name + 'dwconv_pad')(x)
            conv_pad = 'valid'
        else:
            conv_pad = 'same'

        # part2: depthwise convolution (3x3 or 5x5) applied to each channel separately
        x = layers.DepthwiseConv2D(kernel_size,
                                   strides=strides,
                                   padding=conv_pad,
                                   use_bias=False,
                                   depthwise_initializer=CONV_KERNEL_INITIALIZER,
                                   name=name + 'dwconv')(x)
        x = layers.BatchNormalization(axis=bn_axis, name=name + 'bn')(x)
        x = layers.Activation(activation_fn, name=name + 'activation')(x)

        # Squeeze-and-excitation: squeeze the channels, expand them back,
        # and use the result as a per-channel scaling factor
        if 0 < se_ratio <= 1:
            filters_se = max(1, int(filters_in * se_ratio))
            se = layers.GlobalAveragePooling2D(name=name + 'se_squeeze')(x)
            se = layers.Reshape((1, 1, filters), name=name + 'se_reshape')(se)
            se = layers.Conv2D(filters_se, 1,
                               padding='same',
                               activation=activation_fn,
                               kernel_initializer=CONV_KERNEL_INITIALIZER,
                               name=name + 'se_reduce')(se)
            se = layers.Conv2D(filters, 1,
                               padding='same',
                               activation='sigmoid',
                               kernel_initializer=CONV_KERNEL_INITIALIZER,
                               name=name + 'se_expand')(se)
            x = layers.multiply([x, se], name=name + 'se_excite')

        # part3: 1x1 convolution to project the features down to filters_out channels
        x = layers.Conv2D(filters_out, 1,
                          padding='same',
                          use_bias=False,
                          kernel_initializer=CONV_KERNEL_INITIALIZER,
                          name=name + 'project_conv')(x)
        x = layers.BatchNormalization(axis=bn_axis, name=name + 'project_bn')(x)

        # Residual connection, only when input and output shapes match
        if (id_skip is True and strides == 1 and filters_in == filters_out):
            if drop_rate > 0:
                x = layers.Dropout(drop_rate,
                                   noise_shape=(None, 1, 1, 1),
                                   name=name + 'drop')(x)
            x = layers.add([x, inputs], name=name + 'add')

        return x
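The channel attention step in the middle of the Block may be easier to follow outside Keras. Below is a minimal NumPy sketch of the same squeeze, reduce, expand and multiply sequence. The function `squeeze_excite`, its weight arguments and the random inputs are all hypothetical stand-ins for the trained `se_reduce` and `se_expand` layers, not part of the model code.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def swish(z):
    return z * sigmoid(z)


def squeeze_excite(x, w_reduce, b_reduce, w_expand, b_expand):
    """x has shape (batch, height, width, channels)."""
    # Squeeze: global average pooling, kept as (batch, 1, 1, channels)
    s = x.mean(axis=(1, 2), keepdims=True)
    # Reduce: a 1x1 convolution on a 1x1 map is a matrix product over channels
    s = swish(s @ w_reduce + b_reduce)
    # Expand: back to one gate in (0, 1) per channel
    s = sigmoid(s @ w_expand + b_expand)
    # Excite: broadcast the gates over height and width
    return x * s


rng = np.random.default_rng(0)
# Shapes of the second stage of B0: filters = 16 * 6, filters_se = int(16 * 0.25)
channels, channels_se = 96, 4
x = rng.normal(size=(2, 8, 8, channels))
out = squeeze_excite(
    x,
    rng.normal(size=(channels, channels_se)), np.zeros(channels_se),
    rng.normal(size=(channels_se, channels)), np.zeros(channels),
)
print(out.shape)
```

Because every gate lies between 0 and 1, the step can only shrink a channel, never amplify it; the network learns which channels to keep close to full strength.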

EfficientNet network implementation code

    #-------------------------------------------------------------#
    #   EfficientNet network definition
    #-------------------------------------------------------------#
    from __future__ import absolute_import
    from __future__ import division
    from __future__ import print_function

    import os
    import math
    import tensorflow as tf
    import numpy as np
    from keras import layers
    from keras.models import Model
    from keras.applications import correct_pad
    from keras.applications import imagenet_utils
    from keras.applications.imagenet_utils import decode_predictions
    from keras.applications.imagenet_utils import _obtain_input_shape
    from keras.utils.data_utils import get_file
    from keras.preprocessing import image

    # Default parameters for downloading the pretrained weights
    BASE_WEIGHTS_PATH = (
        'https://github.com/Callidior/keras-applications/'
        'releases/download/efficientnet/')

    WEIGHTS_HASHES = {
        'b0': ('e9e877068bd0af75e0a36691e03c072c',
               '345255ed8048c2f22c793070a9c1a130'),
        'b1': ('8f83b9aecab222a9a2480219843049a1',
               'b20160ab7b79b7a92897fcb33d52cc61'),
        'b2': ('b6185fdcd190285d516936c09dceeaa4',
               'c6e46333e8cddfa702f4d8b8b6340d70'),
        'b3': ('b2db0f8aac7c553657abb2cb46dcbfbb',
               'e0cf8654fad9d3625190e30d70d0c17d'),
        'b4': ('ab314d28135fe552e2f9312b31da6926',
               'b46702e4754d2022d62897e0618edc7b'),
        'b5': ('8d60b903aff50b09c6acf8eaba098e09',
               '0a839ac36e46552a881f2975aaab442f'),
        'b6': ('a967457886eac4f5ab44139bdd827920',
               '375a35c17ef70d46f9c664b03b4437f2'),
        'b7': ('e964fd6e26e9a4c144bcb811f2a10f20',
               'd55674cc46b805f4382d18bc08ed43c1')
    }

    # Parameters of each Block stage
    DEFAULT_BLOCKS_ARGS = [
        {'kernel_size': 3, 'repeats': 1, 'filters_in': 32, 'filters_out': 16,
         'expand_ratio': 1, 'id_skip': True, 'strides': 1, 'se_ratio': 0.25},
        {'kernel_size': 3, 'repeats': 2, 'filters_in': 16, 'filters_out': 24,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 5, 'repeats': 2, 'filters_in': 24, 'filters_out': 40,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 3, 'repeats': 3, 'filters_in': 40, 'filters_out': 80,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 5, 'repeats': 3, 'filters_in': 80, 'filters_out': 112,
         'expand_ratio': 6, 'id_skip': True, 'strides': 1, 'se_ratio': 0.25},
        {'kernel_size': 5, 'repeats': 4, 'filters_in': 112, 'filters_out': 192,
         'expand_ratio': 6, 'id_skip': True, 'strides': 2, 'se_ratio': 0.25},
        {'kernel_size': 3, 'repeats': 1, 'filters_in': 192, 'filters_out': 320,
         'expand_ratio': 6, 'id_skip': True, 'strides': 1, 'se_ratio': 0.25}
    ]

    # The two kernel initializers
    CONV_KERNEL_INITIALIZER = {
        'class_name': 'VarianceScaling',
        'config': {
            'scale': 2.0,
            'mode': 'fan_out',
            'distribution': 'normal'
        }
    }

    DENSE_KERNEL_INITIALIZER = {
        'class_name': 'VarianceScaling',
        'config': {
            'scale': 1. / 3.,
            'mode': 'fan_out',
            'distribution': 'uniform'
        }
    }

    def block(inputs, activation_fn=tf.nn.swish, drop_rate=0., name='',
              filters_in=32, filters_out=16, kernel_size=3, strides=1,
              expand_ratio=1, se_ratio=0., id_skip=True):
        bn_axis = 3
        # Number of channels after expansion
        filters = filters_in * expand_ratio

        # Inverted residual structure
        # part1: 1x1 convolution to expand the channels
        if expand_ratio != 1:
            x = layers.Conv2D(filters, 1,
                              padding='same',
                              use_bias=False,
                              kernel_initializer=CONV_KERNEL_INITIALIZER,
                              name=name + 'expand_conv')(inputs)
            x = layers.BatchNormalization(axis=bn_axis, name=name + 'expand_bn')(x)
            x = layers.Activation(activation_fn, name=name + 'expand_activation')(x)
        else:
            x = inputs

        # Explicit zero padding for stride-2 depthwise convolutions
        if strides == 2:
            x = layers.ZeroPadding2D(padding=correct_pad(x, kernel_size),
                                     name=name + 'dwconv_pad')(x)
            conv_pad = 'valid'
        else:
            conv_pad = 'same'

        # part2: depthwise convolution (3x3 or 5x5) applied to each channel separately
        x = layers.DepthwiseConv2D(kernel_size,
                                   strides=strides,
                                   padding=conv_pad,
                                   use_bias=False,
                                   depthwise_initializer=CONV_KERNEL_INITIALIZER,
                                   name=name + 'dwconv')(x)
        x = layers.BatchNormalization(axis=bn_axis, name=name + 'bn')(x)
        x = layers.Activation(activation_fn, name=name + 'activation')(x)

        # Squeeze-and-excitation: squeeze the channels, expand them back,
        # and use the result as a per-channel scaling factor
        if 0 < se_ratio <= 1:
            filters_se = max(1, int(filters_in * se_ratio))
            se = layers.GlobalAveragePooling2D(name=name + 'se_squeeze')(x)
            se = layers.Reshape((1, 1, filters), name=name + 'se_reshape')(se)
            se = layers.Conv2D(filters_se, 1,
                               padding='same',
                               activation=activation_fn,
                               kernel_initializer=CONV_KERNEL_INITIALIZER,
                               name=name + 'se_reduce')(se)
            se = layers.Conv2D(filters, 1,
                               padding='same',
                               activation='sigmoid',
                               kernel_initializer=CONV_KERNEL_INITIALIZER,
                               name=name + 'se_expand')(se)
            x = layers.multiply([x, se], name=name + 'se_excite')

        # part3: 1x1 convolution to project the features down to filters_out channels
        x = layers.Conv2D(filters_out, 1,
                          padding='same',
                          use_bias=False,
                          kernel_initializer=CONV_KERNEL_INITIALIZER,
                          name=name + 'project_conv')(x)
        x = layers.BatchNormalization(axis=bn_axis, name=name + 'project_bn')(x)

        # Residual connection, only when input and output shapes match
        if (id_skip is True and strides == 1 and filters_in == filters_out):
            if drop_rate > 0:
                x = layers.Dropout(drop_rate,
                                   noise_shape=(None, 1, 1, 1),
                                   name=name + 'drop')(x)
            x = layers.add([x, inputs], name=name + 'add')

        return x

    def EfficientNet(width_coefficient,
                     depth_coefficient,
                     default_size,
                     dropout_rate=0.2,
                     drop_connect_rate=0.2,
                     depth_divisor=8,
                     activation_fn=tf.nn.swish,
                     blocks_args=DEFAULT_BLOCKS_ARGS,
                     model_name='efficientnet',
                     weights='imagenet',
                     input_tensor=None,
                     input_shape=None,
                     pooling=None,
                     classes=1000,
                     **kwargs):
        # Fall back to the variant's default resolution when no shape is given,
        # so the model matches the image size used for prediction
        if input_shape is None:
            input_shape = (default_size, default_size, 3)

        img_input = layers.Input(tensor=input_tensor, shape=input_shape)

        bn_axis = 3

        # Make sure the number of filters is divisible by depth_divisor (8)
        def round_filters(filters, divisor=depth_divisor):
            """Round number of filters based on depth multiplier."""
            filters *= width_coefficient
            new_filters = max(divisor, int(filters + divisor / 2) // divisor * divisor)
            # Make sure that round down does not go down by more than 10%.
            if new_filters < 0.9 * filters:
                new_filters += divisor
            return int(new_filters)

        # Number of repeats, rounded up
        def round_repeats(repeats):
            return int(math.ceil(depth_coefficient * repeats))

        # Build stem
        x = img_input
        x = layers.ZeroPadding2D(padding=correct_pad(x, 3),
                                 name='stem_conv_pad')(x)
        x = layers.Conv2D(round_filters(32), 3,
                          strides=2,
                          padding='valid',
                          use_bias=False,
                          kernel_initializer=CONV_KERNEL_INITIALIZER,
                          name='stem_conv')(x)
        x = layers.BatchNormalization(axis=bn_axis, name='stem_bn')(x)
        x = layers.Activation(activation_fn, name='stem_activation')(x)

        # Build blocks
        from copy import deepcopy

        # Copy so that the caller's blocks_args is not modified
        blocks_args = deepcopy(blocks_args)

        b = 0
        # Total number of blocks
        blocks = float(sum(args['repeats'] for args in blocks_args))
        for (i, args) in enumerate(blocks_args):
            assert args['repeats'] > 0
            args['filters_in'] = round_filters(args['filters_in'])
            args['filters_out'] = round_filters(args['filters_out'])

            for j in range(round_repeats(args.pop('repeats'))):
                # Only the first block of a stage changes stride and channel count
                if j > 0:
                    args['strides'] = 1
                    args['filters_in'] = args['filters_out']
                x = block(x, activation_fn, drop_connect_rate * b / blocks,
                          name='block{}{}_'.format(i + 1, chr(j + 97)), **args)
                b += 1

        # Build top
        x = layers.Conv2D(round_filters(1280), 1,
                          padding='same',
                          use_bias=False,
                          kernel_initializer=CONV_KERNEL_INITIALIZER,
                          name='top_conv')(x)
        x = layers.BatchNormalization(axis=bn_axis, name='top_bn')(x)
        x = layers.Activation(activation_fn, name='top_activation')(x)

        # Use GlobalAveragePooling2D instead of flattening into fully connected layers
        x = layers.GlobalAveragePooling2D(name='avg_pool')(x)
        if dropout_rate > 0:
            x = layers.Dropout(dropout_rate, name='top_dropout')(x)
        x = layers.Dense(classes,
                         activation='softmax',
                         kernel_initializer=DENSE_KERNEL_INITIALIZER,
                         name='probs')(x)

        # Model input
        inputs = img_input

        model = Model(inputs, x, name=model_name)

        # Load weights.
        if weights == 'imagenet':
            file_suff = '_weights_tf_dim_ordering_tf_kernels_autoaugment.h5'
            file_hash = WEIGHTS_HASHES[model_name[-2:]][0]
            file_name = model_name + file_suff
            weights_path = get_file(file_name, BASE_WEIGHTS_PATH + file_name,
                                    cache_subdir='models',
                                    file_hash=file_hash)
            model.load_weights(weights_path)
        elif weights is not None:
            model.load_weights(weights)

        return model

    def EfficientNetB0(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(1.0, 1.0, 224, 0.2,
                            model_name='efficientnet-b0',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)

    def EfficientNetB1(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(1.0, 1.1, 240, 0.2,
                            model_name='efficientnet-b1',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)

    def EfficientNetB2(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(1.1, 1.2, 260, 0.3,
                            model_name='efficientnet-b2',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)

    def EfficientNetB3(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(1.2, 1.4, 300, 0.3,
                            model_name='efficientnet-b3',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)

    def EfficientNetB4(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(1.4, 1.8, 380, 0.4,
                            model_name='efficientnet-b4',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)

    def EfficientNetB5(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(1.6, 2.2, 456, 0.4,
                            model_name='efficientnet-b5',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)

    def EfficientNetB6(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(1.8, 2.6, 528, 0.5,
                            model_name='efficientnet-b6',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)

    def EfficientNetB7(weights='imagenet',
                       input_tensor=None,
                       input_shape=None,
                       pooling=None,
                       classes=1000,
                       **kwargs):
        return EfficientNet(2.0, 3.1, 600, 0.5,
                            model_name='efficientnet-b7',
                            weights=weights,
                            input_tensor=input_tensor, input_shape=input_shape,
                            pooling=pooling, classes=classes,
                            **kwargs)
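The `drop_connect_rate * b / blocks` argument passed to each Block in the network code gives a drop rate that grows linearly with the Block index. The sketch below reproduces that schedule for the B0 baseline; `drop_connect_schedule` is a helper name invented for this illustration.

```python
def drop_connect_schedule(repeats_per_stage, drop_connect_rate=0.2):
    """Drop rate passed to each Block, in construction order."""
    total = float(sum(repeats_per_stage))
    return [drop_connect_rate * b / total for b in range(int(total))]


# Repeats of the seven stages in DEFAULT_BLOCKS_ARGS
rates = drop_connect_schedule([1, 2, 2, 3, 3, 4, 1])
print([round(r, 4) for r in rates])
```

The first Block is never dropped and the last one is dropped with probability 0.1875. One thing to keep in mind when reading the network code: `blocks` is computed from the unscaled `repeats`, while `b` counts the Blocks actually built, so for variants with `depth_coefficient` greater than 1 the rate of the later Blocks appears to exceed `drop_connect_rate`.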

Image prediction

Once the network is built, the following code can be used to run a prediction.

    # Preprocess the image: scale pixel values from [0, 255] to [-1, 1]
    def preprocess_input(x):
        x /= 255.
        x -= 0.5
        x *= 2.
        return x

    if __name__ == '__main__':
        model = EfficientNetB0()
        model.summary()

        img_path = 'elephant.jpg'
        img = image.load_img(img_path, target_size=(224, 224))
        x = image.img_to_array(img)
        # Add the batch dimension: (224, 224, 3) -> (1, 224, 224, 3)
        x = np.expand_dims(x, axis=0)
        x = preprocess_input(x)
        print('Input image shape:', x.shape)

        preds = model.predict(x)
        # Index of the most likely class
        print(np.argmax(preds))
        print('Predicted:', decode_predictions(preds, 1))
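To see what `preprocess_input` does to the pixel values, here is a small standalone check. It is a variant written for this illustration: unlike the in-place version above, it works on a float copy, so it also accepts integer arrays and leaves the caller's array untouched.

```python
import numpy as np


def preprocess_input(x):
    # np.array copies by default, so the input array is not modified
    x = np.array(x, dtype=np.float32)
    x /= 255.   # [0, 255] -> [0, 1]
    x -= 0.5    # [0, 1]   -> [-0.5, 0.5]
    x *= 2.     # [-0.5, 0.5] -> [-1, 1]
    return x


pixels = np.array([0, 127.5, 255], dtype=np.float32)
scaled = preprocess_input(pixels)
print(scaled)
```

The in-place version in the prediction script works there because `image.img_to_array` returns a float array; on an integer array the `x /= 255.` line would raise a casting error.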

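The pretrained EfficientNet weights needed for prediction are downloaded automatically at run time. After downloading, they are stored in the `C:\Users\Administrator\.keras\models` folder.

The functions EfficientNetB0, EfficientNetB1, ..., EfficientNetB6 and EfficientNetB7 return EfficientNet models of different sizes.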

| Model | Top-1 | Top-5 | 10-5 | Size | Stem |
|---|---|---|---|---|---|
| EfficientNet-B0 | 22.810 | 6.508 | 5.858 | 5.3M | 4.0M |
| EfficientNet-B1 | 20.866 | 5.552 | 5.050 | 7.9M | 6.6M |
| EfficientNet-B2 | 19.820 | 5.054 | 4.538 | 9.2M | 7.8M |
| EfficientNet-B3 | 18.422 | 4.324 | 3.902 | 12.3M | 10.8M |
| EfficientNet-B4 | 17.040 | 3.740 | 3.344 | 19.5M | 17.7M |
| EfficientNet-B5 | 16.298 | 3.290 | 3.114 | 30.6M | 28.5M |
| EfficientNet-B6 | 15.918 | 3.102 | 2.916 | 43.3M | 41.0M |
| EfficientNet-B7 | 15.570 | 3.160 | 2.906 | 66.7M | 64.1M |
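The growth in size across the variants comes from `round_filters` and `round_repeats` in the network code. The sketch below pulls those two helpers out as standalone functions (with the coefficient passed as an explicit argument, which is a change made for this illustration) and applies them to the coefficients used by EfficientNetB0 to EfficientNetB7. `VARIANTS`, `BASE_REPEATS` and `summarize` are names introduced here only for the example.

```python
import math


def round_filters(filters, width_coefficient, divisor=8):
    """Scale the channel count and round it to a multiple of divisor."""
    filters *= width_coefficient
    new_filters = max(divisor, int(filters + divisor / 2) // divisor * divisor)
    # Make sure rounding down does not lose more than 10%
    if new_filters < 0.9 * filters:
        new_filters += divisor
    return int(new_filters)


def round_repeats(repeats, depth_coefficient):
    """Scale the number of repeats and round up."""
    return int(math.ceil(depth_coefficient * repeats))


# (width_coefficient, depth_coefficient, default_size) as passed by
# EfficientNetB0 ... EfficientNetB7
VARIANTS = {
    'b0': (1.0, 1.0, 224), 'b1': (1.0, 1.1, 240),
    'b2': (1.1, 1.2, 260), 'b3': (1.2, 1.4, 300),
    'b4': (1.4, 1.8, 380), 'b5': (1.6, 2.2, 456),
    'b6': (1.8, 2.6, 528), 'b7': (2.0, 3.1, 600),
}
# Repeats of the seven stages in DEFAULT_BLOCKS_ARGS
BASE_REPEATS = [1, 2, 2, 3, 3, 4, 1]


def summarize(name):
    width, depth, size = VARIANTS[name]
    return {
        'input_size': size,
        'stem_filters': round_filters(32, width),
        'top_filters': round_filters(1280, width),
        'num_blocks': sum(round_repeats(r, depth) for r in BASE_REPEATS),
    }


for name in VARIANTS:
    print(name, summarize(name))
```

Because repeats are rounded up per stage, even the small depth coefficient of B1 adds one Block to every stage.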