tensorflow使用object detection实现目标检测超详细全流程(视频+图像集检测)

使用tensorflow object detection进行训练检测。参考原始代码:https://github.com/tensorflow/models/tree/master/research

本博客以mobilenet-ssd-v2为例进行处理,通过换模型即可实现faster RCNN等的训练检测。

1、数据整理

对生成的数据集(整理成VOC格式),通过Annotations的数据数进行train、test、val、trainval.txt的生成

进入目录

cd VOCdevkit/VOC2012/

python data_segment.py

"""

data_segment.py

可自主设计数据集的比例,即trainval_percent,train_percent

"""

import os

import random

trainval_percent = 0.9

train_percent = 0.95

xmlfilepath = 'Annotations'

txtsavepath = 'ImageSets\Main'

total_xml = os.listdir(xmlfilepath)

num=len(total_xml)

list1=range(num)

tv=int(num*trainval_percent)

tr=int(tv*train_percent)

trainval= random.sample(list1,tv)

train=random.sample(trainval,tr)

ftrainval = open('ImageSets/Main/trainval.txt', 'w')

ftest = open('ImageSets/Main/test.txt', 'w')

ftrain = open('ImageSets/Main/train.txt', 'w')

fval = open('ImageSets/Main/val.txt', 'w')

for i in list1:

name=total_xml[i][:-4]+'\n'

if i in trainval:

ftrainval.write(name)

if i in train:

ftrain.write(name)

else:

fval.write(name)

else:

ftest.write(name)

ftrainval.close()

ftrain.close()

fval.close()

ftest .close()

note:

在使用数据集格式转化工具,生成的voc文件中,ImageSets/mains中,只含有trainval.txt。(可通过上述方式进行重新生成.txt文件,亦可暂时忽略,在后续生成record文件时,直接应用)

数据集的下载可参考自动驾驶数据集,同时能获得自动驾驶数据集与voc格式之间的转换。

2、安装依赖项

pip install -r requirements.txt

3、载入环境变量

protoc object_detection/protos/*.proto --python_out=.

export PYTHONPATH=$PYTHONPATH:`pwd`:`pwd`/object_detection

export PYTHONPATH=$PYTHONPATH:`pwd`:`pwd`/slim

4、生成record文件

# 生成相应的train.record、val.record、test.record

python object_detection/dataset_tools/create_pascal_tf_record.py --data_dir=VOCdevkit/ --year=VOC2012 --set=val --label_map_path=object_detection/data/pascal_label_map.pbtxt --output_path=dataset/val.record

也可根据修改过的record生成文件,直接对数据集转化结果trainval.txt进行处理。

python object_detection/create_crj.py --data_dir=VOCdevkit/ --set=trainval --year=VOC2012 --label_map_path=object_detection/data/pascal_label_map.pbtxt

"""

前面部分,篇幅问题,省略,参考create_pascal_tf_record.py中函数

"""

def main(_):

if FLAGS.set not in SETS:

raise ValueError('set must be in : {}'.format(SETS))

if FLAGS.year not in YEARS:

raise ValueError('year must be in : {}'.format(YEARS))

data_dir = FLAGS.data_dir

years = ['VOC2007', 'VOC2012']

if FLAGS.year != 'merged':

years = [FLAGS.year]

# writer = tf.python_io.TFRecordWriter(FLAGS.output_path)

label_map_dict = label_map_util.get_label_map_dict(FLAGS.label_map_path)

for year in years:

logging.info('Reading from PASCAL %s dataset.', year)

# examples_path = os.path.join(data_dir, year, 'ImageSets', 'Main',

# 'aeroplane_' + FLAGS.set + '.txt')

examples_path = os.path.join(data_dir, year, 'ImageSets', 'Main', FLAGS.set + '.txt')

image_dir = os.path.join(data_dir, year, 'JPEGImages')

annotations_dir = os.path.join(data_dir, year, FLAGS.annotations_dir)

examples_list = dataset_util.read_examples_list(examples_path)

trainval_percent = 0.8

train_percent = 0.7

random.seed(42)

random.shuffle(examples_list)

num_examples = len(examples_list)

num_trainval = int(trainval_percent * num_examples)

num_train = int(train_percent * num_trainval)

trainval_examples = examples_list[:num_trainval]

train_examples = trainval_examples[:num_train]

val_examples = trainval_examples[num_train:]

test_examples = examples_list[num_trainval:]

logging.info('%d training and %d validation examples.', len(train_examples), len(val_examples))

train_output_path = 'record_path/train.record'

val_output_path = 'record_path/val.record'

test_output_path = 'record_path/test.record'

create_pascal_record(train_output_path, label_map_dict,annotations_dir, image_dir, train_examples)

create_pascal_record(val_output_path, label_map_dict,annotations_dir, image_dir, val_examples)

create_pascal_record(test_output_path, label_map_dict,annotations_dir, image_dir, test_examples)

if __name__ == '__main__':

tf.app.run()

5、训练

修改ssd_mobilenet_v2_coco.config中的六处

(1)num_classes: 3(设为自己的训练集的类别)

(2)fine_tune_checkpoint: “/home/crj/tensorflow/ssd_mobilenet_v2_coco_2018_03_29/model.ckpt”(fine_tune_checkpoint的地址)

(3)train_input_reader:{}中input_path和label_map_path的路径

(4)eval_input_reader:{}中input_path和label_map_path的路径

(5)batch_size:48 通常不能太小,否则会报错

(6)initial_learning_rate: 0.005 不能太小,否则容易陷入局部过拟合

# 训练模型在dataset中保存

python object_detection/legacy/train.py --logtostderr --train_dir=dataset --pipeline_config_path=ssd_mobilenet_v2_coco.config

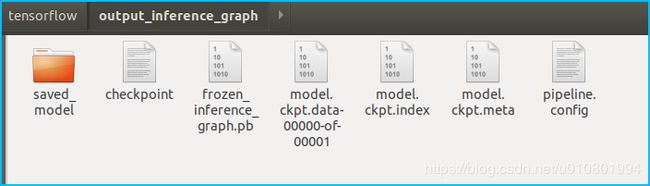

6、导出训练数据

将dataset中的生成模型导出到output_inference_graph输出文件夹中

python object_detection/export_inference_graph.py --input_type image_tensor --pipeline_config_path ssd_mobilenet_v2_coco.config --trained_checkpoint_prefix /home/crj/tensorflow/dataset/model.ckpt-20649 --output_directory output_inference_graph

7、检测结果输出

python object_detection/Object_Detection_Tensorflow_API_crj.py

from moviepy.editor import VideoFileClip

from IPython.display import HTML

# import detection_demo.object_detection_model as model

import matplotlib

matplotlib.use('Agg')

import os

import numpy as np

import imageio

imageio.plugins.ffmpeg.download()

from object_detection.utils import label_map_util

from object_detection.utils import visualization_utils as vis_util

from moviepy.editor import VideoFileClip

from IPython.display import HTML

import time

import tensorflow as tf

import imageio

imageio.plugins.ffmpeg.download()

CWD_PATH = os.getcwd()

# Path to frozen detection graph. This is the actual model that is used for the object detection.

MODEL_NAME = 'output_inference_graph'

# PATH_TO_CKPT = os.path.join(CWD_PATH, 'object_detection', MODEL_NAME, 'frozen_inference_graph.pb')

PATH_TO_CKPT = os.path.join(CWD_PATH, '/home/crj/tensorflow', MODEL_NAME, 'frozen_inference_graph.pb')

# # List of the strings that is used to add correct label for each box.

PATH_TO_LABELS = os.path.join(CWD_PATH, '/home/crj/tensorflow/object_detection/data/pascal_label_map.pbtxt')

NUM_CLASSES = 3

label_map = label_map_util.load_labelmap(PATH_TO_LABELS)

categories = label_map_util.convert_label_map_to_categories(label_map, max_num_classes=NUM_CLASSES,

use_display_name=True)

category_index = label_map_util.create_category_index(categories)

def process_image(image):

# NOTE: The output you return should be a color image (3 channel) for processing video below

# you should return the final output (image with lines are drawn on lanes)

with detection_graph.as_default():

with tf.Session(graph=detection_graph) as sess:

image_process = detect_objects(image, sess, detection_graph)

return image_process

def detect_objects(image_np, sess, detection_graph):

# Expand dimensions since the model expects images to have shape: [1, None, None, 3]

image_np_expanded = np.expand_dims(image_np, axis=0)

image_tensor = detection_graph.get_tensor_by_name('image_tensor:0')

# Each box represents a part of the image where a particular object was detected.

boxes = detection_graph.get_tensor_by_name('detection_boxes:0')

# Each score represent how level of confidence for each of the objects.

# Score is shown on the result image, together with the class label.

scores = detection_graph.get_tensor_by_name('detection_scores:0')

classes = detection_graph.get_tensor_by_name('detection_classes:0')

num_detections = detection_graph.get_tensor_by_name('num_detections:0')

# Actual detection.

(boxes, scores, classes, num_detections) = sess.run(

[boxes, scores, classes, num_detections],

feed_dict={image_tensor: image_np_expanded})

# Visualization of the results of a detection.

vis_util.visualize_boxes_and_labels_on_image_array(

image_np,

np.squeeze(boxes),

np.squeeze(classes).astype(np.int32),

np.squeeze(scores),

category_index,

use_normalized_coordinates=True,

line_thickness=8)

return image_np

detection_graph = tf.Graph()

with detection_graph.as_default():

od_graph_def = tf.GraphDef()

with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:

serialized_graph = fid.read()

od_graph_def.ParseFromString(serialized_graph)

tf.import_graph_def(od_graph_def, name='')

def process_image(image):

# NOTE: The output you return should be a color image (3 channel) for processing video below

# you should return the final output (image with lines are drawn on lanes)

with detection_graph.as_default():

with tf.Session(graph=detection_graph) as sess:

image_process = detect_objects(image, sess, detection_graph)

return image_process

"""

================================视频检测===================================

"""

white_output = 'video1_out.mp4'

clip1 = VideoFileClip("/home/crj/tensorflow/test_images1/1.mp4").subclip(0,2)

white_clip = clip1.fl_image(process_image) #NOTE: this function expects color images!!s

#get_ipython().magic('time white_clip.write_videofile(white_output, audio=False)')

white_clip.write_videofile(white_output, audio=False)

HTML("""

""".format(white_output))

"""

==============================图像检测=====================================

"""

# First test on images

PATH_TO_TEST_IMAGES_DIR = '/home/crj/tensorflow/test_images/'

TEST_IMAGE_PATHS = [ os.path.join(PATH_TO_TEST_IMAGES_DIR, 'image-{}.jpg'.format(i)) for i in range(1, 5) ]

# Size, in inches, of the output images.

IMAGE_SIZE = (12, 8)

def load_image_into_numpy_array(image):

(im_width, im_height) = image.size

return np.array(image.getdata()).reshape(

(im_height, im_width, 3)).astype(np.uint8)

from PIL import Image

for image_path in TEST_IMAGE_PATHS:

image = Image.open(image_path)

image_np = load_image_into_numpy_array(image)

plt.imshow(image_np)

plt.show()

print(image.size, image_np.shape)

#Load a frozen TF model

detection_graph = tf.Graph()

with detection_graph.as_default():

od_graph_def = tf.GraphDef()

with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:

serialized_graph = fid.read()

od_graph_def.ParseFromString(serialized_graph)

tf.import_graph_def(od_graph_def, name='')

with detection_graph.as_default():

with tf.Session(graph=detection_graph) as sess:

for image_path in TEST_IMAGE_PATHS:

image = Image.open(image_path)

image_np = load_image_into_numpy_array(image)

image_process = detect_objects(image_np, sess, detection_graph)

print(image_process.shape)

plt.figure(figsize=IMAGE_SIZE)

plt.imshow(image_process)

plt.show()

学习小记录:

(1)batchsize:批大小。在深度学习中,一般采用SGD训练,即每次训练在训练集中取batchsize个样本训练;

(2)iteration:1个iteration等于使用batchsize个样本训练一次;

(3)epoch:1个epoch等于使用训练集中的全部样本训练一次;

举个例子,训练集有1000个样本,batchsize=10,那么:

训练完整个样本集需要:100次iteration,1次epoch。