计算机视觉--全景图像拼接

文章目录

- 1、全景图像拼接

- 1.1 原理

- 1.2 基本流程

- 2、实验过程

- 2.1 场景一:固定点拍摄多张图片

- 2.1.1 实验结果展示

- 2.1.2 小结

- 2.1.3 代码

- 2.2 场景二:视差变化大

- 2.2.1 实验结果展示

- 2.2.2 小结

- 2.2.3 代码

- 3、总结

- 3.1 实验总结

- 3.2 实验遇到的问题

1、全景图像拼接

1.1 原理

全景图像拼接,即将两幅或多幅具有重叠区域的图像,合并成一张大图。全景图像拼接的实现基本上包括特征点的提取与匹配、图像配准、图像融合三大部分。

特征点的提取与匹配: 采用SIFT特征点匹配算法对图像进行特征点匹配,得到两幅图像中相互匹配的特征点对,以及每个特征点对应的特征点描述符,但这些特征点对中会有一部分是误匹配点,因此我们需要进行匹配点对的消除,一般我们使用RANSAC去除误匹配点对。

图像配准: 采用一定的匹配策略,找出待拼接图像中的模板或特征点在参考图像中对应的位置,进而确定两幅图像之间的变换关系。

图像融合: 将待拼接图像的重合区域进行融合得到拼接重构的平滑无缝全景图像。

1.2 基本流程

图像拼接的基本流程如下:

1、针对某个场景拍摄多张/序列图像

2、计算第二张图像与第一张图像之间的变换关系 (sift匹配)

3、将第二张图像叠加到第一张图像的坐标系中 (图像映射)

4、融合/合成变换后的图像

2、实验过程

2.1 场景一:固定点拍摄多张图片

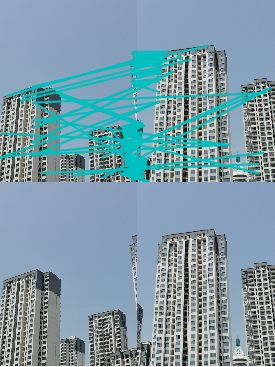

2.1.1 实验结果展示

2.1.2 小结

失败分析:

由上图失败例子可看出,拼接效果非常不好,楼层完全错乱,基本没有正确拼接的地方。从特征匹配图中也可看出有不少错误匹配的地方,可能是因为楼层相似度过高,且拍摄角度太小导致拼接发生扭曲,错乱。

成功分析:

由上图成功例子可看出,拼接效果还不错,虽然景深比较复杂,但拼接之后基本没有错乱重影的地方,图像大部分都有正确正确拼接。可能是因为图像本身虽然事物繁多复杂,但相似度不高,影响特征匹配的因素小,所以拼接效果比较理想。

2.1.3 代码

from pylab import *

from numpy import *

from PIL import Image

# If you have PCV installed, these imports should work

from PCV.geometry import homography, warp

from PCV.localdescriptors import sift

"""

This is the panorama example from section 3.3.

"""

# set paths to data folder

featname = ['C:/A/1/' + str(i + 1) + '.sift' for i in range(5)]

imname = ['C:/A/1/' + str(i + 1) + '.jpg' for i in range(5)]

# extract features and match

l = {}

d = {}

for i in range(5):

sift.process_image(imname[i], featname[i])

l[i], d[i] = sift.read_features_from_file(featname[i])

matches = {}

for i in range(4):

matches[i] = sift.match(d[i + 1], d[i])

# visualize the matches (Figure 3-11 in the book)

# sift匹配可视化

for i in range(4):

im1 = array(Image.open(imname[i]))

im2 = array(Image.open(imname[i + 1]))

figure()

sift.plot_matches(im2, im1, l[i + 1], l[i], matches[i], show_below=True)

# function to convert the matches to hom. points

# 将匹配转换成齐次坐标点的函数

def convert_points(j):

ndx = matches[j].nonzero()[0]

fp = homography.make_homog(l[j + 1][ndx, :2].T)

ndx2 = [int(matches[j][i]) for i in ndx]

tp = homography.make_homog(l[j][ndx2, :2].T)

# switch x and y - TODO this should move elsewhere

fp = vstack([fp[1], fp[0], fp[2]])

tp = vstack([tp[1], tp[0], tp[2]])

return fp, tp

# estimate the homographies

# 估计单应性矩阵

model = homography.RansacModel()

fp, tp = convert_points(1)

H_12 = homography.H_from_ransac(fp, tp, model)[0] # im 1 to 2 # im1 到 im2 的单应性矩阵

fp, tp = convert_points(0)

H_01 = homography.H_from_ransac(fp, tp, model)[0] # im 0 to 1

tp, fp = convert_points(2) # NB: reverse order

H_32 = homography.H_from_ransac(fp, tp, model)[0] # im 3 to 2

tp, fp = convert_points(3) # NB: reverse order

H_43 = homography.H_from_ransac(fp, tp, model)[0] # im 4 to 3

# warp the images

# 扭曲图像

delta = 2000 # for padding and translation 用于填充和平移

im1 = array(Image.open(imname[1]), "uint8")

im2 = array(Image.open(imname[2]), "uint8")

im_12 = warp.panorama(H_12, im1, im2, delta, delta)

im1 = array(Image.open(imname[0]), "f")

im_02 = warp.panorama(dot(H_12, H_01), im1, im_12, delta, delta)

im1 = array(Image.open(imname[3]), "f")

im_32 = warp.panorama(H_32, im1, im_02, delta, delta)

im1 = array(Image.open(imname[4]), "f")

im_42 = warp.panorama(dot(H_32, H_43), im1, im_32, delta, 2 * delta)

imsave('jmu2.jpg', array(im_42, "uint8"))

figure()

imshow(array(im_42, "uint8"))

axis('off')

show()

2.2 场景二:视差变化大

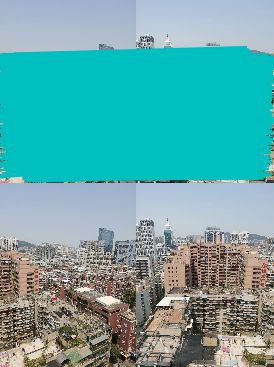

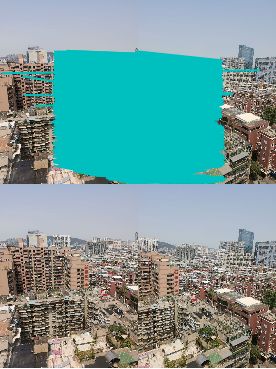

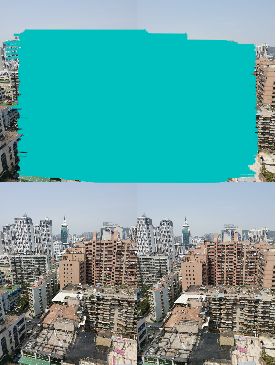

2.2.1 实验结果展示

2.2.2 小结

失败分析:

由上图失败例子可看出,拼接效果不是特别理想,树丛和楼层都有严重重影,特征匹配效果不错,左图中多出来的楼栋,在右图中并没有特征匹配点。可能也是因为这个多出来的楼栋,才导致拼接图中楼栋出现重影。

成功分析:

由上图成功例子可看出,拼接效果理想,拼接图基本看不出是两图拼接得到的,特征匹配点也比较准确,可能是因为图片中的建筑物标志明显,且其附近建筑物不会产生冲突的原因,所以特征匹配准确,拼接效果也比较理想。

2.2.3 代码

import numpy as np

import cv2 as cv

from matplotlib import pyplot as plt

%matplotlib inline

if __name__ == '__main__':

top, bot, left, right = 100, 100, 0, 500

img1 = cv.imread('C:/A/3/9.jpg')

img2 = cv.imread('C:/A/3/10.jpg')

srcImg = cv.copyMakeBorder(img1, top, bot, left, right, cv.BORDER_CONSTANT, value=(0, 0, 0))

testImg = cv.copyMakeBorder(img2, top, bot, left, right, cv.BORDER_CONSTANT, value=(0, 0, 0))

img1gray = cv.cvtColor(srcImg, cv.COLOR_BGR2GRAY)

img2gray = cv.cvtColor(testImg, cv.COLOR_BGR2GRAY)

sift = cv.xfeatures2d_SIFT().create()

# find the keypoints and descriptors with SIFT

kp1, des1 = sift.detectAndCompute(img1gray, None)

kp2, des2 = sift.detectAndCompute(img2gray, None)

# FLANN parameters

FLANN_INDEX_KDTREE = 1

index_params = dict(algorithm=FLANN_INDEX_KDTREE, trees=5)

search_params = dict(checks=50)

flann = cv.FlannBasedMatcher(index_params, search_params)

matches = flann.knnMatch(des1, des2, k=2)

# Need to draw only good matches, so create a mask

matchesMask = [[0, 0] for i in range(len(matches))]

good = []

pts1 = []

pts2 = []

# ratio test as per Lowe's paper

for i, (m, n) in enumerate(matches):

if m.distance < 0.7*n.distance:

good.append(m)

pts2.append(kp2[m.trainIdx].pt)

pts1.append(kp1[m.queryIdx].pt)

matchesMask[i] = [1, 0]

draw_params = dict(matchColor=(0, 255, 0),

singlePointColor=(255, 0, 0),

matchesMask=matchesMask,

flags=0)

img3 = cv.drawMatchesKnn(img1gray, kp1, img2gray, kp2, matches, None, **draw_params)

plt.imshow(img3, ), plt.show()

rows, cols = srcImg.shape[:2]

MIN_MATCH_COUNT = 10

if len(good) > MIN_MATCH_COUNT:

src_pts = np.float32([kp1[m.queryIdx].pt for m in good]).reshape(-1, 1, 2)

dst_pts = np.float32([kp2[m.trainIdx].pt for m in good]).reshape(-1, 1, 2)

M, mask = cv.findHomography(src_pts, dst_pts, cv.RANSAC, 5.0)

warpImg = cv.warpPerspective(testImg, np.array(M), (testImg.shape[1], testImg.shape[0]), flags=cv.WARP_INVERSE_MAP)

for col in range(0, cols):

if srcImg[:, col].any() and warpImg[:, col].any():

left = col

break

for col in range(cols-1, 0, -1):

if srcImg[:, col].any() and warpImg[:, col].any():

right = col

break

res = np.zeros([rows, cols, 3], np.uint8)

for row in range(0, rows):

for col in range(0, cols):

if not srcImg[row, col].any():

res[row, col] = warpImg[row, col]

elif not warpImg[row, col].any():

res[row, col] = srcImg[row, col]

else:

srcImgLen = float(abs(col - left))

testImgLen = float(abs(col - right))

alpha = srcImgLen / (srcImgLen + testImgLen)

res[row, col] = np.clip(srcImg[row, col] * (1-alpha) + warpImg[row, col] * alpha, 0, 255)

# opencv is bgr, matplotlib is rgb

res = cv.cvtColor(res, cv.COLOR_BGR2RGB)

# show the result

plt.figure()

plt.imshow(res)

plt.show()

else:

print("Not enough matches are found - {}/{}".format(len(good), MIN_MATCH_COUNT))

matchesMask = None

3、总结

3.1 实验总结

- 图像拍摄角度变化太小可能会导致图像特征匹配相似度过高,影响拼接效果,导致拼接出现重影,变形,错乱的现象。

- 图像景深复杂时拼接效果反而比较理想,可能是所拍摄景物相似度不高,易辨识,特征匹配错误点少,所以拼接效果理想。

- 图像的尺寸大小会影响运行速度,图像尺寸较小时,运行速度快,图像尺寸较大时,运行速度慢。

3.2 实验遇到的问题

报错一:print 语法

解决办法: 可能因为python版本的原因,print在不同版本里语法不一样,用记事本将该路径下的warp.py打开并按照提示修改即可。

报错二:matplotlib.delaunay不可用

解决办法: 参考以下链接进行修改即可。https://blog.csdn.net/weixin_42648848/article/details/88667243