PyTorch Personal Notes (Week 5, Hands-on Practice 1)

- 9.6 The Module class

- 9.7 Data augmentation

- 10. CIFAR10 and ResNet in practice

- 10.1 The CIFAR10 dataset

- 10.2 LeNet-5 in practice

- 10.3 ResNet in practice

9.6 The Module class

1. nn.Module is the parent class of every network layer class

For example:

nn.Linear

nn.BatchNorm2d

nn.Conv2d

Custom classes

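import torch
from torch import nn


class MyLinear(nn.Module):
    def __init__(self, inp, outp):
        super(MyLinear, self).__init__()
        # nn.Parameter sets requires_grad=True and registers the tensor with the module
        self.w = nn.Parameter(torch.randn(outp, inp))
        self.b = nn.Parameter(torch.randn(outp))

    def forward(self, x):
        # [b, inp] @ [inp, outp] + [outp] => [b, outp]
        x = x @ self.w.t() + self.b
        return x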

2. Modules can be nested inside one another
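
As a minimal sketch of nesting, a custom layer can be placed inside nn.Sequential like any built-in layer, and the parent module then picks up its parameters. The layer sizes (784, 200, 10) and the batch size of 4 are illustrative assumptions, not values from these notes.

```python
import torch
from torch import nn


class MyLinear(nn.Module):
    def __init__(self, inp, outp):
        super().__init__()
        self.w = nn.Parameter(torch.randn(outp, inp))
        self.b = nn.Parameter(torch.randn(outp))

    def forward(self, x):
        return x @ self.w.t() + self.b


# Hypothetical sizes, chosen only for illustration.
net = nn.Sequential(MyLinear(784, 200), nn.ReLU(), MyLinear(200, 10))

out = net(torch.randn(4, 784))
param_names = [name for name, _ in net.named_parameters()]
print(out.shape)    # torch.Size([4, 10])
print(param_names)  # ['0.w', '0.b', '2.w', '2.b']
```

The parameter names are prefixed with the position of each layer in the container, which is how the parent keeps nested parameters apart.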

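Advantages / features:

1. A large collection of ready-made layers

2. Containers

nn.Sequential # runs its sub-modules in order

3. Parameter management

4. children: direct sub-modules only

modules: every module in the tree, i.e. the module itself, its children, and all nested descendants

5. to(device) # moves parameters and buffers to cpu or cuda (gpu)

6. Saving and loading

torch.save(net.state_dict(), 'ckpt.mdl') # save the current state to the file ckpt.mdl

net.load_state_dict(torch.load('ckpt.mdl')) # load the trained state

7. train/test

net.train() # training mode

net.eval() # evaluation mode; changes the behavior of layers such as Dropout and BatchNorm

8. Custom classes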

# Flatten
class Flatten(nn.Module):
    def __init__(self):
        super(Flatten, self).__init__()

    def forward(self, input):
        # [b, c, h, w] => [b, c*h*w]
        return input.view(input.size(0), -1)


class TestNet(nn.Module):
    def __init__(self):
        super(TestNet, self).__init__()
        # kernel_size=3 is an assumption; with stride=1 and padding=1 it keeps h and w unchanged
        self.net = nn.Sequential(nn.Conv2d(1, 16, kernel_size=3, stride=1, padding=1),
                                 nn.MaxPool2d(2, 2),
                                 Flatten(),
                                 # assuming 28x28 inputs: [b, 16, 14, 14] => [b, 16*14*14]
                                 nn.Linear(16*14*14, 10))

    def forward(self, x):
        return self.net(x)

# Custom linear layer
class MyLinear(nn.Module):
    def __init__(self, inp, outp):
        super(MyLinear, self).__init__()
        # nn.Parameter sets requires_grad=True
        self.w = nn.Parameter(torch.randn(outp, inp))
        self.b = nn.Parameter(torch.randn(outp))

    def forward(self, x):
        x = x @ self.w.t() + self.b
        return x
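
The features listed above (children vs. modules, saving and loading, train/eval) can be sketched on a small nested network. The helper make_net and its layer sizes are hypothetical, and an in-memory buffer stands in for the 'ckpt.mdl' file so the sketch leaves nothing on disk.

```python
import io

import torch
from torch import nn


def make_net():
    # Hypothetical nested network; the sizes are arbitrary.
    return nn.Sequential(
        nn.Sequential(nn.Linear(4, 8), nn.ReLU()),
        nn.Dropout(p=0.5),
        nn.Linear(8, 2),
    )


net = make_net()

# children(): direct sub-modules only.
# modules(): net itself plus every nested descendant.
n_children = len(list(net.children()))
n_modules = len(list(net.modules()))

# Save the state to a buffer, then load it into a freshly built copy.
buffer = io.BytesIO()
torch.save(net.state_dict(), buffer)
buffer.seek(0)
clone = make_net()
clone.load_state_dict(torch.load(buffer))

# eval() turns Dropout off, so both networks give identical outputs.
net.eval()
clone.eval()
x = torch.randn(5, 4)
with torch.no_grad():
    same_output = torch.equal(net(x), clone(x))

# train() sets the training flag again on net and all its sub-modules.
net.train()
print(n_children, n_modules, same_output, net.training)  # 3 6 True True
```

Only the state_dict is stored, so the loading side has to rebuild the same architecture before calling load_state_dict.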

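9.7 Data augmentation

Neural networks need large amounts of data, so data augmentation derives additional training samples from the data already available. With limited data, the usual ways to limit overfitting are:

1. Reduce the number of parameters

2. Regularization

3. Data augmentation

Common data augmentation techniques:

1. Flipping

transforms.RandomHorizontalFlip() # horizontal flip

transforms.RandomVerticalFlip() # vertical flip

# Random means the flip is applied at random (with probability 0.5 by default)

2. Rotation

transforms.RandomRotation(15) # rotate by a random angle in [-15°, 15°]

transforms.RandomChoice([transforms.RandomRotation((a, a)) for a in (90, 180, 270)]) # rotate by 90°, 180°, or 270°, chosen at random

3. Random shifting and cropping

# scaling

transforms.Resize([32, 32])

# partial crop

transforms.RandomCrop([28, 28])

transforms.Compose([...]) # chains several transforms, similar to nn.Sequential

4. Noise

5. GAN (generative adversarial network)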

10. CIFAR10 and ResNet in practice

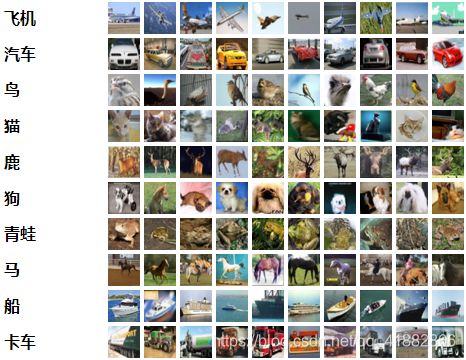

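10.1 The CIFAR10 dataset

The CIFAR-10 dataset consists of 60000 32x32 color images in 10 classes, with 6000 images per class. There are 50000 training images and 10000 test images.

The dataset is divided into five training batches and one test batch, each with 10000 images. The test batch contains exactly 1000 randomly selected images from each class. The training batches contain the remaining images in random order, so an individual training batch may contain more images from one class than from another. Taken together, the five training batches contain exactly 5000 images from each class.

Below are the classes in the dataset, along with 10 random images from each class:

The classes are completely mutually exclusive; there is no overlap between automobiles and trucks.

If loading the dataset from within PyCharm is slow, besides downloading through a proxy you can also try the following:

1. Download the dataset to your local machine and open its path in a browser

2. Ctrl + left-click the dataset name to open the dataset's .py file, and find the url

3. Change the url to 'file:///local dataset path'

4. Note: do not close the browser while the download runs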

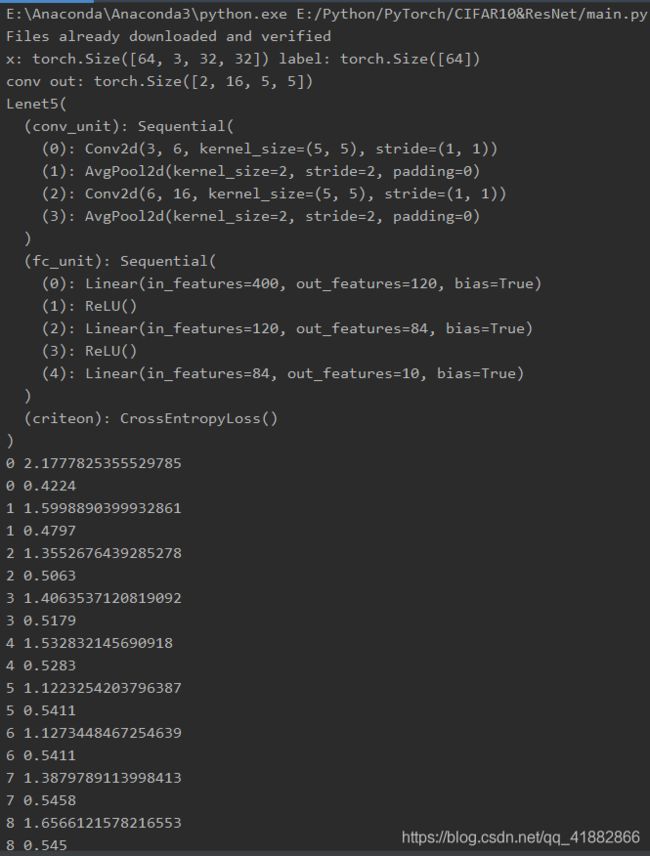

10.2 LeNet-5 in practice

First, build LeNet-5 following the model in the paper

import torch
from torch import nn
# from torch.nn import functional as F


class Lenet5(nn.Module):
    """
    for cifar10 dataset
    """

    def __init__(self):
        super(Lenet5, self).__init__()

        self.conv_unit = nn.Sequential(
            # x: [b, 3, 32, 32] => [b, 6, 28, 28]
            nn.Conv2d(3, 6, kernel_size=5, stride=1, padding=0),
            # [b, 6, 28, 28] => [b, 6, 14, 14]
            nn.AvgPool2d(kernel_size=2, stride=2, padding=0),
            # second conv layer: [b, 6, 14, 14] => [b, 16, 10, 10]
            nn.Conv2d(6, 16, kernel_size=5, stride=1, padding=0),
            # [b, 16, 10, 10] => [b, 16, 5, 5]
            nn.AvgPool2d(kernel_size=2, stride=2, padding=0),
            # the output still has to be flattened; this is done in forward()
        )
        # fc unit
        self.fc_unit = nn.Sequential(
            nn.Linear(16*5*5, 120),
            nn.ReLU(),
            nn.Linear(120, 84),
            nn.ReLU(),
            nn.Linear(84, 10)
        )

        # sanity check of the conv output shape: [b, 3, 32, 32] => [b, 16, 5, 5]
        tmp = torch.randn(2, 3, 32, 32)
        out = self.conv_unit(tmp)
        print('conv out:', out.shape)

        # use cross entropy loss for classification; MSE is for regression
        # self.criteon = nn.MSELoss()
        self.criteon = nn.CrossEntropyLoss()

    def forward(self, x):
        """
        :param x: [b, 3, 32, 32]
        :return: logits of shape [b, 10]
        """
        batchsz = x.size(0)
        # [b, 3, 32, 32] => [b, 16, 5, 5]
        x = self.conv_unit(x)
        # [b, 16, 5, 5] => [b, 16*5*5]
        x = x.view(batchsz, 16*5*5)  # flatten; -1 also works for the last dimension
        # [b, 16*5*5] => [b, 10]
        logits = self.fc_unit(x)  # raw scores, before softmax

        # nn.CrossEntropyLoss applies softmax internally, so return the logits
        # pred = F.softmax(logits, dim=1)
        # loss = self.criteon(logits, y)
        return logits


def main():
    net = Lenet5()
    tmp = torch.randn(2, 3, 32, 32)
    out = net(tmp)
    # [b, 10]
    print('lenet out:', out.shape)


if __name__ == '__main__':
    main()
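
The 16*5*5 input size of the first fully connected layer follows from the standard output-size formula for convolution and pooling, floor((size + 2*padding - kernel) / stride) + 1. A small sketch of that arithmetic, where conv_out is a hypothetical helper and not part of PyTorch:

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of a conv or pooling layer along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32                                  # CIFAR10 images are 32x32
size = conv_out(size, kernel=5)            # Conv2d(3, 6, kernel_size=5)  -> 28
size = conv_out(size, kernel=2, stride=2)  # AvgPool2d(2, 2)              -> 14
size = conv_out(size, kernel=5)            # Conv2d(6, 16, kernel_size=5) -> 10
size = conv_out(size, kernel=2, stride=2)  # AvgPool2d(2, 2)              -> 5

flat_features = 16 * size * size
print(size, flat_features)  # 5 400
```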

Load the dataset, train the model, and compute the accuracy

import torch
from torch.utils.data import DataLoader
from torchvision import datasets, transforms
from torch import nn, optim

from lenet5 import Lenet5


def main():
    batchsz = 64

    # download=True does nothing if the files already exist under 'cifar'
    cifar_train = datasets.CIFAR10('cifar', True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_train = DataLoader(cifar_train, batch_size=batchsz, shuffle=True)

    cifar_test = datasets.CIFAR10('cifar', False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_test = DataLoader(cifar_test, batch_size=batchsz, shuffle=True)

    x, label = next(iter(cifar_train))  # fetch one batch from the iterator
    print('x:', x.shape, 'label:', label.shape)

    # fall back to the CPU when no GPU is available
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    model = Lenet5().to(device)

    criteon = nn.CrossEntropyLoss()
    optimizer = optim.Adam(model.parameters(), lr=1e-3)
    print(model)

    for epoch in range(1000):

        model.train()
        for batchidx, (x, label) in enumerate(cifar_train):
            # x: [b, 3, 32, 32]
            # label: [b]
            x, label = x.to(device), label.to(device)

            logits = model(x)  # forward
            # logits: [b, 10]
            # label: [b]
            # loss: scalar tensor
            loss = criteon(logits, label)

            # backprop
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

        # loss of the last batch in this epoch
        print(epoch, loss.item())

        model.eval()
        with torch.no_grad():
            # test
            total_correct = 0
            total_num = 0
            for x, label in cifar_test:
                x, label = x.to(device), label.to(device)

                # [b, 10]
                logits = model(x)
                # [b]
                pred = logits.argmax(dim=1)
                # [b] vs [b] => scalar tensor
                total_correct += torch.eq(pred, label).float().sum().item()
                total_num += x.size(0)

            acc = total_correct / total_num
            print(epoch, acc)


if __name__ == '__main__':
    main()
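
The accuracy computation in the test loop can be checked by hand on a tiny batch. The logits and labels below are made-up values for illustration only.

```python
import torch

# Hypothetical logits for 4 samples and 3 classes.
logits = torch.tensor([[2.0, 0.1, 0.3],
                       [0.2, 0.1, 1.5],
                       [0.9, 1.1, 0.4],
                       [3.0, 0.0, 0.0]])
label = torch.tensor([0, 2, 0, 0])

pred = logits.argmax(dim=1)                           # index of the largest logit per row
correct = torch.eq(pred, label).float().sum().item()  # number of matching predictions
acc = correct / label.size(0)
print(pred.tolist(), correct, acc)  # [0, 2, 1, 0] 3.0 0.75
```

Taking argmax directly on the logits is enough here, because softmax does not change which class has the largest score.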

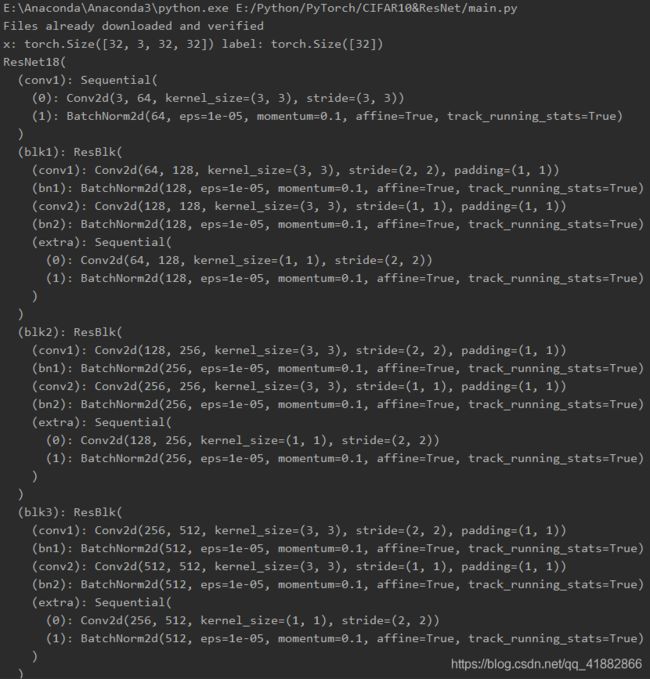

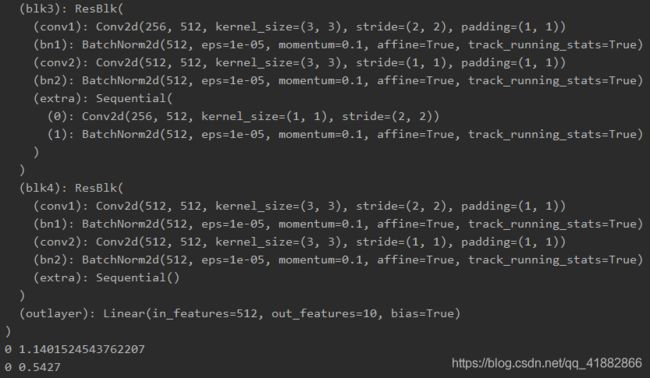

10.3 ResNet in practice

Note: the residual block here does not strictly follow the implementation in the paper; it has been slightly modified

resnet.py

import torch
from torch import nn
from torch.nn import functional as F


class ResBlk(nn.Module):
    """
    resnet block
    """

    def __init__(self, ch_in, ch_out, stride=1):
        """
        :param ch_in: number of input channels
        :param ch_out: number of output channels
        :param stride: stride of the first conv; values > 1 downsample h and w
        """
        super(ResBlk, self).__init__()

        self.conv1 = nn.Conv2d(ch_in, ch_out, kernel_size=3, stride=stride, padding=1)
        self.bn1 = nn.BatchNorm2d(ch_out)
        self.conv2 = nn.Conv2d(ch_out, ch_out, kernel_size=3, stride=1, padding=1)
        self.bn2 = nn.BatchNorm2d(ch_out)

        # identity shortcut by default
        self.extra = nn.Sequential()
        # a 1x1 conv shortcut is needed whenever the output shape differs from the
        # input shape, i.e. when the channel count or the stride changes; otherwise
        # the addition in forward() would rely on silent broadcasting
        if ch_out != ch_in or stride != 1:
            # [b, ch_in, h, w] => [b, ch_out, h', w']
            self.extra = nn.Sequential(
                nn.Conv2d(ch_in, ch_out, kernel_size=1, stride=stride),
                nn.BatchNorm2d(ch_out)
            )

    def forward(self, x):
        """
        :param x: [b, ch, h, w]
        :return: [b, ch_out, h', w']
        """
        out = F.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        # short cut
        # extra module: [b, ch_in, h, w] => [b, ch_out, h', w']
        # element-wise add: [b, ch_out, h', w'] with [b, ch_out, h', w']
        out = self.extra(x) + out

        return out


class ResNet18(nn.Module):

    def __init__(self):
        super(ResNet18, self).__init__()

        # [b, 3, 32, 32] => [b, 64, 10, 10]
        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=3, stride=3, padding=0),
            nn.BatchNorm2d(64)
        )
        # followed by 4 blocks
        # [b, 64, h, w] => [b, 128, h', w']
        self.blk1 = ResBlk(64, 128, stride=2)
        # [b, 128, h, w] => [b, 256, h', w']
        self.blk2 = ResBlk(128, 256, stride=2)
        # [b, 256, h, w] => [b, 512, h', w']
        self.blk3 = ResBlk(256, 512, stride=2)
        # [b, 512, h, w] => [b, 512, h', w'] (kept at 512 channels instead of 1024)
        self.blk4 = ResBlk(512, 512, stride=2)

        self.outlayer = nn.Linear(512*1*1, 10)

    def forward(self, x):
        """
        :param x: [b, 3, 32, 32]
        :return: logits of shape [b, 10]
        """
        x = F.relu(self.conv1(x))

        # [b, 64, h, w] => [b, 512, h', w']
        x = self.blk1(x)
        x = self.blk2(x)
        x = self.blk3(x)
        x = self.blk4(x)

        # print('after conv:', x.shape)  # [b, 512, 1, 1]
        # [b, 512, h, w] => [b, 512, 1, 1]
        x = F.adaptive_avg_pool2d(x, [1, 1])
        # print('after pool', x.shape)
        x = x.view(x.size(0), -1)
        x = self.outlayer(x)

        return x


def main():
    blk = ResBlk(64, 128, stride=2)
    tmp = torch.randn(2, 64, 16, 16)
    out = blk(tmp)
    # [2, 128, 8, 8]
    print('block:', out.shape)

    x = torch.randn(2, 3, 32, 32)
    model = ResNet18()
    out = model(x)
    # [2, 10]
    print('resnet:', out.shape)


if __name__ == '__main__':
    main()
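
To see how the spatial size shrinks through this network, the convolutional path can be traced with the usual output-size formula. conv_out is a hypothetical helper; the sketch also checks that a 1x1 shortcut convolution with the same stride produces the same size as the two 3x3 convolutions of a block.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of a conv layer along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


sizes = [32]                                            # CIFAR10 input
sizes.append(conv_out(sizes[-1], kernel=3, stride=3))   # first conv: 3x3, stride 3, no padding

for _ in range(4):                                      # blk1 .. blk4, all with stride=2
    main = conv_out(sizes[-1], kernel=3, stride=2, padding=1)  # first 3x3 conv downsamples
    main = conv_out(main, kernel=3, stride=1, padding=1)       # second 3x3 conv keeps the size
    shortcut = conv_out(sizes[-1], kernel=1, stride=2)         # 1x1 conv with the same stride
    assert main == shortcut
    sizes.append(main)

print(sizes)  # [32, 10, 5, 3, 2, 1]
```

With 32x32 inputs the convolutional path reaches 1x1 in the last block, which matches the 512*1*1 input size of the output layer.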

main.py

import torch
from torch.utils.data import DataLoader
from torchvision import datasets, transforms
from torch import nn, optim

# from lenet5 import Lenet5
from resnet import ResNet18


def main():
    batchsz = 32

    # download=True does nothing if the files already exist under 'cifar'
    cifar_train = datasets.CIFAR10('cifar', True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        # train and test data must be normalized in the same way
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    cifar_train = DataLoader(cifar_train, batch_size=batchsz, shuffle=True)

    cifar_test = datasets.CIFAR10('cifar', False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        # resnet
        # transforms.RandomRotation
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    cifar_test = DataLoader(cifar_test, batch_size=batchsz, shuffle=True)

    x, label = next(iter(cifar_train))  # fetch one batch from the iterator
    print('x:', x.shape, 'label:', label.shape)

    # fall back to the CPU when no GPU is available
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    # model = Lenet5().to(device)
    model = ResNet18().to(device)

    criteon = nn.CrossEntropyLoss()
    optimizer = optim.Adam(model.parameters(), lr=1e-3)
    print(model)

    for epoch in range(1000):

        model.train()
        for batchidx, (x, label) in enumerate(cifar_train):
            # x: [b, 3, 32, 32]
            # label: [b]
            x, label = x.to(device), label.to(device)

            logits = model(x)  # forward
            # logits: [b, 10]
            # label: [b]
            # loss: scalar tensor
            loss = criteon(logits, label)

            # backprop
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

        # loss of the last batch in this epoch
        print(epoch, loss.item())

        model.eval()
        with torch.no_grad():
            # test
            total_correct = 0
            total_num = 0
            for x, label in cifar_test:
                x, label = x.to(device), label.to(device)

                # [b, 10]
                logits = model(x)
                # [b]
                pred = logits.argmax(dim=1)
                # [b] vs [b] => scalar tensor
                total_correct += torch.eq(pred, label).float().sum().item()
                total_num += x.size(0)

            acc = total_correct / total_num
            print(epoch, acc)


if __name__ == '__main__':
    main()
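
transforms.Normalize computes (x - mean) / std separately for each channel. The sketch below reproduces that arithmetic with plain tensor operations, using the mean and std values from the script above; the random tensor is only a stand-in for a ToTensor() output.

```python
import torch

# Per-channel statistics, reshaped to [3, 1, 1] so they broadcast over h and w.
mean = torch.tensor([0.485, 0.456, 0.406]).view(3, 1, 1)
std = torch.tensor([0.229, 0.224, 0.225]).view(3, 1, 1)

x = torch.rand(3, 32, 32)        # hypothetical image with values in [0, 1]
normalized = (x - mean) / std    # the per-channel operation Normalize performs
restored = normalized * std + mean

print(normalized.shape)                          # torch.Size([3, 32, 32])
print(torch.allclose(restored, x, atol=1e-6))    # True
```

Whatever statistics are chosen, the training and test pipelines should apply the same normalization, otherwise the model is evaluated on inputs from a different value range than it was trained on.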