Unity AI: Speech Recognition, Speech Synthesis, and Human-Computer Interaction (Part 1)

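About Me

Hi everyone, I'm VAIN. This is my first article on CSDN. Until now I have been writing on my Weibo blog, and from here on I will also post technical write-ups on CSDN. Thanks in advance for your support!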

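Project Overview

A project at work required it, so today I'm sharing how to build an AI assistant in Unity. There are not many articles on this topic online, and the ones that exist are not very complete, so I hope this write-up helps.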

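Approach

1. Convert what we say into text (speech recognition).

2. Have the AI assistant understand what we said (human-computer interaction).

3. Convert the assistant's reply into speech (speech synthesis).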
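
The three steps above can be wired together roughly as follows. This is only a minimal sketch: `AssistantPipeline` and `Respond` are names I made up for illustration, and the three stages are passed in as delegates so the sketch does not depend on any particular SDK.

```csharp
using System;

// Hypothetical glue class (not part of the iFlytek or Baidu SDKs).
public sealed class AssistantPipeline
{
    private readonly Func<byte[], string> recognize;   // step 1: speech -> text
    private readonly Func<string, string> chat;        // step 2: text -> reply text
    private readonly Func<string, byte[]> synthesize;  // step 3: reply text -> audio

    public AssistantPipeline(
        Func<byte[], string> recognize,
        Func<string, string> chat,
        Func<string, byte[]> synthesize)
    {
        this.recognize = recognize ?? throw new ArgumentNullException(nameof(recognize));
        this.chat = chat ?? throw new ArgumentNullException(nameof(chat));
        this.synthesize = synthesize ?? throw new ArgumentNullException(nameof(synthesize));
    }

    // Runs the three stages in order. Returns an empty array when nothing was recognized.
    public byte[] Respond(byte[] recordedAudio)
    {
        string question = recognize(recordedAudio);
        if (string.IsNullOrWhiteSpace(question))
        {
            return new byte[0];
        }
        string answer = chat(question);
        return synthesize(answer);
    }
}
```

In the real project each delegate would call into the code shown in the later steps.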

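Preparation

I built this for PC, using the iFlytek SDK and the Baidu SDK. As for why two SDKs: I would rather not, but I don't know C++. A small complaint while I'm here: iFlytek's technical documentation reads as if it were written only for its own engineers.

iFlytek: speech recognition and speech synthesis (Windows MSC)

Baidu: human-computer interaction (UNIT)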

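Getting Started

1. First, download the SDK from iFlytek. I won't go into how to download it or the platform setup here; there are plenty of guides online.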

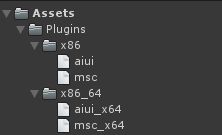

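2. Import the SDK into your Unity project. msc is what speech recognition and speech synthesis use. aiui (human-computer interaction) is not needed, so you do not have to import it. When I tried calling the aiui interface, it kept failing to find the entry points for reasons I could not work out, which is why I use Baidu UNIT instead.

Note that an SDK you download yourself only works with your own appid. If you use an SDK that someone else downloaded, you have to use their appid.

3. The iFlytek SDK is written in C++, so to use it from C# we have to call it as an unmanaged DLL (P/Invoke).

I'll just paste the declarations here: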

    using System;
    using System.Runtime.InteropServices;

    // P/Invoke declarations for the iFlytek MSC native library (msc_x64.dll).
    // Functions that return a native string (session IDs, results) are declared
    // as IntPtr so the marshaler does not try to free memory owned by the SDK.
    public class MSCDLL
    {
        #region Login and logout
        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int MSPLogin(string usr, string pwd, string parameters);

        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int MSPLogout();
        #endregion

        #region Speech recognition
        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern IntPtr QISRSessionBegin(string grammarList, string _params, ref int errorCode);

        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int QISRAudioWrite(IntPtr sessionID, byte[] waveData, uint waveLen, AudioStatus audioStatus, ref EpStatus epStatus, ref RecogStatus recogStatus);

        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern IntPtr QISRGetResult(IntPtr sessionID, ref RecogStatus rsltStatus, int waitTime, ref int errorCode);

        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int QISRSessionEnd(IntPtr sessionID, string hints);

        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int QISRBuildGrammar(IntPtr grammarType, string grammarContent, uint grammarLength, string _params, GrammarCallBack callback, IntPtr userData);

        // udata is a raw native pointer, so it is declared as IntPtr rather than object.
        [UnmanagedFunctionPointer(CallingConvention.Cdecl)]
        public delegate int GrammarCallBack(int errorCode, string info, IntPtr udata);

        // The original post loaded this one from "msc.dll"; msc_x64 is used here to match the other imports.
        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern IntPtr QISRUploadData(string sessionID, string dataName, byte[] userData, uint length, string paramValue, ref int errorCode);
        #endregion

        #region Voice wake-up
        // Callback invoked by msc with wake-up results or errors.
        [UnmanagedFunctionPointer(CallingConvention.Cdecl)]
        public delegate int ivw_ntf_handler(string sessionID, int msg, int param1, int param2, IntPtr info, IntPtr userData);

        // Call QIVWSessionBegin(...) to start a wake-up session.
        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern IntPtr QIVWSessionBegin(string grammarList, string _params, ref int errorCode);

        // Call QIVWAudioWrite(...) to write the audio data in chunks.
        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int QIVWAudioWrite(string sessionID, byte[] waveData, uint waveLen, AudioStatus audioStatus);

        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int QIVWGetResInfo(string resPath, string resInfo, uint infoLen, string _params);

        // Call QIVWRegisterNotify(...) to register the callback with msc.
        // On a successful wake-up, msc calls it with the wake-up data; on failure, msc calls it with the error information.
        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int QIVWRegisterNotify(string sessionID, [MarshalAs(UnmanagedType.FunctionPtr)] ivw_ntf_handler msgProcCb, IntPtr userData);

        // Call QIVWSessionEnd(...) to end the wake-up session yourself.
        [DllImport("msc_x64", CallingConvention = CallingConvention.StdCall)]
        public static extern int QIVWSessionEnd(string sessionID, string hints);
        #endregion

        #region Speech synthesis
        [DllImport("msc_x64", CallingConvention = CallingConvention.Winapi)]
        public static extern IntPtr QTTSSessionBegin(string _params, ref int errorCode);

        [DllImport("msc_x64", CallingConvention = CallingConvention.Winapi)]
        public static extern int QTTSTextPut(IntPtr sessionID, string textString, uint textLen, string _params);

        [DllImport("msc_x64", CallingConvention = CallingConvention.Winapi)]
        public static extern IntPtr QTTSAudioGet(IntPtr sessionID, ref uint audioLen, ref SynthStatus synthStatus, ref int errorCode);

        [DllImport("msc_x64", CallingConvention = CallingConvention.Winapi)]
        public static extern IntPtr QTTSAudioInfo(IntPtr sessionID);

        [DllImport("msc_x64", CallingConvention = CallingConvention.Winapi)]
        public static extern int QTTSSessionEnd(IntPtr sessionID, string hints);
        #endregion
    }
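
The declarations above refer to several enum types (`AudioStatus`, `EpStatus`, `RecogStatus`, `SynthStatus`, `Errors`) that are not listed in this post. Below is a minimal sketch of what they could look like, limited to the members this article actually uses. The numeric values are what I understand the MSC C headers to define, so treat them as an assumption and check them against the headers shipped with your SDK.

```csharp
// Assumed values; verify against the C headers in your own SDK download.
public enum AudioStatus
{
    MSP_AUDIO_SAMPLE_FIRST = 1,    // first chunk of audio
    MSP_AUDIO_SAMPLE_CONTINUE = 2, // more audio follows
    MSP_AUDIO_SAMPLE_LAST = 4      // last chunk of audio
}

public enum EpStatus
{
    MSP_EP_LOOKING_FOR_SPEECH = 0  // no speech detected yet
}

public enum RecogStatus
{
    MSP_REC_STATUS_SUCCESS = 0,    // partial result available
    MSP_REC_STATUS_COMPLETE = 5    // recognition finished
}

public enum SynthStatus
{
    MSP_TTS_FLAG_STILL_HAVE_DATA = 1, // more audio to fetch
    MSP_TTS_FLAG_DATA_END = 2         // synthesis finished
}

public enum Errors
{
    MSP_SUCCESS = 0
}
```

Because C# enums default to `int`, passing them by `ref` lines up with the `int*` parameters on the native side.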

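4. Now for the speech recognition part. The official C# sample recognizes speech from an audio file, which is not what we want, so we modify it: skip reading the audio file and take the audio data directly from audio.clip.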

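    /// <summary>
    /// Speech recognition
    /// </summary>
    /// <param name="audio_content">Audio data as byte[]</param>
    /// <param name="session_begin_params">Recognition parameters: language, domain, accent, and so on.
    /// For example: "sub = iat, domain = iat, language = zh_cn, accent = mandarin, sample_rate = 16000, result_type = plain, result_encoding = utf-8"</param>
    private void AudioDiscern(byte[] audio_content, string session_begin_params)
    {
        // Note: the QISR* calls below take different arguments from the raw MSCDLL imports,
        // so they are wrapper methods in the same class (not listed in this post).
        StringBuilder result = new StringBuilder();            // final recognition result
        var aud_stat = AudioStatus.MSP_AUDIO_SAMPLE_CONTINUE;  // audio status
        var ep_stat = EpStatus.MSP_EP_LOOKING_FOR_SPEECH;      // endpoint status
        var rec_stat = RecogStatus.MSP_REC_STATUS_SUCCESS;     // recognition status
        int errcode = (int)Errors.MSP_SUCCESS;
        int totalLength = 0; // total length of the recognized text, used to check against the buffer limit

        Debug.Log("Running speech recognition...");
        QISRSessionBegin(null, session_begin_params, ref errcode, ref session_id);

        // Write all the audio in one go, then send an empty "last" chunk to mark the end.
        QISRAudioWrite(session_id, audio_content, (uint)audio_content.Length, aud_stat, ref ep_stat, ref rec_stat);
        QISRAudioWrite(session_id, null, 0, AudioStatus.MSP_AUDIO_SAMPLE_LAST, ref ep_stat, ref rec_stat);

        // Keep fetching results until recognition completes; stop on error so the loop cannot spin forever.
        while (rec_stat != RecogStatus.MSP_REC_STATUS_COMPLETE && errcode == (int)Errors.MSP_SUCCESS)
        {
            QISRGetResult(totalLength, result, session_id, ref rec_stat, 0, ref errcode);
            Thread.Sleep(150); // avoid hogging the CPU (this blocks the calling thread)
        }

        Debug.Log("Dictation finished!\nResult: " + result.ToString());
        Player_Audio_Value = result.ToString();
        QISRSessionEnd(session_id, "");
    }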

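audio_content is the audio data we pass in. The remaining question is how to convert audio.clip directly into a byte[]. See https://blog.csdn.net/qq_28745613/article/details/84874752 for one approach, though it still needs optimizing.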
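
To make the conversion concrete, here is a minimal sketch of turning float samples into 16-bit PCM bytes. `PcmConverter` and `FloatToPcm16` are names I chose for illustration, and the sketch assumes the recognizer expects 16-bit little-endian PCM, which matches the `sample_rate = 16000` style parameters above but should be checked against your own settings.

```csharp
using System;

// Hypothetical helper, not part of Unity or the iFlytek SDK.
public static class PcmConverter
{
    // Converts float samples in [-1, 1] to 16-bit little-endian PCM bytes.
    public static byte[] FloatToPcm16(float[] samples)
    {
        if (samples == null) throw new ArgumentNullException(nameof(samples));

        byte[] pcm = new byte[samples.Length * 2];
        for (int i = 0; i < samples.Length; i++)
        {
            // Clamp first so out-of-range input cannot overflow the short.
            float clamped = Math.Max(-1f, Math.Min(1f, samples[i]));
            short value = (short)Math.Round(clamped * short.MaxValue);
            pcm[i * 2] = (byte)(value & 0xFF);            // low byte
            pcm[i * 2 + 1] = (byte)((value >> 8) & 0xFF); // high byte
        }
        return pcm;
    }
}
```

In Unity the float samples would come from `AudioClip.GetData` on a clip recorded with `Microphone.Start` at 16000 Hz, using a buffer of `clip.samples * clip.channels` floats. The resulting byte[] is what gets passed in as audio_content.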

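5. Next comes the human-computer interaction with Baidu UNIT. We pass the text produced by speech recognition to UNIT. The official examples are fairly detailed.

The steps, roughly:

1. Set the request parameters, which are sent as JSON. I use the bot (service) API here. Note that the skill API and the bot API take different request parameters. Read the documentation carefully: I didn't, and ended up naively asking customer support why I was getting errors.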

    {
        "log_id": "your own value",
        "version": "2.0",
        "service_id": "your bot ID",
        "session_id": "",
        "request": {
            "query": "今天的天气怎么样",
            "user_id": "your own value"
        },
        "dialog_state": {
            "contexts": {
                "SYS_REMEMBERED_SKILLS": [""]
            }
        }
    }

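Send the request parameters with an HTTP POST to https://aip.baidubce.com/rpc/2.0/unit/service/chat?access_token= followed by your own token. The response is also JSON.

If you are unsure how to use it, this thread is very helpful: https://ai.baidu.com/forum/topic/show/944007 (if only iFlytek's documentation were as good as Baidu's).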
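
As a rough illustration of the request described above, the sketch below builds the JSON body by hand and posts it with `HttpClient`. `UnitClient`, `BuildRequestJson`, `JsonEscape`, and `ChatAsync` are hypothetical names of mine. It also assumes `System.Net.Http` is available in your Unity API compatibility level; in a Unity project you may prefer `UnityWebRequest` and a JSON library instead.

```csharp
using System;
using System.Net.Http;
using System.Text;
using System.Threading.Tasks;

// Hypothetical helper, not an official Baidu client.
public static class UnitClient
{
    private const string Endpoint = "https://aip.baidubce.com/rpc/2.0/unit/service/chat";
    private static readonly HttpClient Http = new HttpClient();

    // Escapes characters that must not appear raw inside a JSON string.
    public static string JsonEscape(string s)
    {
        var sb = new StringBuilder();
        foreach (char c in s ?? string.Empty)
        {
            switch (c)
            {
                case '"': sb.Append("\\\""); break;
                case '\\': sb.Append("\\\\"); break;
                case '\n': sb.Append("\\n"); break;
                case '\r': sb.Append("\\r"); break;
                case '\t': sb.Append("\\t"); break;
                default:
                    if (c < 0x20) sb.Append("\\u").Append(((int)c).ToString("x4"));
                    else sb.Append(c);
                    break;
            }
        }
        return sb.ToString();
    }

    // Builds the same JSON shape as the request shown in this article.
    public static string BuildRequestJson(string logId, string serviceId, string sessionId, string query, string userId)
    {
        return "{"
            + "\"log_id\":\"" + JsonEscape(logId) + "\","
            + "\"version\":\"2.0\","
            + "\"service_id\":\"" + JsonEscape(serviceId) + "\","
            + "\"session_id\":\"" + JsonEscape(sessionId) + "\","
            + "\"request\":{\"query\":\"" + JsonEscape(query) + "\",\"user_id\":\"" + JsonEscape(userId) + "\"},"
            + "\"dialog_state\":{\"contexts\":{\"SYS_REMEMBERED_SKILLS\":[\"\"]}}"
            + "}";
    }

    // Posts the body and returns the raw JSON response text.
    public static async Task<string> ChatAsync(string accessToken, string requestJson)
    {
        string url = Endpoint + "?access_token=" + Uri.EscapeDataString(accessToken);
        using (var content = new StringContent(requestJson, Encoding.UTF8, "application/json"))
        using (HttpResponseMessage response = await Http.PostAsync(url, content))
        {
            response.EnsureSuccessStatusCode();
            return await response.Content.ReadAsStringAsync();
        }
    }
}
```

Pass an empty session_id on the first turn, then reuse the session_id from each response for the following turns.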

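    /// <summary>
    /// Sends the request and returns the reply
    /// </summary>
    /// Parameter: my question
    // ... (the method signature, the POST request, and the deserialization of the response JSON
    // into value are missing from this listing; only the trailing "(result);" of that code survives)

        // Store the session_id; it is what makes multi-turn dialogue work
        session_id = value.result.session_id;

        // Take the origin of the highest-priority response
        origin = value.result.response_list[0].origin;

        //for (int i = 0; i < value.result.response_list.Count; i++)
        //{
        //    for (int j = 0; j < value.result.response_list[i].action_list.Count; j++)
        //    {
        //        // Print every returned say and confidence
        //        Debug.Log(value.result.response_list[i].action_list[j].say + value.result.response_list[i].action_list[j].confidence);
        //    }
        //}

        // Return the say of the highest-priority response
        return value.result.response_list[0].action_list[0].say;
    }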
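
The code above simply takes `response_list[0].action_list[0]`, which throws if the response carries an error instead of a result. As a hedged alternative, here is a sketch that walks every action and returns the `say` with the highest `confidence`, falling back to a default text when nothing usable comes back. The field names mirror the ones used above; the class names, `PickBestSay`, and the `double` type for `confidence` are my own assumptions.

```csharp
using System.Collections.Generic;

// Hypothetical data classes mirroring the response fields used in this article.
public class UnitAction { public string say; public double confidence; }
public class UnitResponseItem { public string origin; public List<UnitAction> action_list = new List<UnitAction>(); }
public class UnitResult { public string session_id; public List<UnitResponseItem> response_list = new List<UnitResponseItem>(); }
public class UnitReply { public UnitResult result; }

public static class UnitReplyPicker
{
    // Returns the say with the highest confidence, or fallback if there is none.
    public static string PickBestSay(UnitReply reply, string fallback)
    {
        if (reply == null || reply.result == null || reply.result.response_list == null)
        {
            return fallback;
        }

        UnitAction best = null;
        foreach (UnitResponseItem item in reply.result.response_list)
        {
            if (item == null || item.action_list == null) continue;
            foreach (UnitAction action in item.action_list)
            {
                if (action != null && (best == null || action.confidence > best.confidence))
                {
                    best = action;
                }
            }
        }
        return best != null && !string.IsNullOrEmpty(best.say) ? best.say : fallback;
    }
}
```

Whether highest confidence or first in the list is the better choice depends on how your bot is configured, so this is a design option rather than a fix.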

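6. The last step is to run speech synthesis on the returned text. For now the synthesized audio is saved to an audio file and then loaded into audio.clip.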

    private byte[] bytes;

    private void CreateAudio(string speekText, string szParams)
    {
        // QTTSSessionBegin, QTTSTextPut and QTTSSessionEnd here are wrapper methods around the MSCDLL imports (not listed in this post).
        QTTSSessionBegin(ref session_id, szParams, ref err_code);

        // textLen is a length in bytes; the encoding used here should match text_encoding in szParams.
        QTTSTextPut(session_id, speekText, (uint)Encoding.Default.GetByteCount(speekText), string.Empty);

        uint audio_len = 0;
        SynthStatus synth_status = SynthStatus.MSP_TTS_FLAG_STILL_HAVE_DATA;

        MemoryStream memoryStream = new MemoryStream();
        memoryStream.Write(new byte[44], 0, 44); // reserve 44 bytes for the WAV header

        while (true)
        {
            IntPtr source = MSCDLL.QTTSAudioGet(session_id, ref audio_len, ref synth_status, ref err_code);
            if (audio_len > 0)
            {
                // Copy the native audio buffer into managed memory and append it.
                byte[] array = new byte[audio_len];
                Marshal.Copy(source, array, 0, (int)audio_len);
                memoryStream.Write(array, 0, array.Length);
            }

            // Check for completion or an error before sleeping, so we do not wait after the last chunk.
            if (synth_status == SynthStatus.MSP_TTS_FLAG_DATA_END || err_code != (int)Errors.MSP_SUCCESS)
                break;

            Thread.Sleep(150);
        }

        QTTSSessionEnd(session_id, "");

        WAVE_Header header = getWave_Header((int)memoryStream.Length - 44); // create the WAV header
        byte[] headerByte = StructToBytes(header);                          // convert the header struct to bytes
        memoryStream.Position = 0;                                          // seek back to the start
        memoryStream.Write(headerByte, 0, headerByte.Length);               // write the header
        bytes = memoryStream.ToArray();
        memoryStream.Close();

        if (AI_audio_url != null)
        {
            if (File.Exists(AI_audio_url))
            {
                File.Delete(AI_audio_url);
            }
            File.WriteAllBytes(AI_audio_url, bytes);

            // The type argument of GetComponent was lost in the original listing; AudioSource is assumed here.
            StartCoroutine(OnAudioLoadAndPaly(AI_audio_url, audio_type, AI.GetComponent<AudioSource>()));
        }
    }
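
Writing a file and loading it back works, but the synthesized bytes can also be turned into float samples in memory. Here is a minimal sketch of that conversion. `WavSamples` and `Pcm16ToFloats` are names I made up, and the sketch assumes the audio is 16-bit little-endian mono PCM behind a 44-byte header, as produced above.

```csharp
using System;

// Hypothetical helper, not part of Unity or the iFlytek SDK.
public static class WavSamples
{
    // Converts 16-bit little-endian PCM (after skipping headerSize bytes) to floats in [-1, 1).
    public static float[] Pcm16ToFloats(byte[] wav, int headerSize = 44)
    {
        if (wav == null) throw new ArgumentNullException(nameof(wav));
        if (headerSize < 0 || headerSize > wav.Length) throw new ArgumentOutOfRangeException(nameof(headerSize));

        int sampleCount = (wav.Length - headerSize) / 2; // a trailing odd byte is ignored
        float[] samples = new float[sampleCount];
        for (int i = 0; i < sampleCount; i++)
        {
            int offset = headerSize + i * 2;
            short value = (short)(wav[offset] | (wav[offset + 1] << 8));
            samples[i] = value / 32768f;
        }
        return samples;
    }
}
```

In Unity, the samples could then go into a clip made with `AudioClip.Create("tts", samples.Length, 1, 16000, false)` followed by `clip.SetData(samples, 0)`, assuming the synthesis parameters request 16000 Hz mono output.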

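    // StructToBytes: converts a struct to a byte array (method body not included in this listing).

    // getWave_Header: creates the header struct and fills in its fields (method body not included in this listing).

    /// <summary>
    /// WAV audio header
    /// </summary>
    private struct WAVE_Header
    {
        public int RIFF_ID;            // "RIFF"
        public int File_Size;          // file size minus 8 bytes
        public int RIFF_Type;          // "WAVE"
        public int FMT_ID;             // "fmt "
        public int FMT_Size;           // size of the format chunk
        public short FMT_Tag;          // audio format tag
        public ushort FMT_Channel;     // number of channels
        public int FMT_SamplesPerSec;  // sample rate
        public int AvgBytesPerSec;     // bytes per second
        public ushort BlockAlign;      // bytes per sample frame
        public ushort BitsPerSample;   // bits per sample
        public int DATA_ID;            // "data"
        public int DATA_Size;          // size of the audio data in bytes
    }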
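
Since the bodies of the two helper methods are not listed, here is a sketch of how they might be written. This is my own reconstruction, not the original code: it assumes 16 kHz, mono, 16-bit PCM, and a little-endian machine (true for Windows PCs). The struct is repeated so the example stands on its own.

```csharp
using System;
using System.Runtime.InteropServices;

// Hypothetical reconstruction of the missing helpers.
public static class WavHeaderSketch
{
    [StructLayout(LayoutKind.Sequential, Pack = 1)]
    public struct WAVE_Header
    {
        public int RIFF_ID;
        public int File_Size;
        public int RIFF_Type;
        public int FMT_ID;
        public int FMT_Size;
        public short FMT_Tag;
        public ushort FMT_Channel;
        public int FMT_SamplesPerSec;
        public int AvgBytesPerSec;
        public ushort BlockAlign;
        public ushort BitsPerSample;
        public int DATA_ID;
        public int DATA_Size;
    }

    // Fills in a 44-byte PCM WAV header for dataLen bytes of mono 16-bit audio.
    public static WAVE_Header GetWaveHeader(int dataLen, int sampleRate = 16000)
    {
        return new WAVE_Header
        {
            RIFF_ID = 0x46464952,           // "RIFF" in little-endian byte order
            File_Size = dataLen + 36,       // total file size minus the first 8 bytes
            RIFF_Type = 0x45564157,         // "WAVE"
            FMT_ID = 0x20746D66,            // "fmt "
            FMT_Size = 16,                  // PCM format chunk size
            FMT_Tag = 1,                    // 1 = uncompressed PCM
            FMT_Channel = 1,                // mono
            FMT_SamplesPerSec = sampleRate,
            AvgBytesPerSec = sampleRate * 2, // sampleRate * channels * bytes per sample
            BlockAlign = 2,                 // channels * bytes per sample
            BitsPerSample = 16,
            DATA_ID = 0x61746164,           // "data"
            DATA_Size = dataLen
        };
    }

    // Copies a struct into a byte array through unmanaged memory.
    public static byte[] StructToBytes<T>(T value) where T : struct
    {
        int size = Marshal.SizeOf(typeof(T));
        byte[] bytes = new byte[size];
        IntPtr buffer = Marshal.AllocHGlobal(size);
        try
        {
            Marshal.StructureToPtr(value, buffer, false);
            Marshal.Copy(buffer, bytes, 0, size);
        }
        finally
        {
            Marshal.FreeHGlobal(buffer); // always release the unmanaged buffer
        }
        return bytes;
    }
}
```

If your synthesis parameters ask for a different sample rate, pass it as `sampleRate` so the header matches the audio.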

That completes the basic AI assistant.

Project: https://download.csdn.net/download/weixin_42208093/12446368

Demo: https://pan.baidu.com/s/1nmd2j_FCSsB8sSIN-u3eNA (extraction code: 5r1m)

Remember to swap in your own SDK, appid, and other parameters.