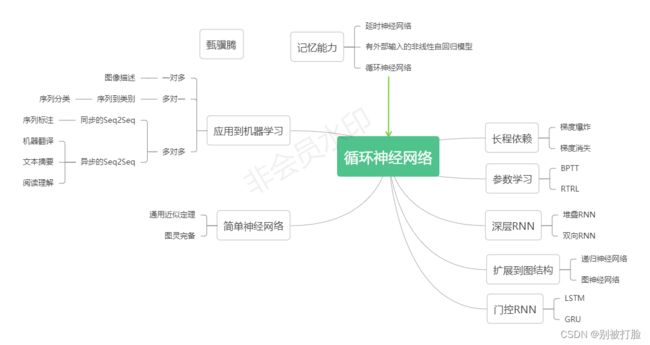

NNDL 实验七 循环神经网络(3)LSTM的记忆能力实验

文章目录

前言

一、6.3 LSTM的记忆能力实验

6.3.1 模型构建

6.3.1.1 LSTM层

6.3.1.2 模型汇总

6.3.2 模型训练

6.3.2.1 训练指定长度的数字预测模型

6.3.2.2 多组训练

6.3.2.3 损失曲线展示

【思考题1】LSTM与SRN实验结果对比,谈谈看法。(选做)

6.3.3 模型评价

6.3.3.1 在测试集上进行模型评价

6.3.3.2 模型在不同长度的数据集上的准确率变化图

【思考题2】LSTM与SRN在不同长度数据集上的准确度对比,谈谈看法。(选做)

6.3.3.3 LSTM模型门状态和单元状态的变化

【思考题3】分析LSTM中单元状态和门数值的变化图,并用自己的话解释该图。

总结

前言

首先,我这次写的还是很细,这次感觉学到了很多,像LSTM的原理以及LSTM计算过程的推导,这个是很重要的。

其次,是这两天做了好多抗原,希望疫情快点过去吧,河大加油。

最后,写的不太好,希望老师和各位大佬多教教我。

![]()

使用LSTM模型重新进行数字求和实验,验证LSTM模型的长程依赖能力。

![]()

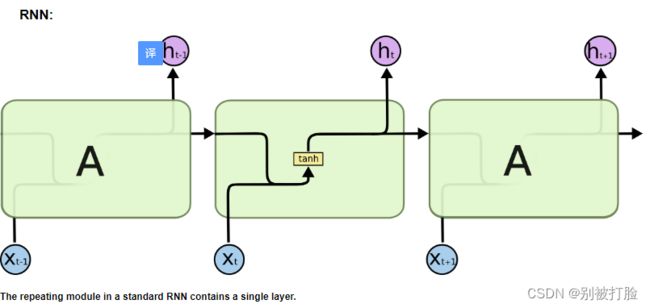

一、6.3 LSTM的记忆能力实验

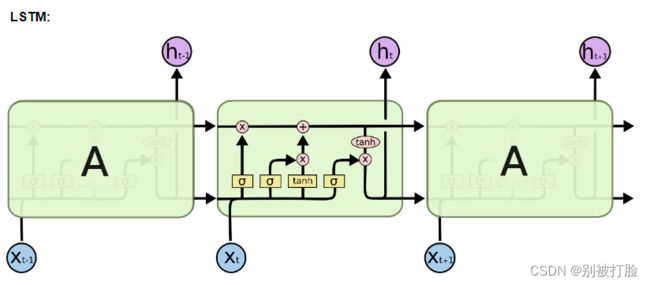

具体计算分为三步:

(1)计算三个“门”

(2)计算内部状态

(3)计算输出状态

通过学习这些门的设置,LSTM可以选择性地忽略或者强化当前的记忆或是输入信息,帮助网络更好地学习长句子的语义信息。

在本节中,我们使用LSTM模型重新进行数字求和实验,验证LSTM模型的长程依赖能力。

6.3.1 模型构建

在本实验中,我们将使用第6.1.2.4节中定义Model_RNN4SeqClass模型,并构建 LSTM 算子.只需要实例化 LSTM 算,并传入Model_RNN4SeqClass模型,就可以用 LSTM 进行数字求和实验

6.3.1.1 LSTM层

LSTM层的代码与SRN层结构相似,只是在SRN层的基础上增加了内部状态、输入门、遗忘门和输出门的定义和计算。这里LSTM层的输出也依然为序列的最后一个位置的隐状态向量。代码实现如下:

# coding=gbk

import torch.nn.functional as F

import torch.nn as nn

import torch

# 声明LSTM和相关参数

class LSTM(nn.Module):

def __init__(self, input_size, hidden_size, Wi_attr=None, Wf_attr=None, Wo_attr=None, Wc_attr=None,

Ui_attr=None, Uf_attr=None, Uo_attr=None, Uc_attr=None, bi_attr=None, bf_attr=None,

bo_attr=None, bc_attr=None):

super(LSTM, self).__init__()

self.input_size = input_size

self.hidden_size = hidden_size

# 初始化模型参数

if Wi_attr==None:

self.W_i=torch.zeros(size=[input_size, hidden_size],dtype=torch.float32)

self.W_i=torch.nn.Parameter(self.W_i)

else:

self.W_i=torch.nn.Parameter(Wi_attr)

if Wf_attr==None:

self.W_f = torch.zeros(size=[input_size, hidden_size], dtype=torch.float32)

self.W_f = torch.nn.Parameter(self.W_f)

else:

self.W_f = torch.nn.Parameter(Wf_attr)

if Wo_attr==None:

self.W_o = torch.zeros(size=[input_size, hidden_size], dtype=torch.float32)

self.W_o = torch.nn.Parameter(self.W_o)

else:

self.W_o = torch.nn.Parameter(Wo_attr)

if Wc_attr==None:

self.W_c = torch.zeros(size=[input_size, hidden_size], dtype=torch.float32)

self.W_c = torch.nn.Parameter(self.W_c)

else:

self.W_c = torch.nn.Parameter(Wc_attr)

if Ui_attr==None:

self.U_i = torch.zeros(size=[hidden_size, hidden_size], dtype=torch.float32)

self.U_i = torch.nn.Parameter(self.U_i)

else:

self.U_i = torch.nn.Parameter(Ui_attr)

if Uf_attr==None:

self.U_f = torch.zeros(size=[hidden_size, hidden_size], dtype=torch.float32)

self.U_f = torch.nn.Parameter(self.U_f)

else:

self.U_f = torch.nn.Parameter(Uf_attr)

if Uo_attr==None:

self.U_o = torch.zeros(size=[hidden_size, hidden_size], dtype=torch.float32)

self.U_o = torch.nn.Parameter(self.U_o)

else:

self.U_o = torch.nn.Parameter(Uo_attr)

if Uc_attr==None:

self.U_c = torch.zeros(size=[hidden_size, hidden_size], dtype=torch.float32)

self.U_c = torch.nn.Parameter(self.U_c)

else:

self.U_c = torch.nn.Parameter(Uc_attr)

if bi_attr==None:

self.b_i = torch.zeros(size=[1, hidden_size], dtype=torch.float32)

self.b_i = torch.nn.Parameter(self.b_i)

else:

self.b_i = torch.nn.Parameter(bi_attr)

if bf_attr==None:

self.b_f = torch.zeros(size=[1, hidden_size], dtype=torch.float32)

self.b_f = torch.nn.Parameter(self.b_f)

else:

self.b_f = torch.nn.Parameter(bf_attr)

if bo_attr==None:

self.b_o = torch.zeros(size=[1, hidden_size], dtype=torch.float32)

self.b_o = torch.nn.Parameter(self.b_o)

else:

self.b_o = torch.nn.Parameter(bo_attr)

if bc_attr==None:

self.b_c = torch.zeros(size=[1, hidden_size], dtype=torch.float32)

self.b_c = torch.nn.Parameter(self.b_c)

else:

self.b_c = torch.nn.Parameter(bc_attr)

# 初始化状态向量和隐状态向量

def init_state(self, batch_size):

hidden_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32)

cell_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32)

return hidden_state, cell_state

# 定义前向计算

def forward(self, inputs, states=None):

# inputs: 输入数据,其shape为batch_size x seq_len x input_size

batch_size, seq_len, input_size = inputs.shape

# 初始化起始的单元状态和隐状态向量,其shape为batch_size x hidden_size

if states is None:

states = self.init_state(batch_size)

hidden_state, cell_state = states

# 执行LSTM计算,包括:输入门、遗忘门和输出门、候选内部状态、内部状态和隐状态向量

for step in range(seq_len):

# 获取当前时刻的输入数据step_input: 其shape为batch_size x input_size

step_input = inputs[:, step, :]

# 计算输入门, 遗忘门和输出门, 其shape为:batch_size x hidden_size

I_gate = F.sigmoid(torch.matmul(step_input, self.W_i) + torch.matmul(hidden_state, self.U_i) + self.b_i)

F_gate = F.sigmoid(torch.matmul(step_input, self.W_f) + torch.matmul(hidden_state, self.U_f) + self.b_f)

O_gate = F.sigmoid(torch.matmul(step_input, self.W_o) + torch.matmul(hidden_state, self.U_o) + self.b_o)

# 计算候选状态向量, 其shape为:batch_size x hidden_size

C_tilde = F.tanh(torch.matmul(step_input, self.W_c) + torch.matmul(hidden_state, self.U_c) + self.b_c)

# 计算单元状态向量, 其shape为:batch_size x hidden_size

cell_state = F_gate * cell_state + I_gate * C_tilde

# 计算隐状态向量,其shape为:batch_size x hidden_size

hidden_state = O_gate * F.tanh(cell_state)

return hidden_stateWi_attr = torch.tensor([[0.1, 0.2], [0.1, 0.2]])

Wf_attr = torch.tensor([[0.1, 0.2], [0.1, 0.2]])

Wo_attr = torch.tensor([[0.1, 0.2], [0.1, 0.2]])

Wc_attr = torch.tensor([[0.1, 0.2], [0.1, 0.2]])

Ui_attr = torch.tensor([[0.0, 0.1], [0.1, 0.0]])

Uf_attr = torch.tensor([[0.0, 0.1], [0.1, 0.0]])

Uo_attr = torch.tensor([[0.0, 0.1], [0.1, 0.0]])

Uc_attr = torch.tensor([[0.0, 0.1], [0.1, 0.0]])

bi_attr = torch.tensor([[0.1, 0.1]])

bf_attr = torch.tensor([[0.1, 0.1]])

bo_attr = torch.tensor([[0.1, 0.1]])

bc_attr = torch.tensor([[0.1, 0.1]])

lstm = LSTM(2, 2, Wi_attr=Wi_attr, Wf_attr=Wf_attr, Wo_attr=Wo_attr, Wc_attr=Wc_attr,

Ui_attr=Ui_attr, Uf_attr=Uf_attr, Uo_attr=Uo_attr, Uc_attr=Uc_attr,

bi_attr=bi_attr, bf_attr=bf_attr, bo_attr=bo_attr, bc_attr=bc_attr)

inputs = torch.tensor([[[1, 0]]], dtype=torch.float32)

hidden_state = lstm(inputs)

print(hidden_state)运行结果为:

tensor([[0.0594, 0.0952]], grad_fn=

)

pytorch框架已经内置了LSTM的API torch.nn.LSTM,其与自己实现的SRN不同点在于其实现时采用了两个偏置,同时矩阵相乘时参数在输入数据前面,如下公式所示:

这里我们可以将自己实现的SRN和Paddle框架内置的SRN返回的结果进行打印展示,实现代码如下。

# 这里创建一个随机数组作为测试数据,数据shape为batch_size x seq_len x input_size

batch_size, seq_len, input_size = 8, 20, 32

inputs = torch.randn(size=[batch_size, seq_len, input_size])

# 设置模型的hidden_size

hidden_size = 32

paddle_lstm = nn.LSTM(input_size, hidden_size)

self_lstm = LSTM(input_size, hidden_size)

self_hidden_state = self_lstm(inputs)

paddle_outputs, (paddle_hidden_state, paddle_cell_state) = paddle_lstm(inputs)

print("self_lstm hidden_state: ", self_hidden_state.shape)

print("paddle_lstm outpus:", paddle_outputs.shape)

print("paddle_lstm hidden_state:", paddle_hidden_state.shape)

print("paddle_lstm cell_state:", paddle_cell_state.shape)运行结果为:

self_lstm hidden_state: torch.Size([8, 32])

paddle_lstm outpus: torch.Size([8, 20, 32])

paddle_lstm hidden_state: torch.Size([1, 20, 32])

paddle_lstm cell_state: torch.Size([1, 20, 32])

可以看到,自己实现的LSTM由于没有考虑多层因素,因此没有层次这个维度,因此其输出shape为[8, 32]。同时由于在以上代码使用Paddle内置API实例化LSTM时,默认定义的是1层的单向SRN,因此其shape为[1, 8, 32],同时隐状态向量为[8,20, 32].

接下来,我们可以将自己实现的LSTM与Paddle内置的LSTM在输出值的精度上进行对比,这里首先根据Paddle内置的LSTM实例化模型(为了进行对比,在实例化时只保留一个偏置,将偏置bihbih设置为0),然后提取该模型对应的参数,进行参数分割后,使用相应参数去初始化自己实现的LSTM,从而保证两者在参数初始化时是一致的。

在进行实验时,首先定义输入数据inputs,然后将该数据分别传入Paddle内置的LSTM与自己实现的LSTM模型中,最后通过对比两者的隐状态输出向量。代码实现如下:

import torch

torch.manual_seed(0)

# 这里创建一个随机数组作为测试数据,数据shape为batch_size x seq_len x input_size

batch_size, seq_len, input_size, hidden_size = 2, 5, 10, 10

inputs = torch.randn(size=[batch_size, seq_len, input_size])

# 设置模型的hidden_size

bih_attr = torch.nn.Parameter(torch.zeros([4*hidden_size, ]))

paddle_lstm = nn.LSTM(input_size, hidden_size)

paddle_lstm.bias_ih_l0=bih_attr

paddle_lstm.bias_ih_l1=bih_attr

paddle_lstm.bias_ih_l2=bih_attr

paddle_lstm.bias_ih_l3=bih_attr

paddle_lstm.bias_ih_l4=bih_attr

# 获取paddle_lstm中的参数,并设置相应的paramAttr,用于初始化lstm

print(paddle_lstm.weight_ih_l0.T.shape)

chunked_W = torch.split(paddle_lstm.weight_ih_l0.T, split_size_or_sections=10, dim=-1)

chunked_U = torch.split(paddle_lstm.weight_hh_l0.T, split_size_or_sections=10, dim=-1)

chunked_b = torch.split(paddle_lstm.bias_hh_l0.T, split_size_or_sections=10, dim=-1)

print(chunked_b[0].shape,chunked_b[1].shape,chunked_b[2].shape)

Wi_attr = torch.tensor(chunked_W[0])

Wf_attr = torch.tensor(chunked_W[1])

Wc_attr = torch.tensor(chunked_W[2])

Wo_attr = torch.tensor(chunked_W[3])

Ui_attr = torch.tensor(chunked_U[0])

Uf_attr = torch.tensor(chunked_U[1])

Uc_attr = torch.tensor(chunked_U[2])

Uo_attr = torch.tensor(chunked_U[3])

bi_attr = torch.tensor(chunked_b[0])

bf_attr = torch.tensor(chunked_b[1])

bc_attr = torch.tensor(chunked_b[2])

bo_attr = torch.tensor(chunked_b[3])

self_lstm = LSTM(input_size, hidden_size, Wi_attr=Wi_attr, Wf_attr=Wf_attr, Wo_attr=Wo_attr, Wc_attr=Wc_attr,

Ui_attr=Ui_attr, Uf_attr=Uf_attr, Uo_attr=Uo_attr, Uc_attr=Uc_attr,

bi_attr=bi_attr, bf_attr=bf_attr, bo_attr=bo_attr, bc_attr=bc_attr)

# 进行前向计算,获取隐状态向量,并打印展示

self_hidden_state = self_lstm(inputs)

paddle_outputs, (paddle_hidden_state, _) = paddle_lstm(inputs)

print("paddle SRN:\n", paddle_hidden_state.detach().numpy().squeeze(0))

print("self SRN:\n", self_hidden_state.detach().numpy())运行结果为:

paddle SRN:

[[ 0.06057303 0.0352371 -0.04730584 0.16420795 0.13122755 -0.15738934

0.1771467 -0.00439037 -0.02465727 -0.3045934 ]

[ 0.14093119 0.11173882 0.27511147 0.04056947 -0.00766448 -0.16597556

0.32193324 0.01466936 -0.28634343 -0.19916353]

[ 0.14699097 0.03865489 -0.11907008 0.24300049 0.31992295 -0.07868578

0.19904399 0.03308991 0.09627407 -0.1424047 ]

[ 0.06207867 0.2342088 0.00657276 0.1791542 0.32928583 -0.04207081

-0.06663163 -0.00604617 -0.10334547 0.10602648]

[ 0.05457556 0.05111036 -0.10710873 0.00312713 -0.09948594 -0.11760624

0.11195059 0.13914587 -0.09120954 -0.1052993 ]]

self SRN:

[[ 0.0940564 -0.14659543 -0.14954016 0.20936163 -0.12826967 0.14749622

0.00946941 0.1993472 -0.06859784 -0.2767597 ]

[ 0.17217153 0.16705877 -0.05719084 0.14882174 0.10330292 -0.20432511

0.13150844 0.03508793 -0.07331903 -0.06966008]]

可以看到,两者的输出基本是一致的。另外,还可以进行对比两者在运算速度方面的差异。代码实现如下:

import time

# 这里创建一个随机数组作为测试数据,数据shape为batch_size x seq_len x input_size

batch_size, seq_len, input_size = 8, 20, 32

inputs = torch.randn(size=[batch_size, seq_len, input_size])

# 设置模型的hidden_size

hidden_size = 32

self_lstm = LSTM(input_size, hidden_size)

paddle_lstm = nn.LSTM(input_size, hidden_size)

# 计算自己实现的SRN运算速度

model_time = 0

for i in range(100):

strat_time = time.time()

hidden_state = self_lstm(inputs)

# 预热10次运算,不计入最终速度统计

if i < 10:

continue

end_time = time.time()

model_time += (end_time - strat_time)

avg_model_time = model_time / 90

print('self_lstm speed:', avg_model_time, 's')

# 计算Paddle内置的SRN运算速度

model_time = 0

for i in range(100):

strat_time = time.time()

outputs, (hidden_state, cell_state) = paddle_lstm(inputs)

# 预热10次运算,不计入最终速度统计

if i < 10:

continue

end_time = time.time()

model_time += (end_time - strat_time)

avg_model_time = model_time / 90

print('paddle_lstm speed:', avg_model_time, 's')运行结果为:

self_lstm speed: 0.006990869839986165 s

paddle_lstm speed: 0.00160928832160102 s

可以看到,由于pytorch框架的LSTM底层采用了C++实现并进行优化,Paddle框架内置的LSTM运行效率远远高于自己实现的LSTM。

6.3.1.2 模型汇总

在本节实验中,我们将使用6.1.2.4的Model_RNN4SeqClass作为预测模型,不同在于在实例化时将传入实例化的LSTM层。

6.3.2 模型训练

6.3.2.1 训练指定长度的数字预测模型

本节将基于RunnerV3类进行训练,首先定义模型训练的超参数,并保证和简单循环网络的超参数一致. 然后定义一个train函数,其可以通过指定长度的数据集,并进行训练. 在train函数中,首先加载长度为length的数据,然后实例化各项组件并创建对应的Runner,然后训练该Runner。同时在本节将使用4.5.4节定义的准确度(Accuracy)作为评估指标,代码实现如下:

import os

import random

import torch

import numpy as np

from nndl import RunnerV3

from nndl import Accuracy, RunnerV3

# 训练轮次

num_epochs = 500

# 学习率

lr = 0.001

# 输入数字的类别数

num_digits = 10

# 将数字映射为向量的维度

input_size = 32

# 隐状态向量的维度

hidden_size = 32

# 预测数字的类别数

num_classes = 19

# 批大小

batch_size = 8

# 模型保存目录

save_dir = "./checkpoints"

# 可以设置不同的length进行不同长度数据的预测实验

def train(length):

print(f"\n====> Training LSTM with data of length {length}.")

np.random.seed(0)

random.seed(0)

torch.manual_seed(0)

# 加载长度为length的数据

data_path = f"./datasets/{length}"

train_examples, dev_examples, test_examples = load_data(data_path)

train_set, dev_set, test_set = DigitSumDataset(train_examples), DigitSumDataset(dev_examples), DigitSumDataset(test_examples)

train_loader = torch.utils.data.DataLoader(train_set, batch_size=batch_size)

dev_loader = torch.utils.data.DataLoader(dev_set, batch_size=batch_size)

test_loader = torch.utils.data.DataLoader(test_set, batch_size=batch_size)

# 实例化模型

base_model = LSTM(input_size, hidden_size)

model = Model_RNN4SeqClass(base_model, num_digits, input_size, hidden_size, num_classes)

# 指定优化器

optimizer = torch.optim.Adam(lr=lr, params=model.parameters())

# 定义评价指标

metric = Accuracy()

# 定义损失函数

loss_fn = torch.nn.CrossEntropyLoss()

# 基于以上组件,实例化Runner

runner = RunnerV3(model, optimizer, loss_fn, metric)

# 进行模型训练

model_save_path = os.path.join(save_dir, f"best_lstm_model_{length}.pdparams")

runner.train(train_loader, dev_loader, num_epochs=num_epochs, eval_steps=100, log_steps=100, save_path=model_save_path)

return runner用到的函数为:

from torch.nn.init import xavier_uniform

class Embedding(nn.Module):

def __init__(self, num_embeddings, embedding_dim,

para_attr=xavier_uniform):

super(Embedding, self).__init__()

# 定义嵌入矩阵

W=torch.zeros(size=[num_embeddings, embedding_dim], dtype=torch.float32)

self.W = torch.nn.Parameter(W)

xavier_uniform(W)

def forward(self, inputs):

# 根据索引获取对应词向量

embs = self.W[inputs]

return embs# 基于RNN实现数字预测的模型

class Model_RNN4SeqClass(nn.Module):

def __init__(self, model, num_digits, input_size, hidden_size, num_classes):

super(Model_RNN4SeqClass, self).__init__()

# 传入实例化的RNN层,例如SRN

self.rnn_model = model

# 词典大小

self.num_digits = num_digits

# 嵌入向量的维度

self.input_size = input_size

# 定义Embedding层

self.embedding = Embedding(num_digits, input_size)

# 定义线性层

self.linear = nn.Linear(hidden_size, num_classes)

def forward(self, inputs):

# 将数字序列映射为相应向量

inputs_emb = self.embedding(inputs)

# 调用RNN模型

hidden_state = self.rnn_model(inputs_emb)

# 使用最后一个时刻的状态进行数字预测

logits = self.linear(hidden_state)

return logitsfrom torch.utils.data import Dataset

class DigitSumDataset(Dataset):

def __init__(self, data):

self.data = data

def __getitem__(self, idx):

example = self.data[idx]

seq = torch.tensor(example[0], dtype=torch.int64)

label = torch.tensor(example[1], dtype=torch.int64)

return seq, label

def __len__(self):

return len(self.data)

# 加载数据

def load_data(data_path):

# 加载训练集

train_examples = []

train_path = os.path.join(data_path, "train.txt")

with open(train_path, "r", encoding="utf-8") as f:

for line in f.readlines():

# 解析一行数据,将其处理为数字序列seq和标签label

items = line.strip().split("\t")

seq = [int(i) for i in items[0].split(" ")]

label = int(items[1])

train_examples.append((seq, label))

# 加载验证集

dev_examples = []

dev_path = os.path.join(data_path, "dev.txt")

with open(dev_path, "r", encoding="utf-8") as f:

for line in f.readlines():

# 解析一行数据,将其处理为数字序列seq和标签label

items = line.strip().split("\t")

seq = [int(i) for i in items[0].split(" ")]

label = int(items[1])

dev_examples.append((seq, label))

# 加载测试集

test_examples = []

test_path = os.path.join(data_path, "test.txt")

with open(test_path, "r", encoding="utf-8") as f:

for line in f.readlines():

# 解析一行数据,将其处理为数字序列seq和标签label

items = line.strip().split("\t")

seq = [int(i) for i in items[0].split(" ")]

label = int(items[1])

test_examples.append((seq, label))

return train_examples, dev_examples, test_examples6.3.2.2 多组训练

接下来,分别进行数据长度为10, 15, 20, 25, 30, 35的数字预测模型训练实验,训练后的runner保存至runners字典中。

lstm_runners = {}

lengths = [10, 15, 20, 25, 30, 35]

for length in lengths:

runner = train(length)

lstm_runners[length] = runner运行结果为:

====> Training LSTM with data of length 10.

[Evaluate] dev score: 0.09000, dev loss: 2.86460

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.09000

[Train] epoch: 5/500, step: 200/19000, loss: 2.48144

[Evaluate] dev score: 0.10000, dev loss: 2.84022

[Evaluate] best accuracy performence has been updated: 0.09000 --> 0.10000

[Train] epoch: 7/500, step: 300/19000, loss: 2.46724

[Evaluate] dev score: 0.10000, dev loss: 2.83455

[Train] epoch: 10/500, step: 400/19000, loss: 2.41858

[Evaluate] dev score: 0.10000, dev loss: 2.83207

[Train] epoch: 13/500, step: 500/19000, loss: 2.45705

[Evaluate] dev score: 0.76000, dev loss: 1.38295

[Train] epoch: 484/500, step: 18400/19000, loss: 0.00069

[Evaluate] dev score: 0.76000, dev loss: 1.38330

[Train] epoch: 486/500, step: 18500/19000, loss: 0.00040

[Evaluate] dev score: 0.77000, dev loss: 1.38700

[Evaluate] best accuracy performence has been updated: 0.76000 --> 0.77000

[Train] epoch: 489/500, step: 18600/19000, loss: 0.00067

[Evaluate] dev score: 0.77000, dev loss: 1.38891

[Train] epoch: 492/500, step: 18700/19000, loss: 0.00050

[Evaluate] dev score: 0.77000, dev loss: 1.39004

[Train] epoch: 494/500, step: 18800/19000, loss: 0.00053

[Evaluate] dev score: 0.77000, dev loss: 1.39317

[Train] epoch: 497/500, step: 18900/19000, loss: 0.00069

[Evaluate] dev score: 0.77000, dev loss: 1.39381

[Evaluate] dev score: 0.77000, dev loss: 1.39583

[Train] Training done!====> Training LSTM with data of length 15.

[Train] epoch: 0/500, step: 0/19000, loss: 2.83505

[Train] epoch: 2/500, step: 100/19000, loss: 2.78581

[Evaluate] dev score: 0.07000, dev loss: 2.86551

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.07000

[Train] epoch: 5/500, step: 200/19000, loss: 2.49482

[Evaluate] dev score: 0.10000, dev loss: 2.84367

[Evaluate] best accuracy performence has been updated: 0.07000 --> 0.10000

[Train] epoch: 7/500, step: 300/19000, loss: 2.48387

[Evaluate] dev score: 0.10000, dev loss: 2.83747

[Train] epoch: 10/500, step: 400/19000, loss: 2.40902

[Evaluate] best accuracy performence has been updated: 0.79000 --> 0.80000

[Train] epoch: 418/500, step: 15900/19000, loss: 0.00319

[Evaluate] dev score: 0.80000, dev loss: 1.25397

[Train] epoch: 421/500, step: 16000/19000, loss: 0.00458

[Evaluate] dev score: 0.80000, dev loss: 1.25535

[Train] epoch: 423/500, step: 16100/19000, loss: 0.00265

[Evaluate] dev score: 0.80000, dev loss: 1.25759

[Train] epoch: 426/500, step: 16200/19000, loss: 0.00167

[Evaluate] dev score: 0.80000, dev loss: 1.25949

[Train] epoch: 428/500, step: 16300/19000, loss: 0.00092

[Evaluate] dev score: 0.80000, dev loss: 1.26127

[Train] epoch: 431/500, step: 16400/19000, loss: 0.00161

[Evaluate] dev score: 0.80000, dev loss: 1.26405

[Train] epoch: 434/500, step: 16500/19000, loss: 0.00154

[Evaluate] dev score: 0.80000, dev loss: 1.26565

[Train] epoch: 436/500, step: 16600/19000, loss: 0.00068

[Evaluate] dev score: 0.80000, dev loss: 1.26796

[Train] epoch: 439/500, step: 16700/19000, loss: 0.00132

[Evaluate] dev score: 0.80000, dev loss: 1.27097

[Train] epoch: 442/500, step: 16800/19000, loss: 0.00164

[Evaluate] dev score: 0.80000, dev loss: 1.27273

[Train] epoch: 444/500, step: 16900/19000, loss: 0.00087

[Evaluate] dev score: 0.80000, dev loss: 1.27552

[Train] epoch: 447/500, step: 17000/19000, loss: 0.00130

[Evaluate] dev score: 0.80000, dev loss: 1.27835

[Train] epoch: 450/500, step: 17100/19000, loss: 0.00409

[Evaluate] dev score: 0.80000, dev loss: 1.28007

[Train] epoch: 452/500, step: 17200/19000, loss: 0.00074

[Evaluate] dev score: 0.80000, dev loss: 1.28351

[Train] epoch: 455/500, step: 17300/19000, loss: 0.00037

[Evaluate] dev score: 0.80000, dev loss: 1.28587

[Train] epoch: 457/500, step: 17400/19000, loss: 0.00081

[Evaluate] dev score: 0.80000, dev loss: 1.28809

[Train] epoch: 460/500, step: 17500/19000, loss: 0.00078

[Evaluate] dev score: 0.80000, dev loss: 1.29226

[Train] epoch: 463/500, step: 17600/19000, loss: 0.00063

[Evaluate] dev score: 0.80000, dev loss: 1.29375

[Train] epoch: 465/500, step: 17700/19000, loss: 0.00081

[Evaluate] dev score: 0.80000, dev loss: 1.29866

[Train] epoch: 468/500, step: 17800/19000, loss: 0.00133

[Evaluate] dev score: 0.80000, dev loss: 1.30044

[Train] epoch: 471/500, step: 17900/19000, loss: 0.00188

[Evaluate] dev score: 0.80000, dev loss: 1.30269

[Train] epoch: 473/500, step: 18000/19000, loss: 0.00104

[Evaluate] dev score: 0.80000, dev loss: 1.30722

[Train] epoch: 476/500, step: 18100/19000, loss: 0.00080

[Evaluate] dev score: 0.80000, dev loss: 1.30969

[Train] epoch: 478/500, step: 18200/19000, loss: 0.00039

[Evaluate] dev score: 0.80000, dev loss: 1.31293

[Train] epoch: 481/500, step: 18300/19000, loss: 0.00066

[Evaluate] dev score: 0.80000, dev loss: 1.31794

[Train] epoch: 484/500, step: 18400/19000, loss: 0.00054

[Evaluate] dev score: 0.80000, dev loss: 1.31867

[Train] epoch: 486/500, step: 18500/19000, loss: 0.00031

[Evaluate] dev score: 0.80000, dev loss: 1.32285

[Train] epoch: 489/500, step: 18600/19000, loss: 0.00053

[Evaluate] dev score: 0.80000, dev loss: 1.32626

[Train] epoch: 492/500, step: 18700/19000, loss: 0.00063

[Evaluate] dev score: 0.80000, dev loss: 1.32818

[Train] epoch: 494/500, step: 18800/19000, loss: 0.00034

[Evaluate] dev score: 0.80000, dev loss: 1.33328

[Train] epoch: 497/500, step: 18900/19000, loss: 0.00036

[Evaluate] dev score: 0.80000, dev loss: 1.33515

[Evaluate] dev score: 0.80000, dev loss: 1.33826

[Train] Training done!====> Training LSTM with data of length 20.

[Train] epoch: 0/500, step: 0/19000, loss: 2.83505

[Train] epoch: 2/500, step: 100/19000, loss: 2.76947

[Evaluate] dev score: 0.10000, dev loss: 2.85964

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.10000

[Train] epoch: 5/500, step: 200/19000, loss: 2.48617

[Evaluate] dev score: 0.10000, dev loss: 2.83813

[Train] epoch: 7/500, step: 300/19000, loss: 2.48167

[Evaluate] dev score: 0.10000, dev loss: 2.83235

[Train] epoch: 10/500, step: 400/19000, loss: 2.40573

[Evaluate] dev score: 0.10000, dev loss: 2.82994

[Train] epoch: 13/500, step: 500/19000, loss: 2.46062

[Evaluate] dev score: 0.10000, dev loss: 2.82841

[Train] epoch: 15/500, step: 600/19000, loss: 2.36476

[Evaluate] dev score: 0.10000, dev loss: 2.82848

[Train] epoch: 18/500, step: 700/19000, loss: 2.50661

[Evaluate] dev score: 0.10000, dev loss: 2.82729

[Train] epoch: 21/500, step: 800/19000, loss: 2.69739

[Evaluate] dev score: 0.10000, dev loss: 2.82654

[Train] epoch: 23/500, step: 900/19000, loss: 2.67164

[Evaluate] dev score: 0.10000, dev loss: 2.82587

[Train] epoch: 26/500, step: 1000/19000, loss: 2.73424

[Evaluate] dev score: 0.11000, dev loss: 2.82421

[Evaluate] best accuracy performence has been updated: 0.10000 --> 0.11000

[Train] epoch: 28/500, step: 1100/19000, loss: 3.44213

[Evaluate] dev score: 0.12000, dev loss: 2.82406

[Evaluate] best accuracy performence has been updated: 0.11000 --> 0.12000

[Train] epoch: 31/500, step: 1200/19000, loss: 2.81749[Evaluate] dev score: 0.72000, dev loss: 1.76485

[Evaluate] best accuracy performence has been updated: 0.71000 --> 0.72000

[Train] epoch: 352/500, step: 13400/19000, loss: 0.00286

[Evaluate] dev score: 0.73000, dev loss: 1.77673

[Evaluate] best accuracy performence has been updated: 0.72000 --> 0.73000

[Train] epoch: 355/500, step: 13500/19000, loss: 0.03924

[Evaluate] dev score: 0.73000, dev loss: 1.77541

[Train] epoch: 357/500, step: 13600/19000, loss: 0.01049

[Evaluate] dev score: 0.73000, dev loss: 1.77870

[Train] epoch: 360/500, step: 13700/19000, loss: 0.01205

[Evaluate] dev score: 0.73000, dev loss: 1.78517

[Train] epoch: 363/500, step: 13800/19000, loss: 0.00505

[Evaluate] dev score: 0.73000, dev loss: 1.78481

[Train] epoch: 365/500, step: 13900/19000, loss: 0.00416

[Evaluate] dev score: 0.73000, dev loss: 1.78524

[Train] epoch: 368/500, step: 14000/19000, loss: 0.00970

[Evaluate] dev score: 0.73000, dev loss: 1.78913

[Train] epoch: 371/500, step: 14100/19000, loss: 0.00992

[Evaluate] dev score: 0.73000, dev loss: 1.78916

[Train] epoch: 373/500, step: 14200/19000, loss: 0.03927

[Evaluate] dev score: 0.73000, dev loss: 1.79077

[Train] epoch: 376/500, step: 14300/19000, loss: 0.01398

[Evaluate] dev score: 0.72000, dev loss: 1.79417

[Train] epoch: 378/500, step: 14400/19000, loss: 0.01049

[Evaluate] dev score: 0.72000, dev loss: 1.79497

[Train] epoch: 381/500, step: 14500/19000, loss: 0.01269

[Evaluate] dev score: 0.72000, dev loss: 1.79722

[Train] epoch: 384/500, step: 14600/19000, loss: 0.01123

[Evaluate] dev score: 0.72000, dev loss: 1.79931

[Train] epoch: 386/500, step: 14700/19000, loss: 0.00823

[Evaluate] dev score: 0.72000, dev loss: 1.80139

[Train] epoch: 389/500, step: 14800/19000, loss: 0.00241

[Evaluate] dev score: 0.72000, dev loss: 1.80689

[Train] epoch: 392/500, step: 14900/19000, loss: 0.02594

[Evaluate] dev score: 0.72000, dev loss: 1.80951

[Train] epoch: 394/500, step: 15000/19000, loss: 0.01275

[Evaluate] dev score: 0.72000, dev loss: 1.81205

[Train] epoch: 397/500, step: 15100/19000, loss: 0.00252

[Evaluate] dev score: 0.72000, dev loss: 1.81857

[Train] epoch: 400/500, step: 15200/19000, loss: 0.03170

[Evaluate] dev score: 0.72000, dev loss: 1.81997

[Train] epoch: 402/500, step: 15300/19000, loss: 0.00160

[Evaluate] dev score: 0.72000, dev loss: 1.82440

[Train] epoch: 405/500, step: 15400/19000, loss: 0.00837

[Evaluate] dev score: 0.72000, dev loss: 1.82802

[Train] epoch: 407/500, step: 15500/19000, loss: 0.00461

[Evaluate] dev score: 0.72000, dev loss: 1.82951

[Train] epoch: 410/500, step: 15600/19000, loss: 0.00497

[Evaluate] dev score: 0.72000, dev loss: 1.83551

[Train] epoch: 413/500, step: 15700/19000, loss: 0.00222

[Evaluate] dev score: 0.72000, dev loss: 1.83732

[Train] epoch: 415/500, step: 15800/19000, loss: 0.00176

[Evaluate] dev score: 0.72000, dev loss: 1.84012

[Train] epoch: 418/500, step: 15900/19000, loss: 0.00433

[Evaluate] dev score: 0.72000, dev loss: 1.84767

[Train] epoch: 421/500, step: 16000/19000, loss: 0.00421

[Evaluate] dev score: 0.71000, dev loss: 1.85009

[Train] epoch: 423/500, step: 16100/19000, loss: 0.01154

[Evaluate] dev score: 0.71000, dev loss: 1.85613

[Train] epoch: 426/500, step: 16200/19000, loss: 0.00394

[Evaluate] dev score: 0.71000, dev loss: 1.86571

[Train] epoch: 428/500, step: 16300/19000, loss: 0.00451

[Evaluate] dev score: 0.71000, dev loss: 1.86908

[Train] epoch: 431/500, step: 16400/19000, loss: 0.00580

[Evaluate] dev score: 0.71000, dev loss: 1.87763

[Train] epoch: 434/500, step: 16500/19000, loss: 0.00293

[Evaluate] dev score: 0.71000, dev loss: 1.88435

[Train] epoch: 436/500, step: 16600/19000, loss: 0.00434

[Evaluate] dev score: 0.71000, dev loss: 1.88968

[Train] epoch: 439/500, step: 16700/19000, loss: 0.00134

[Evaluate] dev score: 0.71000, dev loss: 1.89978

[Train] epoch: 442/500, step: 16800/19000, loss: 0.00893

[Evaluate] dev score: 0.71000, dev loss: 1.90282

[Train] epoch: 444/500, step: 16900/19000, loss: 0.00361

[Evaluate] dev score: 0.71000, dev loss: 1.90847

[Train] epoch: 447/500, step: 17000/19000, loss: 0.00119

[Evaluate] dev score: 0.70000, dev loss: 1.91738

[Train] epoch: 450/500, step: 17100/19000, loss: 0.01069

[Evaluate] dev score: 0.70000, dev loss: 1.91850

[Train] epoch: 452/500, step: 17200/19000, loss: 0.00080

[Evaluate] dev score: 0.70000, dev loss: 1.92626

[Train] epoch: 455/500, step: 17300/19000, loss: 0.00269

[Evaluate] dev score: 0.70000, dev loss: 1.93184

[Train] epoch: 457/500, step: 17400/19000, loss: 0.00176

[Evaluate] dev score: 0.71000, dev loss: 1.93430

[Train] epoch: 460/500, step: 17500/19000, loss: 0.00212

[Evaluate] dev score: 0.71000, dev loss: 1.94325

[Train] epoch: 463/500, step: 17600/19000, loss: 0.00098

[Evaluate] dev score: 0.71000, dev loss: 1.94598

[Train] epoch: 465/500, step: 17700/19000, loss: 0.00076

[Evaluate] dev score: 0.71000, dev loss: 1.95027

[Train] epoch: 468/500, step: 17800/19000, loss: 0.00193

[Evaluate] dev score: 0.71000, dev loss: 1.95995

[Train] epoch: 471/500, step: 17900/19000, loss: 0.00128

[Evaluate] dev score: 0.71000, dev loss: 1.95994

[Train] epoch: 473/500, step: 18000/19000, loss: 0.00349

[Evaluate] dev score: 0.71000, dev loss: 1.96585

[Train] epoch: 476/500, step: 18100/19000, loss: 0.00139

[Evaluate] dev score: 0.71000, dev loss: 1.97238

[Train] epoch: 478/500, step: 18200/19000, loss: 0.00186

[Evaluate] dev score: 0.71000, dev loss: 1.97150

[Train] epoch: 481/500, step: 18300/19000, loss: 0.00246

[Evaluate] dev score: 0.71000, dev loss: 1.97660

[Train] epoch: 484/500, step: 18400/19000, loss: 0.00103

[Evaluate] dev score: 0.71000, dev loss: 1.97730

[Train] epoch: 486/500, step: 18500/19000, loss: 0.00174

[Evaluate] dev score: 0.71000, dev loss: 1.97380

[Train] epoch: 489/500, step: 18600/19000, loss: 0.00062

[Evaluate] dev score: 0.71000, dev loss: 1.97653

[Train] epoch: 492/500, step: 18700/19000, loss: 0.00298

[Evaluate] dev score: 0.71000, dev loss: 1.97168

[Train] epoch: 494/500, step: 18800/19000, loss: 0.00130

[Evaluate] dev score: 0.71000, dev loss: 1.97103

[Train] epoch: 497/500, step: 18900/19000, loss: 0.00053

[Evaluate] dev score: 0.71000, dev loss: 1.97714

[Evaluate] dev score: 0.71000, dev loss: 1.97366

[Train] Training done!====> Training LSTM with data of length 25.

[Train] epoch: 0/500, step: 0/19000, loss: 2.83505

[Train] epoch: 2/500, step: 100/19000, loss: 2.77655

[Evaluate] dev score: 0.10000, dev loss: 2.86074

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.10000

[Train] epoch: 5/500, step: 200/19000, loss: 2.49976

[Evaluate] dev score: 0.10000, dev loss: 2.84144

[Train] epoch: 7/500, step: 300/19000, loss: 2.49110

[Evaluate] dev score: 0.10000, dev loss: 2.83578

[Train] epoch: 10/500, step: 400/19000, loss: 2.41369

[Evaluate] dev score: 0.10000, dev loss: 2.83335

[Train] epoch: 13/500, step: 500/19000, loss: 2.46401

[Evaluate] dev score: 0.10000, dev loss: 2.83232

[Train] epoch: 15/500, step: 600/19000, loss: 2.38640

[Evaluate] dev score: 0.10000, dev loss: 2.83254

[Train] epoch: 18/500, step: 700/19000, loss: 2.51424[Evaluate] dev score: 0.40000, dev loss: 3.61628

[Evaluate] best accuracy performence has been updated: 0.38000 --> 0.40000

[Train] epoch: 394/500, step: 15000/19000, loss: 0.08060

[Evaluate] dev score: 0.36000, dev loss: 3.71179

[Train] epoch: 397/500, step: 15100/19000, loss: 0.04139

[Evaluate] dev score: 0.35000, dev loss: 3.81133

[Train] epoch: 400/500, step: 15200/19000, loss: 0.19323

[Evaluate] dev score: 0.37000, dev loss: 3.83067

[Train] epoch: 402/500, step: 15300/19000, loss: 0.03759

[Evaluate] dev score: 0.35000, dev loss: 3.85875

[Train] epoch: 405/500, step: 15400/19000, loss: 0.01963

[Evaluate] dev score: 0.36000, dev loss: 3.86882

[Train] epoch: 407/500, step: 15500/19000, loss: 0.03708

[Evaluate] dev score: 0.35000, dev loss: 3.88566

[Train] epoch: 410/500, step: 15600/19000, loss: 0.03960

[Evaluate] dev score: 0.36000, dev loss: 3.90958

[Train] epoch: 413/500, step: 15700/19000, loss: 0.01620

[Evaluate] dev score: 0.36000, dev loss: 3.92829

[Train] epoch: 415/500, step: 15800/19000, loss: 0.02653

[Evaluate] dev score: 0.36000, dev loss: 3.95439

[Train] epoch: 418/500, step: 15900/19000, loss: 0.01211

[Evaluate] dev score: 0.36000, dev loss: 3.96535

[Train] epoch: 421/500, step: 16000/19000, loss: 0.02010

[Evaluate] dev score: 0.36000, dev loss: 3.98574

[Train] epoch: 423/500, step: 16100/19000, loss: 0.01403

[Evaluate] dev score: 0.36000, dev loss: 4.00418

[Train] epoch: 426/500, step: 16200/19000, loss: 0.10261

[Evaluate] dev score: 0.33000, dev loss: 3.87917

[Train] epoch: 428/500, step: 16300/19000, loss: 0.50658

[Evaluate] dev score: 0.24000, dev loss: 4.19479

[Train] epoch: 431/500, step: 16400/19000, loss: 0.77854

[Evaluate] dev score: 0.37000, dev loss: 3.88633

[Train] epoch: 434/500, step: 16500/19000, loss: 0.05411

[Evaluate] dev score: 0.32000, dev loss: 4.37722

[Train] epoch: 436/500, step: 16600/19000, loss: 0.04985

[Evaluate] dev score: 0.35000, dev loss: 3.95593

[Train] epoch: 439/500, step: 16700/19000, loss: 0.04037

[Evaluate] dev score: 0.36000, dev loss: 4.05939

[Train] epoch: 442/500, step: 16800/19000, loss: 0.06667

[Evaluate] dev score: 0.37000, dev loss: 4.01447

[Train] epoch: 444/500, step: 16900/19000, loss: 0.03957

[Evaluate] dev score: 0.37000, dev loss: 4.12112

[Train] epoch: 447/500, step: 17000/19000, loss: 0.03272

[Evaluate] dev score: 0.34000, dev loss: 4.04134

[Train] epoch: 450/500, step: 17100/19000, loss: 0.13312

[Evaluate] dev score: 0.33000, dev loss: 4.29661

[Train] epoch: 452/500, step: 17200/19000, loss: 0.07697

[Evaluate] dev score: 0.32000, dev loss: 4.34585

[Train] epoch: 455/500, step: 17300/19000, loss: 0.01970

[Evaluate] dev score: 0.32000, dev loss: 4.23175

[Train] epoch: 457/500, step: 17400/19000, loss: 0.02952

[Evaluate] dev score: 0.34000, dev loss: 4.00266

[Train] epoch: 460/500, step: 17500/19000, loss: 0.03060

[Evaluate] dev score: 0.37000, dev loss: 4.19442

[Train] epoch: 463/500, step: 17600/19000, loss: 0.00943

[Evaluate] dev score: 0.35000, dev loss: 4.11684

[Train] epoch: 465/500, step: 17700/19000, loss: 0.01985

[Evaluate] dev score: 0.37000, dev loss: 4.13914

[Train] epoch: 468/500, step: 17800/19000, loss: 0.01026

[Evaluate] dev score: 0.37000, dev loss: 4.16462

[Train] epoch: 471/500, step: 17900/19000, loss: 0.01614

[Evaluate] dev score: 0.36000, dev loss: 4.19717

[Train] epoch: 473/500, step: 18000/19000, loss: 0.00953

[Evaluate] dev score: 0.35000, dev loss: 4.21827

[Train] epoch: 476/500, step: 18100/19000, loss: 0.01507

[Evaluate] dev score: 0.37000, dev loss: 4.23882

[Train] epoch: 478/500, step: 18200/19000, loss: 0.02248

[Evaluate] dev score: 0.37000, dev loss: 4.26983

[Train] epoch: 481/500, step: 18300/19000, loss: 0.02343

[Evaluate] dev score: 0.37000, dev loss: 4.28182

[Train] epoch: 484/500, step: 18400/19000, loss: 0.01243

[Evaluate] dev score: 0.37000, dev loss: 4.30134

[Train] epoch: 486/500, step: 18500/19000, loss: 0.00708

[Evaluate] dev score: 0.37000, dev loss: 4.32320

[Train] epoch: 489/500, step: 18600/19000, loss: 0.00910

[Evaluate] dev score: 0.37000, dev loss: 4.33397

[Train] epoch: 492/500, step: 18700/19000, loss: 0.02776

[Evaluate] dev score: 0.37000, dev loss: 4.35447

[Train] epoch: 494/500, step: 18800/19000, loss: 0.01732

[Evaluate] dev score: 0.37000, dev loss: 4.36823

[Train] epoch: 497/500, step: 18900/19000, loss: 0.01365

[Evaluate] dev score: 0.37000, dev loss: 4.38031

[Evaluate] dev score: 0.37000, dev loss: 4.41117

[Train] Training done!====> Training LSTM with data of length 30.

[Train] epoch: 0/500, step: 0/19000, loss: 2.83505

[Train] epoch: 2/500, step: 100/19000, loss: 2.78386

[Evaluate] dev score: 0.12000, dev loss: 2.86110

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.12000

[Train] epoch: 5/500, step: 200/19000, loss: 2.50157

[Evaluate] dev score: 0.10000, dev loss: 2.83898

[Train] epoch: 7/500, step: 300/19000, loss: 2.49030

[Evaluate] dev score: 0.10000, dev loss: 2.83275

[Train] epoch: 10/500, step: 400/19000, loss: 2.41555

[Evaluate] dev score: 0.10000, dev loss: 2.83050

[Train] epoch: 13/500, step: 500/19000, loss: 2.47067

[Evaluate] dev score: 0.10000, dev loss: 2.82899

[Train] epoch: 15/500, step: 600/19000, loss: 2.37797

[Evaluate] dev score: 0.10000, dev loss: 2.82922

[Train] epoch: 18/500, step: 700/19000, loss: 2.51159

[Evaluate] dev score: 0.10000, dev loss: 2.82841

[Train] epoch: 21/500, step: 800/19000, loss: 2.68927

[Evaluate] dev score: 0.10000, dev loss: 2.82814

[Train] epoch: 23/500, step: 900/19000, loss: 2.67566

[Evaluate] dev score: 0.10000, dev loss: 2.82848

[Train] epoch: 26/500, step: 1000/19000, loss: 2.72234

[Evaluate] dev score: 0.10000, dev loss: 2.82783

[Train] epoch: 28/500, step: 1100/19000, loss: 3.48299

[Evaluate] dev score: 0.10000, dev loss: 2.82849

[Train] epoch: 31/500, step: 1200/19000, loss: 2.78354

[Evaluate] dev score: 0.10000, dev loss: 2.82815

[Train] epoch: 34/500, step: 1300/19000, loss: 3.00769

[Evaluate] dev score: 0.10000, dev loss: 2.82761

[Train] epoch: 36/500, step: 1400/19000, loss: 3.04156

[Evaluate] dev score: 0.10000, dev loss: 2.82860

[Train] epoch: 39/500, step: 1500/19000, loss: 2.79295

[Evaluate] dev score: 0.10000, dev loss: 2.82796

[Train] epoch: 42/500, step: 1600/19000, loss: 3.26870

[Evaluate] dev score: 0.10000, dev loss: 2.82775

[Train] epoch: 44/500, step: 1700/19000, loss: 2.69724

[Evaluate] dev score: 0.10000, dev loss: 2.82910

[Train] epoch: 47/500, step: 1800/19000, loss: 2.58157

[Evaluate] dev score: 0.10000, dev loss: 2.82889

[Train] epoch: 50/500, step: 1900/19000, loss: 4.11195

[Train] epoch: 457/500, step: 17400/19000, loss: 0.00965

[Evaluate] dev score: 0.86000, dev loss: 0.68678

[Train] epoch: 460/500, step: 17500/19000, loss: 0.00763

[Evaluate] dev score: 0.87000, dev loss: 0.68706

[Train] epoch: 463/500, step: 17600/19000, loss: 0.01124

[Evaluate] dev score: 0.87000, dev loss: 0.68583

[Train] epoch: 465/500, step: 17700/19000, loss: 0.01042

[Evaluate] dev score: 0.87000, dev loss: 0.68894

[Train] epoch: 468/500, step: 17800/19000, loss: 0.00819

[Evaluate] dev score: 0.87000, dev loss: 0.68902

[Train] epoch: 471/500, step: 17900/19000, loss: 0.00186

[Evaluate] dev score: 0.86000, dev loss: 0.68932

[Train] epoch: 473/500, step: 18000/19000, loss: 0.00879

[Evaluate] dev score: 0.87000, dev loss: 0.69464

[Train] epoch: 476/500, step: 18100/19000, loss: 0.00581

[Evaluate] dev score: 0.87000, dev loss: 0.69183

[Train] epoch: 478/500, step: 18200/19000, loss: 0.01392

[Evaluate] dev score: 0.86000, dev loss: 0.69212

[Train] epoch: 481/500, step: 18300/19000, loss: 0.00584

[Evaluate] dev score: 0.87000, dev loss: 0.69869

[Train] epoch: 484/500, step: 18400/19000, loss: 0.01406

[Evaluate] dev score: 0.87000, dev loss: 0.69551

[Train] epoch: 486/500, step: 18500/19000, loss: 0.01131

[Evaluate] dev score: 0.87000, dev loss: 0.69632

[Train] epoch: 489/500, step: 18600/19000, loss: 0.00420

[Evaluate] dev score: 0.87000, dev loss: 0.70490

[Train] epoch: 492/500, step: 18700/19000, loss: 0.00802

[Evaluate] dev score: 0.88000, dev loss: 0.70548

[Evaluate] best accuracy performence has been updated: 0.87000 --> 0.88000

[Train] epoch: 494/500, step: 18800/19000, loss: 0.00547

[Evaluate] dev score: 0.88000, dev loss: 0.70771

[Train] epoch: 497/500, step: 18900/19000, loss: 0.00443

[Evaluate] dev score: 0.88000, dev loss: 0.71215

[Evaluate] dev score: 0.88000, dev loss: 0.72060

[Train] Training done!

====> Training LSTM with data of length 35.

[Train] epoch: 0/500, step: 0/19000, loss: 2.83505

[Train] epoch: 2/500, step: 100/19000, loss: 2.77430

[Evaluate] dev score: 0.12000, dev loss: 2.85861

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.12000

[Train] epoch: 5/500, step: 200/19000, loss: 2.49744

[Evaluate] dev score: 0.10000, dev loss: 2.83670

[Train] epoch: 7/500, step: 300/19000, loss: 2.48664

[Evaluate] dev score: 0.10000, dev loss: 2.83080

[Train] epoch: 10/500, step: 400/19000, loss: 2.42468

[Evaluate] dev score: 0.10000, dev loss: 2.82865

[Train] epoch: 13/500, step: 500/19000, loss: 2.45966

[Evaluate] dev score: 0.10000, dev loss: 2.82730

[Train] epoch: 15/500, step: 600/19000, loss: 2.37259

[Evaluate] dev score: 0.10000, dev loss: 2.82764

[Train] epoch: 18/500, step: 700/19000, loss: 2.50715

[Evaluate] dev score: 0.10000, dev loss: 2.82672

[Train] epoch: 21/500, step: 800/19000, loss: 2.69640

[Evaluate] dev score: 0.10000, dev loss: 2.82642

[Train] epoch: 23/500, step: 900/19000, loss: 2.66457

[Evaluate] dev score: 0.11000, dev loss: 2.82679

[Train] epoch: 26/500, step: 1000/19000, loss: 2.64764

[Evaluate] dev score: 0.10000, dev loss: 2.82769

[Train] epoch: 28/500, step: 1100/19000, loss: 3.42332[Evaluate] best accuracy performence has been updated: 0.86000 --> 0.89000

[Train] epoch: 476/500, step: 18100/19000, loss: 0.04217

[Evaluate] dev score: 0.88000, dev loss: 0.37585

[Train] epoch: 478/500, step: 18200/19000, loss: 0.04331

[Evaluate] dev score: 0.88000, dev loss: 0.37485

[Train] epoch: 481/500, step: 18300/19000, loss: 0.05118

[Evaluate] dev score: 0.88000, dev loss: 0.37668

[Train] epoch: 484/500, step: 18400/19000, loss: 0.06765

[Evaluate] dev score: 0.88000, dev loss: 0.37357

[Train] epoch: 486/500, step: 18500/19000, loss: 0.03764

[Evaluate] dev score: 0.89000, dev loss: 0.38093

[Train] epoch: 489/500, step: 18600/19000, loss: 0.04673

[Evaluate] dev score: 0.88000, dev loss: 0.38495

[Train] epoch: 492/500, step: 18700/19000, loss: 0.06683

[Evaluate] dev score: 0.88000, dev loss: 0.38080

[Train] epoch: 494/500, step: 18800/19000, loss: 0.05129

[Evaluate] dev score: 0.89000, dev loss: 0.38528

[Train] epoch: 497/500, step: 18900/19000, loss: 0.03119

[Evaluate] dev score: 0.89000, dev loss: 0.38632

[Evaluate] dev score: 0.89000, dev loss: 0.38519

[Train] Training done!

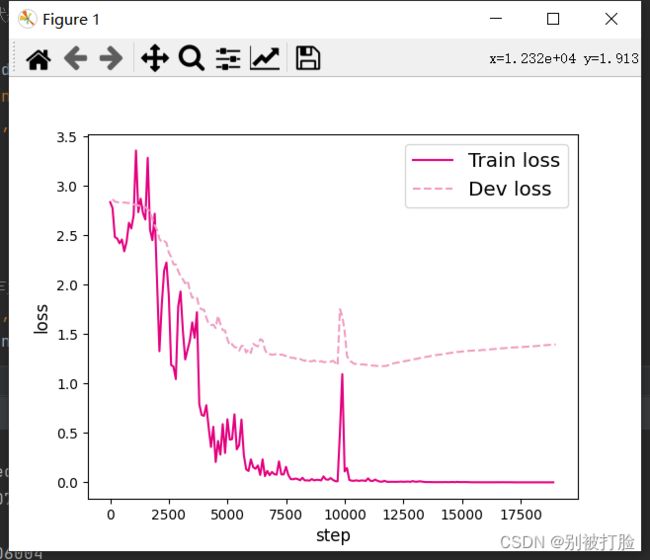

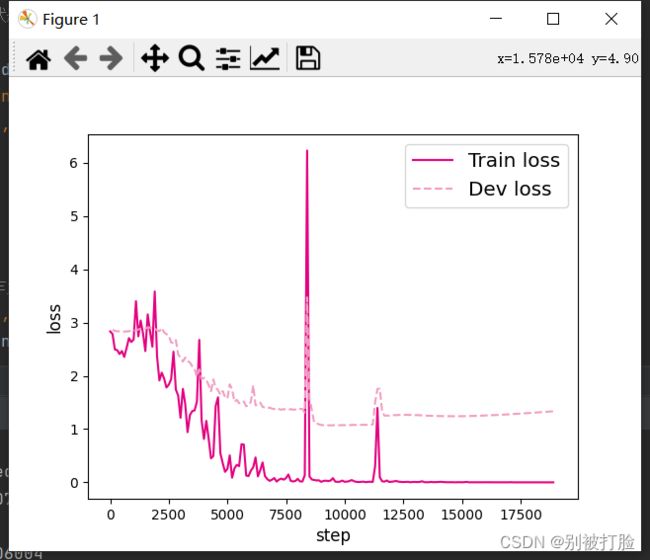

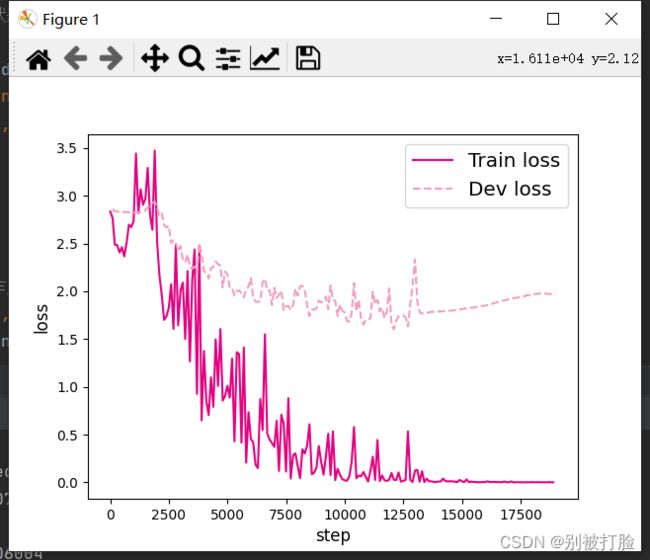

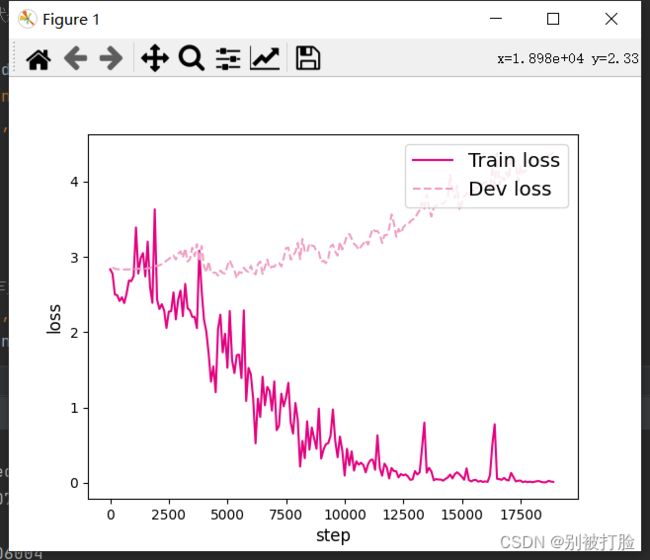

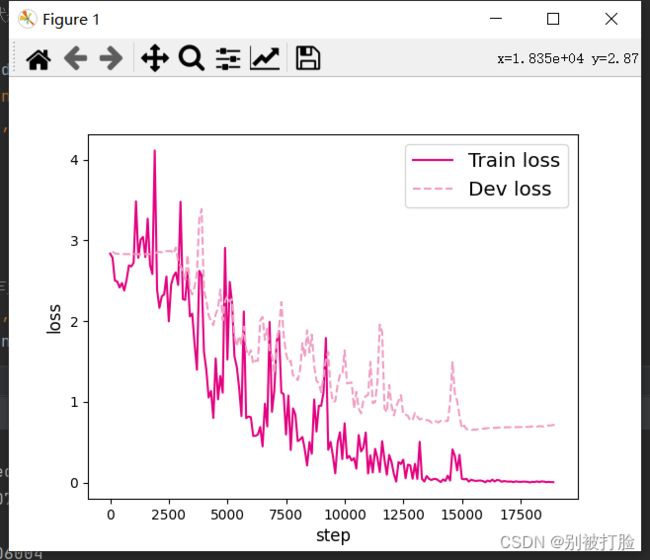

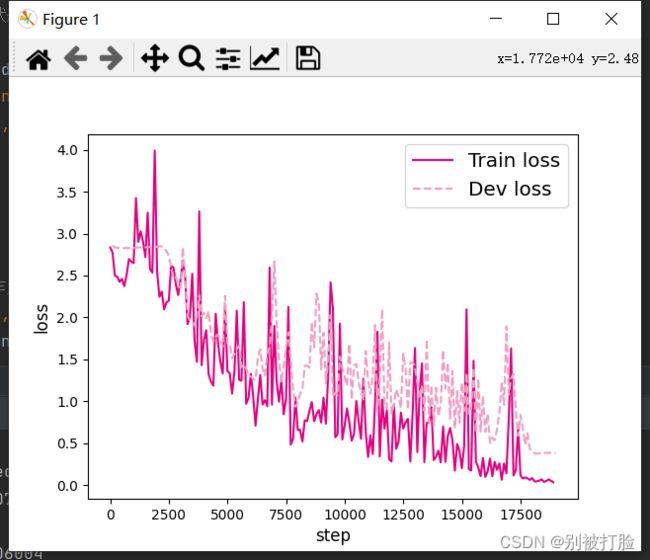

6.3.2.3 损失曲线展示

分别画出基于LSTM的各个长度的数字预测模型训练过程中,在训练集和验证集上的损失曲线,代码实现如下:

# 画出训练过程中的损失图

for length in lengths:

runner = lstm_runners[length]

fig_name = f"./images/6.11_{length}.pdf"

plot_training_loss(runner, fig_name, sample_step=100)用到的画图函数为:

import matplotlib.pyplot as plt

def plot_training_loss(runner, fig_name, sample_step):

plt.figure()

train_items = runner.train_step_losses[::sample_step]

train_steps = [x[0] for x in train_items]

train_losses = [x[1] for x in train_items]

plt.plot(train_steps, train_losses, color='#e4007f', label="Train loss")

dev_steps = [x[0] for x in runner.dev_losses]

dev_losses = [x[1] for x in runner.dev_losses]

plt.plot(dev_steps, dev_losses, color='#f19ec2', linestyle='--', label="Dev loss")

# 绘制坐标轴和图例

plt.ylabel("loss", fontsize='large')

plt.xlabel("step", fontsize='large')

plt.legend(loc='upper right', fontsize='x-large')

plt.savefig(fig_name)

plt.show()运行结果为:

L=10 L=15

L=20 L=25

L=30 L=35

【思考题1】LSTM与SRN实验结果对比,谈谈看法。(选做)

首先,要先把两个模型做一下对比

然后看一下SRN的结果

首先,借用一下老师的话,这个都是很明显可以看出来的, 就是随着序列长度的增加,正确率的变化

LSTM模型在不同长度数据集上进行训练后的损失变化,同SRN模型一样,随着序列长度的增加,训练集上的损失逐渐不稳定,验证集上的损失整体趋向于变大,这说明当序列长度增加时,保持长期依赖的能力同样在逐渐变弱. 相比,LSTM模型在序列长度增加时,收敛情况比SRN模型更好。

说一下我的理解。

LSTM的效果非常好,我感觉是因为,它是一个模型,可以把有用的记住,没用的舍弃,就是把这个模型,把有用的信息通过门控学习了,没用的舍弃了,至于哪个有用,哪个没用,以学习来判断。

6.3.3 模型评价

6.3.3.1 在测试集上进行模型评价

使用测试数据对在训练过程中保存的最好模型进行评价,观察模型在测试集上的准确率. 同时获取模型在训练过程中在验证集上最好的准确率,实现代码如下:

lstm_dev_scores = []

lstm_test_scores = []

for length in lengths:

print(f"Evaluate LSTM with data length {length}.")

runner = lstm_runners[length]

# 加载训练过程中效果最好的模型

model_path = os.path.join(save_dir, f"best_lstm_model_{length}.pdparams")

runner.load_model(model_path)

# 加载长度为length的数据

data_path = f"./datasets/{length}"

train_examples, dev_examples, test_examples = load_data(data_path)

test_set = DigitSumDataset(test_examples)

test_loader = torch.utils.data.DataLoader(test_set, batch_size=batch_size)

# 使用测试集评价模型,获取测试集上的预测准确率

score, _ = runner.evaluate(test_loader)

lstm_test_scores.append(score)

lstm_dev_scores.append(max(runner.dev_scores))

for length, dev_score, test_score in zip(lengths, lstm_dev_scores, lstm_test_scores):

print(f"[LSTM] length:{length}, dev_score: {dev_score}, test_score: {test_score: .5f}")运行结果为:

Evaluate LSTM with data length 20.

Evaluate LSTM with data length 25.

Evaluate LSTM with data length 30.

Evaluate LSTM with data length 35.

[LSTM] length:10, dev_score: 0.77, test_score: 0.77000

[LSTM] length:15, dev_score: 0.8, test_score: 0.82000

[LSTM] length:20, dev_score: 0.73, test_score: 0.76000

[LSTM] length:25, dev_score: 0.4, test_score: 0.31000

[LSTM] length:30, dev_score: 0.88, test_score: 0.88000

[LSTM] length:35, dev_score: 0.89, test_score: 0.82000

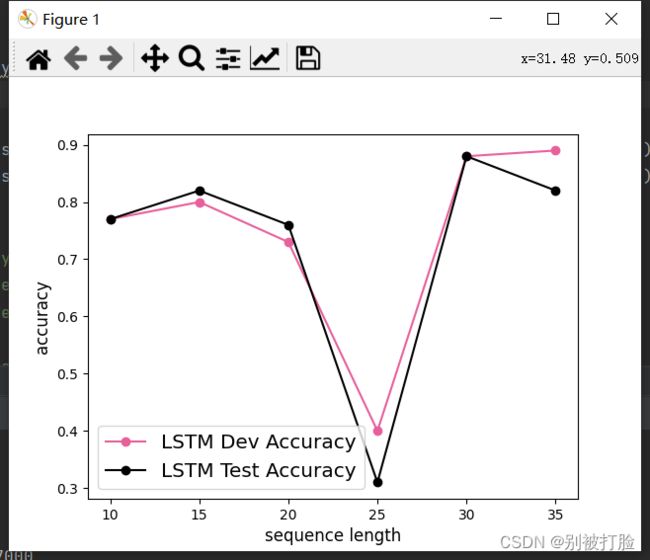

6.3.3.2 模型在不同长度的数据集上的准确率变化图

接下来,将SRN和LSTM在不同长度的验证集和测试集数据上的准确率绘制成图片,以方面观察。

import matplotlib.pyplot as plt

plt.plot(lengths, lstm_dev_scores, '-o', color='#e8609b', label="LSTM Dev Accuracy")

plt.plot(lengths, lstm_test_scores,'-o', color='#000000', label="LSTM Test Accuracy")

#绘制坐标轴和图例

plt.ylabel("accuracy", fontsize='large')

plt.xlabel("sequence length", fontsize='large')

plt.legend(loc='lower left', fontsize='x-large')

fig_name = "./images/6.12.pdf"

plt.savefig(fig_name)

plt.show()

运行结果为:

【思考题2】LSTM与SRN在不同长度数据集上的准确度对比,谈谈看法。(选做)

RNN的结果为:

首先,先说一下,最直观的体现就是,使用的纵坐标的范围都不一样,这可以直接的体现出LSTM的强大。

下边,放一下老师的解释(我把我的和老师的综合了一下)

LSTM模型与SRN模型在不同长度数据集上的准确度对比。随着数据集长度的增加,LSTM模型在验证集和测试集上的准确率整体也趋向于降低;同时LSTM模型的准确率显著高于SRN模型,表明LSTM模型保持长期依赖的能力要优于SRN模型.

6.3.3.3 LSTM模型门状态和单元状态的变化

LSTM模型通过门控机制控制信息的单元状态的更新,这里可以观察当LSTM在处理一条数字序列的时候,相应门和单元状态是如何变化的。首先需要对以上LSTM模型实现代码中,定义相应列表进行存储这些门和单元状态在每个时刻的向量。

import torch.nn.functional as F

# 声明LSTM和相关参数

class LSTM(nn.Module):

def __init__(self, input_size, hidden_size, para_attr=xavier_uniform):

super(LSTM, self).__init__()

self.input_size = input_size

self.hidden_size = hidden_size

# 初始化模型参数

self.W_i = torch.nn.Parameter(para_attr(torch.empty(size=[input_size, hidden_size], dtype=torch.float32)))

self.W_f = torch.nn.Parameter(para_attr(torch.empty(size=[input_size, hidden_size], dtype=torch.float32)))

self.W_o = torch.nn.Parameter(para_attr(torch.empty(size=[input_size, hidden_size], dtype=torch.float32)))

self.W_c = torch.nn.Parameter(para_attr(torch.empty(size=[input_size, hidden_size], dtype=torch.float32)))

self.U_i = torch.nn.Parameter(para_attr(torch.empty(size=[hidden_size, hidden_size], dtype=torch.float32)))

self.U_f = torch.nn.Parameter(para_attr(torch.empty(size=[hidden_size, hidden_size], dtype=torch.float32)))

self.U_o = torch.nn.Parameter(para_attr(torch.empty(size=[hidden_size, hidden_size], dtype=torch.float32)))

self.U_c = torch.nn.Parameter(para_attr(torch.empty(size=[hidden_size, hidden_size], dtype=torch.float32)))

self.b_i = torch.nn.Parameter(para_attr(torch.empty(size=[1, hidden_size], dtype=torch.float32)))

self.b_f = torch.nn.Parameter(para_attr(torch.empty(size=[1, hidden_size], dtype=torch.float32)))

self.b_o = torch.nn.Parameter(para_attr(torch.empty(size=[1, hidden_size], dtype=torch.float32)))

self.b_c = torch.nn.Parameter(para_attr(torch.empty(size=[1, hidden_size], dtype=torch.float32)))

# 初始化状态向量和隐状态向量

def init_state(self, batch_size):

hidden_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32)

cell_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32)

return hidden_state, cell_state

# 定义前向计算

def forward(self, inputs, states=None):

batch_size, seq_len, input_size = inputs.shape # inputs batch_size x seq_len x input_size

if states is None:

states = self.init_state(batch_size)

hidden_state, cell_state = states

# 定义相应的门状态和单元状态向量列表

self.Is = []

self.Fs = []

self.Os = []

self.Cs = []

# 初始化状态向量和隐状态向量

cell_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32)

hidden_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32)

# 执行LSTM计算,包括:隐藏门、输入门、遗忘门、候选状态向量、状态向量和隐状态向量

for step in range(seq_len):

input_step = inputs[:, step, :]

I_gate = F.sigmoid(torch.matmul(input_step, self.W_i) + torch.matmul(hidden_state, self.U_i) + self.b_i)

F_gate = F.sigmoid(torch.matmul(input_step, self.W_f) + torch.matmul(hidden_state, self.U_f) + self.b_f)

O_gate = F.sigmoid(torch.matmul(input_step, self.W_o) + torch.matmul(hidden_state, self.U_o) + self.b_o)

C_tilde = F.tanh(torch.matmul(input_step, self.W_c) + torch.matmul(hidden_state, self.U_c) + self.b_c)

cell_state = F_gate * cell_state + I_gate * C_tilde

hidden_state = O_gate * F.tanh(cell_state)

# 存储门状态向量和单元状态向量

self.Is.append(I_gate.numpy().copy())

self.Fs.append(F_gate.numpy().copy())

self.Os.append(O_gate.numpy().copy())

self.Cs.append(cell_state.numpy().copy())

return hidden_state接下来,需要使用新的LSTM模型,重新实例化一个runner,本节使用序列长度为10的模型进行此项实验,因此需要加载序列长度为10的模型。

# 实例化模型

base_model = LSTM(input_size, hidden_size)

model = Model_RNN4SeqClass(base_model, num_digits, input_size, hidden_size, num_classes)

# 指定优化器

optimizer = torch.optim.Adam(lr=lr, params=model.parameters())

# 定义评价指标

metric = Accuracy()

# 定义损失函数

loss_fn = torch.nn.CrossEntropyLoss()

# 基于以上组件,重新实例化Runner

runner = RunnerV3(model, optimizer, loss_fn, metric)

length = 10

# 加载训练过程中效果最好的模型

model_path = os.path.join(save_dir, f"best_lstm_model_{length}.pdparams")

runner.load_model(model_path)接下来,给定一条数字序列,并使用数字预测模型进行数字预测,这样便会将相应的门状态和单元状态向量保存至模型中. 然后分别从模型中取出这些向量,并将这些向量进行绘制展示。代码实现如下:

import seaborn as sns

def plot_tensor(inputs, tensor, save_path, vmin=0, vmax=1):

tensor = np.stack(tensor, axis=0)

tensor = np.squeeze(tensor, 1).T

plt.figure(figsize=(16,6))

# vmin, vmax定义了色彩图的上下界

ax = sns.heatmap(tensor, vmin=vmin, vmax=vmax)

ax.set_xticklabels(inputs)

ax.figure.savefig(save_path)

# 定义模型输入

inputs = [6, 7, 0, 0, 1, 0, 0, 0, 0, 0]

X = torch.tensor(inputs.copy())

X = X.unsqueeze(0)

# 进行模型预测,并获取相应的预测结果

logits = runner.predict(X)

predict_label = torch.argmax(logits, dim=-1)

print(f"predict result: {predict_label.numpy()[0]}")

# 输入门

Is = runner.model.rnn_model.Is

plot_tensor(inputs, Is, save_path="./images/6.13_I.pdf")

# 遗忘门

Fs = runner.model.rnn_model.Fs

plot_tensor(inputs, Fs, save_path="./images/6.13_F.pdf")

# 输出门

Os = runner.model.rnn_model.Os

plot_tensor(inputs, Os, save_path="./images/6.13_O.pdf")

# 单元状态

Cs = runner.model.rnn_model.Cs

plot_tensor(inputs, Cs, save_path="./images/6.13_C.pdf", vmin=-5, vmax=5)运行结果为:

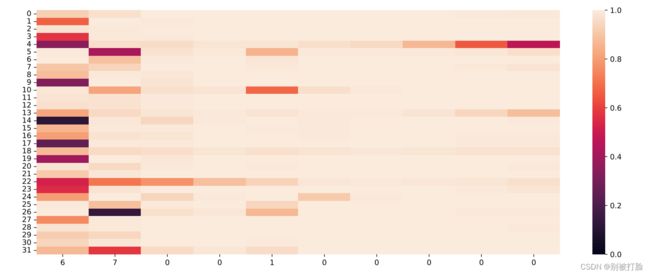

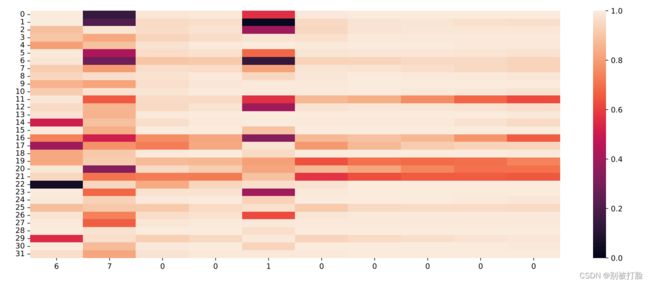

predict result: 13

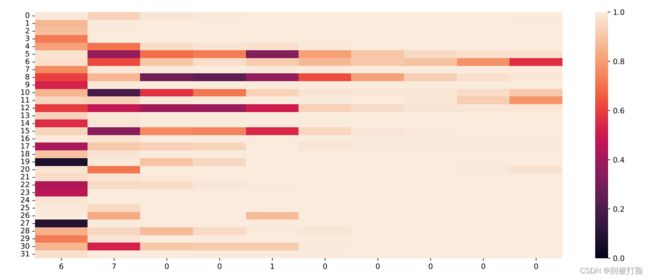

输入门:

遗忘门:

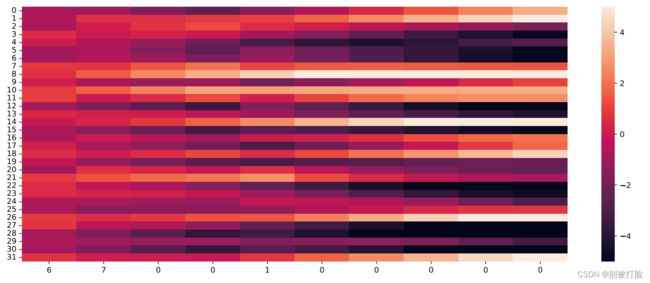

输出门:

单元状态:

【思考题3】分析LSTM中单元状态和门数值的变化图,并用自己的话解释该图。

先说一下,大体的计算流程,然后再放上老师的实例计算流程。

首先,看输入,计算各个门的情况,开关是有逻辑运算的结果决定的,至于逻辑运算的表达式,在上边,最开始那,然后就是计算出来状态后,判断一下c是遗忘,还是继承着更新,最后有输出门判断是否输出,输入门是控制着输入x和记忆状态进行运算的门。

下边是老师给出的实例。

当LSTM处理序列数据[6, 7, 0, 0, 1, 0, 0, 0, 0, 0]的过程中单元状态和门数值的变化图,其中横坐标为输入数字,纵坐标为相应门或单元状态向量的维度,颜色的深浅代表数值的大小。可以看到,当输入门遇到不同位置的数字0时,保持了相对一致的数值大小,表明对于0元素保持相同的门控过滤机制,避免输入信息的变化给当前模型带来困扰;当遗忘门遇到数字1后,遗忘门数值在一些维度上变小,表明对某些信息进行了遗忘;随着序列的输入,输出门和单元状态在某些维度上数值变小,在某些维度上数值变大,表明输出门在根据信息的重要性选择信息进行输出,同时单元状态也在保持着对文本预测重要的一些信息.

总结

首先,这两天在做抗原,一开始,吓了一跳,希望疫情快点过去吧,保定这确实有点严重。

其次,这次确实试了试LSTM,之前用过都是调库,用的直接写好函数,之前用来分析过数据随时间变化的情况,但是这次是真的理解了理解底层的原理。

其次,是最后输出了一下各个门,及单元状态,感觉真的是和推一遍一样,真的清晰了好多。

最后,当然是谢谢老师,谢谢老师在学习和生活上的关心(哈哈哈)。