计算机视觉的应用17-利用CrowdCountNet模型解决人群数量计算问题(pytorch搭建模型)

大家好,我是微学AI,今天给大家介绍一下计算机视觉的应用17-利用CrowdCountNet模型解决人群数量计算问题(pytorch搭建模型)。本篇文章,我将向大家展示如何使用CrowdCountNet这个神奇的工具,以及它是如何利用深度学习技术来解决复杂的人群计数问题。让我们一起进入这个充满活力和创新的世界,开启图像和视频中人群数量计算的新篇章!

目录

- 项目介绍

- 应用场景

- 人流监测和管理

- 安全防控

- 市场调研和决策支持

- 城市规划和交通管理

- 实战项目

- 数据准备

- 模型构建

- 模型训练

- 图片检测人群数量

- 视频检测人群数量

- 结论

1. 项目介绍

本文我将利用深度神经网络来解决一个现实中普遍存在的问题:如何准确计算图像和视频中的人群数量。当您走进拥挤的城市街头或繁忙的公共场所时,人群数量经常让人难以置信。然而,现在有了深度学习模型的帮助,我们可以轻松地通过计算机视觉来解决这个挑战。

CrowdCountNet是我们的主角,它是一种被广泛应用于图像识别和处理领域的深度学习模型。它背后的原理十分精巧,利用了神经网络的强大能力来理解和分析图像中的人群分布。这个模型通过学习大量的图像数据,自动捕捉到了各种人群密集度的模式和特征。

想象一下,当你看着一张摄像头拍摄的城市街景时,CrowdCountNet正在忙碌地工作着。它会逐像素地扫描整个图像,并识别每个像素点上是否存在人群。从细微的行人到人群聚集的区域,CrowdCountNet都能准确地捕捉到每个人的存在。

使用这个强大的深度学习模型,我们可以实现许多令人惊叹的功能。无论是为城市规划提供人流热图、帮助安保人员监控拥挤场所,还是为交通管理提供实时的交通流量信息,CrowdCountNet都能在不同领域发挥巨大作用。

2. 应用场景

2.1 人流监测和管理

在公共场所,例如商场、机场、火车站等,监测和管理人流量是至关重要的。我们的模型可以用于实时监测人流量,帮助管理者做出更有效的决策,比如调整人流方向,预防拥挤等。

在一个繁忙的购物中心,通过我们的人流监测系统,可以实时显示各个商店的人流量。商场管理员可以在控制中心的大屏幕上看到不同区域的人流状况,比如一楼的时尚区人流量饱和,而二楼的电子产品区人流相对稀少。管理员立即作出反应,调整楼梯和电梯的方向,引导顾客流向较空闲的区域,以缓解拥挤。

2.2 安全防控

在大型活动或集会中,通过实时监测人群数量,可以预防和控制安全事故的发生,及时制定疏散计划,提高人员安全。

想象一个音乐节现场,数以万计的观众聚集在一个开放的场地上。通过我们的人流监测系统,主办方能够实时获得观众的数量和密度数据。突然,系统发出警报,显示某个区域的人流超过了安全限制。主办方立刻启动紧急预案,引导人群有序撤离,避免发生踩踏事故。

2.3 市场调研和决策支持

商家可以通过监测店铺或某个区域的人流量,来评估其营销策略的效果,或者进行更准确的市场调研。

一家新开业的百货公司想要评估其广告宣传效果和吸引力。通过人流监测系统,他们可以统计每天进入商场的人数,并与营销活动的时间和内容进行对比。他们发现,当进行打折促销时,入场人数骤增,而在没有促销的日子里,人流量相对稀少。这为他们提供了有价值的市场调研数据,帮助他们更准确地评估促销策略的成效。

2.4 城市规划和交通管理

在城市规划和交通管理中,通过人群数量的监测,可以更好地理解和预测城市中的人流动态,从而更科学地进行城市规划和交通管理。

想象一座拥挤的大都市,上班高峰期大批人涌入地铁站。通过我们的人流监测系统,地铁管理部门可以实时了解不同地铁站的客流情况,并根据需求增加或减少列车班次。当一个地铁站即将达到容纳上限时,系统会自动发出警报,引导乘客选择其他线路或利用公共交通换乘,以减少人流压力。

2.5 相册与毕业生人数统计

通常毕业合影中有大批的人一起合作,我们要统计人数的话,基本都是人工去一个一个数出来,这样费时费力。现在利用模型直接统计合照人数,统计合照人数,判断是否学生来齐了。

3. 实战项目

在这个项目中,我们将首先讨论如何准备数据,然后构建和训练我们的模型,并最后使用我们的模型来检测人群数量。

3.1 数据准备

首先,我们需要准备一个包含大量标注人群数量的图像数据集。这可以是公开的人群数量数据集,也可以是自己收集并标注的数据集。

3.2 模型构建

然后,我们需要构建一个CrowdCountNet模型来学习如何从图像中预测人群数量。

import torch.nn as nn

import torch

import torch.nn.functional as F

from torch.autograd import Variable

from torch.nn.utils.weight_norm import weight_norm

import math

from collections import OrderedDict

class CrowdCountNet(nn.Module):

def __init__(self,

leaky_relu=False,

attn_weight=1,

fix_domain=1,

domain_center_model='',

**kwargs):

super(CrowdCountNet, self).__init__()

self.criterion_attn = torch.nn.MSELoss(reduction='sum')

self.domain_center_model = domain_center_model

self.attn_weight = attn_weight

self.fix_domain = fix_domain

self.cosine = 1

self.conv1 = nn.Conv2d(

3, 64, kernel_size=3, stride=2, padding=1, bias=False)

self.bn1 = nn.BatchNorm2d(64, momentum=BN_MOMENTUM)

self.conv2 = nn.Conv2d(

64, 64, kernel_size=3, stride=2, padding=1, bias=False)

self.bn2 = nn.BatchNorm2d(64, momentum=BN_MOMENTUM)

self.relu = nn.ReLU(inplace=True)

num_channels = 64

block = blocks_dict['BOTTLENECK']

num_blocks = 4

self.layer1 = self._make_layer(block, 64, num_channels, num_blocks)

stage1_out_channel = block.expansion * num_channels

# -- stage 2

self.stage2_cfg = {}

self.stage2_cfg['NUM_MODULES'] = 1

self.stage2_cfg['NUM_BRANCHES'] = 2

self.stage2_cfg['BLOCK'] = 'BASIC'

self.stage2_cfg['NUM_BLOCKS'] = [4, 4]

self.stage2_cfg['NUM_CHANNELS'] = [40, 80]

self.stage2_cfg['FUSE_METHOD'] = 'SUM'

num_channels = self.stage2_cfg['NUM_CHANNELS']

block = blocks_dict[self.stage2_cfg['BLOCK']]

num_channels = [

num_channels[i] * block.expansion

for i in range(len(num_channels))

]

self.transition1 = self._make_transition_layer([stage1_out_channel],

num_channels)

self.stage2, pre_stage_channels = self._make_stage(

self.stage2_cfg, num_channels)

# -- stage 3

self.stage3_cfg = {}

self.stage3_cfg['NUM_MODULES'] = 4

self.stage3_cfg['NUM_BRANCHES'] = 3

self.stage3_cfg['BLOCK'] = 'BASIC'

self.stage3_cfg['NUM_BLOCKS'] = [4, 4, 4]

self.stage3_cfg['NUM_CHANNELS'] = [40, 80, 160]

self.stage3_cfg['FUSE_METHOD'] = 'SUM'

num_channels = self.stage3_cfg['NUM_CHANNELS']

block = blocks_dict[self.stage3_cfg['BLOCK']]

num_channels = [

num_channels[i] * block.expansion

for i in range(len(num_channels))

]

self.transition2 = self._make_transition_layer(pre_stage_channels,

num_channels)

self.stage3, pre_stage_channels = self._make_stage(

self.stage3_cfg, num_channels)

last_inp_channels = np.int(np.sum(pre_stage_channels)) + 256

self.redc_layer = nn.Sequential(

nn.Conv2d(

in_channels=last_inp_channels,

out_channels=128,

kernel_size=3,

stride=1,

padding=1),

nn.BatchNorm2d(128, momentum=BN_MOMENTUM),

nn.ReLU(True),

)

self.aspp = nn.ModuleList(aspp(in_channel=128))

# additional layers specfic for Phase 3

self.pred_conv = nn.Conv2d(128, 512, 3, padding=1)

self.pred_bn = nn.BatchNorm2d(512)

self.GAP = nn.AdaptiveAvgPool2d(1)

# Specially for hidden domain

# Set the domain for learnable parameters

domain_center_src = np.load(self.domain_center_model)

G_SHA = torch.from_numpy(domain_center_src['G_SHA']).view(1, -1, 1, 1)

G_SHB = torch.from_numpy(domain_center_src['G_SHB']).view(1, -1, 1, 1)

G_QNRF = torch.from_numpy(domain_center_src['G_QNRF']).view(

1, -1, 1, 1)

self.n_domain = 3

self.G_all = torch.cat(

[G_SHA.clone(), G_SHB.clone(),

G_QNRF.clone()], dim=0)

self.G_all = nn.Parameter(self.G_all)

self.last_layer = nn.Sequential(

nn.Conv2d(

in_channels=128,

out_channels=64,

kernel_size=3,

stride=1,

padding=1),

nn.BatchNorm2d(64, momentum=BN_MOMENTUM),

nn.ReLU(True),

nn.Conv2d(

in_channels=64,

out_channels=32,

kernel_size=3,

stride=1,

padding=1),

nn.BatchNorm2d(32, momentum=BN_MOMENTUM),

nn.ReLU(True),

nn.Conv2d(

in_channels=32,

out_channels=1,

kernel_size=1,

stride=1,

padding=0),

)

def _make_transition_layer(self, num_channels_pre_layer,

num_channels_cur_layer):

num_branches_cur = len(num_channels_cur_layer)

num_branches_pre = len(num_channels_pre_layer)

transition_layers = []

for i in range(num_branches_cur):

if i < num_branches_pre:

if num_channels_cur_layer[i] != num_channels_pre_layer[i]:

transition_layers.append(

nn.Sequential(

nn.Conv2d(

num_channels_pre_layer[i],

num_channels_cur_layer[i],

3,

1,

1,

bias=False),

nn.BatchNorm2d(

num_channels_cur_layer[i],

momentum=BN_MOMENTUM), nn.ReLU(inplace=True)))

else:

transition_layers.append(None)

else:

conv3x3s = []

for j in range(i + 1 - num_branches_pre):

inchannels = num_channels_pre_layer[-1]

outchannels = num_channels_cur_layer[i] \

if j == i - num_branches_pre else inchannels

conv3x3s.append(

nn.Sequential(

nn.Conv2d(

inchannels, outchannels, 3, 2, 1, bias=False),

nn.BatchNorm2d(outchannels, momentum=BN_MOMENTUM),

nn.ReLU(inplace=True)))

transition_layers.append(nn.Sequential(*conv3x3s))

return nn.ModuleList(transition_layers)

def _make_layer(self, block, inplanes, planes, blocks, stride=1):

downsample = None

if stride != 1 or inplanes != planes * block.expansion:

downsample = nn.Sequential(

nn.Conv2d(

inplanes,

planes * block.expansion,

kernel_size=1,

stride=stride,

bias=False),

nn.BatchNorm2d(planes * block.expansion, momentum=BN_MOMENTUM),

)

layers = []

layers.append(block(inplanes, planes, stride, downsample))

inplanes = planes * block.expansion

for i in range(1, blocks):

layers.append(block(inplanes, planes))

return nn.Sequential(*layers)

def _make_stage(self,

layer_config,

num_inchannels,

multi_scale_output=True):

num_modules = layer_config['NUM_MODULES']

num_branches = layer_config['NUM_BRANCHES']

num_blocks = layer_config['NUM_BLOCKS']

num_channels = layer_config['NUM_CHANNELS']

block = blocks_dict[layer_config['BLOCK']]

fuse_method = layer_config['FUSE_METHOD']

modules = []

for i in range(num_modules):

# multi_scale_output is only used last module

if not multi_scale_output and i == num_modules - 1:

reset_multi_scale_output = False

else:

reset_multi_scale_output = True

modules.append(

HighResolutionModule(num_branches, block, num_blocks,

num_inchannels, num_channels, fuse_method,

reset_multi_scale_output))

num_inchannels = modules[-1].get_num_inchannels()

return nn.Sequential(*modules), num_inchannels

def forward(self, x):

x = self.conv1(x)

x = self.bn1(x)

x = self.relu(x)

x = self.conv2(x)

x = self.bn2(x)

x = self.relu(x)

x = self.layer1(x)

x_head_1 = x

x_list = []

for i in range(self.stage2_cfg['NUM_BRANCHES']):

if self.transition1[i] is not None:

x_list.append(self.transition1[i](x))

else:

x_list.append(x)

y_list = self.stage2(x_list)

x_list = []

for i in range(self.stage3_cfg['NUM_BRANCHES']):

if self.transition2[i] is not None:

x_list.append(self.transition2[i](y_list[-1]))

else:

x_list.append(y_list[i])

x = self.stage3(x_list)

# Replace the classification heaeder with custom setting

# Upsampling

x0_h, x0_w = x[0].size(2), x[0].size(3)

x1 = F.interpolate(

x[1], size=(x0_h, x0_w), mode='bilinear', align_corners=False)

x2 = F.interpolate(

x[2], size=(x0_h, x0_w), mode='bilinear', align_corners=False)

x = torch.cat([x[0], x1, x2, x_head_1], 1)

# first, reduce the channel down

x = self.redc_layer(x)

pred_attn = self.GAP(F.relu_(self.pred_bn(self.pred_conv(x))))

pred_attn = F.softmax(pred_attn, dim=1)

pred_attn_list = torch.chunk(pred_attn, 4, dim=1)

aspp_out = []

for k, v in enumerate(self.aspp):

if k % 2 == 0:

aspp_out.append(self.aspp[k + 1](v(x)))

else:

continue

# Using Aspp add, and relu inside

for i in range(4):

x = x + F.relu_(aspp_out[i] * 0.25) * pred_attn_list[i]

bz = x.size(0)

# -- Besides, we also need to let the prediction attention be close to visable domain

# -- Calculate the domain distance and get the weights

# - First, detach domains

G_all_d = self.G_all.detach() # use detached G_all for calulcating

pred_attn_d = pred_attn.detach().view(bz, 512, 1, 1)

if self.cosine == 1:

G_A, G_B, G_Q = torch.chunk(G_all_d, self.n_domain, dim=0)

cos_dis_A = F.cosine_similarity(pred_attn_d, G_A, dim=1).view(-1)

cos_dis_B = F.cosine_similarity(pred_attn_d, G_B, dim=1).view(-1)

cos_dis_Q = F.cosine_similarity(pred_attn_d, G_Q, dim=1).view(-1)

cos_dis_all = torch.stack([cos_dis_A, cos_dis_B,

cos_dis_Q]).view(bz, -1) # bz*3

cos_dis_all = F.softmax(cos_dis_all, dim=1)

target_attn = cos_dis_all.view(bz, self.n_domain, 1, 1, 1).expand(

bz, self.n_domain, 512, 1, 1) * self.G_all.view(

1, self.n_domain, 512, 1, 1).expand(

bz, self.n_domain, 512, 1, 1)

target_attn = torch.sum(

target_attn, dim=1, keepdim=False) # bz * 512 * 1 * 1

if self.fix_domain:

target_attn = target_attn.detach()

else:

raise ValueError('Have not implemented not cosine distance yet')

x = self.last_layer(x)

x = F.relu_(x)

x = F.interpolate(

x, size=(x0_h * 2, x0_w * 2), mode='bilinear', align_corners=False)

return x, pred_attn, target_attn

def init_weights(

self,

pretrained='',

):

logger.info('=> init weights from normal distribution')

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.normal_(m.weight, std=0.01)

if m.bias is not None:

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

if os.path.isfile(pretrained):

pretrained_dict = torch.load(pretrained)

logger.info(f'=> loading pretrained model {pretrained}')

model_dict = self.state_dict()

pretrained_dict = {

k: v

for k, v in pretrained_dict.items() if k in model_dict.keys()

}

for k, _ in pretrained_dict.items():

logger.info(f'=> loading {k} pretrained model {pretrained}')

model_dict.update(pretrained_dict)

self.load_state_dict(model_dict)

else:

assert 1 == 2

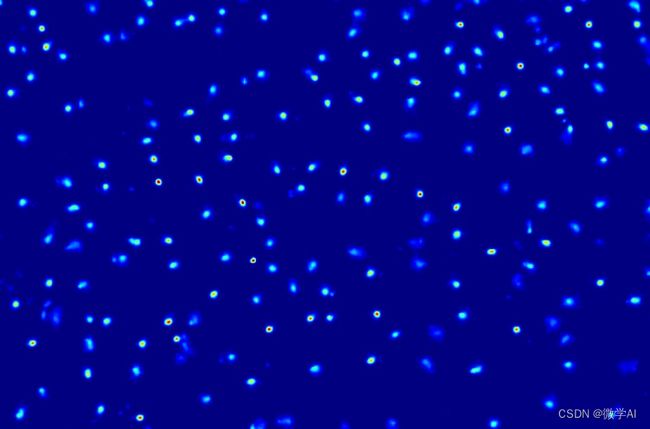

3.3 图片检测人群数量

接下来,我们加载已经训练好的模型进行预测,这里省略了中间复杂的过程,大家可以一键调用预测。

from modelscope.pipelines import pipeline

from modelscope.utils.constant import Tasks

from modelscope.outputs import OutputKeys

from modelscope.utils.cv.image_utils import numpy_to_cv2img

import cv2

crowd_model = pipeline(Tasks.crowd_counting,model='damo/cv_hrnet_crowd-counting_dcanet')

imgs = '111.png'

results = crowd_model(imgs)

print('人数为:', int(results[OutputKeys.SCORES]))

vis_img = results[OutputKeys.OUTPUT_IMG]

vis_img = numpy_to_cv2img(vis_img)

cv2.imwrite('result1.jpg', vis_img)

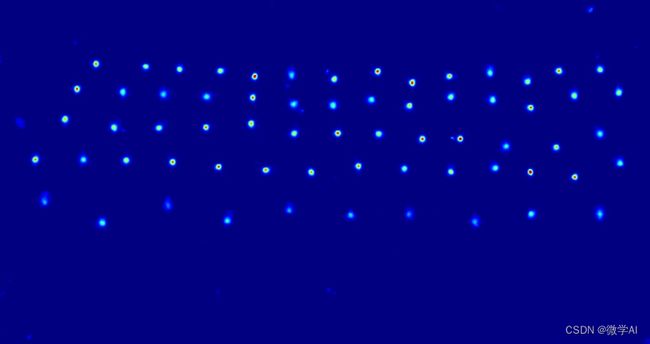

3.4 视频检测人群数量

对于视频,我们可以将其分解为一系列的图像帧,然后使用我们的模型来检测每一帧中的人群数量。

import cv2

from modelscope.outputs import OutputKeys

def predict_video(video_path):

cap = cv2.VideoCapture(video_path)

while (cap.isOpened()):

ret, frame = cap.read()

if ret:

gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

preds = crowd_model(gray)

print(preds[OutputKeys.SCORES])

else:

break

cap.release()

video_path = 'test.mp4'

predict_video(video_path)

4. 结论

在这个项目中,我们成功地使用深度学习模型来计算图像和视频中的人群数量。这个模型可以被广泛地应用于人流监测和管理、安全防控、市场调研和决策支持、城市规划和交通管理等领域。