【Keras-Inception v2】CIFAR-10

Series index

- See section 4.1 of the blog post 《Paper》: the 【Keras】Classification in CIFAR-10 series

Learning resources

- GitHub: BIGBALLON/cifar-10-cnn

- Zhihu column: 写给妹子的深度学习教程

- Inception v2 Caffe code: https://github.com/soeaver/caffe-model/blob/master/cls/inception/deploy_inception-v2.prototxt

- GoogLeNet explained, with a Keras implementation: https://blog.csdn.net/qq_25491201/article/details/78367696

References

- 【Keras-CNN】CIFAR-10

- Accessing TensorBoard on an Ubuntu 16.04.3 server remotely from a local machine

- Caffe code visualization tool

Code download

- Link: https://pan.baidu.com/s/1pkC0BG3sAKxRteZdmCEzHQ

Extraction code: 70ij

Hardware

- TITAN XP

Contents

- 1 Theoretical background

- 2 Implementation

- 2.1 Inception_v2

- 2.2 Inception_v2_slim

- 3 Summary

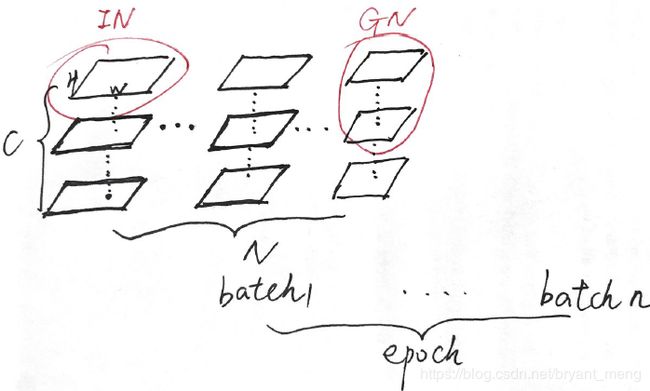

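1 Theoretical background

See 【BN】《Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift》.

The structure of Inception-v2 is as follows. It is similar to Inception-v1, except that every convolution is followed by a Batch Normalization operation and each 5×5 convolution is replaced by two stacked 3×3 convolutions (the "double 3×3" branch).

- Inception-v2 halves the feature-map resolution with stride-2 layers inside the inception module, whereas Inception-v1 inserts a stride-2 max pooling layer between two inception modules.

- #3×3 reduce is the 1×1 convolution in the 1×1→3×3 branch.

- double #3×3 reduce is the 1×1 convolution in the 1×1→3×3→3×3 branch.

- pass through means that the concatenated output of the previous inception module is followed by a max pooling layer (filter size = 3, stride = 2).

- The channel counts in the last column for inception(4c) and inception(4d) appear to be wrong. For inception(4c), 160+160+160+96=576, so the last entry should be 96 rather than 128. For inception(4d), 96+192+192+96=576, so the last entry should likewise be 96 rather than 128.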
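The channel arithmetic in the last note can be checked mechanically. The snippet below is a small sketch, assuming the per-branch output widths used for the stage-4 modules in the implementation in section 2. The names `BRANCH_OUTPUTS` and `concat_channels` are introduced here for illustration only.

```python
# Output channels of each branch (1x1, 3x3, double 3x3, pool projection)
# for the four non-downsampling modules of stage 4.
BRANCH_OUTPUTS = {
    "4a": (224, 96, 128, 128),
    "4b": (192, 128, 128, 128),
    "4c": (160, 160, 160, 96),
    "4d": (96, 192, 192, 96),
}


def concat_channels(branches):
    """Channel count after concatenating all branches along the channel axis."""
    return sum(branches)


for name, branches in BRANCH_OUTPUTS.items():
    print(name, concat_channels(branches))

# With 128 in the last position, 4c would no longer produce 576 channels.
print("4c with 128:", concat_channels((160, 160, 160, 128)))
```

Every module in the stage yields 576 channels only when the last entry of 4c and 4d is 96, which supports the correction above.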

2 Implementation

[D] Why aren’t Inception-style networks successful on CIFAR-10/100?


2.1 Inception_v2

1) Import libraries and set the hyperparameters

    import os

    # Pin the job to one GPU; "3" is the device index on the author's machine
    os.environ["CUDA_DEVICE_ORDER"]="PCI_BUS_ID"
    os.environ["CUDA_VISIBLE_DEVICES"]="3"

    import keras
    import numpy as np
    import math
    from keras.datasets import cifar10
    from keras.layers import Conv2D, MaxPooling2D, AveragePooling2D, ZeroPadding2D, GlobalAveragePooling2D
    from keras.layers import Flatten, Dense, Dropout, BatchNormalization, Activation
    from keras.models import Model
    from keras.layers import Input, concatenate
    from keras import optimizers, regularizers
    from keras.preprocessing.image import ImageDataGenerator
    from keras.initializers import he_normal
    from keras.callbacks import LearningRateScheduler, TensorBoard, ModelCheckpoint

    num_classes = 10
    batch_size = 64   # 64 or 32 or other
    epochs = 300
    iterations = 782  # steps per epoch: ceil(50000 / batch_size)
    USE_BN = True
    LRN2D_NORM = True
    DROPOUT = 0.4
    CONCAT_AXIS = 3   # channel axis for 'channels_last'
    weight_decay = 1e-4
    DATA_FORMAT = 'channels_last'  # Theano:'channels_first' Tensorflow:'channels_last'
    log_filepath = './inception_v2'
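The value `iterations = 782` is the number of mini-batches needed to cover the training set once. A quick check, assuming the standard CIFAR-10 split of 50,000 training images (`steps_per_epoch` is a hypothetical helper name, not part of the original code):

```python
import math


def steps_per_epoch(num_samples, batch_size):
    """Number of batches needed to see every sample once (the last batch may be smaller)."""
    return math.ceil(num_samples / batch_size)


print(steps_per_epoch(50000, 64))  # 782
print(steps_per_epoch(50000, 32))  # 1563
```

If `batch_size` is changed, `iterations` should be updated to match.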

2) Preprocess the data and set the learning rate schedule

    def color_preprocessing(x_train, x_test):
        # Standardize each RGB channel with per-channel mean and std (0-255 scale)
        x_train = x_train.astype('float32')
        x_test = x_test.astype('float32')
        mean = [125.307, 122.95, 113.865]
        std = [62.9932, 62.0887, 66.7048]
        for i in range(3):
            x_train[:,:,:,i] = (x_train[:,:,:,i] - mean[i]) / std[i]
            x_test[:,:,:,i] = (x_test[:,:,:,i] - mean[i]) / std[i]
        return x_train, x_test

    def scheduler(epoch):
        # Step schedule: 0.01 for epochs 0-69, 0.001 for 70-139, 0.0001 afterwards
        if epoch < 70:
            return 0.01
        if epoch < 140:
            return 0.001
        return 0.0001

    # load data
    (x_train, y_train), (x_test, y_test) = cifar10.load_data()
    y_train = keras.utils.to_categorical(y_train, num_classes)
    y_test = keras.utils.to_categorical(y_test, num_classes)
    x_train, x_test = color_preprocessing(x_train, x_test)

3) Define the network structure

Define the inception module

    def inception_module(x, params, concat_axis, padding='same', data_format=DATA_FORMAT,
                         increase=False, last=False, use_bias=True,
                         kernel_initializer="he_normal", bias_initializer='zeros',
                         kernel_regularizer=None, bias_regularizer=None,
                         activity_regularizer=None, kernel_constraint=None,
                         bias_constraint=None, lrn2d_norm=LRN2D_NORM,
                         weight_decay=weight_decay):
        # note: lrn2d_norm is not used inside this function
        (branch1, branch2, branch3, branch4) = params
        # weight_decay decides the regularizers; the regularizer arguments are overwritten
        if weight_decay:
            kernel_regularizer = regularizers.l2(weight_decay)
            bias_regularizer = regularizers.l2(weight_decay)
        else:
            kernel_regularizer = None
            bias_regularizer = None

        def conv_bn_relu(inputs, filters, kernel_size, strides=1):
            # Conv2D -> BatchNormalization -> ReLU, the unit repeated in every branch
            y = Conv2D(filters=filters, kernel_size=kernel_size, strides=strides,
                       padding=padding, data_format=data_format, use_bias=use_bias,
                       kernel_initializer=kernel_initializer,
                       bias_initializer=bias_initializer,
                       kernel_regularizer=kernel_regularizer,
                       bias_regularizer=bias_regularizer,
                       activity_regularizer=activity_regularizer,
                       kernel_constraint=kernel_constraint,
                       bias_constraint=bias_constraint)(inputs)
            return Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(y))

        if increase:
            # Downsampling module: no 1x1 branch, stride 2 at the end of each branch
            # 1x1 -> 3x3
            pathway2 = conv_bn_relu(x, branch2[0], (1,1))
            pathway2 = conv_bn_relu(pathway2, branch2[1], (3,3), strides=2)
            # 1x1 -> 3x3 -> 3x3
            pathway3 = conv_bn_relu(x, branch3[0], (1,1))
            pathway3 = conv_bn_relu(pathway3, branch3[1], (3,3))
            pathway3 = conv_bn_relu(pathway3, branch3[1], (3,3), strides=2)
            # pass through: 3x3 max pooling with stride 2, no 1x1 projection
            pathway4 = MaxPooling2D(pool_size=(3,3), strides=2, padding=padding, data_format=DATA_FORMAT)(x)
            return concatenate([pathway2, pathway3, pathway4], axis=concat_axis)
        else:
            # 1x1
            pathway1 = conv_bn_relu(x, branch1[0], (1,1))
            # 1x1 -> 3x3
            pathway2 = conv_bn_relu(x, branch2[0], (1,1))
            pathway2 = conv_bn_relu(pathway2, branch2[1], (3,3))
            # 1x1 -> 3x3 -> 3x3
            pathway3 = conv_bn_relu(x, branch3[0], (1,1))
            pathway3 = conv_bn_relu(pathway3, branch3[1], (3,3))
            pathway3 = conv_bn_relu(pathway3, branch3[1], (3,3))
            # 3x3 pooling -> 1x1 (max pooling when last=True, average pooling otherwise)
            if last:
                pathway4 = MaxPooling2D(pool_size=(3,3), strides=1, padding=padding, data_format=DATA_FORMAT)(x)
            else:
                pathway4 = AveragePooling2D(pool_size=(3,3), strides=1, padding=padding, data_format=DATA_FORMAT)(x)
            pathway4 = conv_bn_relu(pathway4, branch4[0], (1,1))
            return concatenate([pathway1, pathway2, pathway3, pathway4], axis=concat_axis)

4) Build the network

    def create_model(img_input):
        # Stem: 7x7 conv (stride 2) -> max pool -> 1x1 conv -> 3x3 conv -> max pool
        x = Conv2D(64,kernel_size=(7,7),strides=(2,2),padding='same',
                   kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(img_input)
        x = MaxPooling2D(pool_size=(3,3),strides=2,padding='same',data_format=DATA_FORMAT)(x)
        x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))

        x = Conv2D(64,kernel_size=(1,1),strides=(1,1),padding='same',
                   kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
        x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))

        x = Conv2D(192,kernel_size=(3,3),strides=(1,1),padding='same',
                   kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
        x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
        x = MaxPooling2D(pool_size=(3,3),strides=2,padding='same',data_format=DATA_FORMAT)(x)

        # Inception modules; params = [(1x1,), (3x3 reduce, 3x3), (double 3x3 reduce, double 3x3), (pool proj,)]
        x = inception_module(x,params=[(64,),(64,64),(64,96),(32,)],concat_axis=CONCAT_AXIS) #3a
        x = inception_module(x,params=[(64,),(64,96),(64,96),(64,)],concat_axis=CONCAT_AXIS) #3b
        x = inception_module(x,params=[(0,),(128,160),(64,96),(0,)],concat_axis=CONCAT_AXIS,increase=True) #3c
        x = inception_module(x,params=[(224,),(64,96),(96,128),(128,)],concat_axis=CONCAT_AXIS) #4a
        x = inception_module(x,params=[(192,),(96,128),(96,128),(128,)],concat_axis=CONCAT_AXIS) #4b
        x = inception_module(x,params=[(160,),(128,160),(128,160),(96,)],concat_axis=CONCAT_AXIS) #4c
        x = inception_module(x,params=[(96,),(128,192),(160,192),(96,)],concat_axis=CONCAT_AXIS) #4d
        x = inception_module(x,params=[(0,),(128,192),(192,256),(0,)],concat_axis=CONCAT_AXIS,increase=True) #4e
        x = inception_module(x,params=[(352,),(192,320),(160,224),(128,)],concat_axis=CONCAT_AXIS) #5a
        x = inception_module(x,params=[(352,),(192,320),(192,224),(128,)],concat_axis=CONCAT_AXIS,last=True) #5b

        # Classifier
        x = Flatten()(x)
        x = Dropout(DROPOUT)(x)
        #x=Dense(output_dim=10,activation='linear')(x)
        x = Dense(num_classes,activation='softmax',kernel_initializer="he_normal",
                  kernel_regularizer=regularizers.l2(weight_decay))(x)
        return x

5) Build the model

    img_input = Input(shape=(32,32,3))
    output = create_model(img_input)
    model = Model(img_input, output)
    model.summary()

The parameter count of the model is:

Total params: 10,210,090

Trainable params: 10,190,186

Non-trainable params: 19,904

For comparison, the parameter count of Inception v1:

Total params: 5,984,936

Trainable params: 5,984,424

Non-trainable params: 512

For how the parameter count is computed when Batch Normalization is present, see 【Keras-NIN】CIFAR-10
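As a rough cross-check of the non-trainable count for Inception_v2: in Keras each BatchNormalization layer keeps four parameters per channel, of which the moving mean and moving variance are non-trainable. The sketch below sums the channels that pass through BN in the stem and in every inception module, using the filter counts from `create_model`. The names `STEM`, `MODULES` and `bn_channels` are introduced here for illustration only.

```python
# Filters of the three stem convolutions (each is followed by BN in create_model).
STEM = [64, 64, 192]

# (params, increase) for modules 3a..5b, copied from create_model.
MODULES = [
    ([(64,), (64, 64), (64, 96), (32,)], False),        # 3a
    ([(64,), (64, 96), (64, 96), (64,)], False),        # 3b
    ([(0,), (128, 160), (64, 96), (0,)], True),         # 3c
    ([(224,), (64, 96), (96, 128), (128,)], False),     # 4a
    ([(192,), (96, 128), (96, 128), (128,)], False),    # 4b
    ([(160,), (128, 160), (128, 160), (96,)], False),   # 4c
    ([(96,), (128, 192), (160, 192), (96,)], False),    # 4d
    ([(0,), (128, 192), (192, 256), (0,)], True),       # 4e
    ([(352,), (192, 320), (160, 224), (128,)], False),  # 5a
    ([(352,), (192, 320), (192, 224), (128,)], False),  # 5b
]


def bn_channels(params, increase):
    """Total number of channels that pass through BatchNormalization in one module."""
    b1, b2, b3, b4 = params
    total = b2[0] + b2[1]        # 1x1 -> 3x3
    total += b3[0] + 2 * b3[1]   # 1x1 -> 3x3 -> 3x3 (both 3x3 convs use b3[1] filters)
    if not increase:             # downsampling modules have no 1x1 or pool-projection conv
        total += b1[0] + b4[0]
    return total


channels = sum(STEM) + sum(bn_channels(p, inc) for p, inc in MODULES)
non_trainable = 2 * channels     # moving mean + moving variance per channel
print(channels, non_trainable)   # 9952 19904
```

The result matches the 19,904 non-trainable parameters reported by `model.summary()`.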

6) Start training

    # set optimizer
    # (LearningRateScheduler resets the learning rate at the start of every epoch,
    #  so the lr given here is replaced by scheduler(0) = 0.01 before the first step)
    sgd = optimizers.SGD(lr=.1, momentum=0.9, nesterov=True)
    model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

    # set callback
    tb_cb = TensorBoard(log_dir=log_filepath, histogram_freq=0)
    change_lr = LearningRateScheduler(scheduler)
    cbks = [change_lr,tb_cb]

    # dump checkpoint if you need.(add it to cbks)
    # ModelCheckpoint('./checkpoint-{epoch}.h5', save_best_only=False, mode='auto', period=10)

    # set data augmentation: random horizontal flips and shifts of up to 1/8 of the image
    datagen = ImageDataGenerator(horizontal_flip=True,
                                 width_shift_range=0.125,
                                 height_shift_range=0.125,
                                 fill_mode='constant',cval=0.)
    datagen.fit(x_train)

    # start training (fit_generator is the Keras 2.x API used here;
    # recent Keras versions accept the generator in model.fit directly)
    model.fit_generator(datagen.flow(x_train, y_train,batch_size=batch_size),
                        steps_per_epoch=iterations,
                        epochs=epochs,
                        callbacks=cbks,
                        validation_data=(x_test, y_test))
    model.save('inception_v2.h5')

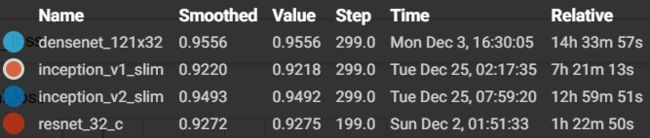

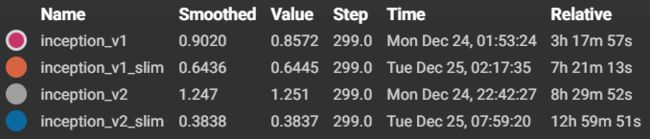

7) Result analysis

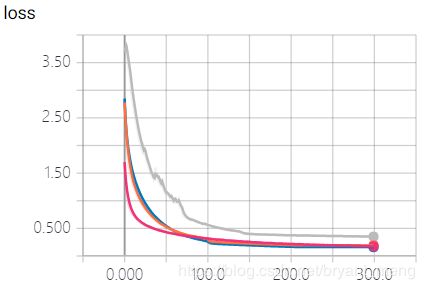

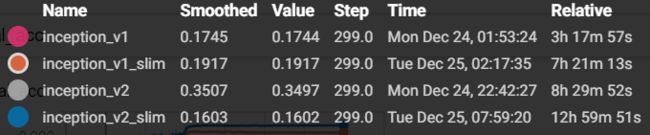

training accuracy and training loss

Not as good as Inception-v1.

…………

The curve is shaped like the Nike swoosh, so the model has overfit. Let's try slimming the network down by removing the stem.

2.2 Inception_v2_slim

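Replace the stem of Inception_v2 with a single convolution and leave the inception modules unchanged. The stem reduces the input to 1/8 of its resolution, which is acceptable for ImageNet (224x224) but too aggressive for CIFAR-10 (32x32).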
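To make the 1/8 reduction concrete, the following sketch traces the feature-map side length through the stride-2 layers of Inception_v2. It assumes `padding='same'` as in the code above, for which the output size is `ceil(input / stride)`; `same_out` and `trace` are hypothetical helper names.

```python
import math


def same_out(size, stride):
    """Output side length of a conv/pool layer with padding='same'."""
    return math.ceil(size / stride)


def trace(size, strides):
    """Return the side length after each layer listed in `strides`."""
    sizes = []
    for s in strides:
        size = same_out(size, s)
        sizes.append(size)
    return sizes


# Stem: 7x7 conv (stride 2), max pool (stride 2), max pool (stride 2),
# followed by the two downsampling modules 3c and 4e (stride 2 each).
STRIDES = [2, 2, 2, 2, 2]

print(trace(224, STRIDES))  # [112, 56, 28, 14, 7]
print(trace(32, STRIDES))   # [16, 8, 4, 2, 1]

# Slim variant: the stem keeps the resolution, only 3c and 4e downsample.
print(trace(32, [2, 2]))    # [16, 8]
```

With a 32x32 input the stem alone leaves a 4x4 map and the last modules operate on a single 1x1 position, whereas the slim variant still has 8x8 maps in stage 5.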

- Adjust the learning rate schedule

    def scheduler(epoch):
        # Longer plateaus than before: 0.01 for epochs 0-99, 0.001 for 100-199, 0.0001 afterwards
        if epoch < 100:
            return 0.01
        if epoch < 200:
            return 0.001
        return 0.0001
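The schedule used for Inception_v2 and the one used for the slim variant differ only in their epoch boundaries (70/140 versus 100/200). One way to express both is a small factory function. This is a sketch, and `make_step_scheduler` is a hypothetical helper rather than part of Keras; the function it returns maps an epoch index to a learning rate, like `scheduler` above.

```python
def make_step_scheduler(boundaries, rates):
    """Build a step schedule: rates[i] is used while epoch < boundaries[i];
    the last rate applies from the final boundary onward."""
    assert len(rates) == len(boundaries) + 1

    def schedule(epoch):
        for boundary, rate in zip(boundaries, rates):
            if epoch < boundary:
                return rate
        return rates[-1]

    return schedule


v2_schedule = make_step_scheduler([70, 140], [0.01, 0.001, 0.0001])
slim_schedule = make_step_scheduler([100, 200], [0.01, 0.001, 0.0001])

for epoch in (0, 69, 70, 99, 100, 139, 140, 199, 200, 299):
    print(epoch, v2_schedule(epoch), slim_schedule(epoch))
```

Either function could be passed to `LearningRateScheduler` in place of `scheduler`.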

- Adjust the network structure

    def create_model(img_input):
        # Slim stem: a single 3x3 conv that keeps the 32x32 resolution
        x = Conv2D(192,kernel_size=(3,3),strides=(1,1),padding='same',
                   kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(img_input)
        x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))

        x = inception_module(x,params=[(64,),(64,64),(64,96),(32,)],concat_axis=CONCAT_AXIS) #3a
        x = inception_module(x,params=[(64,),(64,96),(64,96),(64,)],concat_axis=CONCAT_AXIS) #3b
        x = inception_module(x,params=[(0,),(128,160),(64,96),(0,)],concat_axis=CONCAT_AXIS,increase=True) #3c
        x = inception_module(x,params=[(224,),(64,96),(96,128),(128,)],concat_axis=CONCAT_AXIS) #4a
        x = inception_module(x,params=[(192,),(96,128),(96,128),(128,)],concat_axis=CONCAT_AXIS) #4b
        x = inception_module(x,params=[(160,),(128,160),(128,160),(96,)],concat_axis=CONCAT_AXIS) #4c
        x = inception_module(x,params=[(96,),(128,192),(160,192),(96,)],concat_axis=CONCAT_AXIS) #4d
        x = inception_module(x,params=[(0,),(128,192),(192,256),(0,)],concat_axis=CONCAT_AXIS,increase=True) #4e
        x = inception_module(x,params=[(352,),(192,320),(160,224),(128,)],concat_axis=CONCAT_AXIS) #5a
        x = inception_module(x,params=[(352,),(192,320),(192,224),(128,)],concat_axis=CONCAT_AXIS) #5b

        # Global average pooling replaces Flatten + Dropout
        x = GlobalAveragePooling2D()(x)
        x = Dense(num_classes,activation='softmax',kernel_initializer="he_normal",
                  kernel_regularizer=regularizers.l2(weight_decay))(x)
        return x

The rest of the code is the same as for Inception_v2.

The parameter count is:

Total params: 10,090,538

Trainable params: 10,070,890

Non-trainable params: 19,648

For comparison, Inception_v2:

Total params: 10,210,090

Trainable params: 10,190,186

Non-trainable params: 19,904
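The gap between the two totals (10,210,090 - 10,090,538 = 119,552) can be traced entirely to the stem. The inception modules are unchanged, and the classifier has the same size in both models: the 5b output of Inception_v2 is 1x1x1024 for a 32x32 input, so Flatten and GlobalAveragePooling2D both feed 1024 features to the Dense layer. A minimal check, assuming Conv2D layers with bias and four parameters per BatchNormalization channel; `conv_params` and `bn_params` are hypothetical helpers introduced here.

```python
def conv_params(kernel, in_ch, out_ch, bias=True):
    """Parameter count of a kernel x kernel Conv2D layer."""
    return kernel * kernel * in_ch * out_ch + (out_ch if bias else 0)


def bn_params(channels):
    """gamma, beta, moving mean and moving variance for each channel."""
    return 4 * channels


# Inception_v2 stem: 7x7 conv (3->64), 1x1 conv (64->64), 3x3 conv (64->192),
# each followed by BatchNormalization.
stem_v2 = (conv_params(7, 3, 64) + bn_params(64)
           + conv_params(1, 64, 64) + bn_params(64)
           + conv_params(3, 64, 192) + bn_params(192))

# Inception_v2_slim stem: a single 3x3 conv (3->192) with BatchNormalization.
stem_slim = conv_params(3, 3, 192) + bn_params(192)

print(stem_v2, stem_slim, stem_v2 - stem_slim)  # 125696 6144 119552
print(10210090 - 10090538)                      # 119552
```

So the slim variant saves only about 1% of the parameters; the main change is the spatial resolution seen by the inception modules, not the model size.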

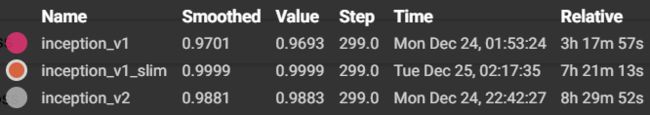

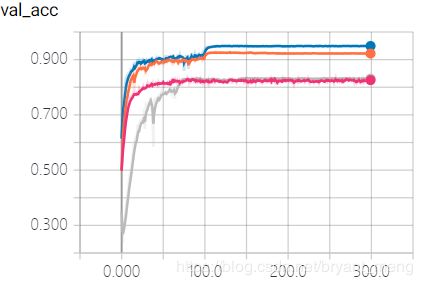

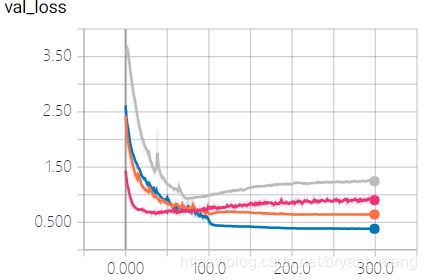

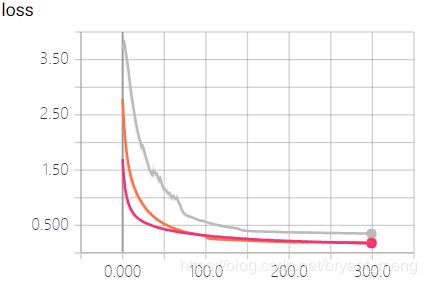

Result analysis:

training accuracy and training loss

Wow, the accuracy has nearly reached 95%, and the overfitting has eased. Ha ha, Aquaman has got his trident......

Let's compare with the predecessors: