etcd Cluster Operations in Practice

Cluster backup and restore


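    #!/bin/bash
    IP=123.123.123.123
    BACKUP_DIR=/alauda/etcd_bak
    mkdir -p "$BACKUP_DIR"
    export ETCDCTL_API=3
    etcdctl --endpoints=http://$IP:2379 snapshot save "$BACKUP_DIR/snap-$(date +%Y%m%d%H%M).db"
    # A snapshot from a single node is enough to restore the cluster. In practice, schedule
    # the job on several nodes, in case the one node running it fails and produces no backup.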
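With the backup job running on several nodes, a restore usually starts from the newest usable snapshot. The sketch below is one way to pick it. `latest_snapshot` is a hypothetical helper, not an etcdctl feature, and it assumes the `snap-YYYYmmddHHMM.db` naming used by the backup script, so that file names sort chronologically.

```shell
#!/bin/bash
# Hypothetical helper, not part of etcdctl: print the newest non-empty snapshot in a directory.
# Assumes the snap-YYYYmmddHHMM.db naming from the backup script, so that
# lexicographic order (the order in which the shell expands globs) is chronological.
latest_snapshot() {
    local dir=$1 newest="" f
    for f in "$dir"/snap-*.db; do
        # -s is true only for files that exist and are not empty,
        # which skips a failed, zero-byte backup.
        if [ -s "$f" ]; then
            newest=$f
        fi
    done
    # Return non-zero when no usable snapshot was found.
    [ -n "$newest" ] || return 1
    printf '%s\n' "$newest"
}
```

For example, `latest_snapshot /alauda/etcd_bak` prints the path to hand to `etcdctl snapshot restore`. Checking the candidate with `etcdctl snapshot status` before restoring is a sensible extra step.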

Restoring the cluster

    #!/bin/bash
    # Use etcdctl snapshot restore to generate the data directory for each node.
    # The key parameters:
    # --data-dir must be the data directory etcd actually runs with
    # --name and --initial-advertise-peer-urls must use each node's own configuration
    # --initial-cluster and --initial-cluster-token must match the original cluster
    ETCD_1=10.1.0.5
    ETCD_2=10.1.0.6
    ETCD_3=10.1.0.7
    export ETCDCTL_API=3
    for i in $ETCD_1 $ETCD_2 $ETCD_3
    do
        etcdctl snapshot restore snapshot.db \
            --data-dir=/var/lib/etcd \
            --name $i \
            --initial-cluster ${ETCD_1}=http://${ETCD_1}:2380,${ETCD_2}=http://${ETCD_2}:2380,${ETCD_3}=http://${ETCD_3}:2380 \
            --initial-cluster-token k8s_etcd_token \
            --initial-advertise-peer-urls http://$i:2380 && \
        mv /var/lib/etcd/ etcd_$i
    done
    # Copy etcd_10.1.0.5 to node 10.1.0.5, overwriting /var/lib/etcd (the --data-dir path).
    # Do the same for the other nodes.

Restoring from the SnapDb that etcd creates automatically

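    #!/bin/bash
    export ETCDCTL_API=3
    etcdctl snapshot restore snapshot.db \
        --skip-hash-check \
        --data-dir=/var/lib/etcd \
        --name 10.1.0.5 \
        --initial-cluster 10.1.0.5=http://10.1.0.5:2380,10.1.0.6=http://10.1.0.6:2380,10.1.0.7=http://10.1.0.7:2380 \
        --initial-cluster-token k8s_etcd_token \
        --initial-advertise-peer-urls http://10.1.0.5:2380
    # As before, every node needs its own data directory generated; see the previous script
    # The only difference from the previous command is the extra --skip-hash-check (skips the integrity check)
    # This method cannot guarantee a 100% successful recovery, so taking your own backups is still recommended
    # After a restore you usually want to compact and defragment the data; see the corresponding section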

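Pitfalls we hit

[ The restore feature does not work in etcd 3.0.14 ] https://github.com/etcd-io/etcd/issues/7533

Using a newer etcd fixes it.

Summary: restoring means using the DB to regenerate etcd's data. Generating every node's data from the same DB ensures that, apart from the parameters passed to restore, all data is identical. That is why one DB is processed three times (or five).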
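The summary above ("one DB, processed once per node") can be sketched as a loop that prints one restore command per node instead of running it, so the parameters can be reviewed first. Both function names are made up for illustration. The sketch assumes, as in this article, that each member's name is its IP and that peers use http on port 2380, and it writes each node's data straight into `etcd_<ip>` rather than restoring into /var/lib/etcd and moving it afterwards.

```shell
#!/bin/bash
# Join node IPs into the value for --initial-cluster (name=peer-url pairs).
# Assumption: member name == IP, peers listen on http://<ip>:2380.
initial_cluster() {
    local out="" ip
    for ip in "$@"; do
        # ${out:+,} adds a comma only when out is already non-empty.
        out+="${out:+,}${ip}=http://${ip}:2380"
    done
    printf '%s\n' "$out"
}

# Print (do not run) the restore command for every node, all from the same snapshot.
print_restore_commands() {
    local snapshot=$1
    shift
    local cluster ip
    cluster=$(initial_cluster "$@")
    for ip in "$@"; do
        echo "ETCDCTL_API=3 etcdctl snapshot restore ${snapshot}" \
             "--data-dir=etcd_${ip} --name ${ip}" \
             "--initial-cluster ${cluster}" \
             "--initial-cluster-token k8s_etcd_token" \
             "--initial-advertise-peer-urls http://${ip}:2380"
    done
}

print_restore_commands snapshot.db 10.1.0.5 10.1.0.6 10.1.0.7
```

Only `--name`, `--data-dir` and `--initial-advertise-peer-urls` differ between the three printed lines; everything else is shared, which is the point of the summary.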

Scaling out the cluster: from 1 to 3


    #!/bin/bash
    export ETCDCTL_API=2
    # Send member add to the existing member (10.1.0.5); the new nodes are not running yet
    etcdctl --endpoints=http://10.1.0.5:2379 member add 10.1.0.6 http://10.1.0.6:2380
    etcdctl --endpoints=http://10.1.0.5:2379 member add 10.1.0.7 http://10.1.0.7:2380
    # ETCD_NAME="etcd_10.1.0.6"
    # ETCD_INITIAL_CLUSTER="10.1.0.6=http://10.1.0.6:2380,10.1.0.5=http://10.1.0.5:2380"
    # ETCD_INITIAL_CLUSTER_STATE="existing"

etcd parameters for the node being added

    #!/bin/bash
    /usr/local/bin/etcd \
        --data-dir=/data.etcd \
        --name 10.1.0.6 \
        --initial-advertise-peer-urls http://10.1.0.6:2380 \
        --listen-peer-urls http://10.1.0.6:2380 \
        --advertise-client-urls http://10.1.0.6:2379 \
        --listen-client-urls http://10.1.0.6:2379 \
        --initial-cluster 10.1.0.6=http://10.1.0.6:2380,10.1.0.5=http://10.1.0.5:2380 \
        --initial-cluster-state existing \
        --initial-cluster-token k8s_etcd_token
    # --initial-cluster lists every node of the cluster as name=peer_url
    # --initial-cluster-state existing tells etcd that it belongs to an existing cluster and must not start its own

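Pitfalls we hit

While going from 1 to 3, the cluster passes through a two-node state. At that point it may behave as if it were down, and commands such as endpoint status stop working. So we first use member add to grow the cluster to three members, and then start the etcd instances one after another; this keeps etcd healthy.

Going from 3 to more nodes is still just member add, so go ahead with confidence.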
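The two-node stall described above follows from etcd's majority rule: a cluster of n members needs floor(n/2)+1 of them running to make progress. A small sketch of that arithmetic (the function names are made up for illustration):

```shell
#!/bin/bash
# Members that must be running for a cluster of $1 members to make progress.
quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Members that may fail while the cluster stays available.
tolerated_failures() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 2 3 5; do
    echo "members=$n quorum=$(quorum "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

With two registered members the quorum is 2 and no failure is tolerated, so a cluster with one running node and one registered-but-not-started node cannot serve requests. With three members the quorum is still 2, and one member may be down.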

Adding certificates to the cluster


    curl -s -L -o /usr/bin/cfssl https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
    curl -s -L -o /usr/bin/cfssljson https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
    chmod +x /usr/bin/{cfssl,cfssljson}
    cd /etc/kubernetes/pki/etcd

    # cat ca-config.json
    {
      "signing": {
        "default": {
          "expiry": "100000h"
        },
        "profiles": {
          "server": {
            "usages": ["signing", "key encipherment", "server auth", "client auth"],
            "expiry": "100000h"
          },
          "client": {
            "usages": ["signing", "key encipherment", "server auth", "client auth"],
            "expiry": "100000h"
          }
        }
      }
    }

    # cat ca-csr.json
    {
      "CN": "etcd",
      "key": {
        "algo": "rsa",
        "size": 4096
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "O": "Alauda",
          "OU": "PaaS",
          "ST": "Beijing"
        }
      ]
    }

    # cat server-csr.json
    {
      "CN": "etcd-server",
      "hosts": [
        "localhost",
        "0.0.0.0",
        "127.0.0.1",
        "<IP of each master node>",
        "<IP of each master node>",
        "<IP of each master node>"
      ],
      "key": {
        "algo": "rsa",
        "size": 4096
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "O": "Alauda",
          "OU": "PaaS",
          "ST": "Beijing"
        }
      ]
    }

    # cat client-csr.json
    {
      "CN": "etcd-client",
      "hosts": [""],
      "key": {
        "algo": "rsa",
        "size": 4096
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "O": "Alauda",
          "OU": "PaaS",
          "ST": "Beijing"
        }
      ]
    }

    cd /etc/kubernetes/pki/etcd
    cfssl gencert -initca ca-csr.json | cfssljson -bare ca
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server server-csr.json | cfssljson -bare server
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client-csr.json | cfssljson -bare client

Reference: https://lihaoquan.me/2017/3/29/etcd-https-setup.html
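The `hosts` list in server-csr.json has to name every master IP, which is easy to get wrong by hand. As a rough sketch, the file can be rendered from a list of IPs. `render_server_csr` is a hypothetical helper; the subject fields are copied from the example in this article and the IPs in the usage line are placeholders.

```shell
#!/bin/bash
# Hypothetical helper: print a server-csr.json whose hosts list contains the
# fixed local entries plus every IP passed as an argument.
render_server_csr() {
    local hosts='"localhost", "0.0.0.0", "127.0.0.1"' ip
    for ip in "$@"; do
        hosts+=", \"${ip}\""
    done
    cat <<EOF
{
  "CN": "etcd-server",
  "hosts": [${hosts}],
  "key": {"algo": "rsa", "size": 4096},
  "names": [{"C": "CN", "L": "Beijing", "O": "Alauda", "OU": "PaaS", "ST": "Beijing"}]
}
EOF
}

# Usage: render_server_csr 10.1.0.5 10.1.0.6 10.1.0.7 > server-csr.json
```

Generating the file this way keeps the certificate's host list and the node list used elsewhere in the scripts from drifting apart.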

First, update each node's peer-urls

    export ETCDCTL_API=3
    etcdctl --endpoints=http://x.x.x.x:2379 member list
    # 1111111111 ..........
    # 2222222222 ..........
    # 3333333333 ..........
    etcdctl --endpoints=http://172.30.0.123:2379 member update 1111111111 --peer-urls=https://x.x.x.x:2380
    # Run it three times so that the peer-urls of all three nodes are switched to https
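Running the update three times by hand invites copy-paste mistakes, so here is a rough sketch that derives the three commands from the `member list` output and prints them for review. `peer_update_commands` is a hypothetical helper. It assumes the default v3 output format, one member per line as `ID, status, name, peerURLs, clientURLs`; check the output of your etcdctl version before relying on the field positions.

```shell
#!/bin/bash
# Hypothetical helper: read `etcdctl member list` output on stdin and print one
# `member update` command per member, with the peer URL switched to https.
# Assumed input format: "ID, status, name, peerURLs, clientURLs".
peer_update_commands() {
    local endpoint=$1
    awk -F', ' -v ep="$endpoint" 'NF >= 4 {
        url = $4
        gsub(/http:\/\//, "https://", url)
        printf "etcdctl --endpoints=%s member update %s --peer-urls=%s\n", ep, $1, url
    }'
}

# Usage (prints the commands, does not run them):
#   ETCDCTL_API=3 etcdctl --endpoints=http://x.x.x.x:2379 member list \
#       | peer_update_commands http://x.x.x.x:2379
```

Printing instead of executing is deliberate: changing peer URLs affects cluster membership, so it is worth reading each command before running it.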

Update the configuration


    # vim /etc/kubernetes/manifests/etcd.yaml
    # In the etcd startup command, change http to https and set the cluster state to existing
    - --advertise-client-urls=https://x.x.x.x:2379
    - --initial-advertise-peer-urls=https://x.x.x.x:2380
    - --initial-cluster=xxx=https://x.x.x.x:2380,xxx=https://x.x.x.x:2380,xxx=https://x.x.x.x:2380
    - --listen-client-urls=https://x.x.x.x:2379
    - --listen-peer-urls=https://x.x.x.x:2380
    - --initial-cluster-state=existing
    # Insert into the etcd startup command
    - --cert-file=/etc/kubernetes/pki/etcd/server.pem
    - --key-file=/etc/kubernetes/pki/etcd/server-key.pem
    - --peer-cert-file=/etc/kubernetes/pki/etcd/server.pem
    - --peer-key-file=/etc/kubernetes/pki/etcd/server-key.pem
    - --trusted-ca-file=/etc/kubernetes/pki/etcd/ca.pem
    - --peer-trusted-ca-file=/etc/kubernetes/pki/etcd/ca.pem
    - --peer-client-cert-auth=true
    - --client-cert-auth=true
    # Find hostPath and insert after it
    - hostPath:
        path: /etc/kubernetes/pki/etcd
        type: DirectoryOrCreate
      name: etcd-certs
    # Find mountPath and insert after it
    - mountPath: /etc/kubernetes/pki/etcd
      name: etcd-certs

    # vim /etc/kubernetes/manifests/kube-apiserver.yaml
    # Insert into the apiserver startup command, changing http to https
    - --etcd-cafile=/etc/kubernetes/pki/etcd/ca.pem
    - --etcd-certfile=/etc/kubernetes/pki/etcd/client.pem
    - --etcd-keyfile=/etc/kubernetes/pki/etcd/client-key.pem
    - --etcd-servers=https://x.x.x.x:2379,https://x.x.x.x:2379,https://x.x.x.x:2379

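To sum up: prepare a set of certificates first. Then switch etcd's internal (peer) communication URLs to https; etcd will log errors at this point, which can be ignored. Then start etcd with the certificate flags, and make every client that connects to etcd use the certificates.

Pitfalls we hit

[ After certificates are added to etcd, the apiserver health check still sends http requests, and etcd keeps flooding its log ] https://github.com/etcd-io/etcd/issues/9285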

2018-02-06 12:41:06.905234 I | embed: rejected connection from "127.0.0.1:35574" (error "EOF", ServerName "")

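Fix: remove the apiserver health check altogether, or replace the default check command with curl (the apiserver image probably does not ship curl; if you really need it, rebuild the image yourself).

Cluster upgrades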


Upgrading from v2 to v3 requires a merge step. I have not actually done it in practice, and I do not really recommend it.

Checking cluster status


    #!/bin/bash
    # Without certificates, just drop --cert, --key and --cacert
    # endpoint status prints one row for each node URL listed in --endpoints
    export ETCDCTL_API=3
    etcdctl \
        --endpoints=https://x.x.x.x:2379 \
        --cert=/etc/kubernetes/pki/etcd/client.pem \
        --key=/etc/kubernetes/pki/etcd/client-key.pem \
        --cacert=/etc/kubernetes/pki/etcd/ca.pem \
        endpoint status -w table
    etcdctl --endpoints=xxxx endpoint health
    etcdctl --endpoints=xxxx member list
    kubectl get cs
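Since the only difference between the secured and unsecured invocations is the three certificate flags, a small wrapper can choose them from the endpoint scheme. This is a sketch only: `etcdctl_flags` is a hypothetical helper and the certificate paths are the ones used in this article.

```shell
#!/bin/bash
# Hypothetical helper: print the etcdctl connection flags for an endpoint.
# https endpoints get the client certificate flags, plain http endpoints do not.
PKI_DIR=/etc/kubernetes/pki/etcd   # certificate directory used in this article

etcdctl_flags() {
    local endpoint=$1
    case "$endpoint" in
        https://*)
            echo "--endpoints=$endpoint --cert=$PKI_DIR/client.pem --key=$PKI_DIR/client-key.pem --cacert=$PKI_DIR/ca.pem"
            ;;
        *)
            echo "--endpoints=$endpoint"
            ;;
    esac
}

# Usage (the unquoted $(...) is intentional, so the flags are split into words):
#   ETCDCTL_API=3 etcdctl $(etcdctl_flags https://x.x.x.x:2379) endpoint status -w table
```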

Data operations (deletion, compaction, defragmentation)


    ETCDCTL_API=2 etcdctl rm --recursive /xxxxx  # with the v2 API, this deletes a "directory"
    ETCDCTL_API=3 etcdctl --endpoints=xxx del /xxxxx --prefix  # the v3 equivalent
    # With certificates, add --cert --key --cacert as in the previous section

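Pitfall we hit: in one customer environment the Kubernetes cluster had an enormous number of "events" (the events section shown by kubectl describe xxx). The sheer volume of data was wearing etcd down, so we deleted the useless data this way.

Defragmentation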

    ETCDCTL_API=3 etcdctl --endpoints=xx:xx,xx:xx,xx:xx defrag
    ETCDCTL_API=3 etcdctl --endpoints=xx:xx,xx:xx,xx:xx endpoint status  # check the data size

Compaction

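    ETCDCTL_API=3 etcdctl --endpoints=xx:xx,xx:xx,xx:xx compact <revision>
    # compact needs the revision to compact up to; <revision> is a placeholder
    # In an etcd cluster used only by K8s this has little effect; maybe I just have not hit the scenario
    # See this document
    # https://www.cnblogs.com/davygeek/p/8524477.html
    # Running it does no harm, though
    etcd --auto-compaction-retention=1
    # Add this flag so that etcd compacts by itself while running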
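The compact command takes a revision, and the current one can be read from the JSON output of endpoint status. The sketch below extracts it with plain text tools. `extract_revision` is a hypothetical helper, and it assumes the JSON contains a `"revision":<number>` field in the response header; verify that against your etcdctl version.

```shell
#!/bin/bash
# Hypothetical helper: read `etcdctl endpoint status -w json` on stdin and
# print the first revision number found in it.
extract_revision() {
    grep -o '"revision":[0-9]*' | head -n 1 | cut -d: -f2
}

# Usage:
#   rev=$(ETCDCTL_API=3 etcdctl --endpoints=xx:xx endpoint status -w json | extract_revision)
#   ETCDCTL_API=3 etcdctl --endpoints=xx:xx compact "$rev"
#   ETCDCTL_API=3 etcdctl --endpoints=xx:xx,xx:xx,xx:xx defrag
```

Compaction only marks old revisions as removable; the defrag step afterwards is what returns the space to the filesystem, which is why the two usually go together.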

Common problems


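etcd depends heavily on time, so the clocks of the cluster nodes must be kept in sync.

The disk runs out of space. If etcd itself has eaten the disk, consider compaction and deleting data.

After certificates are added, every request must carry them; otherwise it fails with context deadline exceeded.

During each of these operations, be careful with the etcd startup parameters that declare the node's cluster state; a mistake means redoing the earlier steps, which is painful.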


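etcd's logs are very useful when troubleshooting. If etcd is deployed directly on the host, the logs can be retrieved through systemd, but etcd started by kubeadm loses all its log history once the container restarts. The following approaches help:

Shell redirection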

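    etcd --xxxx --xxxx >> /var/log/etcd.log 2>&1
    # Append with >> so that a restart does not wipe the history; 2>&1 captures stderr as well
    # Use logrotate to rotate the log
    # Mount the log onto the host through a volume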
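For the logrotate part, a minimal configuration might look like the sketch below, written as a shell function so it can be generated per node. `render_logrotate_conf` is a hypothetical helper, and the retention values (daily, seven files) are arbitrary choices rather than recommendations. `copytruncate` is used because etcd keeps the redirected file open, and it behaves cleanly only when the process writes in append mode (`>>`).

```shell
#!/bin/bash
# Hypothetical helper: print a logrotate stanza for the redirected etcd log.
render_logrotate_conf() {
    local logfile=${1:-/var/log/etcd.log}
    cat <<EOF
${logfile} {
    daily
    rotate 7
    compress
    missingok
    notifempty
    copytruncate
}
EOF
}

# Usage: render_logrotate_conf /var/log/etcd.log > /etc/logrotate.d/etcd
```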

supervisor

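Ever since containers first became popular, supervisor has been an effective tool for keeping services running.

Sidecar container (I will add an example on GitHub later, github.com/jing2uo)

A Sidecar can be loosely understood as one Pod holding several containers (kubedns, for example) that can see each other's processes. So we can use plain old strace to capture the output of the etcd process, and then handle it inside the Sidecar container the same way as with shell redirection.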

strace -e trace=write -s 200 -f -p 1

Clusters deployed with Kubeadm 1.13


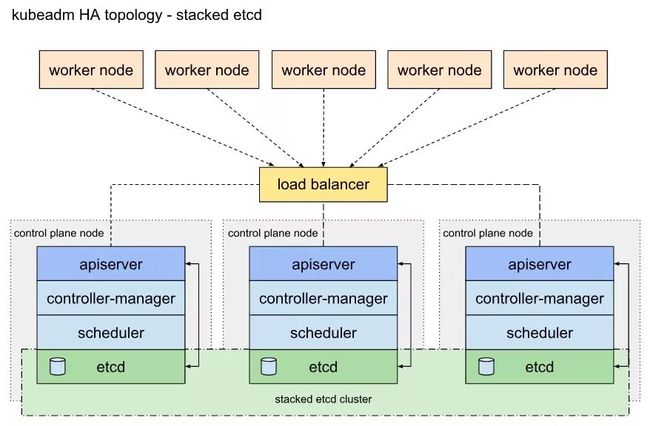

https://kubernetes.io/docs/setup/independent/ha-topology/

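The official documentation distinguishes the Stacked etcd topology from the External etcd topology; the diagram from the official page linked above illustrates it well.

In this mode, the most obvious difference is that the initial-cluster startup parameter of etcd inside the container contains only its own IP, which can leave you puzzled about how to recover when it goes down. The basic principle has not changed: Kubeadm tucks the startup parameters away in a ConfigMap: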

kubectl get cm etcdcfg -n kube-system -o yaml

    etcd:
      local:
        serverCertSANs:
          - "192.168.8.21"
        peerCertSANs:
          - "192.168.8.21"
        extraArgs:
          initial-cluster: 192.168.8.21=https://192.168.8.21:2380,192.168.8.22=https://192.168.8.22:2380,192.168.8.20=https://192.168.8.20:2380
          initial-cluster-state: new
          name: 192.168.8.21
          listen-peer-urls: https://192.168.8.21:2380
          listen-client-urls: https://192.168.8.21:2379
          advertise-client-urls: https://192.168.8.21:2379
          initial-advertise-peer-urls: https://192.168.8.21:2380

Q&A


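Q: Is deploying a highly available cluster with Kubeadm equivalent to deploying three independent single-node Masters first, and then joining their data through etcd member-add operations?
A: No. Kubeadm builds an etcd cluster at the very beginning. etcd has to be ready before the apiserver starts; otherwise the apiserver cannot come up and the cluster components cannot communicate. Try building a cluster by hand without Kubeadm, starting the components one at a time; you will understand the relationships between the Kubernetes components much better afterwards.

Q: How do you ensure high availability of etcd across data centers? Is there a good UI tool for managing etcd?
A: etcd is very demanding about time and network, so if the cross-data-center network is poor, performance is bad and the cluster spends all its time on leader elections. In the talk I forgot to mention etcd's mirror; it is worth looking at how that works. For cross-data-center setups I think a fast network is a prerequisite, though I have not done it myself. I have not looked for UI tools; I do everything on the command line.

Q: For etcd nodes inside a cluster started by Kubeadm, have you tried backing up and restoring etcd through kubectl?
A: I have not used kubectl for etcd backup and restore. Restoring etcd relies on generating the data directory from the SnapDb. Even with the etcd process running in a container, that kind of operation is unavoidable, and the startup state has to be changed too. etcd started by kubeadm can be queried and exec'd into through kubectl, but data operations probably will not work: while etcd is being restored, nothing can connect to it, so how would kubectl function?

Q: kubeadm-ha starts 3 Masters with 3 etcd nodes. How can these be clustered with 3 etcd nodes outside the cluster, to get 3 Masters and 6 etcd nodes?
A: See the scale-out section of this document. As long as the etcd parameters are correct, a cluster can even be partly containerized and partly on hosts (not that I recommend it). You can build a cluster with kubeadm first and then add the other three nodes by scaling out, or build an etcd cluster before running kubeadm and have kubeadm use it.

Q: Have you tried Kubeadm rolling upgrades, with etcd version changes and each Master restarted in turn? Were there any data synchronization problems?
A: Yes. Internally we have documentation for rolling upgrades with Kubeadm step by step from 1.7 to 1.11 and 1.12. There are a few small pitfalls here and there, but today's topic is etcd, so I left that part out. In our tests the data stayed consistent after each Master was restarted. In a more extreme case we shut down all three Masters for a day, and the cluster still recovered after they were started again.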
