Deep Learning_Object Detection_Tutorial: Training YOLOv5 on a Pascal VOC-Format Dataset

1. Setting up the environment

Python >= 3.7 and PyTorch >= 1.5 are required.

Then install the required dependencies:

pip install -U -r requirements.txt
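Before going further, it can help to confirm that the interpreter and PyTorch meet the minimum versions stated above. The following is a minimal sketch, not part of YOLOv5; the helper names `parse_version` and `meets_minimum` are made up for this illustration, and the comparison only looks at the leading numeric parts of the version string.

```python
import re
import sys


def parse_version(text):
    """Turn a string such as '1.5.0+cu101' into the tuple (1, 5, 0)."""
    parts = []
    for piece in text.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break  # stop at the first non-numeric component
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(installed, minimum):
    """Return True if the installed version is at least the minimum."""
    return parse_version(installed) >= parse_version(minimum)


python_version = ".".join(str(n) for n in sys.version_info[:3])
print("Python", python_version,
      "OK" if meets_minimum(python_version, "3.7") else "too old")

try:
    import torch
except ImportError:
    print("PyTorch is not installed")
else:
    print("PyTorch", torch.__version__,
          "OK" if meets_minimum(torch.__version__, "1.5") else "too old")
```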

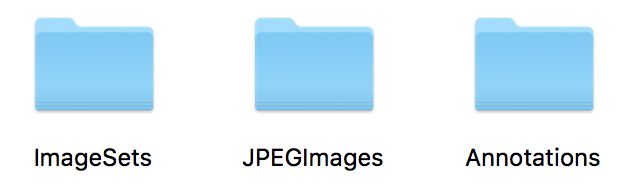

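2. Preparing the Pascal VOC-format data

The figure above shows the standard directory layout of a Pascal VOC-format dataset.

YOLOv5 expects labels in the darknet format, so we use the following script to generate the label files (for simplicity, we only generate labels for the train and test sets):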

import os
import xml.etree.ElementTree as ET

sets = ['train', 'test']
classes = ['XO', 'PN', 'PI', 'NP', 'HD', 'FP', 'FB', 'FO']  # the classes you want to train on


def convert(size, box):
    """Convert a VOC box (xmin, xmax, ymin, ymax) in pixels into a
    normalized darknet box (x_center, y_center, width, height)."""
    dw = 1. / size[0]
    dh = 1. / size[1]
    x = (box[0] + box[1]) / 2.0
    y = (box[2] + box[3]) / 2.0
    w = box[1] - box[0]
    h = box[3] - box[2]
    return (x * dw, y * dh, w * dw, h * dh)


def convert_annotation(image_id):
    """Write ./labels/<image_id>.txt from ./Annotations/<image_id>.xml."""
    tree = ET.parse('./Annotations/%s.xml' % image_id)
    root = tree.getroot()
    size = root.find('size')
    w = int(size.find('width').text)
    h = int(size.find('height').text)

    with open('./labels/%s.txt' % image_id, 'w') as out_file:
        for obj in root.iter('object'):
            difficult = obj.find('difficult').text
            cls = obj.find('name').text
            # Skip classes we do not train on and objects marked as difficult.
            if cls not in classes or int(difficult) == 1:
                continue
            cls_id = classes.index(cls)
            xmlbox = obj.find('bndbox')
            b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text),
                 float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))
            bb = convert((w, h), b)
            out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


os.makedirs('./labels/', exist_ok=True)

for image_set in sets:
    with open('./ImageSets/Main/%s.txt' % image_set) as f:
        image_ids = f.read().strip().split()
    # Write one image path per line and generate the matching label file.
    with open('./%s.txt' % image_set, 'w') as list_file:
        for image_id in image_ids:
            list_file.write('./JPEGImages/%s.jpg\n' % image_id)
            convert_annotation(image_id)

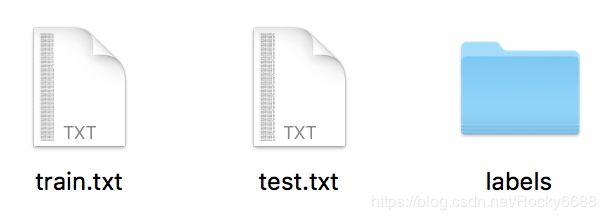

结果如下所示:

我们可以看到生成了train.txt、test.txt和labels文件。
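To make the label format concrete, here is a small worked example of the same arithmetic that `convert` performs, together with the inverse mapping as a sanity check. The image size and box coordinates are invented for illustration, and `voc_to_yolo` / `yolo_to_voc` are hypothetical names, not functions from YOLOv5.

```python
def voc_to_yolo(image_size, box):
    """image_size = (width, height); box = (xmin, xmax, ymin, ymax) in pixels.
    Returns (x_center, y_center, width, height), each normalized to [0, 1]."""
    img_w, img_h = image_size
    xmin, xmax, ymin, ymax = box
    x_center = (xmin + xmax) / 2.0 / img_w
    y_center = (ymin + ymax) / 2.0 / img_h
    width = (xmax - xmin) / img_w
    height = (ymax - ymin) / img_h
    return x_center, y_center, width, height


def yolo_to_voc(image_size, yolo_box):
    """Inverse of voc_to_yolo: back to pixel (xmin, xmax, ymin, ymax)."""
    img_w, img_h = image_size
    x_center, y_center, width, height = yolo_box
    xmin = (x_center - width / 2.0) * img_w
    xmax = (x_center + width / 2.0) * img_w
    ymin = (y_center - height / 2.0) * img_h
    ymax = (y_center + height / 2.0) * img_h
    return xmin, xmax, ymin, ymax


# Made-up example: a 640x480 image with a box from (100, 120) to (300, 360).
yolo_box = voc_to_yolo((640, 480), (100, 300, 120, 360))
print(yolo_box)                           # (0.3125, 0.5, 0.3125, 0.5)
print(yolo_to_voc((640, 480), yolo_box))  # (100.0, 300.0, 120.0, 360.0)
```

If this box belonged to class 'PN' (index 1 in the `classes` list), the corresponding line in the label file would read `1 0.3125 0.5 0.3125 0.5`.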

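3. Organizing the dataset

The model's official GitHub repository describes how to organize the dataset: Train Custom Data

Let me first cover the official method

As the figure above shows, the .txt files in the labels folder must be placed into the corresponding train2017 and val2017 directories; likewise, the .jpg images in the JPEGImages folder must be placed into their corresponding train2017 and val2017 directories.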
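The official layout can be produced with a short copy script. This is only a sketch under some assumptions: it treats the VOC test set as the validation split, it assumes the target tree is `images/<split>` and `labels/<split>` under an output root, and `copy_split` and `SPLIT_DIRS` are names invented for this example.

```python
import shutil
from pathlib import Path

# Assumption: the VOC 'test' set plays the role of the validation split.
SPLIT_DIRS = {"train": "train2017", "test": "val2017"}


def copy_split(voc_root, out_root, image_set):
    """Copy the images and labels of one image set into
    out_root/images/<split> and out_root/labels/<split>.
    Returns the number of image/label pairs copied."""
    voc_root, out_root = Path(voc_root), Path(out_root)
    target = SPLIT_DIRS[image_set]
    image_dir = out_root / "images" / target
    label_dir = out_root / "labels" / target
    image_dir.mkdir(parents=True, exist_ok=True)
    label_dir.mkdir(parents=True, exist_ok=True)

    id_file = voc_root / "ImageSets" / "Main" / f"{image_set}.txt"
    copied = 0
    for image_id in id_file.read_text().split():
        image = voc_root / "JPEGImages" / f"{image_id}.jpg"
        label = voc_root / "labels" / f"{image_id}.txt"
        if not (image.exists() and label.exists()):
            continue  # skip ids whose image or label file is missing
        shutil.copy2(image, image_dir / image.name)
        shutil.copy2(label, label_dir / label.name)
        copied += 1
    return copied
```

Calling `copy_split('.', '../COCO', 'train')` and then `copy_split('.', '../COCO', 'test')` from the dataset root would fill both splits; the `../COCO` output path is only an example.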

The method I use

I simply copy all the files in labels into the JPEGImages folder.
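Copying by hand works, but the same step can be scripted. A minimal sketch, assuming the `labels` and `JPEGImages` folders from step 2; the function name `copy_labels_next_to_images` is hypothetical.

```python
import shutil
from pathlib import Path


def copy_labels_next_to_images(labels_dir, images_dir):
    """Copy every .txt label file into the image folder so that each
    image has its label beside it. Returns the copied file names."""
    labels_dir, images_dir = Path(labels_dir), Path(images_dir)
    copied = []
    for label in sorted(labels_dir.glob("*.txt")):
        shutil.copy2(label, images_dir / label.name)
        copied.append(label.name)
    return copied
```

For the layout used in this tutorial, the call would be `copy_labels_next_to_images('./labels', './JPEGImages')`.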

The two methods differ in how the paths are set in the training configuration below.

4. Configuring the training files

Create VOC.yaml in the data directory to configure the training data:

# COCO 2017 dataset http://cocodataset.org - first 128 training images
# Download command: python -c "from yolov5.utils.google_utils import gdrive_download; gdrive_download('1n_oKgR81BJtqk75b00eAjdv03qVCQn2f','coco128.zip')"
# Train command: python train.py --data ./data/coco128.yaml
# Dataset should be placed next to yolov5 folder:
#   /parent_folder
#     /coco128
#     /yolov5

# train and val datasets (image directory or *.txt file with image paths)
# (The choice made in step 3 shows up in the paths used here. With the official method, use:
#   COCO/images/train2017/
#   COCO/images/val2017/
# With my method, point to the train.txt and test.txt files that were generated together with the labels above.)
train: /root/yolov5-master/data/train.txt
val: /root/yolov5-master/data/test.txt

# number of classes
nc: 8

# class names
names: ['XO', 'PN', 'PI', 'NP', 'HD', 'FP', 'FB', 'FO']
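Since my method relies on each label file sitting next to its image, it is worth checking the list files before training. The sketch below reads a list such as train.txt and reports entries whose image or same-name .txt label is missing. `find_missing` and its `base_dir` parameter are hypothetical; `base_dir` is the directory that the relative paths in the list are resolved against.

```python
from pathlib import Path


def find_missing(list_file, base_dir="."):
    """Return (missing_images, missing_labels) for the image paths in
    list_file. A label is expected beside its image, with a .txt suffix."""
    base_dir = Path(base_dir)
    missing_images, missing_labels = [], []
    for line in Path(list_file).read_text().splitlines():
        line = line.strip()
        if not line:
            continue
        image = base_dir / line  # an absolute path in the list overrides base_dir
        if not image.exists():
            missing_images.append(line)
        if not image.with_suffix(".txt").exists():
            missing_labels.append(line)
    return missing_images, missing_labels
```

Two empty lists mean that every listed image exists and has a label beside it.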

Next, modify the model file (edit the yaml of whichever model you want to use). For example, with yolov5s.yaml:

# parameters
nc: 8  # number of classes <------------------ UPDATE to match your dataset (usually this is the only line you need to change)
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.50  # layer channel multiple

# anchors
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# yolov5 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, Focus, [64, 3]],  # 1-P1/2
   [-1, 1, Conv, [128, 3, 2]],  # 2-P2/4
   [-1, 3, Bottleneck, [128]],
   [-1, 1, Conv, [256, 3, 2]],  # 4-P3/8
   [-1, 9, BottleneckCSP, [256, False]],
   [-1, 1, Conv, [512, 3, 2]],  # 6-P4/16
   [-1, 9, BottleneckCSP, [512, False]],
   [-1, 1, Conv, [1024, 3, 2]],  # 8-P5/32
   [-1, 1, SPP, [1024, [5, 9, 13]]],
   [-1, 12, BottleneckCSP, [1024, False]],  # 10
  ]

# yolov5 head
head:
  [[-1, 1, nn.Conv2d, [na * (nc + 5), 1, 1, 0]],  # 12 (P5/32-large)
   [-2, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 6], 1, Concat, [1]],  # cat backbone P4
   [-1, 1, Conv, [512, 1, 1]],
   [-1, 3, BottleneckCSP, [512, False]],
   [-1, 1, nn.Conv2d, [na * (nc + 5), 1, 1, 0]],  # 16 (P4/16-medium)
   [-2, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 4], 1, Concat, [1]],  # cat backbone P3
   [-1, 1, Conv, [256, 1, 1]],
   [-1, 3, BottleneckCSP, [256, False]],
   [-1, 1, nn.Conv2d, [na * (nc + 5), 1, 1, 0]],  # 21 (P3/8-small)
   [[], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]
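Changing `nc` matters because the output width of each detection convolution in the head is `na * (nc + 5)`. The short calculation below works this out for the values in this file. It takes `na` to be the number of (width, height) anchor pairs per detection layer, and `detection_channels` is a name made up for this example.

```python
anchors = [
    [10, 13, 16, 30, 33, 23],       # P3/8
    [30, 61, 62, 45, 59, 119],      # P4/16
    [116, 90, 156, 198, 373, 326],  # P5/32
]


def detection_channels(anchors, nc):
    """Output channels of one detection convolution: na * (nc + 5)."""
    na = len(anchors[0]) // 2  # each anchor is a (width, height) pair
    # Per anchor: 4 box coordinates + 1 objectness score + nc class scores.
    return na * (nc + 5)


print(detection_channels(anchors, 8))   # 39 for the 8 classes used here
print(detection_channels(anchors, 80))  # 255 for the 80 COCO classes
```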

5. Training

Now we can start training. Use the following command, or adjust the parameters to suit your needs:

python train.py --data data/VOC.yaml --cfg models/yolov5s.yaml --weights '' --batch-size 16 --epochs 5
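When experimenting with different settings, it can be convenient to assemble the same command from Python. The sketch below only builds and prints the argument list; `build_train_command` is a hypothetical helper, and the flags are exactly those used in the command above. Passing an empty string to `--weights` means no pretrained weights are loaded, so training starts from scratch.

```python
import shlex


def build_train_command(data, cfg, weights="", batch_size=16, epochs=5):
    """Return the train.py invocation as an argument list."""
    return [
        "python", "train.py",
        "--data", data,
        "--cfg", cfg,
        "--weights", weights,
        "--batch-size", str(batch_size),
        "--epochs", str(epochs),
    ]


args = build_train_command("data/VOC.yaml", "models/yolov5s.yaml")
# Quote each argument so the empty --weights value stays visible.
print(" ".join(shlex.quote(a) for a in args))
```

The printed line matches the command above. To actually launch training, the list could be passed to `subprocess.run(args, check=True)` from inside the yolov5 directory.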