sqoop使用hcatalog抽取数据异常

sqoop使用hcatalog抽取数据到hive,开启hdfs sentry权限同步后sqoop hcatalog脚本执行任务失败,错误日志如下:

Job commit failed: org.apache.hive.hcatalog.common.HCatException : 2006 : Error adding partition to metastore. Cause : org.apache.hadoop.security.AccessControlException: Permission denied. user=basePlat is not the owner of inode=ss_dt=20180410

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkOwner(DefaultAuthorizationProvider.java:188)

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkPermission(DefaultAuthorizationProvider.java:174)

at org.apache.sentry.hdfs.SentryAuthorizationProvider.checkPermission(SentryAuthorizationProvider.java:178)

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:152)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:3529)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:3512)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkOwner(FSDirectory.java:3477)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkOwner(FSNamesystem.java:6582)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.setPermissionInt(FSNamesystem.java:1808)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.setPermission(FSNamesystem.java:1788)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.setPermission(NameNodeRpcServer.java:654)

at org.apache.hadoop.hdfs.server.namenode.AuthorizationProviderProxyClientProtocol.setPermission(AuthorizationProviderProxyClientProtocol.java:174)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.setPermission(ClientNamenodeProtocolServerSideTranslatorPB.java:454)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:617)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1073)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2220)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2216)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1920)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2214)

at org.apache.hive.hcatalog.mapreduce.FileOutputCommitterContainer.registerPartitions(FileOutputCommitterContainer.java:969)

at org.apache.hive.hcatalog.mapreduce.FileOutputCommitterContainer.commitJob(FileOutputCommitterContainer.java:249)

at org.apache.hadoop.mapreduce.v2.app.commit.CommitterEventHandler$EventProcessor.handleJobCommit(CommitterEventHandler.java:274)

at org.apache.hadoop.mapreduce.v2.app.commit.CommitterEventHandler$EventProcessor.run(CommitterEventHandler.java:237)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

Caused by: org.apache.hadoop.security.AccessControlException: Permission denied. user=basePlat is not the owner of inode=ss_dt=20180410

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkOwner(DefaultAuthorizationProvider.java:188)

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkPermission(DefaultAuthorizationProvider.java:174)

at org.apache.sentry.hdfs.SentryAuthorizationProvider.checkPermission(SentryAuthorizationProvider.java:178)

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:152)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:3529)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:3512)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkOwner(FSDirectory.java:3477)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkOwner(FSNamesystem.java:6582)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.setPermissionInt(FSNamesystem.java:1808)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.setPermission(FSNamesystem.java:1788)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.setPermission(NameNodeRpcServer.java:654)

at org.apache.hadoop.hdfs.server.namenode.AuthorizationProviderProxyClientProtocol.setPermission(AuthorizationProviderProxyClientProtocol.java:174)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.setPermission(ClientNamenodeProtocolServerSideTranslatorPB.java:454)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:617)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1073)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2220)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2216)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1920)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2214)

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.ipc.RemoteException.instantiateException(RemoteException.java:106)

at org.apache.hadoop.ipc.RemoteException.unwrapRemoteException(RemoteException.java:73)

at org.apache.hadoop.hdfs.DFSClient.setPermission(DFSClient.java:2479)

at org.apache.hadoop.hdfs.DistributedFileSystem$26.doCall(DistributedFileSystem.java:1428)

at org.apache.hadoop.hdfs.DistributedFileSystem$26.doCall(DistributedFileSystem.java:1424)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.setPermission(DistributedFileSystem.java:1424)

at org.apache.hive.hcatalog.mapreduce.FileOutputCommitterContainer.applyGroupAndPerms(FileOutputCommitterContainer.java:408)

at org.apache.hive.hcatalog.mapreduce.FileOutputCommitterContainer.registerPartitions(FileOutputCommitterContainer.java:947)

... 6 more

Caused by: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.AccessControlException): Permission denied. user=basePlat is not the owner of inode=ss_dt=20180410

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkOwner(DefaultAuthorizationProvider.java:188)

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkPermission(DefaultAuthorizationProvider.java:174)

at org.apache.sentry.hdfs.SentryAuthorizationProvider.checkPermission(SentryAuthorizationProvider.java:178)

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:152)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:3529)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:3512)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkOwner(FSDirectory.java:3477)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkOwner(FSNamesystem.java:6582)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.setPermissionInt(FSNamesystem.java:1808)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.setPermission(FSNamesystem.java:1788)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.setPermission(NameNodeRpcServer.java:654)

at org.apache.hadoop.hdfs.server.namenode.AuthorizationProviderProxyClientProtocol.setPermission(AuthorizationProviderProxyClientProtocol.java:174)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.setPermission(ClientNamenodeProtocolServerSideTranslatorPB.java:454)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:617)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1073)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2220)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2216)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1920)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2214)

at org.apache.hadoop.ipc.Client.call(Client.java:1502)

at org.apache.hadoop.ipc.Client.call(Client.java:1439)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:230)

at com.sun.proxy.$Proxy10.setPermission(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.setPermission(ClientNamenodeProtocolTranslatorPB.java:355)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:256)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:104)

at com.sun.proxy.$Proxy11.setPermission(Unknown Source)

at org.apache.hadoop.hdfs.DFSClient.setPermission(DFSClient.java:2477)

... 12 more

解决方案

加上hdfs sentry权限同步后,目录文件所有者都是hive,真正的执行任务的用户是无权修改所有者的,所以执行失败。

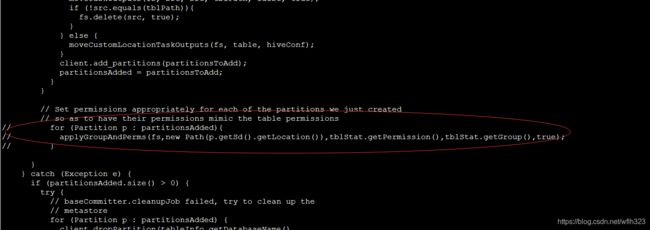

修改hive源码中/home/hadoop/hive-1.1.0-cdh5.11.1/hcatalog/core/src/main/java/org/apache/hive/hcatalog/mapreduce/ FileOutputCommitterContainer registerPartitions 方法中注释 applyGroupAndPerms,打包替换掉包