Hadoop Study Notes (4): Operating HDFS with the Java API (1)

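Prerequisite: the Hadoop source has already been compiled on Windows 7 and the environment variables are configured correctly. See Notes (3) for details.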

1 Uploading a file

package com.tzb.hdfs;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.junit.Before;
import org.junit.Test;

import java.io.IOException;

public class HdfsClientDemo {

    FileSystem fs = null;

    @Before
    public void init() throws IOException {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://node1:9000");
        // Obtain a client instance for operating on the file system
        fs = FileSystem.get(conf);
    }

    @Test
    public void testUpload() throws IOException, InterruptedException {
        // Copy the local file H:/hdfs.txt to /hdfs.txt.copy in HDFS
        fs.copyFromLocalFile(new Path("H:/hdfs.txt"), new Path("/hdfs.txt.copy"));
        fs.close();
    }

    public static void main(String[] args) throws IOException, InterruptedException {
    }
}

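The program fails with an error. By default, the client uses the local machine's user name as the Hadoop user when communicating with the cluster, and that local user has no permission to write data to HDFS.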


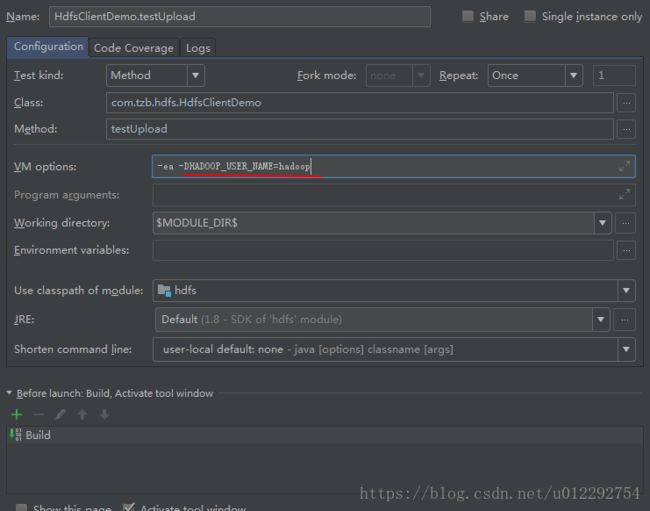
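The fallback to the local user name can be pictured with a small model. The sketch below is a simplification, assuming Kerberos security is disabled: the client is understood to check the HADOOP_USER_NAME environment variable first, then the JVM system property of the same name, and only then the operating-system login. The class and method names are hypothetical and are not part of the Hadoop API.

```java
class HdfsUserResolution {

    /**
     * Simplified model of how the client picks its user name.
     * Hypothetical helper for illustration, not a Hadoop method.
     */
    public static String resolveUser(String envValue, String jvmProperty, String osUser) {
        if (envValue != null) {
            return envValue;      // HADOOP_USER_NAME environment variable
        }
        if (jvmProperty != null) {
            return jvmProperty;   // -DHADOOP_USER_NAME=... on the JVM command line
        }
        return osUser;            // otherwise the local login name is used
    }

    public static void main(String[] args) {
        String user = resolveUser(
                System.getenv("HADOOP_USER_NAME"),
                System.getProperty("HADOOP_USER_NAME"),
                System.getProperty("user.name"));
        System.out.println("HDFS requests would be sent as: " + user);
    }
}
```

When neither value is set, the result is the local login name, which is the user that HDFS refuses to let write.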

1.1 Solution 1

Add -DHADOOP_USER_NAME=hadoop to the VM options of the run configuration.
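A -D flag simply defines a JVM system property, so the same value can presumably be set from code when editing the run configuration is inconvenient. This is a minimal sketch, assuming the property is set before the first FileSystem.get(...) call, since the client appears to determine its login user only once per JVM. The class name HadoopUserProperty is hypothetical.

```java
class HadoopUserProperty {

    /** Sets the same JVM system property that -DHADOOP_USER_NAME=<user> would define. */
    public static void useHadoopUser(String user) {
        System.setProperty("HADOOP_USER_NAME", user);
    }

    public static void main(String[] args) {
        // Assumption: this runs before any FileSystem instance is created
        useHadoopUser("hadoop");
        System.out.println(System.getProperty("HADOOP_USER_NAME"));
    }
}
```

In a JUnit class such as HdfsClientDemo, the call would go at the top of init(), before the FileSystem object is obtained.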

1.2 Solution 2

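/*
 * When a client operates on HDFS, it does so under a user identity.
 * By default, the HDFS client API reads a JVM parameter as its user
 * identity, e.g. -DHADOOP_USER_NAME=hadoop.
 *
 * Alternatively, the user name can be passed as an argument when the
 * client fs object is constructed.
 */
// Requires: import java.net.URI; import java.net.URISyntaxException;
@Before
public void init() throws IOException, URISyntaxException, InterruptedException {
    Configuration conf = new Configuration();
    conf.set("fs.defaultFS", "hdfs://node1:9000");
    // Obtain a client instance for operating on the file system,
    // this time passing the user name "hadoop" explicitly
    // fs = FileSystem.get(conf);
    fs = FileSystem.get(new URI("hdfs://node1:9000"), conf, "hadoop");
}

1.3 File uploaded successfully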

2 Downloading a file

@Test
public void testDownload() throws IOException {
    // Copy /hdfs.txt.copy from HDFS into the local directory f:/
    fs.copyToLocalFile(new Path("/hdfs.txt.copy"), new Path("f:/"));
}

3 Complete code

package com.tzb.hdfs;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import org.junit.Before;
import org.junit.Test;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Iterator;
import java.util.Map;

/*
 * When a client operates on HDFS, it does so under a user identity.
 * By default, the HDFS client API reads a JVM parameter as its user
 * identity, e.g. -DHADOOP_USER_NAME=hadoop.
 *
 * Alternatively, the user name can be passed as an argument when the
 * client fs object is constructed.
 */
public class HdfsClientDemo {

    FileSystem fs = null;
    Configuration conf = null;

    @Before
    public void init() throws IOException, URISyntaxException, InterruptedException {
        conf = new Configuration();
        conf.set("dfs.replication", "5");
        // conf.set("fs.defaultFS", "hdfs://node1:9000");
        // Obtain a client instance for operating on the file system
        // fs = FileSystem.get(conf);
        fs = FileSystem.get(new URI("hdfs://node1:9000"), conf, "hadoop");
    }

    @Test
    public void testUpload() throws IOException, InterruptedException {
        // Copy the local file H:/hdfs.txt to /hdfs.txt.copy in HDFS
        fs.copyFromLocalFile(new Path("H:/hdfs.txt"), new Path("/hdfs.txt.copy"));
        fs.close();
    }

    @Test
    public void testDownload() throws IOException {
        // Copy /hdfs.txt.copy from HDFS into the local directory f:/
        fs.copyToLocalFile(new Path("/hdfs.txt.copy"), new Path("f:/"));
    }

    @Test
    public void testConf() {
        // Print every key/value pair held by the Configuration object
        Iterator<Map.Entry<String, String>> it = conf.iterator();
        while (it.hasNext()) {
            Map.Entry<String, String> ent = it.next();
            System.out.println(ent.getKey() + " : " + ent.getValue());
        }
    }

    @Test
    public void testMkdir() throws IOException {
        // Creates the directory together with any missing parent directories
        boolean mkdirs = fs.mkdirs(new Path("/testmkdir/aaa/bbb"));
        System.out.println(mkdirs);
    }

    @Test
    public void testDelete() throws IOException {
        // The second argument (true) deletes the directory recursively
        boolean flag = fs.delete(new Path("/testmkdir/aaa"), true);
        System.out.println(flag);
    }

    @Test
    public void testLs() throws IOException {
        // List files recursively (second argument true), starting at the root
        RemoteIterator<LocatedFileStatus> listFiles = fs.listFiles(new Path("/"), true);
        while (listFiles.hasNext()) {
            LocatedFileStatus fileStatus = listFiles.next();
            System.out.println("Name: " + fileStatus.getPath().getName());
            System.out.println("Blocksize: " + fileStatus.getBlockSize());
            System.out.println("owner: " + fileStatus.getOwner());
            System.out.println("replication: " + fileStatus.getReplication());
            System.out.println("===========================");
        }
    }

    @Test
    public void testLs2() throws IOException {
        // List only the direct children of the root, both files and directories
        FileStatus[] listStatus = fs.listStatus(new Path("/"));
        for (FileStatus file : listStatus) {
            System.out.println("Name: " + file.getPath().getName());
            System.out.println(file.isFile() ? "file" : "directory");
        }
    }

    public static void main(String[] args) throws IOException, InterruptedException {
    }
}
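In init(), the URI argument takes over the role of the commented-out fs.defaultFS setting: the scheme selects the file system type, and the host and port identify the NameNode to connect to. The sketch below uses only java.net.URI from the JDK to show how that address breaks down. The class name NameNodeUri is hypothetical, and node1 and 9000 are simply the values used in these notes.

```java
import java.net.URI;

class NameNodeUri {

    /** Describes the parts of a file system URI using only the JDK. */
    public static String describe(String address) {
        URI uri = URI.create(address);
        return "scheme=" + uri.getScheme()
                + ", host=" + uri.getHost()
                + ", port=" + uri.getPort();
    }

    public static void main(String[] args) {
        // prints: scheme=hdfs, host=node1, port=9000
        System.out.println(describe("hdfs://node1:9000"));
    }
}
```

If the host name cannot be resolved from the Windows client, a usual place to check is the hosts file, since node1 is a name defined by the cluster setup rather than a public address.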